Networks Using Blocks (VGG)

Stacking several 3×3 convolutions works better than using fewer 5×5 convolutions.

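VGG stands for Visual Geometry Group, the Oxford research group that proposed the network. After AlexNet appeared, many researchers tried to raise accuracy by modifying its architecture, mainly in two directions: smaller convolution kernels and multi-scale processing. The VGG authors took a different direction: making the network deeper.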

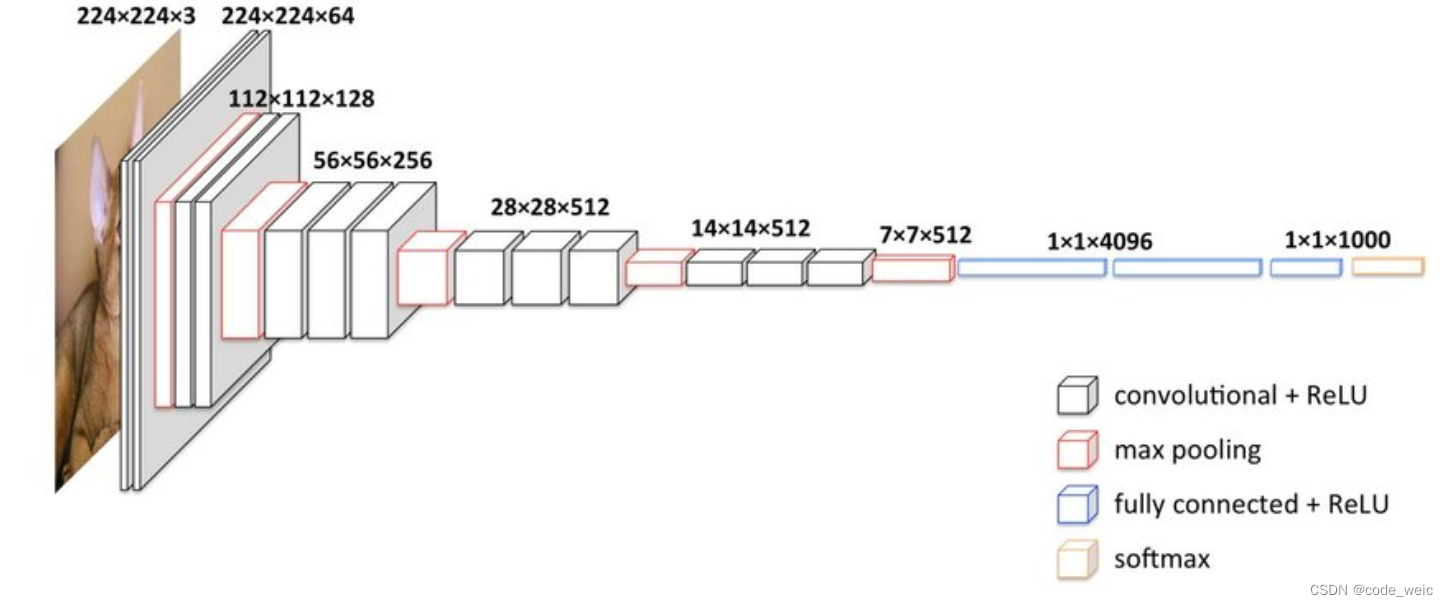

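Network architecture

The network takes 224×224 RGB images as input. Its structure is highly regular: almost every layer uses 3×3 convolution kernels and 2×2 max pooling, and a few configurations also include 1×1 convolution kernels. 3×3 is the smallest kernel size that can still capture the notions of up, down, left, right, and center.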
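
To make the regular structure concrete, here is a small sketch of how the spatial size evolves. It assumes stride 1 and padding 1 for the 3×3 convolutions and stride 2 for the 2×2 pooling, with five pooling stages (the settings used in the model code later in this post); `conv_out` is a helper name introduced only for this illustration.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output side length of a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


size = 224

# A 3x3 convolution with padding 1 and stride 1 keeps the spatial size.
same_size = conv_out(size, kernel=3, padding=1)
print(same_size)  # 224

# Each 2x2 max pooling with stride 2 halves the side length.
sizes = [size]
for _ in range(5):
    size = conv_out(size, kernel=2, stride=2)
    sizes.append(size)

print(sizes)  # [224, 112, 56, 28, 14, 7]
```

The final 7×7 feature map with 512 channels is what the first fully connected layer consumes, hence its input size of 7 * 7 * 512.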

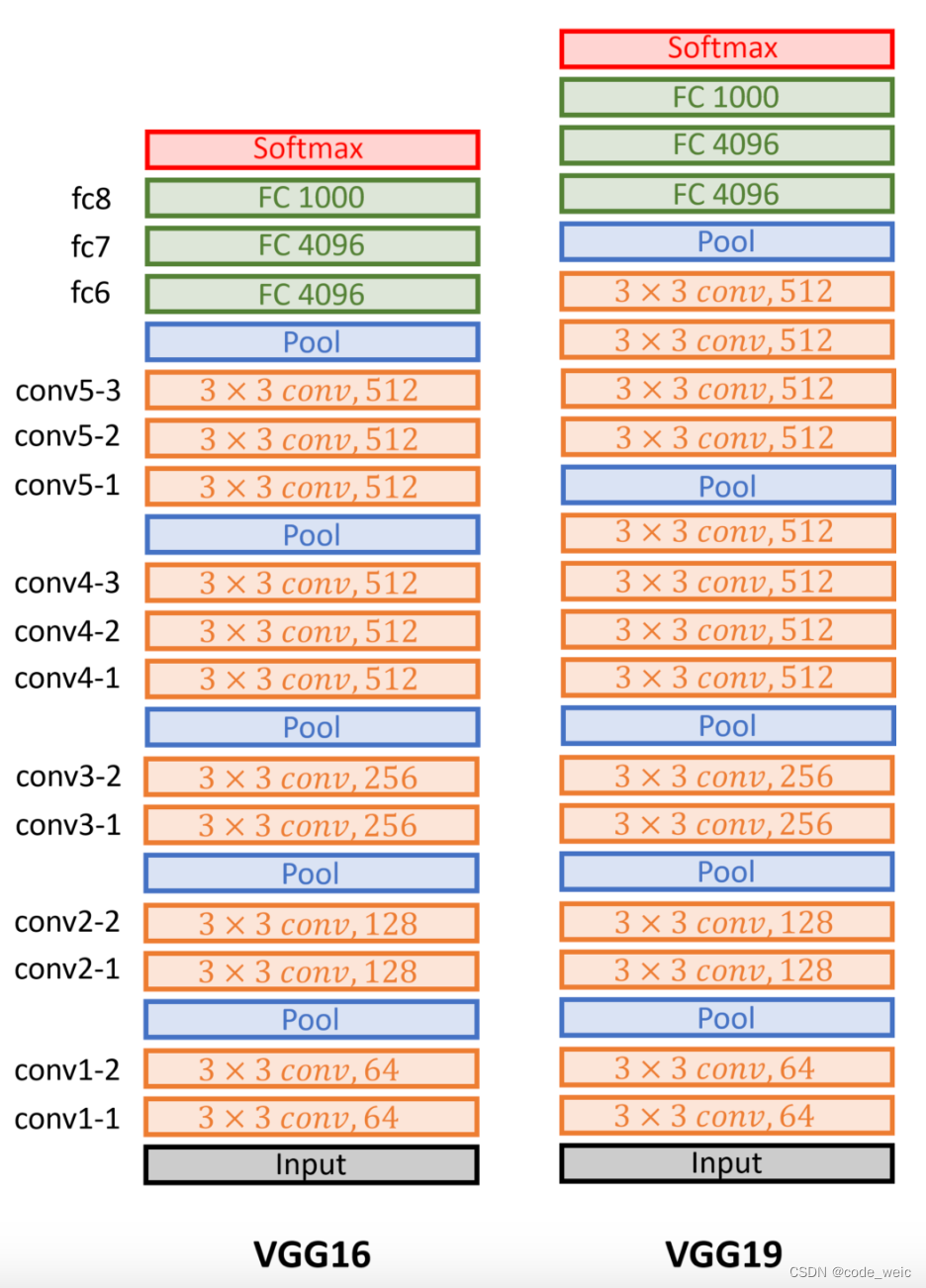

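Compared with earlier networks, VGG16 replaces one 7×7 convolution kernel with three stacked 3×3 kernels, and one 5×5 kernel with two stacked 3×3 kernels. The stack covers the same receptive field with fewer parameters, and it also makes the network deeper.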
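
The receptive-field and parameter claims can be checked with a little arithmetic. The sketch below assumes stride-1 convolutions with the same number of input and output channels and ignores biases; the channel count `C = 64` is a hypothetical value chosen only for illustration.

```python
def stacked_receptive_field(kernel: int, layers: int) -> int:
    """Receptive field of `layers` stacked stride-1 convolutions of size `kernel`."""
    return 1 + layers * (kernel - 1)


def conv_weights(kernel: int, channels: int, layers: int = 1) -> int:
    """Weight count (biases ignored) of `layers` convs with `channels` in and out."""
    return layers * kernel * kernel * channels * channels


C = 64  # hypothetical channel count, for illustration only

# Two 3x3 layers see a 5x5 region; three 3x3 layers see a 7x7 region.
print(stacked_receptive_field(3, 2), stacked_receptive_field(5, 1))  # 5 5
print(stacked_receptive_field(3, 3), stacked_receptive_field(7, 1))  # 7 7

# Weight counts: 18*C^2 vs 25*C^2, and 27*C^2 vs 49*C^2.
print(conv_weights(3, C, layers=2), conv_weights(5, C))  # 73728 102400
print(conv_weights(3, C, layers=3), conv_weights(7, C))  # 110592 200704
```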

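Several VGG blocks are followed by fully connected layers.

Repeating the blocks a different number of times yields different architectures, such as VGG-16 and VGG-19.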
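
One possible way to express this idea in PyTorch is a block-building helper driven by a configuration tuple. This is a sketch, not the code used later in this post: the names `vgg_block`, `make_features`, `count_convs`, `VGG16_ARCH` and `VGG19_ARCH` are introduced here for illustration, and only the convolutional part is built.

```python
import torch
from torch import nn


def vgg_block(num_convs: int, in_channels: int, out_channels: int) -> nn.Sequential:
    """num_convs 3x3 convolutions (each followed by ReLU), then 2x2 max pooling."""
    layers = []
    for _ in range(num_convs):
        layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))
        layers.append(nn.ReLU())
        in_channels = out_channels
    layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
    return nn.Sequential(*layers)


# (number of convolutions, output channels) for each of the five blocks
VGG16_ARCH = ((2, 64), (2, 128), (3, 256), (3, 512), (3, 512))
VGG19_ARCH = ((2, 64), (2, 128), (4, 256), (4, 512), (4, 512))


def make_features(conv_arch, in_channels: int = 3) -> nn.Sequential:
    """Chain the blocks described by conv_arch into one feature extractor."""
    blocks = []
    for num_convs, out_channels in conv_arch:
        blocks.append(vgg_block(num_convs, in_channels, out_channels))
        in_channels = out_channels
    return nn.Sequential(*blocks)


def count_convs(module: nn.Module) -> int:
    """Number of Conv2d layers inside a module."""
    return sum(isinstance(m, nn.Conv2d) for m in module.modules())


features = make_features(VGG16_ARCH)
# 13 convolutions; together with 3 fully connected layers this gives 16.
print(count_convs(features))  # 13

# A small 32x32 input keeps the check cheap: five poolings reduce 32 to 1.
with torch.no_grad():
    out_shape = tuple(features(torch.zeros(1, 3, 32, 32)).shape)
print(out_shape)  # (1, 512, 1, 1)
```

Swapping `VGG16_ARCH` for `VGG19_ARCH` changes only the repeat counts, which is the sense in which different repetitions give different architectures.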

VGG: a bigger and deeper AlexNet.

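Summary:

- VGG builds deep convolutional neural networks from reusable convolutional blocks

- Varying the number of blocks and their hyperparameters yields variants of different complexity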

Code implementation

Dataset: CIFAR-10

model.py

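import torch
from torch import nn


class Vgg16(nn.Module):
    """VGG-16 with a 10-class output layer, for 224x224 RGB inputs."""

    def __init__(self, *args, **kwargs) -> None:
        super().__init__(*args, **kwargs)
        self.model = nn.Sequential(
            # Block 1: two 3x3 convs, 3 -> 64 channels, then 224 -> 112
            nn.Conv2d(3, 64, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(64, 64, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
            # Block 2: two 3x3 convs, 64 -> 128 channels, then 112 -> 56
            nn.Conv2d(64, 128, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(128, 128, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
            # Block 3: three 3x3 convs, 128 -> 256 channels, then 56 -> 28
            nn.Conv2d(128, 256, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
            # Block 4: three 3x3 convs, 256 -> 512 channels, then 28 -> 14
            nn.Conv2d(256, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
            # Block 5: three 3x3 convs, 512 channels, then 14 -> 7
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
            nn.Flatten(),
            # Classifier. A ReLU follows each of the first two linear layers;
            # without it the three linear layers reduce to one linear map.
            nn.Linear(7 * 7 * 512, 4096),
            nn.ReLU(),
            nn.Dropout(0.5),
            nn.Linear(4096, 4096),
            nn.ReLU(),
            nn.Dropout(0.5),
            nn.Linear(4096, 10),
        )

    def forward(self, x):
        return self.model(x)


# Sanity-check the model
if __name__ == '__main__':
    net = Vgg16()
    # A batch of 64 RGB images at the expected 224x224 resolution
    x = torch.ones((64, 3, 224, 224))
    output = net(x)
    # One row of 10 class scores per image
    print(output.shape)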
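
To get a feel for the size of this model, the parameter count can be worked out by hand from the layer shapes listed in the class above. The sketch below is plain arithmetic (weights plus biases) and does not need PyTorch; the list names are introduced only for this illustration.

```python
# (in_channels, out_channels) of the 13 convolutions in the Vgg16 class
conv_channels = [
    (3, 64), (64, 64),
    (64, 128), (128, 128),
    (128, 256), (256, 256), (256, 256),
    (256, 512), (512, 512), (512, 512),
    (512, 512), (512, 512), (512, 512),
]
# (in_features, out_features) of the three linear layers
linear_shapes = [(7 * 7 * 512, 4096), (4096, 4096), (4096, 10)]

# Each 3x3 conv has 3*3*c_in*c_out weights plus c_out biases.
conv_params = sum(3 * 3 * c_in * c_out + c_out for c_in, c_out in conv_channels)
# Each linear layer has n_in*n_out weights plus n_out biases.
linear_params = sum(n_in * n_out + n_out for n_in, n_out in linear_shapes)

print(conv_params)                  # 14714688
print(linear_params)                # 119586826
print(conv_params + linear_params)  # 134301514
```

Most of the parameters sit in the first fully connected layer, not in the convolutional blocks.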

train.py

import os

import torch
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter
from torchvision import datasets, transforms

from model import Vgg16

# Number of passes over the training set
epochs = 3
# Batch size
batch = 64
# Learning rate
learning_rate = 0.01
# Number of training steps taken so far
train_step = 0
# Number of test steps taken so far
test_step = 0

# Image transform: upscale the 32x32 CIFAR-10 images to 224x224
transform = transforms.Compose([
    transforms.Resize(224),
    transforms.ToTensor()
])

# Read the data
train_dataset = datasets.CIFAR10(root="./dataset", train=True, transform=transform, download=True)
test_dataset = datasets.CIFAR10(root="./dataset", train=False, transform=transform, download=True)

# Wrap the datasets in loaders (the test set does not need shuffling)
train_dataloader = DataLoader(train_dataset, batch_size=batch, shuffle=True, num_workers=0)
test_dataloader = DataLoader(test_dataset, batch_size=batch, shuffle=False, num_workers=0)

# Dataset sizes
train_size = len(train_dataset)
test_size = len(test_dataset)
print("Training set size: {}".format(train_size))
print("Test set size: {}".format(test_size))

# Device: Apple GPU (MPS) if available, otherwise CPU
device = torch.device("mps" if torch.backends.mps.is_available() else "cpu")
print(device)

# Create the network
net = Vgg16()
net = net.to(device)

# Loss function
loss = nn.CrossEntropyLoss()
loss = loss.to(device)

# Optimizer
optimizer = torch.optim.SGD(net.parameters(), lr=learning_rate)

writer = SummaryWriter("logs")

# Training
for epoch in range(epochs):
    print("------------------- Epoch {} training starts -------------------".format(epoch + 1))
    net.train()
    for data in train_dataloader:
        train_step = train_step + 1
        images, targets = data
        images = images.to(device)
        targets = targets.to(device)

        outputs = net(images)
        loss_out = loss(outputs, targets)

        optimizer.zero_grad()
        loss_out.backward()
        optimizer.step()

        if train_step % 100 == 0:
            writer.add_scalar("Train Loss", scalar_value=loss_out.item(), global_step=train_step)
            print("Training step: {}, Loss: {}".format(train_step, loss_out.item()))

    # Evaluation
    net.eval()
    total_loss = 0.0
    total_accuracy = 0
    with torch.no_grad():
        for data in test_dataloader:
            test_step = test_step + 1
            images, targets = data
            images = images.to(device)
            targets = targets.to(device)

            outputs = net(images)
            loss_out = loss(outputs, targets)
            # .item() converts the tensors to plain Python numbers
            total_loss = total_loss + loss_out.item()
            accuracy = (targets == torch.argmax(outputs, dim=1)).sum().item()
            total_accuracy = total_accuracy + accuracy

    # Compute the accuracy (correct predictions / test set size)
    print(total_accuracy)
    accuracy_rate = total_accuracy / test_size
    print("Epoch {}, total test loss: {}".format(epoch + 1, total_loss))
    print("Epoch {}, accuracy: {}".format(epoch + 1, accuracy_rate))
    writer.add_scalar("Test Total Loss", scalar_value=total_loss, global_step=epoch + 1)
    writer.add_scalar("Accuracy Rate", scalar_value=accuracy_rate, global_step=epoch + 1)

    # torch.save does not create missing directories
    os.makedirs("./model", exist_ok=True)
    torch.save(net, "./model/net_{}.pth".format(epoch + 1))
    print("Model net_{}.pth saved".format(epoch + 1))

writer.close()
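
The script saves the whole module object with `torch.save(net, ...)`, which pickles the object and needs the `Vgg16` class to be importable when the file is loaded. A commonly used alternative is to save only the `state_dict`. The sketch below shows that pattern on a tiny stand-in network so it runs quickly; the stand-in model and the file name `net_weights.pth` are hypothetical, and the same calls should apply to `Vgg16`.

```python
import os
import tempfile

import torch
from torch import nn


def build_net() -> nn.Sequential:
    """Tiny stand-in model (hypothetical); replace with Vgg16() in practice."""
    return nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))


net = build_net()

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "net_weights.pth")

    # Save only the parameters and buffers, not the pickled module object.
    torch.save(net.state_dict(), path)

    # Rebuild the architecture in code, then load the saved weights into it.
    restored = build_net()
    restored.load_state_dict(torch.load(path, map_location="cpu"))

# Switch to evaluation mode before inference (matters for Dropout in Vgg16).
restored.eval()
x = torch.ones(1, 4)
with torch.no_grad():
    same = torch.equal(net(x), restored(x))
print(same)  # True
```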