This article is a translation of https://github.com/WongKinYiu/yolov7

YOLOv7


Released in 2022. Paper: YOLOv7: Trainable bag-of-freebies sets new state-of-the-art for real-time object detectors


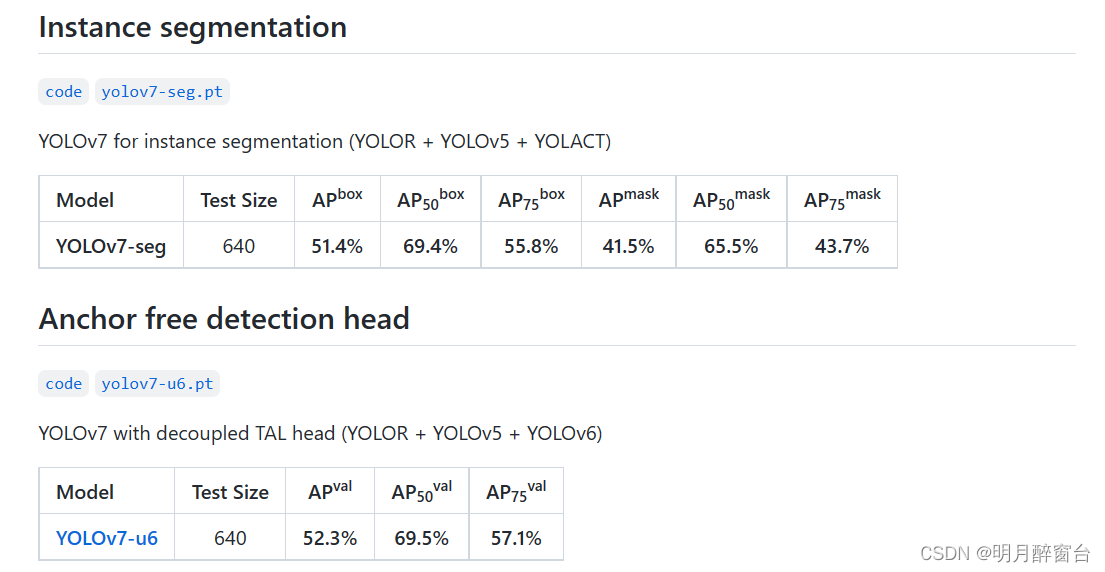

Model performance:


Web demo: Hugging Face Spaces


Model results:


Installation:

- Recommended: Docker environment

- Commands:

# create the Docker container; increase the shared memory size (--shm-size) if you have more available

nvidia-docker run --name yolov7 -it -v your_coco_path/:/coco/ -v your_code_path/:/yolov7 --shm-size=64g nvcr.io/nvidia/pytorch:21.08-py3

# apt install required packages

apt update

apt install -y zip htop screen libgl1-mesa-glx

# pip install required packages

pip install seaborn thop

# go to code folder

cd /yolov7
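Before running the scripts below, it can help to confirm that the packages from the install step are importable inside the container. The following is a minimal sketch, not part of the repository; the `REQUIRED` tuple and the `missing_packages` helper are hypothetical names, and `torch` is included on the assumption that the NGC PyTorch image already provides it.

```python
import importlib.util

# Packages from the pip step above, plus torch (assumed to ship with the image).
REQUIRED = ("torch", "seaborn", "thop")


def missing_packages(names=REQUIRED):
    """Return the top-level package names that cannot be found on the import path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("missing: " + ", ".join(missing))
    else:
        print("all required packages found")
```

If anything is reported as missing, re-running the `pip install` line above inside the container is the likely fix.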

Testing:

yolov7.pt … yolov7x.pt … yolov7-w6.pt … yolov7-e6.pt … yolov7-d6.pt … yolov7-e6e.pt

Run from the command line:

python test.py --data data/coco.yaml --img 640 --batch 32 --conf 0.001 --iou 0.65 --device 0 --weights yolov7.pt --name yolov7_640_val

You should get results similar to the following:

Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.51206

Average Precision (AP) @[ IoU=0.50 | area= all | maxDets=100 ] = 0.69730

Average Precision (AP) @[ IoU=0.75 | area= all | maxDets=100 ] = 0.55521

Average Precision (AP) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.35247

Average Precision (AP) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.55937

Average Precision (AP) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.66693

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 1 ] = 0.38453

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 10 ] = 0.63765

Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.68772

Average Recall (AR) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.53766

Average Recall (AR) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.73549

Average Recall (AR) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.83868

To measure accuracy, download the COCO annotations for pycocotools to ./coco/annotations/instances_val2017.json
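The `IoU=0.50:0.95` entries in the report above average the metric over ten IoU thresholds (0.50, 0.55, ..., 0.95). The sketch below only illustrates what such a threshold means for a single predicted box and a single ground-truth box; it is a simplified illustration, not the matching procedure pycocotools actually runs, and `box_iou` and `thresholds_matched` are hypothetical helper names.

```python
def box_iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# The ten COCO-style thresholds: 0.50, 0.55, ..., 0.95.
COCO_IOU_THRESHOLDS = [round(0.50 + 0.05 * i, 2) for i in range(10)]


def thresholds_matched(pred, gt):
    """Thresholds at which `pred` would count as a match for `gt`."""
    iou = box_iou(pred, gt)
    return [t for t in COCO_IOU_THRESHOLDS if iou >= t]


if __name__ == "__main__":
    gt = (0, 0, 100, 100)
    pred = (10, 0, 110, 100)  # same size, shifted 10 px to the right
    print(round(box_iou(pred, gt), 4))
    print(thresholds_matched(pred, gt))
```

A box that overlaps well at IoU 0.50 can still miss at 0.90, which is why the averaged AP is lower than the AP at IoU=0.50 alone.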

Training:

- Data preparation

bash scripts/get_coco.sh

This downloads the MS COCO dataset images (train, val, test) and labels. If you have previously used a different version of YOLO, we strongly recommend deleting the train2017.cache and val2017.cache files and re-downloading the labels.
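Deleting the stale cache files can be scripted. The sketch below searches a dataset directory for the two file names mentioned above and removes them; the `remove_label_caches` helper and the `coco` directory used in the example are assumptions, so adjust the path to wherever your dataset actually lives.

```python
from pathlib import Path


def remove_label_caches(dataset_root, names=("train2017.cache", "val2017.cache")):
    """Delete the named cache files anywhere under dataset_root.

    Returns the list of paths that were removed.
    """
    root = Path(dataset_root)
    removed = []
    if not root.is_dir():
        return removed
    for name in names:
        # Materialize the matches first so deletion does not disturb the walk.
        for path in list(root.rglob(name)):
            if path.is_file():
                path.unlink()
                removed.append(path)
    return removed


if __name__ == "__main__":
    # "coco" is a hypothetical dataset location.
    for path in remove_label_caches("coco"):
        print("removed", path)
```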

- Single-GPU training:

# train p5 models

python train.py --workers 8 --device 0 --batch-size 32 --data data/coco.yaml --img 640 640 --cfg cfg/training/yolov7.yaml --weights '' --name yolov7 --hyp data/hyp.scratch.p5.yaml

# train p6 models

python train_aux.py --workers 8 --device 0 --batch-size 16 --data data/coco.yaml --img 1280 1280 --cfg cfg/training/yolov7-w6.yaml --weights '' --name yolov7-w6 --hyp data/hyp.scratch.p6.yaml

- Multi-GPU training:

# train p5 models

python -m torch.distributed.launch --nproc_per_node 4 --master_port 9527 train.py --workers 8 --device 0,1,2,3 --sync-bn --batch-size 128 --data data/coco.yaml --img 640 640 --cfg cfg/training/yolov7.yaml --weights '' --name yolov7 --hyp data/hyp.scratch.p5.yaml

# train p6 models

python -m torch.distributed.launch --nproc_per_node 8 --master_port 9527 train_aux.py --workers 8 --device 0,1,2,3,4,5,6,7 --sync-bn --batch-size 128 --data data/coco.yaml --img 1280 1280 --cfg cfg/training/yolov7-w6.yaml --weights '' --name yolov7-w6 --hyp data/hyp.scratch.p6.yaml
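The multi-GPU commands above follow one pattern: `--nproc_per_node` matches the number of entries in `--device`, and `--batch-size` is divisible by that number. The sketch below assembles such a command for a given GPU count. `build_launch_command` is a hypothetical helper, and the per-GPU batch size it prints rests on the assumption that `--batch-size` is the total across all GPUs.

```python
import shlex


def build_launch_command(script, num_gpus, total_batch_size, train_args, master_port=9527):
    """Assemble a torch.distributed.launch command as a list of arguments."""
    if total_batch_size % num_gpus:
        raise ValueError("total batch size must be divisible by the GPU count")
    devices = ",".join(str(i) for i in range(num_gpus))
    return [
        "python", "-m", "torch.distributed.launch",
        "--nproc_per_node", str(num_gpus),
        "--master_port", str(master_port),
        script,
        "--device", devices,
        "--sync-bn",
        "--batch-size", str(total_batch_size),
        *train_args,
    ]


if __name__ == "__main__":
    p5_args = [
        "--workers", "8", "--data", "data/coco.yaml", "--img", "640", "640",
        "--cfg", "cfg/training/yolov7.yaml", "--weights", "", "--name", "yolov7",
        "--hyp", "data/hyp.scratch.p5.yaml",
    ]
    cmd = build_launch_command("train.py", 4, 128, p5_args)
    # Quote each argument so the empty --weights value survives copy-paste.
    print(" ".join(shlex.quote(arg) for arg in cmd))
    print("per-GPU batch size (assumed):", 128 // 4)
```

The returned list can be passed directly to `subprocess.run` if you prefer to launch training from Python.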

Transfer learning:

yolov7_training.pt … yolov7x_training.pt … yolov7-w6_training.pt … yolov7-e6_training.pt … yolov7-d6_training.pt … yolov7-e6e_training.pt

Single-GPU fine-tuning on a custom dataset:

# finetune p5 models

python train.py --workers 8 --device 0 --batch-size 32 --data data/custom.yaml --img 640 640 --cfg cfg/training/yolov7-custom.yaml --weights 'yolov7_training.pt' --name yolov7-custom --hyp data/hyp.scratch.custom.yaml

# finetune p6 models

python train_aux.py --workers 8 --device 0 --batch-size 16 --data data/custom.yaml --img 1280 1280 --cfg cfg/training/yolov7-w6-custom.yaml --weights 'yolov7-w6_training.pt' --name yolov7-w6-custom --hyp data/hyp.scratch.custom.yaml
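The fine-tuning commands above expect a `data/custom.yaml` file describing your dataset. The sketch below generates one as plain text. The key names (`train`, `val`, `nc`, `names`) are an assumption modeled on the layout of `data/coco.yaml`, and the paths and class names in the example are hypothetical placeholders; check them against the repository's own data files before training.

```python
def render_dataset_yaml(train, val, class_names):
    """Render a minimal dataset description as YAML text.

    Class names are wrapped in single quotes, so names that themselves
    contain a single quote are not supported by this sketch.
    """
    names = ", ".join("'{}'".format(name) for name in class_names)
    return (
        "train: {}\n"
        "val: {}\n"
        "nc: {}\n"
        "names: [{}]\n"
    ).format(train, val, len(class_names), names)


if __name__ == "__main__":
    # Hypothetical image list files and class names.
    text = render_dataset_yaml(
        "./custom/train.txt",
        "./custom/val.txt",
        ["cat", "dog"],
    )
    print(text, end="")
```

The class count in the model config passed via `--cfg` presumably has to agree with `nc` here.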

Re-parameterization:

See reparameterization.ipynb

Inference:

- On video:

python detect.py --weights yolov7.pt --conf 0.25 --img-size 640 --source yourvideo.mp4

- On image:

python detect.py --weights yolov7.pt --conf 0.25 --img-size 640 --source inference/images/horses.jpg

Export:

- PyTorch to ONNX with NMS (and inference)

python export.py --weights yolov7-tiny.pt --grid --end2end --simplify --topk-all 100 --iou-thres 0.65 --conf-thres 0.35 --img-size 640 640 --max-wh 640

- PyTorch to TensorRT with NMS (and inference)

wget https://github.com/WongKinYiu/yolov7/releases/download/v0.1/yolov7-tiny.pt

python export.py --weights ./yolov7-tiny.pt --grid --end2end --simplify --topk-all 100 --iou-thres 0.65 --conf-thres 0.35 --img-size 640 640

git clone https://github.com/Linaom1214/tensorrt-python.git

python ./tensorrt-python/export.py -o yolov7-tiny.onnx -e yolov7-tiny-nms.trt -p fp16

- PyTorch to TensorRT, an alternative way:

wget https://github.com/WongKinYiu/yolov7/releases/download/v0.1/yolov7-tiny.pt

python export.py --weights yolov7-tiny.pt --grid --include-nms

git clone https://github.com/Linaom1214/tensorrt-python.git

python ./tensorrt-python/export.py -o yolov7-tiny.onnx -e yolov7-tiny-nms.trt -p fp16

# Or use trtexec to convert ONNX to TensorRT engine

/usr/src/tensorrt/bin/trtexec --onnx=yolov7-tiny.onnx --saveEngine=yolov7-tiny-nms.trt --fp16

Tested with: Python 3.7.13, PyTorch 1.12.0+cu113
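The ONNX exports above use `--img-size 640 640`, so an image of any other size has to be resized before inference and the predicted boxes mapped back afterwards. A common approach in YOLO-style pipelines is a letterbox resize: scale the image to fit while keeping its aspect ratio, then pad the remainder. The sketch below covers only the geometry, under the assumption that padding is split evenly on both sides; `letterbox_params` and `to_original_box` are hypothetical helpers, and nothing here assumes the layout of the exported model's outputs.

```python
def letterbox_params(orig_w, orig_h, new_w=640, new_h=640):
    """Return (scale, pad_x, pad_y) for an aspect-preserving resize with centered padding."""
    scale = min(new_w / orig_w, new_h / orig_h)
    resized_w = round(orig_w * scale)
    resized_h = round(orig_h * scale)
    pad_x = (new_w - resized_w) / 2
    pad_y = (new_h - resized_h) / 2
    return scale, pad_x, pad_y


def to_original_box(box, scale, pad_x, pad_y):
    """Map an (x1, y1, x2, y2) box from letterboxed coordinates back to the source image."""
    x1, y1, x2, y2 = box
    return (
        (x1 - pad_x) / scale,
        (y1 - pad_y) / scale,
        (x2 - pad_x) / scale,
        (y2 - pad_y) / scale,
    )


if __name__ == "__main__":
    # A 1280x720 frame is scaled by 0.5 and padded above and below.
    scale, pad_x, pad_y = letterbox_params(1280, 720)
    print(scale, pad_x, pad_y)
    print(to_original_box((0, 140, 640, 500), scale, pad_x, pad_y))
```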

Pose estimation

code … yolov7-w6-pose.pt

See keypoint.ipynb.

Instance segmentation

code … yolov7-mask.pt

See instance.ipynb.

Other:

References

- https://github.com/AlexeyAB/darknet

- https://github.com/WongKinYiu/yolor

- https://github.com/WongKinYiu/PyTorch_YOLOv4

- https://github.com/WongKinYiu/ScaledYOLOv4

- https://github.com/Megvii-BaseDetection/YOLOX

- https://github.com/ultralytics/yolov3

- https://github.com/ultralytics/yolov5

- https://github.com/DingXiaoH/RepVGG

- https://github.com/JUGGHM/OREPA_CVPR2022

- https://github.com/TexasInstruments/edgeai-yolov5/tree/yolo-pose