Kubernetes资源调度之节点亲和

Pod节点选择器

nodeSelector指定的标签选择器过滤符合条件的节点作为可用目标节点,最终选择则基于打分机制完成。因此,后者也称为节点选择器。用户事先为特定部分的Node资源对象设定好标签,而后即可配置Pod通过节点选择器实现类似于节点的强制亲和调度。

可以通过下面的命令查看每个node上的标签

[root@k8s-01 ~]# kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-01 Ready control-plane,master 26d v1.22.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/fluentd-ds-ready=true,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-01,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node-role.kubernetes.io/master=,node.kubernetes.io/exclude-from-external-load-balancers=

k8s-02 Ready <none> 26d v1.22.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/fluentd-ds-ready=true,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-02,kubernetes.io/os=linux,type=kong,ype=kong

k8s-03 Ready <none> 26d v1.22.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/fluentd-ds-ready=true,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-03,kubernetes.io/os=linux,type=kong

k8s-04 Ready <none> 8d v1.22.3 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-04,kubernetes.io/os=linux

[root@k8s-01 ~]#给node4节点打上标签

[root@k8s-01 ~]# kubectl label nodes k8s-04 type=test

node/k8s-04 labeled测试的yaml

echo "

apiVersion: v1

kind: Pod

metadata:

labels:

app: nginx-test

name: nginx-test

spec:

containers:

- image: nginx

name: nginx-test

nodeSelector:

type: test

" | kubectl apply -f -查看pod的分配情况

[root@k8s-01 ~]# kubectl get pods -o wide |grep nginx-test

nginx-test 1/1 Running 0 58s 10.244.7.81 k8s-04 <none> <none>

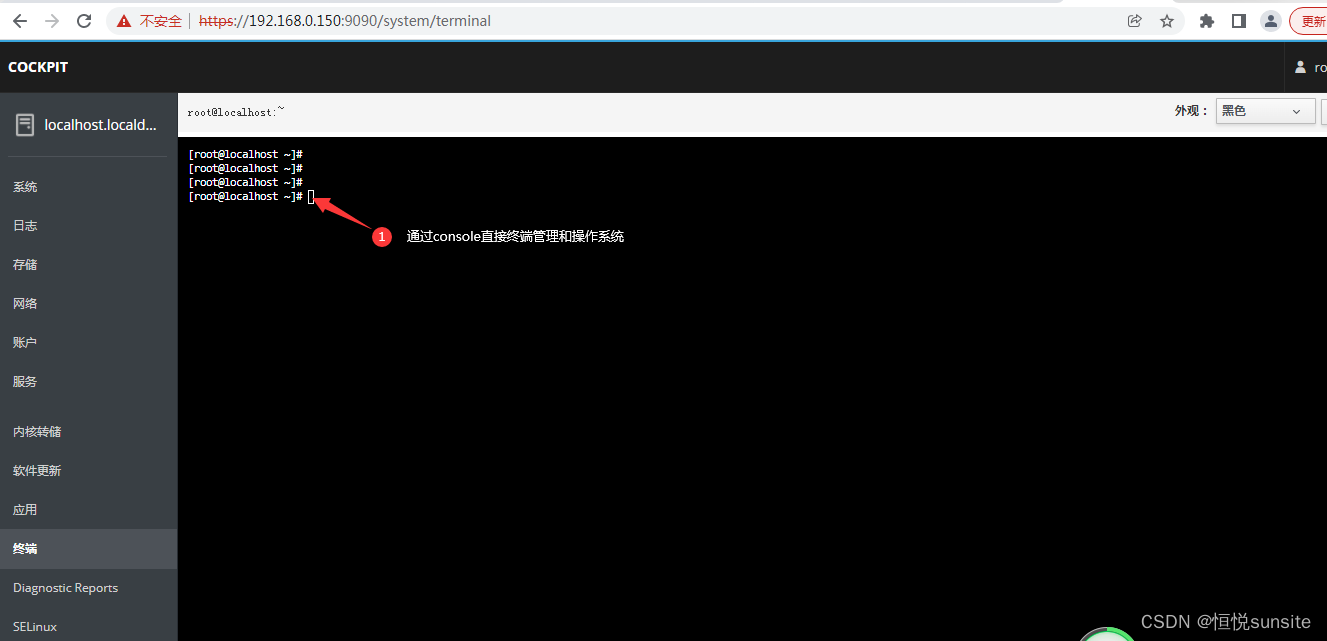

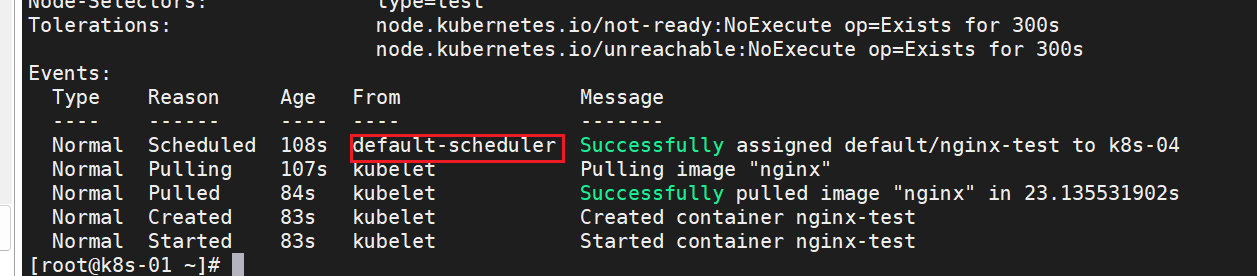

[root@k8s-01 ~]#然后我们可以通过 describe 命令查看调度结果:

事实上,多数情况下用户都无须关心Pod对象的具体运行位置,除非Pod依赖的特殊条件仅能由部分节点满足时,例如GPU和SSD等。即便如此,也应该尽量避免使用.spec.nodeName静态指定Pod对象的运行位置,而是应该让调度器基于标签和标签选择器为Pod挑选匹配的工作节点。另外,Pod规范中的.spec.nodeSelector仅支持简单等值关系的节点选择器,而.spec.affinity.nodeAffinity支持更灵活的节点选择器表达式,而且可以实现硬亲和与软亲和逻辑。

节点亲和调度

节点亲和性(nodeAffinity)主要是用来控制 Pod 要部署在哪些节点上,以及不能部署在哪些节点上的,它可以进行一些简单的逻辑组合了,不只是简单的相等匹配。在Pod上定义节点亲和规则时有两种类型的节点亲和关系:强制(required)亲和和首选(preferred)亲和,或分别称为硬亲和与软亲和。

- 强制亲和限定了调度Pod资源时必须要满足的规则,无可用节点时Pod对象会被置为Pending状态,直到满足规则的节点出现。

- 柔性亲和规则实现的是一种柔性调度限制,它同样倾向于将Pod运行在某类特定的节点之上,但无法满足调度需求时,调度器将选择一个无法匹配规则的节点,而非将Pod置于Pending状态。

在Pod规范上定义节点亲和规则的关键点有两个:

- 一是给节点规划并配置合乎期望的标签;

- 二是为Pod对象定义合理的标签选择器。

正如preferredDuringSchedulingIgnoredDuringExecution和requiredDuringSchedulingIgnoredDuringExecution字段名字中的后半段符串IgnoredDuringExecution隐含的意义所指,在Pod资源基于节点亲和规则调度至某节点之后,因节点标签发生了改变而变得不再符合Pod定义的亲和规则时,调度器也不会将Pod从此节点上移出,因而亲和调度仅在调度执行的过程中进行一次即时的判断,而非持续地监视亲和规则是否能够得以满足。

查看官方说明:

[root@k8s-01 ~]# kubectl explain pod.spec.affinity.nodeAffinity

KIND: Pod

VERSION: v1

RESOURCE: nodeAffinity <Object>

DESCRIPTION:

Describes node affinity scheduling rules for the pod.

Node affinity is a group of node affinity scheduling rules.

FIELDS:

preferredDuringSchedulingIgnoredDuringExecution <[]Object>

The scheduler will prefer to schedule pods to nodes that satisfy the

affinity expressions specified by this field, but it may choose a node that

violates one or more of the expressions. The node that is most preferred is

the one with the greatest sum of weights, i.e. for each node that meets all

of the scheduling requirements (resource request, requiredDuringScheduling

affinity expressions, etc.), compute a sum by iterating through the

elements of this field and adding "weight" to the sum if the node matches

the corresponding matchExpressions; the node(s) with the highest sum are

the most preferred.

requiredDuringSchedulingIgnoredDuringExecution <Object>

If the affinity requirements specified by this field are not met at

scheduling time, the pod will not be scheduled onto the node. If the

affinity requirements specified by this field cease to be met at some point

during pod execution (e.g. due to an update), the system may or may not try

to eventually evict the pod from its node.

[root@k8s-01 ~]#强制亲和

查看官方说明:

[root@k8s-01 ~]# kubectl explain pod.spec.affinity.nodeAffinity.requiredDuringSchedulingIgnoredDuringExecution

KIND: Pod

VERSION: v1

RESOURCE: requiredDuringSchedulingIgnoredDuringExecution <Object>

DESCRIPTION:

If the affinity requirements specified by this field are not met at

scheduling time, the pod will not be scheduled onto the node. If the

affinity requirements specified by this field cease to be met at some point

during pod execution (e.g. due to an update), the system may or may not try

to eventually evict the pod from its node.

A node selector represents the union of the results of one or more label

queries over a set of nodes; that is, it represents the OR of the selectors

represented by the node selector terms.

FIELDS:

nodeSelectorTerms <[]Object> -required-

Required. A list of node selector terms. The terms are ORed.

[root@k8s-01 ~]# kubectl explain pod.spec.affinity.nodeAffinity.requiredDuringSchedulingIgnoredDuringExecution.nodeSelectorTerms

KIND: Pod

VERSION: v1

RESOURCE: nodeSelectorTerms <[]Object>

DESCRIPTION:

Required. A list of node selector terms. The terms are ORed.

A null or empty node selector term matches no objects. The requirements of

them are ANDed. The TopologySelectorTerm type implements a subset of the

NodeSelectorTerm.

FIELDS:

matchExpressions <[]Object>

A list of node selector requirements by node's labels.

matchFields <[]Object>

A list of node selector requirements by node's fields.

[root@k8s-01 ~]#- matchExpressions:标签选择器表达式,基于节点标签进行过滤;可重复使用以表达不同的匹配条件,各条件间为“或”关系。▪

- matchFields:以字段选择器表达的节点选择器;可重复使用以表达不同的匹配条件,各条件间为“或”关系。

注意:每个匹配条件可由一到多个匹配规则组成,例如某个matchExpressions条件下可同时存在两个表达式规则,如下面的示例所示,同一条件下的各条规则彼此间为“逻辑与”关系。这意味着某节点满足nodeSelectorTerms中的任意一个条件即可,但满足某个条件指的是可完全匹配该条件下定义的所有规则。

下面开始测试,给node3打上标签

[root@k8s-01 ~]# kubectl label nodes k8s-03 disktype=ssd

node/k8s-03 labeled测试yaml

apiVersion: v1

kind: Pod

metadata:

name: "busy-affinity"

labels:

app: "busy-affinity"

spec:

containers:

- name: busy-affinity

image: "busybox"

command: ["/bin/sh","-c","sleep 600"]

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution: ## 硬标准,后面是调度期间有效,忽略执行期间

nodeSelectorTerms:

- matchExpressions:

- key: disktype

values: ["ssd","hdd"]

operator: In查看pod

[root@k8s-01 ~]# kubectl get pods -o wide |grep busy-affinity

busy-affinity 1/1 Running 0 76s 10.244.165.209 k8s-03 <none> <none>

[root@k8s-01 ~]#从结果可以看出 Pod 被部署到了 node3 节点上,因为我们的硬策略就是部署到含有"ssd","hdd"标签的node节点上,所以会尽量满足。这里的匹配逻辑是 label 标签的值在某个列表中,现在 Kubernetes 提供的操作符有下面的几种:

- In:label 的值在某个列表中

- NotIn:label 的值不在某个列表中

- Gt:label 的值大于某个值

- Lt:label 的值小于某个值

- Exists:某个 label 存在

- DoesNotExist:某个 label 不存在

下面给node3和node4加上所有的标签,用于测试

[root@k8s-01 ~]# kubectl label nodes k8s-04 disktype=hdd

node/k8s-04 labeled

[root@k8s-01 ~]# kubectl label nodes k8s-04 disk=60

node/k8s-04 labeled

[root@k8s-01 ~]# kubectl label nodes k8s-04 gpu=3080

node/k8s-04 labeled

[root@k8s-01 ~]# kubectl label nodes k8s-03 gpu=3090

node/k8s-03 labeled

[root@k8s-01 ~]# kubectl label nodes k8s-03 disk=30

node/k8s-03 labeled

[root@k8s-01 ~]#柔性亲和

节点首选亲和机制为节点选择机制提供了一种柔性控制逻辑,被调度的Pod对象不再是“必须”,而是“应该”放置到某些特定节点之上,但条件不满足时,该Pod也能够接受被编排到其他不符合条件的节点之上。另外,多个柔性亲和条件并存时,它还支持为每个条件定义weight属性以区别它们优先级,取值范围是1~100,数字越大优先级越高。

修改后的yaml,加了柔性亲和

apiVersion: v1

kind: Pod

metadata:

name: "busy-affinity"

labels:

app: "busy-affinity"

spec:

containers:

- name: busy-affinity

image: "busybox"

command: ["/bin/sh","-c","sleep 600"]

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution: ## 硬标准,后面是调度期间有效,忽略执行期间

nodeSelectorTerms:

- matchExpressions:

- key: disktype

values: ["ssd","hdd"]

operator: In

preferredDuringSchedulingIgnoredDuringExecution: ## 软性分

- preference: ## 指定我们喜欢的条件

matchExpressions:

- key: disk

values: ["40"]

operator: Gt ## node3的disk不满足这个条件,node4满足

weight: 70 ## 权重 0-100

- preference: ## 指定我们喜欢的条件

matchExpressions:

- key: gpu

values: ["3080"]

operator: Gt ## node4的gpu不满足这个条件,node3满足

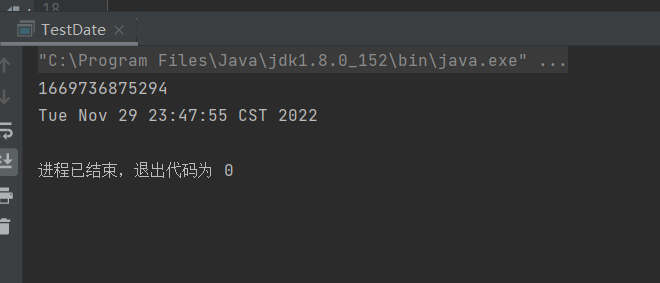

weight: 30 ## 权重 0-100因为在硬性条件中,node3和node4都满足硬性条件,在查看一下软性条件,其中node3满足第二个,node4满足第一个,但是第一个的权重高一些,所以最终选择了node4,查看运行的pods

[root@k8s-01 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

busy-affinity 1/1 Running 0 34s 10.244.7.82 k8s-04 <none> <none>

counter 1/1 Running 0 8d 10.244.165.198 k8s-03 <none> <none>

nfs-client-provisioner-69b76b8dc6-ms4xg 1/1 Running 1 (14d ago) 26d 10.244.179.21 k8s-02 <none> <none>

nginx-5759cb8dcc-t4sdn 1/1 Running 0 7d6h 10.244.179.50 k8s-02 <none> <none>

nginx-liveness 1/1 Running 2 (5d17h ago) 5d17h 10.244.61.218 k8s-01 <none> <none>

nginx-nginx 1/1 Running 0 5d17h 10.244.179.3 k8s-02 <none> <none>

nginx-readiness 1/1 Running 0 5d17h 10.244.61.219 k8s-01 <none> <none>

nginx-test 1/1 Running 0 45m 10.244.7.81 k8s-04 <none> <none>

post-test 1/1 Running 0 5d18h 10.244.179.62 k8s-02 <none> <none>