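Table of contents

- k8s concepts

- Installation and deployment

- Passwordless SSH, hosts, disabling swap, and enabling IPv4 forwarding

- Pre-installation setup script

- Enabling ip_vs

- Installing a specific Docker version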

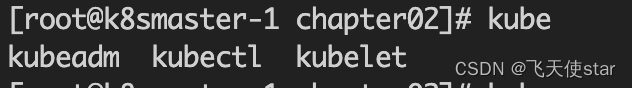

- Installing kubeadm, kubectl, and kubelet

- Single-master k8s deployment

- Reference links

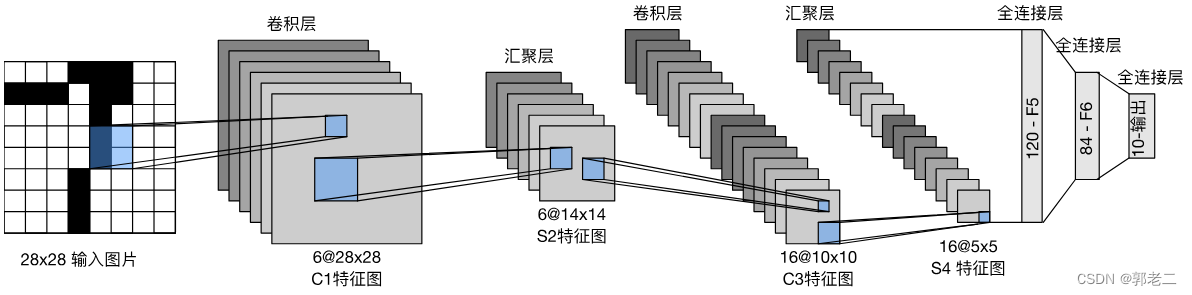

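k8s concepts

K8sMaster: the control-plane node that manages the K8sNode machines.

K8sNode: a worker node that runs the containerized services. It has a Docker environment and the k8s node components (kubelet, kube-proxy).

Controller-manager: the brain of k8s. It monitors and manages the state of the whole cluster through the API Server and keeps the cluster in its desired state.

API Server: exposes HTTP REST interfaces for creating, deleting, updating, querying, and watching every kind of k8s resource object (Pod, ReplicationController, Service, and so on). It is the data bus and data hub of the whole system.

etcd: a highly available, strongly consistent key-value store. In a Kubernetes cluster, etcd is mainly used for shared configuration and service discovery.

Scheduler: finds the most suitable node in the cluster for each newly created pod and schedules the pod onto that K8sNode.

kubelet: the bridge between the Kubernetes master and each node. It handles the tasks the master assigns to its node and manages the pods and the containers inside them.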

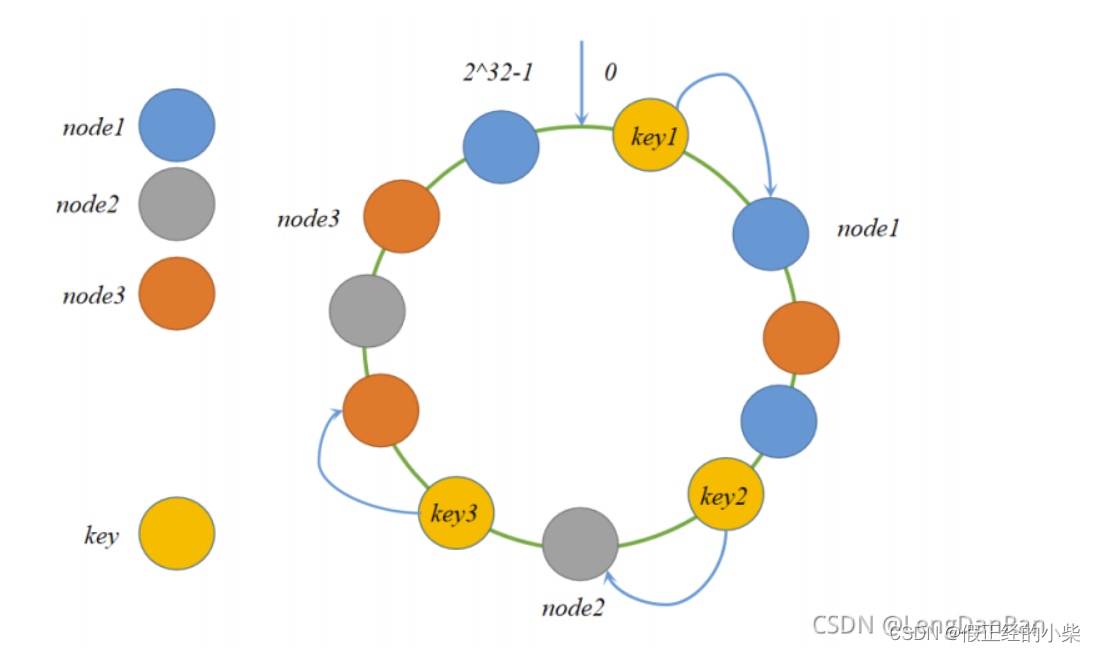

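kube-proxy: a network proxy component that runs on every Kubernetes worker node and maintains the network rules on that node. These rules allow network sessions from inside or outside the cluster to reach pods. kube-proxy watches the API server for changes to resource objects and configures load balancing across the backends of each service.

Pod: a packaged environment for a group of containers. In a Kubernetes cluster, the pod is the basis of every workload type and the smallest unit that k8s manages. A pod combines one or more containers that share storage, network, and namespaces, along with a specification of how to run them. (k8s = school, pod = class, container = student)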

Installation and deployment

Passwordless SSH, hosts, disabling swap, and enabling IPv4 forwarding

... (omitted) ...

Settings to note in /etc/ssh/sshd_config

ChallengeResponseAuthentication no

PermitRootLogin no

PasswordAuthentication yes

PubkeyAuthentication yes

/etc/hosts

192.168.100.8 k8sMaster-1

192.168.100.9 k8sNode-1

192.168.100.10 k8sNode-2
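The entries above have to be present on every machine, so it can help to add them with a small idempotent helper. The following is only a sketch: `add_host_entry` is a hypothetical function name, not part of any tool, and it assumes the usual "IP name" layout of /etc/hosts.

```shell
#!/bin/bash
# add_host_entry FILE IP NAME  (hypothetical helper, for illustration only)
# Appends "IP NAME" to FILE unless an uncommented line already maps NAME.
add_host_entry() {
    local file="$1" ip="$2" name="$3"
    # Compare whole fields so that k8sNode-1 does not match k8sNode-10.
    if awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example (run as root on each machine):
#   add_host_entry /etc/hosts 192.168.100.8  k8sMaster-1
#   add_host_entry /etc/hosts 192.168.100.9  k8sNode-1
#   add_host_entry /etc/hosts 192.168.100.10 k8sNode-2
```

Running the helper twice with the same arguments leaves the file unchanged, which makes it safe to include in a script that may be rerun.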

Pre-installation setup script

#!/bin/bash

################# System environment configuration #####################

# Disable SELinux and firewalld

systemctl stop firewalld && systemctl disable firewalld

setenforce 0

sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

# Disable swap now and remove swap entries from /etc/fstab (backup kept as fstab.bak)

swapoff -a

cp /etc/{fstab,fstab.bak}

grep -v swap /etc/fstab.bak > /etc/fstab

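# Kernel parameters for bridged traffic and IP forwarding.

# Written to a drop-in file so existing settings in /etc/sysctl.conf are not overwritten.

modprobe br_netfilter

cat > /etc/sysctl.d/k8s.conf <<EOF

vm.swappiness = 0

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

sysctl --system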

# Time: install ntpdate and set the time zone to Asia/Shanghai

yum install -y ntpdate

ln -nfsv /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
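The script above drops every line of /etc/fstab that contains the word swap. A gentler variant comments those lines out instead, so they are easy to restore later. This is a sketch under assumptions: `comment_out_swap` is a hypothetical helper name, and it assumes swap entries carry `swap` as a whitespace-separated field, as in a default CentOS 7 fstab.

```shell
#!/bin/bash
# comment_out_swap FILE  (hypothetical helper, for illustration only)
# Keeps a copy as FILE.bak, then prefixes every active swap entry with '#'.
comment_out_swap() {
    local file="$1"
    cp "$file" "$file.bak"
    # Skip lines that are already comments; on the rest, comment out any
    # line that has "swap" as a whitespace-delimited field.
    sed -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' \
        "$file.bak" > "$file"
}

# Example (run as root, after `swapoff -a`):
#   comment_out_swap /etc/fstab
```

Because the original line is kept behind a `#`, re-enabling swap later only requires removing that one character.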

Enabling ip_vs

#!/bin/bash

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"

for kernel_module in \${ipvs_modules}; do

/sbin/modinfo -F filename \${kernel_module} > /dev/null 2>&1

if [ \$? -eq 0 ]; then

/sbin/modprobe \${kernel_module}

fi

done

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs
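The final `lsmod | grep ip_vs` only shows what is loaded; it does not say which of the requested modules are missing. A small check along the following lines can make that explicit. It is a sketch: `missing_modules` is a hypothetical helper, and it assumes the standard `lsmod` layout with one header line followed by one module per line.

```shell
#!/bin/bash
# missing_modules MODULE...  (hypothetical helper, for illustration only)
# Reads `lsmod`-style text on stdin and prints each named module that is
# not present in the first column.
missing_modules() {
    local loaded m
    # Skip the "Module Size Used by" header and keep the module names.
    loaded="$(awk 'NR > 1 { print $1 }')"
    for m in "$@"; do
        grep -qxF -- "$m" <<< "$loaded" || echo "$m"
    done
}

# Example:
#   lsmod | missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack
```

An empty result means every listed module is loaded. Some modules in the list may not exist on a given kernel, which is why the original script checks `modinfo` before calling `modprobe`.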

Installing a specific Docker version

Remove old versions

yum remove docker \

docker-client \

docker-client-latest \

docker-common \

docker-latest \

docker-latest-logrotate \

docker-logrotate \

docker-engine

Install the required dependencies

yum install -y yum-utils device-mapper-persistent-data lvm2

Add the package repository

sudo yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Update the cache and install Docker CE

yum makecache fast

yum install -y docker-ce-18.09.9-3.el7 docker-ce-cli-18.09.9-3.el7 containerd.io

Configure the Docker registry mirror and related settings

mkdir -p /etc/docker

tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://xxxxxx.aliyuncs.com"]

}

EOF

sudo systemctl daemon-reload

sudo systemctl restart docker

Installing kubeadm, kubectl, and kubelet

#!/bin/bash

# Install dependencies the packages may need

yum install -y yum-utils device-mapper-persistent-data lvm2

# Use the Aliyun repository to install the Kubernetes tools

cat > /etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

EOF

# Install kubeadm, kubelet, and kubectl

yum -y install kubeadm-1.17.0 kubectl-1.17.0 kubelet-1.17.0

# Firewall: have Docker set the iptables FORWARD policy to ACCEPT after it starts

sed -i "13i ExecStartPost=/usr/sbin/iptables -P FORWARD ACCEPT" /usr/lib/systemd/system/docker.service

# Create the directory if it does not exist

if [ ! -d "/etc/docker" ];then

mkdir -p /etc/docker

fi

# Configure the Docker daemon options

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://xxxx.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2"

}

EOF

# Enable Docker and kubelet at boot

systemctl enable docker && systemctl enable kubelet

systemctl daemon-reload

systemctl restart docker

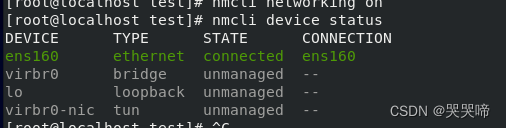

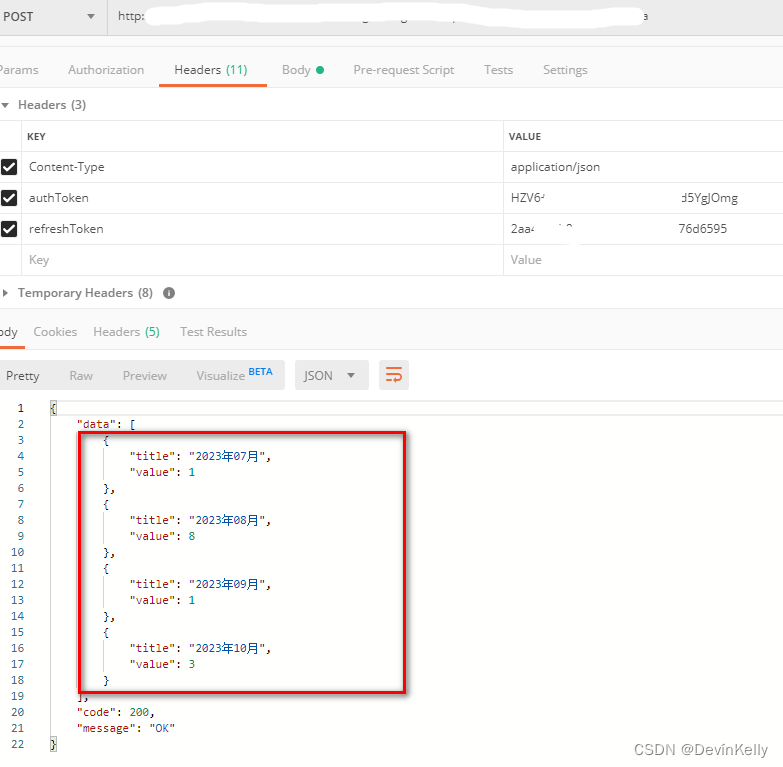

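The figures above show the result once installation has finished.

Single-master k8s deployment

1. Create the k8s configuration file on the master node

cat /etc/kubernetes/kubeadm-config.yaml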

apiVersion: kubeadm.k8s.io/v1beta1

kind: ClusterConfiguration

kubernetesVersion: v1.17.0

controlPlaneEndpoint: "192.168.100.8:6443"

apiServer:

  certSANs:

  - 192.168.100.8

networking:

  podSubnet: 10.244.0.0/16

imageRepository: "registry.aliyuncs.com/google_containers"

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

mode: ipvs

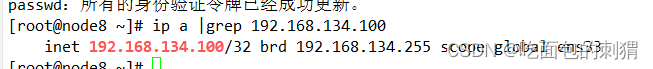

In the configuration file above, 192.168.100.8 is the IP address of the master node.

2. Run the following commands to initialize the cluster
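The `podSubnet` in this file must match the pod CIDR given to the network plugin later on. One way to avoid typing the value twice is to read it back from the file. The snippet below is a sketch: `pod_subnet_from_config` is a hypothetical helper, and it assumes the key appears on a single line of the form `podSubnet: <cidr>`.

```shell
#!/bin/bash
# pod_subnet_from_config FILE  (hypothetical helper, for illustration only)
# Prints the value of the first "podSubnet:" key found in FILE,
# with any surrounding double quotes removed.
pod_subnet_from_config() {
    awk '$1 == "podSubnet:" { gsub(/"/, "", $2); print $2; exit }' "$1"
}

# Example: reuse the value when rewriting the CIDR in a network plugin manifest.
#   subnet="$(pod_subnet_from_config /etc/kubernetes/kubeadm-config.yaml)"
#   sed "s@192.168.0.0/16@${subnet}@g" calico.yaml | kubectl apply -f -
```

This is plain text matching, not a YAML parser, so it only suits simple files like the one shown here.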

# kubeadm init --config /etc/kubernetes/kubeadm-config.yaml

# mkdir -p $HOME/.kube

# cp -f /etc/kubernetes/admin.conf ${HOME}/.kube/config

# curl -fsSL https://docs.projectcalico.org/v3.9/manifests/calico.yaml| sed "s@192.168.0.0/16@10.244.0.0/16@g" | kubectl apply -f -

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

daemonset.apps/calico-node created

serviceaccount/calico-node created

deployment.apps/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

3. Join the worker nodes to the master's cluster

# kubeadm join 192.168.100.8:6443 --token hrz6jc.8oahzhyv74yrpem5 \

--discovery-token-ca-cert-hash sha256:25f51d27d64c55ea9d89d5af839b97d37dfaaf0413d00d481f7f59bd6556ee43
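The token and hash in the join command are specific to one cluster and are printed by `kubeadm init`. Bootstrap tokens expire after 24 hours by default, and `kubeadm token create --print-join-command` on the master prints a fresh join command. Before pasting a token into a script, a quick format check can catch copy errors. This is a sketch: `is_valid_bootstrap_token` is a hypothetical helper that only checks the documented shape (6 characters, a dot, 16 characters, all lowercase letters or digits), not whether the token is still valid on the cluster.

```shell
#!/bin/bash
# is_valid_bootstrap_token TOKEN  (hypothetical helper, for illustration only)
# Succeeds if TOKEN looks like "abcdef.0123456789abcdef".
is_valid_bootstrap_token() {
    local LC_ALL=C   # keep [a-z] limited to ASCII lowercase
    [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

# Example:
#   if is_valid_bootstrap_token "$token"; then
#       kubeadm join 192.168.100.8:6443 --token "$token" \
#           --discovery-token-ca-cert-hash "sha256:$hash"
#   fi
```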

4. Check the cluster status

# kubectl get nodes
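Nodes usually report NotReady until the network plugin pods are running, so it can be useful to count how many nodes are not yet ready. The following is a sketch: `count_not_ready` is a hypothetical helper, and it assumes the default column layout of `kubectl get nodes --no-headers`, where the second column is STATUS.

```shell
#!/bin/bash
# count_not_ready  (hypothetical helper, for illustration only)
# Reads `kubectl get nodes --no-headers` output on stdin and prints the
# number of nodes whose STATUS column is not exactly "Ready".
# A cordoned node ("Ready,SchedulingDisabled") is counted as not ready.
count_not_ready() {
    awk '$2 != "Ready" { n++ } END { print n + 0 }'
}

# Example: poll until every node is Ready.
#   until [ "$(kubectl get nodes --no-headers | count_not_ready)" = "0" ]; do
#       sleep 5
#   done
```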

Reference links

https://gitee.com/hanfeng_edu/mastering_kubernetes.git