文章目录

- 前言

- 一、环境配置

- 1、anaconda安装

- 2、修改jupyter notebook工作目录

- 3、配置TensorFlow、Keras

- 二、数据集分类

- 1、分类源码

- 2、训练流程

- 三、模型调整

- 1.图像增强

- 2、网络模型添加dropout层

- 四、使用VGG19优化提高猫狗图像分类

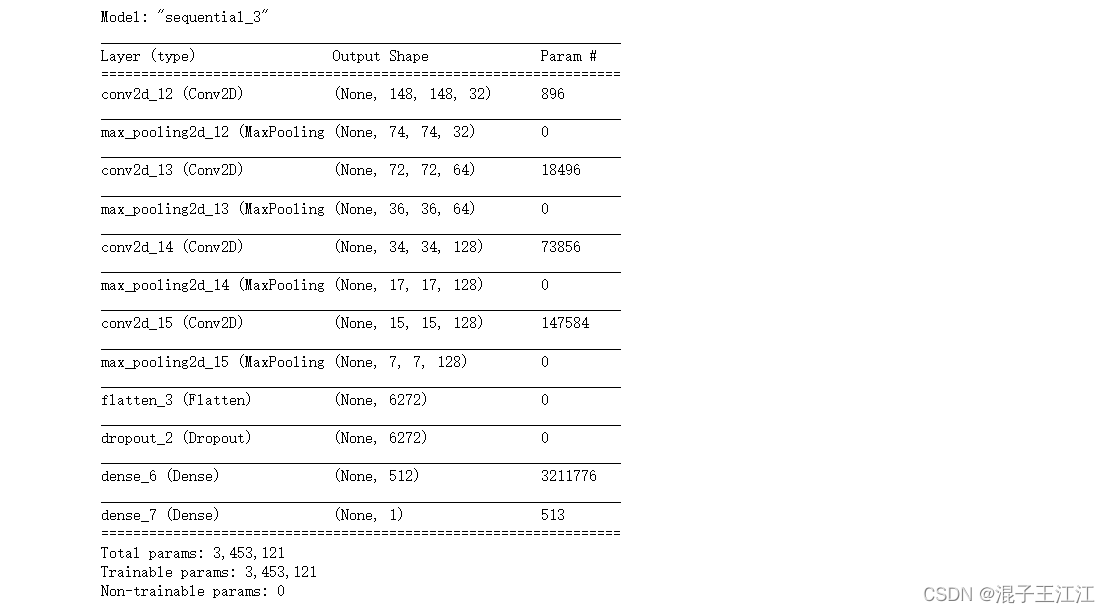

- 1、构建网络模型

- 2、初始化一个VGG19网络实例

- 3、将数据集传给神经网络

- 4、将抽取的特征输入到我们自己的神经层中进行分类训练

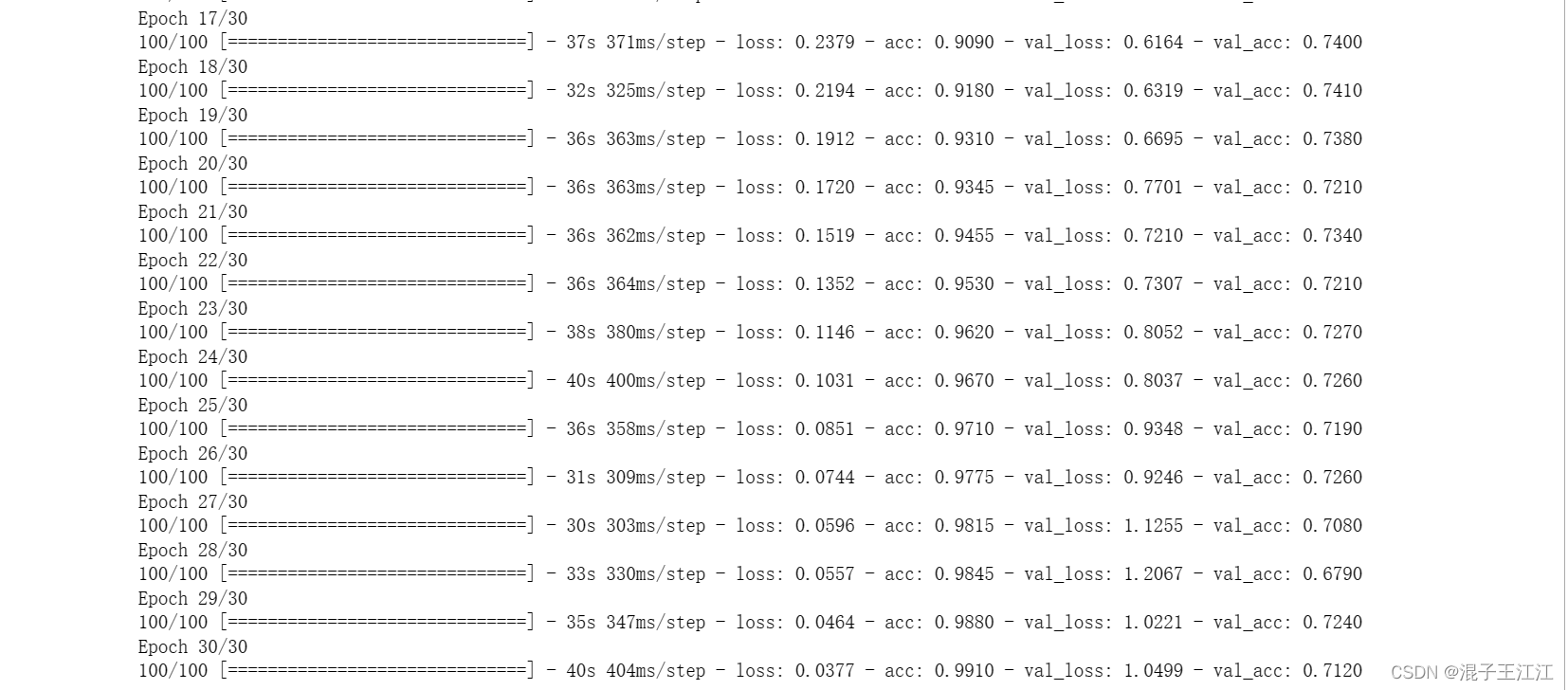

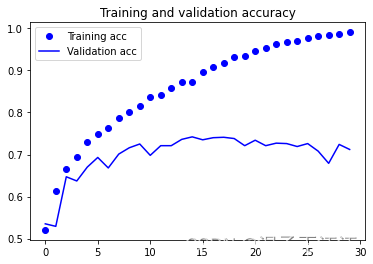

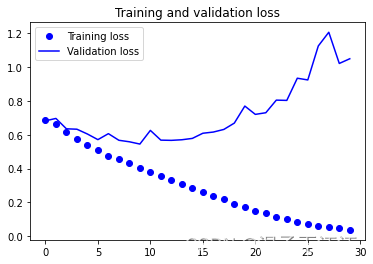

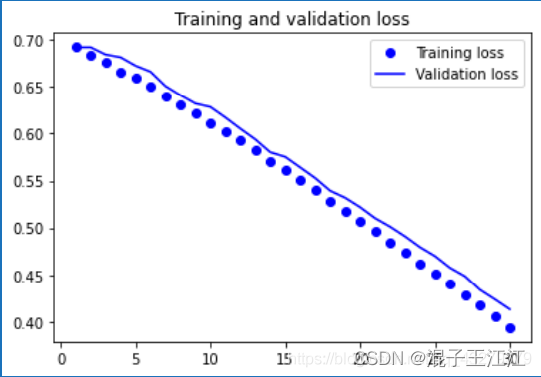

- 5、训练结果

- 五、总结

- 六、参考资料

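Preface

What is overfitting?

Put simply, overfitting means that the outputs on the training samples match the expected outputs too closely, while the outputs on the test samples differ greatly from the expected outputs. Making a hypothesis excessively complex just to keep it consistent with the data is called overfitting. Imagine a learning algorithm that produces an overfitted classifier: it classifies the sample data 100% correctly (give it any document from the samples again and it will never get it wrong), but to achieve that perfect fit its structure has become so intricate and its rules so strict that any document differing even slightly from the sample data is judged not to belong to the class.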
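The symptom described above (training results far better than test results) can be checked numerically from per-epoch accuracies. The following is only a minimal sketch: the function name `generalization_gap` and the accuracy values are made up for illustration and are not results from this article.

```python
def generalization_gap(train_acc, val_acc):
    """Return the per-epoch difference between training and validation accuracy."""
    if len(train_acc) != len(val_acc):
        raise ValueError("both histories must have one value per epoch")
    return [t - v for t, v in zip(train_acc, val_acc)]


# Illustrative numbers only, not measurements from this article
train_acc = [0.60, 0.75, 0.90, 0.99]
val_acc = [0.58, 0.70, 0.72, 0.71]

gaps = generalization_gap(train_acc, val_acc)
# A gap that keeps growing from epoch to epoch is a typical sign of overfitting
widening = all(later >= earlier for earlier, later in zip(gaps, gaps[1:]))
print(gaps, widening)
```

A gap that grows while validation accuracy stalls suggests the model is memorizing the training set rather than learning features that generalize.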

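What is data augmentation?

Data augmentation is mainly used to reduce overfitting. Applying transformations to the training images yields a network that generalizes better and adapts better to the application scenario. Data augmentation is also called data expansion: without substantially adding new data, it lets a limited dataset deliver value equivalent to a larger one.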
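To make the idea concrete, here is a small pure-Python sketch of two such transformations applied to a tiny "image" stored as a list of rows. The helper names `horizontal_flip` and `shift_right` are hypothetical, and the constant fill value is an assumption for simplicity; real augmentation pipelines usually offer several fill strategies.

```python
def horizontal_flip(image):
    """Mirror a 2-D image (list of rows) left to right."""
    return [list(reversed(row)) for row in image]


def shift_right(image, pixels, fill=0):
    """Shift every row right by `pixels`, padding the left edge with `fill`."""
    width = len(image[0])
    return [([fill] * pixels + row)[:width] for row in image]


image = [[1, 2, 3],
         [4, 5, 6]]

# One original image yields three training variants
augmented = [image, horizontal_flip(image), shift_right(image, 1)]
for variant in augmented:
    print(variant)
```

Each variant still shows the same object, so the label stays the same while the network sees more varied inputs.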

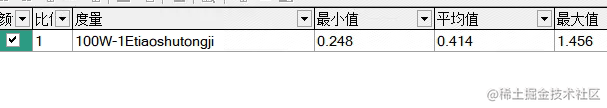

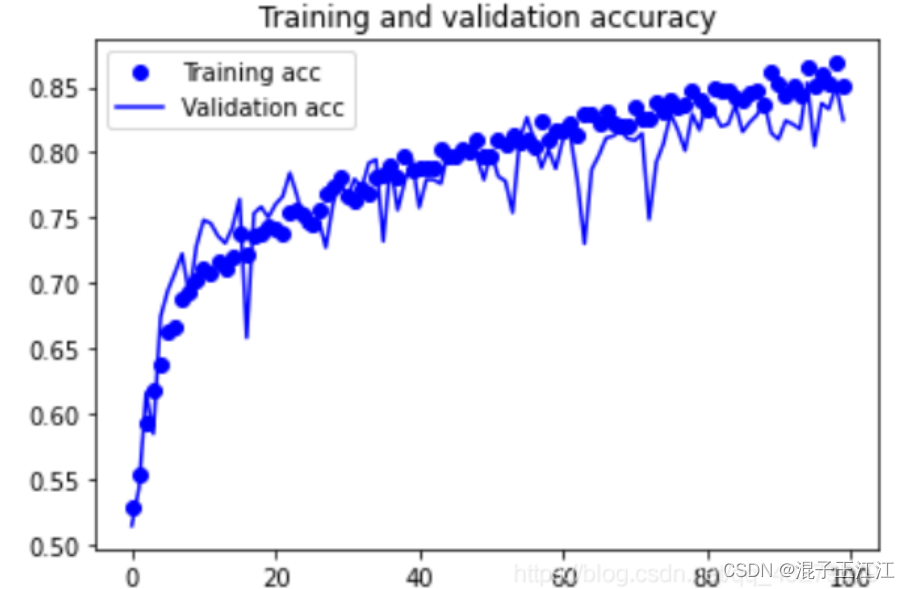

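How much does accuracy improve with data augmentation alone?

By about 0.07.

What is the practical effect of then adding a dropout layer?

Comparing the model trained with image augmentation only against the model trained with both augmentation and a dropout layer, the former fluctuates more during training, while the latter reaches a lower accuracy than the former. Accuracy is somewhat lower in both cases, but both avoid overfitting.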

一、环境配置

1、anaconda安装

下载链接:anaconda

一路next,选择路径即可。

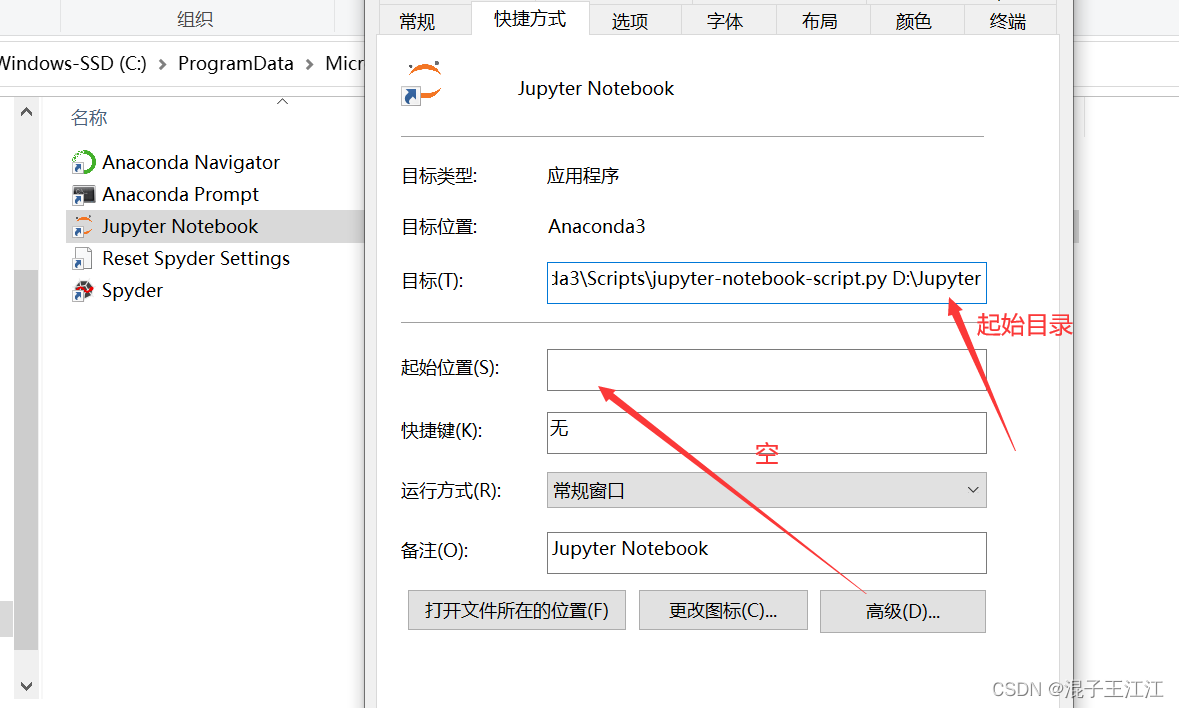

2、修改jupyter notebook工作目录

这里提一个使用事项,在打开jupyter notebook时,最好使用管理员身份打开,否则可能因为权限无法打开文件。

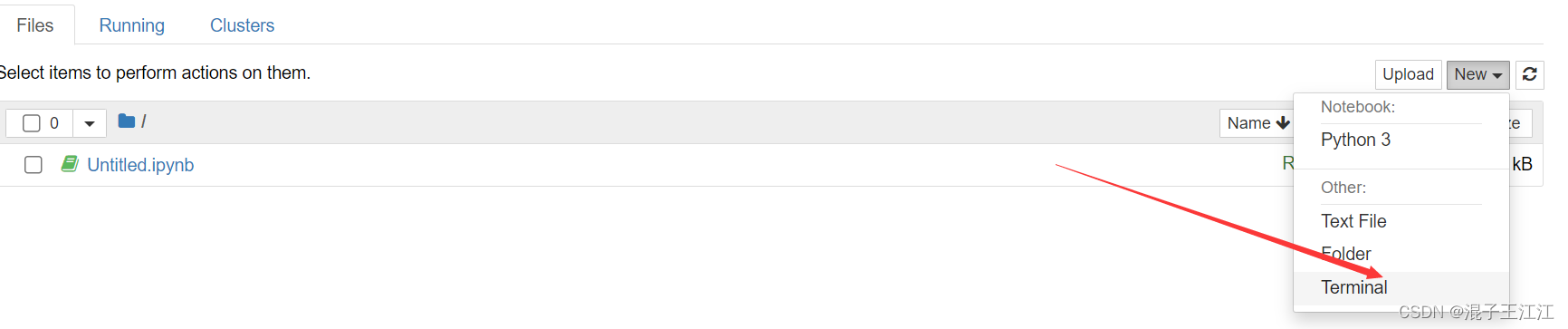

3、配置TensorFlow、Keras

- 新建一个命令行界面:

- 键入下面的命令:

pip install -i https://pypi.tuna.tsinghua.edu.cn/simple tensorflow==1.14.0

pip install -i https://pypi.tuna.tsinghua.edu.cn/simple keras==2.2.5
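Because these pinned versions only install on certain Python versions, it can help to check the interpreter before running pip. The sketch below assumes that the `tensorflow==1.14.0` wheels cover Python 3.5 through 3.7; that range is an assumption stated here for illustration and should be confirmed on the package page. The name `python_ok` is hypothetical.

```python
import sys

# Assumed supported Python 3 range for tensorflow==1.14.0; confirm on the package page
MIN_PY, MAX_PY = (3, 5), (3, 7)


def python_ok(version=None):
    """Return True if the (major, minor) version lies inside the assumed range."""
    major_minor = tuple((version or sys.version_info)[:2])
    return MIN_PY <= major_minor <= MAX_PY


print('Python', sys.version_info[:2], 'within assumed range:', python_ok())
```

If the check fails, creating a separate conda environment with a matching Python version is usually easier than changing the system interpreter.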

二、数据集分类

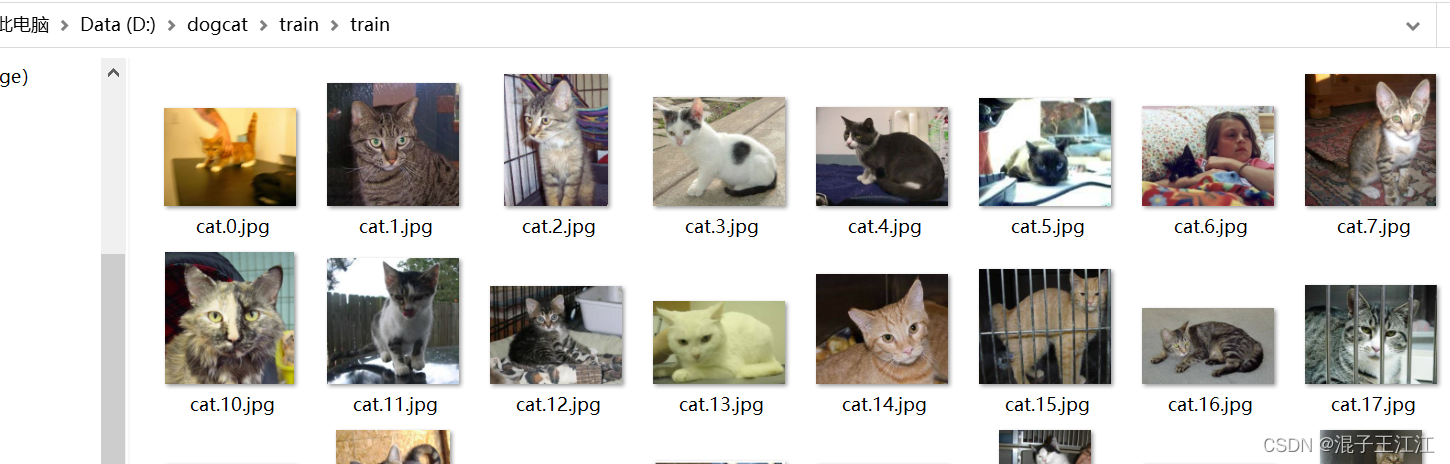

链接:猫狗数据集

提取码:6688

- 解压前的数据结构

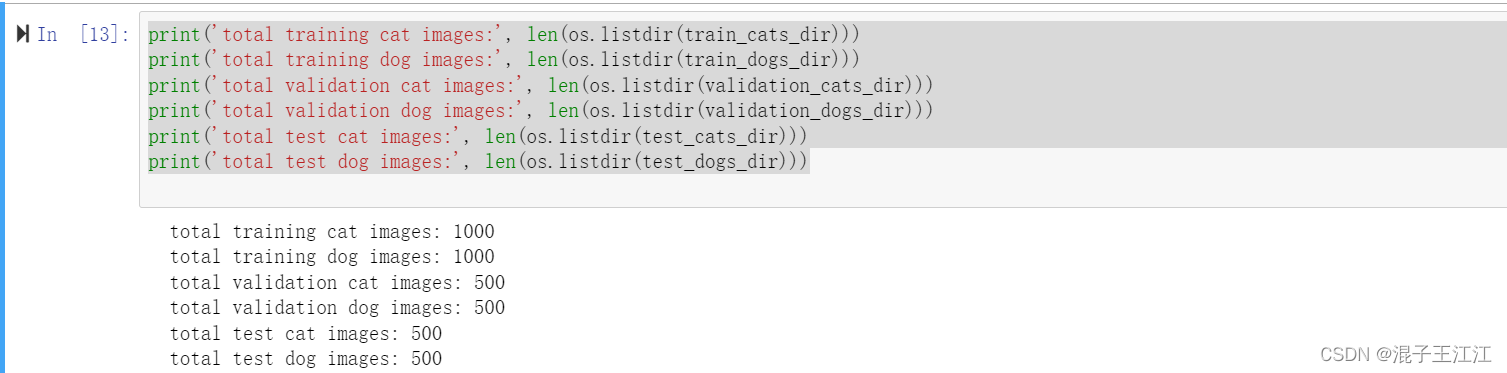

分类后数据集分为测试、训练、验证集。猫狗训练图片各1000张,验证图片各500张,测试图片各500张。
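The split sizes above map onto consecutive index ranges of the numbered files. As a rough sketch (the helper `split_ranges` is hypothetical, and the `cat.<i>.jpg` naming is taken from the code in this article):

```python
def split_ranges(n_train, n_val, n_test):
    """Return consecutive, non-overlapping index ranges for the three subsets."""
    train = range(0, n_train)
    val = range(n_train, n_train + n_val)
    test = range(n_train + n_val, n_train + n_val + n_test)
    return {'train': train, 'validation': val, 'test': test}


ranges = split_ranges(1000, 500, 500)
# File names that would land in the cat validation folder
cat_val_names = ['cat.{}.jpg'.format(i) for i in ranges['validation']]
print(len(cat_val_names), cat_val_names[0], cat_val_names[-1])
```

Keeping the ranges disjoint matters: an image that appears in both the training and validation sets would make the validation accuracy look better than it really is.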

1. Splitting code

import os, shutil

# The path to the directory where the original

# dataset was uncompressed

original_dataset_dir = 'D:/dogcat/train/train'

# The directory where we will

# store our smaller dataset

base_dir = 'D:/dogcat/find_cats_and_dogs'

os.mkdir(base_dir)

# Directories for our training,

# validation and test splits

train_dir = os.path.join(base_dir, 'train')

os.mkdir(train_dir)

validation_dir = os.path.join(base_dir, 'validation')

os.mkdir(validation_dir)

test_dir = os.path.join(base_dir, 'test')

os.mkdir(test_dir)

# Directory with our training cat pictures

train_cats_dir = os.path.join(train_dir, 'cats')

os.mkdir(train_cats_dir)

# Directory with our training dog pictures

train_dogs_dir = os.path.join(train_dir, 'dogs')

os.mkdir(train_dogs_dir)

# Directory with our validation cat pictures

validation_cats_dir = os.path.join(validation_dir, 'cats')

os.mkdir(validation_cats_dir)

# Directory with our validation dog pictures

validation_dogs_dir = os.path.join(validation_dir, 'dogs')

os.mkdir(validation_dogs_dir)

# Directory with our test cat pictures

test_cats_dir = os.path.join(test_dir, 'cats')

os.mkdir(test_cats_dir)

# Directory with our test dog pictures

test_dogs_dir = os.path.join(test_dir, 'dogs')

os.mkdir(test_dogs_dir)

def copy_images(prefix, start, stop, dst_dir):

    # Copy '<prefix>.<i>.jpg' for i in [start, stop) from the original dataset into dst_dir

    for i in range(start, stop):

        fname = '{}.{}.jpg'.format(prefix, i)

        src = os.path.join(original_dataset_dir, fname)

        dst = os.path.join(dst_dir, fname)

        shutil.copyfile(src, dst)

# Copy the first 1000 cat images to train_cats_dir,

# the next 500 to validation_cats_dir and the next 500 to test_cats_dir

copy_images('cat', 0, 1000, train_cats_dir)

copy_images('cat', 1000, 1500, validation_cats_dir)

copy_images('cat', 1500, 2000, test_cats_dir)

# Same split for the dog images

copy_images('dog', 0, 1000, train_dogs_dir)

copy_images('dog', 1000, 1500, validation_dogs_dir)

copy_images('dog', 1500, 2000, test_dogs_dir)

- Count the images

print('total training cat images:', len(os.listdir(train_cats_dir)))

print('total training dog images:', len(os.listdir(train_dogs_dir)))

print('total validation cat images:', len(os.listdir(validation_cats_dir)))

print('total validation dog images:', len(os.listdir(validation_dogs_dir)))

print('total test cat images:', len(os.listdir(test_cats_dir)))

print('total test dog images:', len(os.listdir(test_dogs_dir)))

Result:

2. Training workflow

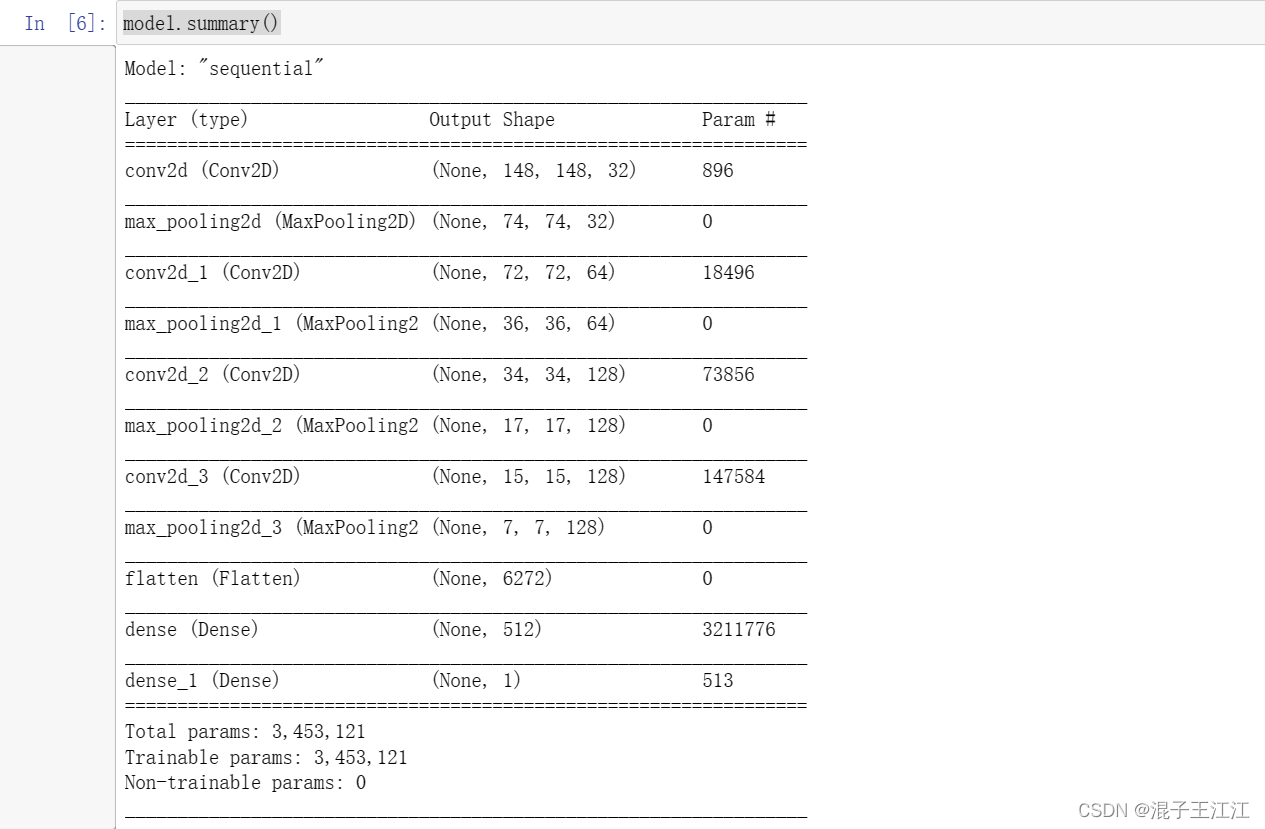

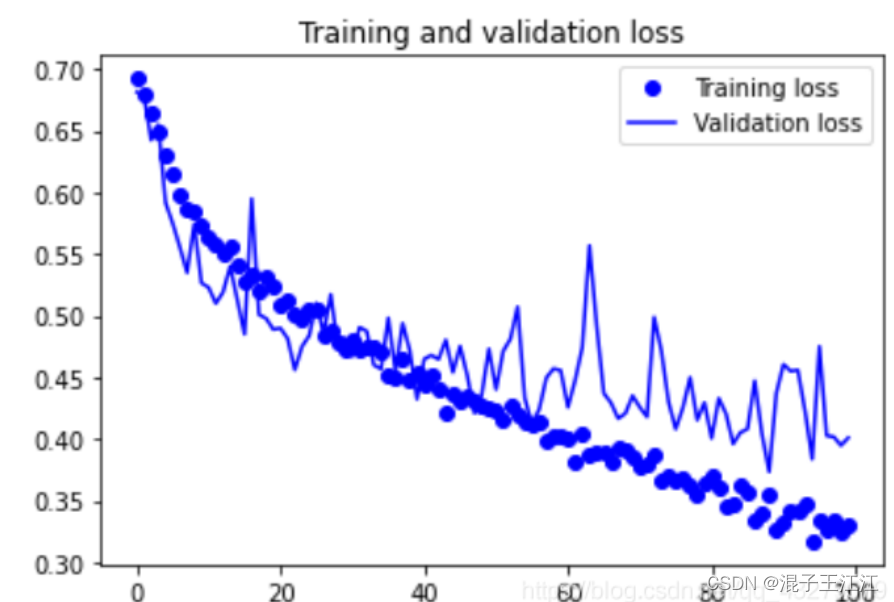

- Build the network model:

# Build the network model

from keras import layers

from keras import models

# Keras Sequential model

model = models.Sequential()

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(32, (3, 3), activation='relu',

                        input_shape=(150, 150, 3)))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(64, (3, 3), activation='relu'))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(128, (3, 3), activation='relu'))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(128, (3, 3), activation='relu'))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Flatten layer: turns the multi-dimensional input into 1-D, bridging the convolution and fully connected layers

model.add(layers.Flatten())

# Fully connected layer, relu activation

model.add(layers.Dense(512, activation='relu'))

# Fully connected layer, sigmoid activation

model.add(layers.Dense(1, activation='sigmoid'))

- Inspect the parameters of each layer:

# Print the parameter summary of each layer

model.summary()
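The numbers printed by the summary can be reproduced by hand, which is a useful check that the architecture is what you intended. The sketch below assumes the default Keras settings used above (stride 1, no padding for the convolutions, 2x2 pooling that rounds down); the helper names are hypothetical.

```python
def conv_out(size, kernel=3):
    """Output width/height of an unpadded convolution with stride 1."""
    return size - kernel + 1


def conv_params(in_channels, filters, kernel=3):
    """Kernel weights plus one bias per filter."""
    return kernel * kernel * in_channels * filters + filters


size, channels, total = 150, 3, 0
for filters in (32, 64, 128, 128):
    total += conv_params(channels, filters)
    size = conv_out(size) // 2      # 2x2 max pooling halves the size, rounding down
    channels = filters

flat = size * size * channels       # length of the vector produced by Flatten
total += flat * 512 + 512           # Dense(512)
total += 512 * 1 + 1                # Dense(1)
print(size, flat, total)
```

Most of the parameters sit in the first fully connected layer, which is one reason small convolutional networks like this one overfit easily on 2000 images.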

- For the compilation step, we use the RMSprop optimizer as usual. Because the network ends in a single sigmoid unit, we use binary cross-entropy as the loss.

from keras import optimizers

model.compile(loss='binary_crossentropy',

              optimizer=optimizers.RMSprop(lr=1e-4),

              metrics=['acc'])

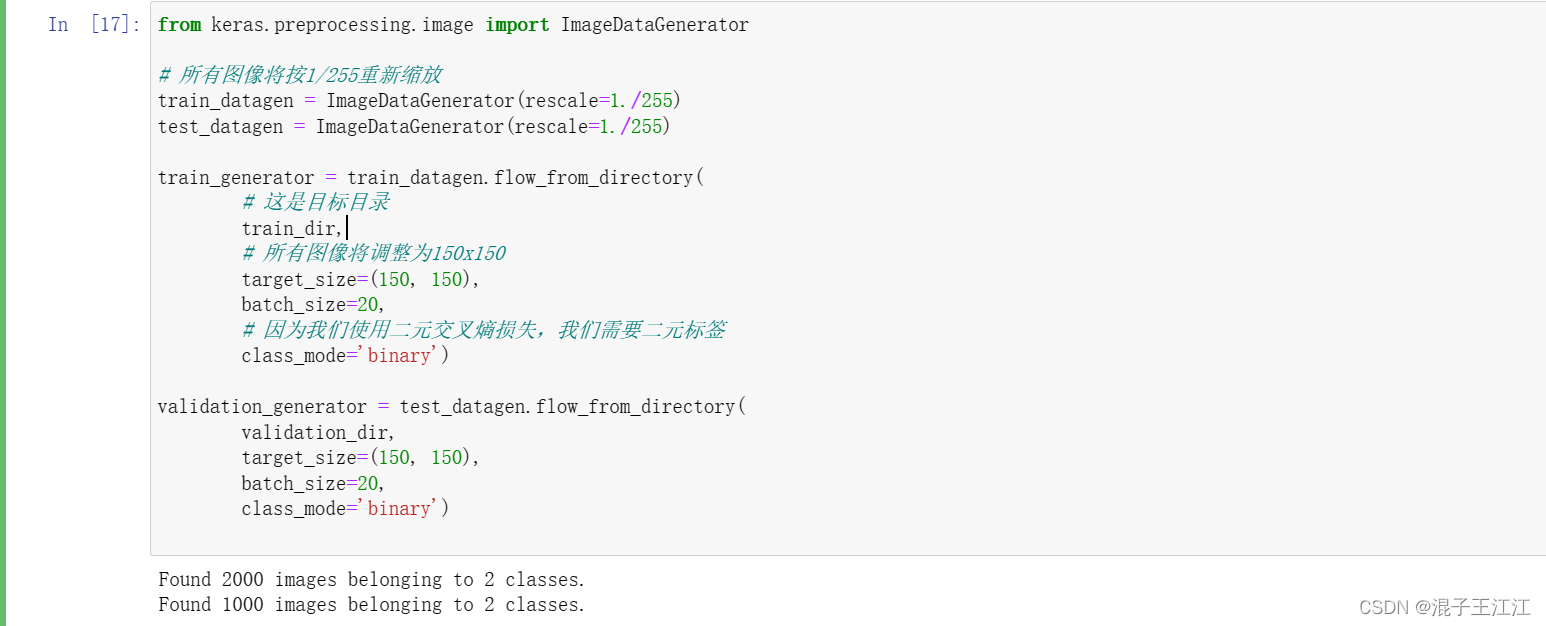
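For reference, the binary cross-entropy loss named in the compile step can be written out in a few lines. This is a plain-Python sketch of the formula, not the Keras implementation; the clipping constant `eps` is an assumption chosen to avoid `log(0)`.

```python
import math


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy; predictions are clipped to avoid log(0)."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)


labels = [1, 0, 1, 0]                  # hypothetical labels, one class per value
confident = [0.9, 0.1, 0.8, 0.2]       # predictions close to the labels
unsure = [0.5, 0.5, 0.5, 0.5]          # predictions that carry no information
print(binary_crossentropy(labels, confident), binary_crossentropy(labels, unsure))
```

Predictions of 0.5 for every image give a loss of ln 2 (about 0.693), so a training loss near that value means the network has not learned anything yet.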

- Prepare the data format

from keras.preprocessing.image import ImageDataGenerator

# All images are rescaled by 1/255

train_datagen = ImageDataGenerator(rescale=1./255)

test_datagen = ImageDataGenerator(rescale=1./255)

train_generator = train_datagen.flow_from_directory(

        # This is the target directory

        train_dir,

        # All images are resized to 150x150

        target_size=(150, 150),

        batch_size=20,

        # Since we use binary cross-entropy loss, we need binary labels

        class_mode='binary')

validation_generator = test_datagen.flow_from_directory(

        validation_dir,

        target_size=(150, 150),

        batch_size=20,

        class_mode='binary')

- Inspect the generator output:

for data_batch, labels_batch in train_generator:

    print('data batch shape:', data_batch.shape)

    print('labels batch shape:', labels_batch.shape)

    break

- Fit the model to the data using the generators and save the resulting model

# Model training

history = model.fit_generator(

      train_generator,

      steps_per_epoch=100,

      epochs=30,

      validation_data=validation_generator,

      validation_steps=50)

# Save the trained model

model.save('D:\\dogcat\\cats_and_dogs_small_1.h5')
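The values `steps_per_epoch=100` and `validation_steps=50` follow from the dataset and batch sizes: one step consumes one batch, so one full pass needs the image count divided by the batch size. A short sketch of that arithmetic (the helper name `steps_for` is hypothetical):

```python
import math


def steps_for(num_images, batch_size):
    """Number of batches needed to see every image once."""
    return math.ceil(num_images / batch_size)


print(steps_for(2000, 20))   # training images, batch size 20
print(steps_for(1000, 20))   # validation images, batch size 20
print(steps_for(2000, 32))   # the same training set with batch size 32
```

With a batch size of 32, one pass over 2000 images takes 63 steps, so keeping `steps_per_epoch=100` at that batch size means each epoch draws more than one pass from the generator.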

- Training process:

- Plot the model's loss and accuracy on the training and validation data

import matplotlib.pyplot as plt

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(len(acc))

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

plt.plot(epochs, loss, 'bo', label='Training loss')

plt.plot(epochs, val_loss, 'b', label='Validation loss')

plt.title('Training and validation loss')

plt.legend()

plt.show()

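III. Tuning the model

1. Image augmentation

# The code from here on replaces the code that follows the dataset split in the baseline model. (Before running it, delete the previously created split directories and regenerate the split; otherwise an error occurs.)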

from keras.preprocessing.image import ImageDataGenerator

datagen = ImageDataGenerator(

rotation_range=40,

width_shift_range=0.2,

height_shift_range=0.2,

shear_range=0.2,

zoom_range=0.2,

horizontal_flip=True,

fill_mode='nearest')

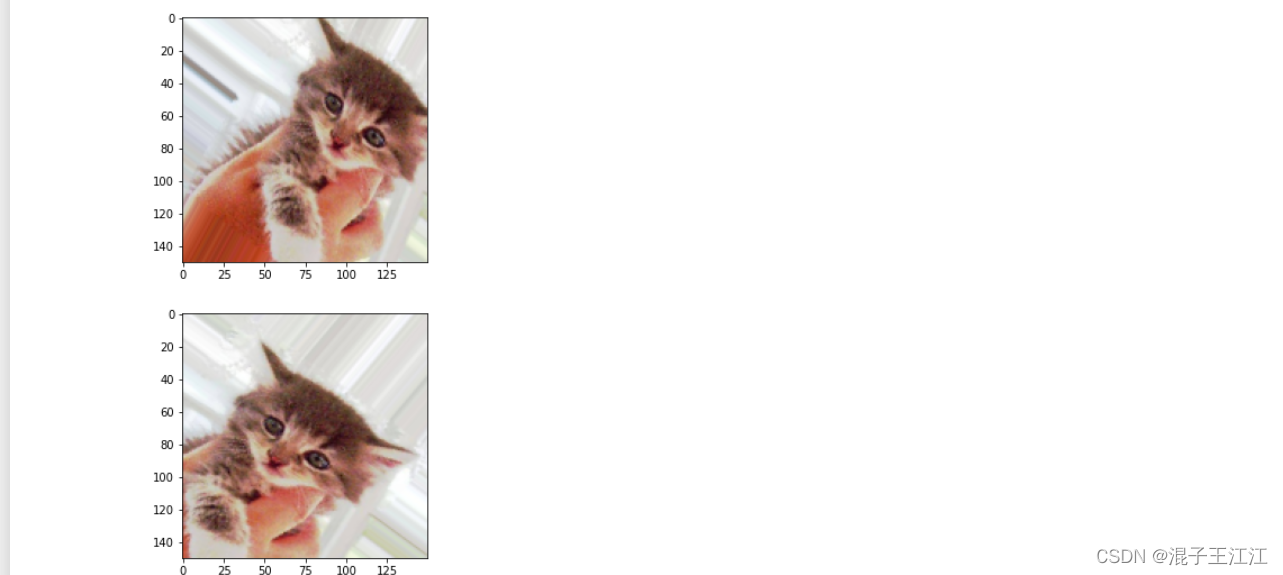

- Augmented images

import matplotlib.pyplot as plt

# This is module with image preprocessing utilities

from keras.preprocessing import image

fnames = [os.path.join(train_cats_dir, fname) for fname in os.listdir(train_cats_dir)]

# We pick one image to "augment"

img_path = fnames[3]

# Read the image and resize it

img = image.load_img(img_path, target_size=(150, 150))

# Convert it to a Numpy array with shape (150, 150, 3)

x = image.img_to_array(img)

# Reshape it to (1, 150, 150, 3)

x = x.reshape((1,) + x.shape)

# The .flow() command below generates batches of randomly transformed images.

# It will loop indefinitely, so we need to `break` the loop at some point!

i = 0

for batch in datagen.flow(x, batch_size=1):

    plt.figure(i)

    imgplot = plt.imshow(image.array_to_img(batch[0]))

    i += 1

    if i % 4 == 0:

        break

plt.show()

- 效果

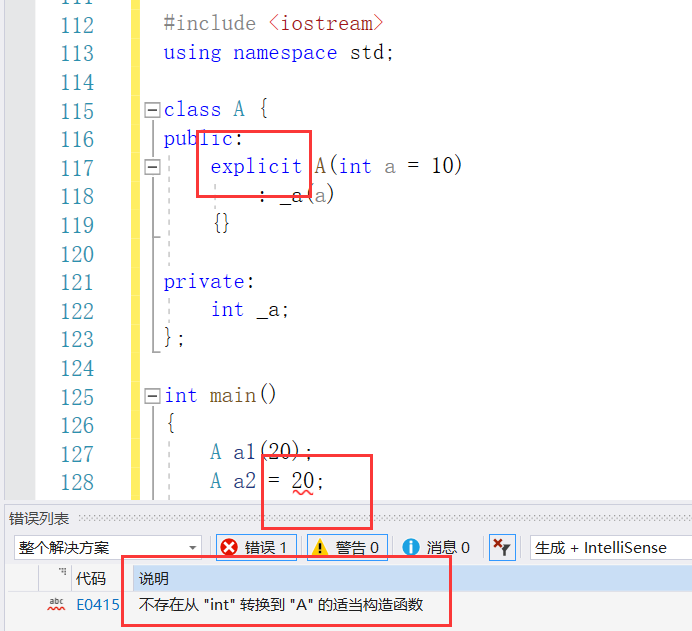

2、网络模型添加dropout层

- 代码

# Build the network model

from keras import layers

from keras import models

# Keras Sequential model

model = models.Sequential()

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(32, (3, 3), activation='relu',

                        input_shape=(150, 150, 3)))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(64, (3, 3), activation='relu'))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(128, (3, 3), activation='relu'))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Convolution layer, 3*3 kernel, relu activation

model.add(layers.Conv2D(128, (3, 3), activation='relu'))

# Max-pooling layer

model.add(layers.MaxPooling2D((2, 2)))

# Flatten layer: turns the multi-dimensional input into 1-D, bridging the convolution and fully connected layers

model.add(layers.Flatten())

# Dropout layer

model.add(layers.Dropout(0.5))

# Fully connected layer, relu activation

model.add(layers.Dense(512, activation='relu'))

# Fully connected layer, sigmoid activation

model.add(layers.Dense(1, activation='sigmoid'))

# Print the parameter summary of each layer

model.summary()

from keras import optimizers

model.compile(loss='binary_crossentropy',

              optimizer=optimizers.RMSprop(lr=1e-4),

              metrics=['acc'])

- Network structure after adding dropout

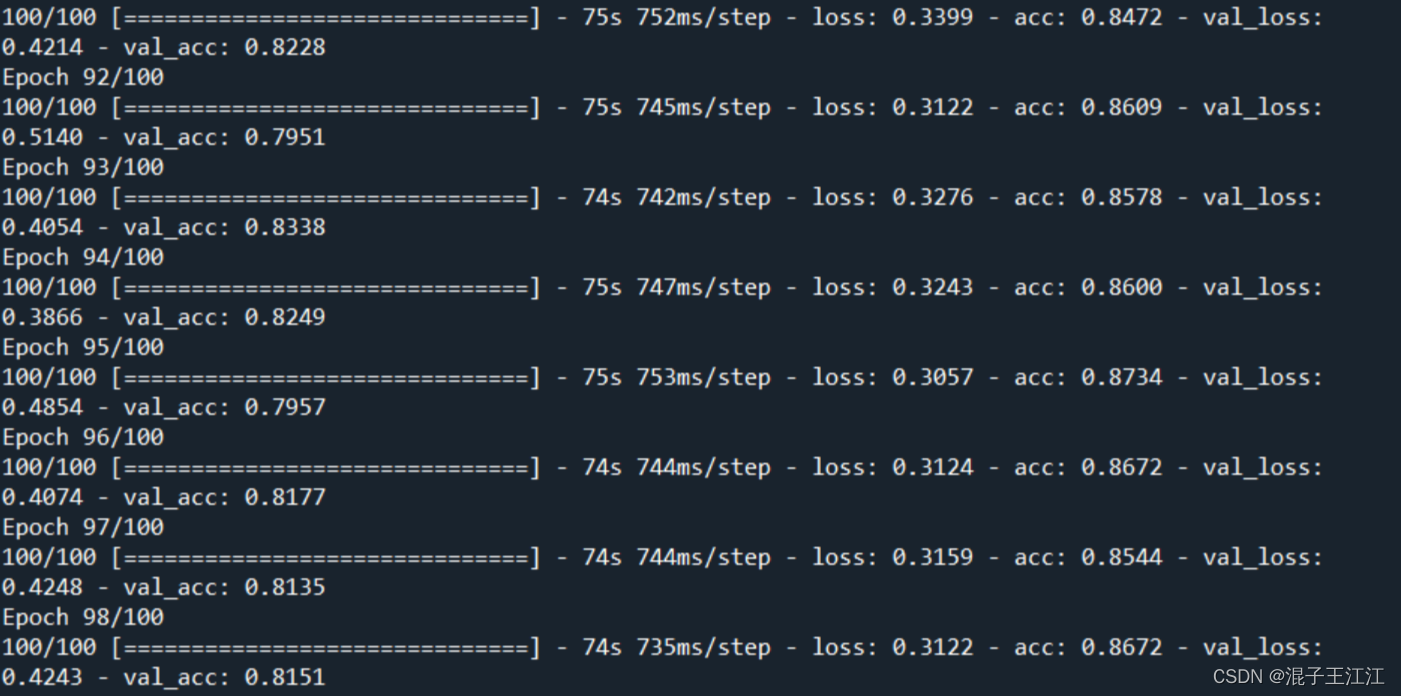
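To show what a dropout rate of 0.5 does during training, here is a minimal pure-Python sketch of "inverted" dropout: each value is zeroed with probability `rate`, and the survivors are scaled up so the expected sum stays the same. The function name `dropout` and the input values are hypothetical, and this is an illustration of the technique rather than the Keras implementation.

```python
import random


def dropout(values, rate, rng):
    """Zero each value with probability `rate`; scale survivors by 1 / (1 - rate)."""
    keep = 1.0 - rate
    return [v / keep if rng.random() >= rate else 0.0 for v in values]


rng = random.Random(0)               # fixed seed so the run is repeatable
activations = [1.0] * 10             # hypothetical layer outputs
dropped = dropout(activations, 0.5, rng)
print(dropped)
```

Because a different random subset of units is silenced on every batch, the network cannot rely on any single feature, which is what reduces overfitting. At inference time no units are dropped.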

- Model training

train_datagen = ImageDataGenerator(

rescale=1./255,

rotation_range=40,

width_shift_range=0.2,

height_shift_range=0.2,

shear_range=0.2,

zoom_range=0.2,

horizontal_flip=True,)

# Note that the validation data should not be augmented!

test_datagen = ImageDataGenerator(rescale=1./255)

train_generator = train_datagen.flow_from_directory(

# This is the target directory

train_dir,

# All images will be resized to 150x150

target_size=(150, 150),

batch_size=32,

# Since we use binary_crossentropy loss, we need binary labels

class_mode='binary')

validation_generator = test_datagen.flow_from_directory(

validation_dir,

target_size=(150, 150),

batch_size=32,

class_mode='binary')

history = model.fit_generator(

train_generator,

steps_per_epoch=100,

epochs=100,

validation_data=validation_generator,

validation_steps=50)

model.save('D:\\dogcat\\cats_and_dogs_small_2.h5')

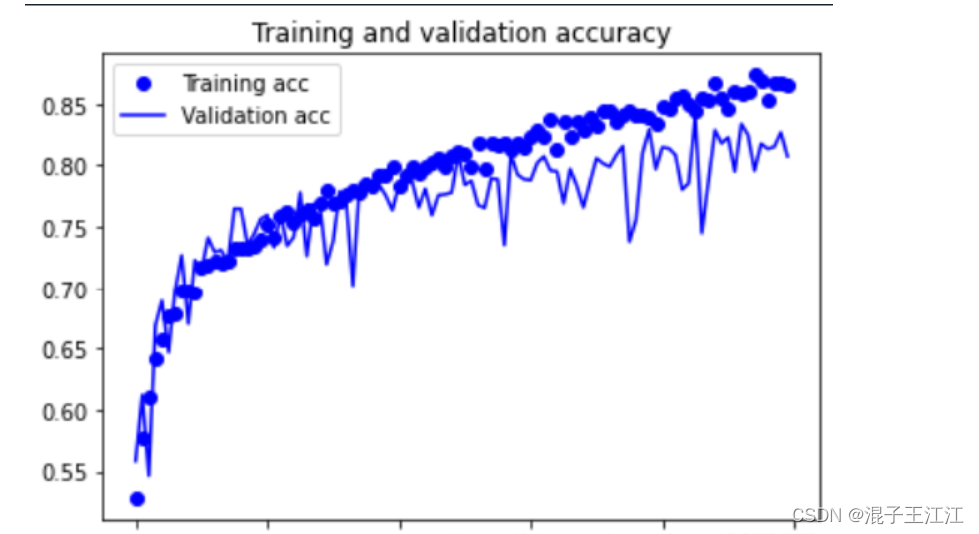

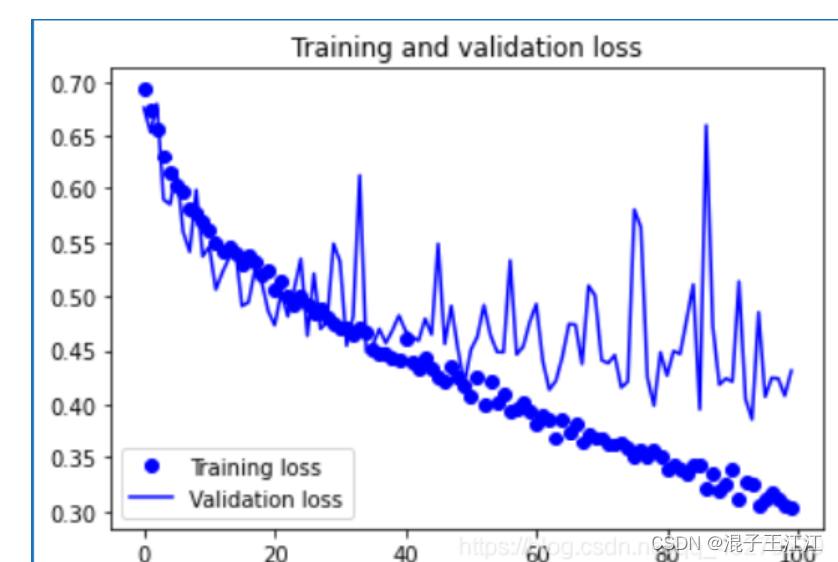

- Training with data augmentation only

- Training with data augmentation and a dropout layer

- Plotting the training results

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(len(acc))

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

plt.plot(epochs, loss, 'bo', label='Training loss')

plt.plot(epochs, val_loss, 'b', label='Validation loss')

plt.title('Training and validation loss')

plt.legend()

plt.show()

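- Results with data augmentation only

- Results with data augmentation and a dropout layer

IV. Improving cat-vs-dog classification with VGG19

1. Building the network model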

from keras import layers

from keras import models

from keras import optimizers

model = models.Sequential()

# Input images are 150*150; the 3 means each pixel is represented by (R, G, B)

model.add(layers.Conv2D(32, (3,3), activation='relu', input_shape=(150 , 150, 3)))

model.add(layers.MaxPooling2D((2,2)))

model.add(layers.Conv2D(64, (3,3), activation='relu'))

model.add(layers.MaxPooling2D((2,2)))

model.add(layers.Conv2D(128, (3,3), activation='relu'))

model.add(layers.MaxPooling2D((2,2)))

model.add(layers.Conv2D(128, (3,3), activation='relu'))

model.add(layers.MaxPooling2D((2,2)))

model.add(layers.Flatten())

model.add(layers.Dense(512, activation='relu'))

model.add(layers.Dense(1, activation='sigmoid'))

model.compile(loss='binary_crossentropy', optimizer=optimizers.RMSprop(lr=1e-4),

metrics=['acc'])

model.summary()

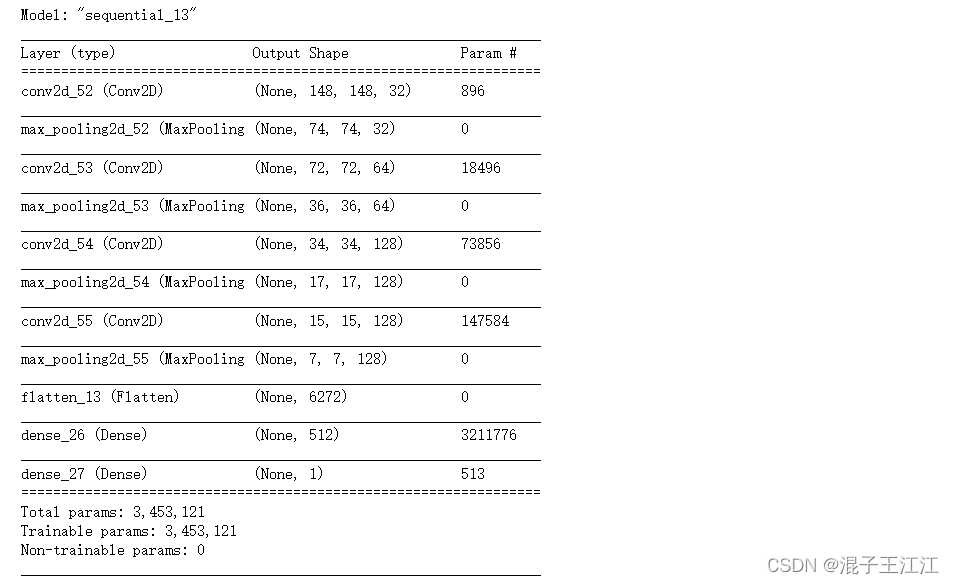

2. Instantiating a VGG19 network

from keras.applications import VGG19

conv_base = VGG19(weights = 'imagenet',include_top = False,input_shape=(150, 150, 3))

conv_base.summary()

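On the first run, the h5 weights file is downloaded automatically from the corresponding site. That download is slow and may fail partway through, so it is advisable to copy the URL into a browser, download the file directly, and then place it in the corresponding directory.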
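The following sketch shows one way to check whether the weights file is already in place. It assumes that Keras caches downloaded weights under `.keras/models` in the user's home directory and that the VGG19 file without the top layers is named as below; both are assumptions to verify against the URL and path that Keras prints when it starts the download. The helper name `keras_weights_path` is hypothetical.

```python
import os

# Assumed file name of the VGG19 ImageNet weights without the top layers
WEIGHTS_NAME = 'vgg19_weights_tf_dim_ordering_tf_kernels_notop.h5'


def keras_weights_path(home=None):
    """Build the assumed cache location of the weights file."""
    home = home or os.path.expanduser('~')
    return os.path.join(home, '.keras', 'models', WEIGHTS_NAME)


path = keras_weights_path()
print(path, 'exists:', os.path.exists(path))
```

If the file exists at that location, Keras should load it instead of downloading it again.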

- Model network structure

3. Feeding the dataset to the network

import os

import numpy as np

from keras.preprocessing.image import ImageDataGenerator

# Directory of the dataset after the split

base_dir = 'D:\\daoandcat\\cats_and_dogs_small'

train_dir = os.path.join(base_dir, 'train')

validation_dir = os.path.join(base_dir, 'validation')

test_dir = os.path.join(base_dir, 'test')

datagen = ImageDataGenerator(rescale = 1. / 255)

batch_size = 20

def extract_features(directory, sample_count):

    features = np.zeros(shape = (sample_count, 4, 4, 512))

    labels = np.zeros(shape = (sample_count))

    generator = datagen.flow_from_directory(directory, target_size = (150, 150),

                                            batch_size = batch_size,

                                            class_mode = 'binary')

    i = 0

    for inputs_batch, labels_batch in generator:

        # Feed the images through the VGG19 convolutional base to extract their features

        features_batch = conv_base.predict(inputs_batch)

        # Each item in features_batch has shape 4*4*512

        features[i * batch_size : (i + 1)*batch_size] = features_batch

        labels[i * batch_size : (i+1)*batch_size] = labels_batch

        i += 1

        if i * batch_size >= sample_count :

            # Looping over the generator never ends on its own, so we must break explicitly

            break

    return features , labels

# extract_features returns data of shape (samples, 4, 4, 512)

train_features, train_labels = extract_features(train_dir, 2000)

validation_features, validation_labels = extract_features(validation_dir, 1000)

test_features, test_labels = extract_features(test_dir, 1000)
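The `(4, 4, 512)` feature shape follows from the VGG19 architecture: its convolutions keep the spatial size, and each of its five 2x2 pooling stages halves it (rounding down), while the last block has 512 channels. A quick sketch of that arithmetic (the helper name `vgg_feature_size` is hypothetical):

```python
def vgg_feature_size(input_size, num_pools=5):
    """Spatial size after `num_pools` 2x2 poolings, each rounding down."""
    size = input_size
    for _ in range(num_pools):
        size //= 2
    return size


side = vgg_feature_size(150)        # 150 -> 75 -> 37 -> 18 -> 9 -> 4
flat_dim = side * side * 512        # length of one flattened feature vector
print(side, flat_dim)
```

The flattened length, 8192, is the same value as the `4 * 4 * 512` passed as `input_dim` to the first `Dense` layer of the classifier.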

4. Training our own classifier layers on the extracted features

from keras import models

from keras import layers

from keras import optimizers

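# Flatten the (samples, 4, 4, 512) features to (samples, 8192) to match the Dense layer's input_dim

train_features = np.reshape(train_features, (2000, 4 * 4 * 512))

validation_features = np.reshape(validation_features, (1000, 4 * 4 * 512))

test_features = np.reshape(test_features, (1000, 4 * 4 * 512))

# Build our own layers to classify the extracted features

model = models.Sequential()

model.add(layers.Dense(256, activation='relu', input_dim = 4 * 4 * 512))

model.add(layers.Dropout(0.5))

model.add(layers.Dense(1, activation = 'sigmoid'))

model.compile(optimizer=optimizers.RMSprop(lr = 2e-5), loss = 'binary_crossentropy', metrics = ['acc'])

history = model.fit(train_features, train_labels, epochs = 30, batch_size = 20,

                    validation_data = (validation_features, validation_labels))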

- 训练过程

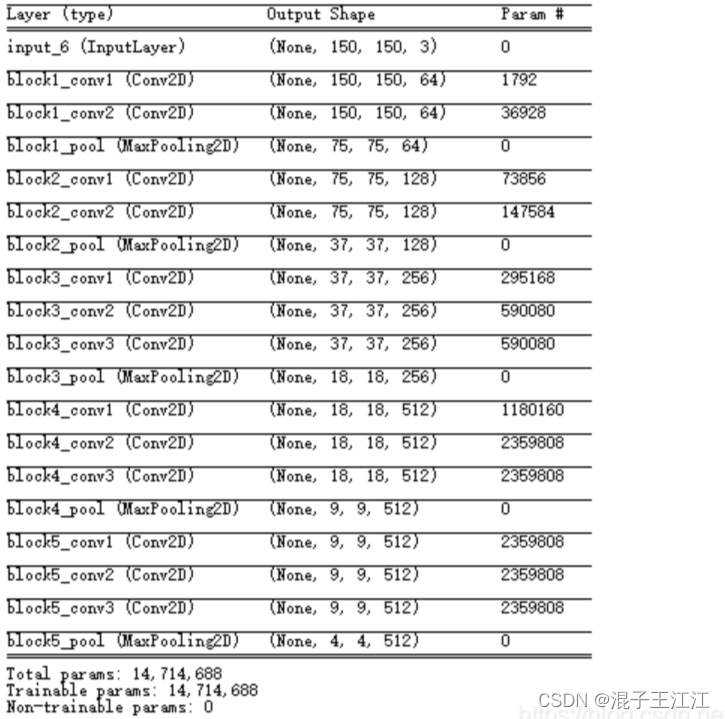

5、训练结果

import matplotlib.pyplot as plt

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(1, len(acc) + 1)

plt.plot(epochs, acc, 'bo', label = 'Train_acc')

plt.plot(epochs, val_acc, 'b', label = 'Validation acc')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

plt.plot(epochs, loss, 'bo', label = 'Training loss')

plt.plot(epochs, val_loss, 'b', label = 'Validation loss')

plt.title('Training and validation loss')

plt.legend()

plt.show()

V. Summary

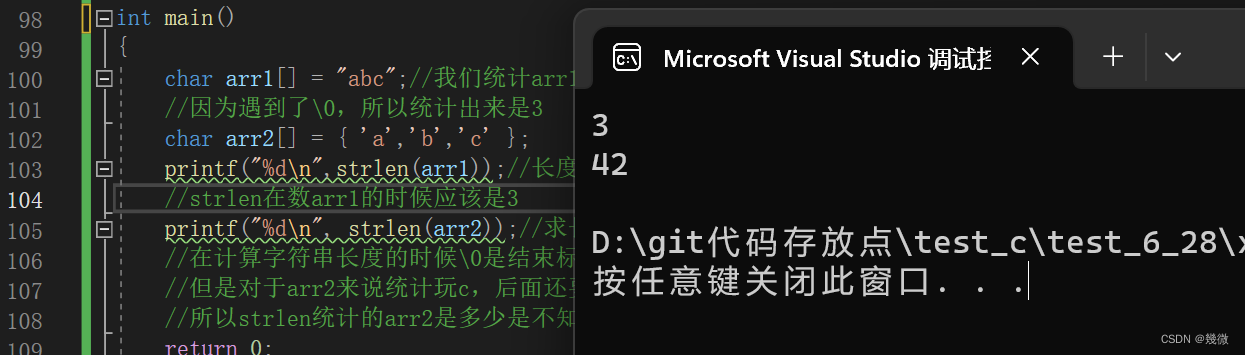

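This was my first time training a model, and I ran into many environment problems. After installing Anaconda, TensorFlow and Keras could not be installed because they require specific Python versions. In the end I ran everything in the local Python environment, which is not good practice. In addition, Jupyter Notebook reported that some packages were missing; after installing them from the command line and re-running the cell, names were reported as undefined, so the earlier cells had to be run again. Some "module not defined" errors also appeared that I could not resolve.

VI. References

Implementing a convolutional neural network (CNN) with TensorFlow and Keras

Python programming with Jupyter Notebook: staged classification of the cats-and-dogs dataset, measuring model accuracy and optimizing the dataset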