文章目录

- Original Paper

- Motivation

- Method

- Task Description and Problem Formulation

- NMIR Framework: A High-Level Overview

- Model Implementation and Training

- Data

Original Paper

Learning Multiple Intent Representations for Search Queries

More related papers can be found in :

- ShiyuNee/Awesome-Conversation-Clarifying-Questions-for-Information-Retrieval: Papers about Conversation and Clarifying Questions (github.com)

Motivation

The typical use of representation models has a major limitation in that they generate only a single representation for a query, which may have multiple intents or facets.

- propose NMIR(Neural Multiple Intent Representations) to support multiple intent representations for each query

Method

Task Description and Problem Formulation

- training query set: Q = { q 1 , ⋯ , q n } Q = \{q_1,\cdots,q_n\} Q={q1,⋯,qn}

- D i = d i 1 , ⋯ , d i m D_i = {d_{i1},\cdots,d_{im}} Di=di1,⋯,dim be the top m retrieved documents in response to the query q i q_i qi

-

F

i

=

{

f

i

1

,

⋯

,

f

i

k

}

F_i=\{f_{i1},\cdots,f_{ik}\}

Fi={fi1,⋯,fik} denote the set of all textual intent descriptions associated with the query

q

i

q_i

qi

- k i k_i ki is the number of query intents

NMIR Framework: A High-Level Overview

- one straightforward solution:

- using an encoder-decoder architecture

- input: query q i q_i qi

- output: generates multiple query intent descriptions of the query by taking the top k i k_i ki most likely predictions

- drawback: These generations are often synonyms or refer to the same concept

- using an encoder-decoder architecture

- another straightforward solution:

- task as a sequence-to-sequence problem

- input: query q i q_i qi

- output: generate all the query intent descriptions concatenated with each other(like translation)

- drawback:

- different intent representations are not distinguishable in the last layer of the model.

- most existing effective text encoding models are not able to represent long sequences of tokens, such as a concatenation of the top 𝑚 retrieved documents

- task as a sequence-to-sequence problem

NMIR Framework:

- 𝜙 (·) and 𝜓 (·) denote a text encoder and decoder pair

Step1: NMIR assigns each learned document representation to one of the query intent descriptions f i j f_ij fij ∈ 𝐹𝑖 using a document-intent matching algorithm 𝛾:

- C i ∗ C_i^* Ci∗ is a set of documents and each C i j ∗ C_{ij}^* Cij∗ is a set of documents form D i D_i Di that are assigned to f i j f_{ij} fij by 𝛾.

Step2: NMIR then transforms the encoded general query representation to its intent representations through a query intent encoder 𝜁.

- the representation for the j t h j^{th} jth query intent is obtained using 𝜁 (𝑞𝑖 , C i j ∗ C_{ij}^* Cij∗ ;𝜙).

Train: training for a mini-batch 𝑏 is based on a gradient descent-based minimization:

-

q

i

j

∗

q_{ij}^*

qij∗ is a concatenation of the query string, the first 𝑗 −1 intent descriptions, and 𝑘𝑖 − 𝑗 mask tokens

- given the associated cluster C i j ∗ C_{ij}^* Cij∗ and the encoded query text plus the past 𝑗−1 intent descriptions.

- helps the model avoid generating the previous intent representations and learn widely distributed representations

where L C E L_{CE} LCE is the cross-entropy loss

- f i j t f_{ijt} fijt is the t t h t^{th} tth token in the given intent description f i j f_{ij} fij.

Inference: q i j ∗ q_{ij}^* qij∗ s are constructed differently.

- first feed“𝑞𝑖 …” to the model and apply beam search to the decoder’s output to obtain

the first intent description f i 1 f_{i1} fi1'. - then use the model’s output to iteratively create the input for the next step “𝑞𝑖 f i 1 f_{i1} fi1’ …”and repeat this process

Model Implementation and Training

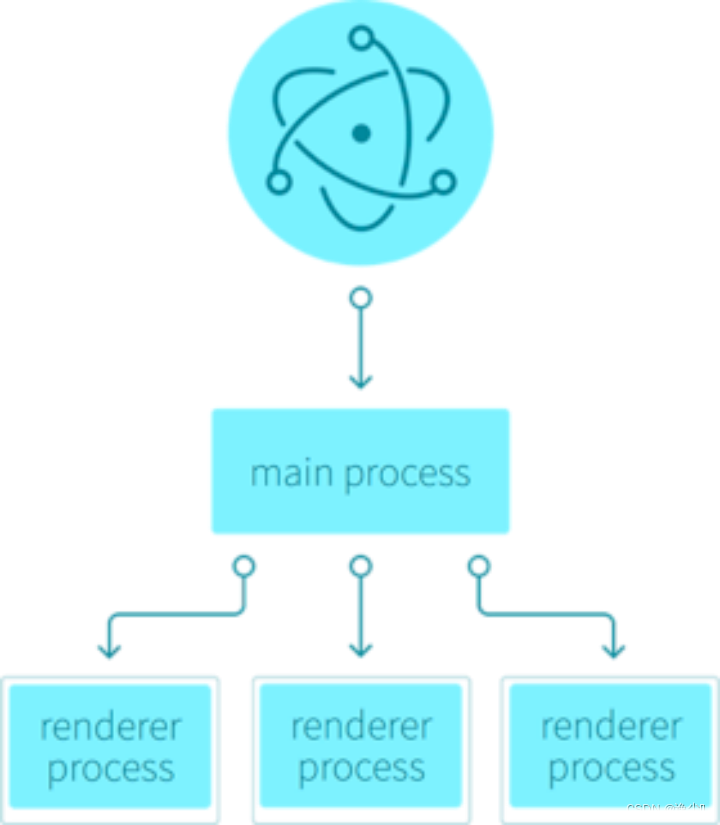

Figure1(a) represents the model architecture.

- use

Transformer encoder and decoder architectures(pre-trainedBART) for implementing 𝜙 and𝜓, respectively

The intent encoding component 𝜁 : use

N

′

N'

N′ layers Guided Transformer model

Guided Transformeris used for influencing an input representation by the guidance of some external information.- we use 𝜙 ( q i j q_{ij} qij ) as the input representation and 𝜙 (𝑑) :∀𝑑 ∈ C i j ∗ C_{ij}^* Cij∗ as the external information.

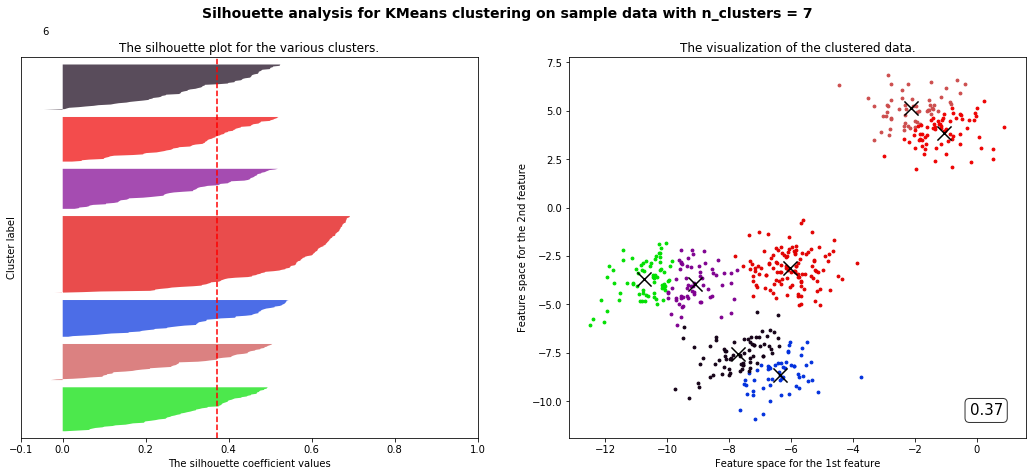

The document-intent matching component 𝛾 : develop a clustering algorithm

-

encodes all the top retrieved documents and creates k i k_i ki clusters, using a clustering algorithm(use K-Means).

- C i j = { C i 1 , ⋯ , C i k i } C_{ij} = \{C_{i1},\cdots,C_{ik_i}\} Cij={Ci1,⋯,Ciki} denotes a set of clusters and each C i j C_{ij} Cij contains all the documents in the 𝑗 th cluster associated with the query 𝑞𝑖 .

- M i = { μ i 1 , ⋯ μ i k i } M_i=\{\mu_{i1},\cdots\mu_{ik_i}\} Mi={μi1,⋯μiki}is a set of all cluster centroids such that μ i j \mu_{ij} μij = centroid( C i j C_{ij} Cij).

-

K-Means requires the number of clusters as input.

- consider two cases at inference time

- assume the number of clusters is equal to a tuned hyper-parameter 𝑘∗ for all queries

- replace the K-Means algorithm by a non-parametric version of K-Means

- consider two cases at inference time

-

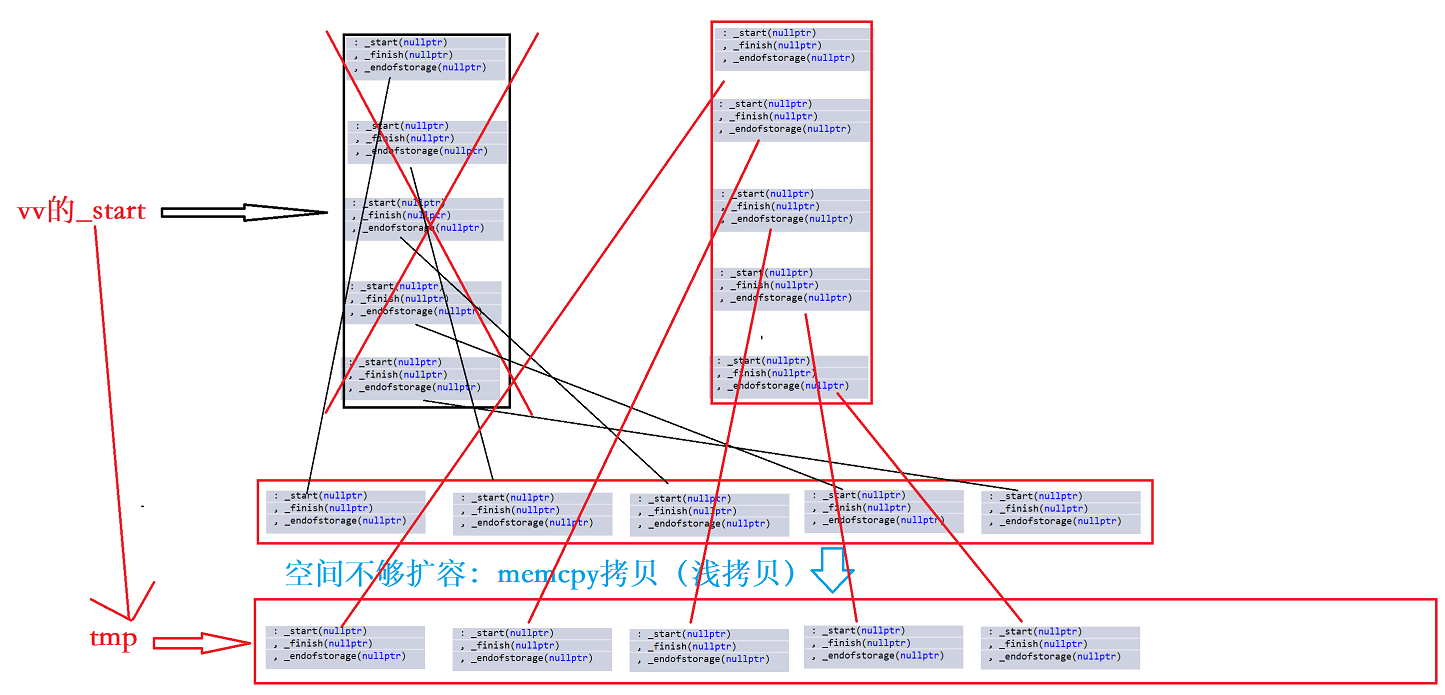

Issue: The component 𝛾 requires a one-to-one assignment between the cluster centroids and the query intents in the training data, all clusters may be assigned to a single most dominant query intent. So we use the intent identification function I:

- my view: the problem is how to assign centroids to query intents after clustering.

-

output:

𝛾 is not differentiable and cannot be part of the network for gradient descent-based optimization. We move it to an asynchronous process as figure1(b) below:

Asynchronous training: use asynchronous training method to speed up(the clustering of document representations is an efficiency bottleneck) described as figure1(b)

Data

- training data: We follow a weak supervision solution based on the

MIMIC-Clickdataset, recently released by Zamani et al.MIMICS: A Large-Scale Data Collection for Search Clarification - evaluation data:

Qulacdataset

![Java高效率复习-MySQL上篇[MySQL]](https://img-blog.csdnimg.cn/53b1cf967a2b409ba856f2e0e908d02a.png)