Table of contents

- I. CIFAR10 and LeNet-5

- II. CIFAR10 and ResNet

I. CIFAR10 and LeNet-5

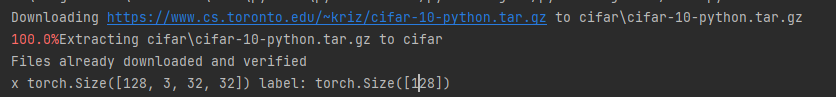

Step 1: Prepare the dataset

main.py

import torch
from torch.utils.data import DataLoader
from torchvision import datasets
from torchvision import transforms


def main():
    batchsz = 128

    # Training split: resize to 32x32, convert to a tensor, normalize per channel
    CIFAR_train = datasets.CIFAR10('cifar', True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    cifar_train = DataLoader(CIFAR_train, batch_size=batchsz, shuffle=True)

    # Test split with the same preprocessing
    CIFAR_test = datasets.CIFAR10('cifar', False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    cifar_test = DataLoader(CIFAR_test, batch_size=batchsz, shuffle=True)

    # Fetch one batch to check the shapes
    x, label = next(iter(cifar_train))
    print('x', x.shape, 'label:', label.shape)


if __name__ == '__main__':
    main()

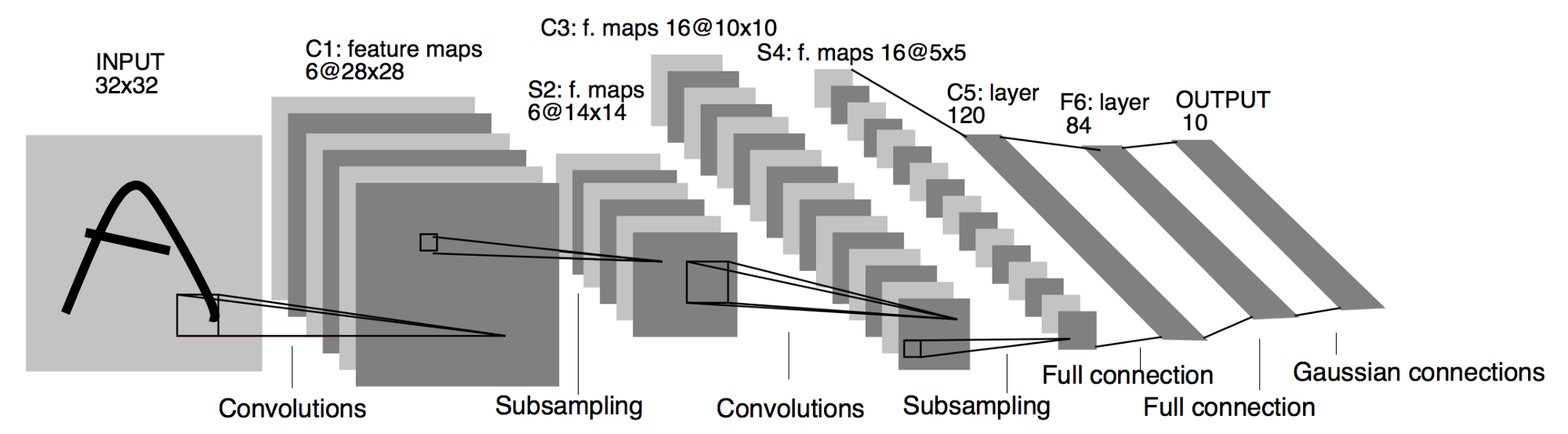

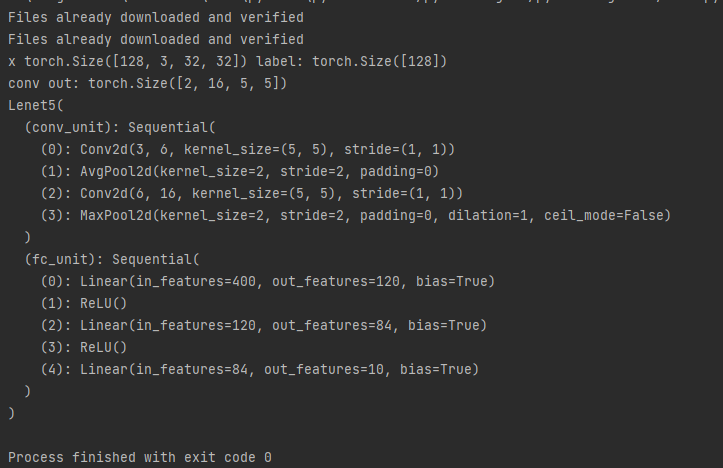

Step 2: Confirm the LeNet-5 network structure

lenet5.py

import torch
from torch import nn
from torch.nn import functional as F


class Lenet5(nn.Module):

    def __init__(self):
        super(Lenet5, self).__init__()

        self.conv_unit = nn.Sequential(
            # x: [b, 3, 32, 32] => [b, 6, 28, 28]
            nn.Conv2d(3, 6, kernel_size=5, stride=1, padding=0),
            # [b, 6, 28, 28] => [b, 6, 14, 14]
            nn.AvgPool2d(kernel_size=2, stride=2, padding=0),
            # [b, 6, 14, 14] => [b, 16, 10, 10]
            nn.Conv2d(6, 16, kernel_size=5, stride=1, padding=0),
            # [b, 16, 10, 10] => [b, 16, 5, 5]
            nn.MaxPool2d(kernel_size=2, stride=2, padding=0),
        )

        self.fc_unit = nn.Sequential(
            # 2 is a placeholder: the real in_features is worked out
            # from the flattened conv output printed further down
            nn.Linear(2, 120),
            nn.ReLU(),
            nn.Linear(120, 84),
            nn.ReLU(),
            nn.Linear(84, 10)
        )

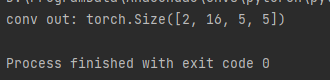

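        # Still inside __init__: pass a dummy batch [b, 3, 32, 32]
        # through the conv stack to read off its output shape
        tmp = torch.randn(2, 3, 32, 32)
        out = self.conv_unit(tmp)
        # The printed shape is [2, 16, 5, 5], so the flattened size is 16*5*5
        print('conv out:', out.shape)


def main():
    net = Lenet5()


if __name__ == '__main__':
    main()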
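The `[2, 16, 5, 5]` shape read off the dummy batch can also be worked out by hand. The sketch below is a minimal illustration, assuming the standard output-size formula for convolution and pooling layers, `(size + 2*padding - kernel) // stride + 1`; the helper names `conv_out_size` and `lenet5_feature_shape` are made up for this example and are not part of PyTorch.

```python
def conv_out_size(size, kernel, stride=1, padding=0):
    """Output height/width of a conv or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


def lenet5_feature_shape(in_size=32):
    """Trace the spatial size through conv5 -> pool2 -> conv5 -> pool2."""
    s = conv_out_size(in_size, kernel=5)       # Conv2d(3, 6, 5):    32 -> 28
    s = conv_out_size(s, kernel=2, stride=2)   # AvgPool2d(2, 2):    28 -> 14
    s = conv_out_size(s, kernel=5)             # Conv2d(6, 16, 5):   14 -> 10
    s = conv_out_size(s, kernel=2, stride=2)   # MaxPool2d(2, 2):    10 -> 5
    return (16, s, s)                          # 16 output channels from the second conv


channels, h, w = lenet5_feature_shape()
flat_features = channels * h * w
print((channels, h, w), flat_features)  # (16, 5, 5) 400
```

The value 400 is the `in_features` that the first `nn.Linear` layer needs once the conv output is flattened.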

Step 3: Complete the LeNet-5 structure and use GPU acceleration

lenet5.py

import torch
from torch import nn
from torch.nn import functional as F


class Lenet5(nn.Module):

    def __init__(self):
        super(Lenet5, self).__init__()

        self.conv_unit = nn.Sequential(
            # x: [b, 3, 32, 32] => [b, 6, 28, 28]
            nn.Conv2d(3, 6, kernel_size=5, stride=1, padding=0),
            # [b, 6, 28, 28] => [b, 6, 14, 14]
            nn.AvgPool2d(kernel_size=2, stride=2, padding=0),
            # [b, 6, 14, 14] => [b, 16, 10, 10]
            nn.Conv2d(6, 16, kernel_size=5, stride=1, padding=0),
            # [b, 16, 10, 10] => [b, 16, 5, 5]
            nn.MaxPool2d(kernel_size=2, stride=2, padding=0),
        )

        self.fc_unit = nn.Sequential(
            nn.Linear(16*5*5, 120),
            nn.ReLU(),
            nn.Linear(120, 84),
            nn.ReLU(),
            nn.Linear(84, 10)
        )

        # Shape check with a dummy batch: [b, 3, 32, 32] => [b, 16, 5, 5]
        tmp = torch.randn(2, 3, 32, 32)
        out = self.conv_unit(tmp)
        print('conv out:', out.shape)

    def forward(self, x):
        batchsz = x.size(0)
        # [b, 3, 32, 32] => [b, 16, 5, 5]
        x = self.conv_unit(x)
        # [b, 16, 5, 5] => [b, 16*5*5]
        x = x.view(batchsz, 16*5*5)
        # [b, 16*5*5] => [b, 10]
        logits = self.fc_unit(x)
        # Return raw logits: nn.CrossEntropyLoss applies log-softmax itself,
        # so no softmax is needed here
        return logits


def main():
    net = Lenet5()
    tmp = torch.randn(2, 3, 32, 32)
    out = net(tmp)
    print('lenet out:', out.shape)


if __name__ == '__main__':
    main()

main.py

import torch
from torch.utils.data import DataLoader
from torchvision import datasets
from torchvision import transforms
from lenet5 import Lenet5
from torch import nn, optim


def main():
    batchsz = 128

    CIFAR_train = datasets.CIFAR10('cifar', True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_train = DataLoader(CIFAR_train, batch_size=batchsz, shuffle=True)

    CIFAR_test = datasets.CIFAR10('cifar', False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_test = DataLoader(CIFAR_test, batch_size=batchsz, shuffle=True)

    # Fetch one batch to check the shapes
    x, label = next(iter(cifar_train))
    print('x', x.shape, 'label:', label.shape)

    # Use the GPU when one is available, otherwise fall back to the CPU
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    model = Lenet5().to(device)
    print(model)


if __name__ == '__main__':
    main()

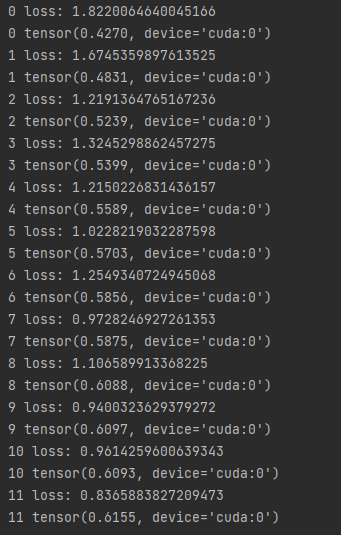

Step 4: Compute the cross-entropy loss and the accuracy, and run the training loop

import torch
from torch.utils.data import DataLoader
from torchvision import datasets
from torchvision import transforms
from lenet5 import Lenet5
from torch import nn, optim


def main():
    batchsz = 128

    CIFAR_train = datasets.CIFAR10('cifar', True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_train = DataLoader(CIFAR_train, batch_size=batchsz, shuffle=True)

    CIFAR_test = datasets.CIFAR10('cifar', False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_test = DataLoader(CIFAR_test, batch_size=batchsz, shuffle=True)

    x, label = next(iter(cifar_train))
    print('x', x.shape, 'label:', label.shape)

    # Use the GPU when one is available, otherwise fall back to the CPU
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    model = Lenet5().to(device)
    criteon = nn.CrossEntropyLoss().to(device)
    optimizer = optim.Adam(model.parameters(), lr=1e-3)
    print(model)

    for epoch in range(1000):

        # Switch back to training mode (eval() is set at the end of each epoch)
        model.train()
        for batchidx, (x, label) in enumerate(cifar_train):
            # x: [b, 3, 32, 32], label: [b]
            x, label = x.to(device), label.to(device)

            # logits: [b, 10], label: [b]
            logits = model(x)
            loss = criteon(logits, label)

            # backprop
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

        # Loss of the last batch in this epoch
        print(epoch, 'loss:', loss.item())

        model.eval()
        with torch.no_grad():  # no gradients are needed during evaluation
            total_correct = 0
            total_num = 0
            for x, label in cifar_test:
                # x: [b, 3, 32, 32], label: [b]
                x, label = x.to(device), label.to(device)

                logits = model(x)
                pred = logits.argmax(dim=1)
                total_correct += torch.eq(pred, label).float().sum().item()
                total_num += x.size(0)

            acc = total_correct / total_num
            print(epoch, acc)


if __name__ == '__main__':
    main()
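The training loop feeds raw logits to `nn.CrossEntropyLoss` and takes the accuracy from `argmax` over the logits. As a rough, framework-free sketch of what those two quantities are, the code below computes the cross-entropy as `-log(softmax(logits)[label])` and the accuracy as the fraction of correct argmax predictions. The function names and the sample logits are invented for illustration; this is not PyTorch's implementation, only the same arithmetic on plain lists.

```python
import math


def cross_entropy(logits, label):
    """Cross-entropy of one sample from raw logits: -log(softmax(logits)[label])."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def accuracy(batch_logits, labels):
    """Fraction of samples whose largest logit sits at the true label."""
    correct = 0
    for logits, label in zip(batch_logits, labels):
        pred = max(range(len(logits)), key=lambda i: logits[i])  # argmax
        correct += int(pred == label)
    return correct / len(labels)


# Made-up logits for a batch of 3 samples and 3 classes
batch_logits = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0], [1.0, 1.5, 0.0]]
labels = [0, 2, 0]

# Mean over the batch, matching the default 'mean' reduction of the loss
mean_loss = sum(cross_entropy(l, y) for l, y in zip(batch_logits, labels)) / len(labels)
print('loss:', mean_loss, 'acc:', accuracy(batch_logits, labels))
```

Because the softmax is folded into the loss, the model's `forward` only has to return logits, and `argmax` over logits gives the same prediction as `argmax` over softmax probabilities.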

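Notes:

- `model.eval()` is called before testing because, in eval mode, BatchNorm layers use the running mean and variance accumulated over training instead of the statistics of the current batch, and Dropout is disabled. Call `model.train()` again before the next training epoch.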
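To make the train/eval difference concrete, here is a toy batch-norm layer in plain Python. It is a deliberately simplified sketch: the class `ToyBatchNorm` is hypothetical, it has no learnable scale or shift, and its running-variance update uses the biased batch variance, so it only approximates what `nn.BatchNorm2d` does. Dropout is not modelled.

```python
class ToyBatchNorm:
    """Toy 1-D batch norm over a list of floats (illustration only)."""

    def __init__(self, momentum=0.1, eps=1e-5):
        self.momentum = momentum
        self.eps = eps
        self.running_mean = 0.0
        self.running_var = 1.0
        self.training = True

    def train(self):
        self.training = True

    def eval(self):
        self.training = False

    def __call__(self, batch):
        if self.training:
            # Training: normalize with the statistics of the current batch
            n = len(batch)
            mean = sum(batch) / n
            var = sum((v - mean) ** 2 for v in batch) / n
            # ...and update the running estimates (exponential moving average)
            self.running_mean = (1 - self.momentum) * self.running_mean + self.momentum * mean
            self.running_var = (1 - self.momentum) * self.running_var + self.momentum * var
        else:
            # Evaluation: use the stored running estimates, update nothing
            mean, var = self.running_mean, self.running_var
        return [(v - mean) / (var + self.eps) ** 0.5 for v in batch]


bn = ToyBatchNorm()
for _ in range(200):
    bn([4.0, 6.0])            # training batches with mean 5 and variance 1

bn.eval()
out_a = bn([5.0])             # a single sample works in eval mode
out_b = bn([5.0, 100.0])      # other samples in the batch do not change the result
print(out_a[0], out_b[0])
```

In eval mode the output for a sample depends only on the stored statistics, which is why test results do not vary with the batch composition.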

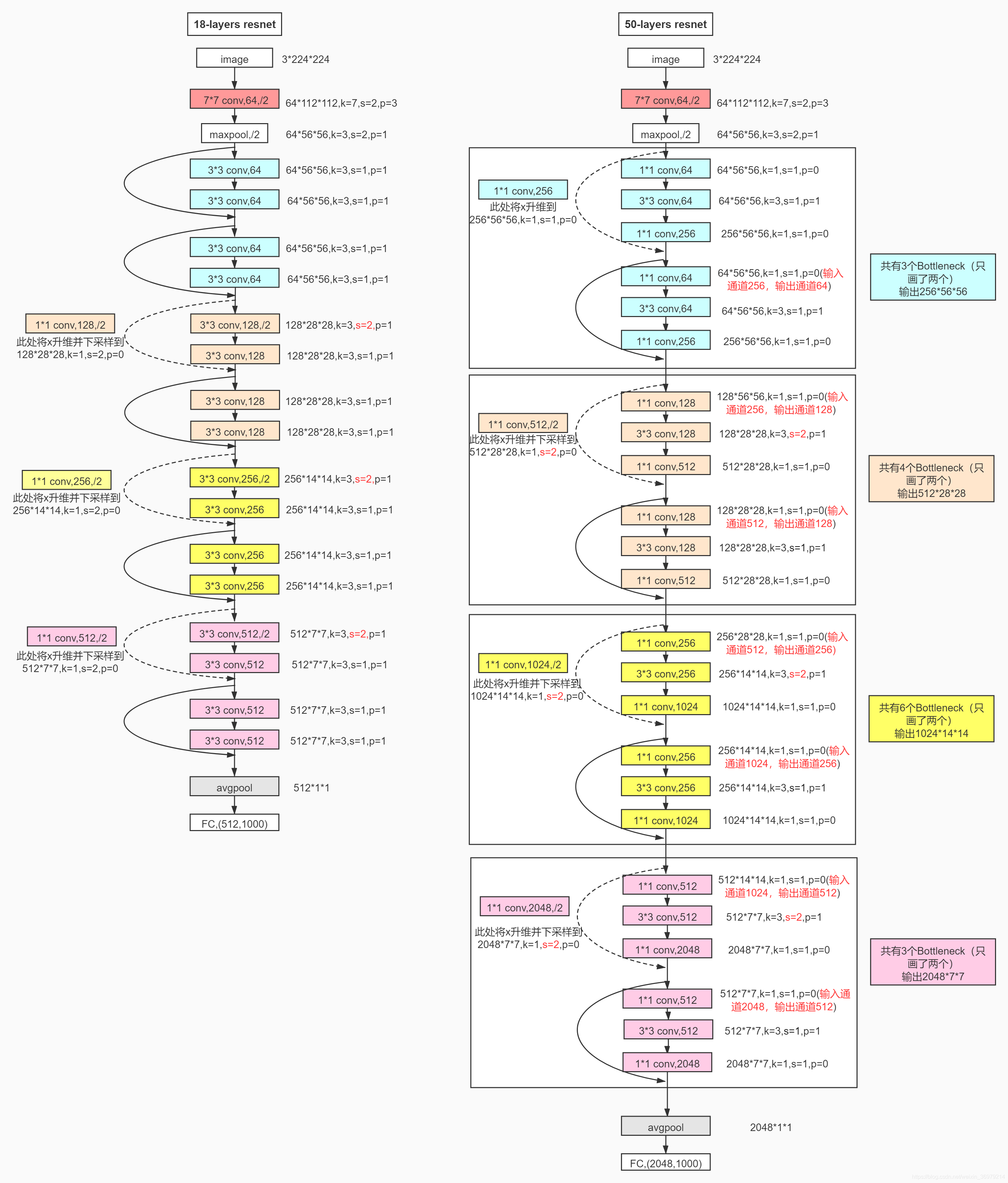

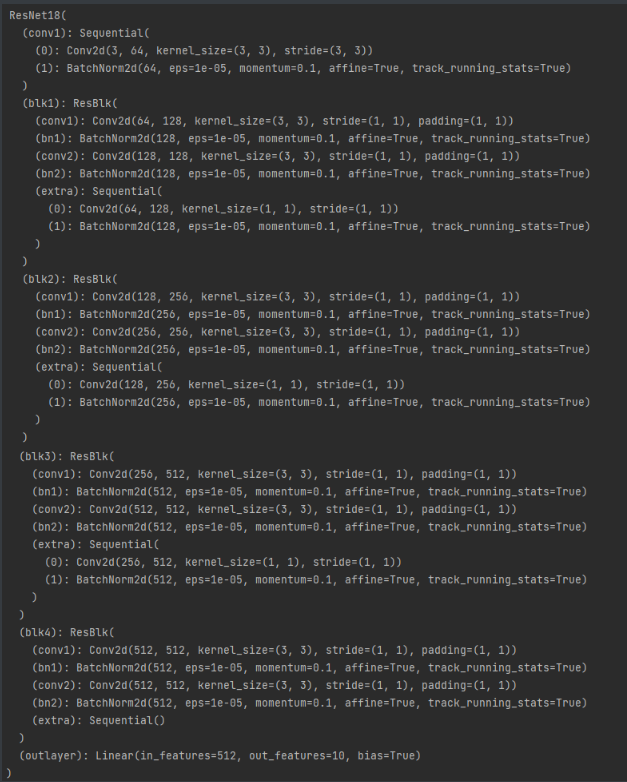

II. CIFAR10 and ResNet

Step 1: Build the ResNet18 network structure

resnet.py

import torch
from torch import nn
from torch.nn import functional as F


class ResBlk(nn.Module):
    """Residual block: two 3x3 conv + BN layers plus a shortcut connection."""

    def __init__(self, ch_in, ch_out, stride=1):
        super(ResBlk, self).__init__()

        self.conv1 = nn.Conv2d(ch_in, ch_out, kernel_size=3, stride=stride, padding=1)
        self.bn1 = nn.BatchNorm2d(ch_out)
        self.conv2 = nn.Conv2d(ch_out, ch_out, kernel_size=3, stride=1, padding=1)
        self.bn2 = nn.BatchNorm2d(ch_out)

        # Identity shortcut by default. When the channel count or the spatial
        # size changes, project x with a 1x1 conv so the two branches can be added.
        self.extra = nn.Sequential()
        if stride != 1 or ch_out != ch_in:
            self.extra = nn.Sequential(
                nn.Conv2d(ch_in, ch_out, kernel_size=1, stride=stride),
                nn.BatchNorm2d(ch_out)
            )

    def forward(self, x):
        out = F.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        # shortcut: x [b, ch_in, h, w] is mapped to the shape of out
        out = self.extra(x) + out
        out = F.relu(out)
        return out


class ResNet18(nn.Module):

    def __init__(self):
        super(ResNet18, self).__init__()

        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=3, stride=3, padding=0),
            nn.BatchNorm2d(64)
        )
        # followed by 4 blocks
        # [b, 64, h, w] => [b, 128, h, w]
        self.blk1 = ResBlk(64, 128)
        # [b, 128, h, w] => [b, 256, h, w]
        self.blk2 = ResBlk(128, 256)
        # [b, 256, h, w] => [b, 512, h, w]
        self.blk3 = ResBlk(256, 512)
        # [b, 512, h, w] => [b, 512, h, w]
        self.blk4 = ResBlk(512, 512)

        self.outlayer = nn.Linear(512*1*1, 10)

    def forward(self, x):
        x = F.relu(self.conv1(x))

        x = self.blk1(x)
        x = self.blk2(x)
        x = self.blk3(x)
        x = self.blk4(x)
        print('after conv:', x.shape)

        # [b, 512, h, w] => [b, 512, 1, 1]
        x = F.adaptive_avg_pool2d(x, [1, 1])
        print('after pool:', x.shape)

        # [b, 512, 1, 1] => [b, 512] => [b, 10]
        x = x.view(x.size(0), -1)
        x = self.outlayer(x)
        return x


def main():
    # Check a single block
    blk = ResBlk(64, 128, stride=2)
    tmp = torch.randn(2, 64, 32, 32)
    out = blk(tmp)
    print('block:', out.shape)

    # Check the whole network
    x = torch.randn(2, 3, 32, 32)
    model = ResNet18()
    out = model(x)
    print('resnet:', out.shape)


if __name__ == '__main__':
    main()
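The residual addition only works if the main branch and the shortcut produce tensors of the same shape, which happens when the shortcut is projected whenever the stride or the channel count changes. The sketch below checks this on paper for the block tested in `main()` and for the stem convolution. It assumes the usual size formula `(size + 2*padding - kernel) // stride + 1`; `conv_out_size` and `res_block_shapes` are hypothetical helpers written for this example.

```python
def conv_out_size(size, kernel, stride=1, padding=0):
    """Output height/width of a conv layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


def res_block_shapes(ch_in, ch_out, size, stride=1):
    """Return (main_branch_shape, shortcut_shape) for one residual block."""
    # Main branch: 3x3 conv (given stride, padding 1), then 3x3 conv (stride 1, padding 1)
    s = conv_out_size(size, kernel=3, stride=stride, padding=1)
    s = conv_out_size(s, kernel=3, stride=1, padding=1)
    main = (ch_out, s, s)
    if stride != 1 or ch_in != ch_out:
        # Projection shortcut: 1x1 conv with the same stride and no padding
        p = conv_out_size(size, kernel=1, stride=stride, padding=0)
        shortcut = (ch_out, p, p)
    else:
        # Identity shortcut: the input passes through unchanged
        shortcut = (ch_in, size, size)
    return main, shortcut


main_shape, shortcut_shape = res_block_shapes(64, 128, size=32, stride=2)
print(main_shape, shortcut_shape)  # (128, 16, 16) (128, 16, 16)

# Stem conv: kernel 3, stride 3, no padding on a 32x32 input
stem = conv_out_size(32, kernel=3, stride=3, padding=0)
print(stem)  # 10
```

Since the four blocks in `ResNet18` all use stride 1, the 10x10 feature map from the stem keeps its size until the adaptive average pooling reduces it to 1x1.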

Step 2: Plug the model into the main function from the first project

main.py

import torch
from torch.utils.data import DataLoader
from torchvision import datasets
from torchvision import transforms
from resnet import ResNet18
from torch import nn, optim


def main():
    batchsz = 128

    CIFAR_train = datasets.CIFAR10('cifar', True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_train = DataLoader(CIFAR_train, batch_size=batchsz, shuffle=True)

    CIFAR_test = datasets.CIFAR10('cifar', False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor()
    ]), download=True)
    cifar_test = DataLoader(CIFAR_test, batch_size=batchsz, shuffle=True)

    x, label = next(iter(cifar_train))
    print('x', x.shape, 'label:', label.shape)

    # Use the GPU when one is available, otherwise fall back to the CPU
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    model = ResNet18().to(device)
    criteon = nn.CrossEntropyLoss().to(device)
    optimizer = optim.Adam(model.parameters(), lr=1e-3)
    print(model)

    for epoch in range(1000):

        # Training mode: BatchNorm uses batch statistics and updates its running estimates
        model.train()
        for batchidx, (x, label) in enumerate(cifar_train):
            # x: [b, 3, 32, 32], label: [b]
            x, label = x.to(device), label.to(device)

            # logits: [b, 10], label: [b]
            logits = model(x)
            loss = criteon(logits, label)

            # backprop
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

        # Loss of the last batch in this epoch
        print(epoch, 'loss:', loss.item())

        # Eval mode: BatchNorm switches to its running statistics
        model.eval()
        with torch.no_grad():  # no gradients are needed during evaluation
            total_correct = 0
            total_num = 0
            for x, label in cifar_test:
                # x: [b, 3, 32, 32], label: [b]
                x, label = x.to(device), label.to(device)

                logits = model(x)
                pred = logits.argmax(dim=1)
                total_correct += torch.eq(pred, label).float().sum().item()
                total_num += x.size(0)

            acc = total_correct / total_num
            print(epoch, acc)


if __name__ == '__main__':
    main()

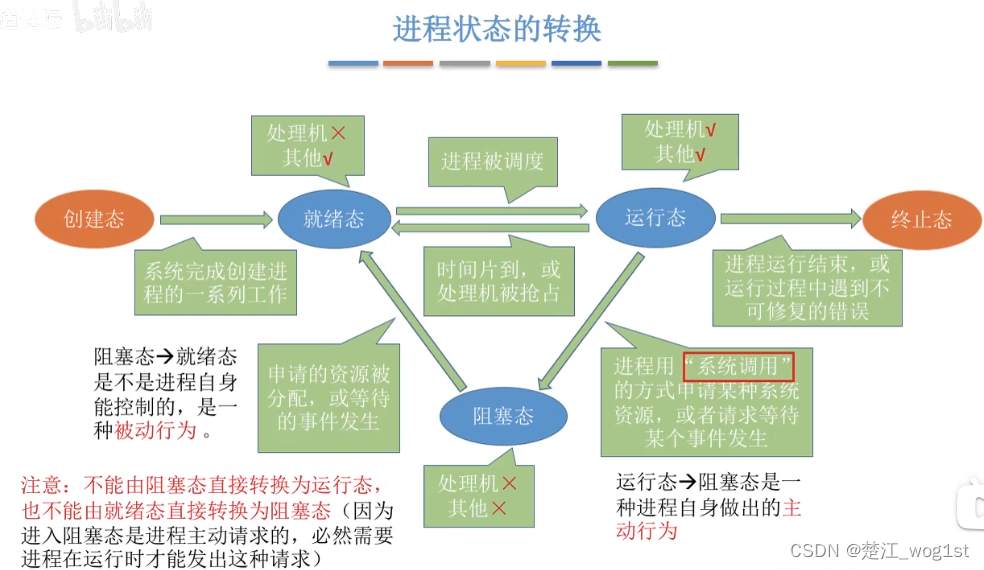

The network structure is as follows:

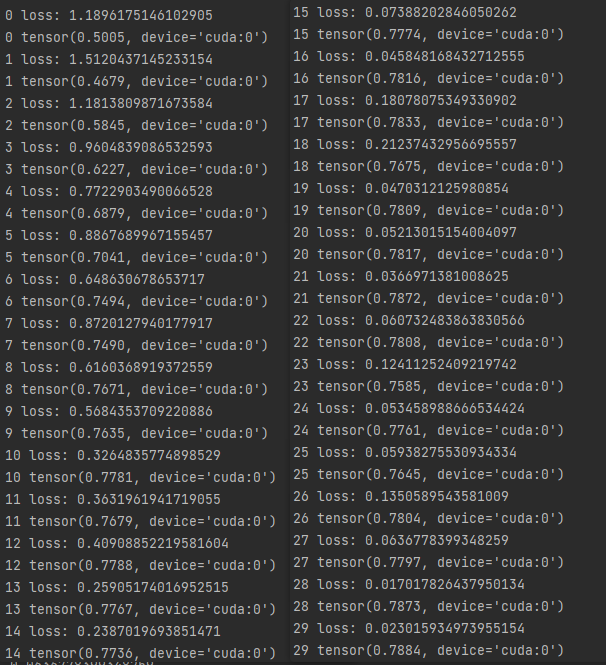

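The accuracy and cross-entropy loss over the epochs are as follows:

Other points to note:

- This network is not identical to the architecture in the ResNet paper, but it is very similar.

- Data augmentation and normalization can be applied to the data to improve the results further.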