Day11 - K8S log collection and building a highly available Kubernetes cluster: hands-on cases

- 0、昨日内容回顾

- 1、日志收集

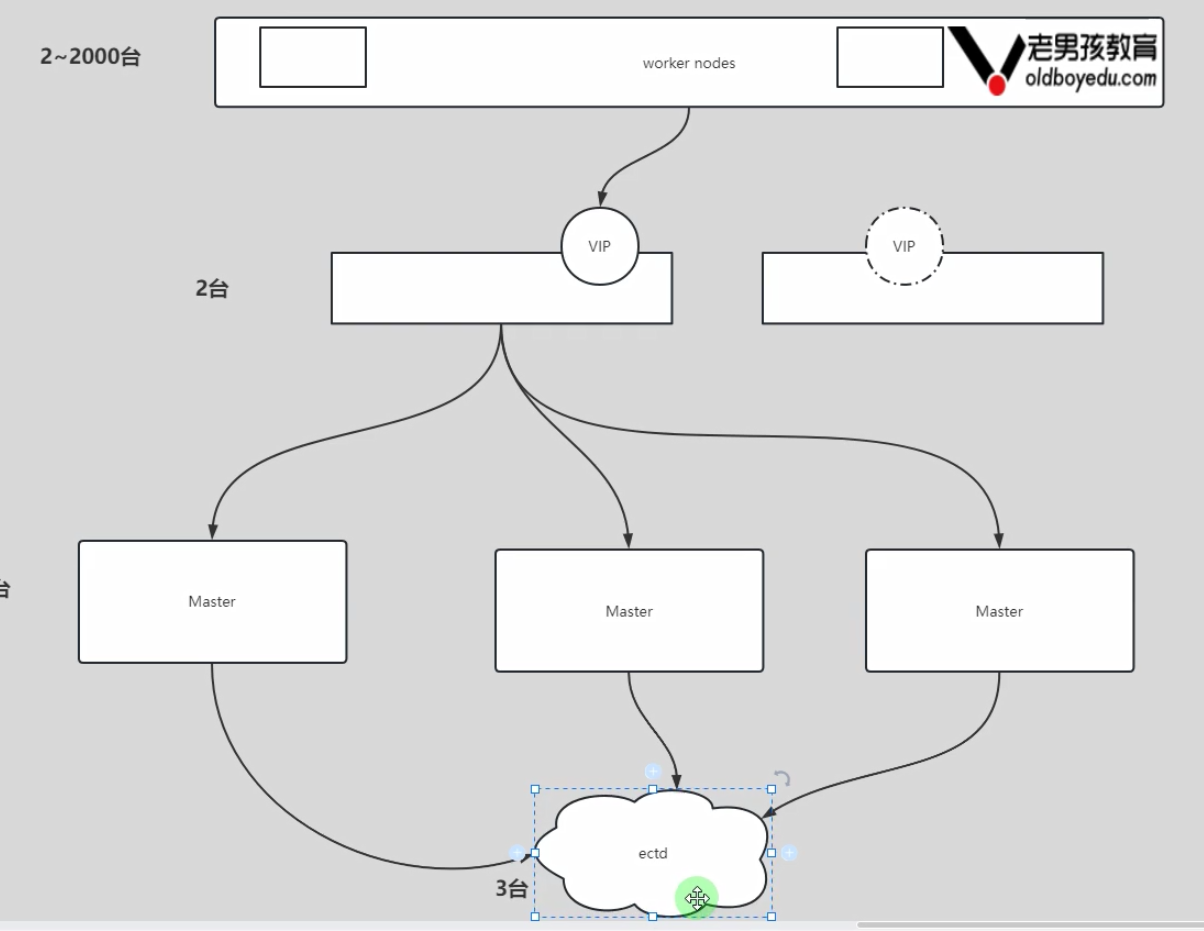

- 1.1 K8S日志收集项目之架构图解三种方案

- 1.2 部署ES

- 1.3 部署kibana

- 1.4 部署filebeat

- 2、监控系统

- 2.1 部署prometheus

- 3、K8S二进制部署

- 3.1 K8S二进制部署准备环境

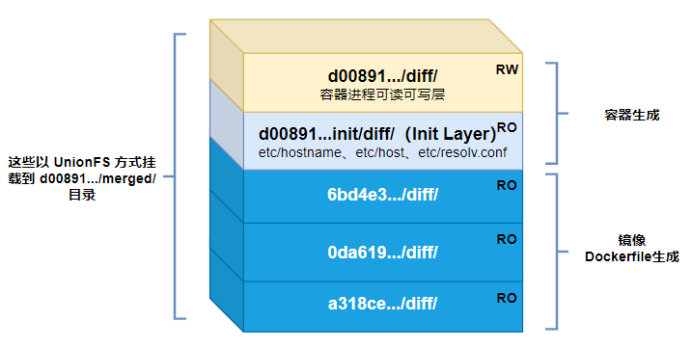

- 3.2 基础组件安装

- 3.3 生成K8S集群证书文件

- 3.4 二进制高可用及etcd配置

- 3.5 高可用配置(haproxy+keepalived)

- 3.6 二进制K8s master组件配置

- 3.7 创建Bootstrapping自动颁发证书

- 3.8 部署Node节点

- 3.9 部署网络插件

- 3.10 附加组件部署

0. Review of yesterday's content

- Homework walkthrough

- Ingress resources

- Jenkins integration with K8S

1. Log collection

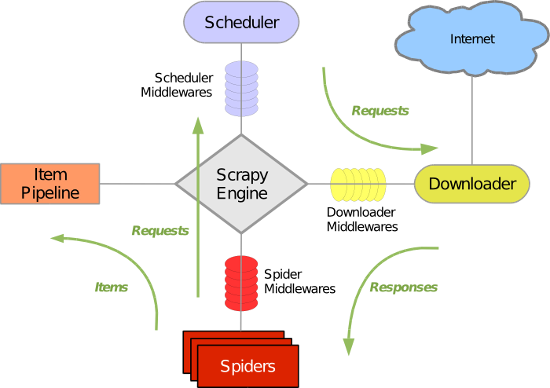

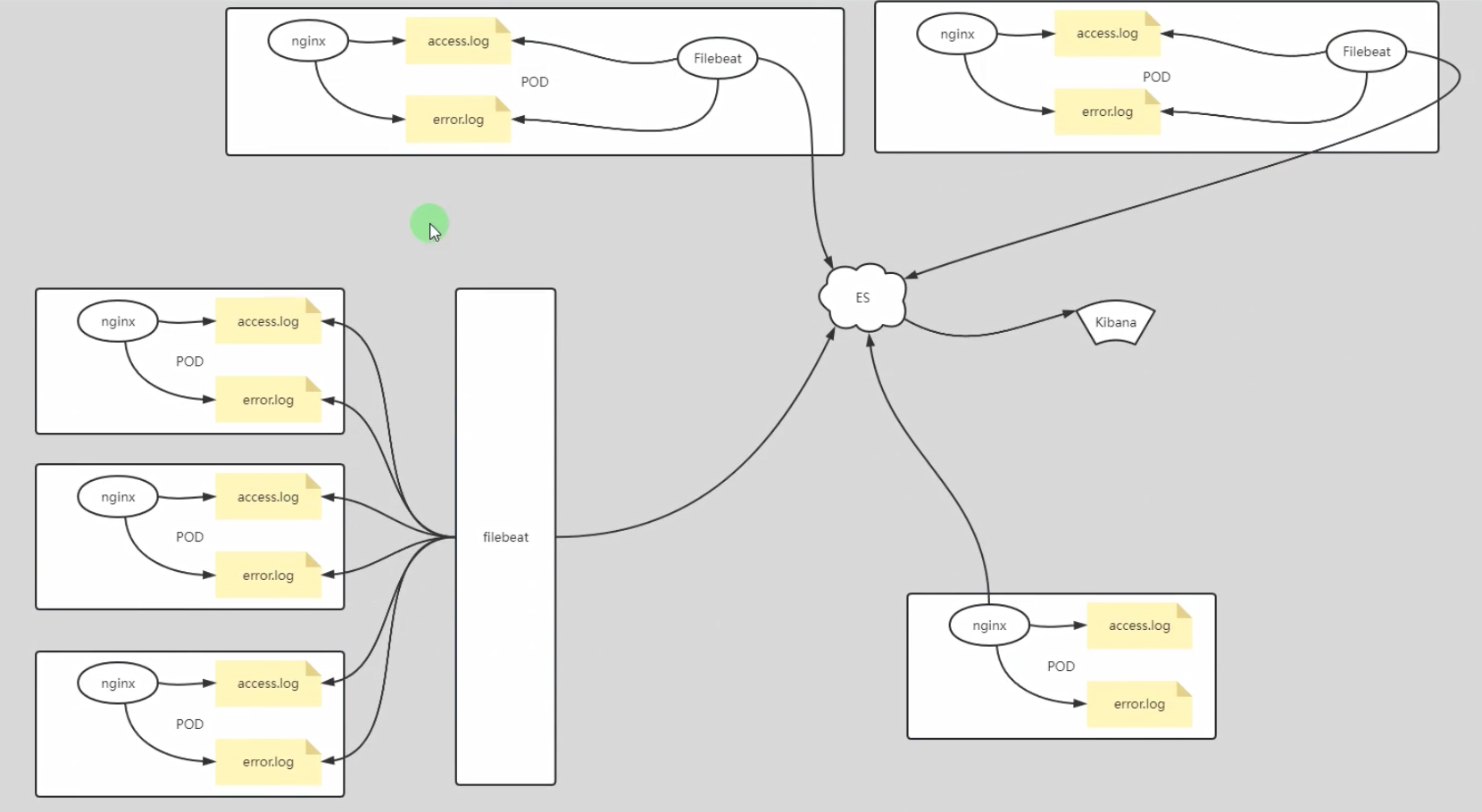

1.1 K8S log collection project: architecture diagrams of three approaches

1.2 Deploy ES

[root@k8s231 ~]# docker pull elasticsearch:7.17.5

[root@k8s231 ~]# docker tag elasticsearch:7.17.5 harbor.oldboyedu.com/project/elasticsearch:7.17.5

[root@k8s231 ~]# docker login -u admin -p 1 harbor.oldboyedu.com

[root@k8s231 ~]# docker push harbor.oldboyedu.com/project/elasticsearch:7.17.5

[root@k8s231.oldboyedu.com elasticstack]# cat deploy-es.yaml

apiVersion: v1
kind: Namespace
metadata:
  name: oldboyedu-efk
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: elasticsearch
  namespace: oldboyedu-efk
  labels:
    k8s-app: elasticsearch
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: elasticsearch
  template:
    metadata:
      labels:
        k8s-app: elasticsearch
    spec:
      containers:
      # ES version to install
      # - image: elasticsearch:7.17.5
      - image: harbor.oldboyedu.com/project/elasticsearch:7.17.5
        name: elasticsearch
        resources:
          limits:
            cpu: 2
            memory: 3Gi
          requests:
            cpu: 0.5
            memory: 500Mi
        env:
        # Cluster discovery mode: this is a lab, so a single node is used
        - name: "discovery.type"
          value: "single-node"
        - name: ES_JAVA_OPTS
          value: "-Xms512m -Xmx512m"
        ports:
        - containerPort: 9200
          name: db
          protocol: TCP
        volumeMounts:
        - name: elasticsearch-data
          mountPath: /usr/share/elasticsearch/data
      volumes:
      - name: elasticsearch-data
        persistentVolumeClaim:
          claimName: es-pvc
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: es-pvc
  namespace: oldboyedu-efk
spec:
  storageClassName: "managed-nfs-storage"
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 10Gi
---
apiVersion: v1
kind: Service
metadata:
  name: elasticsearch
  namespace: oldboyedu-efk
spec:
  ports:
  - port: 9200
    protocol: TCP
    targetPort: 9200
  selector:
    k8s-app: elasticsearch

[root@k8s231.oldboyedu.com elasticstack]#

[root@k8s231.oldboyedu.com elasticstack]# kubectl apply -f deploy-es.yaml

namespace/oldboyedu-efk created

deployment.apps/elasticsearch created

persistentvolumeclaim/es-pvc created

service/elasticsearch created

[root@k8s231.oldboyedu.com elasticstack]#

1.3 Deploy Kibana

[root@k8s231 ~]# docker pull kibana:7.17.5

[root@k8s231 ~]# docker tag kibana:7.17.5 harbor.oldboyedu.com/project/kibana:7.17.5

[root@k8s231 ~]# docker push harbor.oldboyedu.com/project/kibana:7.17.5

[root@k8s231.oldboyedu.com elasticstack]# cat deploy-kibana.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana
  namespace: oldboyedu-efk
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: kibana
  template:
    metadata:
      labels:
        k8s-app: kibana
    spec:
      containers:
      - name: kibana
        # image: kibana:7.17.5
        image: harbor.oldboyedu.com/project/kibana:7.17.5
        resources:
          limits:
            cpu: 2
            memory: 2Gi
          requests:
            cpu: 0.5
            memory: 500Mi
        env:
        - name: ELASTICSEARCH_HOSTS
          value: http://elasticsearch.oldboyedu-efk.svc.oldboyedu.com:9200
        - name: I18N_LOCALE
          value: zh-CN
        ports:
        - containerPort: 5601
          name: ui
          protocol: TCP
---
apiVersion: v1
kind: Service
metadata:
  name: oldboyedu-kibana
  namespace: oldboyedu-efk
spec:
  # type: NodePort
  ports:
  - port: 5601
    protocol: TCP
    targetPort: ui
    # nodePort: 35601
  selector:
    k8s-app: kibana
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: oldboyedu-kibana-ing
  namespace: oldboyedu-efk
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
  - host: kibana.oldboyedu.com
    http:
      paths:
      - backend:
          service:
            name: oldboyedu-kibana
            port:
              number: 5601
        path: "/"
        pathType: "Prefix"

[root@k8s231.oldboyedu.com elasticstack]#

[root@k8s231.oldboyedu.com elasticstack]# kubectl apply -f deploy-kibana.yaml

deployment.apps/kibana created

service/oldboyedu-kibana created

ingress.networking.k8s.io/oldboyedu-kibana-ing created

[root@k8s231.oldboyedu.com elasticstack]#
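Kibana reaches Elasticsearch through the Service's cluster DNS name, which follows the pattern `<service>.<namespace>.svc.<cluster-domain>`. The cluster domain of this lab cluster is `oldboyedu.com` (the Kubernetes default is `cluster.local`); from a Pod in the same namespace the short name `elasticsearch`, as used in the Filebeat config below, also resolves through the DNS search path. A minimal sketch that builds the full name; `svc_fqdn` is a hypothetical helper, not part of the course files.

```shell
#!/bin/bash
# Hypothetical helper: print the cluster DNS name of a Service.
# Pattern: <service>.<namespace>.svc.<cluster-domain>; the domain defaults to cluster.local.
svc_fqdn() {
  local svc="$1" ns="$2" domain="${3:-cluster.local}"
  echo "${svc}.${ns}.svc.${domain}"
}

# The Elasticsearch address configured in ELASTICSEARCH_HOSTS above
echo "http://$(svc_fqdn elasticsearch oldboyedu-efk oldboyedu.com):9200"
```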

1.4 Deploy Filebeat

[root@k8s231 ~]# docker pull filebeat:7.10.2

Error response from daemon: pull access denied for filebeat, repository does not exist or may require 'docker login': denied: requested access to the resource is denied

[root@k8s231 ~]# docker pull elastic/filebeat:7.10.2

[root@k8s231 ~]# docker tag elastic/filebeat:7.10.2 harbor.oldboyedu.com/project/filebeat:7.10.2

[root@k8s231 ~]# docker push harbor.oldboyedu.com/project/filebeat:7.10.2

[root@k8s231.oldboyedu.com elasticstack]# cat deploy-filebeat.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-config
  namespace: oldboyedu-efk
  labels:
    k8s-app: filebeat
data:
  filebeat.yml: |-
    filebeat.config:
      inputs:
        # Mounted `filebeat-inputs` configmap:
        path: ${path.config}/inputs.d/*.yml
        # Reload inputs configs as they change:
        reload.enabled: false
      modules:
        path: ${path.config}/modules.d/*.yml
        # Reload module configs as they change:
        reload.enabled: false
    output.elasticsearch:
      # hosts: ['elasticsearch.oldboyedu-efk:9200']
      # hosts: ['elasticsearch.oldboyedu-efk.svc.oldboyedu.com:9200']
      hosts: ['elasticsearch:9200']
      # Changing the index is not recommended: once the index name is changed, Pod logs will no longer be collected!
      # Only uncomment the line below if you are sure you do not collect Pod logs and need a custom index name.
      # index: 'oldboyedu-linux-elk-%{+yyyy.MM.dd}'
    # Index template settings
    # setup.ilm.enabled: false
    # setup.template.name: "oldboyedu-linux-elk"
    # setup.template.pattern: "oldboyedu-linux-elk*"
    # setup.template.overwrite: true
    # setup.template.settings:
    #   index.number_of_shards: 3
    #   index.number_of_replicas: 0
---
# Note: the docker input type has been deprecated since Filebeat 7.2; consider switching to the container input later.
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-inputs
  namespace: oldboyedu-efk
  labels:
    k8s-app: filebeat
data:
  kubernetes.yml: |-
    - type: docker
      containers.ids:
      - "*"
      processors:
        - add_kubernetes_metadata:
            in_cluster: true
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: filebeat
  namespace: oldboyedu-efk
  labels:
    k8s-app: filebeat
spec:
  selector:
    matchLabels:
      k8s-app: filebeat
  template:
    metadata:
      labels:
        k8s-app: filebeat
    spec:
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule
        operator: Exists
      serviceAccountName: filebeat
      terminationGracePeriodSeconds: 30
      containers:
      - name: filebeat
        # Note: the image "elastic/filebeat:7.10.2" is recommended here;
        # with versions newer than "elastic/filebeat:7.10.2", Pod logs of the K8s cluster may not be collected.
        # In my tests, the problem was still not fixed in version 7.12.2 (released 2022-04-01)!
        # Filebeat and ES versions do not have to match; the ES version I tested with was 7.17.2.
        #
        # TODO: try a newer image later and switch the input type to container, since the docker input type was deprecated in Filebeat 7.2!
        # image: elastic/filebeat:7.10.2
        image: harbor.oldboyedu.com/project/filebeat:7.10.2
        args: [
          "-c", "/etc/filebeat.yml",
          "-e",
        ]
        # For temporary debugging when something goes wrong; remember to comment out args first
        # command: ["sleep","3600"]
        securityContext:
          runAsUser: 0
          # If using Red Hat OpenShift uncomment this:
          #privileged: true
        resources:
          limits:
            memory: 200Mi
          requests:
            cpu: 100m
            memory: 100Mi
        volumeMounts:
        - name: config
          mountPath: /etc/filebeat.yml
          readOnly: true
          subPath: filebeat.yml
        - name: inputs
          mountPath: /usr/share/filebeat/inputs.d
          readOnly: true
        - name: data
          mountPath: /usr/share/filebeat/data
        - name: varlibdockercontainers
          mountPath: /var/lib/docker/containers
          readOnly: true
      volumes:
      - name: config
        configMap:
          defaultMode: 0600
          name: filebeat-config
      - name: varlibdockercontainers
        hostPath:
          path: /var/lib/docker/containers
      - name: inputs
        configMap:
          defaultMode: 0600
          name: filebeat-inputs
      # data folder stores a registry of read status for all files, so we don't send everything again on a Filebeat pod restart
      - name: data
        hostPath:
          path: /var/lib/filebeat-data
          type: DirectoryOrCreate
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: filebeat
subjects:
- kind: ServiceAccount
  name: filebeat
  namespace: oldboyedu-efk
roleRef:
  kind: ClusterRole
  name: filebeat
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: filebeat
  labels:
    k8s-app: filebeat
rules:
- apiGroups: [""] # "" indicates the core API group
  resources:
  - namespaces
  - pods
  verbs:
  - get
  - watch
  - list
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: filebeat
  namespace: oldboyedu-efk
  labels:
    k8s-app: filebeat

[root@k8s231.oldboyedu.com elasticstack]#

[root@k8s231.oldboyedu.com elasticstack]# kubectl apply -f deploy-filebeat.yaml

2. Monitoring system

2.1 Deploy Prometheus

(1) Download the resource manifests

[root@k8s231.oldboyedu.com project]# wget http://192.168.15.253/Kubernetes/day11-/prometheus.zip

[root@k8s231.oldboyedu.com project]# unzip prometheus.zip

[root@k8s231.oldboyedu.com project]# cd prometheus/

[root@k8s231.oldboyedu.com prometheus]#

(2) Adjust the Endpoints (ep) resources to match your own cluster, as shown in the figure above

[root@k8s231 prometheus]# cd serviceMonitor/ && ls | xargs grep ip

kube-state-metrics-serviceMonitor.yaml: insecureSkipVerify: true

kube-state-metrics-serviceMonitor.yaml: insecureSkipVerify: true

node-exporter-serviceMonitor.yaml: insecureSkipVerify: true

prometheus-EtcdService.yaml: - ip: 10.0.0.151

prometheus-kubeControllerManagerService.yaml: - ip: 10.0.0.151

prometheus-KubeProxyService.yaml: - ip: 10.0.0.151

prometheus-KubeProxyService.yaml: - ip: 10.0.0.152

prometheus-KubeProxyService.yaml: - ip: 10.0.0.153

prometheus-kubeSchedulerService.yaml: - ip: 10.0.0.151

prometheus-serviceMonitorKubelet.yaml: insecureSkipVerify: true

prometheus-serviceMonitorKubelet.yaml: insecureSkipVerify: true

[root@k8s231.oldboyedu.com prometheus]# sed -i 's#10.0.0.151#10.0.0.231#' `ls serviceMonitor/*`

[root@k8s231.oldboyedu.com prometheus]#

[root@k8s231.oldboyedu.com prometheus]# sed -i 's#10.0.0.152#10.0.0.232#' `ls serviceMonitor/*`

[root@k8s231.oldboyedu.com prometheus]#

[root@k8s231.oldboyedu.com prometheus]# sed -i 's#10.0.0.153#10.0.0.233#' `ls serviceMonitor/*`

[root@k8s231.oldboyedu.com prometheus]#

[root@k8s231.oldboyedu.com prometheus]# grep ip serviceMonitor/*

serviceMonitor/kube-state-metrics-serviceMonitor.yaml: insecureSkipVerify: true

serviceMonitor/kube-state-metrics-serviceMonitor.yaml: insecureSkipVerify: true

serviceMonitor/node-exporter-serviceMonitor.yaml: insecureSkipVerify: true

serviceMonitor/prometheus-EtcdService.yaml: - ip: 10.0.0.231

serviceMonitor/prometheus-kubeControllerManagerService.yaml: - ip: 10.0.0.231

serviceMonitor/prometheus-KubeProxyService.yaml: - ip: 10.0.0.231

serviceMonitor/prometheus-KubeProxyService.yaml: - ip: 10.0.0.232

serviceMonitor/prometheus-KubeProxyService.yaml: - ip: 10.0.0.233

serviceMonitor/prometheus-kubeSchedulerService.yaml: - ip: 10.0.0.231

serviceMonitor/prometheus-serviceMonitorKubelet.yaml: insecureSkipVerify: true

serviceMonitor/prometheus-serviceMonitorKubelet.yaml: insecureSkipVerify: true

[root@k8s231.oldboyedu.com prometheus]#
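The three sed commands above can also be written as a single loop over `old=new` pairs. A sketch, assuming the manifests end in `.yaml` as in the listing above; `rewrite_ips` is a hypothetical helper. It escapes the dots first, because in a sed pattern an unescaped `.` matches any character.

```shell
#!/bin/bash
# Hypothetical helper: rewrite IP addresses in every *.yaml file of a directory.
# Usage: rewrite_ips DIR OLD=NEW [OLD=NEW ...]
rewrite_ips() {
  local dir="$1" pair old new
  shift
  for pair in "$@"; do
    old="${pair%%=*}"
    new="${pair#*=}"
    # Escape dots so sed matches them literally, not as "any character"
    old=$(printf '%s' "$old" | sed 's/\./\\./g')
    sed -i "s#${old}#${new}#g" "$dir"/*.yaml
  done
}
```

For this lab it would be called as `rewrite_ips serviceMonitor 10.0.0.151=10.0.0.231 10.0.0.152=10.0.0.232 10.0.0.153=10.0.0.233`, followed by the same `grep ip serviceMonitor/*` check.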

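(3) Create the custom resources

kubectl apply -f setup

(4) Create the Alertmanager service

kubectl apply -f alertmanager

(5) Create the node-exporter service

kubectl apply -f node-exporter

(6) Create the Grafana service

kubectl apply -f grafana

(7) Create the Prometheus service

kubectl apply -f prometheus

(8) Create the ServiceMonitor resources

kubectl apply -f serviceMonitor

(9) Access Grafana to check the monitoring data

kubectl get svc -A | grep grafana

(10) Import dashboards to display the data

Omitted; see the figure below.

Tip:

I have provided several dashboard templates; the one imported in the figure below is the "node-exporter_rev17.json" file.

Prepare 5 machines to deploy a highly available K8S cluster from binaries: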

10.0.0.201 k8s-master01

10.0.0.202 k8s-master02

10.0.0.203 k8s-master03

10.0.0.204 k8s-node01

10.0.0.205 k8s-node02

3. K8S binary deployment

3.1 Prepare the environment for the K8S binary deployment

1. Download the software packages on all nodes

[root@k8s-master01 ~]# curl -o softwares.zip http://192.168.15.253/Kubernetes/day11-/softwares.zip

[root@k8s-master01 ~]# unzip softwares.zip

[root@k8s-master01 ~]# cd softwares

[root@k8s-master01 softwares]#

2. Install the common software packages on all nodes

# yum -y install bind-utils expect rsync wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git ntpdate

yum -y localinstall 01-Linux常用的软件包/*.rpm

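3. Configure passwordless login across the cluster and a sync script

3.1 Set the hostname; on each node, adapt the following command

hostnamectl set-hostname k8s-master01

3.2 Add the hostname resolution entries to /etc/hosts on all nodes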

cat >> /etc/hosts <<'EOF'

10.0.0.201 k8s-master01

10.0.0.202 k8s-master02

10.0.0.203 k8s-master03

10.0.0.204 k8s-node01

10.0.0.205 k8s-node02

EOF
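Appending with `cat >> /etc/hosts` adds the same entries again each time the step is repeated. A sketch of an idempotent variant that appends a line only when its hostname is not already present; `add_hosts` is a hypothetical helper, not one of the course scripts.

```shell
#!/bin/bash
# Hypothetical helper: append "IP hostname" lines to a hosts file only if the
# hostname does not already appear as a name field (field 2 onward) in that file.
add_hosts() {
  local file="$1" entry name
  shift
  for entry in "$@"; do
    name="${entry##* }"   # the last word of the entry is the hostname
    if ! awk -v n="$name" '{ for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
      echo "$entry" >> "$file"
    fi
  done
}
```

Usage: `add_hosts /etc/hosts "10.0.0.201 k8s-master01" "10.0.0.202 k8s-master02" "10.0.0.203 k8s-master03" "10.0.0.204 k8s-node01" "10.0.0.205 k8s-node02"` can be run any number of times.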

3.3 Configure passwordless login from "k8s-master01" to the other nodes

cat > password_free_login.sh <<'EOF'
#!/bin/bash
# Author: Jason Yin

# Create the key pair
ssh-keygen -t rsa -P "" -f /root/.ssh/id_rsa -q

# Server password; all nodes should use the same password, otherwise this script needs further changes
export mypasswd=123456

# Host list
k8s_host_list=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02)

# Configure passwordless login, using expect to answer the prompts non-interactively
for i in ${k8s_host_list[@]};do
  expect -c "
  spawn ssh-copy-id -i /root/.ssh/id_rsa.pub root@$i
  expect {
    \"*yes/no*\" {send \"yes\r\"; exp_continue}
    \"*password*\" {send \"$mypasswd\r\"; exp_continue}
  }"
done
EOF

bash password_free_login.sh

3.4 Write the sync script

cat > /usr/local/sbin/data_rsync.sh <<'EOF'
#!/bin/bash
# Author: Jason Yin

if [ $# -ne 1 ];then
  echo "Usage: $0 /path/to/file (absolute path)"
  exit 1
fi

if [ ! -e "$1" ];then
  echo "[ $1 ] dir or file not found!"
  exit 1
fi

fullpath=`dirname $1`
basename=`basename $1`
cd $fullpath

k8s_host_list=(k8s-master01 k8s-master02 k8s-master03 k8s-node01 k8s-node02)

for host in ${k8s_host_list[@]};do
  tput setaf 2
  echo ===== rsyncing ${host}: $basename =====
  tput setaf 7
  rsync -az $basename `whoami`@${host}:$fullpath
  if [ $? -eq 0 ];then
    echo "Command succeeded!"
  fi
done
EOF

chmod +x /usr/local/sbin/data_rsync.sh

3.5 Test that the sync script works

cp /etc/hosts /tmp/

data_rsync.sh /tmp/hosts

4. Linux base environment tuning

4.1 Disable firewalld, SELinux and NetworkManager on all nodes

systemctl disable --now firewalld

systemctl disable --now NetworkManager

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

4.2 Turn off swap on all nodes and comment out the swap entry in fstab

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab

free -h

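4.3 Synchronize time on all nodes

- Set the timezone and sync the time manually

ln -svf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

ntpdate ntp.aliyun.com

- Sync periodically with a cron job ("crontab -e")

*/5 * * * * /usr/sbin/ntpdate ntp.aliyun.com

4.4 Configure limits on all nodes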

cat >> /etc/security/limits.conf <<'EOF'

* soft nofile 655360

* hard nofile 655360

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF
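The kernel's `setrlimit()` rejects a soft limit larger than the hard limit (EINVAL), so a pair such as a soft `nofile` of 655360 with a hard `nofile` of 131072 cannot be applied as written. A small checker sketch for limits.conf-style files; `check_limits` is a hypothetical helper and only compares items that have both a soft and a hard line for the same domain.

```shell
#!/bin/bash
# Hypothetical checker: report limits.conf items whose soft value exceeds the hard value
# for the same domain and item. "unlimited" is treated as larger than any number.
check_limits() {
  awk '
    function too_big(s, h) {
      if (h == "unlimited") return 0
      if (s == "unlimited") return 1
      return (s + 0) > (h + 0)
    }
    $1 !~ /^#/ && NF >= 4 {
      key = $1 " " $3
      if ($2 == "soft") soft[key] = $4
      if ($2 == "hard") hard[key] = $4
    }
    END {
      for (k in soft)
        if ((k in hard) && too_big(soft[k], hard[k]))
          print "soft > hard: " k " (" soft[k] " > " hard[k] ")"
    }' "$1"
}
```

Running `check_limits /etc/security/limits.conf` prints nothing when every soft/hard pair is consistent.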

4.5 Tune the sshd service on all nodes

sed -i 's@#UseDNS yes@UseDNS no@g' /etc/ssh/sshd_config

sed -i 's@^GSSAPIAuthentication yes@GSSAPIAuthentication no@g' /etc/ssh/sshd_config

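- UseDNS:

When enabled, as a client logs in, the SSH server first does a reverse DNS (PTR) lookup of the client's IP address to get its hostname, then a forward (A record) lookup of that hostname to verify that it matches the original IP. This guards against client spoofing, but clients usually have dynamic IPs without PTR records, so enabling the option only wastes time; it is better turned off.

- GSSAPIAuthentication:

When this option is enabled (GSSAPIAuthentication yes), SSH logins to the server can be very slow because the server has GSSAPI enabled: during login the client has to reverse-resolve the server's IP address, and if that IP has no PTR record, the login easily hangs at this step.

4.6 Linux kernel tuning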

cat > /etc/sysctl.d/k8s.conf <<'EOF'

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv6.conf.all.disable_ipv6 = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system
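When a key appears twice in a sysctl file, the later value silently overrides the earlier one, which hides copy-paste slips in long lists like the one above. A sketch that lists duplicated keys; `dup_sysctl_keys` is a hypothetical helper.

```shell
#!/bin/bash
# Hypothetical helper: print keys that are assigned more than once in a sysctl file.
dup_sysctl_keys() {
  awk -F= '
    /^[[:space:]]*$/ || /^[[:space:]]*[#;]/ { next }   # skip blank and comment lines
    {
      key = $1
      gsub(/[[:space:]]/, "", key)                     # trim "key " -> "key"
      if (seen[key]++ == 1) print key                   # report each duplicate once
    }' "$1"
}
```

Usage: `dup_sysctl_keys /etc/sysctl.d/k8s.conf` prints nothing when every key is set only once.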

4.7 Change the terminal prompt colors

cat <<EOF >> ~/.bashrc

PS1='[\[\e[34;1m\]\u@\[\e[0m\]\[\e[32;1m\]\H\[\e[0m\]\[\e[31;1m\] \W\[\e[0m\]]# '

EOF

source ~/.bashrc

5. Upgrade the Linux kernel on all nodes

5.1 Download and install the kernel packages

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm

yum -y localinstall 02-Linux-kernel/*.rpm

5.2 Change the kernel boot order

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)"

grubby --default-kernel

5.3 Update the installed packages, but not the kernel, since it has already been upgraded to the required version

# yum -y update --exclude=kernel*

yum -y localinstall 03-Linux-yum-update/*.rpm

6. Install ipvsadm on all nodes so kube-proxy can load-balance with IPVS

6.1 Install ipvsadm and related tools

# yum -y install ipvsadm ipset sysstat conntrack libseccomp

yum -y localinstall 04-Linux-ipvsadm/*.rpm

6.2 Load the modules manually

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

6.3 Create the configuration file for the modules loaded automatically at boot

cat > /etc/modules-load.d/ipvs.conf << 'EOF'

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

6.4 Check the loaded modules. As shown in the figure above, these are the Linux 3.10.x kernel modules, not the ones we need!

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

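Tip:

In Linux kernel 4.19+, the former "nf_conntrack_ipv4" module has been merged into "nf_conntrack".

7. Reboot all nodes and check that the kernel and modules are configured correctly

7.1 Check the current kernel version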

uname -r

7.2 Check the default boot kernel

grubby --default-kernel

7.3 Reboot all nodes

reboot

7.4 Check that the IPVS kernel modules are loaded; as shown in the figure above, many more modules are now available.

lsmod | grep --color=auto -e ip_vs -e nf_conntrack
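Instead of reading the `lsmod` output by eye, the module list in `/etc/modules-load.d/ipvs.conf` can be compared with what is actually loaded. A sketch; `missing_modules` is a hypothetical helper. Modules compiled into the kernel rather than built as loadable modules do not appear in `lsmod`, so they would also be reported as missing.

```shell
#!/bin/bash
# Hypothetical helper: print the modules listed in a modules-load.d file that lsmod
# does not report as loaded. Built-in (non-loadable) modules are reported too,
# because lsmod only lists loadable modules.
missing_modules() {
  local conf="$1" loaded mod
  loaded=$(lsmod | awk 'NR > 1 { print $1 }')   # first column, without the header line
  while read -r mod || [ -n "$mod" ]; do
    case "$mod" in
      ''|'#'*|';'*) continue ;;                  # skip blank lines and comments
    esac
    if ! grep -qx -- "$mod" <<< "$loaded"; then
      echo "$mod"
    fi
  done < "$conf"
}
```

After the reboot, empty output from `missing_modules /etc/modules-load.d/ipvs.conf` means every listed module is loaded.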

7.5 Check the kernel version again

uname -r

3.2 Install the basic components

1. Deploy Docker on all nodes

1.1 Install Docker on all nodes

# yum -y install docker-ce-19.03.*

yum -y localinstall 05-Linux-docker-ce-19_03/*.rpm

1.2 Change Docker's cgroup driver to systemd and configure registry mirrors and the private registry address

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://registry.docker-cn.com","https://tuv7rqqq.mirror.aliyuncs.com"],

"log-driver": "json-file",

"log-opts": {"max-size": "200m"},

"storage-driver": "overlay2"

}

EOF

1.3 Enable Docker at boot and start it

systemctl daemon-reload && systemctl enable --now docker

systemctl status docker

docker info | grep "Cgroup Driver"

docker info | grep "Registry Mirrors" -A 2

1.4 Configure command auto-completion

# yum -y install bash-completion

source /usr/share/bash-completion/bash_completion

2. Deploy the etcd and K8S binaries

2.1 Download the K8S and etcd packages

# wget https://dl.k8s.io/v1.23.4/kubernetes-server-linux-amd64.tar.gz

wget https://dl.k8s.io/v1.23.15/kubernetes-server-linux-amd64.tar.gz

wget https://github.com/etcd-io/etcd/releases/download/v3.5.2/etcd-v3.5.2-linux-amd64.tar.gz

2.2 Extract the K8S binaries into a directory on PATH (master nodes)

# tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

tar -xf 06-etcd_k8s/kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

2.3 Extract the etcd binaries into a directory on PATH (master nodes)

# tar -xf etcd-v3.5.2-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.2-linux-amd64/etcd{,ctl}

tar -xf 06-etcd_k8s/etcd-v3.5.2-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.5.2-linux-amd64/etcd{,ctl}

2.4 Send the components to the other nodes

MasterNodes='k8s-master02 k8s-master03'

WorkNodes='k8s-node01 k8s-node02'

for NODE in $MasterNodes; do echo $NODE; scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $NODE:/usr/local/bin/; scp /usr/local/bin/etcd* $NODE:/usr/local/bin/; done

for NODE in $WorkNodes; do scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/ ; done

2.5 Check the Kubernetes component versions

kube-apiserver --version

kube-controller-manager --version

kube-scheduler --version

etcdctl version

kubelet --version

kube-proxy --version

kubectl version --client

2.6 Create the working directory on all nodes

mkdir -p /opt/cni/bin

2.7 Check out the branch that matches the K8S version being deployed

git clone https://github.com/dotbalo/k8s-ha-install.git

cd k8s-ha-install/

git checkout manual-installation-v1.23.x

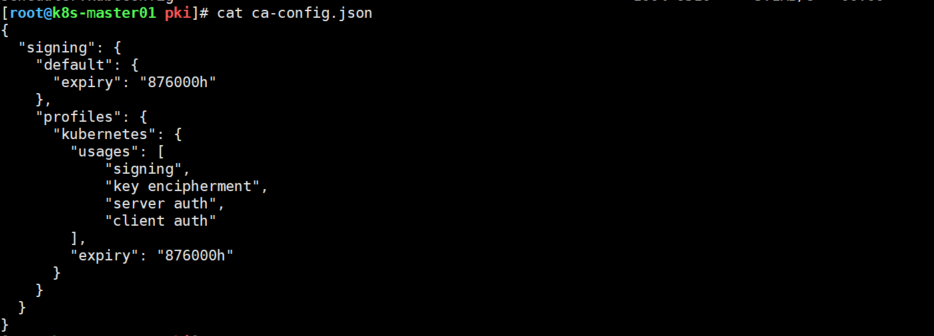

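3.3 Generate the K8S cluster certificate files

1. Download the certificate management tools on k8s-master01

1.1 Download the certificate management tools on k8s-master01 (they can be downloaded in advance and handed out)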

# wget "https://pkg.cfssl.org/R1.2/cfssl_linux-amd64" -O /usr/local/bin/cfssl

# wget "https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64" -O /usr/local/bin/cfssljson

# chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

cp 08-cfssl/* /usr/local/bin

chmod +x /usr/local/bin/{cfssl,cfssljson}

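1.2 Create the etcd certificate directory on all master nodes

mkdir /etc/etcd/ssl -p

1.3 Create the Kubernetes directories on all nodes

mkdir -p /etc/kubernetes/pki

2. Generate the etcd certificates on k8s-master01

2.1 Generate the etcd CA certificate and the CA key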

# cd /root/k8s-ha-install/pki

# cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

cd 07-k8s-ha-install/pki/

cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

2.2 Issue the etcd certificate

cfssl gencert \

-ca=/etc/etcd/ssl/etcd-ca.pem \

-ca-key=/etc/etcd/ssl/etcd-ca-key.pem \

-config=ca-config.json \

-hostname=127.0.0.1,k8s-master01,k8s-master02,k8s-master03,10.0.0.201,10.0.0.202,10.0.0.203 \

-profile=kubernetes \

etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd

2.3 Copy the certificates to the other master nodes

MasterNodes='k8s-master02 k8s-master03'

for NODE in $MasterNodes; do

ssh $NODE "mkdir -p /etc/etcd/ssl"

for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do

scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE}

done

done

3. Certificates for the k8s apiserver component

3.1 Generate the Kubernetes CA certificate

# cd /root/k8s-ha-install/pki

# cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

cd /root/softwares/07-k8s-ha-install/pki/

cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

3.2 Generate the apiserver certificate

cfssl gencert -ca=/etc/kubernetes/pki/ca.pem -ca-key=/etc/kubernetes/pki/ca-key.pem -config=ca-config.json -hostname=10.96.0.1,10.0.0.222,127.0.0.1,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.cluster,kubernetes.default.svc.cluster.local,10.0.0.201,10.0.0.202,10.0.0.203 -profile=kubernetes apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver

3.3 Generate the apiserver aggregation-layer (front-proxy) certificates

cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca

cfssl gencert -ca=/etc/kubernetes/pki/front-proxy-ca.pem -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem -config=ca-config.json -profile=kubernetes front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

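Tip:

(1) "10.96.0.0" is the k8s Service network; if you change the Service network, "10.96.0.1" must be changed accordingly;

(2) If this is not an HA cluster, replace 10.0.0.222 with Master01's IP; here 10.0.0.222 is the HA VIP;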
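The `-hostname` list of the apiserver certificate must cover every address and name clients use to reach the apiserver: the first IP of the Service network (the ClusterIP of the `kubernetes` Service), the VIP, loopback, the `kubernetes.default...` DNS names and each master IP. A sketch that assembles the list used in step 3.2; both helper names are hypothetical, `first_service_ip` only handles network addresses ending in `.0` (it does no general CIDR arithmetic), and the `/12` prefix in the usage line is an assumption, since the text names only 10.96.0.0.

```shell
#!/bin/bash
# Hypothetical helper: first usable IP of a network given as A.B.C.0 or A.B.C.0/prefix.
# Only increments the last octet, so it assumes the network address ends in .0.
first_service_ip() {
  local net="${1%/*}"                      # drop an optional /prefix
  echo "${net%.*}.$(( ${net##*.} + 1 ))"
}

# Hypothetical helper: comma-separated SAN list for the apiserver certificate.
# Usage: apiserver_sans SERVICE_NET VIP MASTER_IP...
apiserver_sans() {
  local svc_net="$1" vip="$2"
  shift 2
  local names=(
    "$(first_service_ip "$svc_net")" "$vip" 127.0.0.1
    kubernetes kubernetes.default kubernetes.default.svc
    kubernetes.default.svc.cluster kubernetes.default.svc.cluster.local
    "$@"
  )
  local IFS=,
  echo "${names[*]}"                       # join the array with commas
}

apiserver_sans 10.96.0.0/12 10.0.0.222 10.0.0.201 10.0.0.202 10.0.0.203
```

With these arguments it prints the same list that is passed to `-hostname` in step 3.2.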

4. Certificates for the k8s controller-manager component

4.1 Generate the controller-manager certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

# Note: if this is not an HA cluster, change 10.0.0.222:6443 to master01's address; 6443 is the apiserver port (6443 by default)

# set-cluster: define a cluster entry

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://10.0.0.222:6443 \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-credentials: define a user entry

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/etc/kubernetes/pki/controller-manager.pem \

--client-key=/etc/kubernetes/pki/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# set-context: define a context

kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

# use-context: make this context the default

kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

5. Certificates for the k8s scheduler component

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

# Note: if this is not an HA cluster, change 10.0.0.222:6443 to master01's address; 6443 is the apiserver port (6443 by default)

kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://10.0.0.222:6443 \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \

--client-certificate=/etc/kubernetes/pki/scheduler.pem \

--client-key=/etc/kubernetes/pki/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

6. Generate the admin certificate

cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

# Note: if this is not an HA cluster, change 10.0.0.222:6443 to master01's address; 6443 is the apiserver port (6443 by default)

kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.222:6443 --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-credentials kubernetes-admin --client-certificate=/etc/kubernetes/pki/admin.pem --client-key=/etc/kubernetes/pki/admin-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config set-context kubernetes-admin@kubernetes --cluster=kubernetes --user=kubernetes-admin --kubeconfig=/etc/kubernetes/admin.kubeconfig

kubectl config use-context kubernetes-admin@kubernetes --kubeconfig=/etc/kubernetes/admin.kubeconfig

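Tip:

admin.kubeconfig, scheduler.kubeconfig and controller-manager.kubeconfig were all generated with the same commands, so how are they told apart?

By the identity in each client certificate: Kubernetes takes the certificate's CN as the user name and its O as the group. The admin certificate defines the user admin in the system:masters group. When k8s is installed it creates a ClusterRole (cluster-admin) with the highest cluster-wide privileges, together with a ClusterRoleBinding that binds this role to the system:masters group, so every user in that group has full control of the cluster.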
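Since the three kubeconfig files differ only in the user name, the certificate files and the output path, the four `kubectl config` calls can be wrapped in one function. A sketch; `make_kubeconfig` is a hypothetical helper, and its default server `https://10.0.0.222:6443` is the VIP address used above.

```shell
#!/bin/bash
# Hypothetical helper wrapping the four "kubectl config" calls used above.
# Usage: make_kubeconfig USER CERT_NAME KUBECONFIG [SERVER]
# CERT_NAME selects /etc/kubernetes/pki/<CERT_NAME>.pem and <CERT_NAME>-key.pem.
make_kubeconfig() {
  local user="$1" cert="$2" kubeconfig="$3"
  local server="${4:-https://10.0.0.222:6443}"   # VIP:port used in this document
  local pki=/etc/kubernetes/pki

  kubectl config set-cluster kubernetes \
    --certificate-authority="$pki/ca.pem" \
    --embed-certs=true \
    --server="$server" \
    --kubeconfig="$kubeconfig" || return
  kubectl config set-credentials "$user" \
    --client-certificate="$pki/$cert.pem" \
    --client-key="$pki/$cert-key.pem" \
    --embed-certs=true \
    --kubeconfig="$kubeconfig" || return
  kubectl config set-context "$user@kubernetes" \
    --cluster=kubernetes \
    --user="$user" \
    --kubeconfig="$kubeconfig" || return
  kubectl config use-context "$user@kubernetes" --kubeconfig="$kubeconfig"
}
```

The three files would then be `make_kubeconfig system:kube-controller-manager controller-manager /etc/kubernetes/controller-manager.kubeconfig`, `make_kubeconfig system:kube-scheduler scheduler /etc/kubernetes/scheduler.kubeconfig` and `make_kubeconfig kubernetes-admin admin /etc/kubernetes/admin.kubeconfig`.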

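7. Create the ServiceAccount key

7.1 ServiceAccount is an authentication mechanism in k8s. Creating a ServiceAccount also creates a bound Secret that contains a token; the key pair below is used to sign (sa.key) and verify (sa.pub) these tokens.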

openssl genrsa -out /etc/kubernetes/pki/sa.key 2048

openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub

7.2 Send the certificates to the other master nodes

for NODE in k8s-master02 k8s-master03; do
  for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); do
    scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE}
  done
  for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; do
    scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE}
  done
done

7.3 Check the validity period of the CA certificate

As shown in the figure above, the certificate validity here is set to 100 years.
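The validity period can also be read on the command line. A sketch, assuming `openssl` and GNU `date` are available; `cert_days_left` is a hypothetical helper that parses the certificate's `notAfter` field.

```shell
#!/bin/bash
# Hypothetical helper: print the number of whole days until a PEM certificate expires.
# Requires openssl and GNU date (date -d parses the notAfter timestamp).
cert_days_left() {
  local end
  end=$(openssl x509 -in "$1" -noout -enddate) || return
  end="${end#notAfter=}"                     # e.g. "Jan  1 00:00:00 2124 GMT"
  echo $(( ( $(date -d "$end" +%s) - $(date +%s) ) / 86400 ))
}
```

For a freshly issued 100-year CA, `cert_days_left /etc/kubernetes/pki/ca.pem` should print a value close to 36500; `openssl x509 -in /etc/kubernetes/pki/ca.pem -noout -dates` shows the raw notBefore/notAfter dates.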

3.4 Binary HA and etcd configuration

1. Create the configuration files

1.1 Configuration file for k8s-master01

cat > /etc/etcd/etcd.config.yml <<'EOF'
name: 'k8s-master01'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://10.0.0.201:2380'
listen-client-urls: 'https://10.0.0.201:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://10.0.0.201:2380'
advertise-client-urls: 'https://10.0.0.201:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'k8s-master01=https://10.0.0.201:2380,k8s-master02=https://10.0.0.202:2380,k8s-master03=https://10.0.0.203:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

1.2 Configuration file for k8s-master02

cat > /etc/etcd/etcd.config.yml << 'EOF'
name: 'k8s-master02'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://10.0.0.202:2380'
listen-client-urls: 'https://10.0.0.202:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://10.0.0.202:2380'
advertise-client-urls: 'https://10.0.0.202:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'k8s-master01=https://10.0.0.201:2380,k8s-master02=https://10.0.0.202:2380,k8s-master03=https://10.0.0.203:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF

1.3 Configuration file for k8s-master03

cat > /etc/etcd/etcd.config.yml << 'EOF'
name: 'k8s-master03'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://10.0.0.203:2380'
listen-client-urls: 'https://10.0.0.203:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://10.0.0.203:2380'
advertise-client-urls: 'https://10.0.0.203:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: 'k8s-master01=https://10.0.0.201:2380,k8s-master02=https://10.0.0.202:2380,k8s-master03=https://10.0.0.203:2380'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF
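The three etcd.config.yml files differ only in the member name and its IP address. A sketch of a generator that prints the same content for any master; `gen_etcd_config` and the `ETCD_INITIAL_CLUSTER` variable are hypothetical names, and all values are copied from the configs above.

```shell
#!/bin/bash
# Hypothetical generator for /etc/etcd/etcd.config.yml. Only the member name and IP
# differ between k8s-master01/02/03; every other value is copied from the configs above.
ETCD_INITIAL_CLUSTER='k8s-master01=https://10.0.0.201:2380,k8s-master02=https://10.0.0.202:2380,k8s-master03=https://10.0.0.203:2380'

gen_etcd_config() {
  local name="$1" ip="$2"
  # Unquoted EOF: ${name}, ${ip} and ${ETCD_INITIAL_CLUSTER} are expanded by the shell
  cat <<EOF
name: '${name}'
data-dir: /var/lib/etcd
wal-dir: /var/lib/etcd/wal
snapshot-count: 5000
heartbeat-interval: 100
election-timeout: 1000
quota-backend-bytes: 0
listen-peer-urls: 'https://${ip}:2380'
listen-client-urls: 'https://${ip}:2379,http://127.0.0.1:2379'
max-snapshots: 3
max-wals: 5
cors:
initial-advertise-peer-urls: 'https://${ip}:2380'
advertise-client-urls: 'https://${ip}:2379'
discovery:
discovery-fallback: 'proxy'
discovery-proxy:
discovery-srv:
initial-cluster: '${ETCD_INITIAL_CLUSTER}'
initial-cluster-token: 'etcd-k8s-cluster'
initial-cluster-state: 'new'
strict-reconfig-check: false
enable-v2: true
enable-pprof: true
proxy: 'off'
proxy-failure-wait: 5000
proxy-refresh-interval: 30000
proxy-dial-timeout: 1000
proxy-write-timeout: 5000
proxy-read-timeout: 0
client-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
peer-transport-security:
  cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
  key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'
  peer-client-cert-auth: true
  trusted-ca-file: '/etc/kubernetes/pki/etcd/etcd-ca.pem'
  auto-tls: true
debug: false
log-package-levels:
log-outputs: [default]
force-new-cluster: false
EOF
}
```

On each master the file would be written with, for example, `gen_etcd_config k8s-master02 10.0.0.202 > /etc/etcd/etcd.config.yml`.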

2. Start the service on all master nodes

2.1 Create the systemd unit file

cat > /usr/lib/systemd/system/etcd.service <<'EOF'

[Unit]

Description=Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/etc/etcd/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

EOF

2.2 Start the service

mkdir /etc/kubernetes/pki/etcd

ln -s /etc/etcd/ssl/* /etc/kubernetes/pki/etcd/

systemctl daemon-reload

systemctl enable --now etcd

systemctl status etcd

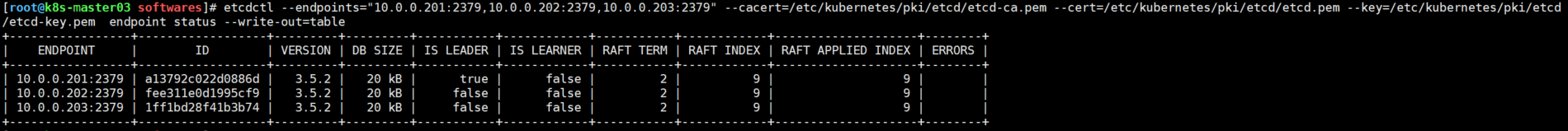

2.3 Check the etcd status

etcdctl --endpoints="10.0.0.201:2379,10.0.0.202:2379,10.0.0.203:2379" --cacert=/etc/kubernetes/pki/etcd/etcd-ca.pem --cert=/etc/kubernetes/pki/etcd/etcd.pem --key=/etc/kubernetes/pki/etcd/etcd-key.pem endpoint status --write-out=table

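3.5 High-availability configuration (haproxy + keepalived)

1. Install keepalived and haproxy on all nodes (k8s-master0[1-3])

# Online installation:
# yum -y install keepalived haproxy
# Offline installation from the local RPM packages:
yum -y localinstall 09-keepalive-haproxy/*.rpm

2. Configure haproxy on all nodes (k8s-master0[1-3]); the configuration file is identical on every node

2.1 Back up the configuration file

cp /etc/haproxy/haproxy.cfg{,.$(date +%F)}

2.2 Write the configuration file (same content on all nodes)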

cat > /etc/haproxy/haproxy.cfg <<'EOF'
global
    maxconn 2000
    ulimit-n 16384
    log 127.0.0.1 local0 err
    stats timeout 30s

defaults
    log global
    mode http
    option httplog
    timeout connect 5000
    timeout client 50000
    timeout server 50000
    timeout http-request 15s
    timeout http-keep-alive 15s

frontend monitor-in
    bind *:33305
    mode http
    option httplog
    monitor-uri /monitor

frontend k8s-master
    bind 0.0.0.0:16443
    bind 127.0.0.1:16443
    mode tcp
    option tcplog
    tcp-request inspect-delay 5s
    default_backend k8s-master

backend k8s-master
    mode tcp
    option tcplog
    option tcp-check
    balance roundrobin
    default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100
    server k8s-master01 10.0.0.201:6443 check
    server k8s-master02 10.0.0.202:6443 check
    server k8s-master03 10.0.0.203:6443 check
EOF

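3. Configure keepalived on all nodes (k8s-master0[1-3]); the configuration file differs per node (here only mcast_src_ip differs)

3.1 Back up the configuration file

cp /etc/keepalived/keepalived.conf{,.$(date +%F)}

3.2 Create the configuration file on "k8s-master01"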

cat > /etc/keepalived/keepalived.conf <<'EOF'
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
    script_user root
    enable_script_security
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state MASTER
    interface eth0
    mcast_src_ip 10.0.0.201
    virtual_router_id 51
    priority 101
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        10.0.0.222
    }
    track_script {
        chk_apiserver
    }
}
EOF

3.3 Create the configuration file on "k8s-master02"

cat > /etc/keepalived/keepalived.conf <<'EOF'
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
    script_user root
    enable_script_security
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state MASTER
    interface eth0
    mcast_src_ip 10.0.0.202
    virtual_router_id 51
    priority 101
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        10.0.0.222
    }
    track_script {
        chk_apiserver
    }
}
EOF

3.4 Create the configuration file on "k8s-master03"

cat > /etc/keepalived/keepalived.conf <<'EOF'
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
    script_user root
    enable_script_security
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 5
    weight -5
    fall 2
    rise 1
}
vrrp_instance VI_1 {
    state MASTER
    interface eth0
    mcast_src_ip 10.0.0.203
    virtual_router_id 51
    priority 101
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        10.0.0.222
    }
    track_script {
        chk_apiserver
    }
}
EOF

3.5 Configure the keepalived health-check script on all nodes (k8s-master0[1-3])

3.5.1 Create the check script

cat > /etc/keepalived/check_apiserver.sh <<'EOF'
#!/bin/bash
# Check up to 3 times (1 s apart) whether haproxy is running.
# If it never is, stop keepalived so that the VIP fails over to another master.
err=0
for k in $(seq 1 3); do
    check_code=$(pgrep haproxy)
    if [[ $check_code == "" ]]; then
        err=$((err + 1))
        sleep 1
        continue
    else
        err=0
        break
    fi
done

if [[ $err != "0" ]]; then
    echo "systemctl stop keepalived"
    /usr/bin/systemctl stop keepalived
    exit 1
else
    exit 0
fi
EOF

3.5.2 Make the script executable

chmod +x /etc/keepalived/check_apiserver.sh

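Tips:

(1) keepalived provides a virtual IP (VIP) that is bound to one of the master nodes. haproxy listens on port 16443 and reverse-proxies to the API servers on the three master nodes, so the API server is reachable at the VIP on port 16443.

(2) The health check verifies that haproxy is running. If three consecutive checks fail, the script stops keepalived, and the VIP then fails over to another master node.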
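Which master ends up holding the VIP follows VRRP's election rule: the highest effective priority wins, and on a priority tie the node with the higher primary IP address wins. A minimal model of that rule, assuming `weight -5` is subtracted from a node's priority while its track script is failing, and leaving out any node whose keepalived has been stopped by the check script. The function names are hypothetical, and this is an illustration, not keepalived's code:

```shell
#!/usr/bin/env bash
# Hypothetical model of the VRRP election performed by keepalived.
# Each argument is "name:ip:base_priority:healthy" (healthy = 1 or 0).
# A failing track_script adds WEIGHT (default -5) to the base priority.

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

vip_owner() {
    local weight=${WEIGHT:--5}
    local best_name="" best_prio=-1 best_ip=-1
    local entry name ip prio healthy ipn
    for entry in "$@"; do
        IFS=: read -r name ip prio healthy <<< "$entry"
        (( healthy == 1 )) || prio=$(( prio + weight ))
        ipn=$(ip_to_int "$ip")
        # Highest priority wins; on a tie, the higher primary IP wins.
        if (( prio > best_prio || (prio == best_prio && ipn > best_ip) )); then
            best_name=$name best_prio=$prio best_ip=$ipn
        fi
    done
    echo "$best_name"
}

# Three nodes with the same priority (101), all healthy:
vip_owner k8s-master01:10.0.0.201:101:1 k8s-master02:10.0.0.202:101:1 k8s-master03:10.0.0.203:101:1
```

If all three nodes use the same priority, the model predicts that the node with the highest IP (k8s-master03) holds 10.0.0.222. Giving one node a strictly higher priority makes the owner deterministic.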

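4. Start the services on all master nodes

4.1 Start haproxy

systemctl daemon-reload
systemctl enable --now haproxy

4.2 Start keepalived

systemctl enable --now keepalived

4.3 Check the VIP (10.0.0.222 should be bound to eth0 on one of the masters), as shown in the figure above

ip a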

3.6 Binary deployment of the K8s master components

1. Start the kube-apiserver service on all nodes (k8s-master0[1-3])

1.1 Create the working directories on all nodes (k8s-master0[1-3])

mkdir -p /etc/kubernetes/manifests/ /etc/systemd/system/kubelet.service.d /var/lib/kubelet /var/log/kubernetes

1.2 Create the unit file on k8s-master01

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
      --v=2 \
      --logtostderr=true \
      --allow-privileged=true \
      --bind-address=0.0.0.0 \
      --secure-port=6443 \
      --insecure-port=0 \
      --advertise-address=10.0.0.201 \
      --service-cluster-ip-range=10.96.0.0/12 \
      --service-node-port-range=3000-50000 \
      --etcd-servers=https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379 \
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
      --client-ca-file=/etc/kubernetes/pki/ca.pem \
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
      --authorization-mode=Node,RBAC \
      --enable-bootstrap-token-auth=true \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \
      --requestheader-allowed-names=aggregator \
      --requestheader-group-headers=X-Remote-Group \
      --requestheader-extra-headers-prefix=X-Remote-Extra- \
      --requestheader-username-headers=X-Remote-User
# --token-auth-file=/etc/kubernetes/token.csv
Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF

1.3 Create the unit file on k8s-master02

cat > /usr/lib/systemd/system/kube-apiserver.service <<'EOF'
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
      --v=2 \
      --logtostderr=true \
      --allow-privileged=true \
      --bind-address=0.0.0.0 \
      --secure-port=6443 \
      --insecure-port=0 \
      --advertise-address=10.0.0.202 \
      --service-cluster-ip-range=10.96.0.0/12 \
      --service-node-port-range=3000-50000 \
      --etcd-servers=https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379 \
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
      --client-ca-file=/etc/kubernetes/pki/ca.pem \
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
      --authorization-mode=Node,RBAC \
      --enable-bootstrap-token-auth=true \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \
      --requestheader-allowed-names=aggregator \
      --requestheader-group-headers=X-Remote-Group \
      --requestheader-extra-headers-prefix=X-Remote-Extra- \
      --requestheader-username-headers=X-Remote-User
# --token-auth-file=/etc/kubernetes/token.csv
Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF

1.4 Create the unit file on k8s-master03

cat > /usr/lib/systemd/system/kube-apiserver.service << 'EOF'
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-apiserver \
      --v=2 \
      --logtostderr=true \
      --allow-privileged=true \
      --bind-address=0.0.0.0 \
      --secure-port=6443 \
      --insecure-port=0 \
      --advertise-address=10.0.0.203 \
      --service-cluster-ip-range=10.96.0.0/12 \
      --service-node-port-range=3000-50000 \
      --etcd-servers=https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379 \
      --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem \
      --etcd-certfile=/etc/etcd/ssl/etcd.pem \
      --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
      --client-ca-file=/etc/kubernetes/pki/ca.pem \
      --tls-cert-file=/etc/kubernetes/pki/apiserver.pem \
      --tls-private-key-file=/etc/kubernetes/pki/apiserver-key.pem \
      --kubelet-client-certificate=/etc/kubernetes/pki/apiserver.pem \
      --kubelet-client-key=/etc/kubernetes/pki/apiserver-key.pem \
      --service-account-key-file=/etc/kubernetes/pki/sa.pub \
      --service-account-signing-key-file=/etc/kubernetes/pki/sa.key \
      --service-account-issuer=https://kubernetes.default.svc.cluster.local \
      --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \
      --authorization-mode=Node,RBAC \
      --enable-bootstrap-token-auth=true \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.pem \
      --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client-key.pem \
      --requestheader-allowed-names=aggregator \
      --requestheader-group-headers=X-Remote-Group \
      --requestheader-extra-headers-prefix=X-Remote-Extra- \
      --requestheader-username-headers=X-Remote-User
# --token-auth-file=/etc/kubernetes/token.csv
Restart=on-failure
RestartSec=10s
LimitNOFILE=65535

[Install]
WantedBy=multi-user.target
EOF
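The three kube-apiserver unit files above differ only in `--advertise-address`. A minimal sketch that prints a copy of one node's unit file with that flag set to another node's IP, so the file only has to be maintained once. The helper name `derive_unit` is hypothetical, and the commented loop assumes passwordless SSH between the masters:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print unit file SRC with --advertise-address set to IP.
derive_unit() {
    local src=$1 ip=$2
    sed -E "s/--advertise-address=[0-9.]+/--advertise-address=${ip}/" "$src"
}

# Example (run on k8s-master01 after writing its own unit file):
# for pair in k8s-master02:10.0.0.202 k8s-master03:10.0.0.203; do
#     derive_unit /usr/lib/systemd/system/kube-apiserver.service "${pair#*:}" \
#         | ssh "${pair%%:*}" 'cat > /usr/lib/systemd/system/kube-apiserver.service'
# done
```

Rendering from one source avoids the three copies drifting apart when a flag is later changed on only one master.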

1.5 Start the service

systemctl daemon-reload && systemctl enable --now kube-apiserver && systemctl status kube-apiserver

2. Start the kube-controller-manager service on all nodes (k8s-master0[1-3])

2.1 Create the unit file on all nodes

cat > /usr/lib/systemd/system/kube-controller-manager.service << 'EOF'
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-controller-manager \
      --v=2 \
      --logtostderr=true \
      --address=127.0.0.1 \
      --root-ca-file=/etc/kubernetes/pki/ca.pem \
      --cluster-signing-cert-file=/etc/kubernetes/pki/ca.pem \
      --cluster-signing-key-file=/etc/kubernetes/pki/ca-key.pem \
      --service-account-private-key-file=/etc/kubernetes/pki/sa.key \
      --kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \
      --leader-elect=true \
      --use-service-account-credentials=true \
      --node-monitor-grace-period=40s \
      --node-monitor-period=5s \
      --pod-eviction-timeout=2m0s \
      --controllers=*,bootstrapsigner,tokencleaner \
      --allocate-node-cidrs=true \
      --cluster-cidr=172.16.0.0/12 \
      --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
      --node-cidr-mask-size=24
Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF
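With `--allocate-node-cidrs=true`, the controller manager carves a per-node Pod subnet of size `--node-cidr-mask-size` out of `--cluster-cidr`. The capacity arithmetic is simple enough to sketch (the function names are hypothetical):

```shell
#!/usr/bin/env bash
# Number of per-node subnets that fit in CLUSTER_CIDR when each node gets a /MASK.
node_subnet_count() {
    local cluster_prefix=${1#*/} mask=$2
    echo $(( 1 << (mask - cluster_prefix) ))
}

# Number of addresses in one per-node subnet of size /MASK.
addresses_per_node() {
    echo $(( 1 << (32 - $1) ))
}

node_subnet_count 172.16.0.0/12 24   # 4096 node subnets
addresses_per_node 24                # 256 addresses per node
```

With the values above, the cluster could allocate at most 4096 node subnets of 256 addresses each. That is well above the kubelet's `maxPods: 110` set later in this section.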

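2.2 Start the service and check its status, as shown in the figure above

systemctl daemon-reload
systemctl enable --now kube-controller-manager
systemctl status kube-controller-manager

3. Start the kube-scheduler service on all nodes (k8s-master0[1-3])

3.1 Create the unit file on all nodes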

cat > /usr/lib/systemd/system/kube-scheduler.service <<'EOF'
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-scheduler \
      --v=2 \
      --logtostderr=true \
      --address=127.0.0.1 \
      --leader-elect=true \
      --kubeconfig=/etc/kubernetes/scheduler.kubeconfig
Restart=always
RestartSec=10s

[Install]
WantedBy=multi-user.target
EOF

3.2 Start the service and check its status, as shown in the figure above

systemctl daemon-reload
systemctl enable --now kube-scheduler
systemctl status kube-scheduler

4. Check that the components are healthy

[root@k8s-master01 pki]# kubectl get cs --kubeconfig=/etc/kubernetes/admin.kubeconfig
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS    MESSAGE                         ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-1               Healthy   {"health":"true","reason":""}
etcd-0               Healthy   {"health":"true","reason":""}
etcd-2               Healthy   {"health":"true","reason":""}
[root@k8s-master01 pki]#
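`kubectl get cs` is deprecated (see the warning above), but its output is still easy to check from a script. A minimal sketch that reads that output on stdin and exits non-zero if any component is not `Healthy` or if no component rows were read. The function name `check_cs` is hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "kubectl get cs" output on stdin and fail
# if any component's STATUS column is not "Healthy" (or if no rows were read).
check_cs() {
    awk '
        $1 == "NAME" || $1 ~ /^Warning:/ || NF == 0 { next }   # header, warnings, blanks
        { total++ }
        $2 != "Healthy" { print "unhealthy: " $1; bad++ }
        END { exit (bad > 0 || total == 0) }
    '
}

# Example:
# kubectl get cs --kubeconfig=/etc/kubernetes/admin.kubeconfig 2>/dev/null | check_cs && echo "all components healthy"
```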

3.7 Create the bootstrapping configuration for automatic certificate issuance

1. On k8s-master01, create the bootstrap-kubelet.kubeconfig file

# cd /root/k8s-ha-install/bootstrap
cd 07-k8s-ha-install/bootstrap/
kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.222:6443 --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig
kubectl config set-credentials tls-bootstrap-token-user --token=c8ad9c.2e4d610cf3e7426e --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig
kubectl config set-context tls-bootstrap-token-user@kubernetes --cluster=kubernetes --user=tls-bootstrap-token-user --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig
kubectl config use-context tls-bootstrap-token-user@kubernetes --kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig

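Tips:

"bootstrap-kubelet.kubeconfig" is the file the kubelet uses to request its certificate from the apiserver. If you change the token-id and token-secret in bootstrap.secret.yaml, keep the new values consistent. The token ID (c8ad9c here) must be the same everywhere it appears and keep the same length. The token c8ad9c.2e4d610cf3e7426e in the command above must also be replaced with the same new string. A bootstrap token is a 6-character token ID and a 16-character secret joined by a dot, using only lowercase letters and digits.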

2. Copy the admin kubeconfig on all master nodes

mkdir -p /root/.kube ; cp /etc/kubernetes/admin.kubeconfig /root/.kube/config

3. Create the bootstrap resources

kubectl create -f bootstrap.secret.yaml
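If you replace the bootstrap token, the new one must follow the format described in the tip about bootstrap-kubelet.kubeconfig. A minimal sketch that generates and validates such a token and prints the `bootstrap-token-<token-id>` Secret name Kubernetes expects for it. All function names are hypothetical, and the sketch assumes GNU coreutils `od` and `/dev/urandom`; `kubeadm token generate` does the same job if kubeadm is installed:

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not part of the original course material).

# Print N random bytes as lowercase hex, i.e. 2*N characters from [0-9a-f].
random_hex() {
    od -An -N"$1" -tx1 /dev/urandom | tr -d ' \n'
}

# A bootstrap token is "<token-id>.<token-secret>":
# a 6-character ID and a 16-character secret, lowercase letters and digits only.
new_bootstrap_token() {
    printf '%s.%s\n' "$(random_hex 3)" "$(random_hex 8)"
}

# Succeed if $1 has the bootstrap token format.
is_valid_bootstrap_token() {
    [[ $1 =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

TOKEN=$(new_bootstrap_token)
TOKEN_ID=${TOKEN%%.*}
TOKEN_SECRET=${TOKEN#*.}
echo "token:        $TOKEN"
echo "token-id:     $TOKEN_ID (Secret name: bootstrap-token-$TOKEN_ID)"
echo "token-secret: $TOKEN_SECRET"
```

The same TOKEN value would then go into both bootstrap.secret.yaml and the `kubectl config set-credentials ... --token=` command.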

3.8 Deploy the nodes

1. Copy the certificates

cd /etc/kubernetes/
for NODE in k8s-master02 k8s-master03 k8s-node01 k8s-node02; do
    ssh $NODE mkdir -p /etc/kubernetes/pki /etc/etcd/ssl
    for FILE in etcd-ca.pem etcd.pem etcd-key.pem; do
        scp /etc/etcd/ssl/$FILE $NODE:/etc/etcd/ssl/
    done
    for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig; do
        scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}
    done
done

Tips:

The nodes obtain their certificates through automatic issuance (TLS bootstrapping).

2. Configure kubelet

2.1 Create the working directories on all nodes

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

2.2 Configure the kubelet service on all nodes

cat > /usr/lib/systemd/system/kubelet.service <<'EOF'
[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/kubernetes/kubernetes
After=docker.service
Requires=docker.service

[Service]
ExecStart=/usr/local/bin/kubelet
Restart=always
StartLimitInterval=0
RestartSec=10

[Install]
WantedBy=multi-user.target
EOF

2.3 Configure the kubelet service drop-in file on all nodes

cat > /etc/systemd/system/kubelet.service.d/10-kubelet.conf <<'EOF'
[Service]
Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig --kubeconfig=/etc/kubernetes/kubelet.kubeconfig"
Environment="KUBELET_SYSTEM_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"
Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2"
Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "
# The empty ExecStart= clears the ExecStart inherited from kubelet.service before redefining it.
ExecStart=
ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS
EOF

2.4 Create the kubelet configuration file on all nodes

cat > /etc/kubernetes/kubelet-conf.yml <<'EOF'
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/pki/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
cgroupDriver: systemd
cgroupsPerQOS: true
clusterDNS:
- 10.96.0.10
clusterDomain: oldboyedu.com
containerLogMaxFiles: 5
containerLogMaxSize: 10Mi
contentType: application/vnd.kubernetes.protobuf
cpuCFSQuota: true
cpuManagerPolicy: none
cpuManagerReconcilePeriod: 10s
enableControllerAttachDetach: true
enableDebuggingHandlers: true
enforceNodeAllocatable:
- pods
eventBurst: 10
eventRecordQPS: 5
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
evictionPressureTransitionPeriod: 5m0s
failSwapOn: true
fileCheckFrequency: 20s
hairpinMode: promiscuous-bridge
healthzBindAddress: 127.0.0.1
healthzPort: 10248
httpCheckFrequency: 20s
imageGCHighThresholdPercent: 85
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 2m0s
iptablesDropBit: 15
iptablesMasqueradeBit: 14
kubeAPIBurst: 10
kubeAPIQPS: 5
makeIPTablesUtilChains: true
maxOpenFiles: 1000000
maxPods: 110
nodeStatusUpdateFrequency: 10s
oomScoreAdj: -999
podPidsLimit: -1
registryBurst: 10
registryPullQPS: 5
resolvConf: /etc/resolv.conf
rotateCertificates: true
runtimeRequestTimeout: 2m0s
serializeImagePulls: true
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
volumeStatsAggPeriod: 1m0s
EOF

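2.5 Start kubelet on all nodes

systemctl daemon-reload
systemctl enable --now kubelet
systemctl status kubelet

2.6 On k8s-master01 (10.0.0.201), view the node information, as shown in the figure above. Until the network plugin is deployed in 3.9, the nodes normally report NotReady.

kubectl get nodes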

3. Configure kube-proxy

3.1 On "k8s-master01", generate the "/etc/kubernetes/kube-proxy.kubeconfig" file

# cd /root/k8s-ha-install
cd 07-k8s-ha-install/
kubectl -n kube-system create serviceaccount kube-proxy
kubectl create clusterrolebinding system:kube-proxy --clusterrole system:node-proxier --serviceaccount kube-system:kube-proxy
# The next two commands rely on the token Secret that Kubernetes creates automatically for a
# ServiceAccount; starting with v1.24 that Secret is no longer created automatically.
SECRET=$(kubectl -n kube-system get sa/kube-proxy \
    --output=jsonpath='{.secrets[0].name}')
JWT_TOKEN=$(kubectl -n kube-system get secret/$SECRET \
    --output=jsonpath='{.data.token}' | base64 -d)
PKI_DIR=/etc/kubernetes/pki
K8S_DIR=/etc/kubernetes
kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://10.0.0.222:6443 --kubeconfig=${K8S_DIR}/kube-proxy.kubeconfig
kubectl config set-credentials kubernetes --token=${JWT_TOKEN} --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig
kubectl config set-context kubernetes --cluster=kubernetes --user=kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig
kubectl config use-context kubernetes --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig

3.2 On "k8s-master01", copy kube-proxy.kubeconfig to the other nodes

for NODE in k8s-master02 k8s-master03 k8s-node01 k8s-node02; do
    scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig
done

3.3 Create the kube-proxy configuration files on all nodes

cat > /etc/kubernetes/kube-proxy.conf << EOF
KUBE_PROXY_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/var/log/kubernetes/ \\
--config=/etc/kubernetes/kube-proxy-config.yml"
EOF

# Remember to set "hostnameOverride" to each node's own hostname
cat > /etc/kubernetes/kube-proxy-config.yml << EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
hostnameOverride: k8s-node02
clusterCIDR: 172.30.0.0/16
EOF

3.4 Manage kube-proxy with systemd on all nodes

cat > /usr/lib/systemd/system/kube-proxy.service << EOF
[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
EnvironmentFile=/etc/kubernetes/kube-proxy.conf
ExecStart=/usr/local/bin/kube-proxy \$KUBE_PROXY_OPTS
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

3.5 Start kube-proxy on all nodes

systemctl daemon-reload
systemctl enable --now kube-proxy
systemctl status kube-proxy

Tips:

If you change the cluster's Pod CIDR, update the clusterCIDR parameter in kube-proxy-config.yml to match. In the example above, the custom CIDR is "172.30.0.0/16".
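The Pod network appears in several places in this deployment: `--cluster-cidr=172.16.0.0/12` for the controller manager, `clusterCIDR: 172.30.0.0/16` for kube-proxy and `POD_SUBNET` for Calico. The service range `10.96.0.0/12` must likewise contain the kubelet's `clusterDNS` address. A minimal sketch for checking that one range lies inside another; the function names are hypothetical and only IPv4 is handled:

```shell
#!/usr/bin/env bash
# Hypothetical helpers for checking that network ranges are consistent (IPv4 only).

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# cidr_contains OUTER INNER: succeed if every address of INNER lies in OUTER.
# A single address can be checked as a /32.
cidr_contains() {
    local outer_ip=${1%/*} outer_len=${1#*/}
    local inner_ip=${2%/*} inner_len=${2#*/}
    (( inner_len >= outer_len )) || return 1
    local mask=$(( (0xFFFFFFFF << (32 - outer_len)) & 0xFFFFFFFF ))
    local o i
    o=$(ip_to_int "$outer_ip")
    i=$(ip_to_int "$inner_ip")
    (( (o & mask) == (i & mask) ))
}

# Values taken from this document:
cidr_contains 172.16.0.0/12 172.30.0.0/16   && echo "kube-proxy clusterCIDR is inside --cluster-cidr"
cidr_contains 172.30.0.0/16 172.30.110.0/24 && echo "Calico POD_SUBNET is inside kube-proxy clusterCIDR"
cidr_contains 10.96.0.0/12 10.96.0.10/32    && echo "clusterDNS is inside --service-cluster-ip-range"
```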

3.9 Deploy the network plugin

1. Deploy the Calico network plugin

# Load the image files on all nodes
[root@k8s-master02 images]# ll
total 593716
-rw-r--r-- 1 root root 236422144 2024-02-18 13:31 cni_v3_22_0.tar.gz
-rw-r--r-- 1 root root 132667392 2024-02-18 13:29 kube-controllers_v3_22_0.tar.gz
-rw-r--r-- 1 root root 217369600 2024-02-18 13:31 node_v3_22_0.tar.gz
-rw-r--r-- 1 root root 21498368 2024-02-18 13:31 pod2daemon-flexvol_v3_22_0.tar.gz
[root@k8s-master02 images]# docker load -i cni_v3_22_0.tar.gz
[root@k8s-master02 images]# docker load -i kube-controllers_v3_22_0.tar.gz
[root@k8s-master02 images]# docker load -i node_v3_22_0.tar.gz
[root@k8s-master02 images]# docker load -i pod2daemon-flexvol_v3_22_0.tar.gz

# cd /root/k8s-ha-install/calico/
# Modify the following settings in calico-etcd.yaml
sed -i 's#etcd_endpoints: "http://<ETCD_IP>:<ETCD_PORT>"#etcd_endpoints: "https://10.0.0.201:2379,https://10.0.0.202:2379,https://10.0.0.203:2379"#g' calico-etcd.yaml
ETCD_CA=`cat /etc/kubernetes/pki/etcd/etcd-ca.pem | base64 | tr -d '\n'`
ETCD_CERT=`cat /etc/kubernetes/pki/etcd/etcd.pem | base64 | tr -d '\n'`
ETCD_KEY=`cat /etc/kubernetes/pki/etcd/etcd-key.pem | base64 | tr -d '\n'`
sed -i "s@# etcd-key: null@etcd-key: ${ETCD_KEY}@g; s@# etcd-cert: null@etcd-cert: ${ETCD_CERT}@g; s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@g" calico-etcd.yaml
sed -i 's#etcd_ca: ""#etcd_ca: "/calico-secrets/etcd-ca"#g; s#etcd_cert: ""#etcd_cert: "/calico-secrets/etcd-cert"#g; s#etcd_key: "" #etcd_key: "/calico-secrets/etcd-key" #g' calico-etcd.yaml
# Change this to your own Pod CIDR
POD_SUBNET="172.30.110.0/24"
# The next step sets the network under CALICO_IPV4POOL_CIDR in calico-etcd.yaml to your own Pod CIDR
# (it replaces 192.168.0.0/16 with your cluster's CIDR) and uncomments it. Write a literal IP CIDR here;
# anything other than an IP CIDR causes an error, which I ran into myself.
sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@# value: "192.168.0.0/16"@ value: '"${POD_SUBNET}"'@g' calico-etcd.yaml

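Tips:

Do not skip the steps above, or the deployment will fail. The certificate data in the file I handed out belongs to my cluster and will not work for yours. "etcd-key", "etcd-cert" and "etcd-ca" must contain your own cluster's certificate contents; edit them by hand if necessary.

- Location of the etcd certificate files:

/etc/kubernetes/pki/etcd/

- Base64-encode a file's contents:

cat <file> | base64 -w 0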
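Both `base64 | tr -d '\n'` (used in the sed commands above) and `base64 -w 0` produce the single-line encoding that the `etcd-ca`, `etcd-cert` and `etcd-key` fields need. A minimal sketch for double-checking that a value pasted into calico-etcd.yaml really decodes to your own certificate. The function names are hypothetical, and `-w 0` assumes GNU coreutils `base64`:

```shell
#!/usr/bin/env bash
# Hypothetical helpers (assume GNU coreutils base64 and bash process substitution).

# Print a file's contents as one line of base64.
encode_file() {
    base64 -w 0 < "$1"
}

# matches_file FILE ENCODED: succeed if ENCODED decodes to exactly the bytes of FILE.
matches_file() {
    cmp -s "$1" <(printf '%s' "$2" | base64 -d)
}

# Example (assumes the Secret line in calico-etcd.yaml looks like "  etcd-ca: <base64>"):
# matches_file /etc/kubernetes/pki/etcd/etcd-ca.pem \
#     "$(awk '$1 == "etcd-ca:" {print $2}' calico-etcd.yaml)" && echo "etcd-ca matches"
```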

Finally, create the network:

kubectl apply -f calico-etcd.yaml

2. Check whether the deployment succeeded on every node

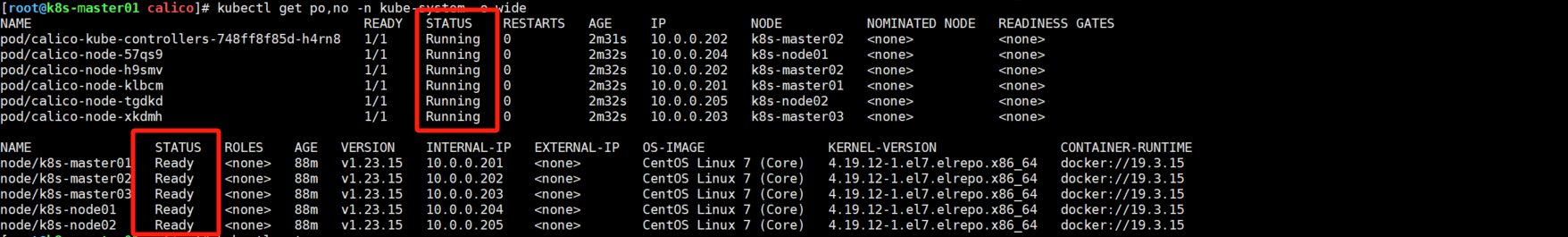

[root@k8s-master01 calico]# kubectl get po,no -n kube-system -o wide

3.10 Deploy the add-on components

1 Deploy CoreDNS

(1) Deploy CoreDNS

cd /root/k8s-ha-install/
# sed -i "s#10.96.0.1#10.96.0.10#g" CoreDNS/coredns.yaml # Change the "clusterIP" value.
kubectl create -f CoreDNS/coredns.yaml

Installing the latest CoreDNS (not recommended):

git clone https://github.com/coredns/deployment.git
cd deployment/kubernetes
./deploy.sh -s -i 10.96.0.10 | kubectl apply -f -

(2) Check the status

kubectl get po -n kube-system -l k8s-app=kube-dns

(3) Verify the DNS component

dig @10.96.0.10 metrics-server.kube-system.svc.cluster.local +short

2 Deploy Metrics Server

(1) Deploy Metrics Server

cd /root/k8s-ha-install/metrics-server
kubectl create -f .

(2) View node and Pod resource usage

kubectl top no
kubectl top po -A

3 Install the dashboard

(1) Install the dashboard service

cd /root/k8s-ha-install/dashboard/
kubectl create -f .

(2) Get the token and access the dashboard, as shown in the figure below

kubectl get svc kubernetes-dashboard -n kubernetes-dashboard
kubectl get pod -A -owide | grep dashboard
kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')
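Only the `token:` line of the `describe secret` output is needed to log in to the dashboard. A minimal sketch that extracts it. The function name is hypothetical, and it assumes the usual `token:      <value>` layout of that output. On Kubernetes v1.24 and later, where token Secrets are no longer created automatically for ServiceAccounts, `kubectl -n kube-system create token admin-user` prints a short-lived token directly instead:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value of the "token:" field from
# "kubectl describe secret" output read on stdin.
extract_token() {
    awk '$1 == "token:" { print $2; exit }'
}

# Example (same Secret lookup as above):
# kubectl -n kube-system describe secret \
#     "$(kubectl -n kube-system get secret | awk '/admin-user/ {print $1}')" | extract_token
```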

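Tips:

The latest dashboard release can be found in the official GitHub repository:

https://github.com/kubernetes/dashboard

(1) Deploy the latest version, as shown in the figure above. Check whether that dashboard release supports your K8S version! (It is recommended to change the Service type to NodePort manually to expose it.)

kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.5.1/aio/deploy/recommended.yaml

(2) Create an administrator user (when installing the latest version)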

cat > admin.yaml <<'EOF'
# Add the following content
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kube-system
EOF

kubectl apply -f admin.yaml -n kube-system

For the remaining deployment steps, see: https://www.cnblogs.com/yinzhengjie/p/17069566.html#%E4%B9%9D%E9%83%A8%E7%BD%B2%E7%BD%91%E7%BB%9C%E6%8F%92%E4%BB%B6

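Homework:

- Improve the Jenkins + K8S integration project, with the following requirements:

  - Set up your own GitLab, Harbor and Jenkins environments;

  - Migrate the code from Gitee to GitLab;

  - Use a Jenkins webhook to automatically pull the code from GitLab, build it and push the image to the Harbor registry;

  - Every release of a new version must send a notification by email, WeCom and DingTalk;

  - Improve the build.sh script: on the first build, create the corresponding resources; on later builds, update the application.

Extension:

- Rewrite the functionality of the "build.sh" script as a pipeline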
