Docker Network

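Docker networking is the Docker Engine feature that manages communication between containers, and between containers and external networks. It lets you define and configure the network environment each container runs in.

Docker networking provides the following:

- Container-to-container communication: containers attached to the same Docker network can reach each other directly by IP address, much like hosts on the same LAN.

- External connectivity: containers can reach other hosts, services, or the internet. Settings such as the gateway and DNS are configurable.

- Network isolation: containers on different networks live in separate network environments and do not affect each other, which improves security and prevents accidental interference.

- Custom network configuration: you can define subnets, IP address ranges, network aliases, and similar settings to fit the application.

Docker ships several network drivers, including bridge, overlay, and host, to cover different networking needs.

In short, Docker networking handles both container-to-container and container-to-outside traffic, with isolation and custom configuration available where needed.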

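What Docker networking is most useful for

- Interconnecting containers, and mapping ports

- Letting containers reach each other by service name, so communication keeps working when a container's IP changes

On the Linux host, start Docker first and then run ifconfig: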

[root@localhost ~]# systemctl start docker

[root@localhost ~]# ifconfig

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.17.0.1 netmask 255.255.0.0 broadcast 172.17.255.255

inet6 fe80::42:fff:fe0a:e164 prefixlen 64 scopeid 0x20<link>

ether 02:42:0f:0a:e1:64 txqueuelen 0 (Ethernet)

RX packets 65 bytes 3452 (3.3 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 52 bytes 3957 (3.8 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.0.101 netmask 255.255.255.0 broadcast 192.168.0.255

inet6 fe80::fabb:13bf:8ff7:4943 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:5c:ae:e9 txqueuelen 1000 (Ethernet)

RX packets 1578 bytes 1743249 (1.6 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 852 bytes 71714 (70.0 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 68 bytes 5912 (5.7 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 68 bytes 5912 (5.7 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

veth9f1669e: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet6 fe80::c40f:9fff:feb8:f8fe prefixlen 64 scopeid 0x20<link>

ether c6:0f:9f:b8:f8:fe txqueuelen 0 (Ethernet)

RX packets 8 bytes 656 (656.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 27 bytes 2743 (2.6 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

vethd37f657: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet6 fe80::d815:5dff:fe45:e8c0 prefixlen 64 scopeid 0x20<link>

ether da:15:5d:45:e8:c0 txqueuelen 0 (Ethernet)

RX packets 49 bytes 3050 (2.9 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 71 bytes 5349 (5.2 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

vethf4dfa31: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet6 fe80::b86b:5eff:fe1b:fb64 prefixlen 64 scopeid 0x20<link>

ether ba:6b:5e:1b:fb:64 txqueuelen 0 (Ethernet)

RX packets 8 bytes 656 (656.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 32 bytes 3411 (3.3 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

virbr0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 192.168.122.1 netmask 255.255.255.0 broadcast 192.168.122.255

ether 52:54:00:97:a6:aa txqueuelen 1000 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

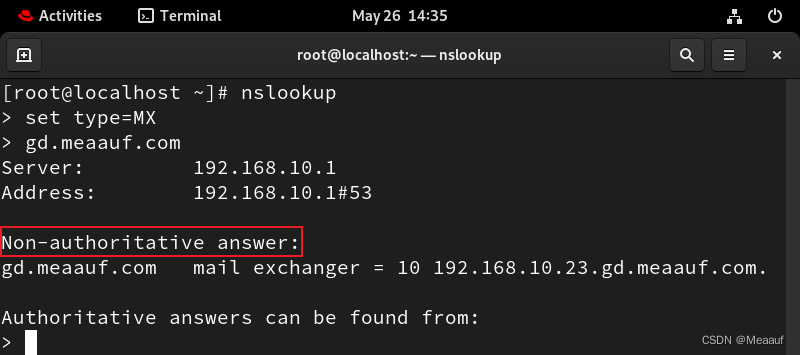

在输出的 ifconfig 命令结果中,以下是各个参数的含义:

-

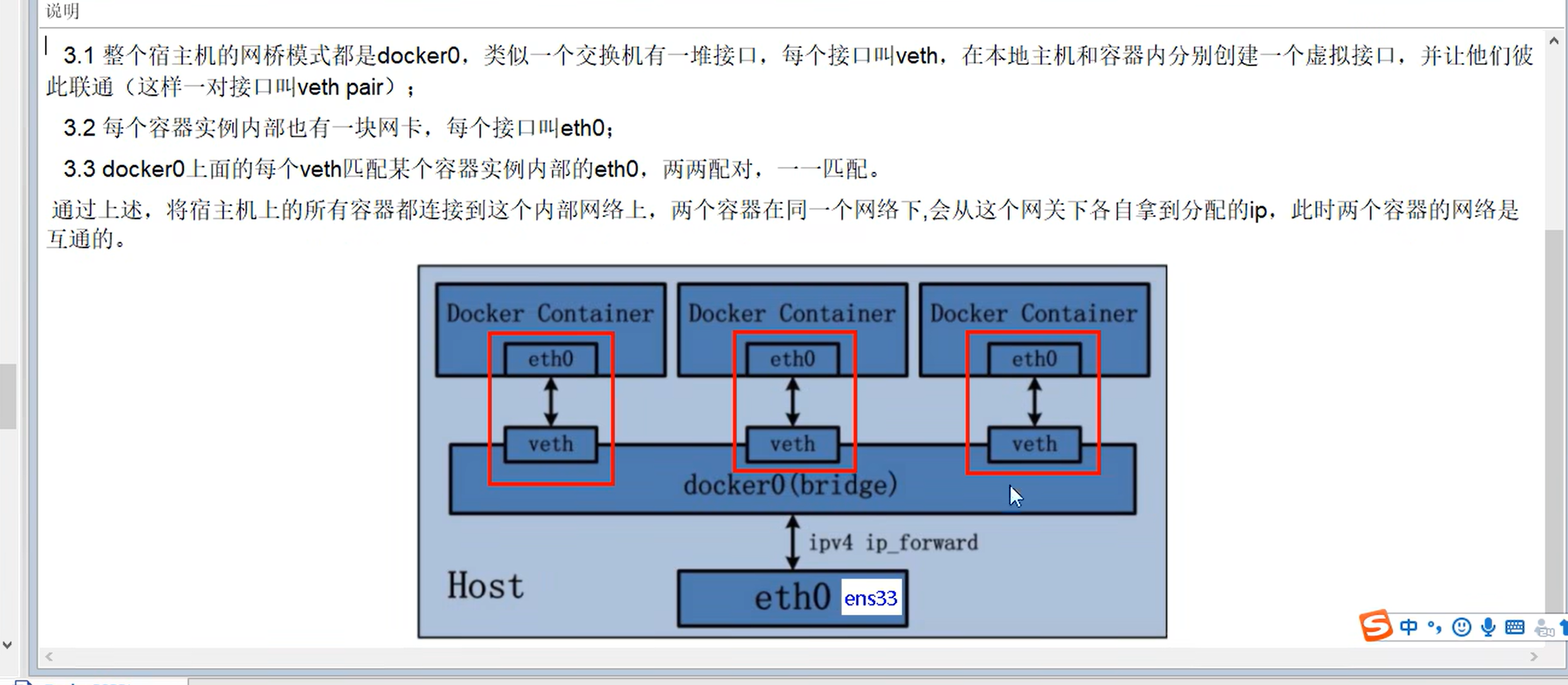

docker0: 这是 Docker 容器的默认网络桥接接口,用于连接 Docker 容器和主机的网络。它具有 IP 地址172.17.0.1,子网掩码255.255.0.0,用于容器之间的通信。当创建一个容器时,Docker 会在 docker0 桥接接口上创建一个虚拟以太网设备,并为容器分配一个 IP 地址,使得容器可以通过 docker0 桥接接口与其他容器或主机进行通信。Docker 还会为容器自动配置网络路由和 NAT(网络地址转换)规则,以便容器可以与外部网络进行通信。 总结来说,docker0 是 Docker Network 中的一个特殊网络桥接接口,用于连接 Docker 容器和主机的网络。它提供了默认的容器网络环境,使得容器可以进行互联和与外部网络通信。 -

ens33: linux服务器的ip地址,这是主机的物理网络接口,通常用于连接主机与外部网络。它具有 IP 地址192.168.0.101,子网掩码255.255.255.0,广播地址192.168.0.255。ens33是 Linux 服务器上的物理网络接口的名称,通常用于连接服务器与外部网络。 -

lo: 这是回环接口,用于主机内部的本地回环通信。它具有 IP 地址127.0.0.1,子网掩码255.0.0.0。 -

veth9f1669e,vethd37f657,vethf4dfa31: 这些是虚拟以太网设备,用于连接容器与主机之间的网络。它们是 Docker 容器网络与主机网络之间的桥接设备。 -

virbr0: 这是使用 libvirt 管理的虚拟网络接口,用于虚拟化环境中的虚拟机通信。它具有 IP 地址192.168.122.1,子网掩码255.255.255.0。
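As a rough aid for reading listings like the one above, the name-to-role mapping can be sketched in shell. The function names `classify_iface` and `bridge_members` are made up for this illustration, and the name patterns are only the ones seen in this listing; other hosts may use different names.

```shell
# Map an interface name from the ifconfig listing to its likely role.
# Patterns follow the listing above and are an assumption for other hosts.
classify_iface() {
  case "$1" in
    docker0) echo "default Docker bridge" ;;
    veth*)   echo "host end of a container veth pair" ;;
    virbr*)  echo "libvirt bridge" ;;
    lo)      echo "loopback" ;;
    *)       echo "other host interface" ;;
  esac
}

# List the interfaces currently attached to a bridge (defaults to docker0).
# Not called here, because it needs a host where that bridge exists.
bridge_members() {
  ip -o link show master "${1:-docker0}"
}

for name in docker0 ens33 lo veth9f1669e virbr0; do
  printf '%s: %s\n' "$name" "$(classify_iface "$name")"
done
```

On a Docker host, calling `bridge_members` should list the veth interfaces, one per running container on the default bridge.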

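Common commands

Overview

Commonly used docker network commands:

- List all networks:

docker network ls

Lists every Docker network, both the default ones and user-defined ones.

- Create a network:

docker network create <network-name>

Creates a user-defined network. You must give it a name and can optionally set other parameters such as the subnet and gateway.

- Remove a network:

docker network rm <network-name>

Removes the named network. Make sure no containers are still connected to it first.

- Show a network's details:

docker network inspect <network-name>

Shows the network's name, ID, driver, subnet, gateway, connected containers, and so on.

- Connect a container to a network:

docker network connect <network-name> <container-name>

Connects a container (typically a running one) to the named network.

- Disconnect a container from a network:

docker network disconnect <network-name> <container-name>

Disconnects a container from a network it is attached to. The container must be running.

These commands cover creating, removing, inspecting, and attaching containers to Docker networks.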
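The commands above can be strung together into one round trip. The following is a minimal sketch, not a recommended workflow: `network_roundtrip` is a hypothetical helper name, and the `DOCKER` variable is a convention of this sketch (not a Docker feature) that lets you set `DOCKER=echo` to print the commands instead of running them.

```shell
# DOCKER can be overridden, e.g. DOCKER=echo for a dry run (sketch convention).
DOCKER="${DOCKER:-docker}"

# Create a network, attach an existing container, inspect, detach, remove.
# $1 = network name, $2 = name of an existing, running container.
network_roundtrip() {
  net="$1"
  ctr="$2"
  "$DOCKER" network create "$net" &&
  "$DOCKER" network connect "$net" "$ctr" &&
  "$DOCKER" network inspect "$net" &&
  "$DOCKER" network disconnect "$net" "$ctr" &&
  "$DOCKER" network rm "$net"
}
```

For example, `DOCKER=echo; network_roundtrip demo_net web` prints the five commands in order without touching Docker; `demo_net` and `web` are placeholder names.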

Usage

List all networks

docker network ls

After installation, Docker creates three networks by default:

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

39848ebe130a bridge bridge local

adf217ba0e04 host host local

97c55498dc28 none null local

[root@localhost ~]#

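These correspond to Docker's three built-in network modes: bridge, host, and none. In brief:

- Bridge mode (bridge): the default. Containers connect to the host's network through a virtual bridge interface (usually docker0). They can talk to each other over the bridge and can reach the host and external networks. Docker assigns each container an IP address and uses NAT (network address translation) for traffic to external networks.

- Host mode (host): the container shares the host's network namespace, so it uses the same interfaces and IP address as the host. There is no NAT. Services in the container are reachable directly at the host's IP address and port.

- None mode (none): the container has no network connectivity and cannot communicate with other containers or external networks. This is for special cases such as containers that must be fully isolated from the network.

- User-defined networks: you can create your own networks and attach containers to them. They can be configured as needed, for example with a specific subnet and gateway, and suit cases that need finer control, such as isolating several applications from one another or deployments with stricter security requirements.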

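- Container mode (container): a special mode in which a container joins the network namespace of another container.

Containers that share a network namespace use the same network stack and IP address and communicate like processes on one host. The main properties are:

- They can reach each other through localhost or 127.0.0.1.

- No port mapping or NAT is needed between them. Because they share one port space, two of them cannot listen on the same port.

- They share network configuration such as the IP address, subnet, and gateway.

Container mode suits tightly coupled containers on the same host that need to talk locally, and it simplifies the communication setup between them.

To use container mode, pass --network=container:<container-name> when creating the container. Note that docker network connect attaches a container to a network; it does not make two containers share a network namespace.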

Create a network

docker network create

Create a network named wzynet, then list the networks:

[root@localhost ~]# docker network create wzynet

60def88ff5c28a17db97e59fd92b4249ec7829a9887f5851441a11c63003b453

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

39848ebe130a bridge bridge local

adf217ba0e04 host host local

97c55498dc28 none null local

60def88ff5c2 wzynet bridge local

[root@localhost ~]#
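The optional subnet and gateway settings mentioned earlier can be passed at creation time with the `--subnet` and `--gateway` flags. A minimal sketch follows; the helper name `create_custom_net`, the network name, and the address range are placeholders chosen for illustration, and `DOCKER=echo` is again only this sketch's dry-run convention.

```shell
# DOCKER can be overridden, e.g. DOCKER=echo for a dry run (sketch convention).
DOCKER="${DOCKER:-docker}"

# Create a bridge network with an explicit subnet and gateway.
# $1 = network name, $2 = subnet in CIDR form, $3 = gateway address.
create_custom_net() {
  "$DOCKER" network create --driver bridge --subnet "$2" --gateway "$3" "$1"
}
```

A call such as `create_custom_net demo_net 172.28.0.0/16 172.28.0.1` would create the network; pick a range that does not overlap networks already in use on the host.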

Remove a network

docker network rm

Remove the network named wzynet, then list the networks:

[root@localhost ~]# docker network rm wzynet

wzynet

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

39848ebe130a bridge bridge local

adf217ba0e04 host host local

97c55498dc28 none null local

[root@localhost ~]#

Show a network's details

docker network inspect

Inspect the default bridge network:

[root@localhost ~]# docker network inspect bridge

[

{

"Name": "bridge",

"Id": "39848ebe130a9baac0666d8898e01f9c66f974bd860f8db21250c4fcdd007a8a",

"Created": "2024-05-13T19:38:53.52796445+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "172.17.0.0/16",

"Gateway": "172.17.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {

"1b8225f9abbc293754f04ad7798817f5f573f2cad1f49933742c4d41658f9526": {

"Name": "redis",

"EndpointID": "f78bf50201fdaab4200bb892b4f467127cc6434be9c41657c1fa189531202c16",

"MacAddress": "02:42:ac:11:00:02",

"IPv4Address": "172.17.0.2/16",

"IPv6Address": ""

},

"c7b0458076f3f13725c853a4d0b3415bd34910d05f29e863506993e00b3c5423": {

"Name": "mysql_slave",

"EndpointID": "5b4d737474034d710a235943cff7073e4b681a580369160e3cd307cf9c901835",

"MacAddress": "02:42:ac:11:00:03",

"IPv4Address": "172.17.0.3/16",

"IPv6Address": ""

},

"f165aa014e646f9608a144c27054ba844161365e702580f9a7830b73a8271450": {

"Name": "mysql",

"EndpointID": "502399393ba72dfc7102d38a9906718394751fc49abe0bcad64f5a8cbe3e0a5c",

"MacAddress": "02:42:ac:11:00:04",

"IPv4Address": "172.17.0.4/16",

"IPv6Address": ""

}

},

"Options": {

"com.docker.network.bridge.default_bridge": "true",

"com.docker.network.bridge.enable_icc": "true",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",

"com.docker.network.bridge.name": "docker0",

"com.docker.network.driver.mtu": "1500"

},

"Labels": {}

}

]

[root@localhost ~]#

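docker inspect <container-name-or-id>

Shows a container's details. Piping through | tail -n 20 keeps only the last 20 lines, which is where the network settings appear.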

[root@localhost ~]# docker inspect mysql | tail -n 20

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:04",

"NetworkID": "cd16b1996af392d4f0b5b5a8d4d0d78fa536eb652cb078734010d5673af4e212",

"EndpointID": "6a172c637c085dadb7a5758b42c8565c3cc02c7a88b33ba37e1f08786f2482e3",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.4",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

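Bridge mode (bridge)

- The default network mode.

- Each container gets its own IP address from the bridge's subnet (172.17.0.0/16 on the default bridge). The address is allocated from that range, not picked at random.

- Containers connect to the host's network through a virtual bridge interface (usually docker0).

- Containers can communicate with each other over the bridge, and with the host and external networks.

- Bridge mode is the most commonly used mode and suits most scenarios.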
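To make the subnet idea concrete, here is a small sketch that checks whether an IPv4 address falls inside a CIDR range such as 172.17.0.0/16. It is plain shell arithmetic and does not call Docker; `ip_to_int` and `in_subnet` are names invented for this example, and it assumes well-formed dotted-quad input.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  old_ifs="$IFS"
  IFS=.
  set -- $1          # intentionally unquoted: split on dots into $1..$4
  IFS="$old_ifs"
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if address $1 lies inside CIDR range $2 (e.g. 172.17.0.0/16).
in_subnet() {
  ip="$1"
  net="${2%/*}"      # part before the slash
  bits="${2#*/}"     # prefix length after the slash
  mask=$(( (0xFFFFFFFF << (32 - $bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

for addr in 172.17.0.2 172.17.0.4 172.18.0.3; do
  if in_subnet "$addr" 172.17.0.0/16; then
    echo "$addr is inside 172.17.0.0/16"
  else
    echo "$addr is outside 172.17.0.0/16"
  fi
done
```

The first two addresses are the kind the default bridge hands out; the third belongs to a different /16 and so would sit on another network.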

Inspecting the network settings of bridge-mode containers

First list the running containers with docker ps:

[root@localhost ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

c7b0458076f3 mysql "docker-entrypoint.s…" 2 weeks ago Up 38 minutes 33060/tcp, 0.0.0.0:3307->3306/tcp, :::3307->3306/tcp mysql_slave

1b8225f9abbc redis:6.2.6 "docker-entrypoint.s…" 2 weeks ago Up 38 minutes 0.0.0.0:6379->6379/tcp, :::6379->6379/tcp redis

f165aa014e64 mysql "docker-entrypoint.s…" 3 weeks ago Up 38 minutes 0.0.0.0:3306->3306/tcp, :::3306->3306/tcp, 33060/tcp mysql

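Then use docker inspect <container-name> to view a container's network settings. Piping through | tail -n 20 keeps only the last 20 lines.

Start with mysql. Its address is the IPAddress field below, 172.17.0.4: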

[root@localhost ~]# docker inspect mysql | tail -n 20

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:04",

"NetworkID": "cd16b1996af392d4f0b5b5a8d4d0d78fa536eb652cb078734010d5673af4e212",

"EndpointID": "6a172c637c085dadb7a5758b42c8565c3cc02c7a88b33ba37e1f08786f2482e3",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.4",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

Next, redis. Its address is the IPAddress field below, 172.17.0.2:

[root@localhost ~]# docker inspect redis | tail -n 20

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:02",

"NetworkID": "cd16b1996af392d4f0b5b5a8d4d0d78fa536eb652cb078734010d5673af4e212",

"EndpointID": "af36833a403042e0160b5fc4ae6414f5295c85cf9cef48e6e890e1e13a3c0117",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.2",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

Finally, mysql_slave. Its address is the IPAddress field below, 172.17.0.3:

[root@localhost ~]# docker inspect mysql_slave | tail -n 20

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:03",

"NetworkID": "cd16b1996af392d4f0b5b5a8d4d0d78fa536eb652cb078734010d5673af4e212",

"EndpointID": "0fc645afe5d1b94cbeb9dd134b5e078379389feff03df39703c978822e542693",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.3",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

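All three share the same gateway but have different IPs, which confirms that each container in bridge mode is assigned its own address.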
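Instead of reading the tail of the JSON by eye, the address can be pulled out with `docker inspect`'s `-f` Go-template option. This is a small sketch; `container_ip` and `print_ips` are hypothetical helper names, and `DOCKER=echo` is only this sketch's dry-run convention.

```shell
# DOCKER can be overridden, e.g. DOCKER=echo for a dry run (sketch convention).
DOCKER="${DOCKER:-docker}"

# Print the IP address(es) of one container across its attached networks.
container_ip() {
  "$DOCKER" inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$1"
}

# Print "name address" for each container name given as an argument.
print_ips() {
  for c in "$@"; do
    printf '%s %s\n' "$c" "$(container_ip "$c")"
  done
}
```

With the three containers from this walkthrough running, `print_ips mysql redis mysql_slave` should list each name next to its bridge address.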

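Host mode (host)

- The container shares the host's network namespace.

- The container binds directly to the host's network interfaces, with no network address translation.

- Services in the container are reachable directly at the host's IP address and the port they listen on.

- Host mode suits cases where the container's network must match the host's exactly, such as performance-sensitive applications.

Run a container in host network mode with:

docker run -d --network host --name <name> <image-name-or-id>

Port publishing with -p has no effect in this mode. The Tomcat example below passes -p 8088:8080 anyway, which triggers a warning: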

[root@localhost ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

tomcat latest fb5657adc892 2 years ago 680MB

[root@localhost ~]# docker run -d -p 8088:8080 --network host --name hosttomcat fb5657adc892

WARNING: Published ports are discarded when using host network mode

d5772e22418ee658ef09c83ea9512114eb47862126df6609564369e48eddd1c9

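With the host network mode, Docker prints "WARNING: Published ports are discarded when using host network mode".

In host mode the container shares the host's network namespace. It uses the host's network interfaces directly instead of getting its own network stack, so its services bind host ports directly and no port mapping is needed.

Ports published with -p or --publish are therefore ignored, which is exactly what the warning says.

Inspect the Tomcat container's network settings

Gateway and IPAddress are empty because the container shares the host's network: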

[root@localhost ~]# docker inspect hosttomcat | tail -n 20

"host": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "",

"NetworkID": "adf217ba0e041e6b6e9cf3e2ac5ddbda66fefe65d705987ba78d701efb3a64bf",

"EndpointID": "8ff2951352eaea83d7b673ba8264c6ff25a1f5e1cb8ee75a9b5b5b835df0b593",

"Gateway": "",

"IPAddress": "",

"IPPrefixLen": 0,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

To reach Tomcat, use <host-IP>:8080 directly.
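Since published ports are discarded in host mode, the run command can simply leave `-p` out. A minimal sketch under that assumption; `run_host_mode` is a hypothetical helper name, and `DOCKER=echo` is this sketch's dry-run convention.

```shell
# DOCKER can be overridden, e.g. DOCKER=echo for a dry run (sketch convention).
DOCKER="${DOCKER:-docker}"

# Start a container on the host's network stack; no -p, since it would be ignored.
# $1 = container name, $2 = image name or ID.
run_host_mode() {
  "$DOCKER" run -d --network host --name "$1" "$2"
}
```

After `run_host_mode hosttomcat tomcat`, Tomcat listens on the host's port 8080 directly, so port 8088 from the earlier command is never opened.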

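None mode (none)

In none mode Docker does no network configuration for the container. The container has no network card, IP address, or routes, only the lo interface. Any interface or IP address has to be added and configured by hand.

Using Tomcat again, this time with --network none: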

[root@localhost ~]# docker run -d -p 8088:8080 --network none --name nonetomcat fb5657adc892

73ca6100c56467b54e5c5508e2cd69436f4a8a89d9a8ba376e57c87740d3f940

[root@localhost ~]# docker inspect nonetomcat | tail -n 20

"none": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "",

"NetworkID": "97c55498dc28f755787bd7903a263e166a634a3637c829b83b37b3de8862570b",

"EndpointID": "8d9c4b1a41644e794f1a5d3faeddeaf17e793c156ef27bb1b93e5e77ed8aed95",

"Gateway": "",

"IPAddress": "",

"IPPrefixLen": 0,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

There is no Gateway or IPAddress, and the network key at the top of this JSON fragment is none.

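Container mode (container)

The new container shares the network configuration of an existing container. It does not create its own network interface or configure its own IP; it shares the IP address, port range, and so on of the container you name. Apart from networking, the two containers remain isolated from each other, for example in their file systems and process lists.

Tomcat is not used for this demo, because two Tomcat instances sharing one network namespace would both try to bind port 8080 and conflict. Alpine is used instead.

Alpine Linux is an independent, non-commercial, general-purpose Linux distribution designed for users who value security, simplicity, and resource efficiency. Many people know it only through Docker, where it is a popular base image because it is small, simple, and secure. The image is under 6 MB, which makes it well suited to packaging containers.

Pull the image first (docker run would also pull it automatically if it is missing):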

[root@localhost ~]# docker pull alpine

Using default tag: latest

latest: Pulling from library/alpine

59bf1c3509f3: Pull complete

Digest: sha256:21a3deaa0d32a8057914f36584b5288d2e5ecc984380bc0118285c70fa8c9300

Status: Downloaded newer image for alpine:latest

docker.io/library/alpine:latest

Run it. Note that Alpine uses /bin/sh:

[root@localhost ~]# docker run -it --name alpine1 alpine /bin/sh

/ #

Run a second container in container mode so that it shares the first container's network configuration. The key addition is --network container:alpine1:

[root@localhost ~]# docker run -it --network container:alpine1 --name alpine2 alpine /bin/sh

/ #

Verify the shared network configuration

Back in alpine1's terminal, run ip addr:

[root@localhost ~]# docker run -it --name alpine1 alpine /bin/sh

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

12: eth0@if13: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue state UP

link/ether 02:42:ac:11:00:05 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.5/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::42:acff:fe11:5/64 scope link

valid_lft forever preferred_lft forever

/ #

Then, in alpine2's terminal, run ip addr:

[root@localhost ~]# docker run -it --network container:alpine1 --name alpine2 alpine /bin/sh

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

12: eth0@if13: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue state UP

link/ether 02:42:ac:11:00:05 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.5/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::42:acff:fe11:5/64 scope link

valid_lft forever preferred_lft forever

/ #

Both containers show the same address on 12: eth0@if13: 172.17.0.5.

If alpine1 is stopped, alpine2 is left with only the loopback interface:

[root@localhost ~]# docker stop alpine1

alpine1

[root@localhost ~]#

[root@localhost ~]# docker run -it --network container:alpine1 --name alpine2 alpine /bin/sh

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

12: eth0@if13: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue state UP

link/ether 02:42:ac:11:00:05 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.5/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::42:acff:fe11:5/64 scope link

valid_lft forever preferred_lft forever

/ # ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

/ #

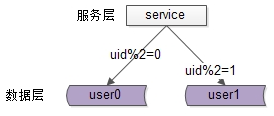

自定义模式

作用

在实际应用中,docker容器的ip是会变化的,比如一个容器挂掉,该容器的ip可能会被分配给新的容器。如此,会导致容器间的通信失败。

而使用自定义网络模式,可以直接使用容器名来进行通信,从而忽略容器的ip变动

案例演示

创建实例

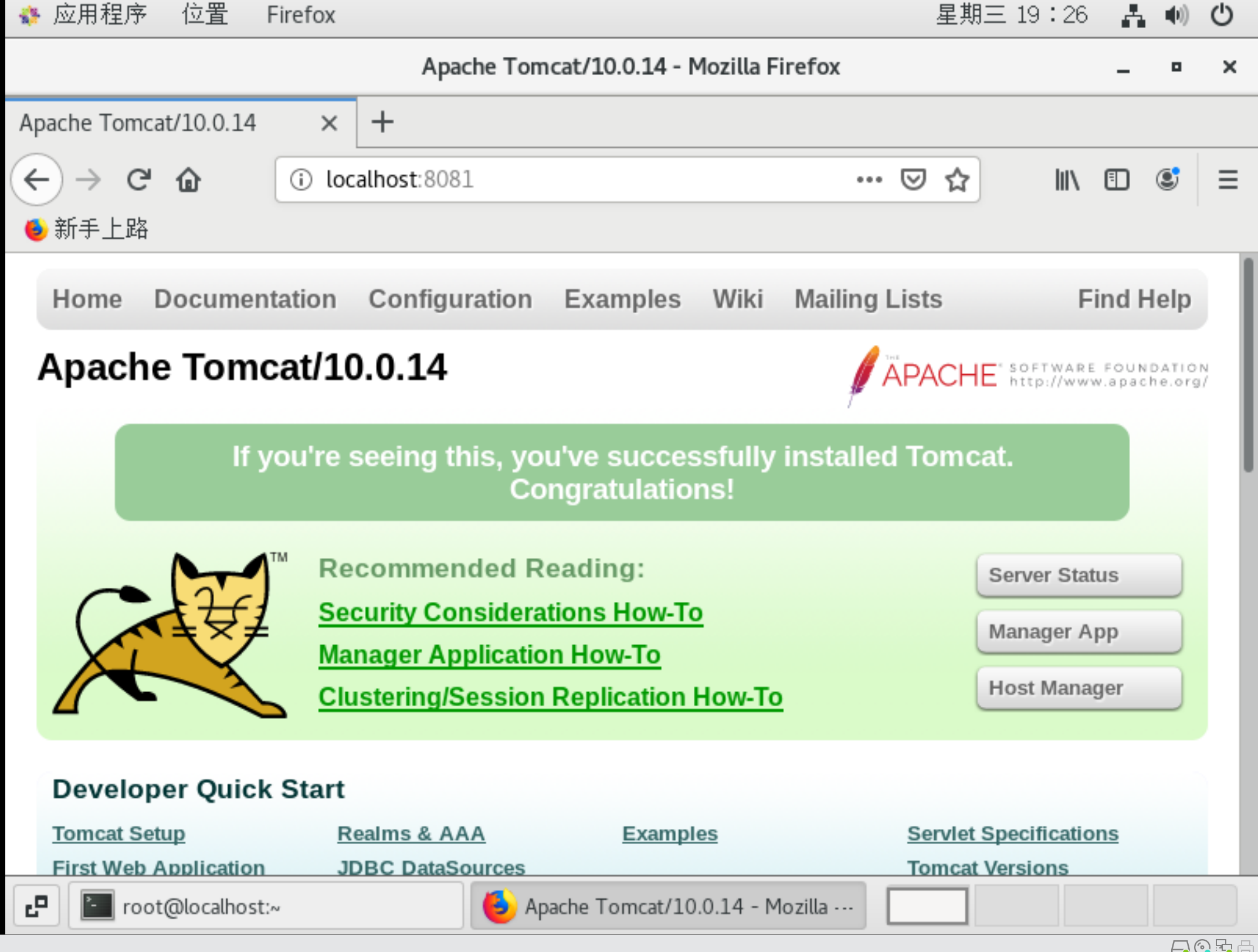

运行两个tomcat,修改其内部文件夹使其可以访问(详情参考前面的docker应用安装文档)

第一个,8081

[root@localhost ~]# docker run -d -p 8081:8080 --name tomcat81 tomcat

422f18c88a08939cc2bf1e07dee059db44ef13e05d3081535bb1ad4cf8abb1ba

[root@localhost ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

422f18c88a08 tomcat "catalina.sh run" 21 seconds ago Up 19 seconds 0.0.0.0:8081->8080/tcp, :::8081->8080/tcp tomcat81

[root@localhost ~]# docker exec -it 422f18c88a08 /bin/bash

root@422f18c88a08:/usr/local/tomcat# pwd

/usr/local/tomcat

root@422f18c88a08:/usr/local/tomcat# rm -r webapps

root@422f18c88a08:/usr/local/tomcat# mv webapps.dist webapps

The second one, on port 8082:

[root@localhost ~]# docker run -d -p 8082:8080 --name tomcat82 tomcat

e4808050e97155e3373d36bdf68f6cd3839c198873b0e0ddd5a316218ce0d417

[root@localhost ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

e4808050e971 tomcat "catalina.sh run" 4 seconds ago Up 2 seconds 0.0.0.0:8082->8080/tcp, :::8082->8080/tcp tomcat82

422f18c88a08 tomcat "catalina.sh run" 6 minutes ago Up 6 minutes 0.0.0.0:8081->8080/tcp, :::8081->8080/tcp tomcat81

[root@localhost ~]# docker exec -it e4808050e971 /bin/bash

root@e4808050e971:/usr/local/tomcat# pwd

/usr/local/tomcat

root@e4808050e971:/usr/local/tomcat# rm -r webapps

root@e4808050e971:/usr/local/tomcat# mv webapps.dist webapps

root@e4808050e971:/usr/local/tomcat#

Check the network settings of both Tomcat containers:

[root@localhost ~]# docker inspect tomcat81 | tail -n 20

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:05",

"NetworkID": "8ded4f421d2ec5d1174aa8d73f94d5f66afa8b2d5743c40e2b64cc2dff06b57d",

"EndpointID": "fcf452bc367787b956d8c14923f3c7c5dc3252956c952f93271b559ecabb9759",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.5",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

[root@localhost ~]# docker inspect tomcat82 | tail -n 20

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:06",

"NetworkID": "8ded4f421d2ec5d1174aa8d73f94d5f66afa8b2d5743c40e2b64cc2dff06b57d",

"EndpointID": "baa47c3fb8474a1f0644e7d017cade73918a428c8f12f5e33ba9dd05c42c4f3f",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.6",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DriverOpts": null,

"DNSNames": null

}

}

}

}

]

tomcat81 has IP 172.17.0.5 and tomcat82 has IP 172.17.0.6.

Pinging between containers by IP address

Now ping tomcat81 from inside tomcat82.

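Note that ping is not always available, because it depends on what the container image ships. Install it with the package manager of the distribution inside the container:

- Check which distribution the container uses:

cat /etc/*-release

- On Debian or Ubuntu based images, use apt-get:

apt-get update

apt-get install -y iputils-ping

- On CentOS or RHEL based images, use yum:

yum install -y iputils

- If ping cannot be installed, nc (netcat) can stand in for it where the image provides it. nc tests a TCP port rather than sending ICMP, so give it a port number, for example Tomcat's 8080:

nc -vz 172.17.0.5 8080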
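The distribution check and the matching install command can be folded into one small helper. This is a sketch covering only the two families named above; `ping_install_cmd` is a name invented here, and it only prints the command rather than running it.

```shell
# Print the command that installs ping for a given distribution ID
# (the ID field from /etc/os-release, e.g. "debian").
ping_install_cmd() {
  case "$1" in
    debian|ubuntu) echo "apt-get update && apt-get install -y iputils-ping" ;;
    centos|rhel)   echo "yum install -y iputils" ;;
    *)             echo "no install command known for: $1" >&2; return 1 ;;
  esac
}

ping_install_cmd debian
```

Inside a container, the ID can be read with `. /etc/os-release` and then passed as `ping_install_cmd "$ID"`.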

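In short, first confirm which distribution the container runs, then install ping with the matching package manager. The containers here run Debian, so the install uses apt-get update and apt-get install -y iputils-ping: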

[root@localhost ~]# docker exec -it tomcat82 /bin/bash

root@e4808050e971:/usr/local/tomcat# ping 172.17.0.5

bash: ping: command not found

root@e4808050e971:/usr/local/tomcat# cat /etc/*-release

PRETTY_NAME="Debian GNU/Linux 11 (bullseye)"

NAME="Debian GNU/Linux"

VERSION_ID="11"

VERSION="11 (bullseye)"

VERSION_CODENAME=bullseye

ID=debian

HOME_URL="https://www.debian.org/"

SUPPORT_URL="https://www.debian.org/support"

BUG_REPORT_URL="https://bugs.debian.org/"

root@e4808050e971:/usr/local/tomcat# apt-get update

Get:1 http://deb.debian.org/debian bullseye InRelease [116 kB]

Get:2 http://security.debian.org/debian-security bullseye-security InRelease [48.4 kB]

Get:3 http://deb.debian.org/debian bullseye-updates InRelease [44.1 kB]

Get:4 http://security.debian.org/debian-security bullseye-security/main amd64 Packages [273 kB]

Get:5 http://deb.debian.org/debian bullseye/main amd64 Packages [8068 kB]

Get:6 http://deb.debian.org/debian bullseye-updates/main amd64 Packages [18.8 kB]

Fetched 8568 kB in 5min 41s (25.1 kB/s)

Reading package lists... Done

root@e4808050e971:/usr/local/tomcat# apt-get install -y iputils-ping

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following additional packages will be installed:

libcap2 libcap2-bin libpam-cap

The following NEW packages will be installed:

iputils-ping libcap2 libcap2-bin libpam-cap

0 upgraded, 4 newly installed, 0 to remove and 74 not upgraded.

Need to get 121 kB of archives.

After this operation, 348 kB of additional disk space will be used.

Get:1 http://deb.debian.org/debian bullseye/main amd64 libcap2 amd64 1:2.44-1 [23.6 kB]

Get:2 http://deb.debian.org/debian bullseye/main amd64 libcap2-bin amd64 1:2.44-1 [32.6 kB]

Get:3 http://deb.debian.org/debian bullseye/main amd64 iputils-ping amd64 3:20210202-1 [49.8 kB]

Get:4 http://deb.debian.org/debian bullseye/main amd64 libpam-cap amd64 1:2.44-1 [15.4 kB]

Fetched 121 kB in 4s (32.1 kB/s)

debconf: delaying package configuration, since apt-utils is not installed

Selecting previously unselected package libcap2:amd64.

(Reading database ... 12672 files and directories currently installed.)

Preparing to unpack .../libcap2_1%3a2.44-1_amd64.deb ...

Unpacking libcap2:amd64 (1:2.44-1) ...

Selecting previously unselected package libcap2-bin.

Preparing to unpack .../libcap2-bin_1%3a2.44-1_amd64.deb ...

Unpacking libcap2-bin (1:2.44-1) ...

Selecting previously unselected package iputils-ping.

Preparing to unpack .../iputils-ping_3%3a20210202-1_amd64.deb ...

Unpacking iputils-ping (3:20210202-1) ...

Selecting previously unselected package libpam-cap:amd64.

Preparing to unpack .../libpam-cap_1%3a2.44-1_amd64.deb ...

Unpacking libpam-cap:amd64 (1:2.44-1) ...

Setting up libcap2:amd64 (1:2.44-1) ...

Setting up libcap2-bin (1:2.44-1) ...

Setting up libpam-cap:amd64 (1:2.44-1) ...

debconf: unable to initialize frontend: Dialog

debconf: (No usable dialog-like program is installed, so the dialog based frontend cannot be used. at /usr/share/perl5/Debconf/FrontEnd/Dialog.pm line 78.)

debconf: falling back to frontend: Readline

Setting up iputils-ping (3:20210202-1) ...

Processing triggers for libc-bin (2.31-13+deb11u2) ...

root@e4808050e971:/usr/local/tomcat#

Now ping again. This time it succeeds:

root@e4808050e971:/usr/local/tomcat# ping 172.17.0.5

PING 172.17.0.5 (172.17.0.5) 56(84) bytes of data.

64 bytes from 172.17.0.5: icmp_seq=1 ttl=64 time=0.379 ms

64 bytes from 172.17.0.5: icmp_seq=2 ttl=64 time=0.148 ms

64 bytes from 172.17.0.5: icmp_seq=3 ttl=64 time=0.150 ms

64 bytes from 172.17.0.5: icmp_seq=4 ttl=64 time=0.140 ms

64 bytes from 172.17.0.5: icmp_seq=5 ttl=64 time=0.098 ms

64 bytes from 172.17.0.5: icmp_seq=6 ttl=64 time=0.187 ms

^C

--- 172.17.0.5 ping statistics ---

6 packets transmitted, 6 received, 0% packet loss, time 5008ms

rtt min/avg/max/mdev = 0.098/0.183/0.379/0.091 ms

root@e4808050e971:/usr/local/tomcat#

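Likewise, ping tomcat82 from tomcat81

Install the network tools in tomcat81 following the same steps as for tomcat82 (both are Debian here, so the details are not repeated), then ping tomcat82: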

[root@localhost ~]# docker exec -it tomcat81 /bin/bash

root@422f18c88a08:/usr/local/tomcat# cat /etc/*-release

PRETTY_NAME="Debian GNU/Linux 11 (bullseye)"

NAME="Debian GNU/Linux"

VERSION_ID="11"

VERSION="11 (bullseye)"

VERSION_CODENAME=bullseye

ID=debian

HOME_URL="https://www.debian.org/"

SUPPORT_URL="https://www.debian.org/support"

BUG_REPORT_URL="https://bugs.debian.org/"

root@422f18c88a08:/usr/local/tomcat# ping

bash: ping: command not found

root@422f18c88a08:/usr/local/tomcat# apt-get update

Get:1 http://deb.debian.org/debian bullseye InRelease [116 kB]

Get:2 http://deb.debian.org/debian bullseye-updates InRelease [44.1 kB]

Get:3 http://deb.debian.org/debian bullseye/main amd64 Packages [8068 kB]

Get:4 http://security.debian.org/debian-security bullseye-security InRelease [48.4 kB]

Get:5 http://security.debian.org/debian-security bullseye-security/main amd64 Packages [273 kB]

Get:6 http://deb.debian.org/debian bullseye-updates/main amd64 Packages [18.8 kB]

Fetched 8568 kB in 2min 47s (51.3 kB/s)

Reading package lists... Done

root@422f18c88a08:/usr/local/tomcat# apt-get install -y iputils-ping

Reading package lists... 0%

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following additional packages will be installed:

libcap2 libcap2-bin libpam-cap

The following NEW packages will be installed:

iputils-ping libcap2 libcap2-bin libpam-cap

0 upgraded, 4 newly installed, 0 to remove and 74 not upgraded.

Need to get 121 kB of archives.

After this operation, 348 kB of additional disk space will be used.

Get:1 http://deb.debian.org/debian bullseye/main amd64 libcap2 amd64 1:2.44-1 [23.6 kB]

Get:2 http://deb.debian.org/debian bullseye/main amd64 libcap2-bin amd64 1:2.44-1 [32.6 kB]

Get:3 http://deb.debian.org/debian bullseye/main amd64 iputils-ping amd64 3:20210202-1 [49.8 kB]

Get:4 http://deb.debian.org/debian bullseye/main amd64 libpam-cap amd64 1:2.44-1 [15.4 kB]

Fetched 121 kB in 1s (88.6 kB/s)

debconf: delaying package configuration, since apt-utils is not installed

Selecting previously unselected package libcap2:amd64.

(Reading database ... 12672 files and directories currently installed.)

Preparing to unpack .../libcap2_1%3a2.44-1_amd64.deb ...

Unpacking libcap2:amd64 (1:2.44-1) ...

Selecting previously unselected package libcap2-bin.

Preparing to unpack .../libcap2-bin_1%3a2.44-1_amd64.deb ...

Unpacking libcap2-bin (1:2.44-1) ...

Selecting previously unselected package iputils-ping.

Preparing to unpack .../iputils-ping_3%3a20210202-1_amd64.deb ...

Unpacking iputils-ping (3:20210202-1) ...

Selecting previously unselected package libpam-cap:amd64.

Preparing to unpack .../libpam-cap_1%3a2.44-1_amd64.deb ...

Unpacking libpam-cap:amd64 (1:2.44-1) ...

Setting up libcap2:amd64 (1:2.44-1) ...

Setting up libcap2-bin (1:2.44-1) ...

Setting up libpam-cap:amd64 (1:2.44-1) ...

debconf: unable to initialize frontend: Dialog

debconf: (No usable dialog-like program is installed, so the dialog based frontend cannot be used. at /usr/share/perl5/Debconf/FrontEnd/Dialog.pm line 78.)

debconf: falling back to frontend: Readline

Setting up iputils-ping (3:20210202-1) ...

Processing triggers for libc-bin (2.31-13+deb11u2) ...

root@422f18c88a08:/usr/local/tomcat# ping 172.17.0.6

PING 172.17.0.6 (172.17.0.6) 56(84) bytes of data.

64 bytes from 172.17.0.6: icmp_seq=1 ttl=64 time=0.259 ms

64 bytes from 172.17.0.6: icmp_seq=2 ttl=64 time=0.177 ms

64 bytes from 172.17.0.6: icmp_seq=3 ttl=64 time=0.200 ms

64 bytes from 172.17.0.6: icmp_seq=4 ttl=64 time=0.138 ms

64 bytes from 172.17.0.6: icmp_seq=5 ttl=64 time=0.147 ms

^C

--- 172.17.0.6 ping statistics ---

5 packets transmitted, 5 received, 0% packet loss, time 4004ms

rtt min/avg/max/mdev = 0.138/0.184/0.259/0.043 ms

root@422f18c88a08:/usr/local/tomcat#

tomcat82 is reachable.

Pinging between containers by name

From tomcat81, ping tomcat82:

root@422f18c88a08:/usr/local/tomcat# ping tomcat82

ping: tomcat82: Name or service not known

root@422f18c88a08:/usr/local/tomcat#

From tomcat82, ping tomcat81:

root@e4808050e971:/usr/local/tomcat# ping tomcat81

ping: tomcat81: Name or service not known

root@e4808050e971:/usr/local/tomcat#

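Addressing the other container by name fails on the default bridge network: the name cannot be resolved.

Using a user-defined network

Create the user-defined network

First create a user-defined network.

Command: docker network create <network-name>

Then list the networks: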

[root@localhost ~]# docker network create wzy_network

41e3ac96a18104c5c8aca6391f58382c3a7d1c2acd633bd38cbaed2a488fc669

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

8ded4f421d2e bridge bridge local

adf217ba0e04 host host local

97c55498dc28 none null local

41e3ac96a181 wzy_network bridge local

[root@localhost ~]#

wzy_network still uses the bridge driver: its DRIVER column shows bridge.

Attach the containers to the user-defined network

Now put the tomcat81 and tomcat82 containers on the new network.

First stop and remove the earlier Tomcat containers, otherwise docker run fails with: docker: Error response from daemon: Conflict. The container name "/tomcat81" is already in use by container "422f18c88a08939cc2bf1e07dee059db44ef13e05d3081535bb1ad4cf8abb1ba". You have to remove (or rename) that container to be able to reuse that name.

[root@localhost ~]# docker stop tomcat81

tomcat81

[root@localhost ~]# docker rm tomcat81

tomcat81

[root@localhost ~]# docker stop tomcat82

tomcat82

[root@localhost ~]# docker rm tomcat82

tomcat82

Then run them again:

tomcat81

docker run -d -p 8081:8080 --network wzy_network --name tomcat81 tomcat

[root@localhost ~]# docker run -d -p 8081:8080 --network wzy_network --name tomcat81 tomcat

93f18d68060050c3287d8896c20a46a6b243e1dff82e5c548ffa8b9421014b0c

[root@localhost ~]#

tomcat82

docker run -d -p 8082:8080 --network wzy_network --name tomcat82 tomcat

[root@localhost ~]# docker run -d -p 8082:8080 --network wzy_network --name tomcat82 tomcat

4956eb1b5a437cccafb3e3a48e4df9d73d7ce141f1767c85daf26ca86e0c9e54

[root@localhost ~]#

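Because the old containers were removed, the network tools have to be installed once more. A better approach is to build a new image from the Tomcat image with the tool already installed, and run containers from that.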

[root@localhost ~]# docker exec -it tomcat81 /bin/bash

root@93f18d680600:/usr/local/tomcat# apt-get update

# installation output omitted

root@93f18d680600:/usr/local/tomcat# apt-get install -y iputils-ping

# installation output omitted

[root@localhost ~]# docker exec -it tomcat82 /bin/bash

root@4956eb1b5a43:/usr/local/tomcat# apt-get update

# installation output omitted

root@4956eb1b5a43:/usr/local/tomcat# apt-get install -y iputils-ping

# installation output omitted
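Repeating the install in every fresh container is avoidable by baking ping into a derived image. The sketch below writes a two-instruction Dockerfile for that; `write_ping_dockerfile` is a hypothetical helper name, and the image tag in the note after it is a placeholder.

```shell
# Write a Dockerfile that extends the tomcat image with ping preinstalled.
# $1 = an existing directory to write the Dockerfile into.
write_ping_dockerfile() {
  cat > "$1/Dockerfile" <<'EOF'
FROM tomcat
RUN apt-get update \
 && apt-get install -y iputils-ping \
 && rm -rf /var/lib/apt/lists/*
EOF
}
```

After calling it on a build directory, something like `docker build -t tomcat-ping <dir>` would produce the image, and the later `docker run` commands could use `tomcat-ping` in place of `tomcat`. This assumes the base image is Debian-based, as it is in the output shown in this walkthrough.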

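Verification

A user-defined network needs no extra configuration: it maintains the mapping between container names and IP addresses itself, so both the IP and the name work.

Ping tomcat82 by name from tomcat81: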

root@93f18d680600:/usr/local/tomcat# ping tomcat82

PING tomcat82 (172.18.0.3) 56(84) bytes of data.

64 bytes from tomcat82.wzy_network (172.18.0.3): icmp_seq=1 ttl=64 time=0.125 ms

64 bytes from tomcat82.wzy_network (172.18.0.3): icmp_seq=2 ttl=64 time=0.110 ms

64 bytes from tomcat82.wzy_network (172.18.0.3): icmp_seq=3 ttl=64 time=0.093 ms

64 bytes from tomcat82.wzy_network (172.18.0.3): icmp_seq=4 ttl=64 time=0.084 ms

64 bytes from tomcat82.wzy_network (172.18.0.3): icmp_seq=5 ttl=64 time=0.114 ms

64 bytes from tomcat82.wzy_network (172.18.0.3): icmp_seq=6 ttl=64 time=0.119 ms

^C

--- tomcat82 ping statistics ---

6 packets transmitted, 6 received, 0% packet loss, time 5013ms

rtt min/avg/max/mdev = 0.084/0.107/0.125/0.014 ms

root@93f18d680600:/usr/local/tomcat#

Ping tomcat81 by name from tomcat82:

root@4956eb1b5a43:/usr/local/tomcat# ping tomcat81

PING tomcat81 (172.18.0.2) 56(84) bytes of data.

64 bytes from tomcat81.wzy_network (172.18.0.2): icmp_seq=1 ttl=64 time=0.110 ms

64 bytes from tomcat81.wzy_network (172.18.0.2): icmp_seq=2 ttl=64 time=0.576 ms

64 bytes from tomcat81.wzy_network (172.18.0.2): icmp_seq=3 ttl=64 time=0.194 ms

64 bytes from tomcat81.wzy_network (172.18.0.2): icmp_seq=4 ttl=64 time=0.112 ms

64 bytes from tomcat81.wzy_network (172.18.0.2): icmp_seq=5 ttl=64 time=0.128 ms

^C

--- tomcat81 ping statistics ---

5 packets transmitted, 5 received, 0% packet loss, time 4018ms

rtt min/avg/max/mdev = 0.110/0.224/0.576/0.178 ms

root@4956eb1b5a43:/usr/local/tomcat#
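Name resolution on the user-defined network can also be checked without installing ping, by asking the container's resolver directly. This is a sketch that assumes `getent` is present in the image, which is usual for Debian-based images but not guaranteed; `resolves_by_name` is a made-up helper name, and `DOCKER=echo` is this sketch's dry-run convention.

```shell
# DOCKER can be overridden, e.g. DOCKER=echo for a dry run (sketch convention).
DOCKER="${DOCKER:-docker}"

# Look up a peer container's name from inside another container.
# $1 = container to run the lookup in, $2 = peer container name.
resolves_by_name() {
  "$DOCKER" exec "$1" getent hosts "$2"
}
```

Running `resolves_by_name tomcat81 tomcat82` on wzy_network should print tomcat82's address, while the same lookup between containers on the default bridge would be expected to fail, matching the ping results above.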