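I've long wanted to work on 3D object detection, so let's start with Monodle, a CenterNet-based monocular 3D object detector. For training, refer to the official [code]; this post only covers deployment.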

The model and the complete simulation test code are on GitHub; see [model and full code].

1 Model training

Training follows the official code: https://github.com/xinzhuma/monodle

2 Export ONNX

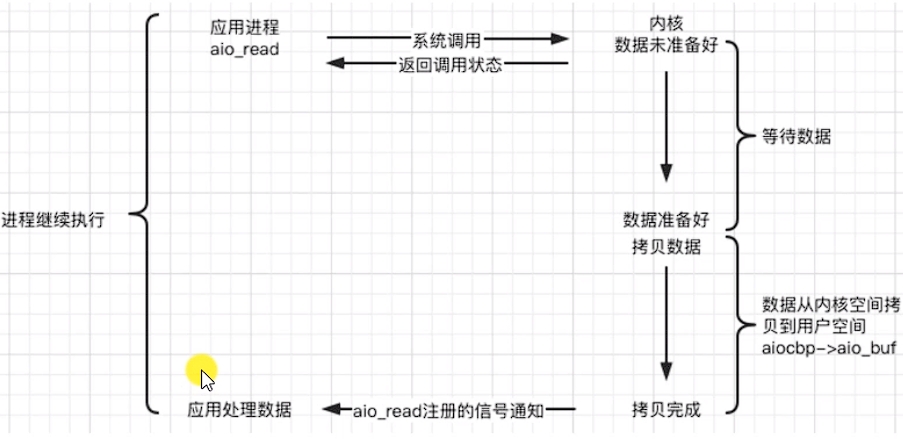

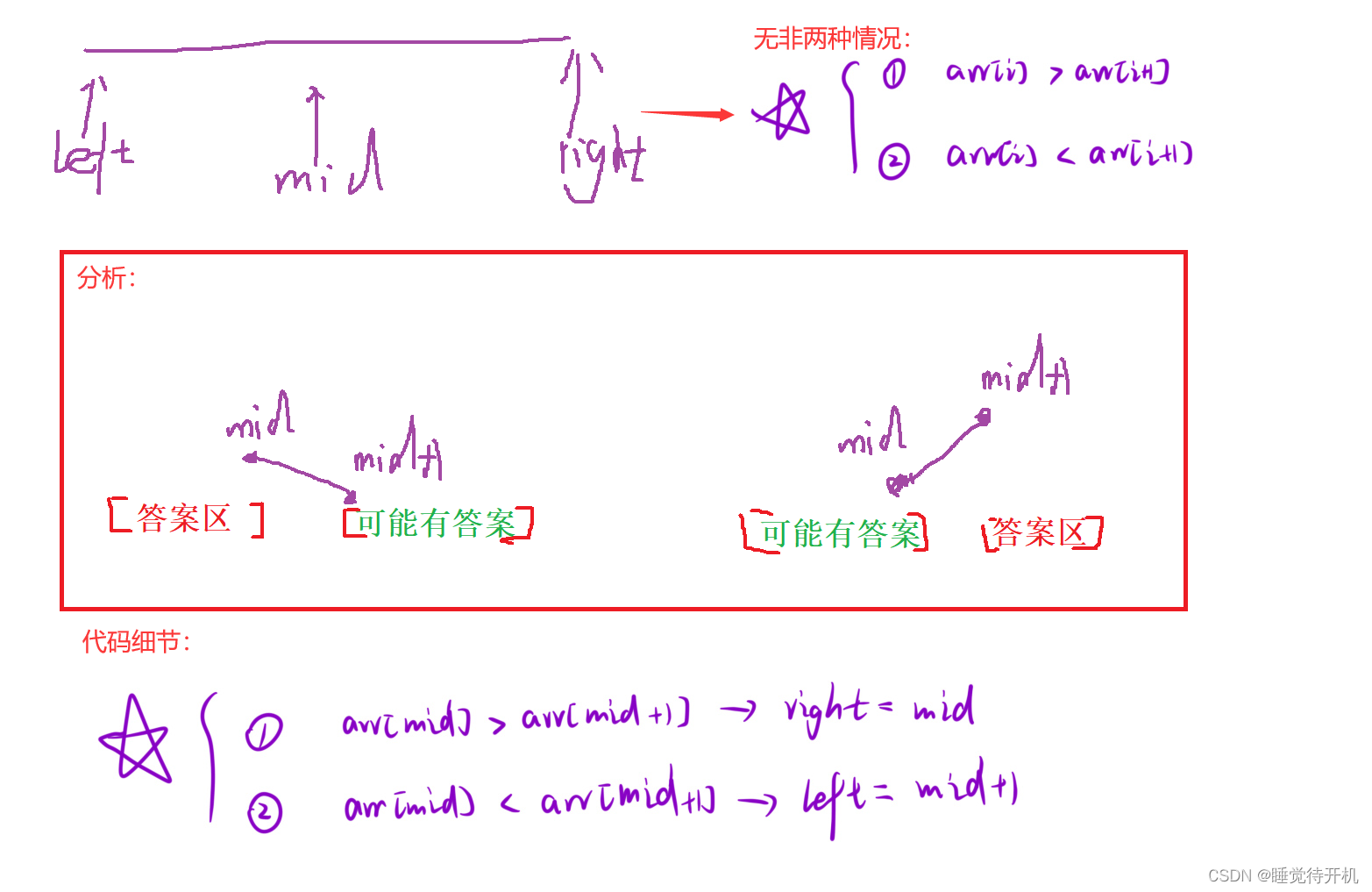

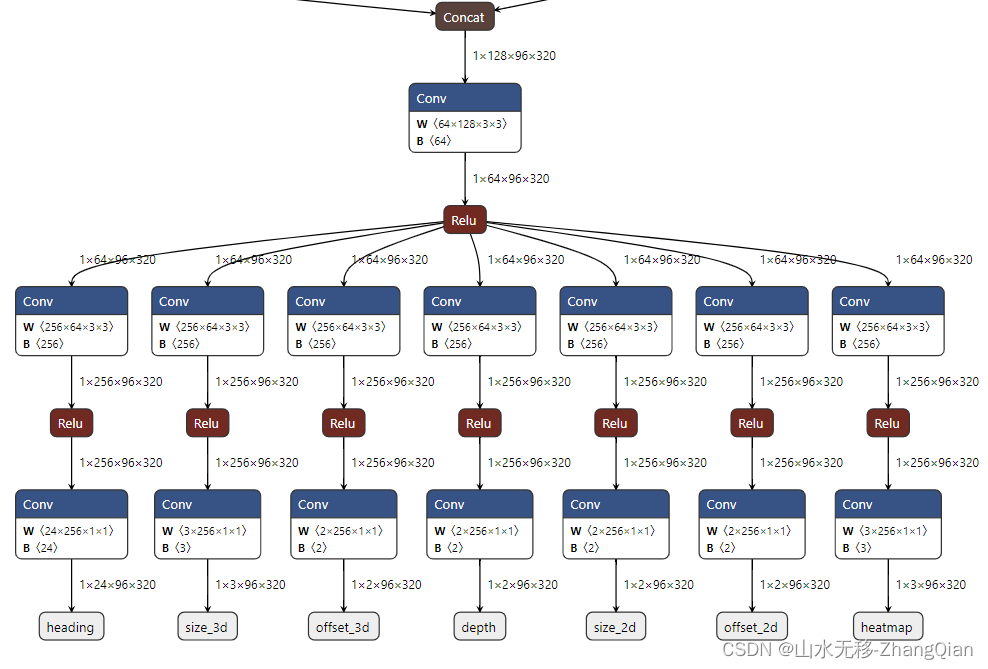

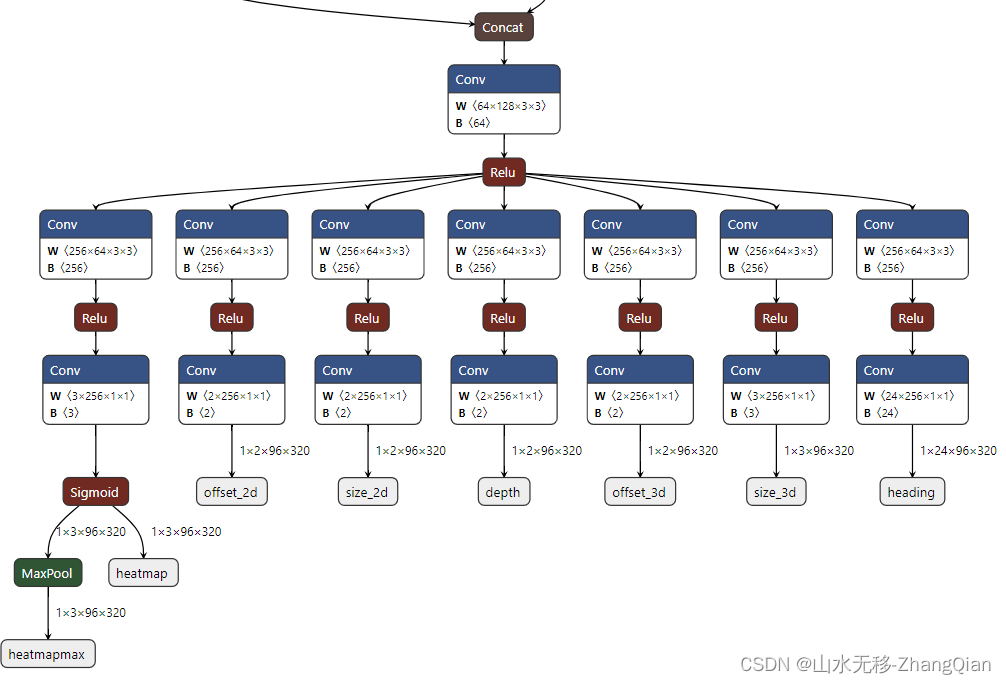

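If you export ONNX with the official code as-is, the post-processing is fairly complicated to write and relatively slow. This post therefore moves part of the post-processing into the model: the heatmap Sigmoid and the 3x3 max-pool used for peak extraction. The ONNX model exported by the original official code is shown in the figure below: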

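The ONNX model exported in this example is shown below. Exporting it this way makes the post-processing code easier to write, and the total time for model plus post-processing is also lower: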

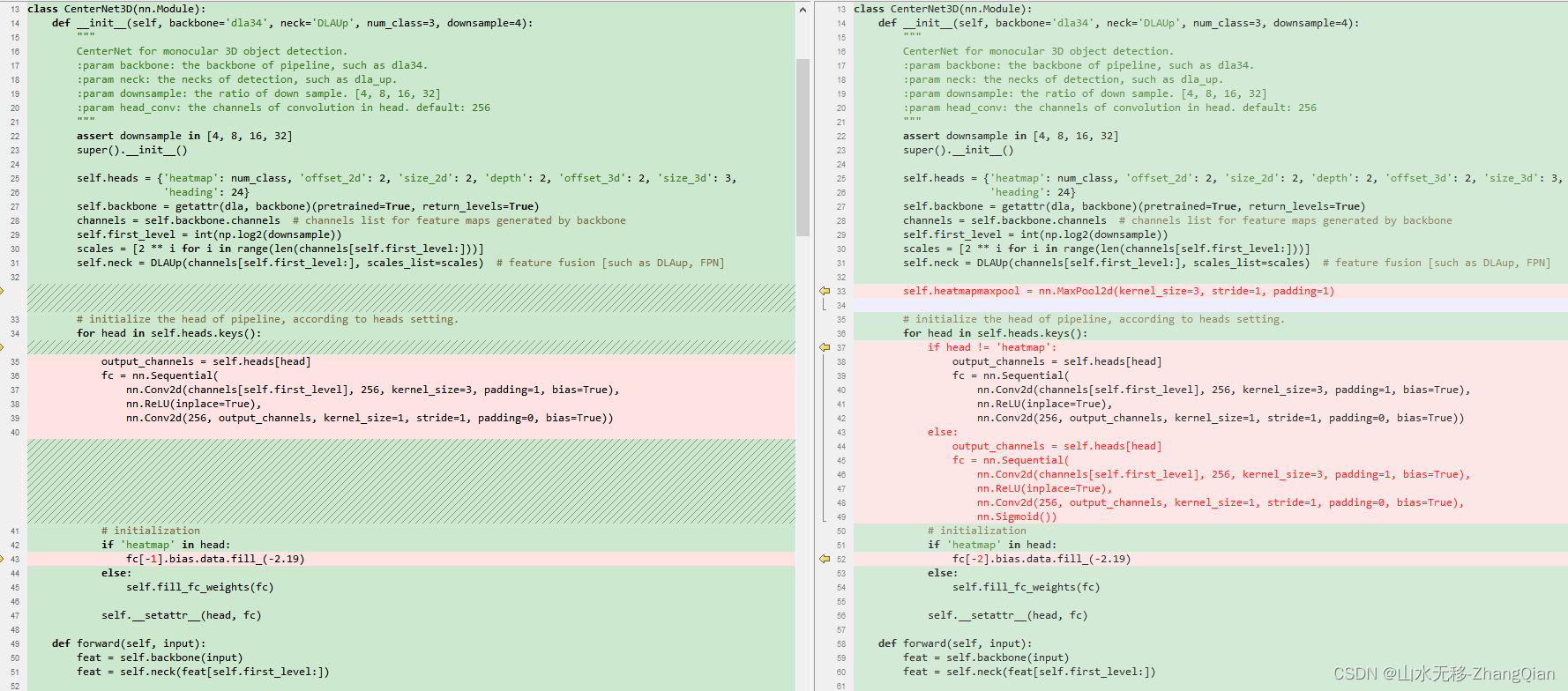

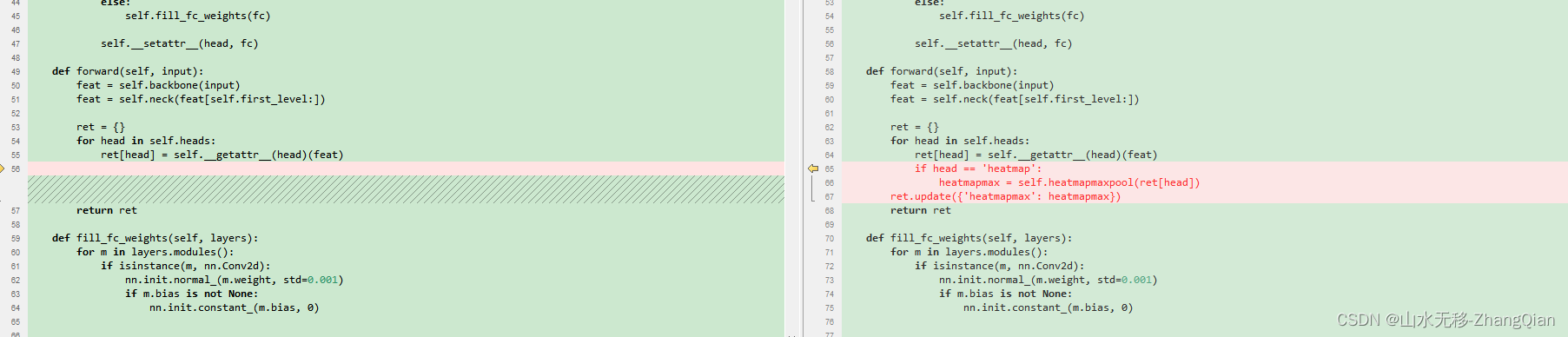
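The exported model already applies the Sigmoid and also emits heatmapmax. Finding object centres on the host side therefore only takes an equality test and a top-K selection.

The sketch below shows one way to do this in NumPy. It assumes the batch dimension has been squeezed, so each output has shape (C, H, W). The names `topk_peaks` and `maxpool3x3` are hypothetical, and so are the defaults `k=50` and `score_thresh=0.2`; none of them come from the author's repository. `maxpool3x3` is only a NumPy stand-in for the in-model `nn.MaxPool2d`, so the logic can be tried without the model.

```python
import numpy as np


def maxpool3x3(x):
    """NumPy stand-in for nn.MaxPool2d(kernel_size=3, stride=1, padding=1) on (C, H, W)."""
    c, h, w = x.shape
    padded = np.full((c, h + 2, w + 2), -np.inf, dtype=np.float64)  # max-pool pads with -inf
    padded[:, 1:-1, 1:-1] = x
    out = np.full((c, h, w), -np.inf)
    for dy in range(3):
        for dx in range(3):
            out = np.maximum(out, padded[:, dy:dy + h, dx:dx + w])
    return out.astype(x.dtype)


def topk_peaks(heatmap, heatmapmax, k=50, score_thresh=0.2):
    """Return up to k (score, class_id, y, x) peaks, highest score first."""
    # A cell is a local maximum when the 3x3 max-pool left its value unchanged;
    # this equality test replaces a separate NMS step on the heatmap.
    scores = np.where(heatmap == heatmapmax, heatmap, 0.0)
    flat = scores.reshape(-1)
    k = min(k, flat.size)
    # argpartition finds the k largest scores in arbitrary order; then sort them.
    idx = np.argpartition(-flat, k - 1)[:k]
    idx = idx[np.argsort(-flat[idx])]

    _, h, w = heatmap.shape
    peaks = []
    for i in idx:
        s = float(flat[i])
        if s < score_thresh:
            break  # scores are sorted, so the rest are lower too
        c, rem = divmod(int(i), h * w)
        y, x = divmod(rem, w)
        peaks.append((s, c, y, x))
    return peaks
```

Each returned (class_id, y, x) indexes the other heads (offset_2d, size_2d, depth, ...) at the same feature-map cell.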

Make a copy of centernet3d.py, name it export_onnx.py, and modify it as follows:

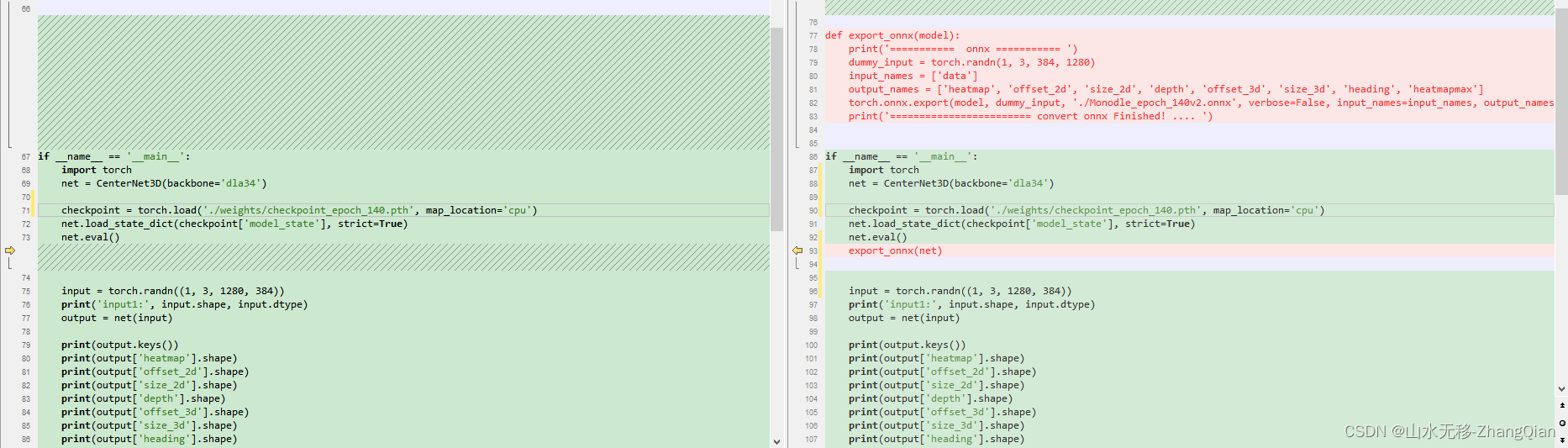

The complete code of export_onnx.py after modification:


```python
import os

import cv2
import torch
import torch.nn as nn
import numpy as np

from lib.backbones import dla
from lib.backbones.dlaup import DLAUp
from lib.backbones.hourglass import get_large_hourglass_net
from lib.backbones.hourglass import load_pretrian_model


class CenterNet3D(nn.Module):
    def __init__(self, backbone='dla34', neck='DLAUp', num_class=3, downsample=4):
        """
        CenterNet for monocular 3D object detection.
        :param backbone: the backbone of pipeline, such as dla34.
        :param neck: the necks of detection, such as dla_up.
        :param num_class: number of classes (channels of the heatmap head).
        :param downsample: the ratio of down sample. [4, 8, 16, 32]
        The head convolutions use a fixed 256 channels.
        """
        assert downsample in [4, 8, 16, 32]
        super().__init__()

        self.heads = {'heatmap': num_class, 'offset_2d': 2, 'size_2d': 2, 'depth': 2, 'offset_3d': 2, 'size_3d': 3,
                      'heading': 24}
        # pretrained=True downloads ImageNet weights; the checkpoint loaded in __main__ overwrites them.
        self.backbone = getattr(dla, backbone)(pretrained=True, return_levels=True)
        channels = self.backbone.channels  # channels list for feature maps generated by backbone
        self.first_level = int(np.log2(downsample))
        scales = [2 ** i for i in range(len(channels[self.first_level:]))]
        self.neck = DLAUp(channels[self.first_level:], scales_list=scales)  # feature fusion [such as DLAup, FPN]

        # Added for export: 3x3 max-pool on the heatmap, so peak extraction in
        # post-processing reduces to comparing heatmap == heatmapmax.
        self.heatmapmaxpool = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)

        # initialize the head of pipeline, according to heads setting.
        for head in self.heads.keys():
            output_channels = self.heads[head]
            if head != 'heatmap':
                fc = nn.Sequential(
                    nn.Conv2d(channels[self.first_level], 256, kernel_size=3, padding=1, bias=True),
                    nn.ReLU(inplace=True),
                    nn.Conv2d(256, output_channels, kernel_size=1, stride=1, padding=0, bias=True))
            else:
                # Added for export: Sigmoid moved into the model. It has no weights,
                # so the official checkpoint still loads with strict=True.
                fc = nn.Sequential(
                    nn.Conv2d(channels[self.first_level], 256, kernel_size=3, padding=1, bias=True),
                    nn.ReLU(inplace=True),
                    nn.Conv2d(256, output_channels, kernel_size=1, stride=1, padding=0, bias=True),
                    nn.Sigmoid())

            # initialization
            if 'heatmap' in head:
                fc[-2].bias.data.fill_(-2.19)  # fc[-1] is the Sigmoid, so the last conv is fc[-2]
            else:
                self.fill_fc_weights(fc)

            self.__setattr__(head, fc)

    def forward(self, input):
        feat = self.backbone(input)
        feat = self.neck(feat[self.first_level:])

        ret = {}
        for head in self.heads:
            ret[head] = self.__getattr__(head)(feat)
        # Added after the loop so that 'heatmapmax' is the last dict entry:
        # torch.onnx.export flattens dict outputs in insertion order and assigns
        # output_names by position, so this order must match export_onnx().
        ret['heatmapmax'] = self.heatmapmaxpool(ret['heatmap'])
        return ret

    def fill_fc_weights(self, layers):
        for m in layers.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.normal_(m.weight, std=0.001)
                if m.bias is not None:
                    nn.init.constant_(m.bias, 0)


def export_onnx(model):
    print('=========== onnx =========== ')
    dummy_input = torch.randn(1, 3, 384, 1280)  # (N, C, H, W): 384 x 1280 input
    input_names = ['data']
    output_names = ['heatmap', 'offset_2d', 'size_2d', 'depth', 'offset_3d', 'size_3d', 'heading', 'heatmapmax']
    torch.onnx.export(model, dummy_input, './Monodle_epoch_140.onnx', verbose=False, input_names=input_names,
                      output_names=output_names, opset_version=11)
    print('======================== convert onnx Finished! .... ')


if __name__ == '__main__':
    print('This is main ...')
    CLASSES = ['Pedestrian', 'Car', 'Cyclist']
    net = CenterNet3D(backbone='dla34')
    checkpoint = torch.load('./weights/checkpoint_epoch_140.pth', map_location='cpu')
    net.load_state_dict(checkpoint['model_state'], strict=True)
    net.eval()

    export_onnx(net)

    # Quick PyTorch check with the same (N, C, H, W) layout as the exported model.
    x = torch.randn((1, 3, 384, 1280))
    print('input1:', x.shape, x.dtype)
    with torch.no_grad():
        output = net(x)
    print(output.keys())
    for name in ['heatmap', 'offset_2d', 'size_2d', 'depth', 'offset_3d', 'size_3d', 'heading', 'heatmapmax']:
        print(name, output[name].shape)
```

Run python export_onnx.py to generate the .onnx file (./Monodle_epoch_140.onnx).
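Before writing the post-processing, it helps to confirm that the exported file has the expected outputs. With the 384 x 1280 dummy input and downsample=4, every head should come out at 96 x 320.

The sketch below computes the expected shapes and can optionally compare them against onnxruntime. `expected_shapes` and `check_onnx` are hypothetical helpers. onnxruntime is only needed if you call `check_onnx`. The multiple-of-32 check is my assumption, based on the DLA34 backbone downsampling to 1/32.

Outputs are compared by name against their channel counts. Because of this, names assigned to the wrong outputs would usually show up as a mismatch too.

```python
# Output channels per head, in the same order as output_names in export_onnx().
HEADS = {'heatmap': 3, 'offset_2d': 2, 'size_2d': 2, 'depth': 2,
         'offset_3d': 2, 'size_3d': 3, 'heading': 24, 'heatmapmax': 3}


def expected_shapes(height=384, width=1280, downsample=4, heads=HEADS):
    """Shape each output should have for a (1, 3, height, width) input."""
    # Assumption: DLA34 downsamples to 1/32, so H and W should be multiples of 32.
    assert height % 32 == 0 and width % 32 == 0
    h, w = height // downsample, width // downsample
    return {name: (1, ch, h, w) for name, ch in heads.items()}


def check_onnx(path, height=384, width=1280):
    """Run the exported model once with onnxruntime and compare against expected_shapes()."""
    import numpy as np
    import onnxruntime as ort  # only needed for this optional check

    sess = ort.InferenceSession(path, providers=['CPUExecutionProvider'])
    x = np.random.randn(1, 3, height, width).astype(np.float32)
    names = [o.name for o in sess.get_outputs()]
    outputs = sess.run(names, {'data': x})
    want = expected_shapes(height, width)
    ok = True
    for name, arr in zip(names, outputs):
        match = want.get(name) == arr.shape
        ok &= match
        print(f'{name:12s} {arr.shape} {"OK" if match else "MISMATCH"}')
    return ok
```

For example, check_onnx('./Monodle_epoch_140.onnx') prints one line per output and returns True when every name and shape matches.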

3 Test results

Official PyTorch test results

ONNX test results
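Apart from the heatmap, the exported heads still need a few lines of host-side decoding. The sketch below follows my understanding of how the official monodle code decodes them:

- the 2-channel depth head gives a sigmoid-transformed depth plus an uncertainty term;
- heading uses 12 bins with residuals;
- size_3d is a residual on a per-class mean size.

Treat this as an assumption to check against the official repository. The following are also assumptions:

- `mean_size`, a (num_class, 3) array of mean (h, w, l);
- the KITTI 3x4 projection matrix `P2`;
- the simplification that the network input is the original image, so one feature cell equals `downsample` pixels with no resize or affine transform to undo.

All function names here are hypothetical.

```python
import numpy as np

NUM_HEADING_BIN = 12  # 'heading' has 24 channels: 12 bin scores + 12 residuals


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def decode_depth(depth_raw, uncertainty):
    """2-channel depth head: a transformed depth and an uncertainty channel."""
    depth = 1.0 / (sigmoid(depth_raw) + 1e-6) - 1.0
    confidence = np.exp(-uncertainty)  # multiplied into the detection score
    return depth, confidence


def decode_heading(heading24):
    """Pick the best bin and add its residual to get the observation angle alpha."""
    bins, residuals = heading24[:NUM_HEADING_BIN], heading24[NUM_HEADING_BIN:]
    cls = int(np.argmax(bins))
    alpha = cls * (2 * np.pi / NUM_HEADING_BIN) + residuals[cls]
    if alpha > np.pi:
        alpha -= 2 * np.pi
    return alpha


def alpha_to_ry(alpha, u, P2):
    """Observation angle -> rotation_y, using the image column u of the object."""
    ry = alpha + np.arctan2(u - P2[0, 2], P2[0, 0])
    if ry > np.pi:
        ry -= 2 * np.pi
    if ry < -np.pi:
        ry += 2 * np.pi
    return ry


def img_to_rect(u, v, depth, P2):
    """Back-project pixel (u, v) at the given depth into camera coordinates."""
    fu, fv, cu, cv = P2[0, 0], P2[1, 1], P2[0, 2], P2[1, 2]
    tx, ty = -P2[0, 3] / fu, -P2[1, 3] / fv
    return np.array([(u - cu) * depth / fu + tx, (v - cv) * depth / fv + ty, depth])


def decode_object(outputs, cls_id, y, x, score, P2, mean_size, downsample=4):
    """Decode one heatmap peak at feature cell (y, x); outputs holds (C, H, W) arrays."""
    depth, conf = decode_depth(outputs['depth'][0, y, x], outputs['depth'][1, y, x])
    u2d = (x + outputs['offset_2d'][0, y, x]) * downsample  # 2D box centre column
    u3d = (x + outputs['offset_3d'][0, y, x]) * downsample  # projected 3D centre
    v3d = (y + outputs['offset_3d'][1, y, x]) * downsample
    dims = outputs['size_3d'][:, y, x] + mean_size[cls_id]  # (h, w, l)
    loc = img_to_rect(u3d, v3d, depth, P2)
    loc[1] += dims[0] / 2  # 3D centre -> KITTI bottom-centre location (y points down)
    alpha = decode_heading(outputs['heading'][:, y, x])
    return {'cls_id': cls_id, 'alpha': alpha, 'dims': dims, 'loc': loc,
            'ry': alpha_to_ry(alpha, u2d, P2), 'score': score * conf}
```

Here score is the heatmap value at the peak, that is, a cell where heatmap equals heatmapmax.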

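Note: the official code computes the 2D boxes from the 3D boxes, whereas this post decodes the 2D boxes predicted directly by the model, so the 2D boxes differ somewhat.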
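The two approaches differ as follows:

- Decoding the 2D box directly uses only offset_2d and size_2d.
- Deriving it from the 3D box means projecting the eight box corners with the camera matrix and taking their bounding rectangle.

The two helpers below sketch both approaches for illustration; they are not the official implementation. The names are hypothetical, and I assume KITTI conventions: location at the bottom centre of the box, y axis pointing down, dims ordered (h, w, l), and a 3x4 projection matrix P2.

```python
import numpy as np


def box2d_from_heads(x, y, offset_2d, size_2d, downsample=4):
    """2D box decoded directly from the 2D heads at feature cell (y, x)."""
    cx = (x + offset_2d[0]) * downsample
    cy = (y + offset_2d[1]) * downsample
    w, h = size_2d[0] * downsample, size_2d[1] * downsample
    return np.array([cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2])


def box2d_from_3d(dims, loc, ry, P2):
    """2D box as the bounding rectangle of the projected 3D box corners."""
    h, w, l = dims
    # Corners in the object frame, with the origin at the bottom centre of the box.
    xs = np.array([1, 1, -1, -1, 1, 1, -1, -1]) * l / 2
    ys = np.array([0, 0, 0, 0, -1, -1, -1, -1]) * h
    zs = np.array([1, -1, -1, 1, 1, -1, -1, 1]) * w / 2
    c, s = np.cos(ry), np.sin(ry)
    rot = np.array([[c, 0, s], [0, 1, 0], [-s, 0, c]])  # rotation about the camera y axis
    corners = rot @ np.vstack([xs, ys, zs]) + np.asarray(loc, dtype=float).reshape(3, 1)
    pts = P2 @ np.vstack([corners, np.ones(8)])  # homogeneous image coordinates
    u, v = pts[0] / pts[2], pts[1] / pts[2]
    return np.array([u.min(), v.min(), u.max(), v.max()])
```

The projected rectangle encloses all eight corners, so it is usually looser than a directly regressed 2D box. This is one reason the 2D boxes in the two result images can differ.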

4 Complete conversion and test code for ONNX, RKNN, Horizon and TensorRT

For the model, the complete simulation test code and the test images, see [model and full code].