必做题:

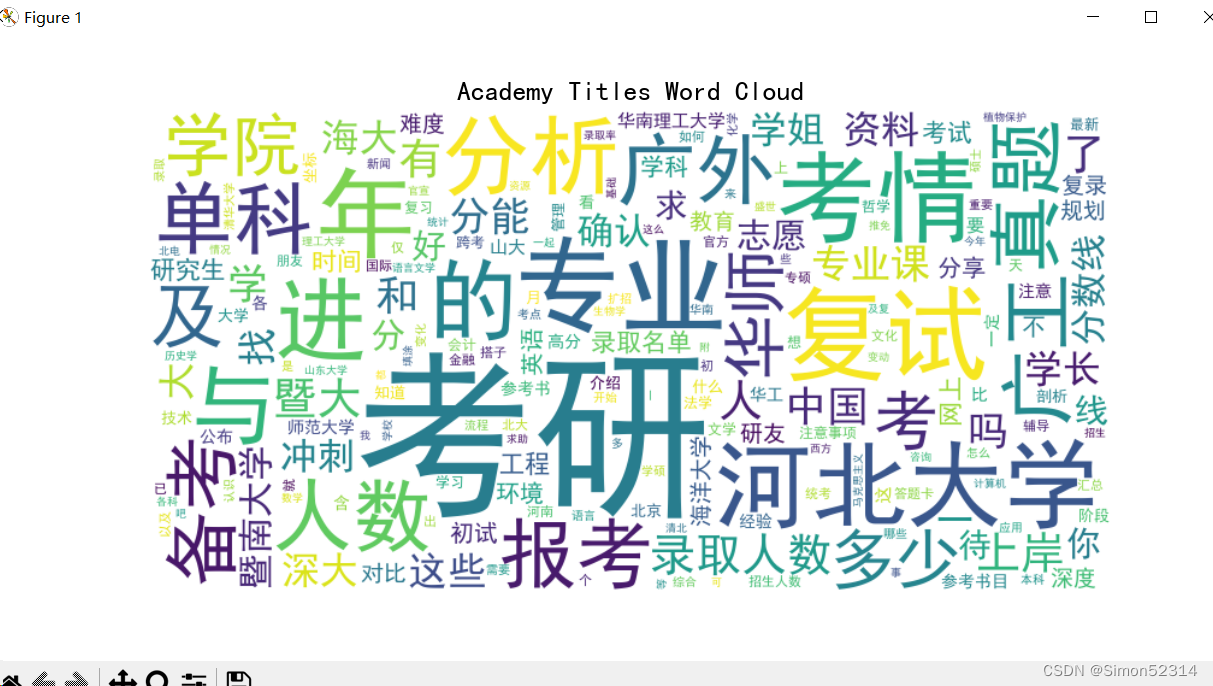

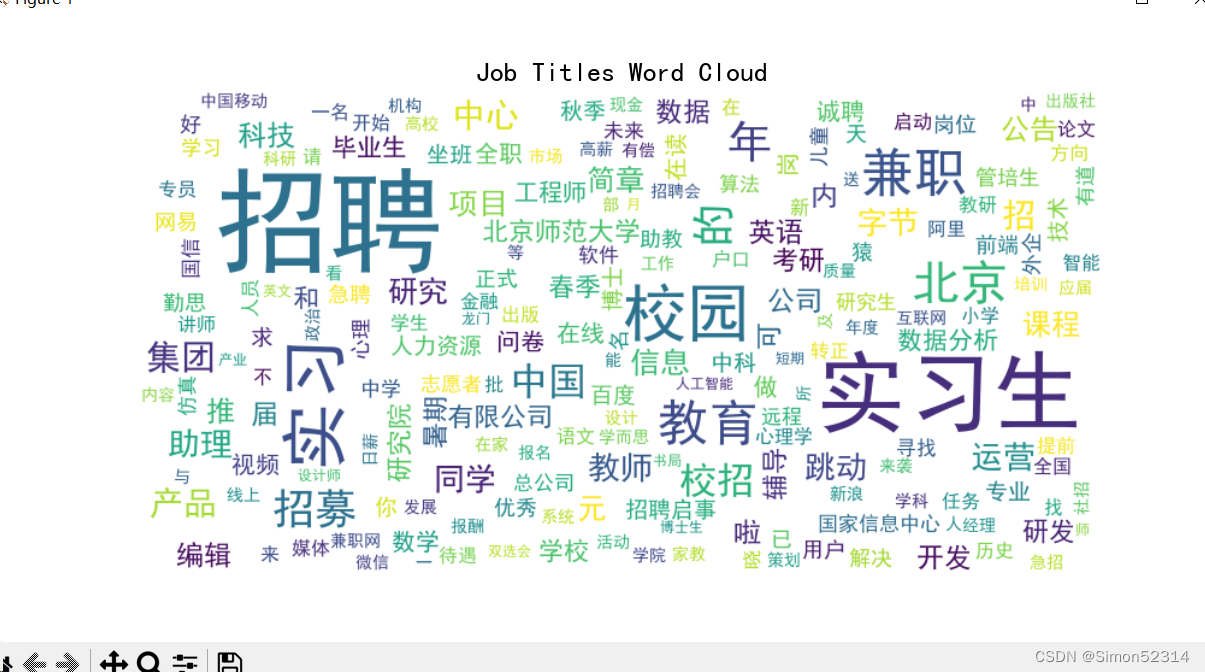

- 数据准备:academy_titles.txt为“考硕考博”板块的帖子标题,job_titles.txt为“招聘信息”板块的帖子标题,

- 使用jieba工具对academy_titles.txt进行分词,接着去除停用词,然后统计词频,最后绘制词云。同样的,也绘制job_titles.txt的词云。

- 将jieba替换为pkuseg工具,分别绘制academy_titles.txt和job_titles.txt的词云。要给出每一部分的代码。

效果图

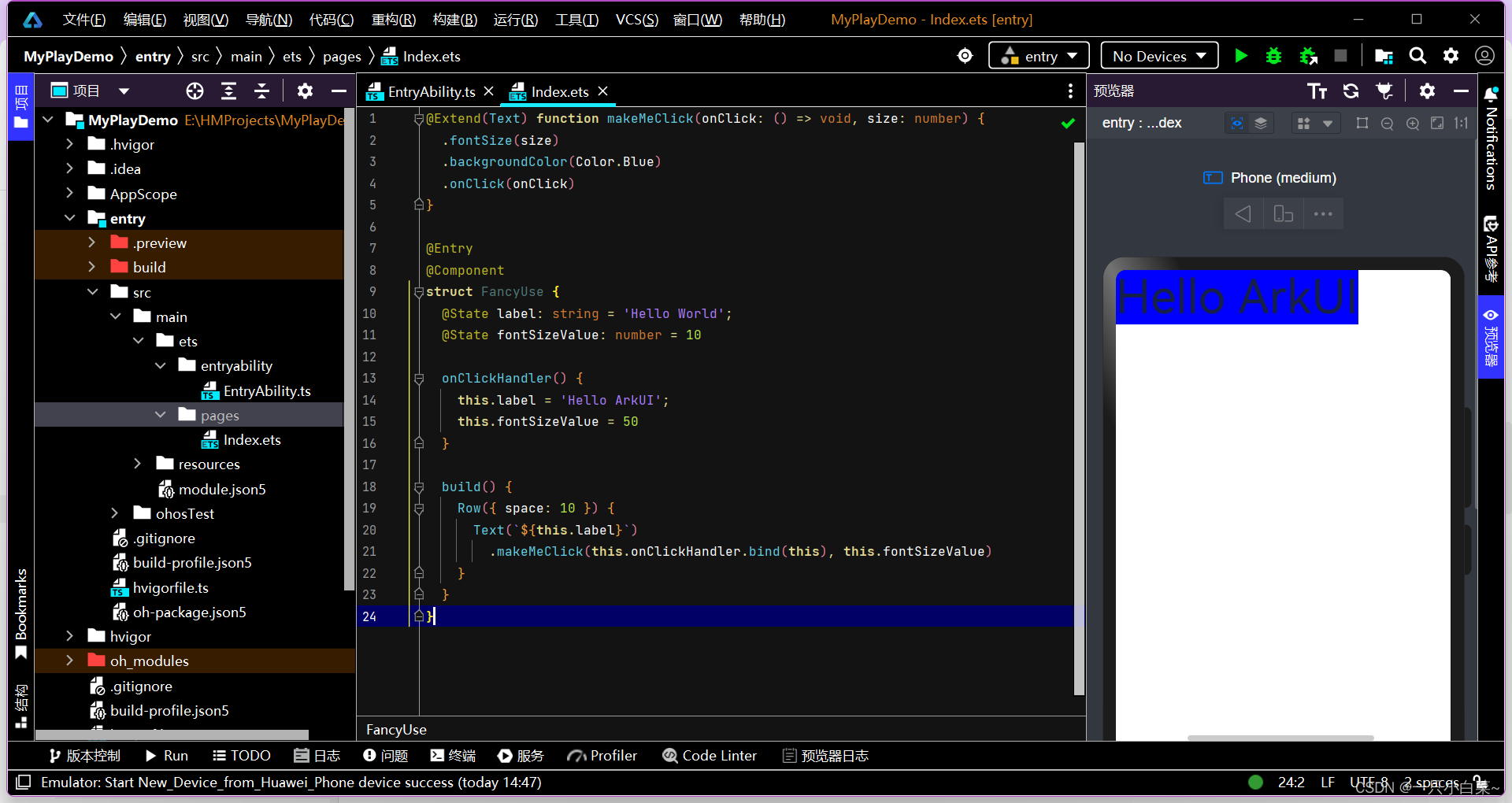

代码

import jieba import re from wordcloud import WordCloud from collections import Counter import matplotlib.pyplot as plt # 读取academy_titles文件内容 with open('C:\\Users\\hp\\Desktop\\实验3\\academy_titles.txt', 'r', encoding='utf-8') as file: academy_titles = file.readlines() # 读取job_titles文件内容 with open('C:\\Users\\hp\\Desktop\\实验3\\job_titles.txt', 'r', encoding='utf-8') as file: job_titles = file.readlines() # 将招聘信息与学术信息分开 academy_titles = [title.strip() for title in academy_titles] job_titles = [title.strip() for title in job_titles] # 分词、去除停用词、统计词频(对academy_titles) academy_words = [] for title in academy_titles: words = jieba.cut(title) filtered_words = [word for word in words if re.match(r'^[\u4e00-\u9fa5]+$', word)] academy_words.extend(filtered_words)请自行补全代码,或者这周五晚上更新完整代码

![[STM32] Keil 创建 HAL 库的工程模板](https://img-blog.csdnimg.cn/img_convert/c8064cca80503da147220eacbfa303ba.png#pic_center)