文章目录

- 1.神经网络迭代概念

- 1)训练误差与泛化误差

- 2)训练集、验证集和测试集划分

- 3)偏差与方差

- 2.正则化方法

- 1)提前终止

- 2)L2正则化

- 3)Dropout

- 3.优化算法

- 1)梯度下降

- 2)Momentum算法

- 3)RMSprop算法

- 4)Adam算法

- 4.PyTorch的初始化函数

- 1)普通初始化

- 2)Xavier 初始化

- 3)He初始化

1.神经网络迭代概念

超参数包括神经网络的层数、每层神经元的个数、学习率以及合适的激活函数。需要多次循环往复地进行“设置超参数》编码》检查实验结果”这一过程,才能设置最合适的超参数。数据样本的划分也很关键。

1)训练误差与泛化误差

机器学习在训练数据集上表现出的误差叫做训练误差,在任意一个测试数据样本上的误差的期望值叫做泛化误差。

2)训练集、验证集和测试集划分

训练集:用来训练模型内参数的数据集

验证集:用于在训练过程中检验模型的状态,收敛情况。验证集通常用于调整超参数,根据几组模型验证集上的表现决定哪组超参数拥有最好的性能。

同时验证集在训练过程中还可以用来监控模型是否发生过拟合,一般来说验证集表现稳定后,若继续训练,训练集表现还会继续上升,但是验证集会出现不升反降的情况,这样一般就发生了过拟合。所以验证集也用来判断何时停止训练

测试集:用来评价模型泛化能力,即之前模型使用验证集确定了超参数,使用训练集调整了参数,最后使用一个从没有见过的数据集来判断这个模型是否Work。

区别:形象上来说训练集就像是学生的课本,学生 根据课本里的内容来掌握知识,验证集就像是作业,通过作业可以知道 不同学生学习情况、进步的速度快慢,而最终的测试集就像是考试,考的题是平常都没有见过,考察学生举一反三的能力。

交叉验证法的作用就是尝试利用不同的训练集/测试集划分来对模型做多组不同的训练/测试,来应对单词测试结果过于片面以及训练数据不足的问题。

3)偏差与方差

偏差欠拟合;方差过拟合。

2.正则化方法

过拟合的解决方式有两种:一是收集更多数据,标注更多标签;二是正则化。

1)提前终止

基本思想:神经网络出现过拟合苗头时,提前终止。

方法:绘制训练和严重准确率及损失曲线;找到最佳次数;修改n_epochs为最佳次数。

另一种方式是记录每一轮的准确率,保存对应的参数。然后加载最佳网络参数。

创建文件mnist_early_stopping.py

添加代码如下:

# -*- coding: utf-8 -*-

"""

MNIST数据集分类示例

提前终止

"""

import torch

import numpy as np

import matplotlib.pyplot as plt

import matplotlib as mpl

import torch.nn as nn

from torchvision import datasets

from torch.utils.data import DataLoader

import torchvision.transforms as transforms

from torch.utils.data.sampler import SubsetRandomSampler

# 防止plt汉字乱码

mpl.rcParams[u'font.sans-serif'] = ['simhei']

mpl.rcParams['axes.unicode_minus'] = False

# 是否使用GPU

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# 超参数

batch_size = 256

n_epochs = 50 # 将原来的训练轮次从50改为14

# n_epochs = 50 # 将原来的训练轮次从50改为14

init_best_acc = 0.975 # 初始最佳验证准确率

checkpoint = "best_mnist_early_stopping.pt"

valid_ratio = 0.2 # 验证集划分比例

def load_mnist_datasets():

""" 加载MNIST数据集 """

# 简单数据转换

transform = transforms.ToTensor()

# 选择训练集和测试集

train_data = datasets.MNIST(root='../datasets/mnist/', train=True, download=True, transform=transform)

test_data = datasets.MNIST(root='../datasets/mnist/', train=False, download=True, transform=transform)

# 训练集和验证集划分

num_train = len(train_data)

indices = list(range(num_train))

np.random.shuffle(indices)

split = int(np.floor(valid_ratio * num_train))

train_idx, valid_idx = indices[split:], indices[:split]

# 定义获取训练及验证批数据的抽样器

train_sampler = SubsetRandomSampler(train_idx)

valid_sampler = SubsetRandomSampler(valid_idx)

# 加载训练集、验证集和测试集

train_loader = DataLoader(train_data, batch_size=batch_size, sampler=train_sampler, num_workers=0)

val_loader = DataLoader(train_data, batch_size=batch_size, sampler=valid_sampler, num_workers=0)

test_loader = DataLoader(test_data, batch_size=batch_size, num_workers=0)

return train_loader, val_loader, test_loader

class MLPModel(nn.Module):

""" 三层简单全连接网络 """

def __init__(self):

super(MLPModel, self).__init__()

self.fc1 = nn.Linear(28 * 28, 128)

self.fc2 = nn.Linear(128, 128)

self.fc3 = nn.Linear(128, 10)

self.relu = nn.ReLU()

def forward(self, x):

x = x.view(-1, 28 * 28)

x = self.relu(self.fc1(x))

x = self.relu(self.fc2(x))

x = self.fc3(x)

return x

def train_model(model, epochs, train_loader, val_loader, optimizer, criterion):

""" 训练模型 """

# 一轮训练的损失

train_loss = 0.

val_loss = 0.

# 多轮训练的损失历史

train_losses_history = []

val_losses_history = []

# 多轮训练的准确率历史

train_acc_history = []

val_acc_history = []

# 当前最佳准确率

best_acc = init_best_acc

for epoch in range(1, epochs + 1):

model.train() # 训练模式

num_correct = 0

num_samples = 0

for batch, (data, target) in enumerate(train_loader, 1):

data, target = data.to(device), target.to(device)

# 梯度清零

optimizer.zero_grad()

# 前向传播

output = model(data)

# 计算损失

loss = criterion(output, target)

# 反向传播

loss.backward()

# 更新参数

optimizer.step()

# 记录损失

train_loss += loss.item() * target.size(0)

# 将概率最高的类别作为预测类

_, predicted = torch.max(output.data, dim=1)

num_samples += target.size(0)

num_correct += (predicted == target).int().sum()

# 训练准确率

train_acc = 1.0 * num_correct / num_samples

train_acc_history.append(train_acc)

# 验证模型

with torch.no_grad():

model.eval() # 验证模式

num_correct = 0

num_samples = 0

for data, target in val_loader:

data, target = data.to(device), target.to(device)

# 前向传播

output = model(data)

# 计算损失

loss = criterion(output, target)

# 记录验证损失

val_loss += loss.item() * target.size(0)

# 将概率最高的类别作为预测类

_, predicted = torch.max(output.data, dim=1)

num_samples += target.size(0)

num_correct += (predicted == target).int().sum()

# 验证准确率

val_acc = 1.0 * num_correct / num_samples

val_acc_history.append(val_acc)

# 计算一轮训练后的损失

train_loss = train_loss / num_samples

val_loss = val_loss / num_samples

train_losses_history.append(train_loss)

val_losses_history.append(val_loss)

# 打印统计信息

epoch_len = len(str(epochs))

print(f'[{epoch:>{epoch_len}}/{epochs:>{epoch_len}}] ' +

f'训练准确率:{train_acc:.3%} ' +

f'验证准确率:{val_acc:.3%} ' +

f'训练损失:{train_loss:.5f} ' +

f'验证损失:{val_loss:.5f}')

# 记录最佳测试准确率

if val_acc > best_acc:

best_acc = val_acc

print("保存模型......")

torch.save(model.state_dict(), checkpoint)

# 为下一轮训练清除统计数据

train_loss = 0

val_loss = 0

# 加载最佳模型

model.load_state_dict(torch.load(checkpoint))

return model, train_acc_history, val_acc_history, train_losses_history, val_losses_history

def plot_metrics_curves(train_acc, val_acc, train_loss, val_loss):

""" 绘制性能曲线 """

# 训练和验证准确率

plt.figure(figsize=(10, 8))

plt.plot(range(1, len(train_acc) + 1), train_acc, label=u'训练准确率')

plt.plot(range(1, len(val_acc) + 1), val_acc, label=u'验证准确率')

max_position = np.argmin(val_acc)+1

# max_position = val_acc.index(max(val_acc)) + 1

plt.axvline(max_position, linestyle='--', color='r', label=u'提前终止检查点')

plt.xlabel(u'轮次')

plt.ylabel(u'准确率')

plt.ylim(0, 1.2)

plt.xlim(0, len(train_acc) + 1)

plt.grid(True)

plt.title(u'训练和验证准确率')

plt.legend()

plt.tight_layout()

plt.show()

# 训练和验证损失

plt.figure(figsize=(10, 8))

plt.plot(range(1, len(train_loss) + 1), train_loss, label=u'训练损失')

plt.plot(range(1, len(val_loss) + 1), val_loss, label=u'验证损失')

min_position = np.argmin(val_loss)+1

# min_position = val_loss.index(min(val_loss)) + 1

plt.axvline(min_position, linestyle='--', color='r', label=u'提前终止检查点')

plt.xlabel(u'轮次')

plt.ylabel(u'损失')

plt.ylim(0, 0.5)

plt.xlim(0, len(train_loss) + 1)

plt.grid(True)

plt.title(u'训练和验证损失')

plt.legend()

plt.tight_layout()

plt.show()

def test_model(model, test_loader, criterion):

""" 模型测试 """

test_loss = 0.0

total_correct = 0

total_examples = 0

model.eval() # 评估模式

for data, target in test_loader:

data, target = data.to(device), target.to(device)

output = model(data)

loss = criterion(output, target)

test_loss += loss.item() * data.size(0)

_, pred = torch.max(output, dim=1)

correct = np.squeeze(pred.eq(target.data.view_as(pred)))

total_correct += correct.sum()

total_examples += target.size(0)

test_loss = test_loss / len(test_loader.dataset)

print('测试损失:{:.6f}\n'.format(test_loss))

total_acc = 1.0 * total_correct / total_examples

print(f'\n总体测试准确率: {total_acc:.3%}({total_correct}/{total_examples})')

def main():

""" 主函数 """

# 实例化MLP模型

model = MLPModel().to(device)

print(model)

# 交叉熵损失函数

criterion = nn.CrossEntropyLoss()

# 优化器

optimizer = torch.optim.Adam(model.parameters())

# 加载数据集

train_loader, val_loader, test_loader = load_mnist_datasets()

# 模型训练

model, train_acc, val_acc, train_loss, val_loss = \

train_model(model, n_epochs, train_loader, val_loader, optimizer, criterion)

# 绘制性能曲线

# train_acc2 = train_acc.to(device='cpu')

# val_acc2 = val_acc.to(device='cpu')

# train_loss2 = train_loss.to(device='cpu')

# val_loss2 = val_loss.to(device='cpu')

# plot_metrics_curves(train_acc2, val_acc2, train_loss2, val_loss2)

train_acc2 = torch.tensor(train_acc).detach().cpu().clone().numpy()

val_acc2 = torch.tensor(val_acc).cpu().clone().numpy()

train_loss2 = torch.tensor(train_loss).cpu().clone().numpy()

val_loss2 = torch.tensor(val_loss).cpu().clone().numpy()

plot_metrics_curves(train_acc2, val_acc2, train_loss2, val_loss2)

# plot_metrics_curves(train_acc, val_acc, train_loss, val_loss)

# 模型测试

test_model(model, test_loader, criterion)

if __name__ == '__main__':

main()

运行结果:

总体测试准确率: 97.630%(9763/10000)

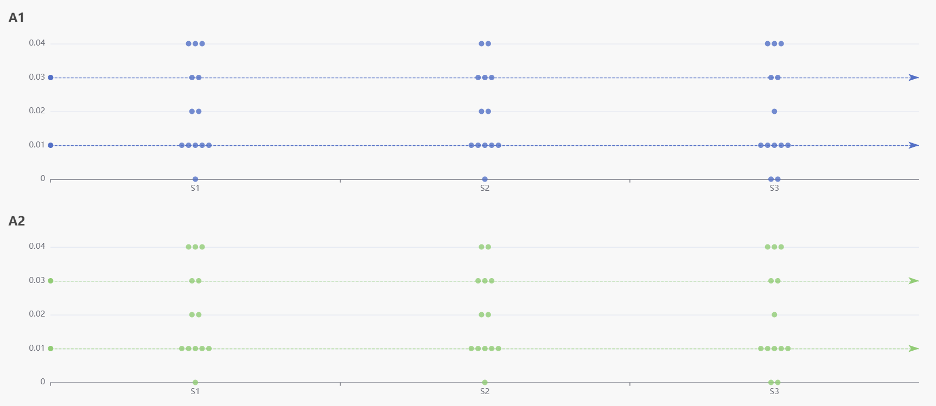

显示Mnist训练和验证准确率及损失曲线

验证 损失最小值处就是提前终止检查点。

2)L2正则化

通过对权重参数施加惩罚达到。它是一个减少方差的策略,也就是减少高方差。

误差可分解为:偏差,方差与噪声之和。即误差 = 偏差 + 方差 + 噪声

偏差度量了学习算法的期望预测与真实结果的偏离程度, 即刻画了学习算法本身的拟合能力

方差度量了同样大小的训练集的变动所导致的学习性能的变化,即刻画了数据扰动所造成的影响

噪声则表达了在当前任务上任何学习算法所能达到的期望泛化误差的下界。

L1 正则化的特点:

- 不容易计算, 在零点连续但不可导, 需要分段求导

- L1 模型可以将 一些权值缩小到零(稀疏)

- 执行隐式变量选择。这意味着一些变量值对结果的影响降为 0, 就像删除它们一样

- 其中一些预测因子对应较大的权值, 而其余的(几乎归零)

- 由于它可以提供稀疏的解决方案, 因此通常是建模特征数量巨大时的首选模型

- 它任意选择高度相关特征中的任何一个,并将其余特征对应的系数减少到 0**

- L1 范数对于异常值更具提抗力

L2 正则化的特点:

- 容易计算, 可导, 适合基于梯度的方法

- 将一些权值缩小到接近 0

- 相关的预测特征对应的系数值相似

- 当特征数量巨大时, 计算量会比较大

- 对于有相关特征存在的情况,它会包含所有这些相关的特征, 但是相关特征的权值分布取决于相关性。

- 对异常值非常敏感

- 相对于 L1 正则会更加准确

创建文件mnist_regularization.py

添加代码如下:

# -*- coding: utf-8 -*-

"""

MNIST数据集分类示例

L2正则化

"""

import torch

import numpy as np

import matplotlib.pyplot as plt

import matplotlib as mpl

import torch.nn as nn

from torchvision import datasets

from torch.utils.data import DataLoader

import torchvision.transforms as transforms

from torch.utils.data.sampler import SubsetRandomSampler

# 防止plt汉字乱码

mpl.rcParams[u'font.sans-serif'] = ['simhei']

mpl.rcParams['axes.unicode_minus'] = False

# 是否使用GPU

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# 超参数

batch_size = 256

n_epochs = 50

init_best_acc = 0.975 # 初始最佳验证准确率

checkpoint = "best_mnist_early_stopping.pt"

valid_ratio = 0.2 # 验证集划分比例

def load_mnist_datasets():

""" 加载MNIST数据集 """

# 简单数据转换

transform = transforms.ToTensor()

# 选择训练集和测试集

train_data = datasets.MNIST(root='../datasets/mnist/', train=True, download=True, transform=transform)

test_data = datasets.MNIST(root='../datasets/mnist/', train=False, download=True, transform=transform)

# 训练集和验证集划分

num_train = len(train_data)

indices = list(range(num_train))

np.random.shuffle(indices)

split = int(np.floor(valid_ratio * num_train))

train_idx, valid_idx = indices[split:], indices[:split]

# 定义获取训练及验证批数据的抽样器

train_sampler = SubsetRandomSampler(train_idx)

valid_sampler = SubsetRandomSampler(valid_idx)

# 加载训练集、验证集和测试集

train_loader = DataLoader(train_data, batch_size=batch_size, sampler=train_sampler, num_workers=0)

val_loader = DataLoader(train_data, batch_size=batch_size, sampler=valid_sampler, num_workers=0)

test_loader = DataLoader(test_data, batch_size=batch_size, num_workers=0)

return train_loader, val_loader, test_loader

class MLPModel(nn.Module):

""" 三层简单全连接网络 """

def __init__(self):

super(MLPModel, self).__init__()

self.fc1 = nn.Linear(28 * 28, 128)

self.fc2 = nn.Linear(128, 128)

self.fc3 = nn.Linear(128, 10)

self.relu = nn.ReLU()

def forward(self, x):

x = x.view(-1, 28 * 28)

x = self.relu(self.fc1(x))

x = self.relu(self.fc2(x))

x = self.fc3(x)

return x

def train_model(model, epochs, train_loader, val_loader, optimizer, criterion):

""" 训练模型 """

# 一轮训练的损失

train_loss = 0.

val_loss = 0.

# 多轮训练的损失历史

train_losses_history = []

val_losses_history = []

# 多轮训练的准确率历史

train_acc_history = []

val_acc_history = []

# 当前最佳准确率

best_acc = init_best_acc

for epoch in range(1, epochs + 1):

model.train() # 训练模式

num_correct = 0

num_samples = 0

for batch, (data, target) in enumerate(train_loader, 1):

data, target = data.to(device), target.to(device)

# 梯度清零

optimizer.zero_grad()

# 前向传播

output = model(data)

# 计算损失

loss = criterion(output, target)

# 反向传播

loss.backward()

# 更新参数

optimizer.step()

# 记录损失

train_loss += loss.item() * target.size(0)

# 将概率最高的类别作为预测类

_, predicted = torch.max(output.data, dim=1)

num_samples += target.size(0)

num_correct += (predicted == target).int().sum()

# 训练准确率

train_acc = 1.0 * num_correct / num_samples

train_acc_history.append(train_acc)

# 验证模型

with torch.no_grad():

model.eval() # 验证模式

num_correct = 0

num_samples = 0

for data, target in val_loader:

data, target = data.to(device), target.to(device)

# 前向传播

output = model(data)

# 计算损失

loss = criterion(output, target)

# 记录验证损失

val_loss += loss.item() * target.size(0)

# 将概率最高的类别作为预测类

_, predicted = torch.max(output.data, dim=1)

num_samples += target.size(0)

num_correct += (predicted == target).int().sum()

# 验证准确率

val_acc = 1.0 * num_correct / num_samples

val_acc_history.append(val_acc)

# 计算一轮训练后的损失

train_loss = train_loss / num_samples

val_loss = val_loss / num_samples

train_losses_history.append(train_loss)

val_losses_history.append(val_loss)

# 打印统计信息

epoch_len = len(str(epochs))

print(f'[{epoch:>{epoch_len}}/{epochs:>{epoch_len}}] ' +

f'训练准确率:{train_acc:.3%} ' +

f'验证准确率:{val_acc:.3%} ' +

f'训练损失:{train_loss:.5f} ' +

f'验证损失:{val_loss:.5f}')

# 记录最佳测试准确率

if val_acc > best_acc:

best_acc = val_acc

print("保存模型......")

torch.save(model.state_dict(), checkpoint)

# 为下一轮训练清除统计数据

train_loss = 0

val_loss = 0

# 加载最佳模型

model.load_state_dict(torch.load(checkpoint))

return model, train_acc_history, val_acc_history, train_losses_history, val_losses_history

def plot_metrics_curves(train_acc, val_acc, train_loss, val_loss):

""" 绘制性能曲线 """

# 训练和验证准确率

plt.figure(figsize=(10, 8))

plt.plot(range(1, len(train_acc) + 1), train_acc, label=u'训练准确率')

plt.plot(range(1, len(val_acc) + 1), val_acc, label=u'验证准确率')

max_position = val_acc.index(max(val_acc)) + 1

plt.axvline(max_position, linestyle='--', color='r', label=u'提前终止检查点')

plt.xlabel(u'轮次')

plt.ylabel(u'准确率')

plt.ylim(0, 1.2)

plt.xlim(0, len(train_acc) + 1)

plt.grid(True)

plt.title(u'训练和验证准确率')

plt.legend()

plt.tight_layout()

plt.show()

# 训练和验证损失

plt.figure(figsize=(10, 8))

plt.plot(range(1, len(train_loss) + 1), train_loss, label=u'训练损失')

plt.plot(range(1, len(val_loss) + 1), val_loss, label=u'验证损失')

min_position = val_loss.index(min(val_loss)) + 1

plt.axvline(min_position, linestyle='--', color='r', label=u'提前终止检查点')

plt.xlabel(u'轮次')

plt.ylabel(u'损失')

plt.ylim(0, 0.5)

plt.xlim(0, len(train_loss) + 1)

plt.grid(True)

plt.title(u'训练和验证损失')

plt.legend()

plt.tight_layout()

plt.show()

def test_model(model, test_loader, criterion):

""" 模型测试 """

test_loss = 0.0

total_correct = 0

total_examples = 0

model.eval() # 评估模式

for data, target in test_loader:

data, target = data.to(device), target.to(device)

output = model(data)

loss = criterion(output, target)

test_loss += loss.item() * data.size(0)

_, pred = torch.max(output, dim=1)

correct = np.squeeze(pred.eq(target.data.view_as(pred)))

total_correct += correct.sum()

total_examples += target.size(0)

test_loss = test_loss / len(test_loader.dataset)

print('测试损失:{:.6f}\n'.format(test_loss))

total_acc = 1.0 * total_correct / total_examples

print(f'\n总体测试准确率: {total_acc:.3%}({total_correct}/{total_examples})')

def main():

""" 主函数 """

# 实例化MLP模型

model = MLPModel().to(device)

print(model)

# 交叉熵损失函数

criterion = nn.CrossEntropyLoss()

# 优化器

optimizer = torch.optim.Adam(model.parameters(), weight_decay=0.0001)

# 加载数据集

train_loader, val_loader, test_loader = load_mnist_datasets()

# 模型训练

model, train_acc, val_acc, train_loss, val_loss = \

train_model(model, n_epochs, train_loader, val_loader, optimizer, criterion)

# 绘制性能曲线

plot_metrics_curves(train_acc, val_acc, train_loss, val_loss)

# 模型测试

test_model(model, test_loader, criterion)

if __name__ == '__main__':

main()

3)Dropout

在神经网络训练时,随机把一些神经单元去除,“瘦身”后的神经网络继续训练,最后的模型,是保留所有神经单元,但是神经的连接权重w乘上了一个刚才随机去除指数p.

# -*- coding: utf-8 -*-

"""

MNIST数据集分类示例

Dropout

"""

import torch

import numpy as np

import matplotlib.pyplot as plt

import matplotlib as mpl

import torch.nn as nn

from torchvision import datasets

from torch.utils.data import DataLoader

import torchvision.transforms as transforms

from torch.utils.data.sampler import SubsetRandomSampler

# 防止plt汉字乱码

mpl.rcParams[u'font.sans-serif'] = ['simhei']

mpl.rcParams['axes.unicode_minus'] = False

# 是否使用GPU

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# 超参数

batch_size = 256

n_epochs = 50

init_best_acc = 0.975 # 初始最佳验证准确率

checkpoint = "best_mnist_dropout.pt"

valid_ratio = 0.2 # 验证集划分比例

def load_mnist_datasets():

""" 加载MNIST数据集 """

# 简单数据转换

transform = transforms.ToTensor()

# 选择训练集和测试集

train_data = datasets.MNIST(root='../datasets/mnist/', train=True, download=True, transform=transform)

test_data = datasets.MNIST(root='../datasets/mnist/', train=False, download=True, transform=transform)

# 训练集和验证集划分

num_train = len(train_data)

indices = list(range(num_train))

np.random.shuffle(indices)

split = int(np.floor(valid_ratio * num_train))

train_idx, valid_idx = indices[split:], indices[:split]

# 定义获取训练及验证批数据的抽样器

train_sampler = SubsetRandomSampler(train_idx)

valid_sampler = SubsetRandomSampler(valid_idx)

# 加载训练集、验证集和测试集

train_loader = DataLoader(train_data, batch_size=batch_size, sampler=train_sampler, num_workers=0)

val_loader = DataLoader(train_data, batch_size=batch_size, sampler=valid_sampler, num_workers=0)

test_loader = DataLoader(test_data, batch_size=batch_size, num_workers=0)

return train_loader, val_loader, test_loader

class MLPModel(nn.Module):

""" 三层简单全连接网络 """

def __init__(self):

super(MLPModel, self).__init__()

self.fc1 = nn.Linear(28 * 28, 128)

self.fc2 = nn.Linear(128, 128)

self.fc3 = nn.Linear(128, 10)

self.relu = nn.ReLU()

self.dropout = nn.Dropout(0.4) # 随机丢弃的概率

def forward(self, x):

x = x.view(-1, 28 * 28)

x = self.relu(self.fc1(x))

x = self.dropout(x)

x = self.relu(self.fc2(x))

x = self.dropout(x)

x = self.fc3(x)

return x

def train_model(model, epochs, train_loader, val_loader, optimizer, criterion):

""" 训练模型 """

# 一轮训练的损失

train_loss = 0.

val_loss = 0.

# 多轮训练的损失历史

train_losses_history = []

val_losses_history = []

# 多轮训练的准确率历史

train_acc_history = []

val_acc_history = []

# 当前最佳准确率

best_acc = init_best_acc

for epoch in range(1, epochs + 1):

model.train() # 训练模式

num_correct = 0

num_samples = 0

for batch, (data, target) in enumerate(train_loader, 1):

data, target = data.to(device), target.to(device)

# 梯度清零

optimizer.zero_grad()

# 前向传播

output = model(data)

# 计算损失

loss = criterion(output, target)

# 反向传播

loss.backward()

# 更新参数

optimizer.step()

# 记录损失

train_loss += loss.item() * target.size(0)

# 将概率最高的类别作为预测类

_, predicted = torch.max(output.data, dim=1)

num_samples += target.size(0)

num_correct += (predicted == target).int().sum()

# 训练准确率

train_acc = 1.0 * num_correct / num_samples

train_acc_history.append(train_acc)

# 验证模型

with torch.no_grad():

model.eval() # 验证模式

num_correct = 0

num_samples = 0

for data, target in val_loader:

data, target = data.to(device), target.to(device)

# 前向传播

output = model(data)

# 计算损失

loss = criterion(output, target)

# 记录验证损失

val_loss += loss.item() * target.size(0)

# 将概率最高的类别作为预测类

_, predicted = torch.max(output.data, dim=1)

num_samples += target.size(0)

num_correct += (predicted == target).int().sum()

# 验证准确率

val_acc = 1.0 * num_correct / num_samples

val_acc_history.append(val_acc)

# 计算一轮训练后的损失

train_loss = train_loss / num_samples

val_loss = val_loss / num_samples

train_losses_history.append(train_loss)

val_losses_history.append(val_loss)

# 打印统计信息

epoch_len = len(str(epochs))

print(f'[{epoch:>{epoch_len}}/{epochs:>{epoch_len}}] ' +

f'训练准确率:{train_acc:.3%} ' +

f'验证准确率:{val_acc:.3%} ' +

f'训练损失:{train_loss:.5f} ' +

f'验证损失:{val_loss:.5f}')

# 记录最佳测试准确率

if val_acc > best_acc:

best_acc = val_acc

print("保存模型......")

torch.save(model.state_dict(), checkpoint)

# 为下一轮训练清除统计数据

train_loss = 0

val_loss = 0

# 加载最佳模型

model.load_state_dict(torch.load(checkpoint))

return model, train_acc_history, val_acc_history, train_losses_history, val_losses_history

def plot_metrics_curves(train_acc, val_acc, train_loss, val_loss):

""" 绘制性能曲线 """

# 训练和验证准确率

plt.figure(figsize=(10, 8))

plt.plot(range(1, len(train_acc) + 1), train_acc, label=u'训练准确率')

plt.plot(range(1, len(val_acc) + 1), val_acc, label=u'验证准确率')

max_position = val_acc.index(max(val_acc)) + 1

plt.axvline(max_position, linestyle='--', color='r', label=u'提前终止检查点')

plt.xlabel(u'轮次')

plt.ylabel(u'准确率')

plt.ylim(0, 1.2)

plt.xlim(0, len(train_acc) + 1)

plt.grid(True)

plt.title(u'训练和验证准确率')

plt.legend()

plt.tight_layout()

plt.show()

# 训练和验证损失

plt.figure(figsize=(10, 8))

plt.plot(range(1, len(train_loss) + 1), train_loss, label=u'训练损失')

plt.plot(range(1, len(val_loss) + 1), val_loss, label=u'验证损失')

min_position = val_loss.index(min(val_loss)) + 1

plt.axvline(min_position, linestyle='--', color='r', label=u'提前终止检查点')

plt.xlabel(u'轮次')

plt.ylabel(u'损失')

plt.ylim(0, 1.0)

plt.xlim(0, len(train_loss) + 1)

plt.grid(True)

plt.title(u'训练和验证损失')

plt.legend()

plt.tight_layout()

plt.show()

def test_model(model, test_loader, criterion):

""" 模型测试 """

test_loss = 0.0

total_correct = 0

total_examples = 0

model.eval() # 评估模式

for data, target in test_loader:

data, target = data.to(device), target.to(device)

output = model(data)

loss = criterion(output, target)

test_loss += loss.item() * data.size(0)

_, pred = torch.max(output, dim=1)

correct = np.squeeze(pred.eq(target.data.view_as(pred)))

total_correct += correct.sum()

total_examples += target.size(0)

test_loss = test_loss / len(test_loader.dataset)

print('测试损失:{:.6f}\n'.format(test_loss))

total_acc = 1.0 * total_correct / total_examples

print(f'\n总体测试准确率: {total_acc:.3%}({total_correct}/{total_examples})')

def main():

""" 主函数 """

# 实例化MLP模型

model = MLPModel().to(device)

print(model)

# 交叉熵损失函数

criterion = nn.CrossEntropyLoss()

# 优化器

optimizer = torch.optim.Adam(model.parameters())

# 加载数据集

train_loader, val_loader, test_loader = load_mnist_datasets()

# 模型训练

model, train_acc, val_acc, train_loss, val_loss = \

train_model(model, n_epochs, train_loader, val_loader, optimizer, criterion)

# 绘制性能曲线

plot_metrics_curves(train_acc, val_acc, train_loss, val_loss)

# 模型测试

test_model(model, test_loader, criterion)

if __name__ == '__main__':

main()

3.优化算法

寻找最优参数

1)梯度下降

批量梯度下降算法(BGD):计算全部样本平均梯度;

随机梯度下降算法(SGD):计算一个样本;

小批量梯度下降算法(MBGD):

2)Momentum算法

动量是一个能够对抗鞍点和局部最小值的技术。其运算速度较快。

结合当前梯度与上一次更新信息,用于当前更新;

动量,他的作用是尽量保持当前梯度的变化方向。没有动量的网络可以视为一个质量很轻的棉花团,风往哪里吹就往哪里走,一点风吹草动都影响他,四处跳动不容易学习到更好的局部最优。没有动力来源的时候可能又不动了。加了动量就像是棉花变成了铁球,咕噜咕噜的滚在参数空间里,很容易闯过鞍点,直到最低点。可以参照指数滑动平均。优化效果是梯度二阶导数不会过大,优化更稳定,也可以看做效果接近二阶方法,但是计算容易的多。

其实本质应该是对参数加了约束。

创建文件gradient_descent.py,演示梯度下降算法的缺点

添加代码如下:

import numpy as np

from matplotlib import pyplot as plt

# 尝试修改学习率为0.43和0.55,看看效果

learning_rate = 0.43

def f_2d(w, b):

""" 待优化的函数 """

return 0.1 * w ** 2 + 2 * b ** 2

def gd_2d(w, b):

""" 优化一步 """

return w - learning_rate * 0.2 * w, b - learning_rate * 4 * b

def train_2d(optimizer):

""" 使用定制优化器optimizer来训练二维目标函数 """

w, b = -7, 4 # 初始值

history = [(w, b)]

epochs = 20 # 训练轮次

for i in range(epochs):

w, b = optimizer(w, b)

history.append((w, b))

print('经过轮次:%d,最终的w:%f,b:%f' % (epochs, w, b))

return history

def trace_2d(f, hist):

""" 追踪训练二维目标函数的过程 """

w, b = zip(*hist)

plt.rcParams['figure.figsize'] = (5, 3)

plt.plot(w, b, '-o', color='blue')

w = np.arange(-7.5, 7.5, 0.1)

b = np.arange(min(-4.0, min(b) - 1), max(4.0, max(b) + 1), 0.1)

w, b = np.meshgrid(w, b)

plt.contour(w, b, f(w, b), colors='green')

plt.xlabel('w')

plt.ylabel('b')

plt.show()

def main():

history = train_2d(gd_2d)

trace_2d(f_2d, history)

if __name__ == '__main__':

main()

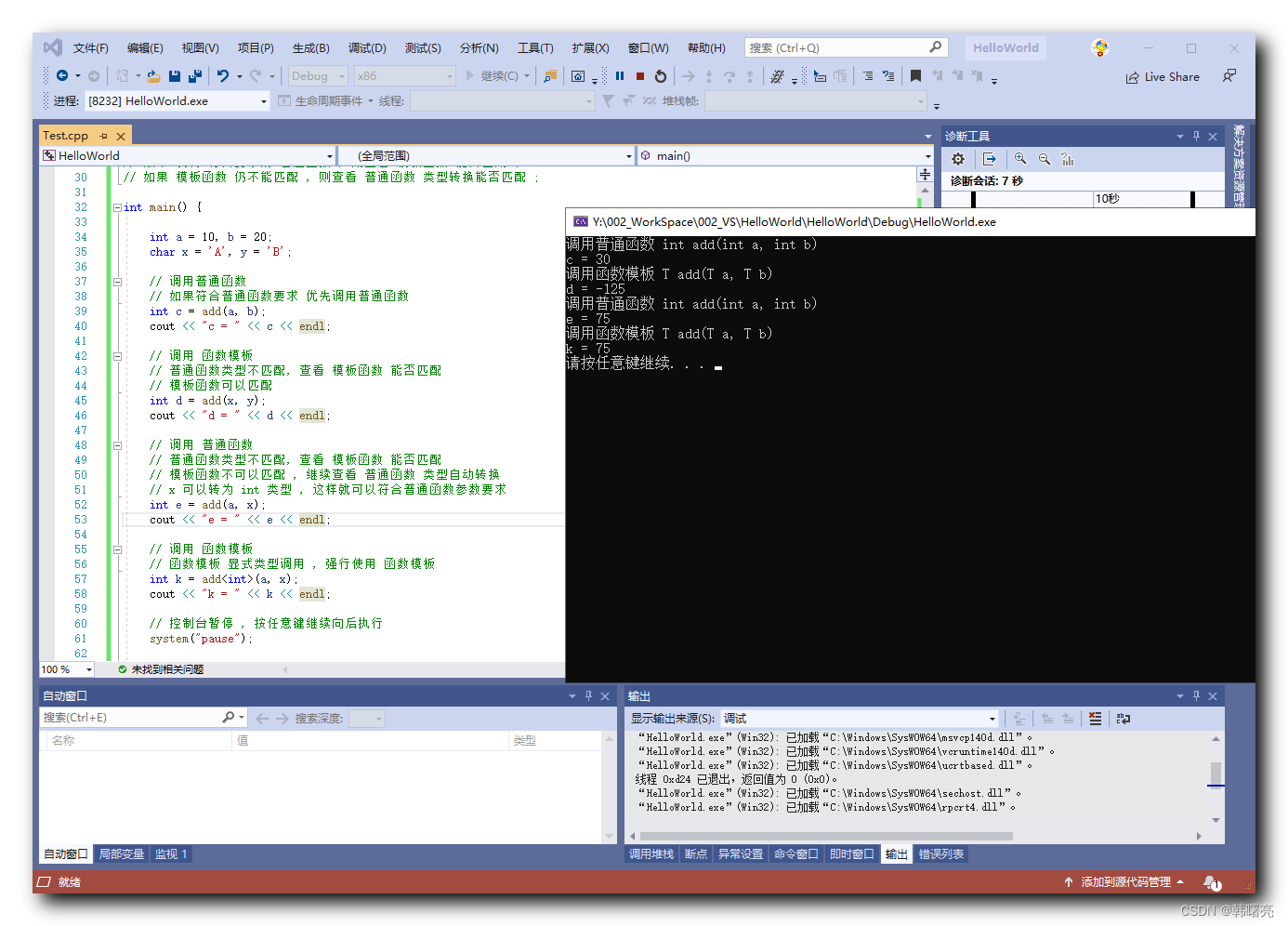

运行结果:

创建文件momentum.py,演示Momentum算法

添加代码如下:

# -*- coding: utf-8 -*-

"""

演示Momentum算法

"""

import numpy as np

from matplotlib import pyplot as plt

# 尝试修改学习率为0.43和0.55,看看效果

learning_rate = 0.55

gamma = 0.5

def f_2d(w, b):

""" 待优化的函数 """

return 0.1 * w ** 2 + 2 * b ** 2

def momentum_2d(w, b, vw, vb, nesterov=False):

""" 优化一步 """

if nesterov:

vw = gamma * vw + (1 - gamma) * 0.2 * w

vb = gamma * vb + (1 - gamma) * 4 * b

return w - learning_rate * vw, b - learning_rate * vb, vw, vb

else:

vw = gamma * vw + learning_rate * (1 - gamma) * 0.2 * w

vb = gamma * vb + learning_rate * (1 - gamma) * 4 * b

return w - vw, b - vb, vw, vb

def train_2d(optimizer):

""" 使用定制优化器optimizer来训练二维目标函数 """

w, b, vw, vb = -7, 4, 0, 0 # 初始值

history = [(w, b)]

epochs = 20 # 训练轮次

for i in range(epochs):

w, b, vw, vb = optimizer(w, b, vw, vb, True)

history.append((w, b))

print('经过轮次:%d,最终的w:%f,b:%f' % (epochs, w, b))

return history

def trace_2d(f, hist):

""" 追踪训练二维目标函数的过程 """

w, b = zip(*hist)

plt.rcParams['figure.figsize'] = (5, 3)

plt.plot(w, b, '-o', color='blue')

w = np.arange(-7.5, 7.5, 0.1)

b = np.arange(min(-4.0, min(b) - 1), max(4.0, max(b) + 1), 0.1)

w, b = np.meshgrid(w, b)

plt.contour(w, b, f(w, b), colors='green')

plt.xlabel('w')

plt.ylabel('b')

plt.show()

def main():

history = train_2d(momentum_2d)

trace_2d(f_2d, history)

if __name__ == '__main__':

main()

运行结果:

3)RMSprop算法

与动量梯度下降一样,都是消除梯度下降过程中的摆动来加速梯度下降的方法。

创建文件rmsprop.py

添加代码如下:

# -*- coding: utf-8 -*-

"""

演示RMSprop算法

"""

import numpy as np

from matplotlib import pyplot as plt

# 尝试修改学习率为0.43和0.55,看看效果

learning_rate = 0.55

gamma = 0.9

def f_2d(w, b):

""" 待优化的函数 """

return 0.1 * w ** 2 + 2 * b ** 2

def rmsprop_2d(w, b, sw, sb):

""" 优化一步 """

dw, db, eps = 0.2 * w, 4 * b, 1e-8

sw = gamma * sw + (1 - gamma) * dw ** 2

sb = gamma * sb + (1 - gamma) * db ** 2

return (w - learning_rate * dw / (np.sqrt(sw) + eps),

b - learning_rate * db / (np.sqrt(sb) + eps), sw, sb)

def train_2d(optimizer):

""" 使用定制优化器optimizer来训练二维目标函数 """

w, b, sw, sb = -7, 4, 0, 0 # 初始值

history = [(w, b)]

epochs = 20 # 训练轮次

for i in range(epochs):

w, b, sw, sb = optimizer(w, b, sw, sb)

history.append((w, b))

print('经过轮次:%d,最终的w:%f,b:%f' % (epochs, w, b))

return history

def trace_2d(f, hist):

""" 追踪训练二维目标函数的过程 """

w, b = zip(*hist)

plt.rcParams['figure.figsize'] = (5, 3)

plt.plot(w, b, '-o', color='blue')

w = np.arange(-7.5, 7.5, 0.1)

b = np.arange(min(-4.0, min(b) - 1), max(4.0, max(b) + 1), 0.1)

w, b = np.meshgrid(w, b)

plt.contour(w, b, f(w, b), colors='green')

plt.xlabel('w')

plt.ylabel('b')

plt.show()

def main():

history = train_2d(rmsprop_2d)

trace_2d(f_2d, history)

if __name__ == '__main__':

main()

运行结果:

4)Adam算法

是RMSProp的更新版本

Adam中动量直接并入了梯度一阶矩(指数加权)的估计。其次,相比于缺少修正因子导致二阶矩估计可能在训练初期具有很高偏置的RMSProp,Adam包括偏置修正,修正从原点初始化的一阶矩(动量项)和(非中心的)二阶矩估计。

创建文件adam.py

添加代码如下:

# -*- coding: utf-8 -*-

"""

演示Adam算法

"""

import numpy as np

from matplotlib import pyplot as plt

# 尝试修改学习率为0.43和0.55,看看效果

learning_rate = 0.43

beta1 = 0.9

beta2 = 0.999

def f_2d(w, b):

""" 待优化的函数 """

return 0.1 * w ** 2 + 2 * b ** 2

def adam_2d(w, b, vw, vb, sw, sb, t):

""" 优化一步 """

dw, db, eps = 0.2 * w, 4 * b, 1e-8

vw = beta1 * vw + (1 - beta1) * dw

vb = beta1 * vb + (1 - beta1) * db

sw = beta2 * sw + (1 - beta2) * dw ** 2

sb = beta2 * sb + (1 - beta2) * db ** 2

vwc = vw / (1 - beta1 ** t)

vbc = vb / (1 - beta1 ** t)

swc = sw / (1 - beta2 ** t)

sbc = sb / (1 - beta2 ** t)

return (w - learning_rate * vwc / np.sqrt(swc + eps),

b - learning_rate * vbc / np.sqrt(sbc + eps), vw, vb, sw, sb)

def train_2d(optimizer):

""" 使用定制优化器optimizer来训练二维目标函数 """

w, b, vw, vb, sw, sb = -7, 4, 0, 0, 0, 0 # 初始值

history = [(w, b)]

epochs = 20 # 训练轮次

for t in range(epochs):

w, b, vw, vb, sw, sb = optimizer(w, b, vw, vb, sw, sb, t + 1)

history.append((w, b))

print('经过轮次:%d,最终的w:%f,b:%f' % (epochs, w, b))

return history

def trace_2d(f, hist):

""" 追踪训练二维目标函数的过程 """

w, b = zip(*hist)

plt.rcParams['figure.figsize'] = (5, 3)

plt.plot(w, b, '-o', color='blue')

w = np.arange(-7.5, 7.5, 0.1)

b = np.arange(min(-4.0, min(b) - 1), max(4.0, max(b) + 1), 0.1)

w, b = np.meshgrid(w, b)

plt.contour(w, b, f(w, b), colors='green')

plt.xlabel('w')

plt.ylabel('b')

plt.show()

def main():

history = train_2d(adam_2d)

trace_2d(f_2d, history)

if __name__ == '__main__':

main()

运行结果:

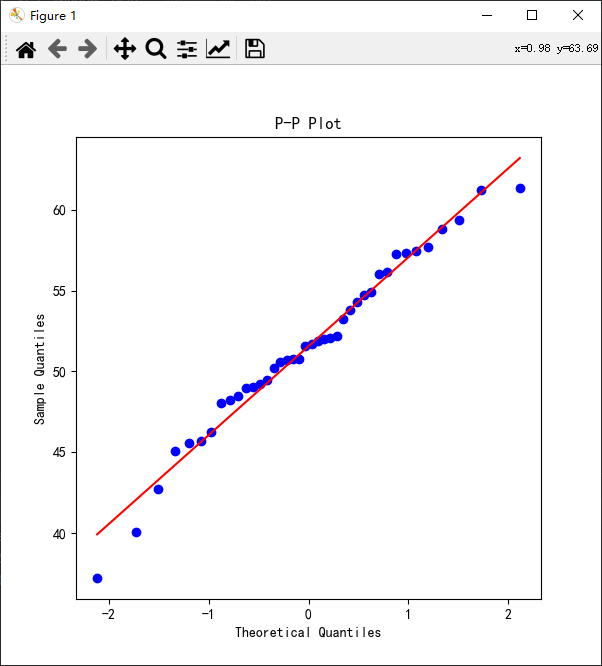

4.PyTorch的初始化函数

训练需要给参数赋初始值。torch.nn.init模块定义了多种初始化函数。

1)普通初始化

常数初始化;

均匀分布初始化;

正态分布

初始化为1;

初始化为0;

初始化为对角阵

初始化为狄拉克函数

2)Xavier 初始化

均匀分布初始化

高斯分布;

gain的计算

3)He初始化

均匀分布初始化

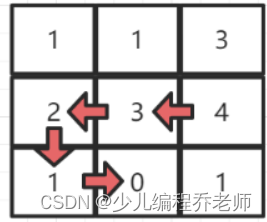

高斯分布;

![[PyTorch][chapter 63][强化学习-QLearning]](https://img-blog.csdnimg.cn/933bd586568f494fa86a22caa39a6652.png)