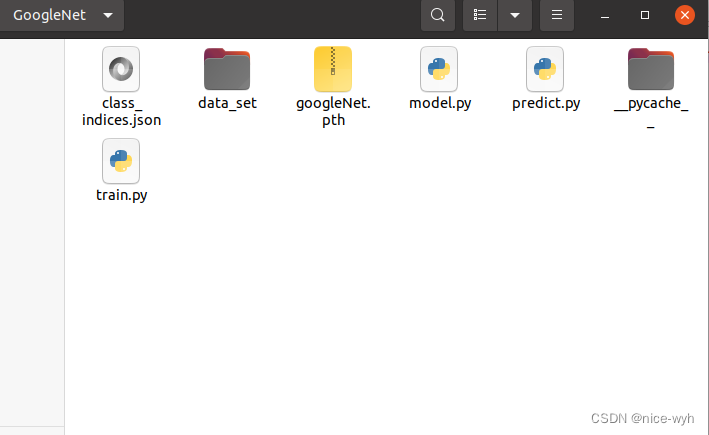

1. Dataset preparation

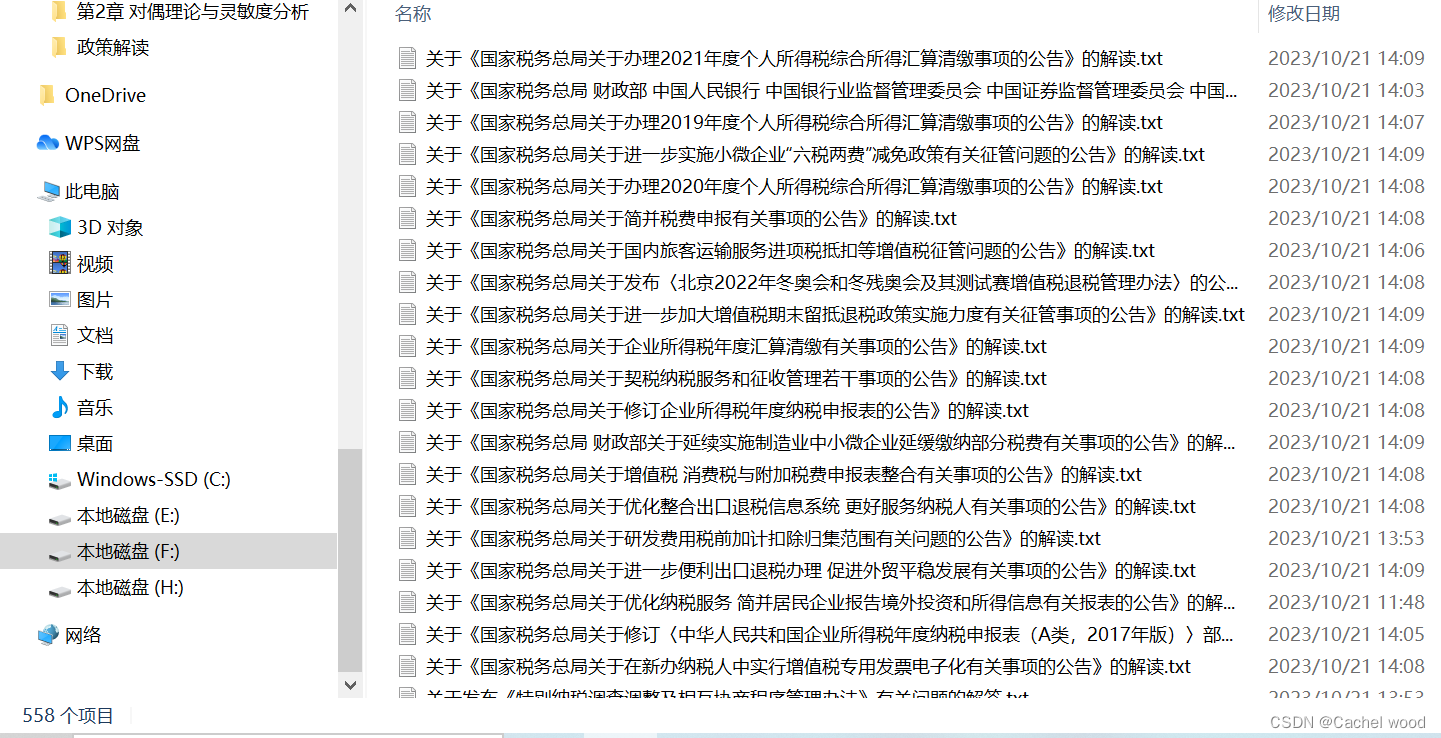

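Create a project folder named GoogleNet and, inside it, a data_set folder to hold the dataset. Under data_set, create a new folder "flower_data". Download the flower classification dataset from https://storage.googleapis.com/download.tensorflow.org/example_images/flower_photos.tgz, which gives you a compressed archive, and extract it into the flower_data folder. Then run the "split_data.py" script to split the dataset automatically into a training set (train) and a validation set (val). The script looks for flower_data under the current working directory, so run it from inside data_set.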
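The download and extraction can also be scripted. The following is a minimal sketch using only the standard library; the helper names `download` and `extract` are hypothetical and not part of the original project, and the URL is the one given above.

```python
import tarfile
import urllib.request
from pathlib import Path

FLOWER_URL = ("https://storage.googleapis.com/download.tensorflow.org/"
              "example_images/flower_photos.tgz")


def download(url: str, archive_path: Path) -> Path:
    """Download url to archive_path unless the file already exists (hypothetical helper)."""
    archive_path.parent.mkdir(parents=True, exist_ok=True)
    if not archive_path.exists():
        urllib.request.urlretrieve(url, str(archive_path))
    return archive_path


def extract(archive_path: Path, dest_dir: Path) -> list:
    """Extract a .tgz archive into dest_dir and return the names found in dest_dir."""
    dest_dir.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive_path, "r:gz") as tar:
        tar.extractall(dest_dir)
    return sorted(p.name for p in dest_dir.iterdir())
```

Assuming the folder layout described above, a call such as `extract(download(FLOWER_URL, Path("data_set/flower_photos.tgz")), Path("data_set/flower_data"))` should leave a flower_photos folder under flower_data.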

split_data.py is as follows:

    import os
    import random
    from shutil import copy, rmtree


    def mk_file(file_path: str):
        if os.path.exists(file_path):
            # If the folder already exists, delete it first and then recreate it
            rmtree(file_path)
        os.makedirs(file_path)


    def main():
        # Make the random split reproducible
        random.seed(0)

        # Put 10% of the data into the validation set
        split_rate = 0.1

        # Point to the extracted flower_photos folder
        cwd = os.getcwd()
        data_root = os.path.join(cwd, "flower_data")
        origin_flower_path = os.path.join(data_root, "flower_photos")
        assert os.path.exists(origin_flower_path), "path '{}' does not exist.".format(origin_flower_path)

        flower_class = [cla for cla in os.listdir(origin_flower_path)
                        if os.path.isdir(os.path.join(origin_flower_path, cla))]

        # Create the folder that holds the training set
        train_root = os.path.join(data_root, "train")
        mk_file(train_root)
        for cla in flower_class:
            # Create one folder per class
            mk_file(os.path.join(train_root, cla))

        # Create the folder that holds the validation set
        val_root = os.path.join(data_root, "val")
        mk_file(val_root)
        for cla in flower_class:
            # Create one folder per class
            mk_file(os.path.join(val_root, cla))

        for cla in flower_class:
            cla_path = os.path.join(origin_flower_path, cla)
            images = os.listdir(cla_path)
            num = len(images)
            # Randomly sample the file names that go into the validation set
            eval_index = random.sample(images, k=int(num * split_rate))
            for index, image in enumerate(images):
                image_path = os.path.join(cla_path, image)
                if image in eval_index:
                    # Copy files assigned to the validation set into the matching folder
                    new_path = os.path.join(val_root, cla)
                else:
                    # Copy files assigned to the training set into the matching folder
                    new_path = os.path.join(train_root, cla)
                copy(image_path, new_path)
                print("\r[{}] processing [{}/{}]".format(cla, index + 1, num), end="")  # progress bar
            print()

        print("processing done!")


    if __name__ == '__main__':
        main()

Afterwards, the train and val datasets appear under the flower_data folder, which completes the dataset preparation.
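To check that the split did what was intended, the images in each class folder can be counted. This is a small sketch, assuming the train/val layout produced by the script above; `count_images` and `val_fraction` are hypothetical helper names.

```python
import os


def count_images(split_root: str) -> dict:
    """Return {class_name: number_of_files} for one split folder such as train or val."""
    counts = {}
    for cla in sorted(os.listdir(split_root)):
        cla_path = os.path.join(split_root, cla)
        if os.path.isdir(cla_path):
            counts[cla] = len(os.listdir(cla_path))
    return counts


def val_fraction(train_counts: dict, val_counts: dict) -> dict:
    """Fraction of each class that ended up in the validation split."""
    fractions = {}
    for cla, n_train in train_counts.items():
        n_val = val_counts.get(cla, 0)
        total = n_train + n_val
        fractions[cla] = n_val / total if total else 0.0
    return fractions
```

Because the script uses `int(num * split_rate)`, the fraction per class should come out at or slightly below 0.1.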

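2. Defining the network

Create model.py. It follows the GoogLeNet architecture and the code published in the official PyTorch (torchvision) repository, with only minor modifications. The code first defines three classes, BasicConv2d, Inception, and InceptionAux, which are the basic convolution block, the Inception module, and the auxiliary classifier. It then defines the GoogLeNet class, which uses these three classes to implement the forward pass.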
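Each Inception module concatenates its four branches along the channel dimension, so its output channel count is ch1x1 + ch3x3 + ch5x5 + pool_proj, and that number has to match the input channels of the next module. The sketch below checks this chain in plain Python; the table copies the constructor arguments used in model.py, while the function names are hypothetical.

```python
def inception_out_channels(ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
    """Channels after concatenating the four branches (the *red sizes are internal only)."""
    return ch1x1 + ch3x3 + ch5x5 + pool_proj


# (name, in_channels, (ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj))
INCEPTION_CONFIG = [
    ("inception3a", 192, (64, 96, 128, 16, 32, 32)),
    ("inception3b", 256, (128, 128, 192, 32, 96, 64)),
    ("inception4a", 480, (192, 96, 208, 16, 48, 64)),
    ("inception4b", 512, (160, 112, 224, 24, 64, 64)),
    ("inception4c", 512, (128, 128, 256, 24, 64, 64)),
    ("inception4d", 512, (112, 144, 288, 32, 64, 64)),
    ("inception4e", 528, (256, 160, 320, 32, 128, 128)),
    ("inception5a", 832, (256, 160, 320, 32, 128, 128)),
    ("inception5b", 832, (384, 192, 384, 48, 128, 128)),
]


def trace_channels(config):
    """Return [(name, out_channels)] and raise if two consecutive modules do not fit."""
    results = []
    expected_in = config[0][1]
    for name, in_channels, branch_args in config:
        if in_channels != expected_in:
            raise ValueError(f"{name}: expected {expected_in} input channels, got {in_channels}")
        expected_in = inception_out_channels(*branch_args)
        results.append((name, expected_in))
    return results


for name, out_channels in trace_channels(INCEPTION_CONFIG):
    print(f"{name}: {out_channels}")
```

The outputs of inception4a (512) and inception4d (528) are the inputs of the two auxiliary classifiers, and the output of inception5b (1024) is the input size of the final fully connected layer.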

GoogLeNet code, adapted from the official PyTorch example:

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class GoogLeNet(nn.Module):
        def __init__(self, num_classes=1000, aux_logits=True, transform_input=False, init_weights=True):
            super(GoogLeNet, self).__init__()
            self.aux_logits = aux_logits
            self.transform_input = transform_input

            self.conv1 = BasicConv2d(3, 64, kernel_size=7, stride=2, padding=3)  # 3 input channels, 64 output channels
            self.maxpool1 = nn.MaxPool2d(3, stride=2, ceil_mode=True)
            self.conv2 = BasicConv2d(64, 64, kernel_size=1)
            self.conv3 = BasicConv2d(64, 192, kernel_size=3, padding=1)
            self.maxpool2 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

            self.inception3a = Inception(192, 64, 96, 128, 16, 32, 32)
            self.inception3b = Inception(256, 128, 128, 192, 32, 96, 64)
            self.maxpool3 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

            self.inception4a = Inception(480, 192, 96, 208, 16, 48, 64)
            self.inception4b = Inception(512, 160, 112, 224, 24, 64, 64)
            self.inception4c = Inception(512, 128, 128, 256, 24, 64, 64)
            self.inception4d = Inception(512, 112, 144, 288, 32, 64, 64)
            self.inception4e = Inception(528, 256, 160, 320, 32, 128, 128)
            self.maxpool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

            self.inception5a = Inception(832, 256, 160, 320, 32, 128, 128)
            self.inception5b = Inception(832, 384, 192, 384, 48, 128, 128)

            if aux_logits:
                self.aux1 = InceptionAux(512, num_classes)
                self.aux2 = InceptionAux(528, num_classes)

            # Adaptive average pooling: yields a 1x1 feature map for any input size
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
            self.dropout = nn.Dropout(0.4)
            self.fc = nn.Linear(1024, num_classes)

            if init_weights:
                for m in self.modules():
                    if isinstance(m, (nn.Conv2d, nn.Linear)):
                        torch.nn.init.trunc_normal_(m.weight, mean=0.0, std=0.01, a=-2, b=2)
                    elif isinstance(m, nn.BatchNorm2d):
                        nn.init.constant_(m.weight, 1)
                        nn.init.constant_(m.bias, 0)

        def _transform_input(self, x):
            if self.transform_input:
                x_ch0 = torch.unsqueeze(x[:, 0], 1) * (0.229 / 0.5) + (0.485 - 0.5) / 0.5
                x_ch1 = torch.unsqueeze(x[:, 1], 1) * (0.224 / 0.5) + (0.456 - 0.5) / 0.5
                x_ch2 = torch.unsqueeze(x[:, 2], 1) * (0.225 / 0.5) + (0.406 - 0.5) / 0.5
                x = torch.cat((x_ch0, x_ch1, x_ch2), 1)
            return x

        def forward(self, x):
            x = self._transform_input(x)
            # N x 3 x 224 x 224 (batch_size, channel, height, width)
            x = self.conv1(x)
            # N x 64 x 112 x 112
            x = self.maxpool1(x)
            # N x 64 x 56 x 56
            x = self.conv2(x)
            # N x 64 x 56 x 56
            x = self.conv3(x)
            # N x 192 x 56 x 56
            x = self.maxpool2(x)

            # N x 192 x 28 x 28
            x = self.inception3a(x)
            # N x 256 x 28 x 28
            x = self.inception3b(x)
            # N x 480 x 28 x 28
            x = self.maxpool3(x)
            # N x 480 x 14 x 14
            x = self.inception4a(x)
            # N x 512 x 14 x 14
            if self.training and self.aux_logits:
                aux1 = self.aux1(x)

            x = self.inception4b(x)
            # N x 512 x 14 x 14
            x = self.inception4c(x)
            # N x 512 x 14 x 14
            x = self.inception4d(x)
            # N x 528 x 14 x 14
            if self.training and self.aux_logits:
                aux2 = self.aux2(x)

            x = self.inception4e(x)
            # N x 832 x 14 x 14
            x = self.maxpool4(x)
            # N x 832 x 7 x 7
            x = self.inception5a(x)
            # N x 832 x 7 x 7
            x = self.inception5b(x)
            # N x 1024 x 7 x 7

            x = self.avgpool(x)
            # N x 1024 x 1 x 1
            x = torch.flatten(x, 1)
            # N x 1024
            x = self.dropout(x)
            x = self.fc(x)
            # N x 1000 (num_classes)
            if self.training and self.aux_logits:
                return x, aux2, aux1
            return x


    class Inception(nn.Module):
        def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
            super(Inception, self).__init__()

            self.branch1 = BasicConv2d(in_channels, ch1x1, kernel_size=1)

            self.branch2 = nn.Sequential(
                BasicConv2d(in_channels, ch3x3red, kernel_size=1),
                BasicConv2d(ch3x3red, ch3x3, kernel_size=3, padding=1)  # padding keeps output size equal to input size
            )

            # Note: the paper uses a 5x5 convolution in this branch; the torchvision code
            # that this file follows uses kernel_size=3 here, and that is kept as-is.
            self.branch3 = nn.Sequential(
                BasicConv2d(in_channels, ch5x5red, kernel_size=1),
                BasicConv2d(ch5x5red, ch5x5, kernel_size=3, padding=1),  # padding keeps output size equal to input size
            )

            self.branch4 = nn.Sequential(
                nn.MaxPool2d(kernel_size=3, stride=1, padding=1, ceil_mode=True),
                BasicConv2d(in_channels, pool_proj, kernel_size=1),
            )

        def forward(self, x):
            branch1 = self.branch1(x)
            branch2 = self.branch2(x)
            branch3 = self.branch3(x)
            branch4 = self.branch4(x)

            outputs = [branch1, branch2, branch3, branch4]
            # [batch, channel, height, width]: concatenate along the channel dimension
            return torch.cat(outputs, 1)


    class InceptionAux(nn.Module):
        def __init__(self, in_channels, num_classes):
            super(InceptionAux, self).__init__()
            self.averagePool = nn.AvgPool2d(kernel_size=5, stride=3)
            self.conv = BasicConv2d(in_channels, 128, kernel_size=1)  # output[batch, 128, 4, 4]

            self.fc1 = nn.Linear(2048, 1024)
            self.fc2 = nn.Linear(1024, num_classes)

        def forward(self, x):
            # aux1: N x 512 x 14 x 14, aux2: N x 528 x 14 x 14
            x = self.averagePool(x)
            # aux1: N x 512 x 4 x 4, aux2: N x 528 x 4 x 4
            x = self.conv(x)
            # N x 128 x 4 x 4
            x = torch.flatten(x, 1)
            x = F.dropout(x, 0.5, training=self.training)
            # N x 2048
            x = F.relu(self.fc1(x), inplace=True)
            x = F.dropout(x, 0.5, training=self.training)
            # N x 1024
            x = self.fc2(x)
            # N x 1000 (num_classes)
            return x


    class BasicConv2d(nn.Module):
        def __init__(self, in_channels, out_channels, **kwargs):
            super(BasicConv2d, self).__init__()
            self.conv = nn.Conv2d(in_channels, out_channels, bias=False, **kwargs)
            self.bn = nn.BatchNorm2d(out_channels, eps=0.001)

        def forward(self, x):
            x = self.conv(x)
            x = self.bn(x)
            return F.relu(x, inplace=True)


    if __name__ == "__main__":
        googlenet = GoogLeNet(num_classes=3, aux_logits=True, transform_input=False, init_weights=True)
        in_data = torch.randn(1, 3, 224, 224)
        # A freshly built module is in training mode, so this prints (main, aux2, aux1)
        out = googlenet(in_data)
        print(out)

Once the network is defined, you can run this file on its own to verify that the definition is correct. If it prints the output without errors, the network is fine.
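The shape comments in forward can be reproduced with the usual output-size formulas for convolution and pooling. The sketch below is plain Python rather than the PyTorch implementation; the function names are hypothetical, and the ceil_mode correction follows the rule described in the PyTorch pooling documentation (the last window must start inside the input or its left padding).

```python
import math


def conv_out(size, kernel, stride=1, padding=0):
    """Output size of a convolution along one spatial dimension (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel, stride, padding=0, ceil_mode=False):
    """Output size of a pooling layer along one spatial dimension."""
    span = size + 2 * padding - kernel
    steps = math.ceil(span / stride) if ceil_mode else span // stride
    out = steps + 1
    # With ceil_mode, drop the last window if it would start beyond the input
    if ceil_mode and (out - 1) * stride >= size + padding:
        out -= 1
    return out


def trace_sizes(size=224):
    """Follow the spatial size through the stem and pooling layers of model.py."""
    sizes = {}
    size = conv_out(size, kernel=7, stride=2, padding=3)
    sizes["conv1"] = size
    size = pool_out(size, kernel=3, stride=2, ceil_mode=True)
    sizes["maxpool1"] = size
    size = conv_out(size, kernel=1)              # conv2 keeps the size
    size = conv_out(size, kernel=3, padding=1)   # conv3 keeps the size
    sizes["conv3"] = size
    size = pool_out(size, kernel=3, stride=2, ceil_mode=True)
    sizes["maxpool2"] = size
    size = pool_out(size, kernel=3, stride=2, ceil_mode=True)
    sizes["maxpool3"] = size
    # The auxiliary classifiers average-pool the 14 x 14 maps with kernel 5, stride 3
    sizes["aux_avgpool"] = pool_out(size, kernel=5, stride=3)
    size = pool_out(size, kernel=2, stride=2, ceil_mode=True)
    sizes["maxpool4"] = size
    return sizes


print(trace_sizes(224))
```

The Inception modules are left out of the trace because every branch in them preserves the spatial size. The 4 x 4 result for the auxiliary pooling is also where the 2048 in `nn.Linear(2048, 1024)` comes from: 128 channels x 4 x 4.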

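3. Training

Loading the dataset

First define a dictionary that holds the preprocessing for train and val: cropping or resizing to 224 x 224, random horizontal flipping for the training set (the validation set normally does not need it), conversion to tensors, and image normalization.

Then load the datasets with DataLoader, using a batch_size of 32, and set the number of data-loading worker processes nw to speed things up.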
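As a side note on the normalization step: ToTensor scales pixel values to [0, 1], and normalizing with mean 0.5 and standard deviation 0.5 then maps that range to [-1, 1]. A minimal arithmetic sketch for a single channel value (the function names are hypothetical):

```python
def normalize(value, mean=0.5, std=0.5):
    """Per-channel normalization as applied to one value: (value - mean) / std."""
    return (value - mean) / std


def denormalize(value, mean=0.5, std=0.5):
    """Inverse of normalize, useful when displaying a normalized image."""
    return value * std + mean


for pixel in (0, 128, 255):
    scaled = pixel / 255            # what ToTensor does to an 8-bit pixel
    print(pixel, round(normalize(scaled), 3))
```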

    data_transform = {
        "train": transforms.Compose([transforms.RandomResizedCrop(224),
                                     transforms.RandomHorizontalFlip(),
                                     transforms.ToTensor(),
                                     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),
        "val": transforms.Compose([transforms.Resize((224, 224)),
                                   transforms.ToTensor(),
                                   transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}

    # Path to the dataset
    image_path = os.path.join(os.getcwd(), "data_set", "flower_data")  # flower data set path
    assert os.path.exists(image_path), "{} path does not exist.".format(image_path)

    # Create the datasets
    train_dataset = datasets.ImageFolder(root=os.path.join(image_path, "train"), transform=data_transform["train"])
    validate_dataset = datasets.ImageFolder(root=os.path.join(image_path, "val"), transform=data_transform["val"])

    batch_size = 32
    nw = min([os.cpu_count(), batch_size if batch_size > 1 else 0, 8])  # number of workers
    print(f'Using {nw} dataloader workers every process')

    # Create the data loaders
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=nw)
    validate_loader = torch.utils.data.DataLoader(validate_dataset, batch_size=batch_size, shuffle=False, num_workers=nw)

    train_num = len(train_dataset)
    val_num = len(validate_dataset)
    print(f"using {train_num} images for training, {val_num} images for validation.")

Generating the JSON file

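Convert the class labels of the training set into a dictionary and write it to a file named 'class_indices.json'.

- Get the class-name-to-index mapping from train_dataset and store it in flower_list.
- Invert the key-value pairs of flower_list with a generator expression to get a new dictionary cla_dict, whose keys are the indices and whose values are the class names.
- Use json.dumps() to convert cla_dict into a JSON string, indented by 4 spaces.
- Use a with open() statement to open 'class_indices.json' in write mode and write the JSON string to it.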
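The steps above can be tried without the dataset. The sketch below uses a hand-written mapping that mirrors the five folder names of the flower dataset (an assumption standing in for train_dataset.class_to_idx) and shows one detail worth knowing: JSON object keys are always strings, so the indices come back as "0", "1", and so on.

```python
import json

# Stand-in for train_dataset.class_to_idx (ImageFolder sorts class folders by name)
class_to_idx = {"daisy": 0, "dandelion": 1, "roses": 2, "sunflowers": 3, "tulips": 4}

# Invert the mapping: index -> class name
idx_to_class = {idx: name for name, idx in class_to_idx.items()}

# Serialize and read back, as the training and prediction scripts do via a file
json_str = json.dumps(idx_to_class, indent=4)
loaded = json.loads(json_str)

print(loaded["3"])      # the key is the string "3", not the integer 3
print(3 in loaded)      # integer keys do not survive the round trip
```

This is why the prediction script looks up the class with `class_indict[str(predict_class)]`.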

    # {'daisy':0, 'dandelion':1, 'roses':2, 'sunflowers':3, 'tulips':4}
    # Get the class-name-to-index mapping from the training set and store it in flower_list
    flower_list = train_dataset.class_to_idx
    # Invert the key-value pairs of flower_list to get a new dictionary cla_dict (index -> class name)
    cla_dict = dict((val, key) for key, val in flower_list.items())
    # Write the dict into a JSON file: first convert cla_dict to a JSON string
    json_str = json.dumps(cla_dict, indent=4)
    with open('class_indices.json', 'w') as json_file:
        json_file.write(json_str)

Defining the network and starting training

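First create the network object net with 5 output classes, with the auxiliary classifiers enabled and the weights initialized. Training runs for 30 epochs, and train_bar = tqdm(train_loader, file=sys.stdout) displays a progress bar. The loss follows the original GoogLeNet paper: it is the main loss plus the two auxiliary losses, each weighted by 0.3, and it is followed by backpropagation and a parameter update. The learning rate is updated at the end of every epoch. After each epoch, accuracy is computed on the validation set, and the model is saved whenever that accuracy improves.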
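The weighted loss can be illustrated without PyTorch. The sketch below implements cross-entropy for a single sample in plain Python and combines the three outputs with the 0.3 weights; it is an illustration of the arithmetic, not the nn.CrossEntropyLoss implementation, and the function names are hypothetical.

```python
import math


def cross_entropy(logits, label):
    """Cross-entropy of one sample from raw logits: log-sum-exp minus the target logit."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def googlenet_loss(main_logits, aux2_logits, aux1_logits, label, aux_weight=0.3):
    """Main loss plus the two auxiliary losses, each scaled by aux_weight."""
    loss0 = cross_entropy(main_logits, label)
    loss1 = cross_entropy(aux1_logits, label)
    loss2 = cross_entropy(aux2_logits, label)
    return loss0 + aux_weight * loss1 + aux_weight * loss2


# With uniform logits over 5 classes each term is ln(5), so the total is 1.6 * ln(5)
uniform = [0.0] * 5
print(googlenet_loss(uniform, uniform, uniform, label=2))
```

The argument order mirrors the tuple returned by the network in training mode: main output, then aux2, then aux1.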

    net = GoogLeNet(num_classes=5, aux_logits=True, init_weights=True)
    net.to(device)

    loss_function = nn.CrossEntropyLoss()
    optimizer = optim.Adam(net.parameters(), lr=0.0003)
    # StepLR's default gamma is 0.1, so with step_size=1 the learning rate is divided by 10 after every epoch
    scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=1)

    epochs = 30
    best_acc = 0.0
    train_steps = len(train_loader)

    for epoch in range(epochs):
        # train
        net.train()
        running_loss = 0.0
        train_bar = tqdm(train_loader, file=sys.stdout)
        for step, data in enumerate(train_bar):
            imgs, labels = data
            labels = labels.to(device)
            optimizer.zero_grad()
            logits, aux_logits2, aux_logits1 = net(imgs.to(device))
            loss0 = loss_function(logits, labels)
            loss1 = loss_function(aux_logits1, labels)
            loss2 = loss_function(aux_logits2, labels)
            loss = loss0 + loss1 * 0.3 + loss2 * 0.3
            loss.backward()
            optimizer.step()

            # print statistics
            running_loss += loss.item()
            train_bar.desc = f"train epoch[{epoch+1}/{epochs}] loss:{loss:.3f}"

        scheduler.step()

        # validate
        net.eval()
        acc = 0.0  # number of correct predictions in this epoch
        with torch.no_grad():
            val_bar = tqdm(validate_loader, file=sys.stdout)
            for val_data in val_bar:
                val_imgs, val_labels = val_data
                outputs = net(val_imgs.to(device))  # in eval mode the model returns only the main output
                predict_y = torch.max(outputs, dim=1)[1]
                acc += torch.eq(predict_y, val_labels.to(device)).sum().item()

        val_accurate = acc / val_num
        print('[epoch %d] train_loss: %.3f val_accuracy: %.3f' %
              (epoch + 1, running_loss / train_steps, val_accurate))

        if val_accurate > best_acc:
            best_acc = val_accurate
            torch.save(net, "./googleNet.pth")  # saves the whole model object, not just the state_dict

    print('Finished Training')

Finally, putting the pieces together, the complete train.py is as follows:

    import os
    import sys
    import json

    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torchvision import transforms, datasets
    from tqdm import tqdm

    from model import GoogLeNet


    def main():
        device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
        print(f"using {device} device.")

        data_transform = {
            "train": transforms.Compose([transforms.RandomResizedCrop(224),
                                         transforms.RandomHorizontalFlip(),
                                         transforms.ToTensor(),
                                         transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),
            "val": transforms.Compose([transforms.Resize((224, 224)),
                                       transforms.ToTensor(),
                                       transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}

        # Path to the dataset
        image_path = os.path.join(os.getcwd(), "data_set", "flower_data")  # flower data set path
        assert os.path.exists(image_path), "{} path does not exist.".format(image_path)

        # Create the datasets
        train_dataset = datasets.ImageFolder(root=os.path.join(image_path, "train"), transform=data_transform["train"])
        validate_dataset = datasets.ImageFolder(root=os.path.join(image_path, "val"), transform=data_transform["val"])

        batch_size = 32
        nw = min([os.cpu_count(), batch_size if batch_size > 1 else 0, 8])  # number of workers
        print(f'Using {nw} dataloader workers every process')

        # Create the data loaders
        train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=nw)
        validate_loader = torch.utils.data.DataLoader(validate_dataset, batch_size=batch_size, shuffle=False, num_workers=nw)

        train_num = len(train_dataset)
        val_num = len(validate_dataset)
        print(f"using {train_num} images for training, {val_num} images for validation.")

        # {'daisy':0, 'dandelion':1, 'roses':2, 'sunflowers':3, 'tulips':4}
        # Get the class-name-to-index mapping from the training set and store it in flower_list
        flower_list = train_dataset.class_to_idx
        # Invert the key-value pairs of flower_list to get a new dictionary cla_dict (index -> class name)
        cla_dict = dict((val, key) for key, val in flower_list.items())
        # Write the dict into a JSON file: first convert cla_dict to a JSON string
        json_str = json.dumps(cla_dict, indent=4)
        with open('class_indices.json', 'w') as json_file:
            json_file.write(json_str)

        """To use the official pretrained weights, note that they must be loaded into
        the official model, not into our own implementation.
        The official model uses BN layers and changes some parameters, so the two cannot be mixed.

        import torchvision
        net = torchvision.models.googlenet(num_classes=5)
        model_dict = net.state_dict()
        # Pretrained weights: https://download.pytorch.org/models/googlenet-1378be20.pth
        pretrain_model = torch.load("googlenet.pth")
        del_list = ["aux1.fc2.weight", "aux1.fc2.bias",
                    "aux2.fc2.weight", "aux2.fc2.bias",
                    "fc.weight", "fc.bias"]
        pretrain_dict = {k: v for k, v in pretrain_model.items() if k not in del_list}
        model_dict.update(pretrain_dict)
        net.load_state_dict(model_dict)"""

        net = GoogLeNet(num_classes=5, aux_logits=True, init_weights=True)
        net.to(device)

        loss_function = nn.CrossEntropyLoss()
        optimizer = optim.Adam(net.parameters(), lr=0.0003)
        # StepLR's default gamma is 0.1, so with step_size=1 the learning rate is divided by 10 after every epoch
        scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=1)

        epochs = 30
        best_acc = 0.0
        train_steps = len(train_loader)

        for epoch in range(epochs):
            # train
            net.train()
            running_loss = 0.0
            train_bar = tqdm(train_loader, file=sys.stdout)
            for step, data in enumerate(train_bar):
                imgs, labels = data
                labels = labels.to(device)
                optimizer.zero_grad()
                logits, aux_logits2, aux_logits1 = net(imgs.to(device))
                loss0 = loss_function(logits, labels)
                loss1 = loss_function(aux_logits1, labels)
                loss2 = loss_function(aux_logits2, labels)
                loss = loss0 + loss1 * 0.3 + loss2 * 0.3
                loss.backward()
                optimizer.step()

                # print statistics
                running_loss += loss.item()
                train_bar.desc = f"train epoch[{epoch+1}/{epochs}] loss:{loss:.3f}"

            scheduler.step()

            # validate
            net.eval()
            acc = 0.0  # number of correct predictions in this epoch
            with torch.no_grad():
                val_bar = tqdm(validate_loader, file=sys.stdout)
                for val_data in val_bar:
                    val_imgs, val_labels = val_data
                    outputs = net(val_imgs.to(device))  # in eval mode the model returns only the main output
                    predict_y = torch.max(outputs, dim=1)[1]
                    acc += torch.eq(predict_y, val_labels.to(device)).sum().item()

            val_accurate = acc / val_num
            print('[epoch %d] train_loss: %.3f val_accuracy: %.3f' %
                  (epoch + 1, running_loss / train_steps, val_accurate))

            if val_accurate > best_acc:
                best_acc = val_accurate
                torch.save(net, "./googleNet.pth")  # saves the whole model object, not just the state_dict

        print('Finished Training')


    if __name__ == '__main__':
        main()

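4. Model prediction

Create a predict.py file for prediction. The input image is preprocessed and converted to a tensor. img = torch.unsqueeze(img, dim=0) adds a dimension of size 1 at the front of the image tensor img, changing its shape from [channels, height, width] to [1, channels, height, width]. The model is then loaded and used for prediction, and the result is printed and visualized.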
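The last step of prediction, turning the network output into a class name and a probability, is just a softmax followed by an argmax and a dictionary lookup. A minimal plain-Python sketch follows; the logits are made-up example values and the helper names are hypothetical.

```python
import math


def softmax(logits):
    """Convert raw logits into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_label(logits, class_indict):
    """Return (class_name, probability) for the most likely class."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    # Keys loaded from class_indices.json are strings, hence str(best)
    return class_indict[str(best)], probs[best]


class_indict = {"0": "daisy", "1": "dandelion", "2": "roses", "3": "sunflowers", "4": "tulips"}
name, prob = predict_label([0.1, 0.2, 3.0, 0.0, -1.0], class_indict)
print(f"class: {name} prob: {prob:.3}")
```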

    import os
    import json

    import torch
    from PIL import Image
    from torchvision import transforms
    import matplotlib.pyplot as plt

    from model import GoogLeNet  # the class must be importable to unpickle the saved model


    def main():
        device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

        data_transform = transforms.Compose(
            [transforms.Resize((224, 224)),
             transforms.ToTensor(),
             transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

        # load image
        img = Image.open("./2678588376_6ca64a4a54_n.jpg")
        plt.imshow(img)
        # [C, H, W]
        img = data_transform(img)
        # expand batch dimension: [N, C, H, W]
        img = torch.unsqueeze(img, dim=0)

        # read class_indict
        with open("./class_indices.json", "r") as f:
            class_indict = json.load(f)

        # load model: train.py saved the whole model object with torch.save(net, ...),
        # so torch.load returns the model itself (adjust the path to where the file was saved).
        # On PyTorch versions where torch.load defaults to weights_only=True,
        # pass weights_only=False to load a fully pickled model.
        model = torch.load("./googleNet.pth", map_location=device)
        model.eval()

        with torch.no_grad():
            # predict class
            output = torch.squeeze(model(img.to(device))).cpu()
            predict = torch.softmax(output, dim=0)
            predict_class = torch.argmax(predict).numpy()

        print_result = f"class: {class_indict[str(predict_class)]} prob: {predict[predict_class].numpy():.3}"
        plt.title(print_result)
        for i in range(len(predict)):
            print(f"class: {class_indict[str(i)]:10} prob: {predict[i].numpy():.3}")
        plt.show()


    if __name__ == '__main__':
        main()

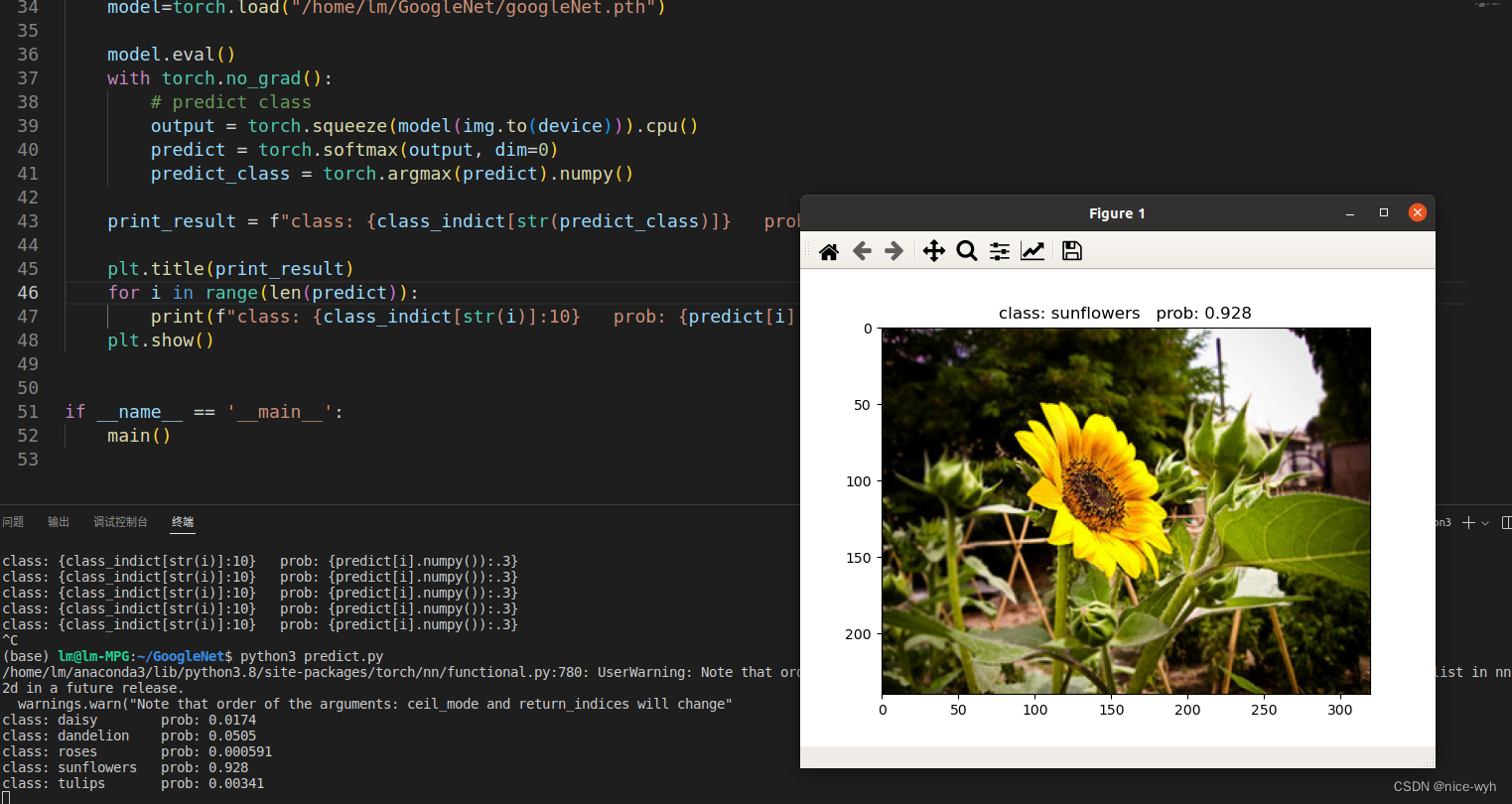

Prediction results

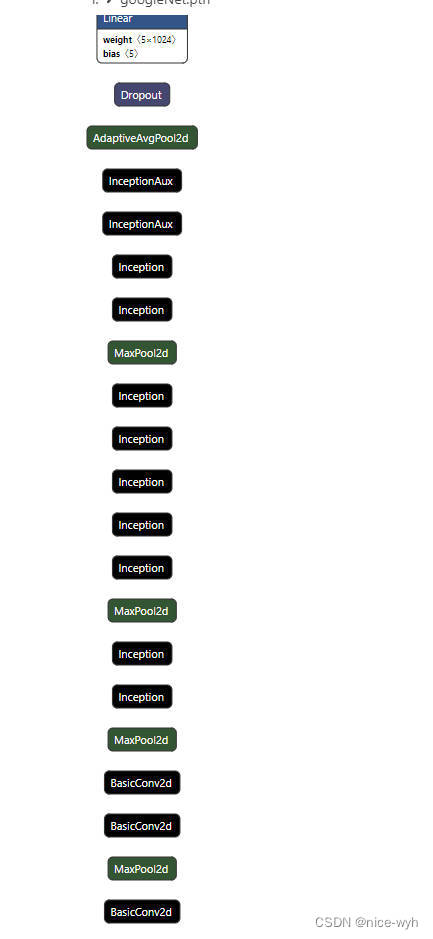

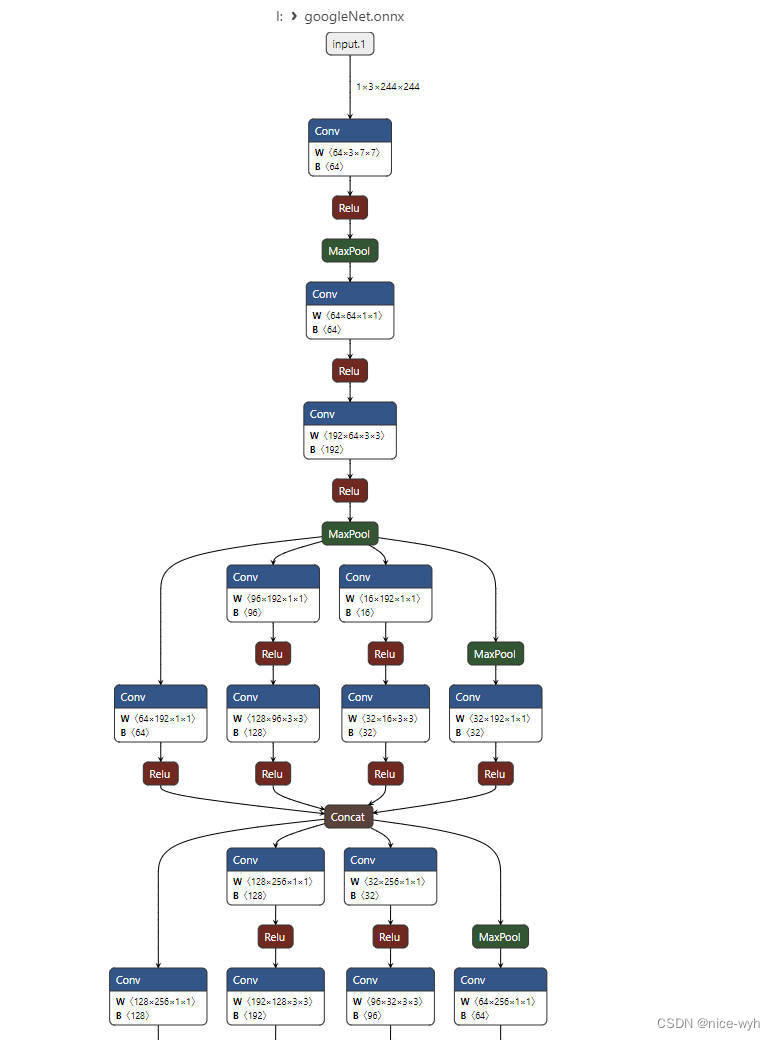

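5. Model visualization

Import the generated pth file into the Netron tool to visualize it.

The result is hard to read, so convert the model to the ONNX format, which is widely used for deployment on embedded devices.

Write onnx.py: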

    import torch

    from model import GoogLeNet  # the class must be importable to unpickle the saved model

    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

    # train.py saved the whole model object, so torch.load returns the model itself
    # (adjust the path to where the file was saved)
    model = torch.load("./googleNet.pth", map_location=device)
    model.eval()

    # Dummy input with the same shape as the training images: 1 x 3 x 224 x 224
    example = torch.ones(1, 3, 224, 224)
    example = example.to(device)

    torch.onnx.export(model, example, "googleNet.onnx", verbose=True, opset_version=11)

Import the generated onnx file into Netron; this visualization is much clearer.

6. Model improvement

Removing the learning-rate update turned out to improve accuracy (from 70% to 83%), so delete the corresponding part of train.py.
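One plausible explanation, offered as an assumption rather than something verified in the original experiment: StepLR multiplies the learning rate by gamma every step_size epochs, and gamma defaults to 0.1. With step_size=1 the learning rate therefore shrinks tenfold every epoch and is effectively zero after a few epochs. The sketch below reproduces that schedule in plain Python; `step_lr` is a hypothetical helper, not the PyTorch class.

```python
def step_lr(initial_lr, epoch, step_size=1, gamma=0.1):
    """Learning rate after `epoch` completed epochs under a StepLR-style schedule."""
    return initial_lr * gamma ** (epoch // step_size)


# Schedule used in train.py: lr=0.0003, step_size=1, default gamma
for epoch in range(5):
    print(epoch, step_lr(3e-4, epoch))

# A gentler, hypothetical alternative: decay once every 10 epochs
print(step_lr(3e-4, 25, step_size=10))
```

If some decay is still wanted, a larger step_size or a gamma closer to 1 would be a milder option to try instead of removing the scheduler entirely.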

Other methods will be added later.
