Preface:

Previous post: Cloud Native | kubernetes | First steps with kubekey, the kubernetes cluster deployment tool (using kubekey on CentOS 7), from 晚风_END's CSDN blog

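In the previous post I used kubekey to deploy a simple, non-highly-available kubernetes cluster with a single etcd instance. After some more study I found that the deployment can be simplified by skipping part of the download step (mainly the download of the kubernetes components). The trade-off is that the kubernetes version is pinned to 1.23.16, the version set in the manifest and reported by the nodes later in this post. The etcd cluster can be deployed as a production-style external cluster, the apiserver and the other control-plane components are highly available as well, and the deployment itself is very simple, so the result is quite pleasant to work with.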

1.

Offline installation package

Note: this offline package targets CentOS 7 and has been verified across the whole CentOS 7 series; it should also work on some openEuler releases.

Link: https://pan.baidu.com/s/1d4YR_a244iZj5aj2DJLU2w?pwd=kkey

Extraction code: kkey

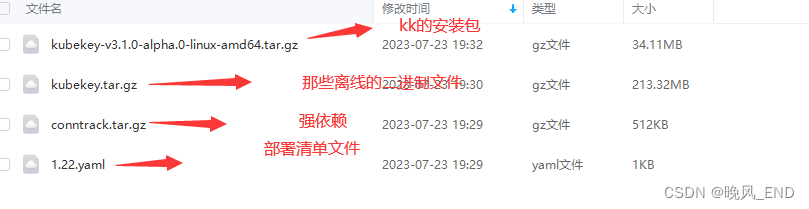

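The package contains roughly the following files:

The first needs little explanation: it is the kubekey package. After extracting it, check whether the binary has execute permission, and add it if it does not.

The second holds the kubernetes component binaries. Extract it directly into the /root directory.

The third holds hard dependencies. Extract it, change into the extracted directory, and run rpm -ivh *

The fourth is the deployment manifest. Fill in the IP addresses and the server passwords for your own environment; almost nothing else needs to change.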

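After that you can run the deployment. It still pulls some images, by default from the official KubeSphere image source. If pulling is too slow, run export KKZONE=cn first, and the images are pulled from Alibaba Cloud instead.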
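The four steps above can be sketched as one script. This is only a sketch under stated assumptions, not the package's own installer: the archive names, the `deps` directory and the manifest name `config-sample.yaml` are hypothetical placeholders (the real names are only visible in the screenshot), and the archives are assumed to be gzip-compressed tarballs. The `kk create cluster -f` command and the `KKZONE` variable are KubeKey's own. The script defaults to a dry run that only prints the commands.

```shell
#!/bin/sh
# Sketch of the offline install steps. All archive names, the deps directory and
# the manifest name are hypothetical placeholders; substitute the real ones.
set -eu

DRY_RUN="${DRY_RUN:-1}"             # 1 = print commands only, 0 = execute them
KK_ARCHIVE="kubekey.tar.gz"         # hypothetical: the kubekey package
BIN_ARCHIVE="kube-binaries.tar.gz"  # hypothetical: kubernetes component binaries
DEPS_ARCHIVE="deps.tar.gz"          # hypothetical: RPM dependencies
DEPS_DIR="deps"                     # hypothetical: directory the RPM archive unpacks to
CONFIG="config-sample.yaml"         # hypothetical: the deployment manifest

run() {
  # Echo the command, and execute it only when DRY_RUN=0.
  echo "+ $*"
  if [ "$DRY_RUN" = "0" ]; then "$@"; fi
}

deploy() {
  run tar -xzf "$KK_ARCHIVE"               # 1. unpack kubekey
  run chmod +x ./kk                        #    make sure kk is executable
  run tar -xzf "$BIN_ARCHIVE" -C /root     # 2. component binaries go under /root
  run tar -xzf "$DEPS_ARCHIVE"             # 3. unpack the RPM dependencies
  run sh -c "cd $DEPS_DIR && rpm -ivh *"   #    and install all of them
  export KKZONE=cn                         # optional: pull images from Alibaba Cloud
  run ./kk create cluster -f "$CONFIG"     # 4. deploy with the edited manifest
}

# Dry run by default; set DRY_RUN=0 in the environment to really deploy.
deploy
```

Running it as-is prints the six commands for review; running it with DRY_RUN=0 as root from /root executes them in order.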

2.

Walking through the deployment manifest

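The full file is listed at the end of this section. The parts that matter are the hosts and roleGroups sections.

Under hosts, write one entry per node. I tested with four VMware virtual machines, each with 4 GB of memory and 2 CPUs. Fill in the IP addresses and passwords for your own environment.

The user is root, mainly to avoid failures: root has the highest privileges, and a deployment is not the place to get fancy with an ordinary user (yum installs are never run as an ordinary user either, for the same reason).

In roleGroups, the three nodes 11, 12 and 13 (node1 to node3) are the control-plane nodes and also the members of the etcd cluster.

High availability is provided by haproxy. I have not yet worked out the implementation details; the pod list in section 3 shows a single haproxy pod, haproxy-node4, running on the worker node.

The detailed installation and deployment logs are in /root/kubekey/logs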

    apiVersion: kubekey.kubesphere.io/v1alpha2
    kind: Cluster
    metadata:
      name: sample
    spec:
      hosts:
      - {name: node1, address: 192.168.123.11, internalAddress: 192.168.123.11, user: root, password: "your-password"}
      - {name: node2, address: 192.168.123.12, internalAddress: 192.168.123.12, user: root, password: "your-password"}
      - {name: node3, address: 192.168.123.13, internalAddress: 192.168.123.13, user: root, password: "your-password"}
      - {name: node4, address: 192.168.123.14, internalAddress: 192.168.123.14, user: root, password: "your-password"}
      roleGroups:
        etcd:
        - node1
        - node2
        - node3
        control-plane:
        - node1
        - node2
        - node3
        worker:
        - node4
      controlPlaneEndpoint:
        ## Internal loadbalancer for apiservers
        internalLoadbalancer: haproxy
        domain: lb.kubesphere.local
        address: ""
        port: 6443
      kubernetes:
        version: v1.23.16
        clusterName: cluster.local
        autoRenewCerts: true
        containerManager: docker
      etcd:
        type: kubekey
      network:
        plugin: calico
        kubePodsCIDR: 10.244.0.0/18
        kubeServiceCIDR: 10.96.0.0/18
        ## multus support. https://github.com/k8snetworkplumbingwg/multus-cni
        multusCNI:
          enabled: false
      registry:
        privateRegistry: ""
        namespaceOverride: ""
        registryMirrors: []
        insecureRegistries: []
      addons: []
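As a quick sanity check on the two /18 ranges in the manifest: a /18 prefix leaves 32 - 18 = 14 host bits, so each range holds 2^14 = 16384 addresses, and kubePodsCIDR covers 10.244.0.0 through 10.244.63.255. The helper name `cidr_size` is made up for this illustration.

```shell
#!/bin/sh
# Number of addresses in an IPv4 CIDR range: 2^(32 - prefix length).
cidr_size() {
  prefix="${1#*/}"                   # keep only the part after the slash
  echo $(( 1 << (32 - $prefix) ))
}

cidr_size 10.244.0.0/18   # kubePodsCIDR from the manifest, prints 16384
cidr_size 10.96.0.0/18    # kubeServiceCIDR from the manifest, prints 16384
```

The pod addresses in the status output of section 3 (10.244.28.x) fall inside that pod range.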

3.

Checking the state of the finished deployment

Component status (this is the output that kubectl get cs prints):

    Warning: v1 ComponentStatus is deprecated in v1.19+
    NAME                 STATUS    MESSAGE             ERROR
    controller-manager   Healthy   ok
    scheduler            Healthy   ok
    etcd-2               Healthy   {"health":"true"}
    etcd-1               Healthy   {"health":"true"}
    etcd-0               Healthy   {"health":"true"}

    [root@centos1 ~]# kubectl get po -A -owide
    NAMESPACE     NAME                                       READY   STATUS    RESTARTS   AGE    IP               NODE    NOMINATED NODE   READINESS GATES
    kube-system   calico-kube-controllers-84897d7cdf-hrj4f   1/1     Running   0          152m   10.244.28.2      node3   <none>           <none>
    kube-system   calico-node-2m7hp                          1/1     Running   0          152m   192.168.123.11   node1   <none>           <none>
    kube-system   calico-node-5ztjk                          1/1     Running   0          152m   192.168.123.14   node4   <none>           <none>
    kube-system   calico-node-96dmb                          1/1     Running   0          152m   192.168.123.13   node3   <none>           <none>
    kube-system   calico-node-rqp2p                          1/1     Running   0          152m   192.168.123.12   node2   <none>           <none>
    kube-system   coredns-b7c47bcdc-bbxck                    1/1     Running   0          152m   10.244.28.3      node3   <none>           <none>
    kube-system   coredns-b7c47bcdc-qtvhf                    1/1     Running   0          152m   10.244.28.1      node3   <none>           <none>
    kube-system   haproxy-node4                              1/1     Running   0          152m   192.168.123.14   node4   <none>           <none>
    kube-system   kube-apiserver-node1                       1/1     Running   0          152m   192.168.123.11   node1   <none>           <none>
    kube-system   kube-apiserver-node2                       1/1     Running   0          152m   192.168.123.12   node2   <none>           <none>
    kube-system   kube-apiserver-node3                       1/1     Running   0          152m   192.168.123.13   node3   <none>           <none>
    kube-system   kube-controller-manager-node1              1/1     Running   0          152m   192.168.123.11   node1   <none>           <none>
    kube-system   kube-controller-manager-node2              1/1     Running   0          152m   192.168.123.12   node2   <none>           <none>
    kube-system   kube-controller-manager-node3              1/1     Running   0          152m   192.168.123.13   node3   <none>           <none>
    kube-system   kube-proxy-649mn                           1/1     Running   0          152m   192.168.123.14   node4   <none>           <none>
    kube-system   kube-proxy-7q7ts                           1/1     Running   0          152m   192.168.123.13   node3   <none>           <none>
    kube-system   kube-proxy-dmd7v                           1/1     Running   0          152m   192.168.123.12   node2   <none>           <none>
    kube-system   kube-proxy-fpb6z                           1/1     Running   0          152m   192.168.123.11   node1   <none>           <none>
    kube-system   kube-scheduler-node1                       1/1     Running   0          152m   192.168.123.11   node1   <none>           <none>
    kube-system   kube-scheduler-node2                       1/1     Running   0          152m   192.168.123.12   node2   <none>           <none>
    kube-system   kube-scheduler-node3                       1/1     Running   0          152m   192.168.123.13   node3   <none>           <none>
    kube-system   nodelocaldns-565pz                         1/1     Running   0          152m   192.168.123.12   node2   <none>           <none>
    kube-system   nodelocaldns-dpwlx                         1/1     Running   0          152m   192.168.123.13   node3   <none>           <none>
    kube-system   nodelocaldns-ndlbw                         1/1     Running   0          152m   192.168.123.14   node4   <none>           <none>
    kube-system   nodelocaldns-r8gjl                         1/1     Running   0          152m   192.168.123.11   node1   <none>           <none>

    [root@centos1 ~]# kubectl get no -owide
    NAME    STATUS   ROLES                  AGE    VERSION    INTERNAL-IP      EXTERNAL-IP   OS-IMAGE                KERNEL-VERSION           CONTAINER-RUNTIME
    node1   Ready    control-plane,master   152m   v1.23.16   192.168.123.11   <none>        CentOS Linux 7 (Core)   3.10.0-1062.el7.x86_64   docker://20.10.8
    node2   Ready    control-plane,master   152m   v1.23.16   192.168.123.12   <none>        CentOS Linux 7 (Core)   3.10.0-1062.el7.x86_64   docker://20.10.8
    node3   Ready    control-plane,master   152m   v1.23.16   192.168.123.13   <none>        CentOS Linux 7 (Core)   3.10.0-1062.el7.x86_64   docker://20.10.8
    node4   Ready    worker                 152m   v1.23.16   192.168.123.14   <none>        CentOS Linux 7 (Core)   3.10.0-1062.el7.x86_64   docker://20.10.8
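Scanning these listings by eye gets tedious as the cluster grows. A minimal sketch of two filters that print only the entries needing attention; the helper names `pods_not_running` and `nodes_not_ready` are made up for this illustration, and both expect kubectl output produced with --no-headers on stdin.

```shell
#!/bin/sh
# Both helpers read `kubectl ... --no-headers` output on stdin.

# Print namespace/name of every pod whose STATUS column (4th field of
# `kubectl get po -A`) is neither Running nor Completed.
pods_not_running() {
  awk '$4 != "Running" && $4 != "Completed" { print $1 "/" $2 }'
}

# Print the name of every node whose STATUS column (2nd field of
# `kubectl get no`) is not exactly Ready.
nodes_not_ready() {
  awk '$2 != "Ready" { print $1 }'
}

# Usage on a live cluster:
#   kubectl get po -A --no-headers | pods_not_running
#   kubectl get no --no-headers | nodes_not_ready
```

For the cluster state shown above, both filters would print nothing, since every pod is Running and every node is Ready.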

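After shutting down node 12, the kubernetes cluster keeps running normally. (Node 11 was not shut down because it is the node I manage the cluster from: the cluster config files had not been copied to the other nodes.)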
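A likely explanation for why losing one control-plane node is harmless: etcd needs a majority of its members to stay up, and the other two apiserver instances keep serving. The arithmetic for the three etcd members in roleGroups is sketched here; the helper names `etcd_quorum` and `etcd_tolerance` are made up for this illustration.

```shell
#!/bin/sh
# Majority-quorum arithmetic for an etcd cluster with n members.
etcd_quorum()    { echo $(( $1 / 2 + 1 )); }    # members needed for a majority
etcd_tolerance() { echo $(( ($1 - 1) / 2 )); }  # members that may fail

etcd_quorum 3      # node1, node2, node3 -> prints 2
etcd_tolerance 3   # prints 1
```

With three members the cluster tolerates exactly one failure, so shutting down a second control-plane node at the same time would be expected to cost etcd its quorum.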