Table of Contents

- 1. Building a unidirectional RNN with nn.RNN

- 2. Building a unidirectional LSTM with nn.LSTM

- 3. Recommended references

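1. Building a unidirectional RNN with nn.RNN

PyTorch documentation entry for torch.nn.RNN: torch.nn.RNN(*args, **kwargs)

The usage of nn.RNN and its input and output parameters are easiest to follow in code: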

import torch

import torch.nn as nn

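# Multi-layer unidirectional RNN (rnn_layers stacked recurrent layers)

embed_dim = 5 # feature dimension of each input element, e.g. each word is represented by a feature vector of length 5

hidden_dim = 6 # feature dimension of the hidden state, e.g. each word is represented in the hidden layer by a feature vector of length 6

rnn_layers = 4 # number of stacked recurrent layers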

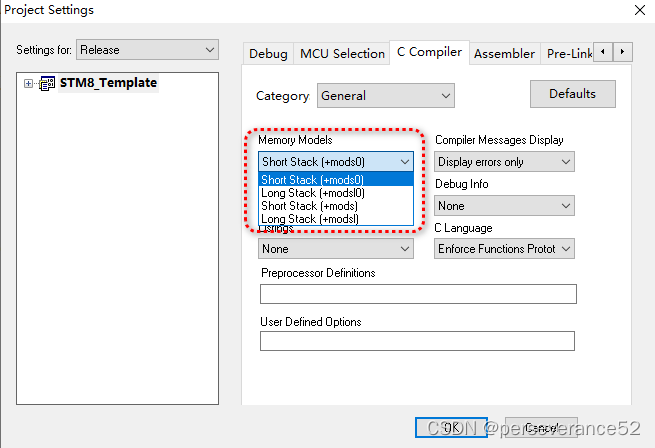

rnn = nn.RNN(input_size=embed_dim, hidden_size=hidden_dim, num_layers=rnn_layers, batch_first=True)

# Input

batch_size = 2

sequence_length = 3 # length of the input sequence, e.g. "i love you" has length 3; each word is represented by a feature vector of length embed_dim

input = torch.randn(batch_size, sequence_length, embed_dim)

h0 = torch.randn(rnn_layers, batch_size, hidden_dim)

# output holds the hidden states of the last layer at every time step

# hn holds the hidden state of every layer at the last time step, i.e. the hidden state for the word "you"

output, hn = rnn(input, h0)

print(f"output = {output}")

print(f"hn = {hn}")

print(f"output.shape = {output.shape}") # torch.Size([2, 3, 6]) [batch_size, sequence_length, hidden_dim]

print(f"hn.shape = {hn.shape}") # torch.Size([4, 2, 6]) [rnn_layers, batch_size, hidden_dim]

"""

output = tensor([[[-0.3727, -0.2137, -0.3619, -0.6116, -0.1483, 0.8292],

[ 0.1138, -0.6310, -0.3897, -0.5275, 0.2012, 0.3399],

[-0.0522, -0.5991, -0.3114, -0.7089, 0.3824, 0.1903]],

[[ 0.1370, -0.6037, 0.3906, -0.5222, 0.8498, 0.8887],

[-0.3463, -0.3293, -0.1874, -0.7746, 0.2287, 0.1343],

[-0.2588, -0.4145, -0.2608, -0.3799, 0.4464, 0.1960]]],

grad_fn=<TransposeBackward1>)

hn = tensor([[[-0.2892, 0.7568, 0.4635, -0.2106, -0.0123, -0.7278],

[ 0.3492, -0.3639, -0.4249, -0.6626, 0.7551, 0.9312]],

[[ 0.0154, 0.0190, 0.3580, -0.1975, -0.1185, 0.3622],

[ 0.0905, 0.6483, -0.1252, 0.3903, 0.0359, -0.3011]],

[[-0.2833, -0.3383, 0.2421, -0.2168, -0.6694, -0.5462],

[ 0.2976, 0.0724, -0.0116, -0.1295, -0.6324, -0.0302]],

[[-0.0522, -0.5991, -0.3114, -0.7089, 0.3824, 0.1903],

[-0.2588, -0.4145, -0.2608, -0.3799, 0.4464, 0.1960]]],

grad_fn=<StackBackward0>)

output.shape = torch.Size([2, 3, 6])

hn.shape = torch.Size([4, 2, 6])

"""

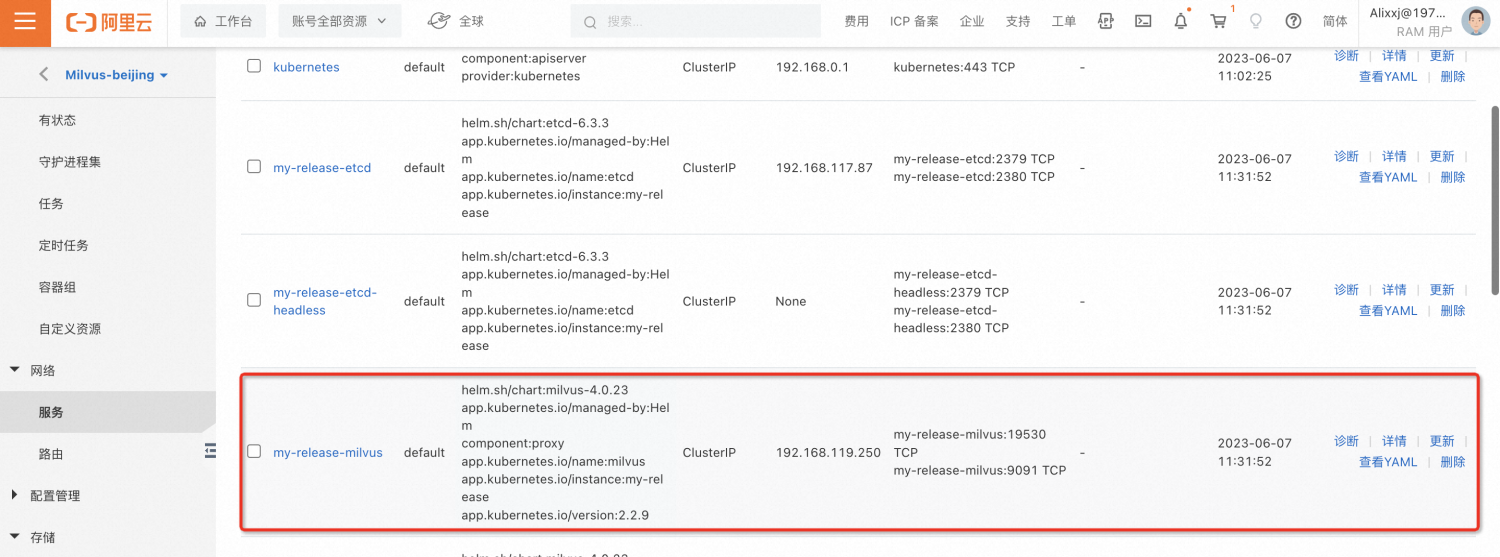

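Pay particular attention to the second return value of nn.RNN: hn holds the hidden state of every layer at the last time step. This is easier to see in the printed values than in words: the last block of hn (the final layer) contains the same rows as the last time step of each sequence in output, which are the values marked with red boxes in the figure. In other words, output[:, -1, :] = hn[-1, :, :].

Here hn stores, for each of the four stacked layers, the hidden state at the last time step. Taking the input "i love you" as an example, hn stores the hidden states for the word "you".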
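The relationship between output and hn can also be checked programmatically. The following is a minimal sketch, not code from the original post: it rebuilds the same 4-layer nn.RNN, then re-runs the recurrence by hand from the module's own parameters (weight_ih_l{k}, weight_hh_l{k}, bias_ih_l{k}, bias_hh_l{k}), assuming the default tanh nonlinearity and no dropout. The variable names (x, manual_hn, and so on), the fixed seed, and the tolerance of 1e-5 are choices made for this illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # fixed seed so repeated runs behave the same

embed_dim, hidden_dim, rnn_layers = 5, 6, 4
batch_size, sequence_length = 2, 3

rnn = nn.RNN(input_size=embed_dim, hidden_size=hidden_dim,
             num_layers=rnn_layers, batch_first=True)
x = torch.randn(batch_size, sequence_length, embed_dim)
h0 = torch.randn(rnn_layers, batch_size, hidden_dim)

with torch.no_grad():
    output, hn = rnn(x, h0)

    # Check 1: the last time step of output is the final state of the last layer.
    last_step_matches = torch.allclose(output[:, -1, :], hn[-1])

    # Check 2: unroll the recurrence by hand, one layer at a time.
    layer_input = x  # the input of layer k is the output sequence of layer k-1
    manual_hn = []
    for k in range(rnn_layers):
        w_ih = getattr(rnn, f"weight_ih_l{k}")
        w_hh = getattr(rnn, f"weight_hh_l{k}")
        b_ih = getattr(rnn, f"bias_ih_l{k}")
        b_hh = getattr(rnn, f"bias_hh_l{k}")
        h = h0[k]
        steps = []
        for t in range(sequence_length):
            # h_t = tanh(x_t W_ih^T + b_ih + h_{t-1} W_hh^T + b_hh)
            h = torch.tanh(layer_input[:, t, :] @ w_ih.T + b_ih + h @ w_hh.T + b_hh)
            steps.append(h)
        layer_input = torch.stack(steps, dim=1)  # [batch, seq, hidden]
        manual_hn.append(h)                      # final state of layer k
    manual_hn = torch.stack(manual_hn, dim=0)    # [layers, batch, hidden]

    manual_hn_matches = torch.allclose(manual_hn, hn, atol=1e-5)
    manual_output_matches = torch.allclose(layer_input, output, atol=1e-5)

print(f"output[:, -1, :] == hn[-1]: {last_step_matches}")
print(f"hand-unrolled hn matches:   {manual_hn_matches}")
print(f"hand-unrolled output matches: {manual_output_matches}")
print(f"hn.shape = {hn.shape}")  # torch.Size([4, 2, 6])
```

Only hn[-1] lines up with output. The entries hn[0] to hn[2] are the final states of the lower layers, which never appear in output because output exposes the top layer only.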

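2. Building a unidirectional LSTM with nn.LSTM

PyTorch documentation entry for torch.nn.LSTM: torch.nn.LSTM(*args, **kwargs)

The usage of nn.LSTM and its input and output parameters are easiest to follow in code: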

import torch

import torch.nn as nn

batch_size = 4

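seq_len = 3 # length of the input sequence

embed_dim = 5 # feature dimension of each input element

hidden_size = 5 * 2 # feature dimension of the hidden state; as a rule of thumb from practice, hidden_size = embed_dim * 2 is a reasonable starting point

num_layers = 2 # number of LSTM layers, typically 1 to 4; for an introduction to multi-layer LSTMs see https://blog.csdn.net/weixin_41041772/article/details/88032093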

lstm = nn.LSTM(input_size=embed_dim, hidden_size=hidden_size, num_layers=num_layers, batch_first=True)

# h0 and c0 can be omitted; both default to zeros

input = torch.randn(batch_size, seq_len, embed_dim)

"""

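output holds the hidden states of the last layer at every time step

hn holds the hidden state of every layer at the last time step, i.e. the hidden state for the word "you"; its shape therefore does not depend on the sentence length seq_len

hn[-1] is the hidden state of the last layer at the last time step, i.e. the final output of the LSTM

cn holds the cell state of every layer at the last word of the sentence; its shape therefore does not depend on seq_len either

Note that output[:, -1, :] = hn[-1,:,:]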

"""

output, (hn, cn) = lstm(input)

print(f"output.shape = {output.shape}") # torch.Size([4, 3, 10])

print(f"hn.shape = {hn.shape}") # torch.Size([2, 4, 10])

print(f"cn.shape = {cn.shape}") # torch.Size([2, 4, 10])

print(f"output = {output}")

print(f"hn = {hn}")

print(f"output[:, -1, :] = {output[:, -1, :]}")

print(f"hn[-1,:,:] = {hn[-1,:,:]}")

"""

output = tensor([[[ 0.0447, 0.0111, 0.0292, 0.0692, -0.0547, -0.0120, -0.0202, -0.0243, 0.1216, 0.0643],

[ 0.0780, 0.0279, 0.0231, 0.1061, -0.0819, -0.0027, -0.0269, -0.0509, 0.1800, 0.0921],

[ 0.0993, 0.0160, 0.0516, 0.1402, -0.1146, -0.0177, -0.0607, -0.0715, 0.2110, 0.0954]],

[[ 0.0542, -0.0053, 0.0415, 0.0899, -0.0561, -0.0376, -0.0327, -0.0276, 0.1159, 0.0545],

[ 0.0819, -0.0015, 0.0640, 0.1263, -0.1021, -0.0502, -0.0495, -0.0464, 0.1814, 0.0750],

[ 0.0914, 0.0034, 0.0558, 0.1418, -0.1327, -0.0643, -0.0616, -0.0674, 0.2195, 0.0886]],

[[ 0.0552, -0.0006, 0.0351, 0.0864, -0.0486, -0.0192, -0.0305, -0.0289, 0.1103, 0.0554],

[ 0.0835, -0.0099, 0.0415, 0.1396, -0.0758, -0.0829, -0.0616, -0.0604, 0.1740, 0.0828],

[ 0.1202, -0.0113, 0.0570, 0.1608, -0.0836, -0.0801, -0.0792, -0.0874, 0.1923, 0.0829]],

[[ 0.0115, -0.0026, 0.0267, 0.0747, -0.0867, -0.0250, -0.0199, -0.0154, 0.1158, 0.0649],

[ 0.0628, 0.0003, 0.0297, 0.1191, -0.1028, -0.0342, -0.0509, -0.0496, 0.1759, 0.0831],

[ 0.0569, 0.0105, 0.0158, 0.1300, -0.1367, -0.0207, -0.0514, -0.0629, 0.2029, 0.1042]]], grad_fn=<TransposeBackward0>)

hn = tensor([[[-0.1933, -0.0058, -0.1237, 0.0348, -0.1394, 0.2403, 0.1591, -0.1143, 0.1211, -0.1971],

[-0.2387, 0.0433, -0.0296, 0.0877, -0.1198, 0.1919, 0.0832, 0.0738, 0.1907, -0.1807],

[-0.2174, 0.0721, -0.0447, 0.1081, -0.0520, 0.2519, 0.4040, -0.0033, 0.1378, -0.2930],

[-0.2130, -0.0404, -0.0588, -0.1346, -0.1865, 0.1032, -0.0269, 0.0265, -0.0664, -0.1800]],

[[ 0.0993, 0.0160, 0.0516, 0.1402, -0.1146, -0.0177, -0.0607, -0.0715, 0.2110, 0.0954],

[ 0.0914, 0.0034, 0.0558, 0.1418, -0.1327, -0.0643, -0.0616, -0.0674, 0.2195, 0.0886],

[ 0.1202, -0.0113, 0.0570, 0.1608, -0.0836, -0.0801, -0.0792, -0.0874, 0.1923, 0.0829],

[ 0.0569, 0.0105, 0.0158, 0.1300, -0.1367, -0.0207, -0.0514, -0.0629, 0.2029, 0.1042]]], grad_fn=<StackBackward0>)

Verify that output[:, -1, :] = hn[-1,:,:]

output[:, -1, :] = tensor([[ 0.0993, 0.0160, 0.0516, 0.1402, -0.1146, -0.0177, -0.0607, -0.0715,0.2110, 0.0954],

[ 0.0914, 0.0034, 0.0558, 0.1418, -0.1327, -0.0643, -0.0616, -0.0674, 0.2195, 0.0886],

[ 0.1202, -0.0113, 0.0570, 0.1608, -0.0836, -0.0801, -0.0792, -0.0874, 0.1923, 0.0829],

[ 0.0569, 0.0105, 0.0158, 0.1300, -0.1367, -0.0207, -0.0514, -0.0629, 0.2029, 0.1042]], grad_fn=<SliceBackward0>)

hn[-1,:,:] = tensor([[ 0.0993, 0.0160, 0.0516, 0.1402, -0.1146, -0.0177, -0.0607, -0.0715,0.2110, 0.0954],

[ 0.0914, 0.0034, 0.0558, 0.1418, -0.1327, -0.0643, -0.0616, -0.0674, 0.2195, 0.0886],

[ 0.1202, -0.0113, 0.0570, 0.1608, -0.0836, -0.0801, -0.0792, -0.0874, 0.1923, 0.0829],

[ 0.0569, 0.0105, 0.0158, 0.1300, -0.1367, -0.0207, -0.0514, -0.0629, 0.2029, 0.1042]], grad_fn=<SliceBackward0>)

"""
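Instead of comparing the printed tensors by eye, the same facts can be asserted with torch.allclose. The sketch below is an illustration under the same hyperparameters as above, not part of the original post: it checks that omitting (h0, c0) gives the same result as passing explicit zero tensors, that output[:, -1, :] equals hn[-1], and that a longer input changes only the shape of output. The names x, x_long, zeros and the extra 4 time steps are choices made for this example.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # fixed seed so repeated runs behave the same

batch_size, seq_len = 4, 3
embed_dim, hidden_size, num_layers = 5, 10, 2

lstm = nn.LSTM(input_size=embed_dim, hidden_size=hidden_size,
               num_layers=num_layers, batch_first=True)
x = torch.randn(batch_size, seq_len, embed_dim)

with torch.no_grad():
    # 1) Omitting (h0, c0) should match passing explicit zero tensors.
    out_default, (hn_default, cn_default) = lstm(x)
    zeros = torch.zeros(num_layers, batch_size, hidden_size)
    out_zero, (hn_zero, cn_zero) = lstm(x, (zeros, zeros))
    same_as_zero_init = (
        torch.allclose(out_default, out_zero)
        and torch.allclose(hn_default, hn_zero)
        and torch.allclose(cn_default, cn_zero)
    )

    # 2) The last time step of output is the final hidden state of the top layer.
    last_step_matches = torch.allclose(out_default[:, -1, :], hn_default[-1])

    # 3) A longer sequence lengthens output, but hn and cn keep their shapes.
    x_long = torch.randn(batch_size, seq_len + 4, embed_dim)
    out_long, (hn_long, cn_long) = lstm(x_long)

print(f"default init == zero init: {same_as_zero_init}")
print(f"output[:, -1, :] == hn[-1]: {last_step_matches}")
print(f"out_long.shape = {out_long.shape}")  # torch.Size([4, 7, 10])
print(f"hn_long.shape = {hn_long.shape}")    # torch.Size([2, 4, 10])
print(f"cn_long.shape = {cn_long.shape}")    # torch.Size([2, 4, 10])
```

Note that batch_first=True only affects the layout of the input and of output. hn and cn keep the layout [num_layers, batch_size, hidden_size] either way, which is why hn[-1] indexes the layer dimension.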

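3. Recommended references

For an introduction to multi-layer LSTMs, see the blog post "RNN之多层LSTM理解:输入,输出,时间步,隐藏节点数,层数".

For the principles of RNNs and a from-scratch reproduction of the PyTorch implementation, see the video "PyTorch RNN的原理及其手写复现".

For the principles of LSTMs and a from-scratch reproduction of the PyTorch implementation, see the video "PyTorch LSTM和LSTMP的原理及其手写复现".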
