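Preface: I have recently been working on text generation. While surveying the field, I found that different text generation scenarios (machine translation, dialogue generation, image captioning, data-to-text, etc.) use different objective evaluation metrics. Many articles online already cover these metrics, and this post is itself a summary of existing resources. However, most of those articles systematically review the principles behind the metrics and rarely include matching code. I believe theory and practice should go together: code helps us understand these metrics better, and we ultimately choose which metrics to evaluate our task with based on what each metric focuses on. This post briefly summarizes how these objective metrics work and gives code examples.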

目录

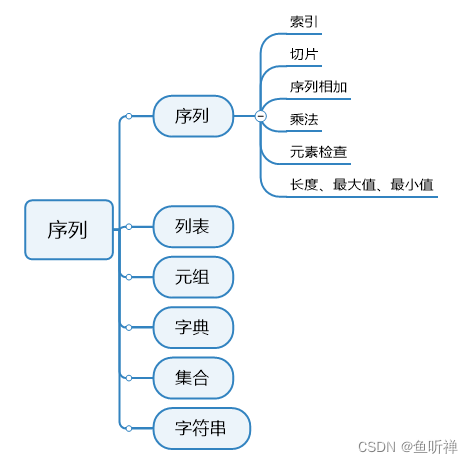

- 基于词重叠的评价指标

- BLEU(Bilingual Evaluation Understudy)

- ROUGE(Recall-Oriented Understudy for Gisting Evaluation)

- METEOR

- NIST(National Institute of standards and Technology)

- distinct

- 基于词向量的评价指标

- Embedding Average Score

- Greedy Matching Score

- Embedding Average Score

- 基于语言模型的评价指标

- BertScore

- BARTScore

- MoverScore

- BELURT

- Perplexity(困惑度)

- 基于距离的评价指标

- TER(Translation Edit Rate )

- 基于学习的评价指标

- 其他评价指标

- CIDEr(Translation Edit Rate )

- SPICE(Semantic Propositional Image Caption Evaluation)

- 参考资料

Word-overlap-based metrics

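Word-overlap-based metrics measure how similar the word distributions of two texts are. They mainly include BLEU, ROUGE, METEOR, NIST and distinct. BLEU and METEOR are commonly used for machine translation, and ROUGE for automatic summarization.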

BLEU(Bilingual Evaluation Understudy)

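Precision-oriented. BLEU computes the proportion of n-grams in the generated text that also appear in the reference text(s), and applies a brevity penalty to outputs that are too short. It is designed for corpus-level evaluation and performs poorly on individual sentences.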

Paper: Bleu: a Method for Automatic Evaluation of Machine Translation
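The sketch below is a minimal pure-Python version of sentence-level BLEU that illustrates the clipped-precision-plus-brevity-penalty idea. It assumes whitespace tokenization, uniform n-gram weights and no smoothing, so any zero n-gram precision makes the score 0. The function names are illustrative. For real evaluation, a maintained implementation such as sacrebleu or NLTK is the safer choice.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Counter of the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(hypothesis, references, max_n=4):
    """Unsmoothed sentence-level BLEU with uniform weights (illustrative sketch)."""
    hyp = hypothesis.split()
    refs = [r.split() for r in references]
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        hyp_counts = ngrams(hyp, n)
        # Clip each hypothesis n-gram count by its maximum count in any single reference.
        max_ref_counts = Counter()
        for ref in refs:
            for gram, cnt in ngrams(ref, n).items():
                max_ref_counts[gram] = max(max_ref_counts[gram], cnt)
        clipped = sum(min(cnt, max_ref_counts[gram]) for gram, cnt in hyp_counts.items())
        total = sum(hyp_counts.values())
        if clipped == 0 or total == 0:
            return 0.0  # no smoothing: one zero precision zeroes the geometric mean
        log_precision_sum += math.log(clipped / total)
    # Brevity penalty against the reference length closest to the hypothesis length.
    c = len(hyp)
    r = min((abs(len(ref) - c), len(ref)) for ref in refs)[1]
    bp = 1.0 if c > r else math.exp(1 - r / c)
    return bp * math.exp(log_precision_sum / max_n)


print(sentence_bleu("the cat is on the mat", ["the cat is on the mat"]))  # 1.0
print(sentence_bleu("the cat sat on the mat", ["the cat is on the mat"], max_n=2))
```

In the second call, 5 of the 6 unigrams and 3 of the 5 bigrams match, so the score is sqrt(5/6 × 3/5) ≈ 0.707. With the default max_n=4 the same pair scores 0 because no 4-gram matches. This is one reason BLEU is unreliable at the sentence level.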

ROUGE(Recall-Oriented Understudy for Gisting Evaluation)

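Inspired by BLEU but recall-oriented. ROUGE computes the proportion of n-grams in the reference text that also appear in the generated text. Its variants are ROUGE-N (n-gram overlap), ROUGE-L (longest common subsequence), ROUGE-W (weighted longest common subsequence) and ROUGE-S (skip-bigrams).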

Paper: ROUGE: Recall-oriented understudy for gisting evaluation
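The small sketch below shows ROUGE's recall orientation and the LCS idea behind ROUGE-L. It computes ROUGE-N recall and a ROUGE-L F-measure against a single reference. Assumptions: whitespace tokenization, no stemming or stopword handling, and beta = 1 (plain F1) in the ROUGE-L F-measure. The function names are hypothetical and are not the API of any ROUGE package.

```python
from collections import Counter


def ngram_counts(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_recall(candidate, reference, n=1):
    """ROUGE-N recall: fraction of reference n-grams that also occur in the candidate."""
    cand = ngram_counts(candidate.split(), n)
    ref = ngram_counts(reference.split(), n)
    overlap = sum(min(cnt, cand[gram]) for gram, cnt in ref.items())
    total = sum(ref.values())
    return overlap / total if total else 0.0


def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists (dynamic programming)."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            dp[i][j] = dp[i - 1][j - 1] + 1 if x == y else max(dp[i - 1][j], dp[i][j - 1])
    return dp[-1][-1]


def rouge_l_f1(candidate, reference):
    """ROUGE-L F-measure with beta = 1, from LCS-based precision and recall."""
    cand, ref = candidate.split(), reference.split()
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


print(rouge_n_recall("the cat sat on the mat", "the cat is on the mat", n=1))
print(rouge_l_f1("the cat sat on the mat", "the cat is on the mat"))
```

For this pair, the candidate covers 5 of the 6 reference unigrams. The LCS is "the cat on the mat" (5 tokens), so both printed values are about 5/6.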

METEOR

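An improvement on BLEU that explicitly aligns the generated text with the reference text. Beyond exact word matches, it matches stems, synonyms (via WordNet) and paraphrases, and it penalizes alignments that are fragmented into many chunks.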

Paper: METEOR: An Automatic Metric for MT Evaluation with Improved Correlation with Human Judgments
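The sketch below shows METEOR's scoring formula, not its full matching pipeline. It aligns exact word matches only, with no stemming, WordNet synonyms or paraphrases. It also aligns greedily from left to right, rather than choosing the alignment with the fewest crossings. It uses the original formulas Fmean = 10PR / (R + 9P) and penalty = 0.5 × (chunks / matches)^3. `simple_meteor` is a hypothetical name.

```python
def simple_meteor(hypothesis, reference):
    """METEOR-style score using exact unigram matches only (illustrative sketch)."""
    hyp, ref = hypothesis.split(), reference.split()
    used = [False] * len(ref)
    alignment = []  # (hyp_index, ref_index) pairs, in hypothesis order
    for i, word in enumerate(hyp):
        for j, ref_word in enumerate(ref):
            if not used[j] and ref_word == word:
                used[j] = True
                alignment.append((i, j))
                break
    matches = len(alignment)
    if matches == 0:
        return 0.0
    precision, recall = matches / len(hyp), matches / len(ref)
    # Harmonic mean that weights recall 9 times more than precision.
    f_mean = 10 * precision * recall / (recall + 9 * precision)
    # A chunk is a maximal run of matches that are adjacent in both sentences.
    chunks = 1
    for (i1, j1), (i2, j2) in zip(alignment, alignment[1:]):
        if not (i2 == i1 + 1 and j2 == j1 + 1):
            chunks += 1
    penalty = 0.5 * (chunks / matches) ** 3
    return f_mean * (1 - penalty)


print(simple_meteor("the cat sat on the mat", "the cat sat on the mat"))  # ≈ 0.9977
print(simple_meteor("the cat", "the cat sat on the mat"))
```

Even identical sentences score slightly below 1, because a single chunk still incurs a small fragmentation penalty. The second call shows the recall weighting: precision is perfect, but only 2 of the 6 reference words are matched.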

NIST (National Institute of Standards and Technology)

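An improvement on BLEU that weights each n-gram by its information content, so n-grams that are rarer in the reference data count for more.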

Code reference: Machine Translation Evaluation: NIST, an Improved BLEU Algorithm
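NIST's key change from BLEU is the information weight of each n-gram, Info(w_1..w_n) = log2(count(w_1..w_{n-1}) / count(w_1..w_n)). The counts are taken over the reference data, and for unigrams the context count is the total number of words. The sketch below computes only these weights, not the full NIST score, which also has its own brevity penalty. The function name and the toy corpus are illustrative.

```python
import math
from collections import Counter


def nist_info_weights(reference_sentences, max_n=2):
    """Info(w_1..w_n) = log2(count(w_1..w_{n-1}) / count(w_1..w_n)) over the references."""
    counts = Counter()
    total_words = 0
    for sentence in reference_sentences:
        tokens = sentence.split()
        total_words += len(tokens)
        for n in range(1, max_n + 1):
            for i in range(len(tokens) - n + 1):
                counts[tuple(tokens[i:i + n])] += 1
    info = {}
    for gram, cnt in counts.items():
        # For unigrams the "context" count is the total number of words.
        context = total_words if len(gram) == 1 else counts[gram[:-1]]
        info[gram] = math.log2(context / cnt)
    return info


info = nist_info_weights(["the cat is on the mat", "the dog is on the rug"])
print(round(info[("the",)], 2), round(info[("cat",)], 2), info[("is", "on")])  # 1.58 3.58 0.0
```

"the" occurs 4 times in 12 words and gets log2(3) ≈ 1.58, while "cat" occurs once and gets log2(12) ≈ 3.58. The bigram "is on" gets 0 because "is" is always followed by "on", so matching the bigram adds no information beyond matching "is".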

distinct

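Evaluates the diversity of generated text: the number of distinct n-grams in the generated text divided by the total number of n-grams (distinct-1, distinct-2, ...).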

Paper: A diversity-promoting objective function for neural conversation models

Implementation, version 1, adapted from: Evaluation Methods for Natural Language Generation: BLEU, Distinct and F1 Code Implementations

```python
# Implementation, version 1
def get_dict(tokens, ngram, gdict=None):
    """Count n-gram frequencies and store them in a dict (updates gdict in place if given)."""
    token_dict = gdict if gdict is not None else {}
    for i in range(len(tokens) - ngram + 1):
        # n-grams are joined without a separator
        ngram_token = "".join(tokens[i:i + ngram])
        token_dict[ngram_token] = token_dict.get(ngram_token, 0) + 1
    return token_dict


def calc_distinct_ngram(pair_list, ngram):
    """Distinct-n: number of unique n-grams / total number of n-grams over all predictions."""
    ngram_total = 0.0
    ngram_distinct_count = 0.0
    pred_dict = {}
    for predict_tokens, _ in pair_list:
        get_dict(predict_tokens, ngram, pred_dict)
    for _, freq in pred_dict.items():
        ngram_total += freq
        ngram_distinct_count += 1
        # Alternative: count only n-grams that occur exactly once
        # if freq == 1:
        #     ngram_distinct_count += freq
    return ngram_distinct_count / ngram_total


if __name__ == "__main__":
    predictions = ['This is a cat', 'It is so lovely!']
    references = ["What's the weather today?", 'So cute!']
    # The predictions are raw strings, so each "token" is a character (character-level
    # distinct). Pass e.g. predictions[0].split() instead for word-level distinct.
    pair_list = [[predictions[0], references[0]], [predictions[1], references[1]]]
    distinct1 = calc_distinct_ngram(pair_list, 1)
    distinct2 = calc_distinct_ngram(pair_list, 2)
    print(distinct1, distinct2)
    # 0.5172413793103449 0.8148148148148148
```

Implementation, version 2, adapted from: [NLG] (4) Text Generation Evaluation Metrics: diversity, Principles and Code Examples

```python
# Implementation, version 2
from collections import defaultdict


def calc_diversity(predicts):
    """
    Distinct-1 and distinct-2 over a list of predictions.
    Each prediction must be whitespace-tokenized (tokens separated by spaces).
    """
    tokens = [0.0, 0.0]                           # total number of 1-grams / 2-grams
    types = [defaultdict(int), defaultdict(int)]  # unique 1-grams / 2-grams
    for gg in predicts:
        g = gg.rstrip().split()
        for n in range(2):
            for idx in range(len(g) - n):
                ngram = ' '.join(g[idx:idx + n + 1])
                types[n][ngram] = 1
                tokens[n] += 1
    div1 = len(types[0]) / tokens[0]
    div2 = len(types[1]) / tokens[1]
    return [div1, div2]


if __name__ == '__main__':
    predicts = ["What are you saying", "What did you say?"]
    div1, div2 = calc_diversity(predicts)
    print(div1, div2)
    # 0.75 1.0
```

Word-embedding-based metrics

Word-embedding-based metrics measure semantic similarity. They mainly include Embedding Average Score, Greedy Matching Score and Vector Extrema Score.

Embedding Average Score

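Average the word vectors of the reference text and of the generated text separately to get a feature vector for each text, then compute the cosine similarity between the two vectors.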

Greedy Matching Score

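For each word in one text, find the most similar word in the other text by cosine similarity of their word vectors, and average these maximum similarities. Do this in both directions (reference to generated and generated to reference), then average the two results.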

Vector Extrema Score

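Build a sentence vector for the reference text and for the generated text separately. In each dimension, take the most extreme value among the sentence's word vectors, i.e. the value with the largest absolute value. Then compute the cosine similarity between the two sentence vectors.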
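The three embedding-based scores can be sketched with NumPy as follows. The 3-dimensional word vectors in `EMB` are made up for illustration. In practice they would come from pre-trained embeddings such as GloVe. Sentences are lowercased and whitespace-tokenized. All function names are hypothetical.

```python
import numpy as np

# Hypothetical 3-dimensional word vectors, for illustration only.
EMB = {
    "this": np.array([0.1, 0.3, -0.2]),
    "is": np.array([0.0, 0.2, 0.1]),
    "a": np.array([0.1, 0.0, 0.0]),
    "my": np.array([0.2, 0.1, -0.1]),
    "cat": np.array([0.9, -0.4, 0.5]),
    "feline": np.array([0.8, -0.5, 0.6]),
}


def cosine(u, v):
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


def word_vectors(sentence):
    """One row per lowercased, whitespace-separated word."""
    return np.stack([EMB[w] for w in sentence.lower().split()])


def embedding_average(hyp, ref):
    # Mean word vector of each sentence, then cosine similarity.
    return cosine(word_vectors(hyp).mean(axis=0), word_vectors(ref).mean(axis=0))


def greedy_matching(hyp, ref):
    h, r = word_vectors(hyp), word_vectors(ref)
    sim = np.array([[cosine(x, y) for y in r] for x in h])  # shape (len(hyp), len(ref))
    # Best match for each hyp word and for each ref word, averaged over both directions.
    return float((sim.max(axis=1).mean() + sim.max(axis=0).mean()) / 2)


def vector_extrema(hyp, ref):
    def extrema(vectors):
        max_v, min_v = vectors.max(axis=0), vectors.min(axis=0)
        # Per dimension, keep whichever extreme has the larger absolute value.
        return np.where(max_v >= np.abs(min_v), max_v, min_v)
    return cosine(extrema(word_vectors(hyp)), extrema(word_vectors(ref)))


for metric in (embedding_average, greedy_matching, vector_extrema):
    print(metric.__name__, round(metric("This is my cat", "This is a feline"), 4))
```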

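The word-overlap-based metrics (BLEU, ROUGE, METEOR) and the word-embedding-based metrics (Embedding Average Score, Greedy Matching Score, Vector Extrema Score) can be computed directly with nlg-eval. For installing nlg-eval, see this blog post.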

```python
# Implementation
from nlgeval import NLGEval

references = ["This is a cat", "This is a feline"]
predictions = ["This is my cat"]
# ref_list is a list of reference "streams": ref_list[j][i] is the j-th reference for the
# i-th prediction. Here the single prediction has two references.
references = [[r] for r in references]

nlgeval_ = NLGEval()  # by default also loads the skip-thought and GloVe models
ans = nlgeval_.compute_metrics(hyp_list=predictions, ref_list=references)
print(ans)
# {'Bleu_1': 0.7499999996250004, 'Bleu_2': 0.4999999997291671, 'Bleu_3': 4.999999996944452e-06, 'Bleu_4': 1.8803015450937985e-08, 'METEOR': 0.8559670781893004, 'ROUGE_L': 0.75, 'CIDEr': 0.0, 'SkipThoughtCS': 0.85018563, 'EmbeddingAverageCosineSimilarity': 0.810215, 'EmbeddingAverageCosineSimilairty': 0.810215, 'VectorExtremaCosineSimilarity': 0.77313, 'GreedyMatchingScore': 0.867042}
```

Language-model-based metrics

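Language-model-based metrics use pretrained language models to compute the similarity between the reference and generated texts. Perplexity is the exception: it measures how likely the generated text is under the model. These metrics mainly include BertScore, BARTScore, MoverScore, BLEURT and Perplexity.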

BertScore

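The generated and reference texts are tokenized into WordPieces, and BERT extracts contextual embeddings for each token. The similarity between every token of one sentence and every token of the other is the inner product of the normalized embeddings, i.e. their cosine similarity. This gives a similarity matrix. Each token is then greedily matched to its most similar token in the other sentence. Averaging these maximum similarities over the generated tokens gives BERTScore precision, and averaging them over the reference tokens gives recall. Combining the two gives F1.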

Paper: BERTScore: Evaluating Text Generation with BERT (ICLR 2020)

Code: https://github.com/Tiiiger/bert_score
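The greedy-matching step described above can be sketched in NumPy, assuming the contextual token embeddings have already been extracted. Random vectors stand in for BERT outputs. The sketch leaves out the optional idf weighting and baseline rescaling that the bert_score package supports. `bertscore_from_embeddings` is a hypothetical helper, not part of that package.

```python
import numpy as np


def bertscore_from_embeddings(cand_emb, ref_emb):
    """Greedy-matching precision/recall/F1 from token embeddings (rows = tokens), no idf."""
    cand = cand_emb / np.linalg.norm(cand_emb, axis=1, keepdims=True)
    ref = ref_emb / np.linalg.norm(ref_emb, axis=1, keepdims=True)
    sim = cand @ ref.T  # cosine similarity matrix, shape (len(cand), len(ref))
    precision = sim.max(axis=1).mean()  # each candidate token -> best reference token
    recall = sim.max(axis=0).mean()     # each reference token -> best candidate token
    f1 = 2 * precision * recall / (precision + recall)
    return float(precision), float(recall), float(f1)


rng = np.random.default_rng(0)
ref_emb = rng.normal(size=(5, 8))                         # stand-in: 5 reference tokens
cand_emb = ref_emb[:3] + 0.1 * rng.normal(size=(3, 8))   # stand-in: 3 noisy candidate tokens
print(bertscore_from_embeddings(cand_emb, ref_emb))
```

Each candidate token here is a noisy copy of one reference token, so precision should come out close to 1. Recall is pulled down by the two reference tokens that have no counterpart in the candidate.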

```python
# Implementation, version 1
from bert_score import score, plot_example


def BertScore(predictions, references):
    # Returns per-sentence precision, recall and F1 tensors.
    P, R, F1 = score(predictions, references, lang="en", verbose=True,
                     model_type="distilbert-base-uncased")
    # print(f"System level F1 score: {F1.mean():.3f}")
    return P, R, F1


def DrawBertScoreSimilarityMatrix(pred, ref):
    # Plot the token-level similarity matrix of one sentence pair; fname is the output image path.
    plot_example(pred, ref, lang="en", fname="bert_score.png")


# predictions and references must have the same length
predictions = ['This is a cat', 'It is so lovely!']
references = ["What's the weather today?", 'So cute!']
print(BertScore(predictions, references))
# (tensor([0.6350, 0.8027]), tensor([0.6267, 0.8685]), tensor([0.6308, 0.8343]))

pred, ref = predictions[1], references[1]
DrawBertScoreSimilarityMatrix(pred, ref)
```

Implementation, version 2, using the Hugging Face evaluate library: https://huggingface.co/spaces/evaluate-metric/bertscore

```python
# Implementation, version 2
'''
The original BERTScore paper showed that BERTScore correlates well with human judgment on sentence-level and system-level evaluation, but this depends on the model and language pair selected.
'''
import evaluate

bertscore = evaluate.load("bertscore")
predictions = ['This is a cat', 'It is so lovely!']
references = ["What's the weather today?", 'So cute!']
results = bertscore.compute(predictions=predictions, references=references,
                            lang="en", model_type="distilbert-base-uncased")
print(results)
# {'precision': [0.6349790096282959, 0.8026740550994873], 'recall': [0.6267067193984985, 0.8684506416320801], 'f1': [0.6308157444000244, 0.8342678546905518], 'hashcode': 'distilbert-base-uncased_L5_no-idf_version=0.3.12(hug_trans=4.23.1)'}
```

BARTScore

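BARTScore treats the evaluation of generated text as a text generation problem. A pretrained seq2seq model (BART) scores a text by the log-probability of generating it from another text. The approach is unsupervised, and depending on the direction of generation it can evaluate different aspects of a text (e.g. informativeness, fluency, or factuality).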

Paper: BARTScore: Evaluating Generated Text as Text Generation (NeurIPS 2021)

Code: https://github.com/neulab/BARTScore

```python
# Implementation
import traceback

import torch
import torch.nn as nn
from transformers import BartTokenizer, BartForConditionalGeneration


class BARTScorer:
    def __init__(self, device='cuda:0', max_length=1024, checkpoint='facebook/bart-large-cnn'):
        # Set up model
        self.device = device
        self.max_length = max_length
        self.tokenizer = BartTokenizer.from_pretrained(checkpoint)
        self.model = BartForConditionalGeneration.from_pretrained(checkpoint)
        self.model.eval()
        self.model.to(device)

        # Set up loss: per-token negative log-likelihood, ignoring padding tokens
        self.loss_fct = nn.NLLLoss(reduction='none', ignore_index=self.model.config.pad_token_id)
        self.lsm = nn.LogSoftmax(dim=1)

    def load(self, path=None):
        """ Load model from paraphrase finetuning """
        if path is None:
            path = 'models/bart.pth'
        self.model.load_state_dict(torch.load(path, map_location=self.device))

    def score(self, srcs, tgts, batch_size=4):
        """ Score a batch of examples: average log-likelihood of each tgt given its src """
        score_list = []
        for i in range(0, len(srcs), batch_size):
            src_list = srcs[i: i + batch_size]
            tgt_list = tgts[i: i + batch_size]
            try:
                with torch.no_grad():
                    encoded_src = self.tokenizer(src_list, max_length=self.max_length,
                                                 truncation=True, padding=True, return_tensors='pt')
                    encoded_tgt = self.tokenizer(tgt_list, max_length=self.max_length,
                                                 truncation=True, padding=True, return_tensors='pt')
                    src_tokens = encoded_src['input_ids'].to(self.device)
                    src_mask = encoded_src['attention_mask'].to(self.device)
                    tgt_tokens = encoded_tgt['input_ids'].to(self.device)
                    tgt_mask = encoded_tgt['attention_mask']
                    tgt_len = tgt_mask.sum(dim=1).to(self.device)

                    output = self.model(input_ids=src_tokens, attention_mask=src_mask,
                                        labels=tgt_tokens)
                    logits = output.logits.view(-1, self.model.config.vocab_size)
                    loss = self.loss_fct(self.lsm(logits), tgt_tokens.view(-1))
                    loss = loss.view(tgt_tokens.shape[0], -1)
                    loss = loss.sum(dim=1) / tgt_len  # mean NLL per target token
                    curr_score_list = [-x.item() for x in loss]
                    score_list += curr_score_list
            except RuntimeError:
                traceback.print_exc()
                print(f'source: {src_list}')
                print(f'target: {tgt_list}')
                exit(0)
        return score_list

    def test(self, batch_size=3):
        """ Test """
        src_list = [
            'This is a very good idea. Although simple, but very insightful.',
            'Can I take a look?',
            'Do not trust him, he is a liar.'
        ]
        tgt_list = [
            "That's stupid.",
            "What's the problem?",
            'He is trustworthy.'
        ]
        print(self.score(src_list, tgt_list, batch_size))


# To use the CNNDM version BARTScore
bart_scorer = BARTScorer(device='cuda:0', checkpoint='facebook/bart-large-cnn')
# Generation scores from the first list of texts to the second list of texts.
score_list = bart_scorer.score(['This is interesting.'], ['This is fun.'])
print(score_list)
# [-2.510652780532837]
```

MoverScore

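An extension of BertScore. Instead of greedily matching each token to a single most similar token, it measures the Earth Mover's Distance between the contextualized embeddings of the two texts. For an explanation of how it works, see this article.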

Paper: MoverScore: Text Generation Evaluating with Contextualized Embeddings and Earth Mover Distance (EMNLP 2019)

Code: https://github.com/AIPHES/emnlp19-moverscore

```python
# Implementation
"""
Created on Thu Mar 11 02:01:42 2021
@author: zhao
"""
import os

# Choose the model before importing moverscore_v2, which reads this variable at import time.
# os.environ['MOVERSCORE_MODEL'] = "emilyalsentzer/Bio_ClinicalBERT"
os.environ['MOVERSCORE_MODEL'] = "albert-base-v2"

from collections import defaultdict
from itertools import zip_longest
from typing import Iterable, List, Union

import numpy as np
import sacrebleu
from moverscore_v2 import word_mover_score, plot_example


def sentence_score(hypothesis: str, references: List[str], trace=0):
    # Uniform weight for every token, i.e. no idf weighting
    idf_dict_hyp = defaultdict(lambda: 1.)
    idf_dict_ref = defaultdict(lambda: 1.)
    # Score the hypothesis against each reference and average the results
    hypothesis = [hypothesis] * len(references)
    scores = word_mover_score(references, hypothesis, idf_dict_ref, idf_dict_hyp,
                              stop_words=[], n_gram=1, remove_subwords=False)
    score = np.mean(scores)
    if trace > 0:
        print(hypothesis, references, score)
    return score


def corpus_score(sys_stream: List[str],
                 ref_streams: Union[str, List[Iterable[str]]], trace=0):
    if isinstance(sys_stream, str):
        sys_stream = [sys_stream]
    if isinstance(ref_streams, str):
        ref_streams = [[ref_streams]]
    fhs = [sys_stream] + ref_streams
    total = 0
    for lines in zip_longest(*fhs):
        if None in lines:
            raise EOFError("Source and reference streams have different lengths!")
        hypo, *refs = lines
        total += sentence_score(hypo, refs, trace=trace)
    return total / len(sys_stream)


def test_corpus_score():
    refs = [['The dog bit the man.', 'It was not unexpected.', 'The man bit him first.'],
            ['The dog had bit the man.', 'No one was surprised.', 'The man had bitten the dog.']]
    sys = ['The dog bit the man.', "It wasn't surprising.", 'The man had just bitten him.']
    bleu = sacrebleu.corpus_bleu(sys, refs)
    mover = corpus_score(sys, refs)
    print(bleu.score)
    print(mover)


def test_sentence_score():
    refs = ['The dog bit the man.', 'The dog had bit the man.']
    sys = 'The dog bit the man.'
    bleu = sacrebleu.sentence_bleu(sys, refs)
    mover = sentence_score(sys, refs)
    print(bleu.score)
    print(mover)


def DrawMoverScoreSimilarityMatrix(pred, ref):
    plot_example(True, ref, pred)


if __name__ == '__main__':
    test_sentence_score()
    # 100.00000000000004
    # 0.8019052804445386
    test_corpus_score()
    # 48.530827009929865
    # 0.7152593800256765
    ref = 'they are now equipped with air conditioning and new toilets.'
    pred = 'they have air conditioning and new toilets.'
    DrawMoverScoreSimilarityMatrix(pred, ref)
```

BLEURT

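BLEURT is a learned metric. A BERT model is fine-tuned to judge how semantically similar a candidate text is to a reference, first on synthetic data and then on human ratings, and the model's output is used as the score. For an explanation of how it works, see this article.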

Paper: BLEURT: Learning Robust Metrics for Text Generation (ACL 2020)

Code: https://github.com/google-research/bleurt

Here BLEURT is computed with the Hugging Face evaluate library: https://huggingface.co/spaces/evaluate-metric/bleurt

```python
# Implementation
import evaluate

predictions = ["hello there", "general kenobi"]
references = ["hello there", "general kenobi"]
bleurt = evaluate.load("bleurt", module_type="metric", checkpoint="bleurt-base-128")
results = bleurt.compute(predictions=predictions, references=references)
print(results)
# {'scores': [1.0295498371124268, 1.0445425510406494]}
```

Perplexity

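Perplexity is used to evaluate the fluency of generated text. It is the exponential of the average negative log-likelihood per token under a language model. When comparing language models on the same text, lower perplexity means the model fits the language better. When scoring generated text with a fixed model, lower perplexity means the model finds the text more probable, i.e. the text is closer to natural language as judged by that model.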

Paper: Perplexity: a measure of the difficulty of speech recognition tasks
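The definition can be written as PPL = exp(-(1/N) Σ log p(w_i | w_<i)). The sketch below applies that formula to a list of per-token probabilities. The probabilities are made up for illustration. In practice they come from a language model such as GPT-2 scoring the text. `perplexity` here is a hypothetical helper, not the evaluate module.

```python
import math


def perplexity(token_probs):
    """PPL = exp(-(1/N) * sum(log p_i)), where p_i is the model's probability of token i."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log_likelihood = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_likelihood)


print(perplexity([0.25, 0.25, 0.25, 0.25]))  # ≈ 4.0
print(perplexity([0.9, 0.8, 0.95]))          # much lower: the model finds this text likely
```

A model that gives every token probability 1/4 has perplexity 4. It is as uncertain as a uniform choice among 4 tokens.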

Here perplexity is computed with the Hugging Face evaluate library: https://huggingface.co/spaces/evaluate-metric/perplexity

```python
# Implementation
import datasets
import evaluate

'''
Note that the output value is based heavily on what text the model was trained on. This means that perplexity scores are not comparable between models or datasets.
'''

perplexity = evaluate.load("perplexity", module_type="metric")

# Example 1: a few short strings, without prepending a start token
input_texts = ["lorem ipsum", "Happy Birthday!", "Bienvenue"]
results = perplexity.compute(model_id='gpt2', add_start_token=False, predictions=input_texts)

# Example 2: the first 50 lines of the WikiText-2 test split, with empty lines removed
input_texts = datasets.load_dataset("wikitext", "wikitext-2-raw-v1", split="test")["text"][:50]
input_texts = [s for s in input_texts if s != '']
results = perplexity.compute(model_id='gpt2', predictions=input_texts)
print(list(results.keys()))
print(round(results["mean_perplexity"], 2))
for i in results["perplexities"]:  # perplexity of each sentence
    print(round(i, 2))
# ['perplexities', 'mean_perplexity']
# 646.74
# 32.25
# 1499.69
# 408.28
# ...
```

Distance-based metrics

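Distance-based metrics are typical "error rate" measures. Similar metrics include WER and PER, which differ only slightly in how an "error" is defined. These metrics are mostly used in machine translation.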

TER (Translation Edit Rate)

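TER is the minimum number of edits needed to turn the generated text into a reference text, divided by the average reference length. The allowed edits are insertions, deletions, substitutions, and shifts of word sequences.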
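A simplified TER can be sketched as the word-level Levenshtein distance to the closest reference, divided by the average reference length. This sketch omits TER's block-shift operation, so it is effectively a multi-reference word error rate. It also does no tokenization or normalization. `simple_ter` is a hypothetical name, and it will not in general reproduce the results of the evaluate implementation used below.

```python
def word_edit_distance(hyp_tokens, ref_tokens):
    """Minimum number of word insertions, deletions and substitutions (Levenshtein)."""
    prev = list(range(len(ref_tokens) + 1))
    for i, h in enumerate(hyp_tokens, 1):
        curr = [i] + [0] * len(ref_tokens)
        for j, r in enumerate(ref_tokens, 1):
            curr[j] = min(prev[j] + 1,              # delete hypothesis word h
                          curr[j - 1] + 1,          # insert reference word r
                          prev[j - 1] + (h != r))   # substitute (free if the words match)
        prev = curr
    return prev[-1]


def simple_ter(hypothesis, references):
    """100 * edits to the closest reference / average reference length (no shifts)."""
    hyp = hypothesis.split()
    refs = [r.split() for r in references]
    edits = min(word_edit_distance(hyp, ref) for ref in refs)
    avg_ref_len = sum(len(r) for r in refs) / len(refs)
    return 100 * edits / avg_ref_len


print(simple_ter("the cat sat on mat", ["the cat sat on the mat"]))
```

Here one insertion ("the") is needed against a 6-word reference, giving 100 × 1/6 ≈ 16.67.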

Here TER is computed with the Hugging Face evaluate library: https://huggingface.co/spaces/evaluate-metric/ter

```python
# Implementation
import evaluate

predictions = ["does this sentence match??", "what about this sentence?"]
references = [["does this sentence match", "does this sentence match!?!"],
              ["wHaT aBoUt ThIs SeNtEnCe?", "wHaT aBoUt ThIs SeNtEnCe?"]]
ter = evaluate.load("ter")
results = ter.compute(predictions=predictions, references=references,
                      normalized=True, case_sensitive=True)
print(results)
# {'score': 57.14285714285714, 'num_edits': 6, 'ref_length': 10.5}
```

Learning-based metrics

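These metrics use machine learning or neural networks to learn an evaluation metric whose scores are close to human judgments. Examples include various GAN-based approaches, ADEM and the Dual Encoder.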

Other metrics

Some metrics are designed for specific text generation tasks, such as CIDEr and SPICE for image captioning, and metrics for data-to-text generation.

CIDEr (Consensus-based Image Description Evaluation)

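CIDEr treats each sentence as a "document" and represents it as a Term Frequency-Inverse Document Frequency (TF-IDF) vector over its n-grams. The cosine similarity between the TF-IDF vectors of the generated caption and each reference caption measures how consistent the generated caption is with the consensus of the references.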

Paper: CIDEr: Consensus-based image description evaluation
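The code below sketches the TF-IDF idea using the original CIDEr formulation. For each n from 1 to 4, it computes TF-IDF weights, where the document frequency is the number of images whose reference set contains the n-gram. It then averages the cosine similarity between the candidate and each reference, and takes a uniform average over n. This is not the CIDEr-D variant in the coco-caption code that nlg-eval builds on, which also clips counts, applies a length penalty and scales by 10, so the numbers will differ. All names and captions are illustrative.

```python
import math
from collections import Counter


def ngram_counts(sentence, n):
    tokens = sentence.split()
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def tfidf(counts, doc_freq, num_images):
    """Term frequency in this caption times log(N / document frequency)."""
    total = sum(counts.values())
    return {g: (c / total) * math.log(num_images / max(1.0, doc_freq[g]))
            for g, c in counts.items()}


def cosine(u, v):
    dot = sum(w * v.get(g, 0.0) for g, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def cider(candidates, reference_sets, max_n=4):
    """Plain CIDEr (not CIDEr-D): mean TF-IDF cosine to the references, averaged over n."""
    num_images = len(reference_sets)
    scores = [0.0] * len(candidates)
    for n in range(1, max_n + 1):
        # Document frequency: number of images whose reference set contains the n-gram.
        doc_freq = Counter()
        for refs in reference_sets:
            doc_freq.update(set().union(*(ngram_counts(r, n) for r in refs)))
        for i, (cand, refs) in enumerate(zip(candidates, reference_sets)):
            cand_vec = tfidf(ngram_counts(cand, n), doc_freq, num_images)
            sims = [cosine(cand_vec, tfidf(ngram_counts(r, n), doc_freq, num_images))
                    for r in refs]
            scores[i] += sum(sims) / len(sims) / max_n
    return scores


reference_sets = [
    ["a man rides a horse", "a person riding a horse"],
    ["a cat is sitting on a mat", "a cat on the mat"],
]
print(cider(["a man is riding a horse", "a cat sits on a mat"], reference_sets))
```

The IDF term also explains the 'CIDEr': 0.0 in the nlg-eval output above. With only one image, every n-gram's document frequency is at most 1, so every IDF weight is log(1/1) = 0.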

CIDEr can be computed directly with nlg-eval. For installing nlg-eval, see this blog post.

SPICE(Semantic Propositional Image Caption Evaluation)

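SPICE uses a graph-based semantic representation to encode the objects, attributes and relationships in captions. It first parses the candidate caption and the reference captions into syntactic dependency trees with a Probabilistic Context-Free Grammar (PCFG) dependency parser. A rule-based method then maps the dependency trees to scene graphs. Finally, it computes an F-score over the objects, attributes and relationships in the candidate caption's scene graph.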

Paper: SPICE: Semantic Propositional Image Caption Evaluation
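The final F-score step can be sketched as follows, assuming the scene-graph tuples have already been extracted. Real SPICE obtains them with the parser and rules described above, and merges the tuples of all reference captions into one graph. It also accepts WordNet synonym matches. Here the tuples are hand-written for illustration, only exact matches count, and `tuple_f1` is a hypothetical name.

```python
def tuple_f1(candidate_tuples, reference_tuples):
    """F1 between candidate and reference semantic tuples (exact matching only)."""
    cand, ref = set(candidate_tuples), set(reference_tuples)
    matched = len(cand & ref)
    if matched == 0:
        return 0.0
    precision, recall = matched / len(cand), matched / len(ref)
    return 2 * precision * recall / (precision + recall)


# Hypothetical tuples: (object,), (object, attribute), (subject, relation, object).
candidate = [("girl",), ("racket",), ("girl", "young"), ("girl", "hold", "racket")]
reference = [("girl",), ("racket",), ("court",), ("girl", "young"),
             ("girl", "hold", "racket"), ("girl", "on", "court")]
print(tuple_f1(candidate, reference))  # precision 4/4, recall 4/6, F1 ≈ 0.8
```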

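That concludes this introduction to the mainstream objective metrics for text generation. I hope it gives you a systematic overview of these metrics and helps you choose the right ones to evaluate your own task.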

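References

- Text Generation 13: A Long-Form Review of Text Generation Evaluation Metrics
- Evaluation Metrics for Dialogue Systems
- Deep Learning Dialogue Systems, Theory: Datasets and Evaluation Metrics
- The Evolution and Overturning of Text Generation Evaluation Metrics
- MoverScore Explained
- Common Evaluation Metrics for Deep-Learning-Based Dialogue Systems: Pros, Cons and Scope of Application
- Perplexity: An Evaluation Metric for Language Models
- Machine Translation Evaluation: NIST, an Improved BLEU Algorithm
- Evaluation Methods for Natural Language Generation: BLEU, Distinct and F1 Code Implementations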
