Workflow for implementing a deep learning algorithm:

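1 Randomly select a portion of the training data, called a mini-batch. Our goal is to reduce the value of the loss function on this mini-batch.

2 Compute the gradient of the loss function with respect to the weights. The gradient points in the direction in which the loss increases fastest, so the negative gradient is the direction in which it decreases fastest.

3 Update the weights by a small step in the negative gradient direction: W ← W − η·∂L/∂W, where η is the learning rate.

4 Repeat the steps above, each time updating the weights on a newly sampled mini-batch.

Because it uses randomly chosen mini-batches, this method is called stochastic gradient descent (SGD).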
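The four steps can be seen on a problem much smaller than a neural network. The sketch below is an illustration only and is not part of the original code. It fits a one-dimensional linear model `y = w * x + b` to synthetic data using mini-batch SGD on the mean squared error, with the gradient written out by hand. The helper name `sgd_fit` and all of its defaults are hypothetical.

```python
import numpy as np

def sgd_fit(x, y, batch_size=32, learning_rate=0.1, iters=500, seed=0):
    """Fit y ≈ w * x + b with mini-batch SGD on mean squared error (toy sketch)."""
    rng = np.random.default_rng(seed)
    w, b = 0.0, 0.0
    n = x.shape[0]
    for _ in range(iters):
        # Step 1: randomly pick a mini-batch (with replacement)
        idx = rng.choice(n, batch_size)
        xb, yb = x[idx], y[idx]
        # Step 2: gradient of the mini-batch MSE with respect to w and b
        err = (w * xb + b) - yb
        grad_w = 2.0 * np.mean(err * xb)
        grad_b = 2.0 * np.mean(err)
        # Step 3: take a small step against the gradient
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
        # Step 4: the loop repeats with a fresh mini-batch
    return w, b

rng = np.random.default_rng(42)
x = rng.uniform(-1, 1, size=1000)
y = 3.0 * x + 0.5 + 0.01 * rng.standard_normal(1000)  # synthetic data, true w=3, b=0.5
w, b = sgd_fit(x, y)
print(round(w, 2), round(b, 2))
```

The recovered `w` and `b` end up close to the values used to generate the data.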

Example:

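```python
# coding: utf-8
import sys, os
sys.path.append(os.pardir)  # allow importing modules from the parent directory
import numpy as np
from common.functions import *  # sigmoid, softmax, cross_entropy_error
from common.gradient import numerical_gradient


class TwoLayerNet:

    def __init__(self, input_size, hidden_size, output_size, weight_init_std=0.01):
        # Initialize weights (small Gaussian values) and biases (zeros)
        self.params = {}
        self.params['W1'] = weight_init_std * np.random.randn(input_size, hidden_size)
        self.params['b1'] = np.zeros(hidden_size)
        self.params['W2'] = weight_init_std * np.random.randn(hidden_size, output_size)
        self.params['b2'] = np.zeros(output_size)

    def predict(self, x):
        W1, W2 = self.params['W1'], self.params['W2']
        b1, b2 = self.params['b1'], self.params['b2']

        a1 = np.dot(x, W1) + b1
        z1 = sigmoid(a1)
        a2 = np.dot(z1, W2) + b2
        y = softmax(a2)

        return y

    # x: input data, t: labels (one-hot)
    def loss(self, x, t):
        y = self.predict(x)
        return cross_entropy_error(y, t)

    def accuracy(self, x, t):
        y = self.predict(x)
        y = np.argmax(y, axis=1)
        t = np.argmax(t, axis=1)

        accuracy = np.sum(y == t) / float(x.shape[0])
        return accuracy

    # x: input data, t: labels (one-hot)
    def numerical_gradient(self, x, t):
        loss_W = lambda W: self.loss(x, t)

        # These calls use the module-level numerical_gradient imported above, not this method
        grads = {}
        grads['W1'] = numerical_gradient(loss_W, self.params['W1'])
        grads['b1'] = numerical_gradient(loss_W, self.params['b1'])
        grads['W2'] = numerical_gradient(loss_W, self.params['W2'])
        grads['b2'] = numerical_gradient(loss_W, self.params['b2'])

        return grads

    # Analytic gradient via backpropagation (the training script below calls this method)
    def gradient(self, x, t):
        W1, W2 = self.params['W1'], self.params['W2']
        b1, b2 = self.params['b1'], self.params['b2']
        batch_num = x.shape[0]

        # Forward pass
        a1 = np.dot(x, W1) + b1
        z1 = sigmoid(a1)
        a2 = np.dot(z1, W2) + b2
        y = softmax(a2)

        # Backward pass: softmax + batch-averaged cross-entropy gives (y - t) / batch_num
        dy = (y - t) / batch_num
        grads = {}
        grads['W2'] = np.dot(z1.T, dy)
        grads['b2'] = np.sum(dy, axis=0)
        da1 = np.dot(dy, W2.T) * z1 * (1.0 - z1)  # sigmoid'(a1) = z1 * (1 - z1)
        grads['W1'] = np.dot(x.T, da1)
        grads['b1'] = np.sum(da1, axis=0)

        return grads
```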

1

```python
self.params = {}
self.params['W1'] = weight_init_std * np.random.randn(input_size, hidden_size)
self.params['b1'] = np.zeros(hidden_size)
self.params['W2'] = weight_init_std * np.random.randn(hidden_size, output_size)
self.params['b2'] = np.zeros(output_size)
```

Initialize the network's weights and biases. Each weight starts as weight_init_std (0.01 by default) times a standard-normal random number. The biases start at 0.
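You can see what this initialization produces by building the same dictionary outside the class and inspecting it. This is a sketch under two assumptions. First, the helper `init_params` is hypothetical. Second, it draws from NumPy's `default_rng` generator instead of `np.random.randn`, so the result is reproducible.

```python
import numpy as np

def init_params(input_size, hidden_size, output_size, weight_init_std=0.01, seed=0):
    """Same scheme as TwoLayerNet.__init__: scaled standard-normal weights, zero biases."""
    rng = np.random.default_rng(seed)
    return {
        'W1': weight_init_std * rng.standard_normal((input_size, hidden_size)),
        'b1': np.zeros(hidden_size),
        'W2': weight_init_std * rng.standard_normal((hidden_size, output_size)),
        'b2': np.zeros(output_size),
    }

params = init_params(784, 100, 10)
for name, value in params.items():
    print(name, value.shape)
print(params['W1'].std())  # empirical standard deviation, close to weight_init_std
```

`W1` has 78,400 entries, so its empirical standard deviation lands very close to 0.01.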

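2

```python
def predict(self, x):
    W1, W2 = self.params['W1'], self.params['W2']
    b1, b2 = self.params['b1'], self.params['b2']

    a1 = np.dot(x, W1) + b1   # hidden layer
    z1 = sigmoid(a1)
    a2 = np.dot(z1, W2) + b2  # output layer
    y = softmax(a2)

    return y
```

Compute the network output. The hidden layer uses the sigmoid activation function. The output layer uses softmax, which turns the scores into a probability for each class.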
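`sigmoid` and `softmax` come from `common.functions`, which is not shown here. Below is a minimal sketch of what they compute. It is our own version and may differ in details from the module the class imports. Subtracting the row maximum before `np.exp` leaves the softmax result unchanged but avoids overflow for large scores.

```python
import numpy as np

def sigmoid(x):
    # Element-wise logistic function 1 / (1 + e^(-x))
    return 1.0 / (1.0 + np.exp(-x))

def softmax(x):
    # Softmax over the last axis; subtracting the maximum keeps exp() from overflowing
    x = x - np.max(x, axis=-1, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=-1, keepdims=True)

a2 = np.array([[1.0, 2.0, 3.0],
               [1000.0, 1000.0, 1000.0]])  # second row would overflow without the shift
y = softmax(a2)
print(y.sum(axis=1))             # each row sums to 1
print(sigmoid(np.array([0.0])))  # [0.5]
```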

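3

```python
def loss(self, x, t):
    y = self.predict(x)
    return cross_entropy_error(y, t)
```

Compute the loss as the cross-entropy error between the predictions y and the labels t, averaged over the samples in the mini-batch.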
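`cross_entropy_error` also comes from the unshown `common.functions`. The sketch below makes two assumptions: the labels are one-hot, and the error is averaged over the batch. The small `delta` keeps `np.log` from receiving 0.

```python
import numpy as np

def cross_entropy_error(y, t):
    """Mean cross-entropy over a batch; y holds softmax outputs, t holds one-hot labels."""
    if y.ndim == 1:  # treat a single sample as a batch of one
        y = y.reshape(1, -1)
        t = t.reshape(1, -1)
    delta = 1e-7  # avoids log(0)
    batch_size = y.shape[0]
    return -np.sum(t * np.log(y + delta)) / batch_size

y = np.array([[0.1, 0.8, 0.1],
              [0.3, 0.3, 0.4]])
t = np.array([[0, 1, 0],
              [0, 0, 1]])
print(cross_entropy_error(y, t))  # mean of -log(0.8) and -log(0.4)
```

Only the probability assigned to the correct class contributes, because every other entry of a one-hot row is 0.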

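4

```python
def accuracy(self, x, t):
    y = self.predict(x)
    y = np.argmax(y, axis=1)  # predicted class per sample
    t = np.argmax(t, axis=1)  # true class per sample

    accuracy = np.sum(y == t) / float(x.shape[0])
    return accuracy
```

Compute the prediction accuracy: the number of correct predictions divided by the number of input samples.

Note:

np.argmax() returns the index of the largest value, not the value itself. With axis=1 it gives two things for each sample: the class predicted by the network (the position of the largest probability) and the true class (the position of the 1 in the one-hot label).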
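The toy example below uses made-up probabilities for four samples. It shows how `np.argmax(..., axis=1)` turns both the softmax output and the one-hot labels into class indices, and how the accuracy follows from comparing them.

```python
import numpy as np

# Softmax outputs for 4 samples over 3 classes (made-up numbers for illustration)
y = np.array([[0.1, 0.7, 0.2],
              [0.6, 0.3, 0.1],
              [0.2, 0.2, 0.6],
              [0.5, 0.4, 0.1]])
# One-hot labels for the same 4 samples
t = np.array([[0, 1, 0],
              [1, 0, 0],
              [0, 1, 0],
              [1, 0, 0]])

pred = np.argmax(y, axis=1)   # index of the largest probability per row -> [1 0 2 0]
label = np.argmax(t, axis=1)  # position of the 1 in each one-hot row -> [1 0 1 0]
accuracy = np.sum(pred == label) / float(y.shape[0])
print(pred, label, accuracy)  # 3 of 4 correct -> 0.75
```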

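5

```python
def numerical_gradient(self, x, t):
    loss_W = lambda W: self.loss(x, t)

    grads = {}
    grads['W1'] = numerical_gradient(loss_W, self.params['W1'])
    grads['b1'] = numerical_gradient(loss_W, self.params['b1'])
    grads['W2'] = numerical_gradient(loss_W, self.params['W2'])
    grads['b2'] = numerical_gradient(loss_W, self.params['b2'])

    return grads
```

Compute the numerical gradient. We take the partial derivative of the loss with respect to each parameter, and together these form the gradient of the loss with respect to all network parameters. The lambda loss_W ignores its argument W. This works because numerical_gradient perturbs the entries of the parameter array in place, so self.loss(x, t) sees each change. The method needs two loss evaluations per parameter entry, which makes it very slow for a network of this size.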
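A minimal central-difference helper makes the in-place mechanism concrete. It is our own sketch and is assumed to behave like `common.gradient.numerical_gradient`, but it is not that module's code. The usage part reproduces the `loss_W` pattern: the closure ignores its argument and still sees every perturbation.

```python
import numpy as np

def numerical_gradient(f, x, h=1e-4):
    """Central-difference gradient of f at array x; x is perturbed in place and restored."""
    grad = np.zeros_like(x)
    for idx in np.ndindex(x.shape):
        original = x[idx]
        x[idx] = original + h
        f_plus = f(x)
        x[idx] = original - h
        f_minus = f(x)
        grad[idx] = (f_plus - f_minus) / (2 * h)
        x[idx] = original  # restore the entry
    return grad

# Like loss_W in TwoLayerNet, this closure ignores its argument,
# but it reads W directly, so it sees each in-place change.
W = np.array([[1.0, 2.0], [3.0, 4.0]])
loss_W = lambda _: np.sum(W ** 2)
g = numerical_gradient(loss_W, W)
print(g)  # approximately 2 * W
```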

Mini-batch training with this network

Here we use the MNIST handwritten digit dataset as the training data.

```python
# coding: utf-8
import sys, os
sys.path.append(os.pardir)  # allow importing modules from the parent directory
import numpy as np
import matplotlib.pyplot as plt
from dataset.mnist import load_mnist
from two_layer_net import TwoLayerNet  # the class above, saved as two_layer_net.py

# Load the data
(x_train, t_train), (x_test, t_test) = load_mnist(normalize=True, one_hot_label=True)

network = TwoLayerNet(input_size=784, hidden_size=100, output_size=10)

iters_num = 100  # number of iterations (set as appropriate)
train_size = x_train.shape[0]
batch_size = 10000
learning_rate = 0.5

train_loss_list = []
train_acc_list = []
test_acc_list = []

# Iterations per epoch; integer division keeps the "i % iter_per_epoch" check
# firing once per epoch even when batch_size does not divide train_size
iter_per_epoch = max(train_size // batch_size, 1)

for i in range(iters_num):
    # Sample a random mini-batch
    batch_mask = np.random.choice(train_size, batch_size)
    x_batch = x_train[batch_mask]
    t_batch = t_train[batch_mask]

    # Compute gradients
    # grad = network.numerical_gradient(x_batch, t_batch)
    grad = network.gradient(x_batch, t_batch)

    # Update parameters
    for key in ('W1', 'b1', 'W2', 'b2'):
        network.params[key] -= learning_rate * grad[key]

    loss = network.loss(x_batch, t_batch)
    train_loss_list.append(loss)

    # Once per epoch, measure accuracy on the full training and test sets
    if i % iter_per_epoch == 0:
        train_acc = network.accuracy(x_train, t_train)
        test_acc = network.accuracy(x_test, t_test)
        train_acc_list.append(train_acc)
        test_acc_list.append(test_acc)
        print("train acc, test acc | " + str(train_acc) + ", " + str(test_acc))

# Plot the accuracy curves
x = np.arange(len(train_acc_list))
plt.plot(x, train_acc_list, label='train acc')
plt.plot(x, test_acc_list, label='test acc', linestyle='--')
plt.xlabel("epochs")
plt.ylabel("accuracy")
plt.ylim(0, 1.0)
plt.legend(loc='lower right')
plt.show()
```

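1

```python
network = TwoLayerNet(input_size=784, hidden_size=100, output_size=10)
```

Create the network. The 784 input neurons correspond to a 28 × 28 image flattened into a vector. The hidden layer has 100 neurons. The output layer has 10 neurons, one for each digit 0-9.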
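The input size of 784 is just 28 × 28. The illustration below uses a hypothetical all-zero batch. Flattening each image into one row gives exactly the input shape the network expects.

```python
import numpy as np

# A hypothetical batch of 5 grayscale MNIST-sized images (28 x 28 pixels each)
images = np.zeros((5, 28, 28), dtype=np.float32)
x = images.reshape(images.shape[0], -1)  # flatten each image into one row of pixels
print(x.shape)  # (5, 784)
```

Each row can then be multiplied by a 784 × 100 matrix `W1` in `np.dot(x, W1)`.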

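2

```python
iters_num = 100  # number of iterations (set as appropriate)
train_size = x_train.shape[0]
batch_size = 10000
learning_rate = 0.5
```

Training settings: the number of iterations, the size of the training set, the number of samples drawn for each mini-batch, and the learning rate. Of these, iters_num, batch_size and learning_rate are hyperparameters chosen by hand. train_size is simply read from the data (60,000 images for MNIST).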
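A bit of arithmetic shows how much data these settings actually consume. The training-set size of 60,000 is the standard MNIST split (the script reads it from `x_train.shape[0]`). The other values are the ones above.

```python
train_size = 60000   # standard MNIST training-set size
batch_size = 10000
iters_num = 100

samples_seen = iters_num * batch_size             # 1,000,000 sampled examples in total
epochs = samples_seen / train_size                # about 16.7 epochs' worth of samples
iter_per_epoch = max(train_size / batch_size, 1)  # 6.0 iterations per epoch
print(samples_seen, round(epochs, 1), iter_per_epoch)
```

The unusually large batch means only 100 parameter updates, yet each update averages the gradient over 10,000 examples.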

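3

```python
batch_mask = np.random.choice(train_size, batch_size)
x_batch = x_train[batch_mask]
t_batch = t_train[batch_mask]
```

Randomly draw a mini-batch: batch_size indices into the training set, plus the corresponding training images and labels. np.random.choice samples the indices with replacement by default.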
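Sampling with replacement means one mini-batch can repeat examples. To make this reproducible, the sketch below uses NumPy's `default_rng` generator, which is an assumption. It counts the distinct indices for with-replacement sampling and for a permutation-based alternative that never repeats.

```python
import numpy as np

rng = np.random.default_rng(0)
train_size, batch_size = 60000, 10000

# Like np.random.choice(train_size, batch_size): indices drawn WITH replacement
with_repl = rng.choice(train_size, batch_size)
# Alternative: slice a random permutation, so every index is distinct
without_repl = rng.permutation(train_size)[:batch_size]

print(len(np.unique(with_repl)), len(np.unique(without_repl)))
```

With 10,000 draws from 60,000 indices, roughly 8% of a with-replacement batch are repeats. For SGD this rarely matters. Slicing one shuffled permutation into consecutive batches is a common alternative when each epoch should visit every example exactly once.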

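4

```python
# Compute gradients
# grad = network.numerical_gradient(x_batch, t_batch)
grad = network.gradient(x_batch, t_batch)

# Update parameters
for key in ('W1', 'b1', 'W2', 'b2'):
    network.params[key] -= learning_rate * grad[key]
```

Compute the gradients and update the parameters. The commented-out numerical_gradient would give approximately the same gradients. network.gradient computes them analytically by backpropagation, which is far faster.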
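A common sanity check is to compare the numerical and backpropagation gradients on a tiny network. The self-contained sketch below does that for the same architecture: a sigmoid hidden layer, a softmax output and mean cross-entropy. The helper names `loss`, `backprop_gradient` and `numerical_gradient` are hypothetical stand-ins, and the layer sizes are arbitrary.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def softmax(x):
    # Row-wise softmax, shifted by the row maximum for numerical stability
    x = x - np.max(x, axis=1, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=1, keepdims=True)

def loss(params, x, t):
    # Forward pass, then cross-entropy averaged over the batch
    # (no log epsilon needed: softmax outputs stay well above 0 for these small inputs)
    z1 = sigmoid(x @ params['W1'] + params['b1'])
    y = softmax(z1 @ params['W2'] + params['b2'])
    return -np.sum(t * np.log(y)) / x.shape[0]

def backprop_gradient(params, x, t):
    # Analytic gradients of loss() via backpropagation
    z1 = sigmoid(x @ params['W1'] + params['b1'])
    y = softmax(z1 @ params['W2'] + params['b2'])
    dy = (y - t) / x.shape[0]                      # softmax + cross-entropy
    da1 = (dy @ params['W2'].T) * z1 * (1.0 - z1)  # back through the sigmoid
    return {'W1': x.T @ da1, 'b1': da1.sum(axis=0),
            'W2': z1.T @ dy, 'b2': dy.sum(axis=0)}

def numerical_gradient(f, arr, h=1e-4):
    # Central differences; arr is modified in place and restored
    grad = np.zeros_like(arr)
    for idx in np.ndindex(arr.shape):
        original = arr[idx]
        arr[idx] = original + h
        f_plus = f(arr)
        arr[idx] = original - h
        f_minus = f(arr)
        grad[idx] = (f_plus - f_minus) / (2 * h)
        arr[idx] = original
    return grad

rng = np.random.default_rng(0)
params = {'W1': 0.5 * rng.standard_normal((4, 5)), 'b1': np.zeros(5),
          'W2': 0.5 * rng.standard_normal((5, 3)), 'b2': np.zeros(3)}
x = rng.standard_normal((6, 4))
t = np.eye(3)[rng.integers(0, 3, size=6)]  # random one-hot labels

analytic = backprop_gradient(params, x, t)
max_diff = {}
for key in ('W1', 'b1', 'W2', 'b2'):
    numeric = numerical_gradient(lambda _: loss(params, x, t), params[key])
    max_diff[key] = float(np.max(np.abs(numeric - analytic[key])))
    print(key, max_diff[key])
```

The differences should be tiny compared with the gradient values themselves. A large mismatch usually points to a mistake in the backward pass.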

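5

```python
if i % iter_per_epoch == 0:
    train_acc = network.accuracy(x_train, t_train)
    test_acc = network.accuracy(x_test, t_test)
    train_acc_list.append(train_acc)
    test_acc_list.append(test_acc)
    print("train acc, test acc | " + str(train_acc) + ", " + str(test_acc))
```

To monitor generalization and catch overfitting, we measure the network's accuracy on the full training set and on the test set once per epoch. If the two accuracies stay close, the network performs about as well on unseen data as on the data it was trained on. One epoch here means having processed as many samples as train_size. That takes iter_per_epoch iterations, which is 6 with train_size = 60,000 and batch_size = 10,000.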
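You can simulate the check `i % iter_per_epoch == 0` without training. With the settings above it fires at iterations 0, 6, 12, ..., 96, which matches the 17 result lines below.

The second part uses a hypothetical batch size of 256 to show a pitfall of computing `iter_per_epoch` with `/`. The float 234.375 only divides multiples of 1875, so the check fires far less often than once per epoch. Using `//` restores a check every 234 iterations.

```python
train_size, batch_size, iters_num = 60000, 10000, 100  # MNIST size and the settings above

iter_per_epoch = max(train_size / batch_size, 1)  # 6.0
eval_iters = [i for i in range(iters_num) if i % iter_per_epoch == 0]
print(len(eval_iters), eval_iters[:4])  # 17 [0, 6, 12, 18]

# Pitfall with a batch size that does not divide train_size (hypothetical 256)
float_step = max(60000 / 256, 1)   # 234.375
float_checks = [i for i in range(5000) if i % float_step == 0]
int_step = max(60000 // 256, 1)    # 234
int_checks = [i for i in range(5000) if i % int_step == 0]
print(float_checks)     # [0, 1875, 3750]
print(int_checks[:3])   # [0, 234, 468]
```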

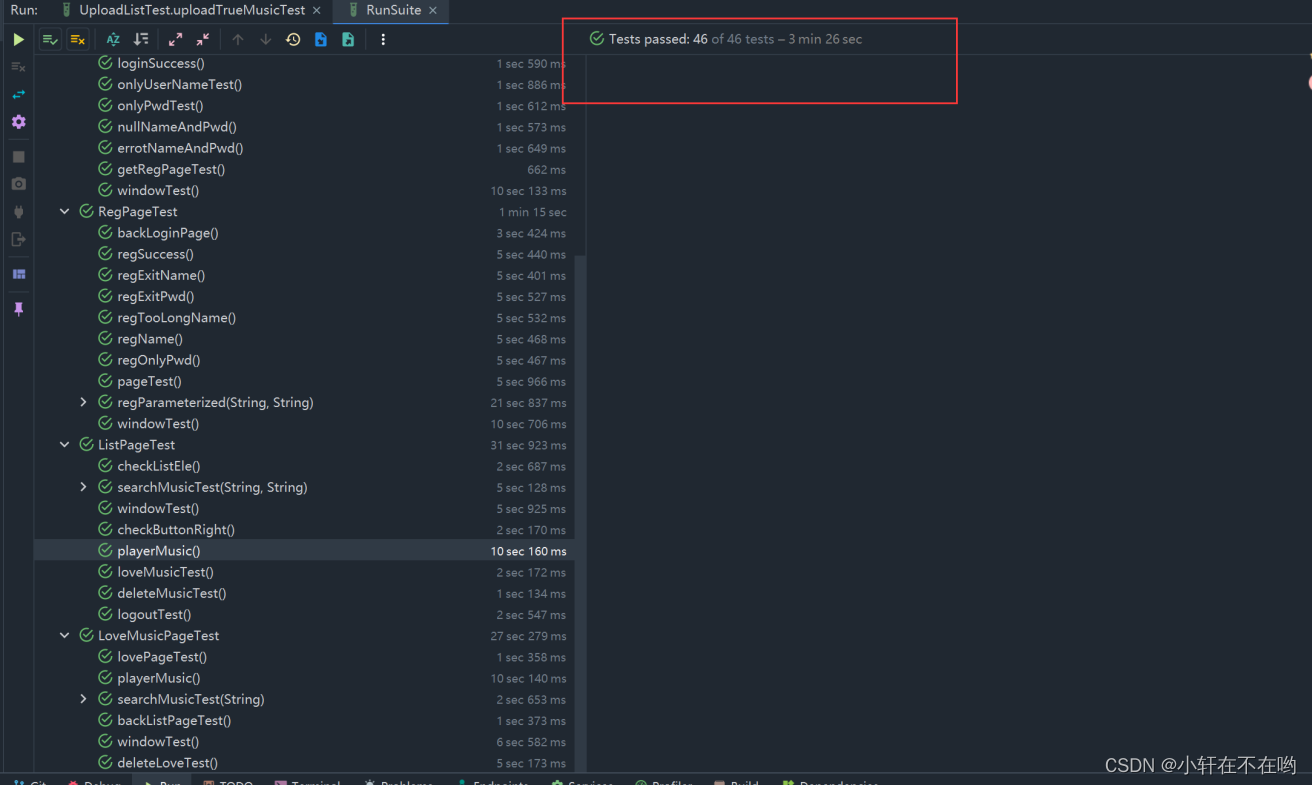

Results (learning_rate = 0.5, hidden_size = 100, batch_size = 10000):

```
train acc, test acc | 0.11236666666666667, 0.1135
train acc, test acc | 0.11236666666666667, 0.1135
train acc, test acc | 0.2014, 0.2007
train acc, test acc | 0.2825, 0.2805
train acc, test acc | 0.2062, 0.2052
train acc, test acc | 0.38693333333333335, 0.3913
train acc, test acc | 0.34846666666666665, 0.3494
train acc, test acc | 0.43978333333333336, 0.4341
train acc, test acc | 0.5131166666666667, 0.5132
train acc, test acc | 0.6023166666666666, 0.606
train acc, test acc | 0.6332666666666666, 0.6387
train acc, test acc | 0.6871833333333334, 0.6881
train acc, test acc | 0.68835, 0.6933
train acc, test acc | 0.7204666666666667, 0.7224
train acc, test acc | 0.7432166666666666, 0.7444
train acc, test acc | 0.7611333333333333, 0.768
train acc, test acc | 0.7908833333333334, 0.7933
```

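Prediction accuracy rises overall from about 11% to about 79%, with some dips at the early checks. This shows that training is working. The train acc and test acc curves almost coincide, so there is no sign of overfitting at this stage: the network does about as well on the unseen test data as on the training data.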
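The claim that the two curves almost coincide can be quantified directly from the logged results. The snippet below copies the 17 printed (train acc, test acc) pairs and computes the train/test gap at each check.

```python
# (train acc, test acc) pairs copied from the results log
log = [
    (0.11236666666666667, 0.1135), (0.11236666666666667, 0.1135),
    (0.2014, 0.2007), (0.2825, 0.2805), (0.2062, 0.2052),
    (0.38693333333333335, 0.3913), (0.34846666666666665, 0.3494),
    (0.43978333333333336, 0.4341), (0.5131166666666667, 0.5132),
    (0.6023166666666666, 0.606), (0.6332666666666666, 0.6387),
    (0.6871833333333334, 0.6881), (0.68835, 0.6933),
    (0.7204666666666667, 0.7224), (0.7432166666666666, 0.7444),
    (0.7611333333333333, 0.768), (0.7908833333333334, 0.7933),
]
gaps = [abs(train - test) for train, test in log]
print(len(log), round(max(gaps), 4))  # 17 0.0069
```

The largest gap is below 0.007, less than one percentage point. This supports the reading that there is no overfitting at this point in training.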