一、系统环境初始化

一)架构设计

所有节点都操作:3个master(etcd集群三个节点)和2个node

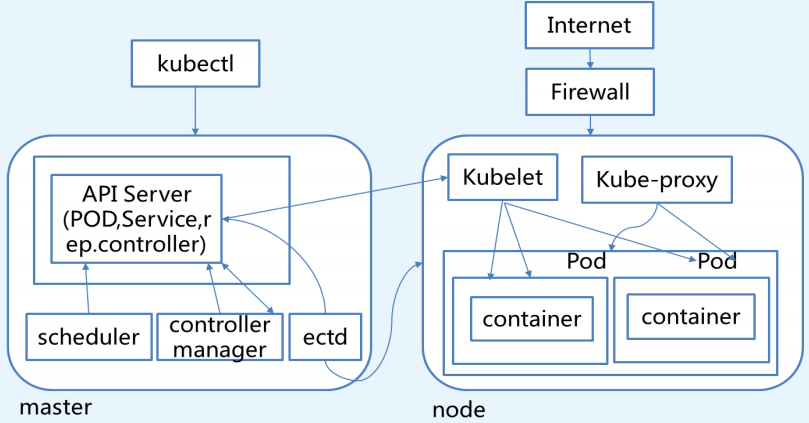

1、K8s服务调用如图

2、各组件说明

1、API Server

- 供Kubernetes API接口,主要处理 REST操作以及更新ETCD中的对象

- 所有资源增删改查的唯一入口。

2、Scheduler

- 资源调度,负责Pod到Node的调度。

3、Controller Manager

- 所有其他群集级别的功能,目前由控制器Manager执行。资源对象的自动化控制中心。

4、ETCD

- 所有持久化的状态信息存储在ETCD中。etcd组件作为一个高可用、强一致性的服务发现存储仓库。

5、Kubelet

- 管理Pods以及容器、镜像、 Volume等,实现对集群对节点的管理。

6、Kube-proxy

- 提供网络代理以及负载均衡,实现与Service通信

7、Docker Engine

- 负责节点的容器的管理工作

3、架构设计主机信息表

二)设置主机名、分发集群主机映射

1、设置主机名(根据实际需要创建)

hostnamectl --static set-hostname ops-k8s-master01

hostnamectl --static set-hostname ops-k8s-master02

hostnamectl --static set-hostname ops-k8s-master03

hostnamectl --static set-hostname ops-k8s-node01

hostnamectl --static set-hostname ops-k8s-node02

2. Map the hostnames

Add the host mappings on the local machine

cat <<EOF>>/etc/hosts

10.0.0.10 ops-k8s-master01 ops-k8s-master01.local.com

10.0.0.11 ops-k8s-master02 ops-k8s-master02.local.com

10.0.0.12 ops-k8s-master03 ops-k8s-master03.local.com

10.0.0.13 ops-k8s-node01 ops-k8s-node01.local.com

10.0.0.14 ops-k8s-node02 ops-k8s-node02.local.com

10.0.0.15 ops-k8s-harbor01 harbor01.local.com

10.0.0.16 ops-k8s-harbor02 harbor02.local.com

EOF

Distribute the hosts file to the other nodes in the cluster

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02 ops-k8s-harbor01 ops-k8s-harbor02;do scp /etc/hosts $i:/etc/;done
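Because the heredoc above appends to /etc/hosts, running it twice leaves duplicate entries. The following is a minimal sketch of an idempotent alternative; the function name `add_host_entry` and the `HOSTS_FILE` variable are illustrative, and the demo writes to a scratch file rather than the real /etc/hosts.

```shell
# Append "IP names..." to a hosts file only if that IP is not listed yet.
# HOSTS_FILE points at a scratch file so the sketch is safe to try out.
HOSTS_FILE=$(mktemp)

add_host_entry() {
    ip=$1; shift
    # -F treats the dots literally; -w keeps 10.0.0.1 from matching inside 10.0.0.10.
    if grep -qwF -- "$ip" "$HOSTS_FILE"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$*" >> "$HOSTS_FILE"
}

add_host_entry 10.0.0.10 ops-k8s-master01 ops-k8s-master01.local.com
add_host_entry 10.0.0.11 ops-k8s-master02 ops-k8s-master02.local.com
add_host_entry 10.0.0.10 ops-k8s-master01 ops-k8s-master01.local.com   # no-op on re-run
cat "$HOSTS_FILE"
```

To use it for real, set `HOSTS_FILE=/etc/hosts` before calling the function and run it as root.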

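(3) Passwordless SSH Login Across the Cluster

1. Create a key pair

ssh-keygen # press Enter at every prompt

2. Distribute the public key (including to the local machine)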

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02 ops-k8s-harbor01 ops-k8s-harbor02;do ssh-copy-id $i;done

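(4) K8s Environment Initialization

Stop the firewall, disable swap, disable SELinux, set kernel parameters, install dependency packages, and configure NTP (a reboot is recommended once the configuration is done).

1. Initialization script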

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02 ops-k8s-harbor01 ops-k8s-harbor02;do ssh -n $i "mkdir -p /opt/scripts/shell && exit";done

cat>/opt/scripts/shell/init_k8s_env.sh<<EOF

#!/bin/bash

#by wzs at 20180419

#auto install k8s

#1.stop firewall

systemctl stop firewalld

systemctl disable firewalld

#2.stop swap

swapoff -a

sed -i 's/.*swap.*/#&/' /etc/fstab

#3.stop selinux

setenforce 0

sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/sysconfig/selinux

sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/sysconfig/selinux

sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/selinux/config

#4.install base packages

yum install -y net-tools vim lrzsz tree screen lsof tcpdump wget nmap dos2unix nc traceroute telnet nfs-utils mailx pciutils ftp ksh lvm2 gcc gcc-c++ dmidecode kde-l10n-Chinese* ntp

#5.set ntpdate

systemctl enable ntpdate.service

echo '*/30 * * * * /usr/sbin/ntpdate time7.aliyun.com >/dev/null 2>&1' > /tmp/crontab2.tmp

crontab /tmp/crontab2.tmp

systemctl start ntpdate.service

#6.set security limit

echo "* soft nofile 65536" >> /etc/security/limits.conf

echo "* hard nofile 65536" >> /etc/security/limits.conf

echo "* soft nproc 65536" >> /etc/security/limits.conf

echo "* hard nproc 65536" >> /etc/security/limits.conf

echo "* soft memlock unlimited" >> /etc/security/limits.conf

echo "* hard memlock unlimited" >> /etc/security/limits.conf

EOF
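The `sed` command in step 2 of the script prefixes every line that mentions swap with `#`, including lines that are already commented, so each re-run adds another `#`. A minimal sketch of a re-runnable variant follows; it assumes GNU sed (as shipped with CentOS), the function name is illustrative, and the demo works on a scratch file with made-up fstab entries.

```shell
# Comment out active swap entries only; lines already starting with '#'
# are left alone, so the command can be re-run safely.
FSTAB=$(mktemp)   # scratch file for the demo, not the real /etc/fstab
cat > "$FSTAB" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

disable_swap_entries() {
    # Outer address: skip commented lines. Inner address: lines with a "swap" field.
    sed -i -E '/^[[:space:]]*#/!{/[[:space:]]swap[[:space:]]/s/^/#/}' "$1"
}

disable_swap_entries "$FSTAB"
disable_swap_entries "$FSTAB"   # second run changes nothing
cat "$FSTAB"
```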

2. Send the initialization script to the other nodes

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02 ops-k8s-harbor01 ops-k8s-harbor02;do scp /opt/scripts/shell/init_k8s_env.sh $i:/opt/scripts/shell/;done

3. Run the initialization script

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02 ops-k8s-harbor01 ops-k8s-harbor02;do ssh -n $i "/bin/bash /opt/scripts/shell/init_k8s_env.sh && exit";done
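The loop above keeps going when a node fails, and the failure is easy to miss in the output. As a rough sketch, the same loop can collect the nodes where the command returned a non-zero status. `NODES`, `REMOTE`, and `run_on_nodes` are illustrative names; `REMOTE` defaults to `ssh -n`, and the demo sets it to `echo` so that nothing is actually contacted.

```shell
# Run one command on every node and list the nodes where it failed.
NODES=${NODES:-"ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02 ops-k8s-harbor01 ops-k8s-harbor02"}

run_on_nodes() {
    cmd=$1
    failed=""
    for node in $NODES; do
        # The expansion is left unquoted on purpose so "ssh -n" splits into two words.
        if ! ${REMOTE:-ssh -n} "$node" "$cmd"; then
            failed="$failed $node"
        fi
    done
    if [ -n "$failed" ]; then
        echo "failed on:$failed" >&2
        return 1
    fi
}

# Dry run: prints one line per node instead of connecting.
REMOTE=echo run_on_nodes "/bin/bash /opt/scripts/shell/init_k8s_env.sh"
```

Dropping the `REMOTE=echo` prefix runs the command over SSH on each node.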

(5) Install Docker

1. Use a Docker repository mirror hosted in China

cd /etc/yum.repos.d/

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

2. Install Docker, start it, and enable it at boot

yum install -y docker-ce

systemctl enable docker

systemctl start docker

systemctl status docker

Supplement:

1. Uninstall the old version

yum list installed | grep docker

systemctl stop docker

yum -y remove docker.x86_64 docker-client.x86_64 docker-common.x86_64

## Delete containers and images

rm -rf /var/lib/docker

# Run on the other nodes

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02 ops-k8s-harbor01 ops-k8s-harbor02;do ssh -n $i "systemctl stop docker && yum -y remove docker.x86_64 docker-client.x86_64 docker-common.x86_64 && rm -rf /var/lib/docker && exit";done

2. Install the new version

cat >/opt/scripts/shell/install_docker.sh <<'EOF'

#!/bin/sh

###############################################################################

#

#VARS INIT

#

###############################################################################

###############################################################################

#

#Confirm Env

#

###############################################################################

date

echo "## Install Preconfirm"

echo "## Uname"

uname -r

echo

echo "## OS bit"

getconf LONG_BIT

echo

###############################################################################

#

#INSTALL yum-utils

#

###############################################################################

date

echo "## Install begins : yum-utils"

yum install -y yum-utils >/dev/null 2>&1

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Install ends : yum-utils"

echo

###############################################################################

#

#Setting yum-config-manager

#

###############################################################################

echo "## Setting begins : yum-config-manager"

yum-config-manager \

--add-repo \

https://download.docker.com/linux/centos/docker-ce.repo >/dev/null 2>&1

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Setting ends : yum-config-manager"

echo

###############################################################################

#

#Update Package Cache

#

###############################################################################

echo "## Setting begins : Update package cache"

yum makecache fast >/dev/null 2>&1

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Setting ends : Update package cache"

echo

###############################################################################

#

#INSTALL Docker-engine

#

###############################################################################

date

echo "## Install begins : docker-ce"

yum install -y docker-ce

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Install ends : docker-ce"

date

echo

###############################################################################

#

#Stop Firewalld

#

###############################################################################

echo "## Setting begins : stop firewall"

systemctl stop firewalld

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

systemctl disable firewalld

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Setting ends : stop firewall"

echo

###############################################################################

#

#Clear Iptable rules

#

###############################################################################

echo "## Setting begins : clear iptable rules"

iptables -F

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Setting ends : clear iptable rules"

echo

###############################################################################

#

#Enable docker

#

###############################################################################

echo "## Setting begins : systemctl enable docker"

systemctl enable docker

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Setting ends : systemctl enable docker"

echo

###############################################################################

#

#start docker

#

###############################################################################

echo "## Setting begins : systemctl restart docker"

systemctl restart docker

if [ $? -ne 0 ]; then

echo "Install failed..."

exit 1

fi

echo "## Setting ends : systemctl restart docker"

echo

###############################################################################

#

#confirm docker version

#

###############################################################################

echo "## docker info"

docker info

echo

echo "## docker version"

docker version

EOF
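The generated script checks `$?` after each step, so it matters whether `$?` reaches the file literally. With an unquoted heredoc delimiter the shell expands `$?` while writing the file; with a quoted delimiter it does not. The sketch below shows the difference on a one-line script written to a scratch directory.

```shell
# Write the same one-line script twice: once through an unquoted heredoc,
# once through a quoted one, then compare what actually landed on disk.
tmpdir=$(mktemp -d)

cat > "$tmpdir/unquoted.sh" <<EOF
if [ $? -ne 0 ]; then echo failed; fi
EOF

cat > "$tmpdir/quoted.sh" <<'EOF'
if [ $? -ne 0 ]; then echo failed; fi
EOF

cat "$tmpdir/unquoted.sh"   # $? was replaced by the writing shell's last exit status
cat "$tmpdir/quoted.sh"     # $? is preserved for the generated script to evaluate
```

In the unquoted case the check compares a constant and can never detect a failed step.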

3. Distribute the script to the other nodes and run the installation

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp /opt/scripts/shell/install_docker.sh $i:/opt/scripts/shell/;done

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do ssh -n $i "/bin/bash /opt/scripts/shell/install_docker.sh && exit";done

(6) Prepare the Software Packages and Management Directories

1. Create the management directories

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do ssh -n $i "mkdir -p /opt/kubernetes/{cfg,bin,ssl,log,yaml} && exit";done

Directory layout

kubernetes/

├── bin # binary executables; this directory is added to PATH

├── cfg # configuration files

├── log # log files

├── ssl # cluster certificates

└── yaml # YAML manifests

5 directories, 0 files

2. Download and unpack the software package

Download location: Baidu Netdisk

cd /usr/local/src

# Upload the software package to this directory

unzip -d /usr/local/src k8s-v1.10.1-manual.zip

(7) Create the K8s Environment Variable

Run on every node in the cluster

echo 'export PATH=$PATH:/opt/kubernetes/bin' >> /root/.bash_profile

source /root/.bash_profile
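Appending to the profile on every run stacks up duplicate PATH lines. A minimal sketch of an append-once helper is shown below; `append_path_once` is an illustrative name, and the demo targets a scratch file instead of /root/.bash_profile.

```shell
# Add a directory to PATH in a profile file exactly once.
append_path_once() {
    profile=$1 dir=$2
    # \$PATH stays literal so it is expanded at login time, not now.
    line="export PATH=\$PATH:$dir"
    grep -qxF -- "$line" "$profile" 2>/dev/null || printf '%s\n' "$line" >> "$profile"
}

demo_profile=$(mktemp)
append_path_once "$demo_profile" /opt/kubernetes/bin
append_path_once "$demo_profile" /opt/kubernetes/bin   # second call adds nothing
cat "$demo_profile"
```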

II. Create the CA Certificates Manually

(1) Install CFSSL

1. Download the cfssl binaries

cd /usr/local/src

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

2. Make the cfssl binaries executable and move them into the directory that was added to PATH

chmod +x cfssl*

mv cfssl-certinfo_linux-amd64 /opt/kubernetes/bin/cfssl-certinfo

mv cfssljson_linux-amd64 /opt/kubernetes/bin/cfssljson

mv cfssl_linux-amd64 /opt/kubernetes/bin/cfssl

3. Copy the cfssl binaries to the other nodes. If your environment has more nodes, copy them to every one.

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp -r /opt/kubernetes/bin/cfssl* $i:/opt/kubernetes/bin/;done

(2) Initialize cfssl

# Create a directory for managing certificates

mkdir -p /usr/local/src/ssl && cd /usr/local/src/ssl

cfssl print-defaults config > config.json

cfssl print-defaults csr > csr.json

(3) Create the JSON Configuration File Used to Generate the CA

cat >ca-config.json<<EOF

{

"signing": {

"default": {

"expiry": "175200h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "175200h"

}

}

}

}

EOF
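The `expiry` value is given in hours, which is hard to read at a glance. A quick conversion with shell arithmetic (using 365-day years as an approximation):

```shell
# Convert the cfssl "expiry" value from hours to days and approximate years.
expiry_hours=175200
echo "$(( expiry_hours / 24 )) days, about $(( expiry_hours / 24 / 365 )) years"
# prints: 7300 days, about 20 years
```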

(4) Create the JSON Configuration File for the CA Certificate Signing Request (CSR)

cat >ca-csr.json<<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

(5) Generate the CA Certificate (ca.pem) and Key (ca-key.pem)

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

ls -l ca*

(6) Distribute the Certificates

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp -r ca.csr ca.pem ca-key.pem ca-config.json $i:/opt/kubernetes/ssl/;done

III. Deploy the etcd Cluster Manually

etcd downloads: the Releases page of the etcd-io/etcd repository on GitHub

(1) Prepare the etcd Package

cd /usr/local/src/

wget https://github.com/coreos/etcd/releases/download/v3.2.18/etcd-v3.2.18-linux-amd64.tar.gz

tar xf etcd-v3.2.18-linux-amd64.tar.gz

cd etcd-v3.2.18-linux-amd64

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do scp etcd etcdctl $i:/opt/kubernetes/bin/;done

(2) Create the etcd Certificate Signing Request

cd /usr/local/src/ssl

cat>etcd-csr.json<<EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"10.0.0.10",

"10.0.0.11",

"10.0.0.12"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}]

}

EOF

(3) Generate the etcd Certificate and Private Key

cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes etcd-csr.json | cfssljson -bare etcd

# The following certificate files are generated

ls -l etcd*

(4) Copy the Certificates to /opt/kubernetes/ssl

The loop below also sends the certificates to the other etcd cluster nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do scp etcd*.pem $i:/opt/kubernetes/ssl/;done

(5) Write the etcd Configuration File

cat>/opt/kubernetes/cfg/etcd.conf<<EOF

#[member]

ETCD_NAME="ops-k8s-master01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

#ETCD_SNAPSHOT_COUNTER="10000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

ETCD_LISTEN_PEER_URLS="https://10.0.0.10:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.10:2379,https://127.0.0.1:2379"

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

#ETCD_CORS=""

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.10:2380"

# if you use different ETCD_NAME (e.g. test),

# set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."

ETCD_INITIAL_CLUSTER="ops-k8s-master01=https://10.0.0.10:2380,ops-k8s-master02=https://10.0.0.11:2380,ops-k8s-master03=https://10.0.0.12:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="k8s-etcd-cluster"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.10:2379"

#[security]

ETCD_CLIENT_CERT_AUTH="true"

ETCD_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

ETCD_PEER_CLIENT_CERT_AUTH="true"

ETCD_PEER_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_PEER_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

EOF

(6) Create the etcd systemd Service

cat>/etc/systemd/system/etcd.service<<'EOF'

[Unit]

Description=Etcd Server

After=network.target

[Service]

WorkingDirectory=/var/lib/etcd

EnvironmentFile=-/opt/kubernetes/cfg/etcd.conf

# set GOMAXPROCS to number of processors

ExecStart=/bin/bash -c "GOMAXPROCS=$(nproc) /opt/kubernetes/bin/etcd"

Type=notify

[Install]

WantedBy=multi-user.target

EOF

(7) Send the Files to the Other Cluster Nodes and Start the Service

1. Send the files to the other cluster nodes

for i in ops-k8s-master02 ops-k8s-master03;do scp /opt/kubernetes/cfg/etcd.conf $i:/opt/kubernetes/cfg/;done

for i in ops-k8s-master02 ops-k8s-master03;do scp /etc/systemd/system/etcd.service $i:/etc/systemd/system/;done

Note: on each node, change the node's own IP address and the node name in /opt/kubernetes/cfg/etcd.conf
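A blanket search-and-replace of the IP address would also rewrite ETCD_INITIAL_CLUSTER, which must keep all three member addresses. The sketch below limits the change to the member name and the node's own listen/advertise URLs. `render_etcd_conf` is an illustrative helper, it assumes the source file is the master01 version with 10.0.0.10, and the demo runs on a trimmed-down copy.

```shell
# Derive a node-specific etcd.conf from the master01 version.
# Only ETCD_NAME and the ETCD_LISTEN_*/ETCD_*ADVERTISE_* lines are changed;
# ETCD_INITIAL_CLUSTER is left untouched.
render_etcd_conf() {
    src=$1 name=$2 ip=$3
    sed -E \
        -e "s|^ETCD_NAME=.*|ETCD_NAME=\"$name\"|" \
        -e "/^ETCD_(LISTEN|INITIAL_ADVERTISE|ADVERTISE)_/ s|//10\.0\.0\.10:|//$ip:|g" \
        "$src"
}

# Demo on a shortened copy of the configuration.
sample=$(mktemp)
cat > "$sample" <<'EOF'
ETCD_NAME="ops-k8s-master01"
ETCD_LISTEN_PEER_URLS="https://10.0.0.10:2380"
ETCD_LISTEN_CLIENT_URLS="https://10.0.0.10:2379,https://127.0.0.1:2379"
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.10:2380"
ETCD_INITIAL_CLUSTER="ops-k8s-master01=https://10.0.0.10:2380,ops-k8s-master02=https://10.0.0.11:2380,ops-k8s-master03=https://10.0.0.12:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.10:2379"
EOF

render_etcd_conf "$sample" ops-k8s-master02 10.0.0.11
```

One possible way to apply it: `render_etcd_conf /opt/kubernetes/cfg/etcd.conf ops-k8s-master02 10.0.0.11 | ssh ops-k8s-master02 'cat > /opt/kubernetes/cfg/etcd.conf'`.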

2. Create the directory the service needs, then start the service

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do ssh -n $i "mkdir -p /var/lib/etcd && exit";done

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

systemctl status etcd

Note: repeat the steps above on every etcd node until the etcd service is running on all machines.

(8) Verify the etcd Cluster

etcdctl --endpoints=https://10.0.0.10:2379 \

--ca-file=/opt/kubernetes/ssl/ca.pem \

--cert-file=/opt/kubernetes/ssl/etcd.pem \

--key-file=/opt/kubernetes/ssl/etcd-key.pem cluster-health

# Expected result

member 69c08d868bbff6f1 is healthy: got healthy result from https://10.0.0.12:2379

member a87115828af54fe6 is healthy: got healthy result from https://10.0.0.10:2379

member f96d77d9089bd1e3 is healthy: got healthy result from https://10.0.0.11:2379

cluster is healthy

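## If the output looks like the above, the cluster is healthy

IV. Master Node Deployment

For a multi-master cluster, use the VIP address in place of a single master's IP.

(1) Install and Configure keepalived

1. Install keepalived on all master nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do ssh -n $i "yum install -y keepalived && cp /etc/keepalived/keepalived.conf{,.bak} && exit";done

2. Edit the configuration file

Notes:

1. If your network interface name differs from the one used here, change it accordingly.

2. Remember to adjust the settings that differ between the keepalived master and the backups.

1. keepalived.conf on ops-k8s-master01 (the keepalived master)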

cat <<EOF > /etc/keepalived/keepalived.conf

global_defs {

router_id LVS_k8s

}

vrrp_script CheckK8sMaster {

script "curl -k https://10.0.0.7:6443"

interval 3

timeout 9

fall 2

rise 2

}

vrrp_instance VI_1 {

state MASTER

interface ens192

virtual_router_id 61

priority 100

advert_int 1

mcast_src_ip 10.0.0.10

nopreempt

authentication {

auth_type PASS

auth_pass sqP05dQgMSlzrxHj

}

unicast_peer {

10.0.0.11

10.0.0.12

}

virtual_ipaddress {

10.0.0.7/24

}

track_script {

CheckK8sMaster

}

}

EOF

2. keepalived.conf on ops-k8s-master02 (keepalived backup01)

cat <<EOF > /etc/keepalived/keepalived.conf

global_defs {

router_id LVS_k8s

}

vrrp_script CheckK8sMaster {

script "curl -k https://10.0.0.7:6443"

interval 3

timeout 9

fall 2

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens192

virtual_router_id 61

priority 90

advert_int 1

mcast_src_ip 10.0.0.11

nopreempt

authentication {

auth_type PASS

auth_pass sqP05dQgMSlzrxHj

}

unicast_peer {

10.0.0.10

10.0.0.12

}

virtual_ipaddress {

10.0.0.7/24

}

track_script {

CheckK8sMaster

}

}

EOF

3. keepalived.conf on ops-k8s-master03 (keepalived backup02)

cat <<EOF > /etc/keepalived/keepalived.conf

global_defs {

router_id LVS_k8s

}

vrrp_script CheckK8sMaster {

script "curl -k https://10.0.0.7:6443"

interval 3

timeout 9

fall 2

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens192

virtual_router_id 61

priority 80

advert_int 1

mcast_src_ip 10.0.0.12

nopreempt

authentication {

auth_type PASS

auth_pass sqP05dQgMSlzrxHj

}

unicast_peer {

10.0.0.10

10.0.0.11

}

virtual_ipaddress {

10.0.0.7/24

}

track_script {

CheckK8sMaster

}

}

EOF
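The three files above differ only in the state, the priority, the node's own IP, and the peer list. As a sketch, one function can print the configuration from those values. `render_keepalived` is an illustrative helper; the VIP, interface name, router id, and password are copied from the configurations above.

```shell
# Print a keepalived.conf for one master.
#   $1 state (MASTER or BACKUP), $2 priority, $3 this node's IP, $4.. peer IPs
render_keepalived() {
    state=$1 priority=$2 self_ip=$3
    shift 3
    peers=$(printf '        %s\n' "$@")   # one indented line per peer
    cat <<EOF
global_defs {
    router_id LVS_k8s
}
vrrp_script CheckK8sMaster {
    script "curl -k https://10.0.0.7:6443"
    interval 3
    timeout 9
    fall 2
    rise 2
}
vrrp_instance VI_1 {
    state $state
    interface ens192
    virtual_router_id 61
    priority $priority
    advert_int 1
    mcast_src_ip $self_ip
    nopreempt
    authentication {
        auth_type PASS
        auth_pass sqP05dQgMSlzrxHj
    }
    unicast_peer {
$peers
    }
    virtual_ipaddress {
        10.0.0.7/24
    }
    track_script {
        CheckK8sMaster
    }
}
EOF
}

# Configuration for ops-k8s-master02 (backup01).
render_keepalived BACKUP 90 10.0.0.11 10.0.0.10 10.0.0.12
```

Redirecting the output to /etc/keepalived/keepalived.conf on the matching host gives the same files as the three heredocs.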

3. Start keepalived

systemctl enable keepalived

systemctl start keepalived

systemctl status keepalived

4. Verify the result

1. Check that the VIP is present on the master node

ip a|grep 10.0.0.7

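2. Stop keepalived on the master node and check whether the VIP moves to the backup01 node

Run on the master node

systemctl stop keepalived

Check on the backup01 node whether the VIP is present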

ip a|grep 10.0.0.7

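3. Stop keepalived on the master and backup01 nodes and check whether the VIP moves to the backup02 node

Run on the master and backup01 nodes

systemctl stop keepalived

Check on the backup02 node whether the VIP is present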

ip a|grep 10.0.0.7

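(2) Deploy the K8s API Service

1. Prepare the packages and copy the binaries to the cluster

1. Ways to download the packages (for reference)

Option 1 (recommended): download the client or server tarballs from the CHANGELOG.md page of the kubernetes/kubernetes repository on GitHub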

[root@k8s-master ~]# cd /usr/local/src/

[root@k8s-master src]# wget https://dl.k8s.io/v1.10.1/kubernetes.tar.gz

[root@k8s-master src]# wget https://dl.k8s.io/v1.10.1/kubernetes-server-linux-amd64.tar.gz

[root@k8s-master src]# wget https://dl.k8s.io/v1.10.1/kubernetes-client-linux-amd64.tar.gz

[root@k8s-master src]# wget https://dl.k8s.io/v1.10.1/kubernetes-node-linux-amd64.tar.gz

Option 2: download the release tarball from the GitHub releases page, unpack it, and then run the download script.

[root@k8s-master ~]# cd /usr/local/src/

[root@k8s-master src]# wget https://github.com/kubernetes/kubernetes/releases/download/v1.10.3/kubernetes.tar.gz

[root@k8s-master src]# tar -zxvf kubernetes.tar.gz

[root@k8s-master src]# ll

total 2664

drwxr-xr-x 9 root root 156 May 21 18:16 kubernetes

-rw-r--r-- 1 root root 2726918 May 21 19:15 kubernetes.tar.gz

[root@k8s-master src]# cd kubernetes/cluster/

[root@k8s-master cluster]# ./get-kube-binaries.sh

2. Cluster deployment steps

cd /usr/local/src/

# Upload the packages with rz: kubernetes-server-linux-amd64.tar.gz kubernetes.tar.gz

tar xf kubernetes-server-linux-amd64.tar.gz

tar xf kubernetes.tar.gz

cd kubernetes

## Send to all master nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do scp /usr/local/src/kubernetes/server/bin/{kube-apiserver,kube-controller-manager,kube-scheduler} $i:/opt/kubernetes/bin/;done

2. Create the JSON configuration file for the CSR

cd /usr/local/src/ssl/

cat>kubernetes-csr.json<<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"10.1.0.1",

"10.0.0.10",

"10.0.0.11",

"10.0.0.12",

"10.0.0.7",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}]

}

EOF

3. Generate the kubernetes certificate and private key

cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

# Distribute the certificates to all master nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do scp kubernetes*.pem $i:/opt/kubernetes/ssl/;done

4. Create the client token file used by kube-apiserver and send it to the other master nodes

# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

a39e5244495964d9f66a5b8e689546ae

cat>/opt/kubernetes/ssl/bootstrap-token.csv<<EOF

a39e5244495964d9f66a5b8e689546ae,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

for i in ops-k8s-master02 ops-k8s-master03;do scp /opt/kubernetes/ssl/bootstrap-token.csv $i:/opt/kubernetes/ssl/;done
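The token above was pasted by hand into the CSV file. A small sketch that generates a fresh token and builds the CSV line in one go is shown below, assuming the same `token,user,uid,"group"` layout as the file above; `gen_bootstrap_token` is an illustrative name.

```shell
# Generate a 128-bit random token as 32 hex characters.
gen_bootstrap_token() {
    # -t x1 prints one byte per field; tr removes the spaces and the newline.
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

token=$(gen_bootstrap_token)
# Same column layout as bootstrap-token.csv above.
printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token"
```

The same `$token` value must later be passed to `kubectl config set-credentials kubelet-bootstrap --token=...` when the bootstrap kubeconfig is built.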

5. Create the basic username/password authentication file

cat>/opt/kubernetes/ssl/basic-auth.csv<<EOF

admin,admin,1

readonly,readonly,2

EOF

for i in ops-k8s-master02 ops-k8s-master03;do scp /opt/kubernetes/ssl/basic-auth.csv $i:/opt/kubernetes/ssl/;done

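6. Deploy the Kubernetes API Server

The etcd address may also be written as the VIP address, provided the VIP is included in the hosts list of the etcd certificate.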

cat>/usr/lib/systemd/system/kube-apiserver.service<<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

ExecStart=/opt/kubernetes/bin/kube-apiserver \

--admission-control=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota,NodeRestriction \

--bind-address=10.0.0.10 \

--insecure-bind-address=127.0.0.1 \

--authorization-mode=Node,RBAC \

--runtime-config=rbac.authorization.k8s.io/v1 \

--kubelet-https=true \

--anonymous-auth=false \

--basic-auth-file=/opt/kubernetes/ssl/basic-auth.csv \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/ssl/bootstrap-token.csv \

--service-cluster-ip-range=10.1.0.0/16 \

--service-node-port-range=20000-40000 \

--tls-cert-file=/opt/kubernetes/ssl/kubernetes.pem \

--tls-private-key-file=/opt/kubernetes/ssl/kubernetes-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/kubernetes/ssl/ca.pem \

--etcd-certfile=/opt/kubernetes/ssl/kubernetes.pem \

--etcd-keyfile=/opt/kubernetes/ssl/kubernetes-key.pem \

--etcd-servers=https://10.0.0.10:2379,https://10.0.0.11:2379,https://10.0.0.12:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/kubernetes/log/api-audit.log \

--event-ttl=1h \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

for i in ops-k8s-master02 ops-k8s-master03;do scp /usr/lib/systemd/system/kube-apiserver.service $i:/usr/lib/systemd/system/;done

Note: on each node, adjust the etcd cluster IP addresses and the bind-address to match

7. Start the API server service

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl start kube-apiserver

systemctl status kube-apiserver

(3) Deploy the Controller Manager Service

1. Create the service unit file and send it to the other nodes

cat>/usr/lib/systemd/system/kube-controller-manager.service<<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/kubernetes/bin/kube-controller-manager \

--address=127.0.0.1 \

--master=http://127.0.0.1:8080 \

--allocate-node-cidrs=true \

--service-cluster-ip-range=10.1.0.0/16 \

--cluster-cidr=10.2.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--leader-elect=true \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

for i in ops-k8s-master02 ops-k8s-master03;do scp /usr/lib/systemd/system/kube-controller-manager.service $i:/usr/lib/systemd/system/;done

2. Start the Controller Manager and check the service status

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl start kube-controller-manager

systemctl status kube-controller-manager

(4) Deploy the Kubernetes Scheduler

1. Create the service unit file and send it to the other nodes

cat>/usr/lib/systemd/system/kube-scheduler.service<<EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/kubernetes/bin/kube-scheduler \

--address=127.0.0.1 \

--master=http://127.0.0.1:8080 \

--leader-elect=true \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

for i in ops-k8s-master02 ops-k8s-master03;do scp /usr/lib/systemd/system/kube-scheduler.service $i:/usr/lib/systemd/system/;done

2. Start the Kubernetes Scheduler and check the service status

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl start kube-scheduler

systemctl status kube-scheduler

(5) Deploy the kubectl Command-Line Tool

1. Prepare the binary package

cd /usr/local/src/

# Upload the package with rz: kubernetes-client-linux-amd64.tar.gz

tar xf kubernetes-client-linux-amd64.tar.gz

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do scp /usr/local/src/kubernetes/client/bin/kubectl $i:/opt/kubernetes/bin/;done

2. Create the admin certificate signing request

cd /usr/local/src/ssl/

cat>admin-csr.json<<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

3. Generate the admin certificate and private key

cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes admin-csr.json | cfssljson -bare admin

ls -l admin*

# Distribute the certificates to the master nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03;do scp admin*.pem $i:/opt/kubernetes/ssl/;done

Also perform the following steps on the other master nodes

4. Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://10.0.0.7:6443

5. Set the client authentication parameters

kubectl config set-credentials admin \

--client-certificate=/opt/kubernetes/ssl/admin.pem \

--embed-certs=true \

--client-key=/opt/kubernetes/ssl/admin-key.pem

6. Set the context parameters

kubectl config set-context kubernetes \

--cluster=kubernetes \

--user=admin

7. Set the default context

kubectl config use-context kubernetes

8. Use the kubectl tool

# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health": "true"}

etcd-0 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

9. Install kubectl command completion

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

V. Node Deployment

(1) Deploy kubelet

1. Prepare the binary package

cd /usr/local/src/

# Upload the package kubernetes-node-linux-amd64.tar.gz

tar xf kubernetes-node-linux-amd64.tar.gz

cd /usr/local/src/kubernetes/node/bin

# Send to every node that should run pods

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp -r /usr/local/src/kubernetes/node/bin/{kubelet,kube-proxy} $i:/opt/kubernetes/bin/;done

2. Create the role binding

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

3. Create the kubelet bootstrapping kubeconfig file

1. Set the cluster parameters

cd /usr/local/src/ssl

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://10.0.0.7:6443 \

--kubeconfig=bootstrap.kubeconfig

2. Set the client authentication parameters

kubectl config set-credentials kubelet-bootstrap \

--token=a39e5244495964d9f66a5b8e689546ae \

--kubeconfig=bootstrap.kubeconfig

3. Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

4. Select the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

5. Copy the file to the target directory on the local machine and the other cluster nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp bootstrap.kubeconfig $i:/opt/kubernetes/cfg/;done

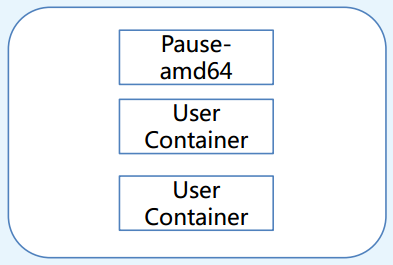

6. Deploy kubelet

1. Configure CNI support

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do ssh -n $i "mkdir -p /etc/cni/net.d/&& exit";done

cat>/etc/cni/net.d/10-default.conf<<EOF

{

"name": "flannel",

"type": "flannel",

"delegate": {

"bridge": "docker0",

"isDefaultGateway": true,

"mtu": 1400

}

}

EOF

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp -r /etc/cni/net.d/10-default.conf $i:/etc/cni/net.d/;done

4. Create the kubelet directory

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do ssh -n $i "mkdir -p /var/lib/kubelet && exit";done

5. Create the kubelet service configuration

1. Create the unit file

cat>/usr/lib/systemd/system/kubelet.service<<EOF

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/opt/kubernetes/bin/kubelet \

--address=10.0.0.10 \

--hostname-override=10.0.0.10 \

--pod-infra-container-image=mirrorgooglecontainers/pause-amd64:3.0 \

--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--cert-dir=/opt/kubernetes/ssl \

--network-plugin=cni \

--cni-conf-dir=/etc/cni/net.d \

--cni-bin-dir=/opt/kubernetes/bin/cni \

--cluster-dns=10.1.0.2 \

--cluster-domain=cluster.local. \

--hairpin-mode hairpin-veth \

--allow-privileged=true \

--fail-swap-on=false \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

2. Send it to the other cluster nodes and change the IP addresses to match each node

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp /usr/lib/systemd/system/kubelet.service $i:/usr/lib/systemd/system/;done
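Editing the address by hand on four nodes is error-prone. A rough sketch of doing it with `sed` follows; `set_kubelet_ip` is an illustrative helper that rewrites only `--address` and `--hostname-override`, so other addresses such as `--cluster-dns` are left alone. The demo uses a three-line excerpt of the unit file.

```shell
# Print a kubelet unit file with the node-specific flags set to the given IP.
set_kubelet_ip() {
    unit=$1 ip=$2
    sed -E "s#^([[:space:]]*--(address|hostname-override)=)[0-9.]+#\1$ip#" "$unit"
}

# Demo on an excerpt of kubelet.service.
sample=$(mktemp)
cat > "$sample" <<'EOF'
--address=10.0.0.10 \
--hostname-override=10.0.0.10 \
--cluster-dns=10.1.0.2 \
EOF

set_kubelet_ip "$sample" 10.0.0.13
```

For ops-k8s-node01, for example, the output could be piped to the node: `set_kubelet_ip /usr/lib/systemd/system/kubelet.service 10.0.0.13 | ssh ops-k8s-node01 'cat > /usr/lib/systemd/system/kubelet.service'`.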

6. Start kubelet and check the service status

systemctl daemon-reload

systemctl enable kubelet

systemctl start kubelet

systemctl status kubelet

7. View the CSR requests (run this on a host where kubectl has been configured)

# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-0_w5F1FM_la_SeGiu3Y5xELRpYUjjT2icIFk9gO9KOU 1m kubelet-bootstrap Pending

8. Approve the kubelet TLS certificate requests

kubectl get csr|grep 'Pending' | awk 'NR>0{print $1}'| xargs kubectl certificate approve
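The `grep 'Pending'` filter matches the word anywhere on the line, and `xargs` still invokes `kubectl certificate approve` when nothing is pending. A slightly stricter sketch matches only the last column; `pending_csrs` is an illustrative helper, and `node-csr-example` in the demo input is a made-up name.

```shell
# Print the names of CSRs whose last column (CONDITION) is exactly "Pending".
# Reads `kubectl get csr` output on stdin and skips the header row.
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Demo with the sample output shown above plus one already-approved request.
printf '%s\n' \
    'NAME AGE REQUESTOR CONDITION' \
    'node-csr-0_w5F1FM_la_SeGiu3Y5xELRpYUjjT2icIFk9gO9KOU 1m kubelet-bootstrap Pending' \
    'node-csr-example 5m kubelet-bootstrap Approved,Issued' |
    pending_csrs
```

On a real cluster this could be used as `kubectl get csr | pending_csrs | xargs -r kubectl certificate approve`, where GNU xargs `-r` skips the command when the list is empty.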

The following files appear, which shows that the request was approved:

-rw-r--r-- 1 root root 1042 May 28 23:09 kubelet-client.crt

-rw------- 1 root root 227 May 28 23:08 kubelet-client.key

Once this is done, the nodes show the Ready status

# kubectl get node

NAME STATUS ROLES AGE VERSION

(2) Deploy Kubernetes Proxy

1. Configure kube-proxy to use LVS

yum install -y ipvsadm ipset conntrack

2. Create the kube-proxy certificate request

cd /usr/local/src/ssl/

cat>kube-proxy-csr.json<<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

3. Generate the certificate

cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

4. Distribute the certificates to the cluster nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp kube-proxy*.pem $i:/opt/kubernetes/ssl/;done

5. Create the kube-proxy kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://10.0.0.7:6443 \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=/opt/kubernetes/ssl/kube-proxy.pem \

--client-key=/opt/kubernetes/ssl/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

6. Distribute the kubeconfig file to the cluster nodes

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp kube-proxy.kubeconfig $i:/opt/kubernetes/cfg/;done

7. Create the kube-proxy service configuration

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do ssh -n $i "mkdir -p /var/lib/kube-proxy && exit";done

cat>/usr/lib/systemd/system/kube-proxy.service<<EOF

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy \

--bind-address=10.0.0.10 \

--hostname-override=10.0.0.10 \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig \

--masquerade-all \

--feature-gates=SupportIPVSProxyMode=true \

--proxy-mode=ipvs \

--ipvs-min-sync-period=5s \

--ipvs-sync-period=5s \

--ipvs-scheduler=rr \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

Send the unit file to the other nodes and change the IP addresses to match each node

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp /usr/lib/systemd/system/kube-proxy.service $i:/usr/lib/systemd/system/;done

8. Start Kubernetes Proxy and check the service status

systemctl daemon-reload

systemctl enable kube-proxy

systemctl start kube-proxy

systemctl status kube-proxy

9、检查LVS状态,并查看node状态

ipvsadm -L -n

If kubelet and kube-proxy are installed on both lab machines, the following command shows their status:

kubectl get node
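To spot problem nodes quickly, the table printed by `kubectl get node` can be filtered. This is a small sketch, assuming the default table layout with the node name in column 1 and STATUS in column 2; `not_ready_nodes` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get node` output on stdin and print the
# name of every node whose STATUS column is not exactly "Ready".
# Nodes shown as "Ready,SchedulingDisabled" are reported too.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Usage: `kubectl get node | not_ready_nodes`. Empty output means every node reports Ready.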

六、Flannel network deployment

Flannel download page (the project originated at CoreOS): Releases · flannel-io/flannel · GitHub

一)Background: how a Node runs Pods

1、Running Pods on a Node

2、Concepts you need to know

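1、RC (ReplicationController)

- RC is the earliest API object in a K8s cluster for keeping Pods highly available. It monitors the running Pods to make sure the specified number of Pod replicas is running in the cluster.

- The specified number can be one or more. If fewer replicas are running, the RC starts new Pod replicas; if more are running, the RC kills the surplus ones.

- Even when the specified number is 1, running a Pod through an RC is wiser than running it directly, because the RC still provides high availability and makes sure one Pod is always running.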

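2、RS (ReplicaSet)

- RS is the next-generation RC and provides the same high availability. The main difference is that RS, being newer, supports more kinds of selector matching. A ReplicaSet is generally not used on its own but serves as the desired-state parameter of a Deployment.

- RS was introduced in K8s 1.2 as an upgrade of RC and is generally used together with a Deployment.

- A Deployment represents one update operation by the user on the K8s cluster. It is an API object with a broader range of uses than RS: it can create a new service, update an existing service, or perform a rolling upgrade of a service. A rolling upgrade is a composite operation: it creates a new RS, then gradually raises the replica count of the new RS to the desired state while lowering the replica count of the old RS to 0.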

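3、Deployment

- A composite operation like this is hard to describe with a single RS, so the more general Deployment is used to describe it.

- RC, RS and Deployment only guarantee the number of Pods backing a service; they do not solve the problem of how to access the service. A Pod is just one running instance of a service. It may stop on one node at any time, and a new Pod may start on another node with a new IP, so it cannot offer the service at a fixed IP and port.

- Providing a service reliably requires service discovery and load balancing. Service discovery finds the backend service instances for the service a client is accessing.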

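4、Service (Cluster IP)

- In a K8s cluster, the service that clients access is a Service object. Each Service has a virtual IP that is valid inside the cluster, and the cluster reaches the service through that virtual IP.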

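5、Node IP, Pod IP, Cluster IP

- Node IP: the IP of the node device, that is, the real IP of the container host (physical machine, virtual machine, and so on).

- Pod IP: the IP address of a Pod, allocated from the IP range of the docker0 bridge.

- Cluster IP: the IP of a Service. It is a virtual IP that only applies to the Service object, is managed and allocated by K8s, and is only usable together with the service port. The IP on its own carries no traffic, and access from outside the cluster needs extra configuration.

Inside a K8s cluster, communication among Node IP, Pod IP and Cluster IP follows forwarding rules set up by K8s (for Cluster IPs, the IPVS rules that kube-proxy maintains in this guide) rather than plain IP routing.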
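The three kinds of address can be told apart by their range in this guide. The sketch below assumes the node network is 10.0.0.x (from the hosts table), the Pod network is 10.2.0.0/16 (the Flannel network used later), and the Service network is 10.1.0.0/16. The last range is an assumption inferred from the CoreDNS clusterIP 10.1.0.2; `classify_ip` is a hypothetical helper that only does simple prefix matching.

```shell
#!/usr/bin/env bash
# Hypothetical helper: classify an address by the ranges assumed above.
# Plain prefix matching, good enough for /16 and /24 boundaries.
classify_ip() {
  case "$1" in
    10.2.*)   echo "pod" ;;      # Flannel network 10.2.0.0/16
    10.1.*)   echo "cluster" ;;  # assumed Service network 10.1.0.0/16
    10.0.0.*) echo "node" ;;     # host addresses from /etc/hosts
    *)        echo "unknown" ;;
  esac
}
```

For example, `classify_ip 10.1.0.2` prints `cluster` and `classify_ip 10.0.0.13` prints `node`.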

二)Flannel service deployment

1、Create the flannel certificate signing request

cd /usr/local/src/ssl

cat>flanneld-csr.json<<EOF

{

"CN": "flanneld",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

2、Generate the certificate

cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \
  -ca-key=/opt/kubernetes/ssl/ca-key.pem \
  -config=/opt/kubernetes/ssl/ca-config.json \
  -profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

3、Distribute the certificates

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp flanneld*.pem $i:/opt/kubernetes/ssl/;done

4、Download and install the flannel package

cd /usr/local/src

# wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

# or upload the package instead

#rz flannel-v0.10.0-linux-amd64.tar.gz

tar zxf flannel-v0.10.0-linux-amd64.tar.gz

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp flanneld mk-docker-opts.sh $i:/opt/kubernetes/bin/;done

cd /usr/local/src/kubernetes/cluster/centos/node/bin/

for i in ops-k8s-master01 ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp remove-docker0.sh $i:/opt/kubernetes/bin/;done

5、Write the Flannel configuration file

Create the configuration file on the local node

cat>/opt/kubernetes/cfg/flannel<<EOF

FLANNEL_ETCD="-etcd-endpoints=https://10.0.0.10:2379,https://10.0.0.11:2379,https://10.0.0.12:2379"

FLANNEL_ETCD_KEY="-etcd-prefix=/kubernetes/network"

FLANNEL_ETCD_CAFILE="--etcd-cafile=/opt/kubernetes/ssl/ca.pem"

FLANNEL_ETCD_CERTFILE="--etcd-certfile=/opt/kubernetes/ssl/flanneld.pem"

FLANNEL_ETCD_KEYFILE="--etcd-keyfile=/opt/kubernetes/ssl/flanneld-key.pem"

EOF

Send it to the other nodes of the K8s cluster

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp /opt/kubernetes/cfg/flannel $i:/opt/kubernetes/cfg/;done
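If the etcd member list changes, the endpoint string in the configuration file has to be rebuilt. A minimal sketch, assuming every etcd member listens on HTTPS port 2379 as in the file above; `etcd_endpoints` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: join etcd member IPs into the comma-separated
# endpoint list used by the -etcd-endpoints flag.
etcd_endpoints() {
  local out="" ip
  for ip in "$@"; do
    # Prefix a comma only when the list is already non-empty.
    out="${out:+${out},}https://${ip}:2379"
  done
  printf '%s\n' "$out"
}
```

For example, `printf 'FLANNEL_ETCD="-etcd-endpoints=%s"\n' "$(etcd_endpoints 10.0.0.10 10.0.0.11 10.0.0.12)"` reproduces the first line of the configuration file.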

6、Create the Flannel systemd service

cat>/usr/lib/systemd/system/flannel.service<<'EOF'

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

Before=docker.service

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/flannel

ExecStartPre=/opt/kubernetes/bin/remove-docker0.sh

ExecStart=/opt/kubernetes/bin/flanneld ${FLANNEL_ETCD} ${FLANNEL_ETCD_KEY} ${FLANNEL_ETCD_CAFILE} ${FLANNEL_ETCD_CERTFILE} ${FLANNEL_ETCD_KEYFILE}

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -d /run/flannel/docker

Type=notify

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

EOF

Send it to the other nodes of the K8s cluster

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp /usr/lib/systemd/system/flannel.service $i:/usr/lib/systemd/system/;done
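Because the unit is written through a shell heredoc, it is worth confirming that the `${FLANNEL_ETCD}` placeholder survived literally: with an unquoted heredoc delimiter the shell expands such variables when the file is written, usually to empty strings. A minimal check, where `unit_keeps_placeholders` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the unit file given as $1 still
# contains the literal text ${FLANNEL_ETCD}, so that systemd (not the shell
# that wrote the file) substitutes it from the EnvironmentFile.
unit_keeps_placeholders() {
  grep -F -q '${FLANNEL_ETCD}' "$1"
}
```

Usage: `unit_keeps_placeholders /usr/lib/systemd/system/flannel.service || echo "placeholders were expanded, rewrite the unit"`.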

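三)Flannel CNI integration

1、CNI in brief

CNI (Container Network Interface) is a set of standards and libraries for configuring Linux container networking; users develop their own container network plugins against them. Some commonly used plugins are already available on GitHub. CNI focuses only on connecting containers to the network and releasing resources when a container is destroyed. It provides a framework, so it can support many different network modes and is easy to implement.

Compared with the K8s exec plugin, which runs an executable directly, a CNI plugin wraps the executable and prescribes a framework for it. In the end it still runs an executable, just like the exec plugin. The difference is that the exec plugin reads its parameters from the command line, while a CNI plugin reads them from environment variables and a configuration file.

2、Download the CNI plugins

Releases · containernetworking/plugins · GitHub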

cd /usr/local/src/

wget https://github.com/containernetworking/plugins/releases/download/v0.7.1/cni-plugins-amd64-v0.7.1.tgz

# or upload it instead: rz cni-plugins-amd64-v0.7.1.tgz

mkdir /opt/kubernetes/bin/cni

tar zxf cni-plugins-amd64-v0.7.1.tgz -C /opt/kubernetes/bin/cni

Send the plugins to the other cluster nodes

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp -r /opt/kubernetes/bin/cni $i:/opt/kubernetes/bin/;done

3、Create the etcd key

/opt/kubernetes/bin/etcdctl --ca-file /opt/kubernetes/ssl/ca.pem --cert-file /opt/kubernetes/ssl/flanneld.pem --key-file /opt/kubernetes/ssl/flanneld-key.pem \
  --no-sync -C https://10.0.0.10:2379,https://10.0.0.11:2379,https://10.0.0.12:2379 \
  mk /kubernetes/network/config '{ "Network": "10.2.0.0/16", "Backend": { "Type": "vxlan", "VNI": 1 }}' >/dev/null 2>&1
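The value stored under /kubernetes/network/config is a small JSON document. The sketch below builds it from a CIDR so the network or backend type can be changed in one place; `flannel_net_config` is a hypothetical helper, and the fixed `VNI` of 1 simply mirrors the command above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the Flannel network config JSON for the
# Pod network CIDR in $1 and the backend type in $2 (default: vxlan).
flannel_net_config() {
  local cidr="$1" backend="${2:-vxlan}"
  printf '{ "Network": "%s", "Backend": { "Type": "%s", "VNI": 1 }}\n' "$cidr" "$backend"
}
```

The output of `flannel_net_config 10.2.0.0/16` can be passed as the value to `etcdctl ... mk /kubernetes/network/config`. Since the command above discards all output, running the same `etcdctl` options with `get /kubernetes/network/config` afterwards is one way to confirm that the key was actually written.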

4、Start flannel and check the service status (run on all nodes)

systemctl daemon-reload

systemctl enable flannel

chmod +x /opt/kubernetes/bin/*

systemctl start flannel

systemctl status flannel

四)Configure Docker to use Flannel

1、Edit the Docker unit file /usr/lib/systemd/system/docker.service

[Unit] # under [Unit], modify After and add Requires

After=network-online.target firewalld.service flannel.service

Wants=network-online.target

Requires=flannel.service

[Service] # add EnvironmentFile=-/run/flannel/docker; flannel creates this file once it has started

Type=notify

EnvironmentFile=-/run/flannel/docker

ExecStart=/usr/bin/dockerd $DOCKER_OPTS

2、Distribute it to the other nodes of the K8s cluster

for i in ops-k8s-master02 ops-k8s-master03 ops-k8s-node01 ops-k8s-node02;do scp -r /usr/lib/systemd/system/docker.service $i:/usr/lib/systemd/system/;done

3、Restart Docker and check its status

systemctl daemon-reload

systemctl restart docker

systemctl status docker

4、Check how the Docker IP changed on each cluster node

## each cluster node should have been assigned a different IP range

ip a
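Rather than reading the `ip a` output by eye, the subnet that flanneld leased for the node can be read from its environment file and compared with the docker0 address. This sketch assumes flanneld wrote its default file /run/flannel/subnet.env with a `FLANNEL_SUBNET=` line; `flannel_subnet` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the subnet leased to this node, read from the
# env file in $1 (default: /run/flannel/subnet.env, an assumption here).
flannel_subnet() {
  local env_file="${1:-/run/flannel/subnet.env}"
  sed -n 's/^FLANNEL_SUBNET=//p' "$env_file"
}
```

On a node, the value printed by `flannel_subnet` should match the address shown by `ip -4 -o addr show docker0` after Docker has been restarted.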

5、Create an application to test network connectivity

1、Create a test Deployment

kubectl run net-test --image=alpine --replicas=2 sleep 360000

2、Check the IPs the Pods obtained

kubectl get pod -o wide

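3、Test connectivity

# use a Pod IP from the previous output; 10.2.83.2 is only an example

ping 10.2.83.2

If the ping succeeds, Flannel is configured correctly!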
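To test every Pod rather than a single address, the IP column can be pulled out of the `kubectl get pod -o wide` table. This sketch assumes the table has a header column named `IP` and that no earlier column contains spaces, which holds for the kubectl version used in this guide but may not for others; `pod_ips` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get pod -o wide` output on stdin and
# print the value of the IP column for every Pod that already has an address.
pod_ips() {
  awk 'NR == 1 { for (i = 1; i <= NF; i++) if ($i == "IP") col = i; next }
       col && $col != "<none>" { print $col }'
}
```

Usage: `for ip in $(kubectl get pod -o wide | pod_ips); do ping -c 2 "$ip"; done`, run from any cluster node.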

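七、CoreDNS and Dashboard deployment

Note: both are deployed in the kube-system namespace

一)Deploy CoreDNS

1、Create a directory for the yaml files

mkdir -p /opt/kubernetes/yaml/coredns

2、Write the coredns.yaml file

Adjust the configuration to your needs (especially the resource limits)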

cat>coredns.yaml<<EOF

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: Reconcile
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: EnsureExists
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
  labels:
    addonmanager.kubernetes.io/mode: EnsureExists
data:
  Corefile: |
    .:53 {
        errors
        health
        kubernetes cluster.local. in-addr.arpa ip6.arpa {
            pods insecure
            upstream
            fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        proxy . /etc/resolv.conf
        cache 30
    }
---
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "CoreDNS"
spec:
  replicas: 2
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: coredns
  template:
    metadata:
      labels:
        k8s-app: coredns
    spec:
      serviceAccountName: coredns
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule
      - key: "CriticalAddonsOnly"
        operator: "Exists"
      containers:
      - name: coredns
        image: coredns/coredns:1.0.6
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            memory: 2Gi
          requests:
            cpu: 2
            memory: 1Gi
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
      dnsPolicy: Default
      volumes:
      - name: config-volume
        configMap:
          name: coredns
          items:
          - key: Corefile
            path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: coredns
  clusterIP: 10.1.0.2
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP

EOF

3、Create CoreDNS

kubectl create -f coredns.yaml

kubectl get pod -n kube-system

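4、Test

# list the forwarding rules

ipvsadm -Ln

# run a test pod (--rm deletes the pod as soon as you exit the container)

kubectl run dns-test --rm -it --image=alpine /bin/sh

# inside the container

## check whether the external network is reachable

ping baidu.com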
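Pinging an external name only exercises the upstream forwarder. To test cluster DNS itself, a Service name can be resolved against the CoreDNS address. The sketch below builds the fully qualified name, assuming the cluster domain is `cluster.local` as in the Corefile; `svc_fqdn` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the cluster-internal DNS name of a Service.
# $1 = service name, $2 = namespace (default: default),
# $3 = cluster domain (default: cluster.local, taken from the Corefile).
svc_fqdn() {
  local name="$1" ns="${2:-default}" domain="${3:-cluster.local}"
  printf '%s.%s.svc.%s\n' "$name" "$ns" "$domain"
}
```

Inside the test container, `nslookup kubernetes.default.svc.cluster.local 10.1.0.2` (the name that `svc_fqdn kubernetes` prints, queried at the CoreDNS clusterIP) should return the address of the kubernetes Service if CoreDNS is working.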

二)Deploy Dashboard

1、Create a directory for the yaml files

mkdir -p /opt/kubernetes/yaml/dashboard

2、Write the Dashboard yaml files

3、Create the Dashboard

kubectl create -f dashboard/

# get the login token

kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')
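The `describe secret` output contains several fields besides the token. A small filter can print only the token value for pasting into the Dashboard login page. This sketch assumes the output has a line beginning with `token:` followed by the value; `extract_token` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl describe secret` output on stdin and
# print only the value of the "token:" field.
extract_token() {
  awk '$1 == "token:" { print $2 }'
}
```

Usage: append `| extract_token` to the command above.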