Note:

- These are study notes based on Andrew Ng's course "LangChain for LLM Application Development".

- For the full course content and the sample code / Jupyter notebooks, see: LangChain-for-LLM-Application-Development.

Course outline

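Most LLMs today have a maximum token limit (the context window). It caps how much text, measured in tokens rather than characters, the model can handle in one request; the conversation history, the new input, and the generated reply all count against it. At the time of writing, the commonly used gpt-3.5-turbo model supports up to 4096 tokens, and the most capable model, gpt-4, supports up to 8192.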

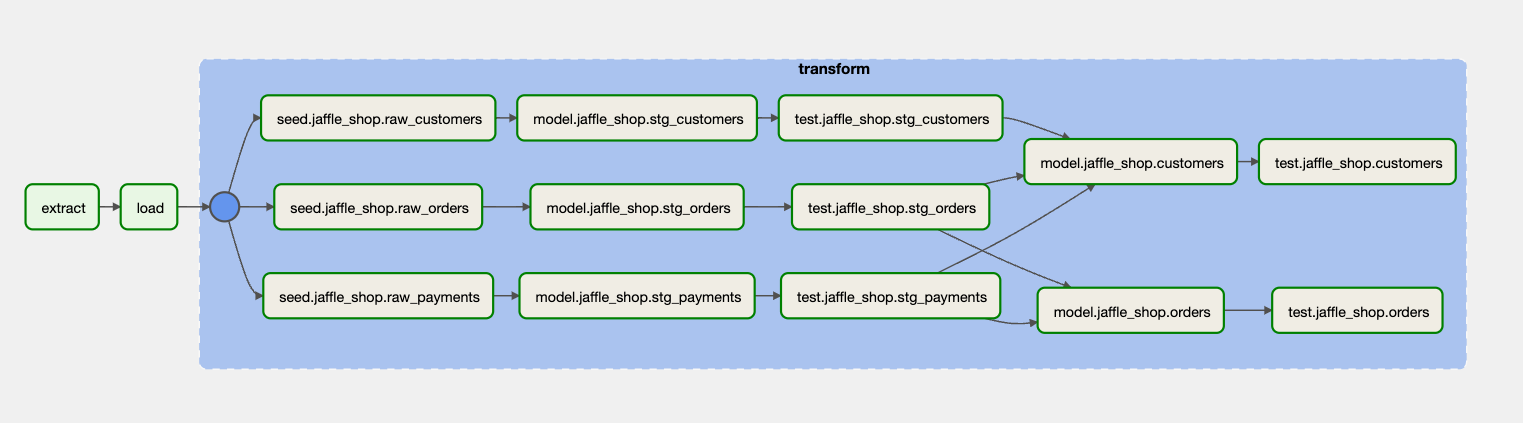

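This lesson covers the following memory types in LangChain:

- ConversationBufferMemory: buffers the conversation so that its content can be stored easily;

- ConversationBufferWindowMemory: limits the memory by the number of conversation rounds;

- ConversationTokenBufferMemory: limits the memory by the number of tokens;

- ConversationSummaryMemory: summarizes the conversation to reduce token usage, which allows a longer memory.

The main topics are shown in the figure below: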

Initial setup

First, initialize the environment by reading the environment variables. The code looks roughly like this:

import os

from dotenv import load_dotenv, find_dotenv

_ = load_dotenv(find_dotenv()) # read local .env file

import warnings

warnings.filterwarnings('ignore')

ConversationBufferMemory

from langchain.chat_models import ChatOpenAI

from langchain.chains import ConversationChain

from langchain.memory import ConversationBufferMemory

llm = ChatOpenAI(temperature=0.0)

memory = ConversationBufferMemory()

# Create a conversation chain and pass in the memory

conversation = ConversationChain(

    llm=llm,

    memory=memory,

    # Setting verbose=True prints the prompt that LangChain builds; worth enabling when you experiment

    verbose=False

)

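Below are three turns of conversation with the chain. The last turn asks for the name given in the first turn, and the output shows that the context is remembered throughout the conversation.

# Start the conversation with the predict function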

conversation.predict(input="Hi, my name is Andrew")

conversation.predict(input="What is 1+1?")

conversation.predict(input="What is my name?")

'Your name is Andrew, as you mentioned earlier.'

memory.buffer returns the conversation context. Printing it gives:

print(memory.buffer)

Human: Hi, my name is Andrew

AI: Hello Andrew, it's nice to meet you. My name is AI. How can I assist you today?

Human: What is 1+1?

AI: The answer to 1+1 is 2.

Human: What is my name?

AI: Your name is Andrew, as you mentioned earlier.

The conversation history can also be retrieved with the load_memory_variables function:

memory.load_memory_variables({})

{'history': "Human: Hi, my name is Andrew\nAI: Hello Andrew, it's nice to meet you. My name is AI. How can I assist you today?\nHuman: What is 1+1?\nAI: The answer to 1+1 is 2.\nHuman: What is my name?\nAI: Your name is Andrew, as you mentioned earlier."}

Customizing ConversationBufferMemory

The record above was produced by a real conversation. We can also preload conversation content with the save_context function:

memory = ConversationBufferMemory()

memory.save_context({"input": "Hi"},

{"output": "What's up"})

print(memory.buffer)

Human: Hi

AI: What's up

Further turns can be appended in the same way:

memory.save_context({"input": "Not much, just hanging"},

{"output": "Cool"})

print(memory.buffer)

Human: Hi

AI: What's up

Human: Not much, just hanging

AI: Cool
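To make the behavior above concrete, here is a minimal pure-Python sketch of a conversation buffer. It is an illustration only, not LangChain's implementation: the class name SimpleBufferMemory is hypothetical, and it only mirrors the save_context / buffer / load_memory_variables call shapes used in these notes.

```python
class SimpleBufferMemory:
    """Toy conversation buffer (hypothetical; not the LangChain class)."""

    def __init__(self):
        # Each entry is one exchange: (human_text, ai_text)
        self.turns = []

    def save_context(self, inputs, outputs):
        # Same call shape as in the notes: {"input": ...}, {"output": ...}
        self.turns.append((inputs["input"], outputs["output"]))

    @property
    def buffer(self):
        # Render every stored exchange as "Human: ..." / "AI: ..." lines
        lines = []
        for human, ai in self.turns:
            lines.append(f"Human: {human}")
            lines.append(f"AI: {ai}")
        return "\n".join(lines)

    def load_memory_variables(self, _inputs):
        # The history is exposed under a single "history" key
        return {"history": self.buffer}


memory_sketch = SimpleBufferMemory()
memory_sketch.save_context({"input": "Hi"}, {"output": "What's up"})
memory_sketch.save_context({"input": "Not much, just hanging"}, {"output": "Cool"})
print(memory_sketch.buffer)
```

Because nothing is ever removed from the list, the rendered history grows with every exchange.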

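ConversationBufferMemory makes it easy to record a conversation. However, the conversation keeps growing while the LLM has a maximum token limit, so the full history cannot be passed to the LLM indefinitely. Choosing which parts of the conversation to keep therefore becomes important. The next section introduces the sliding-window approach.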

ConversationBufferWindowMemory

This memory records the conversation in a sliding window; you set how many rounds of conversation it remembers.

from langchain.memory import ConversationBufferWindowMemory

# Create a ConversationBufferWindowMemory instance with a window of 1, i.e. it remembers only the most recent exchange

memory = ConversationBufferWindowMemory(k=1)

memory.save_context({"input": "Hi"},

{"output": "What's up"})

memory.save_context({"input": "Not much, just hanging"},

{"output": "Cool"})

# Only the most recent exchange is returned

memory.load_memory_variables({})

{'history': 'Human: Not much, just hanging\nAI: Cool'}
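The sliding window can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not LangChain's code: SimpleWindowMemory is a hypothetical name, and it discards old exchanges on write with a bounded deque, which may differ from how the library handles storage internally.

```python
from collections import deque


class SimpleWindowMemory:
    """Toy sliding-window memory that keeps the last k exchanges."""

    def __init__(self, k):
        # A deque with maxlen=k silently drops the oldest item when full
        self.turns = deque(maxlen=k)

    def save_context(self, inputs, outputs):
        self.turns.append((inputs["input"], outputs["output"]))

    def load_memory_variables(self, _inputs):
        lines = []
        for human, ai in self.turns:
            lines.append(f"Human: {human}")
            lines.append(f"AI: {ai}")
        return {"history": "\n".join(lines)}


window_sketch = SimpleWindowMemory(k=1)
window_sketch.save_context({"input": "Hi"}, {"output": "What's up"})
window_sketch.save_context({"input": "Not much, just hanging"}, {"output": "Cool"})
print(window_sketch.load_memory_variables({}))
```

With k=1 the first exchange is gone after the second one is saved; a larger k keeps more rounds at the cost of more tokens per request.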

Next, combine it with an LLM to see whether the conversation context is remembered, using the same conversation with Andrew as above:

# Initialization

llm = ChatOpenAI(temperature=0.0)

memory = ConversationBufferWindowMemory(k=1)

conversation = ConversationChain(

llm=llm,

memory = memory,

verbose=False

)

# Multi-turn conversation; Andrew's name is not remembered

conversation.predict(input="Hi, my name is Andrew")

conversation.predict(input="What is 1+1?")

conversation.predict(input="What is my name?")

"I'm sorry, I don't have access to that information. Could you please tell me your name?"

You can change the value of k to see how much the LLM remembers. You can also set verbose to True to see how LangChain builds the prompt internally. With it enabled, the output looks like this:

Prompt after formatting:

The following is a friendly conversation between a human and an AI. The AI is talkative and provides lots of specific details from its context. If the AI does not know the answer to a question, it truthfully says it does not know.

Current conversation:

Human: Hi, my name is Andrew

AI:
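The verbose output suggests that the chain fills a fixed template with the remembered history and the new input. The sketch below reproduces that idea with plain string formatting. The template text is copied from the output above; the {history} and {input} placeholder names and the build_prompt helper are assumptions made for this illustration, not a statement of how LangChain is implemented.

```python
# Template text taken from the verbose output; placeholder names are assumed.
PROMPT_TEMPLATE = (
    "The following is a friendly conversation between a human and an AI. "
    "The AI is talkative and provides lots of specific details from its context. "
    "If the AI does not know the answer to a question, it truthfully says it "
    "does not know.\n\n"
    "Current conversation:\n"
    "{history}\n"
    "Human: {input}\n"
    "AI:"
)


def build_prompt(history, user_input):
    """Fill the template with the remembered history and the new user input."""
    return PROMPT_TEMPLATE.format(history=history, input=user_input)


# With a window of k=1, only the latest exchange is still in the history
# when the third question is asked, so the name never reaches the model.
window_history = "Human: What is 1+1?\nAI: The answer to 1+1 is 2."
prompt = build_prompt(window_history, "What is my name?")
print(prompt)
```

Seen this way, "memory" is simply whatever text ends up in the history slot of the prompt, which is why the window size decides whether the model can still answer the name question.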

ConversationTokenBufferMemory

Besides ConversationBufferWindowMemory, you can also limit the size of the memory by the number of tokens.

# Install tiktoken

!pip install tiktoken

from langchain.memory import ConversationTokenBufferMemory

from langchain.chat_models import ChatOpenAI

# Initialize the llm

llm = ChatOpenAI(temperature=0.0)

# Initialize ConversationTokenBufferMemory so that it keeps at most 30 tokens of conversation

memory = ConversationTokenBufferMemory(llm=llm, max_token_limit=30)

# Build the conversation history

memory.save_context({"input": "AI is what?!"},

{"output": "Amazing!"})

memory.save_context({"input": "Backpropagation is what?"},

{"output": "Beautiful!"})

memory.save_context({"input": "Chatbots are what?"},

{"output": "Charming!"})

# Print the conversation memory

memory.load_memory_variables({})

{'history': 'AI: Beautiful!\nHuman: Chatbots are what?\nAI: Charming!'}

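The output shows that only the most recent part of the conversation is kept; the older messages were dropped to stay within the token limit.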
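The trimming behavior can be approximated with a short sketch: drop the oldest messages one at a time until the total fits the budget. Two things here are assumptions for illustration. First, count_tokens counts whitespace-separated words, which is only a stand-in for a real tokenizer such as tiktoken. Second, the limit of 8 is chosen to suit this word-based counter and is unrelated to the 30-token limit used above.

```python
def count_tokens(text):
    # Stand-in tokenizer (assumption): one token per whitespace-separated word
    return len(text.split())


def trim_to_token_limit(messages, max_token_limit):
    """Drop messages from the front until the total token count fits the limit."""
    kept = list(messages)
    while kept and sum(count_tokens(m) for m in kept) > max_token_limit:
        kept.pop(0)  # the oldest message goes first
    return kept


messages = [
    "Human: AI is what?!",
    "AI: Amazing!",
    "Human: Backpropagation is what?",
    "AI: Beautiful!",
    "Human: Chatbots are what?",
    "AI: Charming!",
]

trimmed = trim_to_token_limit(messages, max_token_limit=8)
print("\n".join(trimmed))
```

Because messages are removed individually rather than as Human/AI pairs, the kept history can start with an AI line, just as in the output above.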

ConversationSummaryMemory

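The window-based and token-based memories above keep the conversation verbatim, but they can only hold a limited amount of it. Human memory works differently: we can rarely recall every detail of an event, just as we would struggle to recount everything that happened today from start to finish. What we usually retain from a long conversation is a summary, and that is what ConversationSummaryMemory does. (The example below uses ConversationSummaryBufferMemory, which keeps recent messages verbatim and summarizes older ones once the token limit is exceeded.)

When the conversation exceeds the token limit, the earlier part of the conversation is summarized, and the summary is passed as context in the next request.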

from langchain.memory import ConversationSummaryBufferMemory

# A long piece of text that exceeds the configured maximum of 100 tokens

schedule = "There is a meeting at 8am with your product team. \
You will need your powerpoint presentation prepared. \
9am-12pm have time to work on your LangChain \
project which will go quickly because Langchain is such a powerful tool. \
At Noon, lunch at the italian resturant with a customer who is driving \
from over an hour away to meet you to understand the latest in AI. \
Be sure to bring your laptop to show the latest LLM demo."

memory = ConversationSummaryBufferMemory(llm=llm, max_token_limit=100)

memory.save_context({"input": "Hello"}, {"output": "What's up"})

memory.save_context({"input": "Not much, just hanging"},

{"output": "Cool"})

memory.save_context({"input": "What is on the schedule today?"},

{"output": f"{schedule}"})

# Print what is stored in memory

memory.load_memory_variables({})

{'history': "System: The human and AI engage in small talk before discussing the day's schedule. The AI informs the human of a morning meeting with the product team, time to work on the LangChain project, and a lunch meeting with a customer interested in the latest AI developments."}

The output shows that the summary is stored under a System entry rather than under the Human and AI entries seen earlier.
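The summarize-on-overflow idea can be sketched without calling an LLM. Everything in this block is a simplified assumption: SimpleSummaryBufferMemory and fake_summarize are hypothetical names, count_tokens counts words instead of real tokens, and fake_summarize only records how many messages were folded away, where a real memory would ask the LLM to write an actual summary.

```python
def count_tokens(text):
    # Stand-in tokenizer (assumption): one token per whitespace-separated word
    return len(text.split())


def fake_summarize(previous_summary, dropped_messages):
    # Placeholder for an LLM summarization call (assumption)
    note = f"[summary of {len(dropped_messages)} earlier message(s)]"
    return f"{previous_summary} {note}".strip()


class SimpleSummaryBufferMemory:
    """Toy memory: recent messages stay verbatim, older ones become a summary."""

    def __init__(self, max_token_limit, summarize=fake_summarize):
        self.max_token_limit = max_token_limit
        self.summarize = summarize
        self.summary = ""
        self.messages = []

    def save_context(self, inputs, outputs):
        self.messages.append(f"Human: {inputs['input']}")
        self.messages.append(f"AI: {outputs['output']}")
        self._prune()

    def _prune(self):
        # Move the oldest messages out of the buffer until it fits the limit
        dropped = []
        while self.messages and sum(count_tokens(m) for m in self.messages) > self.max_token_limit:
            dropped.append(self.messages.pop(0))
        if dropped:
            self.summary = self.summarize(self.summary, dropped)

    def load_memory_variables(self, _inputs):
        lines = [f"System: {self.summary}"] if self.summary else []
        return {"history": "\n".join(lines + self.messages)}


summary_sketch = SimpleSummaryBufferMemory(max_token_limit=10)
summary_sketch.save_context({"input": "Hello"}, {"output": "What's up"})
summary_sketch.save_context({"input": "Not much, just hanging"}, {"output": "Cool"})
print(summary_sketch.load_memory_variables({})["history"])
```

The design trade-off is that detail in older turns is lost, but the conversation can run much longer before the prompt outgrows the token limit.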

conversation = ConversationChain(

llm=llm,

memory = memory,

verbose=True

)

conversation.predict(input="What would be a good demo to show? in Chinese")

> Entering new ConversationChain chain...

Prompt after formatting:

The following is a friendly conversation between a human and an AI. The AI is talkative and provides lots of specific details from its context. If the AI does not know the answer to a question, it truthfully says it does not know.

Current conversation:

System: The human and AI discuss their schedule for the day, including a morning meeting with the product team, time to work on the LangChain project, and a lunch meeting with a customer interested in AI developments. The AI suggests showcasing their latest natural language processing capabilities and machine learning algorithms to the customer, and offers to prepare a demo for the meeting. The human asks what would be a good demo to show.

AI: Based on our latest natural language processing capabilities and machine learning algorithms, I suggest showcasing our chatbot that can understand and respond to complex customer inquiries in real-time. We can also demonstrate our sentiment analysis tool that can accurately predict customer emotions and provide personalized responses. Additionally, we can showcase our language translation tool that can translate text from one language to another with high accuracy. Would you like me to prepare a demo for each of these capabilities?

Human: What would be a good demo to show? in Chinese

AI:

> Finished chain.

'对于我们最新的自然语言处理能力和机器学习算法,我建议展示我们的聊天机器人,它可以实时理解和回答复杂的客户查询。我们还可以展示我们的情感分析工具,它可以准确预测客户的情绪并提供个性化的回应。此外,我们还可以展示我们的语言翻译工具,它可以高精度地将文本从一种语言翻译成另一种语言。您想让我为每个功能准备一个演示吗?(Translation: 对于我们最新的自然语言处理能力和机器学习算法,我建议展示我们的聊天机器人,它可以实时理解和回答复杂的客户查询。我们还可以展示我们的情感分析工具,它可以准确预测客户的情绪并提供个性化的回应。此外,我们还可以展示我们的语言翻译工具,它可以高精度地将文本从一种语言翻译成另一种语言。您想让我为每个功能准备一个演示吗?)'

The output shows that the LLM's reply also pushed the conversation past the maximum token limit, so the summary memory summarized the conversation again. You can inspect the result with load_memory_variables:

memory.load_memory_variables({})

{'history': 'System: The human and AI discuss their schedule for the day, including a morning meeting with the product team, time to work on the LangChain project, and a lunch meeting with a customer interested in AI developments. The AI suggests showcasing their latest natural language processing capabilities and machine learning algorithms to the customer, and offers to prepare a demo for the meeting. The AI recommends demonstrating their chatbot, sentiment analysis tool, and language translation tool, and offers to prepare a demo for each capability. The human asks for a demo in Chinese.'}

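Summary

This lesson covered the following memory types in LangChain:

- ConversationBufferMemory:

  - A wrapper around the chat message history that stores the messages and exposes them in a variable.

- ConversationBufferWindowMemory:

  - Keeps a list of the interactions in the conversation over time and uses only the last k interactions.

- ConversationTokenBufferMemory:

  - Keeps a buffer of recent interactions in memory and uses token length, rather than the number of interactions, to decide when to flush interactions.

- ConversationSummaryMemory:

  - Builds a summary of the conversation over time.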

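Beyond the memory types above, the official LangChain documentation describes several others:

- Vector data memory

  - Stores text (from the conversation or elsewhere) in a vector database and retrieves the most relevant chunks of text.

- Entity memory

  - Uses an LLM to remember details about specific entities.

You can also use several memories at the same time, for example conversation memory plus entity memory to recall individuals. The conversation can also be stored in a conventional database (such as a key-value store or SQL) or in a vector database.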
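As a rough illustration of retrieving the most relevant text, the sketch below ranks stored snippets by cosine similarity against a query. It is a toy under loud assumptions: embed uses bag-of-words counts instead of a learned embedding model, ToyVectorMemory is a hypothetical in-memory list rather than a vector database, and none of it reflects LangChain's API.

```python
import math
import re
from collections import Counter


def embed(text):
    # Toy "embedding" (assumption): lowercase word counts, punctuation removed
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    # Cosine similarity between two sparse word-count vectors
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorMemory:
    """Hypothetical in-memory store that returns the most similar snippets."""

    def __init__(self):
        self.items = []  # list of (text, vector) pairs

    def add(self, text):
        self.items.append((text, embed(text)))

    def search(self, query, top_k=1):
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:top_k]]


store = ToyVectorMemory()
store.add("My name is Andrew")
store.add("The meeting with the product team is at 8am")
store.add("Lunch is at the Italian restaurant at noon")
print(store.search("When is the meeting with the product team?"))
```

Unlike the window and token buffers, this kind of memory selects by relevance rather than recency, so an old snippet can still be recalled if it matches the current question.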