import torch

import math

def get_positional_encoding(max_len, d_model):

"""

计算位置编码

参数:

max_len -- 序列的最大长度

d_model -- 位置编码的维度

返回:

一个形状为 (max_len, d_model) 的位置编码张量

"""

positional_encoding = torch.zeros(max_len, d_model)

position = torch.arange(0, max_len, dtype=torch.float).unsqueeze(1)

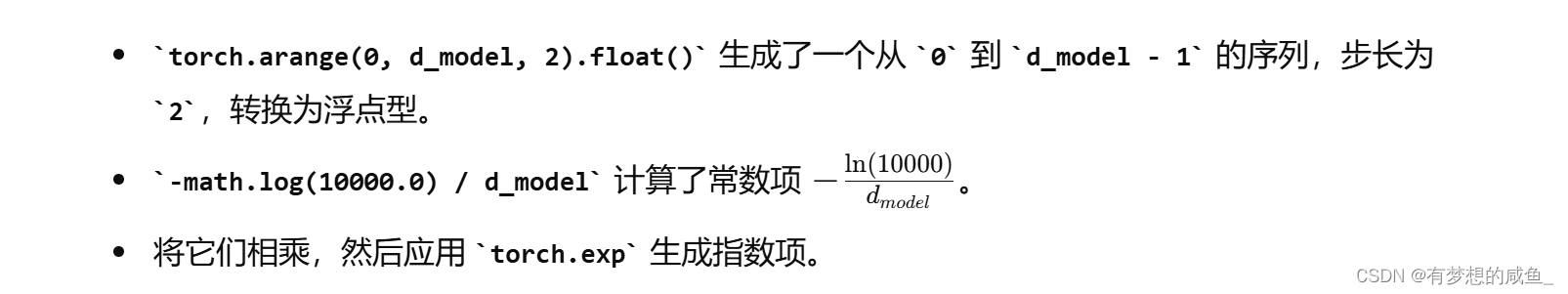

div_term = torch.exp(torch.arange(0, d_model, 2).float() * (-math.log(10000.0) / d_model))

positional_encoding[:, 0::2] = torch.sin(position * div_term)

positional_encoding[:, 1::2] = torch.cos(position * div_term)

return positional_encoding

# 示例参数

max_len = 100

d_model = 512

# 计算位置编码

positional_encoding = get_positional_encoding(max_len, d_model)

print(positional_encoding)

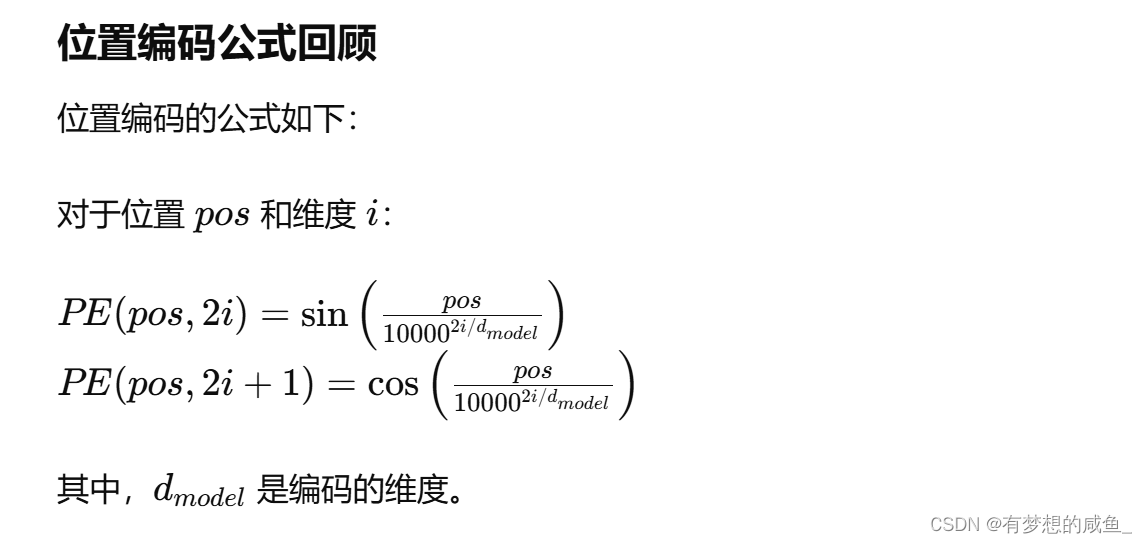

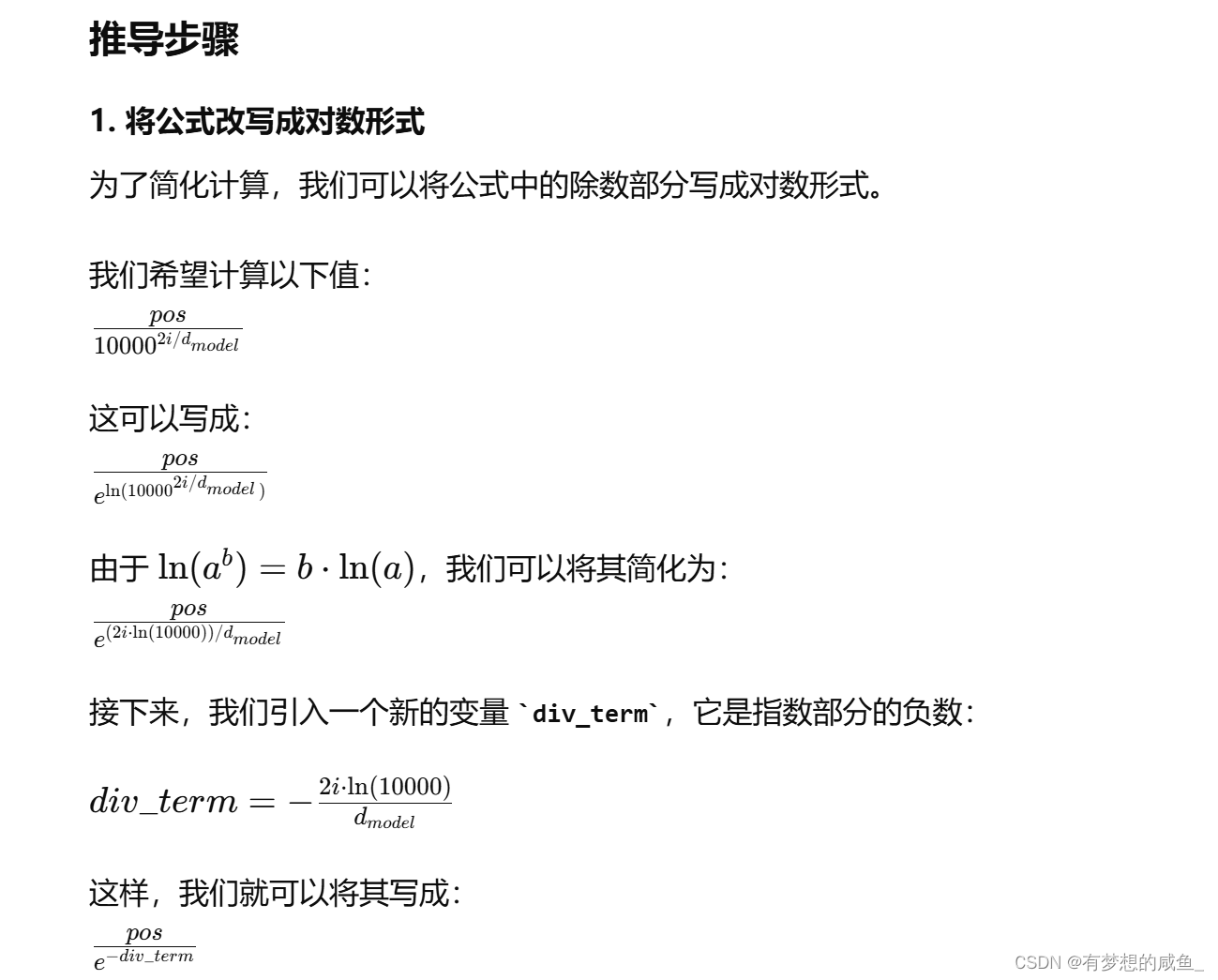

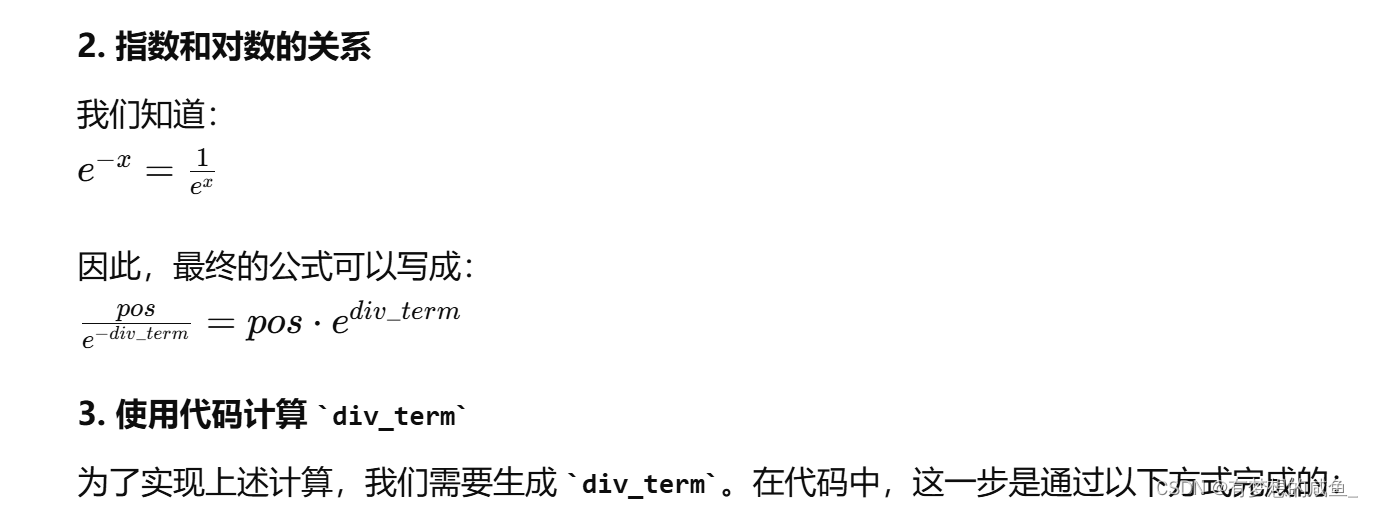

这里为什么要这么实现

div_term = torch.exp(torch.arange(0, d_model, 2).float() * (-math.log(10000.0) / d_model))

div_term = torch.exp(torch.arange(0, d_model, 2).float() * (-math.log(10000.0) / d_model))

![[chisel]马上要火的硬件语言,快来了解一下优缺点](https://img-blog.csdnimg.cn/img_convert/f9a9c642e73791d405078c6422c17704.webp?x-oss-process=image/format,png)