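I. Introduction

Official website

The DataStream API gets its name from the special DataStream class, which represents a collection of data in a Flink program. You can think of a DataStream as an immutable collection that may contain duplicates. The data can be bounded (finite) or unbounded (infinite), but the API used to process it is the same in both cases.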

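II. Basic Operators

1. Map

Map operator: takes one element and produces exactly one element. The style is close to ordinary Java: you can pass a lambda expression or implement the MapFunction interface.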

package com.xx.common.study.api.base;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**
 * @author xiaxing
 * @describe Map operator: takes one element and produces exactly one element, using either a lambda or a MapFunction implementation
 * @since 2024/5/17 14:27
 */
public class DataStreamMapApiDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<Integer> sourceStream = env.fromElements(1, 2, 3);

        // Lambda expression
        SingleOutputStreamOperator<Integer> multiStream = sourceStream.map(e -> e * 2);
        multiStream.print("times 2");

        // Implementing the MapFunction interface
        SingleOutputStreamOperator<Integer> addStream = sourceStream.map(new MapFunction<Integer, Integer>() {
            @Override
            public Integer map(Integer value) throws Exception {
                return value + 2;
            }
        });
        addStream.print("plus 2");

        env.execute();
    }
}

2. FlatMap

FlatMap operator: takes one element and produces zero, one, or more elements.

package com.xx.common.study.api.base;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.common.typeinfo.Types;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**
 * @author xiaxing
 * @describe FlatMap operator: takes one element and produces zero, one, or more elements
 * @since 2024/5/17 14:27
 */
public class DataStreamFlatMapApiDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> sourceStream = env.fromElements("1,2,3");

        // Split each input line and emit every token as a separate element
        sourceStream
                .flatMap((FlatMapFunction<String, String>) (value, out) -> {
                    for (String token : value.split(",")) {
                        out.collect(token);
                    }
                })
                // With a lambda, the Collector's generic type is lost to type erasure,
                // so the output type has to be declared explicitly
                .returns(Types.STRING)
                .print();

        // Does not work: unlike plain Java, a bare lambda is not enough here,
        // because Flink cannot infer the output type of the Collector
        // sourceStream.flatMap((k, v) -> {
        //     String[] split = k.split(",");
        //     v.collect(split);
        // }).print();

        env.execute();
    }
}
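To make the "zero, one, or more" behaviour concrete without a Flink runtime, here is a minimal plain-Java sketch. The class `FlatMapSketch` and its methods are hypothetical names invented for this illustration, and a `Consumer<String>` stands in for Flink's `Collector`.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.Consumer;

final class FlatMapSketch {

    /** Splits a comma-separated line and emits each non-empty token. */
    static void splitLine(String line, Consumer<String> out) {
        for (String token : line.split(",")) {
            if (!token.isEmpty()) {
                out.accept(token);
            }
        }
    }

    /** Applies splitLine to every input and gathers everything that was emitted. */
    static List<String> flatMapAll(List<String> inputs) {
        List<String> collected = new ArrayList<>();
        for (String line : inputs) {
            splitLine(line, collected::add);
        }
        return collected;
    }

    public static void main(String[] args) {
        // "1,2,3" emits three elements, "" emits none, "4" emits one
        System.out.println(flatMapAll(Arrays.asList("1,2,3", "", "4")));
    }
}
```

Three inputs go in and four elements come out, which is the difference from Map, where the counts always match.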

3. Filter

Filter operator: evaluates a boolean function for each element and keeps only the elements for which the function returns true.

package com.xx.common.study.api.base;

import org.apache.flink.api.common.functions.FilterFunction;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**
 * @author xiaxing
 * @describe Filter operator: evaluates a boolean function for each element and keeps the elements for which it returns true
 * @since 2024/5/17 14:27
 */
public class DataStreamFilterApiDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<Integer> sourceStream = env.fromElements(1, 2, 3);

        // Keep even numbers (lambda expression)
        sourceStream.filter(e -> (e % 2) == 0).print("lambda: even numbers");

        // Keep even numbers (FilterFunction implementation)
        sourceStream.filter(new FilterFunction<Integer>() {
            @Override
            public boolean filter(Integer value) throws Exception {
                return value % 2 == 0;
            }
        }).print("FilterFunction: even numbers");

        env.execute();
    }
}

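4. KeyBy

KeyBy operator: logically partitions a stream into disjoint partitions. All records with the same key are assigned to the same partition. Internally, keyBy() is implemented with hash partitioning.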

package com.xx.common.study.api.base;

import lombok.AllArgsConstructor;
import lombok.Data;
import lombok.NoArgsConstructor;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**
 * @author xiaxing
 * @describe KeyBy operator: logically partitions a stream into disjoint partitions; records with the same key go to the same partition (hash partitioning internally)
 * @since 2024/5/17 14:27
 */
public class DataStreamKeyByApiDemo {

    @Data
    @AllArgsConstructor
    @NoArgsConstructor
    public static class keyByDemo {
        private Integer id;
        private Integer count;
    }

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<keyByDemo> sourceStream = env.fromElements(
                new keyByDemo(1, 1),
                new keyByDemo(2, 2),
                new keyByDemo(3, 3),
                new keyByDemo(1, 4)
        );

        KeyedStream<keyByDemo, Integer> keyByStream = sourceStream.keyBy(keyByDemo::getId);
        keyByStream.print("grouped by key");

        // After keyBy, the common aggregation operators become available.
        // sum(int positionToSum) takes a field index and is meant for Tuple types;
        // sum(String field) takes a field name
        keyByStream.sum("count").print();

        env.execute();
    }
}
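The idea that "the same key always lands in the same partition" can be sketched in plain Java as follows. This is a deliberately simplified model: `KeyPartitionSketch` and its methods are hypothetical names, and Flink's real routing goes through key groups with an additional hash step, so actual partition numbers will differ from what this sketch prints.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class KeyPartitionSketch {

    /** Maps a key to one of `parallelism` partitions using its hash code (simplified). */
    static int partitionFor(Object key, int parallelism) {
        return Math.floorMod(key.hashCode(), parallelism);
    }

    /** Groups keys by the partition they are routed to, preserving arrival order. */
    static Map<Integer, List<Integer>> route(List<Integer> keys, int parallelism) {
        Map<Integer, List<Integer>> partitions = new TreeMap<>();
        for (Integer key : keys) {
            partitions
                    .computeIfAbsent(partitionFor(key, parallelism), p -> new ArrayList<>())
                    .add(key);
        }
        return partitions;
    }

    public static void main(String[] args) {
        // The ids from the demo above, routed to two partitions
        System.out.println(route(Arrays.asList(1, 2, 3, 1), 2));
    }
}
```

Both records with id 1 end up in the same partition, which is what allows per-key aggregations such as sum to run without coordination between partitions.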

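5. Reduce

Reduce operator: performs a "rolling" reduce on a keyed stream. It combines the current element with the last reduced value for the same key and emits the new value.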

package com.xx.common.study.api.base;

import lombok.AllArgsConstructor;
import lombok.Data;
import lombok.NoArgsConstructor;
import org.apache.flink.api.common.functions.ReduceFunction;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**
 * @author xiaxing
 * @describe Reduce operator: performs a "rolling" reduce on a keyed stream, combining the current element with the last reduced value and emitting the new value
 * @since 2024/5/17 14:27
 */
public class DataStreamReduceApiDemo {

    @Data
    @AllArgsConstructor
    @NoArgsConstructor
    public static class reduceByDemo {
        private String id;
        private Integer count;
    }

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<reduceByDemo> sourceStream = env.fromElements(
                new reduceByDemo("1", 1),
                new reduceByDemo("2", 2),
                new reduceByDemo("3", 3),
                new reduceByDemo("1", 4)
        );

        sourceStream.keyBy(reduceByDemo::getId).reduce(new ReduceFunction<reduceByDemo>() {
            @Override
            public reduceByDemo reduce(reduceByDemo value1, reduceByDemo value2) throws Exception {
                // value1 is the previously reduced value, value2 the new element.
                // Return a new object rather than mutating value1, which may be held in state
                return new reduceByDemo(value1.getId(), value1.getCount() + value2.getCount());
            }
        }).print();

        env.execute();
    }
}
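The "rolling" part is easiest to see by tracing what is emitted for each input. The following plain-Java sketch models it with a map from key to last reduced value; `RollingReduceSketch` is a hypothetical name, and the map is only a stand-in for Flink's keyed state.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class RollingReduceSketch {

    /** Feeds (key, count) pairs through a per-key running sum and records every emitted value. */
    static List<String> rollingSum(String[] keys, int[] counts) {
        Map<String, Integer> lastReduced = new HashMap<>();
        List<String> emitted = new ArrayList<>();
        for (int i = 0; i < keys.length; i++) {
            // First element of a key is stored as-is; later ones are combined with the stored value
            int updated = lastReduced.merge(keys[i], counts[i], Integer::sum);
            emitted.add(keys[i] + "=" + updated);
        }
        return emitted;
    }

    public static void main(String[] args) {
        // Same data as the demo above: ids "1", "2", "3", "1" with counts 1, 2, 3, 4
        System.out.println(rollingSum(new String[] {"1", "2", "3", "1"}, new int[] {1, 2, 3, 4}));
    }
}
```

Every input produces one output: the first record of a key passes through unchanged, and the second record for id "1" yields the running total 5.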

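III. Window Operators

Official documentation

3.1 Concepts

Windows are the key to processing unbounded streams. A window splits the stream into "buckets" of finite size, and each bucket is then processed on its own.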

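Flink distinguishes three notions of time:

- Event Time: the time at which the event was created. It is usually carried as a timestamp inside the event; for example, every collected log record stores the time it was generated. Flink reads this timestamp through a timestamp assigner.
- Ingestion Time: the time at which the data enters Flink.
- Processing Time: the local system time of the machine running each time-based operator, so it depends on that machine. Processing time was the default time characteristic before Flink 1.12; since 1.12 the default is event time.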
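The practical consequence of choosing one notion of time over another is that the same record can fall into different windows. A minimal plain-Java sketch, assuming 5-second buckets and made-up timestamps (`TimeNotionSketch` and `bucketStart` are hypothetical names, not Flink API):

```java
final class TimeNotionSketch {

    /** Start of the fixed-size bucket (window) that contains the given timestamp. */
    static long bucketStart(long timestampMs, long sizeMs) {
        return timestampMs - Math.floorMod(timestampMs, sizeMs);
    }

    public static void main(String[] args) {
        long createdAtMs = 4_000L;   // event time: stamped when the record was produced
        long processedAtMs = 6_500L; // processing time: the operator's clock when it handles the record

        System.out.println("event-time bucket starts at " + bucketStart(createdAtMs, 5_000L));
        System.out.println("processing-time bucket starts at " + bucketStart(processedAtMs, 5_000L));
    }
}
```

With event time the record belongs to the bucket starting at 0; with processing time it is counted in the bucket starting at 5000, simply because it arrived late.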

3.2 Syntax

Keyed Windows

stream
       .keyBy(...)               <-  required only for keyed windows
       .window(...)              <-  required: "assigner"
      [.trigger(...)]            <-  optional: "trigger" (default trigger if omitted)
      [.evictor(...)]            <-  optional: "evictor" (no evictor if omitted)
      [.allowedLateness(...)]    <-  optional: "lateness" (zero if omitted)
      [.sideOutputLateData(...)] <-  optional: "output tag" (no side output for late data if omitted)
       .reduce/aggregate/apply() <-  required: "function"
      [.getSideOutput(...)]      <-  optional: "output tag"

Non-Keyed Windows

stream
       .windowAll(...)           <-  required: "assigner"
      [.trigger(...)]            <-  optional: "trigger" (default trigger if omitted)
      [.evictor(...)]            <-  optional: "evictor" (no evictor if omitted)
      [.allowedLateness(...)]    <-  optional: "lateness" (zero if omitted)
      [.sideOutputLateData(...)] <-  optional: "output tag" (no side output for late data if omitted)
       .reduce/aggregate/apply() <-  required: "function"
      [.getSideOutput(...)]      <-  optional: "output tag"

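3.3 Window Assigners

WindowAssigner is an abstract class. Flink ships built-in assigners for four kinds of windows: tumbling, sliding, session, and global windows.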

3.3.1、滚动窗口(Tumbling Windows)

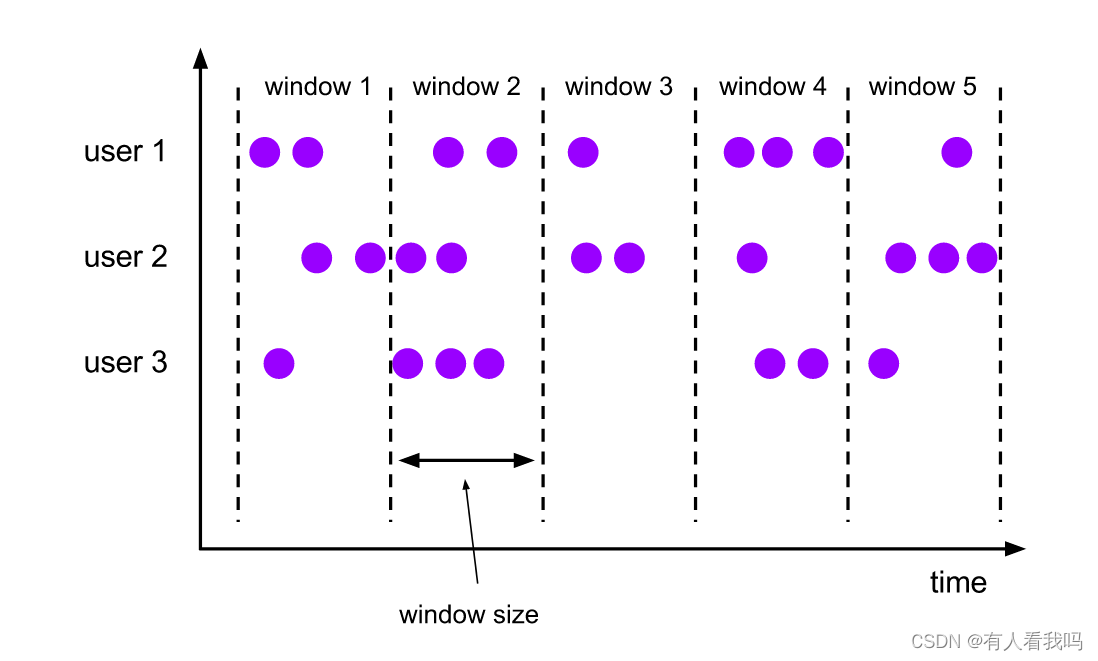

滚动窗口的 assigner 分发元素到指定大小的窗口。滚动窗口的大小是固定的,且各自范围之间不重叠。 比如说,如果你指定了滚动窗口的大小为 5 分钟,那么每 5 分钟就会有一个窗口被计算,且一个新的窗口被创建(如下图所示)。

DataStream<T> input = ...;

// Tumbling event-time windows
input
    .keyBy(<key selector>)
    .window(TumblingEventTimeWindows.of(Time.seconds(5)))
    .<windowed transformation>(<window function>);

// Tumbling processing-time windows
input
    .keyBy(<key selector>)
    .window(TumblingProcessingTimeWindows.of(Time.seconds(5)))
    .<windowed transformation>(<window function>);

// Daily tumbling event-time windows with an offset of -8 hours
input
    .keyBy(<key selector>)
    .window(TumblingEventTimeWindows.of(Time.days(1), Time.hours(-8)))
    .<windowed transformation>(<window function>);
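How an element's timestamp is mapped to a tumbling window, and what the offset does, can be sketched with a little arithmetic. The following is a plain-Java illustration under the assumption that window boundaries are aligned to the epoch plus the offset; `TumblingWindowSketch` and `windowStart` are hypothetical names and not Flink API.

```java
final class TumblingWindowSketch {

    /** Start (inclusive) of the tumbling window of length sizeMs that contains timestampMs. */
    static long windowStart(long timestampMs, long offsetMs, long sizeMs) {
        return timestampMs - Math.floorMod(timestampMs - offsetMs, sizeMs);
    }

    public static void main(String[] args) {
        long hour = 3_600_000L;

        // 5-second windows, no offset: timestamp 7000 falls into [5000, 10000)
        System.out.println(windowStart(7_000L, 0L, 5_000L));

        // Daily windows with a -8 hour offset: 1970-01-01T00:00Z falls into the window
        // that starts at 1969-12-31T16:00Z, which is midnight in a UTC+8 time zone
        System.out.println(windowStart(0L, -8 * hour, 24 * hour));
    }
}
```

Without the offset, daily windows would start at midnight UTC. The -8 hour offset shifts every boundary so that each window covers one calendar day in UTC+8.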

3.3.2、滑动窗口(Sliding Windows)

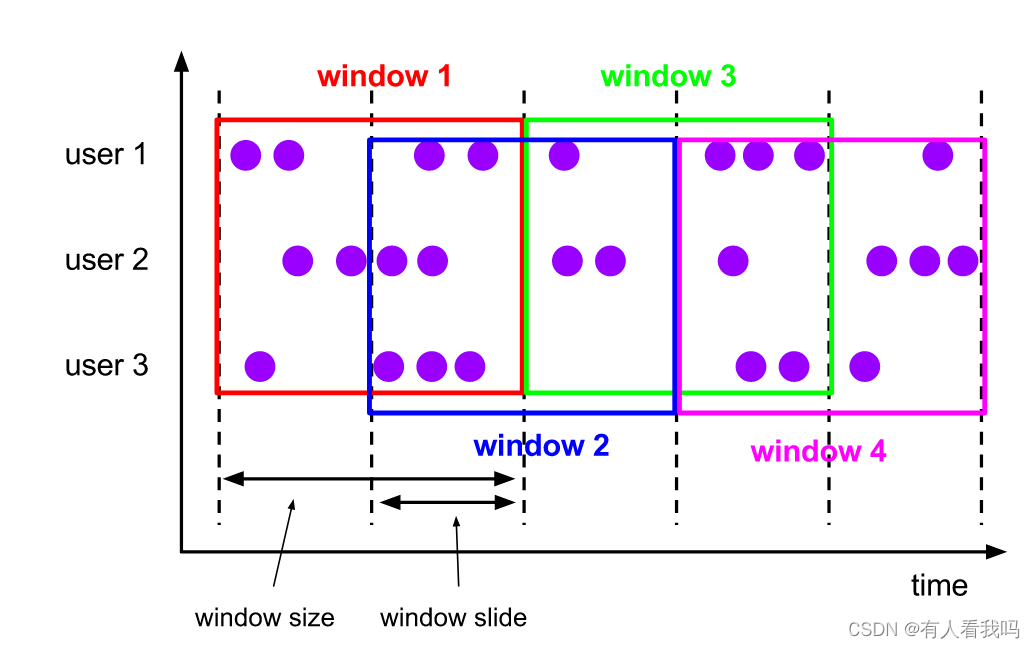

与滚动窗口类似,滑动窗口的 assigner 分发元素到指定大小的窗口,窗口大小通过 window size 参数设置。 滑动窗口需要一个额外的滑动距离(window slide)参数来控制生成新窗口的频率。 因此,如果 slide 小于窗口大小,滑动窗口可以允许窗口重叠。这种情况下,一个元素可能会被分发到多个窗口。

比如说,你设置了大小为 10 分钟,滑动距离 5 分钟的窗口,你会在每 5 分钟得到一个新的窗口, 里面包含之前 10 分钟到达的数据(如下图所示)。

DataStream<T> input = ...;

// Sliding event-time windows
input
    .keyBy(<key selector>)
    .window(SlidingEventTimeWindows.of(Time.seconds(10), Time.seconds(5)))
    .<windowed transformation>(<window function>);

// Sliding processing-time windows
input
    .keyBy(<key selector>)
    .window(SlidingProcessingTimeWindows.of(Time.seconds(10), Time.seconds(5)))
    .<windowed transformation>(<window function>);

// Sliding processing-time windows with an offset of -8 hours
input
    .keyBy(<key selector>)
    .window(SlidingProcessingTimeWindows.of(Time.hours(12), Time.hours(1), Time.hours(-8)))
    .<windowed transformation>(<window function>);
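To see why one element can belong to several sliding windows, it helps to enumerate the windows that contain a given timestamp. A minimal plain-Java sketch, assuming windows aligned to the epoch with no offset (`SlidingWindowSketch` and `windowStarts` are hypothetical names):

```java
import java.util.ArrayList;
import java.util.List;

final class SlidingWindowSketch {

    /** Start timestamps of every sliding window [start, start + sizeMs) that contains timestampMs. */
    static List<Long> windowStarts(long timestampMs, long sizeMs, long slideMs) {
        List<Long> starts = new ArrayList<>();
        // Latest window start at or before the timestamp
        long lastStart = timestampMs - Math.floorMod(timestampMs, slideMs);
        // Walk backwards one slide at a time while the window still covers the timestamp
        for (long start = lastStart; start > timestampMs - sizeMs; start -= slideMs) {
            starts.add(start);
        }
        return starts;
    }

    public static void main(String[] args) {
        long minute = 60_000L;
        // Size 10 minutes, slide 5 minutes, element at minute 12:
        // it belongs to the windows starting at minute 10 and minute 5
        System.out.println(windowStarts(12 * minute, 10 * minute, 5 * minute));
    }
}
```

With size 10 and slide 5, every element lands in exactly size / slide = 2 windows. When the slide equals the size, the loop yields a single window and the behaviour degenerates to a tumbling window.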

3.3.3、会话窗口(Session Windows)

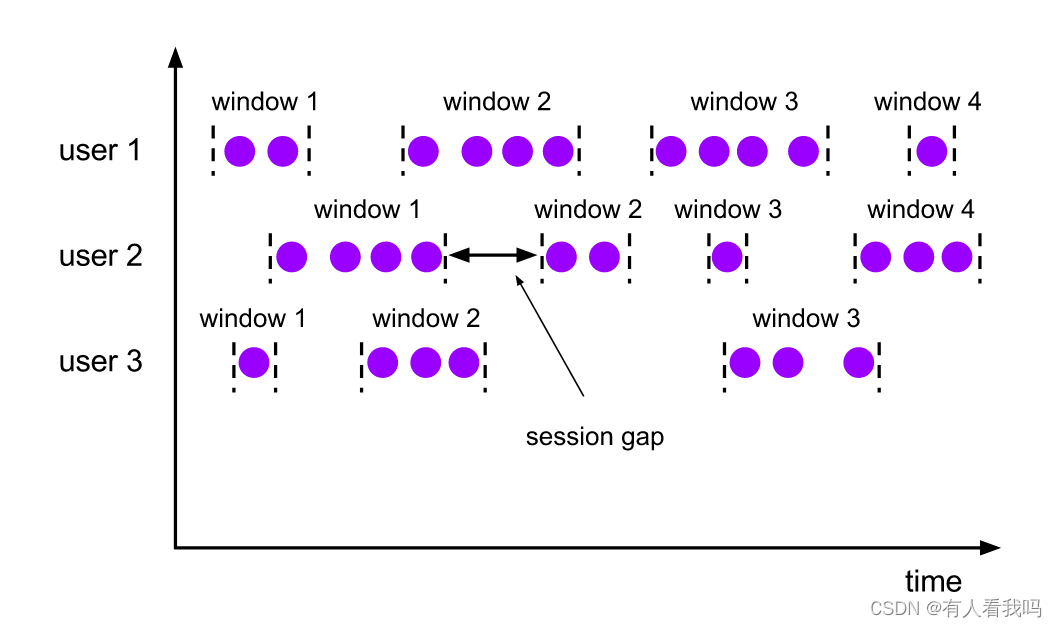

会话窗口的 assigner 会把数据按活跃的会话分组。 与滚动窗口和滑动窗口不同,会话窗口不会相互重叠,且没有固定的开始或结束时间。 会话窗口在一段时间没有收到数据之后会关闭,即在一段不活跃的间隔之后。 会话窗口的 assigner 可以设置固定的会话间隔(session gap)或 用 session gap extractor 函数来动态地定义多长时间算作不活跃。 当超出了不活跃的时间段,当前的会话就会关闭,并且将接下来的数据分发到新的会话窗口。

DataStream<T> input = ...;

// Event-time session windows with a fixed gap
input
    .keyBy(<key selector>)
    .window(EventTimeSessionWindows.withGap(Time.minutes(10)))
    .<windowed transformation>(<window function>);

// Event-time session windows with a dynamic gap
input
    .keyBy(<key selector>)
    .window(EventTimeSessionWindows.withDynamicGap((element) -> {
        // determine and return the session gap
    }))
    .<windowed transformation>(<window function>);

// Processing-time session windows with a fixed gap
input
    .keyBy(<key selector>)
    .window(ProcessingTimeSessionWindows.withGap(Time.minutes(10)))
    .<windowed transformation>(<window function>);

// Processing-time session windows with a dynamic gap
input
    .keyBy(<key selector>)
    .window(ProcessingTimeSessionWindows.withDynamicGap((element) -> {
        // determine and return the session gap
    }))
    .<windowed transformation>(<window function>);
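The gap rule can be illustrated by grouping a handful of timestamps by hand. This plain-Java sketch processes the timestamps of a single key in sorted order and starts a new session whenever the distance to the previous element exceeds the gap. `SessionWindowSketch` is a hypothetical name, and treating a distance of exactly one gap as "same session" is an assumption of this sketch.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

final class SessionWindowSketch {

    /** Groups timestamps into sessions; a new session starts when the gap to the previous element exceeds gapMs. */
    static List<List<Long>> sessions(long[] timestampsMs, long gapMs) {
        long[] sorted = timestampsMs.clone();
        Arrays.sort(sorted);

        List<List<Long>> result = new ArrayList<>();
        List<Long> current = new ArrayList<>();
        for (long ts : sorted) {
            boolean gapExceeded = !current.isEmpty() && ts - current.get(current.size() - 1) > gapMs;
            if (gapExceeded) {
                result.add(current);          // close the current session
                current = new ArrayList<>();  // and open a new one
            }
            current.add(ts);
        }
        if (!current.isEmpty()) {
            result.add(current);
        }
        return result;
    }

    public static void main(String[] args) {
        // Gap of 10 seconds: the pause between 4000 and 20000 splits the stream into two sessions
        System.out.println(sessions(new long[] {0L, 3_000L, 4_000L, 20_000L, 21_000L}, 10_000L));
    }
}
```

Unlike tumbling and sliding windows, the boundaries here come from the data itself: the first session covers three elements and the second covers two, and neither length is known in advance.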

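3.3.4 Global Windows

The global window assigner assigns all elements with the same key to a single global window. This scheme is only useful if you also specify a custom trigger. Otherwise no computation is ever performed, because a global window has no natural end at which the accumulated elements could be processed.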

DataStream<T> input = ...;

input
    .keyBy(<key selector>)
    .window(GlobalWindows.create())
    .<windowed transformation>(<window function>);
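Why a global window needs a trigger can be shown with a count-based rule. The plain-Java sketch below models a single key whose window "fires" every `batchSize` elements and then clears its contents. `CountTriggerSketch` is a hypothetical name, and clearing after each firing is an assumption of this sketch, roughly what combining a count trigger with purging would do.

```java
import java.util.ArrayList;
import java.util.List;

final class CountTriggerSketch {

    /** Emits the sum of each complete batch of batchSize values; an incomplete tail never fires. */
    static List<Integer> sumsPerBatch(int[] values, int batchSize) {
        List<Integer> fired = new ArrayList<>();
        int sum = 0;
        int seen = 0;
        for (int value : values) {
            sum += value;
            seen++;
            if (seen == batchSize) {
                fired.add(sum); // the trigger fires: emit the result
                sum = 0;        // and purge the window contents
                seen = 0;
            }
        }
        return fired;
    }

    public static void main(String[] args) {
        // Seven elements, fire every three: two results, the seventh element stays buffered
        System.out.println(sumsPerBatch(new int[] {1, 2, 3, 4, 5, 6, 7}, 3));
    }
}
```

The last element is accumulated but never emitted, because nothing tells the window that it is complete. That is the situation described above when no trigger is configured at all.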

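3.4 Window Functions

There are three kinds of window functions: ReduceFunction, AggregateFunction, and ProcessWindowFunction.

3.4.1 ReduceFunction

A ReduceFunction specifies how two input elements are combined into one output element. The input and output types must be the same.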

package com.xx.common.study.api.windows;

import lombok.AllArgsConstructor;
import lombok.Data;
import lombok.NoArgsConstructor;
import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.common.functions.ReduceFunction;
import org.apache.flink.api.java.functions.KeySelector;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;

/**
 * @author xiaxing
 * @describe Window function: reduce
 * @since 2024/5/17 14:27
 */
public class DataStreamWindowsReduceApiDemo {

    @Data
    @NoArgsConstructor
    @AllArgsConstructor
    public static class TumblingWindows {
        private Integer id;
        private Integer count;
    }

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Each input line is expected to have the form "id,count"
        DataStreamSource<String> socketStream = env.socketTextStream("localhost", 7777);

        socketStream
                // Parse "id,count" into a TumblingWindows record
                .map(new MapFunction<String, TumblingWindows>() {
                    @Override
                    public TumblingWindows map(String value) throws Exception {
                        String[] split = value.split(",");
                        return new TumblingWindows(Integer.valueOf(split[0]), Integer.valueOf(split[1]));
                    }
                })
                // Group by id
                .keyBy(new KeySelector<TumblingWindows, Integer>() {
                    @Override
                    public Integer getKey(TumblingWindows value) throws Exception {
                        return value.getId();
                    }
                })
                // 5-second tumbling processing-time windows
                .window(TumblingProcessingTimeWindows.of(Time.seconds(5L)))
                // Sum the counts of all records with the same id in each window
                .reduce(new ReduceFunction<TumblingWindows>() {
                    @Override
                    public TumblingWindows reduce(TumblingWindows value1, TumblingWindows value2) throws Exception {
                        return new TumblingWindows(value1.getId(), value1.getCount() + value2.getCount());
                    }
                })
                .print();

        env.execute();
    }
}

3.4.2 ReduceFunction with Lambda Syntax

The same job as above, simplified with lambda expressions.

package com.xx.common.study.api.windows;

import lombok.AllArgsConstructor;
import lombok.Data;
import lombok.NoArgsConstructor;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;

/**
 * @author xiaxing
 * @describe Window function: reduce, lambda syntax
 * @since 2024/5/17 14:27
 */
public class DataStreamWindowsReduceLambdaApiDemo {

    @Data
    @NoArgsConstructor
    @AllArgsConstructor
    public static class TumblingWindows {
        private Integer id;
        private Integer count;
    }

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> socketStream = env.socketTextStream("localhost", 7777);

        socketStream
                // Parse "id,count" into a TumblingWindows record
                .map(value -> {
                    String[] split = value.split(",");
                    return new TumblingWindows(Integer.valueOf(split[0]), Integer.valueOf(split[1]));
                })
                .keyBy(TumblingWindows::getId)
                .window(TumblingProcessingTimeWindows.of(Time.seconds(5L)))
                // Sum the counts per id in each 5-second window
                .reduce((value1, value2) -> new TumblingWindows(value1.getId(), value1.getCount() + value2.getCount()))
                .print();

        env.execute();
    }
}

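3.4.3 AggregateFunction

ReduceFunction is a special case of AggregateFunction. An AggregateFunction has three type parameters: the input type (IN), the accumulator type (ACC), and the output type (OUT). The input type is the element type of the input stream. The AggregateFunction interface has four methods: create the initial accumulator, add an element to the accumulator, merge two accumulators, and extract the output (of type OUT) from the accumulator.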

package com.xx.common.study.api.windows;

import lombok.AllArgsConstructor;
import lombok.Data;
import lombok.NoArgsConstructor;
import org.apache.flink.api.common.functions.AggregateFunction;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;

import java.util.Optional;

/**
 * @author xiaxing
 * @describe Window function: aggregate
 * @since 2024/5/17 14:27
 */
public class DataStreamWindowsAggregateApiDemo {

    @Data
    @NoArgsConstructor
    @AllArgsConstructor
    public static class TumblingWindows {
        private Integer id;
        private Integer count;
    }

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> socketStream = env.socketTextStream("localhost", 7777);

        // Sum of counts
        AggregateFunction<TumblingWindows, TumblingWindows, TumblingWindows> aggregateFunction = new AggregateFunction<TumblingWindows, TumblingWindows, TumblingWindows>() {
            @Override
            public TumblingWindows createAccumulator() {
                // Create the accumulator with default values (id and count are null)
                return new TumblingWindows();
            }

            @Override
            public TumblingWindows add(TumblingWindows value, TumblingWindows accumulator) {
                // Add the input element to the accumulator and return the updated accumulator.
                // ofNullable guards against the null count of a fresh accumulator
                int count1 = Optional.ofNullable(value.getCount()).orElse(0);
                int count2 = Optional.ofNullable(accumulator.getCount()).orElse(0);
                return new TumblingWindows(value.getId(), count1 + count2);
            }

            @Override
            public TumblingWindows getResult(TumblingWindows accumulator) {
                // Extract the result from the accumulator
                return accumulator;
            }

            @Override
            public TumblingWindows merge(TumblingWindows a, TumblingWindows b) {
                // Merge two accumulators into a new one; either side may still be empty
                Integer id = a.getId() != null ? a.getId() : b.getId();
                int countA = Optional.ofNullable(a.getCount()).orElse(0);
                int countB = Optional.ofNullable(b.getCount()).orElse(0);
                return new TumblingWindows(id, countA + countB);
            }
        };

        socketStream
                .map(value -> {
                    String[] split = value.split(",");
                    return new TumblingWindows(Integer.valueOf(split[0]), Integer.valueOf(split[1]));
                })
                .keyBy(TumblingWindows::getId)
                .window(TumblingProcessingTimeWindows.of(Time.seconds(5L)))
                .aggregate(aggregateFunction)
                .print();

        env.execute();
    }
}
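The demo above uses the same type for IN, ACC, and OUT, which hides the main reason to reach for an aggregate instead of a reduce: the three types may differ. The plain-Java sketch below computes an average, where the accumulator has to carry both a sum and a count. The `Aggregator` interface is a hypothetical stand-in that only mirrors the four methods described above; it is not Flink's interface, and all names here are made up for illustration.

```java
final class AverageAggregateSketch {

    /** Hypothetical stand-in with the four methods described above; not Flink's own interface. */
    interface Aggregator<IN, ACC, OUT> {
        ACC createAccumulator();
        ACC add(IN value, ACC accumulator);
        OUT getResult(ACC accumulator);
        ACC merge(ACC a, ACC b);
    }

    /** Averages integers: IN = Integer, ACC = long[]{sum, count}, OUT = Double. */
    static final class Average implements Aggregator<Integer, long[], Double> {
        @Override
        public long[] createAccumulator() {
            return new long[] {0L, 0L};
        }

        @Override
        public long[] add(Integer value, long[] acc) {
            return new long[] {acc[0] + value, acc[1] + 1};
        }

        @Override
        public Double getResult(long[] acc) {
            // An empty accumulator yields 0.0 rather than dividing by zero
            return acc[1] == 0 ? 0.0 : (double) acc[0] / acc[1];
        }

        @Override
        public long[] merge(long[] a, long[] b) {
            return new long[] {a[0] + b[0], a[1] + b[1]};
        }
    }

    /** Runs the aggregator over the values the way a window would: create, add each, extract. */
    static double average(int... values) {
        Average aggregator = new Average();
        long[] acc = aggregator.createAccumulator();
        for (int value : values) {
            acc = aggregator.add(value, acc);
        }
        return aggregator.getResult(acc);
    }

    public static void main(String[] args) {
        System.out.println(average(1, 2, 6));
    }
}
```

A ReduceFunction could not express this directly, because its result must have the same type as its inputs, while an average needs the intermediate (sum, count) pair.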

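3.4.4 ProcessWindowFunction

A ProcessWindowFunction can be combined with a ReduceFunction or an AggregateFunction so that elements are aggregated incrementally as they arrive in the window. When the window closes, the ProcessWindowFunction receives the aggregated result. This gives you incremental aggregation of the window's elements together with access to the window metadata that the ProcessWindowFunction provides.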

package com.xx.common.study.api.windows;

import lombok.AllArgsConstructor;
import lombok.Data;
import lombok.NoArgsConstructor;
import org.apache.flink.api.common.functions.ReduceFunction;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.windowing.ProcessWindowFunction;
import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;
import org.apache.flink.util.Collector;

/**
 * @author xiaxing
 * @describe Window function: reduce combined with process
 * @since 2024/5/17 14:27
 */
public class DataStreamWindowsReduceProcessApiDemo {

    @Data
    @NoArgsConstructor
    @AllArgsConstructor
    public static class TumblingWindows {
        private Integer id;
        private Integer count;
    }

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> socketStream = env.socketTextStream("localhost", 7777);

        socketStream
                .map(value -> {
                    String[] split = value.split(",");
                    return new TumblingWindows(Integer.valueOf(split[0]), Integer.valueOf(split[1]));
                })
                .keyBy(TumblingWindows::getId)
                .window(TumblingProcessingTimeWindows.of(Time.seconds(5L)))
                // Incremental reduce first, then post-processing of the reduced result
                .reduce(new MyReduceFunction(), new MyProcessWindowsFunction())
                .print();

        env.execute();
    }

    private static class MyReduceFunction implements ReduceFunction<TumblingWindows> {
        @Override
        public TumblingWindows reduce(TumblingWindows value1, TumblingWindows value2) throws Exception {
            return new TumblingWindows(value1.getId(), value1.getCount() + value2.getCount());
        }
    }

    private static class MyProcessWindowsFunction extends ProcessWindowFunction<TumblingWindows, TumblingWindows, Integer, TimeWindow> {
        @Override
        public void process(Integer key, Context context, Iterable<TumblingWindows> elements, Collector<TumblingWindows> out) throws Exception {
            // When combined with reduce, the iterable holds only the reduced result of the window
            elements.forEach(e -> {
                Integer count = e.getCount();
                // Emit the element only when count > 10
                if (count > 10) {
                    out.collect(e);
                }
            });
        }
    }
}
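The division of labour between the two functions can be modelled in a few lines of plain Java: while the window is open only one running value is kept, and when it closes that single value is handed to a second step together with the window bounds. `IncrementalWindowSketch`, `closeWindow`, and the output format are hypothetical choices made for this sketch.

```java
import java.util.Optional;

final class IncrementalWindowSketch {

    /**
     * Reduces the counts incrementally, then applies the "process" step at window close:
     * emit the sum with the window bounds only if it exceeds the threshold.
     */
    static Optional<String> closeWindow(long startMs, long endMs, int[] counts, int threshold) {
        // Incremental phase: only one running value is stored, never the individual elements
        boolean hasValue = false;
        int reduced = 0;
        for (int count : counts) {
            reduced = hasValue ? reduced + count : count;
            hasValue = true;
        }

        // Process phase: sees the single reduced value plus window metadata
        if (!hasValue || reduced <= threshold) {
            return Optional.empty();
        }
        return Optional.of("[" + startMs + ", " + endMs + ") sum=" + reduced);
    }

    public static void main(String[] args) {
        System.out.println(closeWindow(0L, 5_000L, new int[] {4, 5, 6}, 10)); // sum 15 passes the filter
        System.out.println(closeWindow(0L, 5_000L, new int[] {1, 2}, 10));    // sum 3 is dropped
    }
}
```

Compared with a ProcessWindowFunction used on its own, which would have to buffer every element until the window closes, this combination keeps the state per window down to a single value.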