Introduction

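I have been using Qwen for LLM inference at work, and a need for further training has come up, so here I work through how to train Qwen. OK, let's get started.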

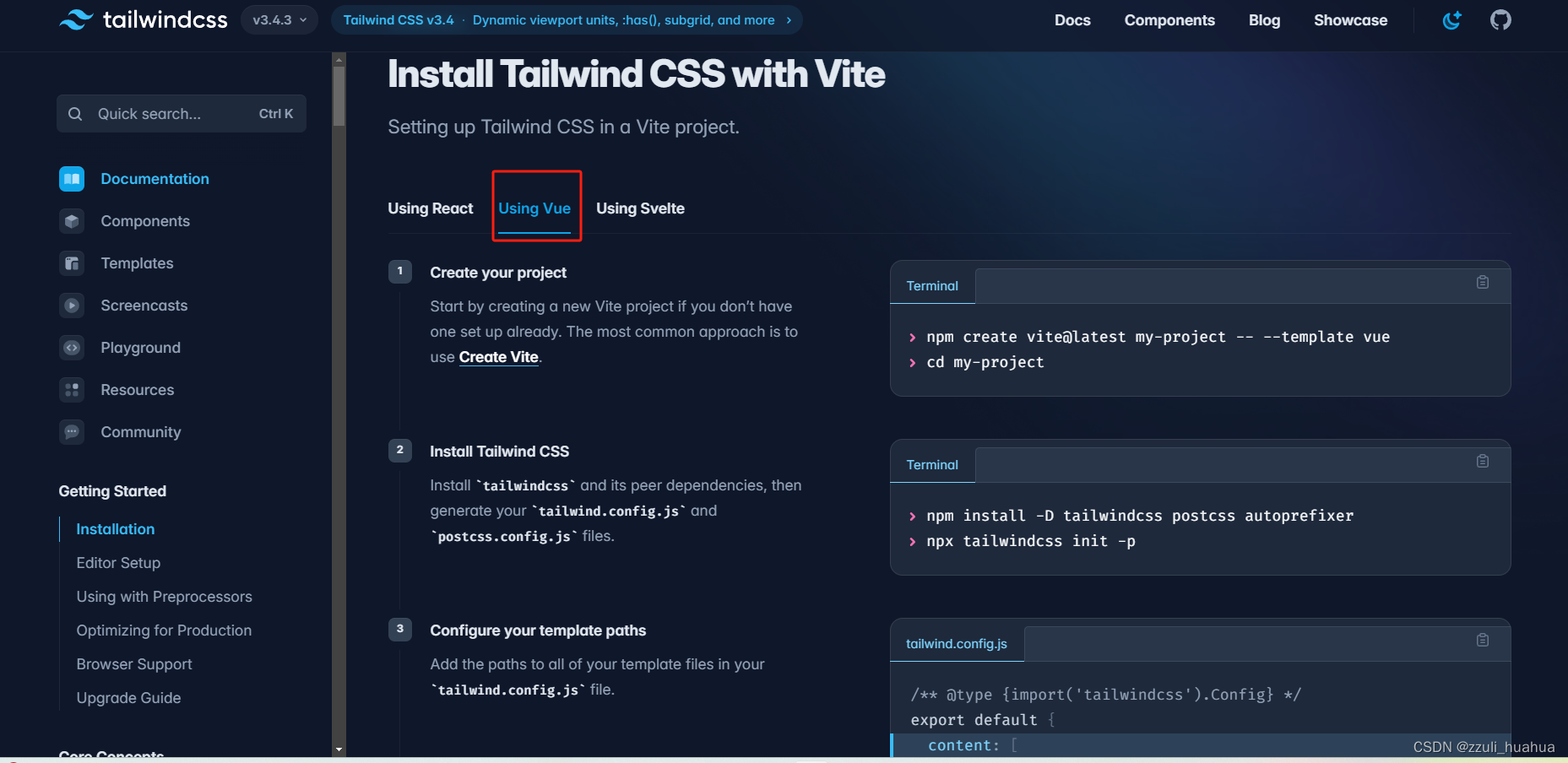

I. Setting up the environment

Check the GPU driver version.
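One way to read the driver version programmatically is to query `nvidia-smi`. The sketch below assumes `nvidia-smi` is on the PATH of the host; the helper names `parse_driver_version` and `query_driver_version` are made up for illustration.

```python
import shutil
import subprocess
from typing import Optional


def parse_driver_version(csv_output: str) -> str:
    """Return the first non-empty line of nvidia-smi's CSV output (one line per GPU)."""
    for line in csv_output.splitlines():
        line = line.strip()
        if line:
            return line
    raise ValueError("no driver version found in output")


def query_driver_version() -> Optional[str]:
    """Ask nvidia-smi for the driver version; return None if it cannot be queried."""
    if shutil.which("nvidia-smi") is None:
        return None
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"],
            capture_output=True, text=True, check=True,
        )
    except subprocess.CalledProcessError:
        return None
    return parse_driver_version(result.stdout)


if __name__ == "__main__":
    print("driver version:", query_driver_version())
```

The driver version determines which CUDA image tag (such as `cu117`) the host can run.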

Follow the recommendation on the official site.

OK, with Docker in hand, everything else falls into place.

docker pull qwenllm/qwen:cu117

docker run -it --rm --gpus=all -v /mnt/code/LLM_Service/:/workspace qwenllm/qwen:cu117 bash

II. Testing the environment

1. Preparing the dataset
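A minimal sketch of building a tiny training file, assuming the `finetune.py` under `examples/sft` reads one JSON object per line with a `messages` list of `role`/`content` turns, as the example data in the repository appears to do (check the file shipped with your checkout). The two samples, the helper names, and the file name `train_data.jsonl` are made up for illustration.

```python
import json
from pathlib import Path


def make_sample(user_text, assistant_text, system_text="You are a helpful assistant."):
    """Build one chat-style record (assumed format: a 'messages' list of role/content turns)."""
    return {
        "type": "chatml",
        "messages": [
            {"role": "system", "content": system_text},
            {"role": "user", "content": user_text},
            {"role": "assistant", "content": assistant_text},
        ],
        "source": "unknown",
    }


def to_jsonl(samples):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "".join(json.dumps(s, ensure_ascii=False) + "\n" for s in samples)


if __name__ == "__main__":
    # Two hypothetical samples, just enough to smoke-test the training script.
    samples = [
        make_sample("What is LoRA?", "LoRA trains small low-rank adapter matrices."),
        make_sample("What is SFT?", "SFT is supervised fine-tuning on labeled dialogues."),
    ]
    Path("train_data.jsonl").write_text(to_jsonl(samples), encoding="utf-8")
```

Two records are enough to confirm that the pipeline runs end to end before committing to a real dataset.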

2. Downloading the code

GitHub - QwenLM/Qwen1.5: Qwen1.5 is the improved version of Qwen, the large language model series developed by Qwen team, Alibaba Cloud.

cd /workspace/qwen1.5_train/Qwen1.5/examples/sft

3. Configuration

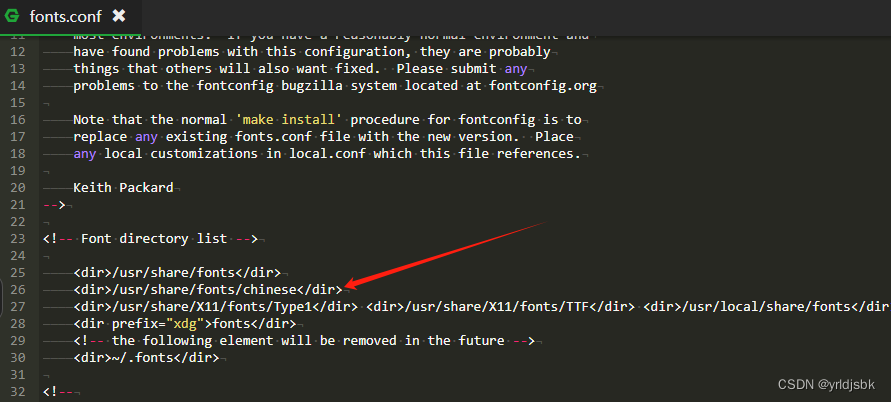

Edit the script /workspace/qwen1.5_train/Qwen1.5/examples/sft/finetune.sh:

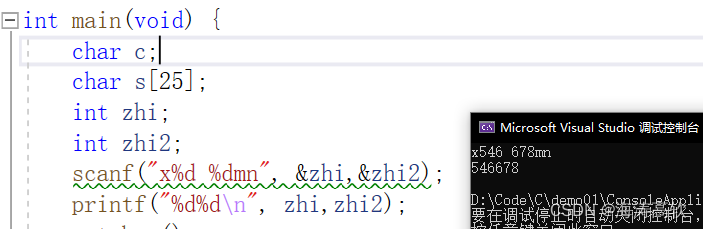

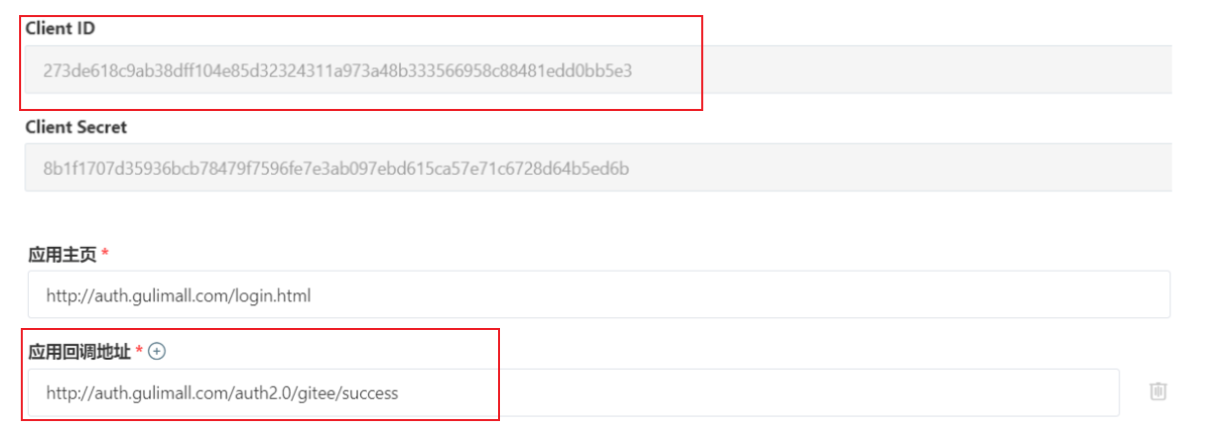

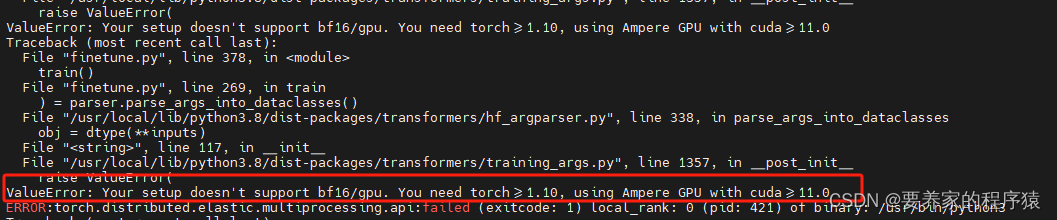

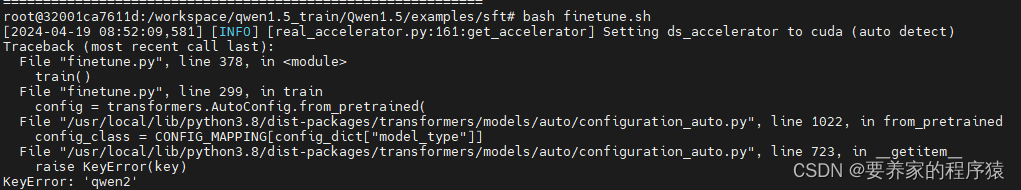

Run bash finetune.sh. It fails with the following error:

Fix it as follows:

Another error: the GPU does not support bf16, so switch to fp16 precision.
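Whether a GPU can run bf16 can be checked before launching training. The sketch below uses the common rule of thumb that native bf16 needs compute capability 8.0 (Ampere) or newer; the helper names are made up, and PyTorch also offers `torch.cuda.is_bf16_supported()` as a direct check.

```python
def pick_precision(compute_capability_major: int) -> str:
    """Rule of thumb: bf16 on compute capability >= 8, otherwise fall back to fp16."""
    return "bf16" if compute_capability_major >= 8 else "fp16"


def detect_precision(device_index: int = 0) -> str:
    """Inspect the local GPU with PyTorch and suggest a mixed-precision mode."""
    import torch  # imported lazily so pick_precision works without PyTorch installed

    if not torch.cuda.is_available():
        return "fp32"  # no GPU visible: mixed precision does not apply
    major, _minor = torch.cuda.get_device_capability(device_index)
    return pick_precision(major)


if __name__ == "__main__":
    try:
        print("suggested precision:", detect_precision())
    except ImportError:
        print("PyTorch is not installed in this environment")
```

If the result is `fp16`, the precision flags in `finetune.sh` need to change accordingly (in Hugging Face `TrainingArguments` these are `--bf16` and `--fp16`).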

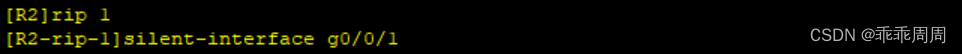

For local training, modify the script.

Another error: transformers is out of date.

pip install -U transformers -i <PyPI mirror index URL>

Run bash finetune.sh again.

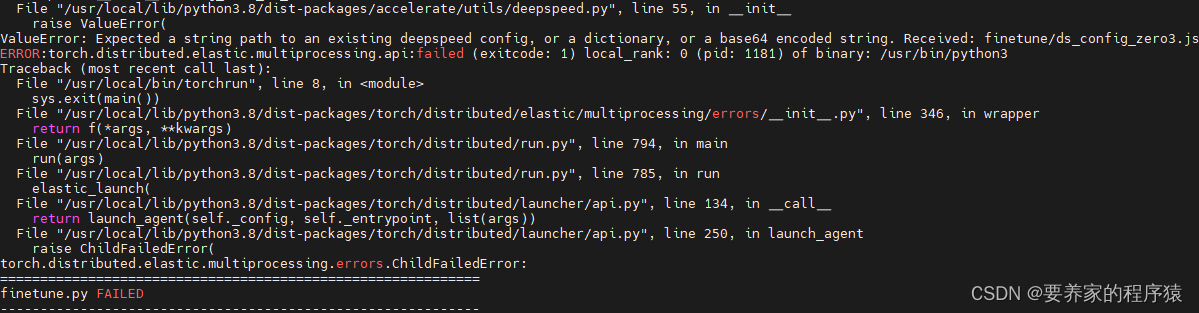

Another error: the accelerate version is wrong.

pip install accelerate==0.27.2
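Since several of the errors here come down to package versions, it can help to print what is actually installed inside the container. A small sketch using only the standard library; the package list is an assumption about what the training script depends on.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def report(packages=("torch", "transformers", "accelerate", "peft")):
    """Map each package name to its installed version (None when absent)."""
    return {name: installed_version(name) for name in packages}


if __name__ == "__main__":
    for name, version in report().items():
        print(f"{name}: {version or 'not installed'}")
```

Running this before and after each `pip install` makes it easy to confirm that the pin, for example `accelerate==0.27.2`, took effect.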

With single-node multi-GPU training, the errors continue.

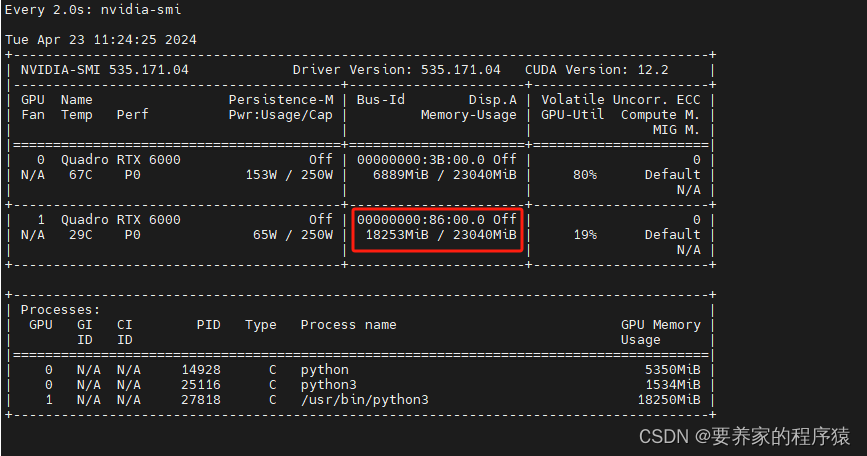

Switch to a single GPU on a single node and restart the container: docker run -it --rm --gpus='"device=1"' -v /mnt/code/LLM_Service/:/workspace qwen:v1.0 bash

Out of memory. Switch to a 7B model and try again; download it from https://huggingface.co/Qwen/CodeQwen1.5-7B-Chat/tree/main
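A rough back-of-the-envelope check helps explain out-of-memory errors: the model weights alone take roughly (number of parameters) × (bytes per parameter). The sketch below is only that estimate; activations, gradients, optimizer state for the LoRA adapter, and framework overhead come on top, and the list of sizes is illustrative.

```python
def weights_gib(params_billion: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for model weights alone, in GiB (2 bytes per parameter = fp16/bf16)."""
    return params_billion * 1e9 * bytes_per_param / 1024**3


if __name__ == "__main__":
    # Illustrative model sizes; only the weights are counted here.
    for size in (7, 14, 72):
        print(f"{size}B parameters in fp16: about {weights_gib(size):.1f} GiB of weights")
```

By this estimate a 7B model in fp16 needs about 13 GiB for the weights, so it has a much better chance of fitting on a single card than a larger model.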

Training on two samples completes.

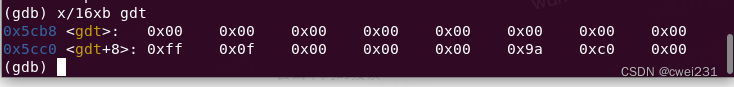

4. GPU memory usage
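GPU memory usage during training can be sampled from `nvidia-smi`. The sketch below assumes `nvidia-smi` is available inside the container or on the host; the helper names are made up, and no measured numbers from the original run are reproduced here.

```python
import shutil
import subprocess


def parse_memory(csv_output):
    """Parse lines like '1024, 24576' into (used_mib, total_mib) tuples, one per GPU."""
    rows = []
    for line in csv_output.splitlines():
        if not line.strip():
            continue
        used, total = (int(field.strip()) for field in line.split(","))
        rows.append((used, total))
    return rows


def query_memory():
    """Return per-GPU (used_mib, total_mib), or an empty list if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=memory.used,memory.total",
             "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True,
        )
    except subprocess.CalledProcessError:
        return []
    return parse_memory(result.stdout)


if __name__ == "__main__":
    for index, (used, total) in enumerate(query_memory()):
        print(f"GPU {index}: {used} MiB used of {total} MiB")
```

Run it in a second shell while `finetune.sh` is training to see how close the job is to the card's limit.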

III. Merging the model

from peft import PeftModel
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

"""
Use this script to merge the LoRA weights into the base model.
"""


def merge_lora_to_base_model():
    # Base model, LoRA adapter written by finetune.sh, and output directory
    model_name_or_path = '/workspace/model/Qwen1.5-7B-Chat'
    adapter_name_or_path = '/workspace/qwen1.5_train/Qwen1.5/examples/sft/output_qwen'
    save_path = 'finetune-qwen1.5-7b'

    tokenizer = AutoTokenizer.from_pretrained(
        model_name_or_path,
        trust_remote_code=True
    )
    # Load the base model in fp16
    model = AutoModelForCausalLM.from_pretrained(
        model_name_or_path,
        trust_remote_code=True,
        low_cpu_mem_usage=True,
        torch_dtype=torch.float16,
        device_map='auto'
    )
    # Attach the LoRA adapter, then fold its weights into the base model
    model = PeftModel.from_pretrained(model, adapter_name_or_path)
    model = model.merge_and_unload()

    # Save the tokenizer and the merged model side by side
    tokenizer.save_pretrained(save_path)
    model.save_pretrained(save_path)


if __name__ == '__main__':
    merge_lora_to_base_model()
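Once the merge has run, the saved directory can be loaded like any other Hugging Face model. A minimal sketch for a quick sanity check, assuming the merged model sits in `finetune-qwen1.5-7b` as in the script above and that the saved tokenizer carries a chat template; the helper names `build_messages` and `generate_reply` and the default system prompt are made up for illustration.

```python
def build_messages(prompt, system="You are a helpful assistant."):
    """Build a chat-format message list for a single user prompt."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": prompt},
    ]


def generate_reply(prompt, model_path="finetune-qwen1.5-7b", max_new_tokens=128):
    """Load the merged model and generate one reply (needs a GPU and the merged weights)."""
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_path)
    model = AutoModelForCausalLM.from_pretrained(
        model_path, torch_dtype=torch.float16, device_map="auto"
    )
    # Render the messages into the model's chat prompt format
    text = tokenizer.apply_chat_template(
        build_messages(prompt), tokenize=False, add_generation_prompt=True
    )
    inputs = tokenizer(text, return_tensors="pt").to(model.device)
    with torch.no_grad():
        output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Drop the prompt tokens and decode only the newly generated part
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)


# Example call (not executed here because it loads the full model):
# print(generate_reply("Write a function that reverses a string."))
```

Comparing replies from the base model and the merged model on a prompt taken from the training data is a quick way to see whether the fine-tuning had any effect.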