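Go Source Code

Sharing 48 Go source code projects: there's something here for everyone

Go source code download link: https://pan.baidu.com/s/1FhQ6NzB3TWsv9res1OsJaA?pwd=r2d3

Extraction code: r2d3

Below are the file names. I included some images, but not all of them fit in the article; you can see them after downloading.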

import os

from time import sleep

import requests

from bs4 import BeautifulSoup

from docx import Document

from docx.shared import Inches

from framework.access.sprider.SpriderAccess import SpriderAccess

from framework.base.BaseFrame import BaseFrame

from framework.pulgin.Tools import Tools

from sprider.business.DownLoad import DownLoad

from sprider.model.SpriderEntity import SpriderEntity

from sprider.business.SpriderTools import SpriderTools

from sprider.business.UserAgent import UserAgent

class ChinaZCode:

page_count = 1 # 每个栏目开始业务content="text/html; charset=gb2312"

base_url = "https://down.chinaz.com" # 采集的网址 https://sc.chinaz.com/tag_ppt/zhongguofeng.html

save_path = "D:\\Freedom\\Sprider\\ChinaZ\\"

sprider_count = 66 # 采集数量

haved_sprider_count = 0 # 正在采集第429页的第15个资源共499页资源 正在采集第208页的第12个资源共499页资源

word_content_list = []

folder_name = ""

first_column_name = "PHP"

sprider_start_count=0 #已经采集完成第136个 debug

max_pager=16 #每页的数量

# 如果解压提升密码错误 ,烦请去掉空格。如果还是不行烦请下载WinRAR

# https: // www.yadinghao.com / file / 393740984E6754

# D18635BF2DF0749D87.html

# 此压缩文件采用WinRAR压缩。

# 此WinRAR是破解版。

def __init__(self):

#A5AndroidCoder().sprider("android", "youxi", 895) #

pass

def sprider(self, title_name="Go"):

"""

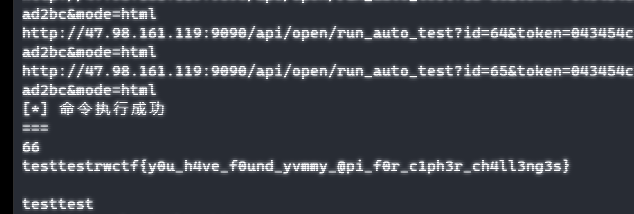

采集 https://down.chinaz.com/class/572_5_1.htm

:return:

"""

if title_name == "PHP":

self.folder_name = "PHP源码"

self.second_column_name = "572_5"

elif title_name == "Go":

self.folder_name = "Go源码"

self.second_column_name = "606_572"

merchant = int(self.sprider_start_count) // int(self.max_pager) + 1

second_folder_name = str(self.sprider_count) + "个" + self.folder_name

self.save_path = self.save_path+ os.sep + "Code" + os.sep + second_folder_name

print("开始采集ChinaZCode"+self.folder_name+"...")

sprider_url = (self.base_url + "/class/{0}_1.htm".format(self.second_column_name))

#print(sprider_url)

#sprider_url = (self.base_url + "/" + self.first_column_name + "/" + second_column_name + ".html")

response = requests.get(sprider_url, timeout=10, headers=UserAgent().get_random_header(self.base_url))

response.encoding = 'UTF-8'

soup = BeautifulSoup(response.text, "html5lib")

#print(soup)

div_list =soup.find('div', attrs={"class": 'main'})

div_list=div_list.find_all('div', attrs={"class": 'item'})

#print(div_list)

laster_pager_ul = soup.find('ul', attrs={"class": 'el-pager'})

laster_pager_li = laster_pager_ul.find_all('li', attrs={"class": 'number'})

laster_pager_url = laster_pager_li[len(laster_pager_li)-1]

#<a href="zhongguofeng_89.html"><b>89</b></a>

page_end_number = int(laster_pager_url.string)

#print(page_end_number)

self.page_count = merchant

while self.page_count <= int(page_end_number): # 翻完停止

try:

if self.page_count == 1:

self.sprider_detail(div_list,self.page_count,page_end_number)

else:

if self.haved_sprider_count == self.sprider_count:

BaseFrame().debug("采集到达数量采集停止...")

BaseFrame().debug("开始写文章...")

self.builder_word(self.folder_name, self.save_path, self.word_content_list)

BaseFrame().debug("文件编写完毕,请到对应的磁盘查看word文件和下载文件!")

break

next_url =self.base_url + "/class/{0}_{1}.htm".format(self.second_column_name,self.page_count )

response = requests.get(next_url, timeout=10, headers=UserAgent().get_random_header(self.base_url))

response.encoding = 'UTF-8'

soup = BeautifulSoup(response.text, "html5lib")

div_list = soup.find('div', attrs={"class": 'main'})

div_list = div_list.find_all('div', attrs={"class": 'item'})

self.sprider_detail(div_list, self.page_count,page_end_number)

pass

except Exception as e:

print("sprider()执行过程出现错误" + str(e))

pass

self.page_count = self.page_count + 1 # 页码增加1

def sprider_detail(self, element_list, page_count,max_page):

try:

element_length = len(element_list)

self.sprider_start_index = int(self.sprider_start_count) % int(self.max_pager)

index = self.sprider_start_index

while index < element_length:

a=element_list[index]

if self.haved_sprider_count == self.sprider_count:

BaseFrame().debug("采集到达数量采集停止...")

break

index = index + 1

sprider_info = "正在采集第" + str(page_count) + "页的第" + str(index) + "个资源共"+str(max_page)+"页资源"

print(sprider_info)

#title_image_obj = a.find('img', attrs={"class": 'lazy'})

url_A_obj=a.find('a', attrs={"class": 'name-text'})

next_url = self.base_url+url_A_obj.get("href")

coder_title = url_A_obj.get("title")

response = requests.get(next_url, timeout=10, headers=UserAgent().get_random_header(self.base_url))

response.encoding = 'UTF-8'

soup = BeautifulSoup(response.text, "html5lib")

#print(soup)

down_load_file_div = soup.find('div', attrs={"class": 'download-list'})

if down_load_file_div is None:

BaseFrame().debug("应该是多版本的暂时不下载因此跳过哦....")

continue

down_load_file_url =self.base_url+down_load_file_div.find('a').get("href")

#image_obj = soup.find('img', attrs={"class": "el-image__inner"})

#image_src =self.base_url+image_obj.get("src")

#print(image_src)

codeEntity = SpriderEntity() # 下载过的资源不再下载

codeEntity.sprider_base_url = self.base_url

codeEntity.create_datetime = SpriderTools.get_current_datetime()

codeEntity.sprider_url = next_url

codeEntity.sprider_pic_title = coder_title

codeEntity.sprider_pic_index = str(index)

codeEntity.sprider_pager_index = page_count

codeEntity.sprider_type = "code"

if SpriderAccess().query_sprider_entity_by_urlandindex(next_url, str(index)) is None:

SpriderAccess().save_sprider(codeEntity)

else:

BaseFrame().debug(coder_title + next_url + "数据采集过因此跳过")

continue

if (DownLoad(self.save_path).down_load_file__(down_load_file_url, coder_title, self.folder_name)):

#DownLoad(self.save_path).down_cover_image__(image_src, coder_title) # 资源的 封面

sprider_content = [coder_title,

self.save_path + os.sep + "image" + os.sep + coder_title + ".jpg"] # 采集成功的记录

self.word_content_list.append(sprider_content) # 增加到最终的数组

self.haved_sprider_count = self.haved_sprider_count + 1

BaseFrame().debug("已经采集完成第" + str(self.haved_sprider_count) + "个")

if (int(page_count) == int(max_page)):

self.builder_word(self.folder_name, self.save_path, self.word_content_list)

BaseFrame().debug("文件编写完毕,请到对应的磁盘查看word文件和下载文件!")

except Exception as e:

print("sprider_detail:" + str(e))

passkubernetes生产级别的容器编排系统 v1.26.0

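etcd distributed storage system v3.4.23

Harbor open-source registry v2.7.0

Vuls vulnerability scanner v0.22.0 (source package)

frp NAT traversal tool v0.46.0

Gokins development tool v1.0.2

mayfly-go v1.3.1

BookStack online document management system v2.10

MOSN cloud-native network data plane v1.3.0

bbs-go open-source community system v3.5.5

etcd distributed storage system v3.5.6

Harbor open-source registry v1.10.15

GFast admin backend system v3.0

ferry ticketing system v1.0

Yearning SQL audit platform v3.1.1

Rainbond cloud-native application management platform v5.8.1

Excelize document library v2.6.1

A-Tune performance tuning engine v1.1.0

goproxy proxy software v12.0

渠成百宝箱 (Qucheng toolbox) v1.3

Gogs lightweight Git service v0.12.10

Gitea source package v1.16.8

TiDB database v5.4.1

LiteIDE development tool x38.0

wallpaper live wallpaper v1.3.3

TiDB database v4.0.14

go-fastdfs distributed file system v1.4.3

GOFLY customer service system v0.6.0 (source package)

Rancher enterprise Kubernetes management platform v2.5.11

Yearning SQL audit platform v2.3.5

Kubernetes production-grade container orchestration system v1.20.9

Kubernetes production-grade container orchestration system v1.19.13

HFish cross-platform honeypot platform v2.3.0

Jenkins CLI v0.0.34

HFish cross-platform honeypot platform v2.2.0

Kubernetes production-grade container orchestration system v1.18.17

Rancher enterprise Kubernetes management platform v2.4.15

syncd v2.0.0

kgcms v1.0

fcc government data-sharing blockchain v1.0

Crawlab distributed crawler management platform v0.5.1

dog tunnel port mapping tool v1.4.2

TiDB database v3.0.12

Open Falcon enterprise monitoring system v0.3.0

GoFileView online file preview program v1.0

TinyBg blog system v1.0

goblog blog system v1.0

DocHub, a Baidu Wenku-style document library v2.4

Pholcus (幽灵蛛, "ghost spider") crawler v1.2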
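In the ChinaZCode crawler earlier in this post, `sprider_start_count` and `max_pager` let a run resume partway through a column: integer division picks the page (`merchant`) and the remainder picks the item on that page. The sketch below isolates that arithmetic with `divmod` and pairs it with the stop-at-target check (`haved_sprider_count == sprider_count`). The names `plan_resume` and `collect` are hypothetical, in-memory lists stand in for the fetched listing pages, and applying the offset only on the resume page is an assumption about the intended behavior.

```python
from __future__ import annotations


def plan_resume(start_count: int, per_page: int) -> tuple[int, int]:
    """Map a global resume offset to (1-based page number, 0-based index on that page)."""
    pages_done, index = divmod(start_count, per_page)
    return pages_done + 1, index


def collect(pages: list[list[str]], start_count: int, per_page: int, target: int) -> list[str]:
    """Walk pages in order from the resume point; stop once `target` items are collected.

    `pages` is a hypothetical in-memory stand-in for the crawled listing pages;
    pages[0] is page 1.
    """
    first_page, first_index = plan_resume(start_count, per_page)
    collected: list[str] = []
    for page_no in range(first_page, len(pages) + 1):
        # Only the resume page skips items; every later page starts at index 0
        start = first_index if page_no == first_page else 0
        for item in pages[page_no - 1][start:]:
            if len(collected) == target:  # same idea as haved_sprider_count == sprider_count
                return collected
            collected.append(item)
    return collected
```

For a resume offset of 136 with 16 items per page, `plan_resume(136, 16)` gives `(9, 8)`: the run continues on page 9 and skips that page's first 8 items.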

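The `search_file` script that follows deletes every file with a given name under a folder as soon as it finds it, which cannot be undone. A minimal sketch of the same walk built on `os.walk` is shown here, with a `dry_run` switch that only lists the matches until you pass `dry_run=False`. The function name `remove_files_named` is hypothetical and not part of the original post.

```python
import os


def remove_files_named(root: str, file_name: str, dry_run: bool = True) -> list:
    """Return the paths of all files called `file_name` under `root`.

    Files are deleted only when dry_run is False; otherwise they are just listed.
    """
    matches = []
    for dir_path, _dir_names, file_names in os.walk(root):
        if file_name in file_names:
            path = os.path.join(dir_path, file_name)
            matches.append(path)
            if not dry_run:
                os.remove(path)  # irreversible, so it is opt-in
    return matches
```

Unlike the recursive version, `os.walk` does not follow symlinked directories by default (`followlinks=False`), and it is not limited by Python's recursion depth on very deep trees.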

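```python
import os


# Find every file with the given name under the specified folder (recursively) and delete it
def search_file(dirPath, fileName):
    dirs = os.listdir(dirPath)  # list all files and folders at this level
    for currentFile in dirs:  # iterate over the list
        absPath = os.path.join(dirPath, currentFile)
        if os.path.isdir(absPath):  # a directory: recurse and keep searching inside it
            search_file(absPath, fileName)
        elif currentFile == fileName:
            print(absPath)  # file found: print its path
            os.remove(absPath)  # then delete it
```

Finally, a poem for everyone: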

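The mountains are high, the road is long, the pits are deep,

A great army gallops far and wide.

Who dares to stand with blade drawn and horse reined in?

Only the great army of likers and followers!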