1 Real-Time Stream Computing

1.1 Concepts

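Stream computing usually has strict real-time requirements. The computation is typically defined first, and the logic is then applied to the data as it arrives. To improve efficiency, incremental computation is used instead of full computation wherever possible, rather than first accumulating the data and then processing all of it at once.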
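To make the distinction concrete, here is a minimal JDK-only sketch (the class and method names are hypothetical, not taken from the project) that computes a running total both ways: `fullSum` rescans every event each time a result is needed, while `RunningSum` keeps the previous result and folds in only the new event, which is the incremental style stream processors favor.

```java
import java.util.List;

// Hypothetical demo: full recomputation vs. incremental computation of a running total.
public class IncrementalVsFull {

    // Full computation: re-scan every event seen so far
    static long fullSum(List<Integer> allEvents) {
        long sum = 0;
        for (int e : allEvents) {
            sum += e;
        }
        return sum;
    }

    // Incremental computation: keep the previous result and fold in only the new event
    static final class RunningSum {
        private long sum;

        long add(int event) {
            sum += event;
            return sum;
        }
    }

    public static void main(String[] args) {
        List<Integer> events = List.of(1, 1, -1, 1);
        RunningSum running = new RunningSum();
        long last = 0;
        for (int e : events) {
            last = running.add(e);
        }
        System.out.println(last);            // 2
        System.out.println(fullSum(events)); // 2
    }
}
```

Both give the same answer; the incremental version does constant work per event instead of work proportional to the whole history.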

1.2 Use Cases

- 日志分析

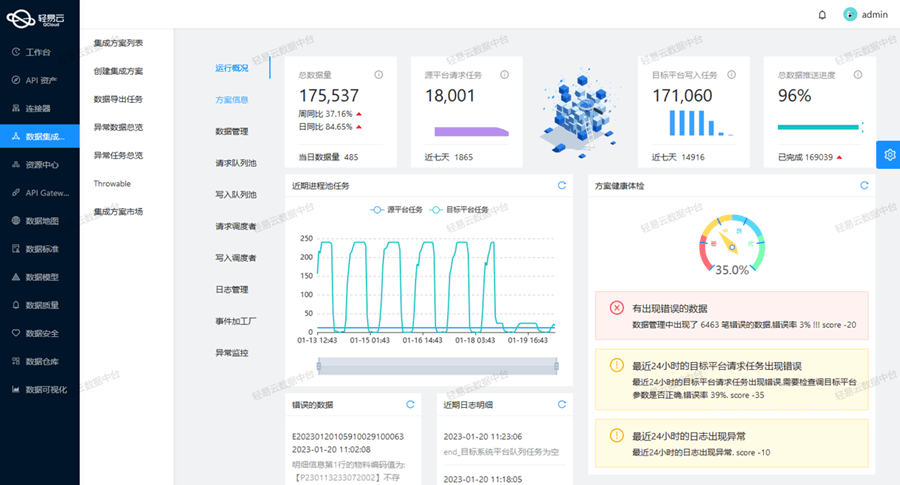

- 大屏看板统计

- 公交实时数据

- 实时文章分值计算

2 Kafka Streams

2.1 Overview

Kafka Streams is a feature introduced in Apache Kafka 0.10. It provides stream processing and analysis of data stored in Kafka.

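Kafka Streams has the following characteristics:

- It is a very simple, lightweight library that can easily be embedded in any Java application and packaged and deployed in any way

- It has no external dependencies other than Kafka itself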

3 Practice: Hot Article Calculation for the App

3.1 Adding Dependencies

1) Add the following to the pom file of the earlier kafka-demo project:

```xml
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka-streams</artifactId>
    <exclusions>
        <exclusion>
            <artifactId>connect-json</artifactId>
            <groupId>org.apache.kafka</groupId>
        </exclusion>
        <exclusion>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka-clients</artifactId>
        </exclusion>
    </exclusions>
</dependency>
```

2) Add the Kafka configuration to the Nacos config of the microservice that sends the messages:

```yaml
spring:
  application:
    name: leadnews-behavior
  kafka:
    bootstrap-servers: 192.168.200.130:9092
    producer:
      retries: 10
      key-serializer: org.apache.kafka.common.serialization.StringSerializer
      value-serializer: org.apache.kafka.common.serialization.StringSerializer
```

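The key settings are the broker address (`bootstrap-servers`), the number of retries for failed sends (`retries`), and the serializers for the message key and value.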

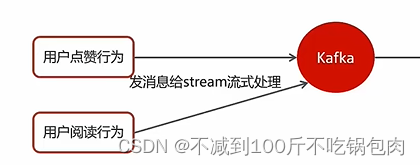

3.2 Sending Like Events to Kafka for Stream Processing

1) Call kafkaTemplate.send to send the data

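```java
// In the like handler: mess is the UpdateArticleMess (type LIKES) built earlier in that method;
// send it to the score topic for aggregation
kafkaTemplate.send(HotArticleConstants.HOT_ARTICLE_SCORE_TOPIC, JSON.toJSONString(mess));

// In the view handler: build a message of type VIEWS with an increment of 1 and send it for aggregation
UpdateArticleMess mess = new UpdateArticleMess();
mess.setArticleId(dto.getArticleId());
mess.setType(UpdateArticleMess.UpdateArticleType.VIEWS);
mess.setAdd(1);
kafkaTemplate.send(HotArticleConstants.HOT_ARTICLE_SCORE_TOPIC, JSON.toJSONString(mess));
```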

2) Define the message wrapper class UpdateArticleMess:

```java
package com.heima.model.mess;

import lombok.Data;

@Data
public class UpdateArticleMess {

    /**
     * Which counter of the article is being changed
     */
    private UpdateArticleType type;

    /**
     * Article ID
     */
    private Long articleId;

    /**
     * Increment to apply; may be positive or negative
     */
    private Integer add;

    public enum UpdateArticleType {
        COLLECTION, COMMENT, LIKES, VIEWS;
    }
}
```

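The enum UpdateArticleType enumerates the kinds of counters that can change (it is an enumeration, not an iteration). Its constant names are reused in the aggregation step, where `valueOf` and `switch` decide which counter to update.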
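A small JDK-only sketch of why the constant names matter, assuming the same four constants (`EnumRoundTripDemo` and its methods are hypothetical): the stream encodes a change as `type.name() + ":" + add`, and the aggregator decodes it with `valueOf`, which accepts only the exact, case-sensitive constant name.

```java
// Hypothetical demo of the "TYPE:delta" encoding used between the map() and aggregate() steps.
public class EnumRoundTripDemo {

    // Same constants as UpdateArticleMess.UpdateArticleType
    enum UpdateArticleType { COLLECTION, COMMENT, LIKES, VIEWS }

    // Encodes a change the same way the stream's map() step does: name() + ":" + delta
    static String encode(UpdateArticleType type, int delta) {
        return type.name() + ":" + delta;
    }

    // valueOf is case-sensitive and throws IllegalArgumentException for unknown names
    static UpdateArticleType decodeType(String encoded) {
        return UpdateArticleType.valueOf(encoded.split(":")[0]);
    }

    static int decodeDelta(String encoded) {
        return Integer.parseInt(encoded.split(":")[1]);
    }

    public static void main(String[] args) {
        String encoded = encode(UpdateArticleType.LIKES, -1);
        System.out.println(encoded);              // LIKES:-1
        System.out.println(decodeType(encoded));  // LIKES
        System.out.println(decodeDelta(encoded)); // -1
        try {
            decodeType("likes:1");
        } catch (IllegalArgumentException e) {
            System.out.println("unknown type: likes");
        }
    }
}
```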

3.3 Receiving Messages in Real Time with Kafka Streams and Aggregating Them

1) Define an entity class that holds the aggregated counts:

```java
package com.heima.model.mess;

import lombok.Data;

@Data
public class ArticleVisitStreamMess {
    /**
     * Article id
     */
    private Long articleId;
    /**
     * Views
     */
    private int view;
    /**
     * Collections (favorites)
     */
    private int collect;
    /**
     * Comments
     */
    private int comment;
    /**
     * Likes
     */
    private int like;
}
```

2) Add the constants to the constants class:

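```java
package com.heima.common.constants;

public class HotArticleConstants {
    // Input topic: raw behavior messages (UpdateArticleMess as JSON)
    public static final String HOT_ARTICLE_SCORE_TOPIC = "hot.article.score.topic";
    // Output topic: aggregated results (ArticleVisitStreamMess as JSON)
    public static final String HOT_ARTICLE_INCR_HANDLE_TOPIC = "hot.article.incr.handle.topic";
}
```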

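Both constants are topic names. The first is the topic that receives the raw behavior messages; the second is the topic the stream writes its aggregated results to. The consumer listens on the second topic and uses the results to update the hot-article data cached in Redis for the article's own channel and for the default (recommended) channel.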

3) Define the stream that receives and aggregates the messages:

```java
package com.heima.article.stream;

import com.alibaba.fastjson.JSON;
import com.heima.common.constants.HotArticleConstants;
import com.heima.model.mess.ArticleVisitStreamMess;
import com.heima.model.mess.UpdateArticleMess;
import lombok.extern.slf4j.Slf4j;
import org.apache.commons.lang3.StringUtils;
import org.apache.kafka.streams.KeyValue;
import org.apache.kafka.streams.StreamsBuilder;
import org.apache.kafka.streams.kstream.*;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;

import java.time.Duration;

@Configuration
@Slf4j
public class HotArticleStreamHandler {

    @Bean
    public KStream<String, String> kStream(StreamsBuilder streamsBuilder) {
        // Receive messages from the input topic
        KStream<String, String> stream = streamsBuilder.stream(HotArticleConstants.HOT_ARTICLE_SCORE_TOPIC);
        // Aggregating stream processing
        stream.map((key, value) -> {
                    UpdateArticleMess mess = JSON.parseObject(value, UpdateArticleMess.class);
                    // Re-key the message: key = article id (e.g. 1234343434), value = "TYPE:delta" (e.g. LIKES:1)
                    return new KeyValue<>(mess.getArticleId().toString(), mess.getType().name() + ":" + mess.getAdd());
                })
                // Group by article id
                .groupBy((key, value) -> key)
                // 10-second time window
                .windowedBy(TimeWindows.of(Duration.ofSeconds(10)))
                // Custom aggregation
                .aggregate(new Initializer<String>() {
                    /**
                     * Initializer: the starting aggregate value of every new window
                     */
                    @Override
                    public String apply() {
                        return "COLLECTION:0,COMMENT:0,LIKES:0,VIEWS:0";
                    }
                }, new Aggregator<String, String, String>() {
                    /**
                     * The actual aggregation: adds the incoming delta and returns the new aggregate value
                     */
                    @Override
                    public String apply(String key, String value, String aggValue) {
                        if (StringUtils.isBlank(value)) {
                            return aggValue;
                        }
                        String[] aggAry = aggValue.split(",");
                        int col = 0, com = 0, lik = 0, vie = 0;
                        // Read the current totals: the initial values, or the totals computed so far in this window
                        for (String agg : aggAry) {
                            String[] split = agg.split(":");
                            switch (UpdateArticleMess.UpdateArticleType.valueOf(split[0])) {
                                case COLLECTION:
                                    col = Integer.parseInt(split[1]);
                                    break;
                                case COMMENT:
                                    com = Integer.parseInt(split[1]);
                                    break;
                                case LIKES:
                                    lik = Integer.parseInt(split[1]);
                                    break;
                                case VIEWS:
                                    vie = Integer.parseInt(split[1]);
                                    break;
                            }
                        }
                        // Accumulate: add the incoming delta to the matching counter
                        String[] valAry = value.split(":");
                        switch (UpdateArticleMess.UpdateArticleType.valueOf(valAry[0])) {
                            case COLLECTION:
                                col += Integer.parseInt(valAry[1]);
                                break;
                            case COMMENT:
                                com += Integer.parseInt(valAry[1]);
                                break;
                            case LIKES:
                                lik += Integer.parseInt(valAry[1]);
                                break;
                            case VIEWS:
                                vie += Integer.parseInt(valAry[1]);
                                break;
                        }
                        String formatStr = String.format("COLLECTION:%d,COMMENT:%d,LIKES:%d,VIEWS:%d", col, com, lik, vie);
                        log.info("Article id: {}", key);
                        log.info("Result for the current time window: {}", formatStr);
                        return formatStr;
                    }
                }, Materialized.as("hot-atricle-stream-count-001"))
                .toStream()
                // Unwrap the windowed key and convert the aggregate into ArticleVisitStreamMess JSON
                .map((key, value) -> new KeyValue<>(key.key().toString(), formatObj(key.key().toString(), value)))
                // Send the result to the output topic
                .to(HotArticleConstants.HOT_ARTICLE_INCR_HANDLE_TOPIC);
        return stream;
    }

    /**
     * Formats the aggregate value of a message into ArticleVisitStreamMess JSON
     * @param articleId article id
     * @param value     aggregate value, e.g. COLLECTION:0,COMMENT:0,LIKES:0,VIEWS:0
     * @return JSON string of ArticleVisitStreamMess
     */
    public String formatObj(String articleId, String value) {
        ArticleVisitStreamMess mess = new ArticleVisitStreamMess();
        mess.setArticleId(Long.valueOf(articleId));
        // COLLECTION:0,COMMENT:0,LIKES:0,VIEWS:0
        String[] valAry = value.split(",");
        for (String val : valAry) {
            String[] split = val.split(":");
            switch (UpdateArticleMess.UpdateArticleType.valueOf(split[0])) {
                case COLLECTION:
                    mess.setCollect(Integer.parseInt(split[1]));
                    break;
                case COMMENT:
                    mess.setComment(Integer.parseInt(split[1]));
                    break;
                case LIKES:
                    mess.setLike(Integer.parseInt(split[1]));
                    break;
                case VIEWS:
                    mess.setView(Integer.parseInt(split[1]));
                    break;
            }
        }
        log.info("Aggregated message after processing: {}", JSON.toJSONString(mess));
        return JSON.toJSONString(mess);
    }
}
```

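The aggregation rules are defined by two `apply` methods: `Initializer.apply` supplies the starting value `COLLECTION:0,COMMENT:0,LIKES:0,VIEWS:0` for each new window, and `Aggregator.apply` adds each incoming delta to the counter of the same type. Finally, `formatObj` converts the aggregate into an `ArticleVisitStreamMess` (which also carries the article id), serializes it as JSON, and the stream sends that JSON to the output topic for the consumer.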
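The following JDK-only sketch simulates what the topology computes, under simplifying assumptions: it is not Kafka Streams code, and the class, the `articleId@windowStart` key format and the sample events are hypothetical. Events are bucketed per article into 10-second tumbling windows aligned to multiples of the window size, each window starts from all-zero counters (the `Initializer`), and each `TYPE:delta` is added to the matching counter (the `Aggregator`).

```java
import java.util.EnumMap;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical JDK-only simulation of groupBy(articleId) + 10 s tumbling windows + aggregate.
// Not Kafka Streams code: no state store, grace period, late events or intermediate emits.
public class WindowedAggregationSketch {

    // Same constants as UpdateArticleMess.UpdateArticleType
    enum UpdateArticleType { COLLECTION, COMMENT, LIKES, VIEWS }

    static final long WINDOW_MS = 10_000L;

    // events[i] is "TYPE:delta" for articleIds[i] at timestampsMs[i]
    static Map<String, EnumMap<UpdateArticleType, Integer>> aggregate(
            long[] timestampsMs, long[] articleIds, String[] events) {
        Map<String, EnumMap<UpdateArticleType, Integer>> windows = new LinkedHashMap<>();
        for (int i = 0; i < events.length; i++) {
            // Tumbling window: each timestamp falls into exactly one window
            long windowStart = timestampsMs[i] - (timestampsMs[i] % WINDOW_MS);
            String key = articleIds[i] + "@" + windowStart;
            // Initializer: every counter starts at 0 in a new window
            EnumMap<UpdateArticleType, Integer> agg = windows.computeIfAbsent(key, k -> {
                EnumMap<UpdateArticleType, Integer> init = new EnumMap<>(UpdateArticleType.class);
                for (UpdateArticleType t : UpdateArticleType.values()) {
                    init.put(t, 0);
                }
                return init;
            });
            // Aggregator: add the delta to the counter of the same type
            String[] parts = events[i].split(":");
            agg.merge(UpdateArticleType.valueOf(parts[0]), Integer.parseInt(parts[1]), Integer::sum);
        }
        return windows;
    }

    // Same string layout as the aggregate value in the stream
    static String format(EnumMap<UpdateArticleType, Integer> agg) {
        return String.format("COLLECTION:%d,COMMENT:%d,LIKES:%d,VIEWS:%d",
                agg.get(UpdateArticleType.COLLECTION), agg.get(UpdateArticleType.COMMENT),
                agg.get(UpdateArticleType.LIKES), agg.get(UpdateArticleType.VIEWS));
    }

    public static void main(String[] args) {
        Map<String, EnumMap<UpdateArticleType, Integer>> result = aggregate(
                new long[]{1_000, 3_000, 9_999, 12_000},
                new long[]{1, 1, 1, 1},
                new String[]{"LIKES:1", "VIEWS:1", "LIKES:1", "VIEWS:1"});
        result.forEach((k, v) -> System.out.println(k + " -> " + format(v)));
        // 1@0 -> COLLECTION:0,COMMENT:0,LIKES:2,VIEWS:1
        // 1@10000 -> COLLECTION:0,COMMENT:0,LIKES:0,VIEWS:1
    }
}
```

The sketch prints only the final total per window. In the real topology, Kafka Streams may forward an updated aggregate downstream as records arrive (record caching reduces but does not eliminate these intermediate updates), so the consumer can receive more than one cumulative result for the same window.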

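3.4 Consumer: Listening for Messages and Updating the Cache

1) Listen on the output topic and hand each aggregated message to the service: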

```java
@Component
@Slf4j
public class ArticleIncrHandleListener {

    @Autowired
    private ApArticleService apArticleService;

    @KafkaListener(topics = HotArticleConstants.HOT_ARTICLE_INCR_HANDLE_TOPIC)
    public void onMessage(String mess) {
        if (StringUtils.isNotBlank(mess)) {
            ArticleVisitStreamMess articleVisitStreamMess = JSON.parseObject(mess, ArticleVisitStreamMess.class);
            apArticleService.updateScore(articleVisitStreamMess);
        }
    }
}
```

2) updateScore updates the score and refreshes MySQL and Redis

Update MySQL:

```java
private ApArticle updateArticle(ArticleVisitStreamMess mess) {
    ApArticle apArticle = getById(mess.getArticleId());
    // Treat a null counter as 0, then add the increment from this time window
    apArticle.setCollection((apArticle.getCollection() == null ? 0 : apArticle.getCollection()) + mess.getCollect());
    apArticle.setComment((apArticle.getComment() == null ? 0 : apArticle.getComment()) + mess.getComment());
    apArticle.setLikes((apArticle.getLikes() == null ? 0 : apArticle.getLikes()) + mess.getLike());
    apArticle.setViews((apArticle.getViews() == null ? 0 : apArticle.getViews()) + mess.getView());
    updateById(apArticle);
    return apArticle;
}
```

Update the hot-article data in Redis for the article's own channel and for the default (recommended) page:

```java
/**
 * Updates the article's score and the hot-article data in the cache
 * @param mess aggregated counts for one article
 */
@Override
public void updateScore(ArticleVisitStreamMess mess) {
    // 1. Update the article's view, like, collection and comment counts
    ApArticle apArticle = updateArticle(mess);
    // 2. Compute the article's score
    Integer score = computeScore(apArticle);
    score = score * 3;
    // 3. Replace the hot data of the article's own channel
    replaceDataToRedis(apArticle, score, ArticleConstants.HOT_ARTICLE_FIRST_PAGE + apArticle.getChannelId());
    // 4. Replace the hot data of the recommended (default) channel
    replaceDataToRedis(apArticle, score, ArticleConstants.HOT_ARTICLE_FIRST_PAGE + ArticleConstants.DEFAULT_TAG);
}

/**
 * Replaces the data and stores it back in Redis
 * @param apArticle the updated article
 * @param score     its new score
 * @param cacheKey  Redis key of the hot-article list
 */
private void replaceDataToRedis(ApArticle apArticle, Integer score, String cacheKey) {
    String articleListStr = cacheService.get(cacheKey);
    if (StringUtils.isNotBlank(articleListStr)) {
        List<HotArticleVo> hotArticleVoList = JSON.parseArray(articleListStr, HotArticleVo.class);
        boolean flag = true;
        // If the article is already cached, only update its score
        for (HotArticleVo hotArticleVo : hotArticleVoList) {
            if (hotArticleVo.getId().equals(apArticle.getId())) {
                hotArticleVo.setScore(score);
                flag = false;
                break;
            }
        }
        // Not cached: if the list is full, compare with the lowest-scoring entry
        // and replace it when the current article scores higher; otherwise just add it
        if (flag) {
            if (hotArticleVoList.size() >= 30) {
                hotArticleVoList = hotArticleVoList.stream().sorted(Comparator.comparing(HotArticleVo::getScore).reversed()).collect(Collectors.toList());
                HotArticleVo lastHot = hotArticleVoList.get(hotArticleVoList.size() - 1);
                if (lastHot.getScore() < score) {
                    hotArticleVoList.remove(lastHot);
                    HotArticleVo hot = new HotArticleVo();
                    BeanUtils.copyProperties(apArticle, hot);
                    hot.setScore(score);
                    hotArticleVoList.add(hot);
                }
            } else {
                HotArticleVo hot = new HotArticleVo();
                BeanUtils.copyProperties(apArticle, hot);
                hot.setScore(score);
                hotArticleVoList.add(hot);
            }
        }
        // Sort by score (descending) and cache the list in Redis
        hotArticleVoList = hotArticleVoList.stream().sorted(Comparator.comparing(HotArticleVo::getScore).reversed()).collect(Collectors.toList());
        cacheService.set(cacheKey, JSON.toJSONString(hotArticleVoList));
    }
}
```
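The list-maintenance rule in `replaceDataToRedis` can be tried in isolation with this JDK-only sketch. `Hot` is a hypothetical stand-in for `HotArticleVo` with only an id and a score, the list is passed in rather than read from Redis, and the capacity is a parameter (30 in the code above).

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical JDK-only version of the "keep the top N hot articles" rule.
public class TopNHotArticles {

    // Stand-in for HotArticleVo with only the fields the rule needs
    static final class Hot {
        final long id;
        int score;

        Hot(long id, int score) {
            this.id = id;
            this.score = score;
        }
    }

    static final Comparator<Hot> BY_SCORE_DESC = Comparator.comparingInt((Hot h) -> h.score).reversed();

    // Returns a list sorted by score (descending) with at most `capacity` entries.
    // An already cached entry is mutated in place when only its score changes.
    static List<Hot> upsert(List<Hot> cached, long articleId, int score, int capacity) {
        List<Hot> list = new ArrayList<>(cached);
        boolean notCached = true;
        // 1. Already cached: only update the score
        for (Hot h : list) {
            if (h.id == articleId) {
                h.score = score;
                notCached = false;
                break;
            }
        }
        if (notCached) {
            if (list.size() >= capacity) {
                // 2. Full: replace the lowest-scoring entry only if the new score is higher
                list.sort(BY_SCORE_DESC);
                Hot lowest = list.get(list.size() - 1);
                if (lowest.score < score) {
                    list.remove(list.size() - 1);
                    list.add(new Hot(articleId, score));
                }
            } else {
                // 3. Room left: just add it
                list.add(new Hot(articleId, score));
            }
        }
        list.sort(BY_SCORE_DESC);
        return list;
    }

    public static void main(String[] args) {
        List<Hot> cache = new ArrayList<>(List.of(new Hot(1, 50), new Hot(2, 30), new Hot(3, 10)));
        cache = upsert(cache, 4, 20, 3); // full and 20 > 10: article 3 is replaced
        cache = upsert(cache, 2, 60, 3); // already cached: only the score changes
        cache = upsert(cache, 5, 5, 3);  // full and 5 < 20: nothing changes
        for (Hot h : cache) {
            System.out.println(h.id + ":" + h.score); // 2:60, then 1:50, then 4:20
        }
    }
}
```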
