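This article draws on:

"Implementing and training MobileNetV3 in PyTorch", 灰信网 (a software development blog aggregator)

"[Neural Networks] (16) MobileNetV3: code reproduction and network walkthrough, with complete TensorFlow code", 代码天地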

1 MobileNetV3 compared with V1 and V2

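(1) MobileNetV1: the main idea is to split a standard convolution into two steps. First, a depthwise convolution filters each input channel with its own kernel and does not sum across channels, so it handles only the spatial (height and width) information. Then a 1×1 pointwise convolution, which handles only cross-channel information, fuses the multi-channel output of the depthwise step. This greatly reduces the parameters and computation spent on channel fusion.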
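To make the saving concrete, here is a minimal sketch that counts the weights of a standard convolution and of its depthwise separable replacement. The layer sizes (3×3 kernel, 32 input channels, 64 output channels) are assumed for illustration and are not taken from any specific network; biases are ignored.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution (bias omitted)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """Weights in a depthwise k x k conv followed by a 1x1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1x1 convolution that mixes the channels
    return depthwise + pointwise


# Illustrative layer sizes (assumed)
k, c_in, c_out = 3, 32, 64
standard = standard_conv_params(k, c_in, c_out)
separable = depthwise_separable_params(k, c_in, c_out)
print(standard, separable, round(separable / standard, 3))
# prints: 18432 2336 0.127
```

The ratio equals 1/c_out + 1/k², so with a 3×3 kernel the separable form needs roughly one eighth to one ninth of the weights once the output has many channels.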

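(2) MobileNetV2: the bottleneck residual block in ResNet first reduces the number of channels and then increases it again. The activation function in each layer discards part of the information, and this loss is most damaging when the activation is applied to the narrow, channel-reduced representation. The MobileNetV2 bottleneck instead first increases the number of channels and then reduces it, which is why it is called an "inverted residual block".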

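(3) MobileNetV3: introduces the h-swish activation function, used alongside ReLU, and adds the SE (squeeze-and-excitation) attention module. The SE module is the most essential part of this version.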

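2 MobileNetV3 overview

2.1 SE attention mechanism

In each block, the feature map is globally average-pooled and then passed through two convolution layers, which produces a vector with one element per channel. Each element is the weight of the corresponding channel; multiplying these weights with the original feature map, channel by channel, gives the new feature map.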
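The reweighting can be traced by hand on a tiny example. The sketch below uses plain Python lists instead of tensors, omits BatchNorm, and uses hand-picked weights (`w_reduce`, `w_expand`) that are purely hypothetical; it only illustrates the squeeze, excitation, and scale steps under those assumptions.

```python
def relu6(v):
    return min(max(v, 0.0), 6.0)


def hard_sigmoid(v):
    # ReLU6(v + 3) / 6, a piecewise-linear stand-in for the sigmoid
    return relu6(v + 3.0) / 6.0


def linear(weights, vec):
    """Multiply a weight matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def se_reweight(feature_map, w_reduce, w_expand):
    """feature_map: list of C channels, each an H x W nested list."""
    # Squeeze: one average value per channel
    squeezed = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
                for ch in feature_map]
    # Excitation: reduce the channel dimension, expand it back, squash to [0, 1]
    hidden = [relu6(v) for v in linear(w_reduce, squeezed)]
    weights = [hard_sigmoid(v) for v in linear(w_expand, hidden)]
    # Scale: multiply every element of a channel by that channel's weight
    scaled = [[[weights[c] * v for v in row] for row in ch]
              for c, ch in enumerate(feature_map)]
    return weights, scaled


# Two 2x2 channels and hand-picked weights (hypothetical values)
fmap = [[[1.0, 1.0], [1.0, 1.0]],
        [[2.0, 4.0], [6.0, 8.0]]]
w_reduce = [[-1.0, 1.0]]       # 2 channels -> 1 hidden unit
w_expand = [[0.75], [-0.75]]   # 1 hidden unit -> 2 channels
weights, scaled = se_reweight(fmap, w_reduce, w_expand)
print(weights)  # prints: [1.0, 0.0]
```

With these weights the first channel is kept unchanged and the second is suppressed entirely, which is the extreme case of what the learned channel weights do.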

2.2 Using different activation functions
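For reference, the two hard activation functions can be written as below. The formulas are read directly from the `hswish` and `hsigmoid` classes in the code of section 3, so they describe this implementation rather than any other variant.

```latex
\[
\mathrm{ReLU6}(x) = \min\bigl(\max(x, 0),\, 6\bigr)
\]
\[
\text{h-sigmoid}(x) = \frac{\mathrm{ReLU6}(x + 3)}{6},
\qquad
\text{h-swish}(x) = x \cdot \frac{\mathrm{ReLU6}(x + 3)}{6}
\]
```

Both are piecewise-linear, so they avoid evaluating an exponential.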

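2.3 Overall flow

The input first passes through a 1×1 convolution that increases the number of channels;

then a depthwise convolution is applied in this higher-dimensional space;

next the SE attention mechanism reweights the feature map;

finally a 1×1 convolution reduces the number of channels (with the linear activation F(x)=x).

When the stride is 1 and the input and output feature maps have the same shape, a residual connection adds the input to the output; when the stride is 2 (the downsampling stage), the channel-reduced feature map is output directly.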

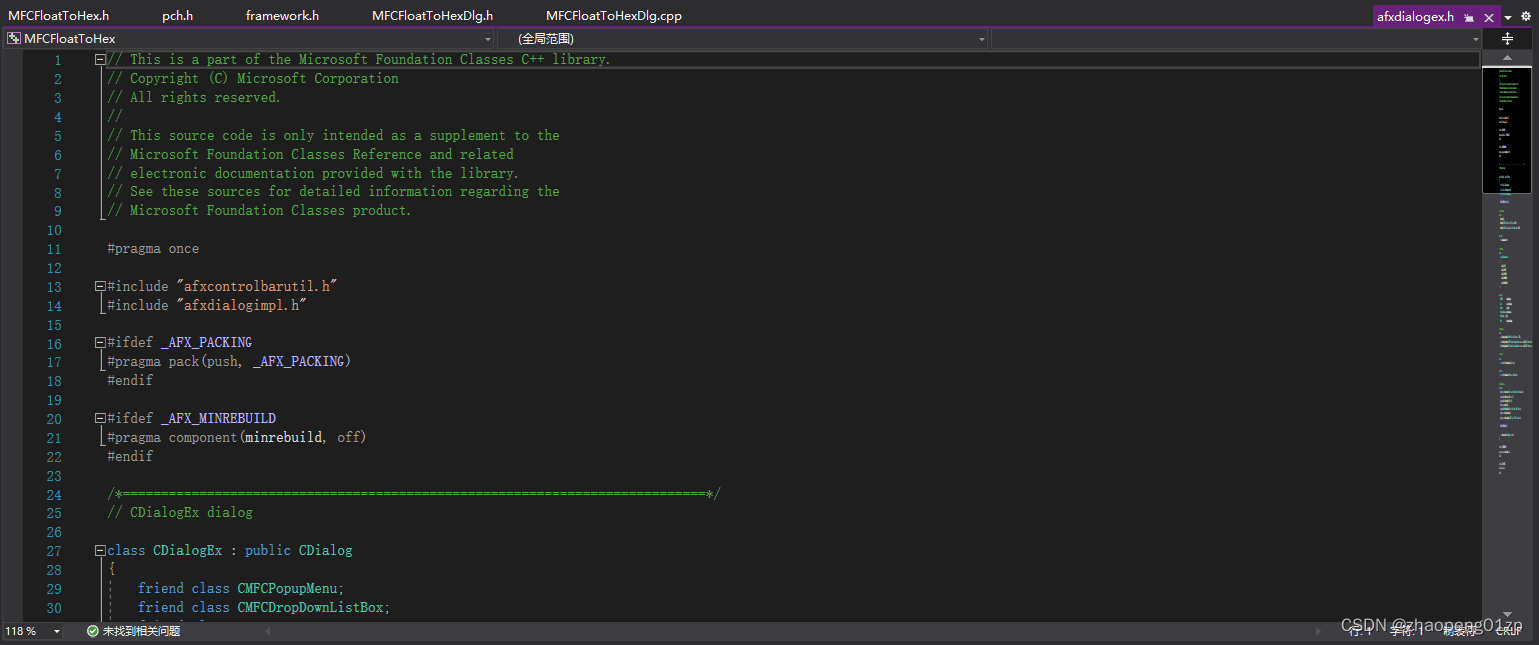
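The stride and residual rule can be checked with a few lines of arithmetic. The sketch below follows the rule as stated in the text (the code in section 3 additionally uses a 1×1 projection shortcut when the stride is 1 but the channel counts differ). The block settings are the first five entries of the `neck` in section 3; the input size of 112 is an assumed value for illustration.

```python
def conv_out_size(size, kernel_size, stride):
    """Output height/width of a convolution with padding = kernel_size // 2."""
    padding = kernel_size // 2
    return (size + 2 * padding - kernel_size) // stride + 1


def uses_residual(stride, in_channels, out_channels):
    """Residual rule from the text: stride 1 and identical input/output shape."""
    return stride == 1 and in_channels == out_channels


# (kernel_size, in_channels, out_channels, stride)
blocks = [(3, 16, 16, 2), (3, 16, 24, 2), (3, 24, 24, 1),
          (5, 24, 40, 2), (5, 40, 40, 1)]
size = 112  # assumed height/width of the feature map entering the first block
trace = []
for k, c_in, c_out, s in blocks:
    size = conv_out_size(size, k, s)
    trace.append((size, uses_residual(s, c_in, c_out)))
    print(f"k={k} stride={s} {c_in}->{c_out}: size={size}, residual={trace[-1][1]}")
```

Only the two stride-1 blocks with matching channel counts keep the spatial size and qualify for the residual connection; each stride-2 block halves the height and width.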

3 Code implementation

import torch
from torch import nn
import torch.nn.functional as F

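class hswish(nn.Module):
    """Hard swish: x * ReLU6(x + 3) / 6."""

    def __init__(self):
        super(hswish, self).__init__()
        self.relu6 = nn.ReLU6(inplace=True)

    def forward(self, x):
        # x + 3 creates a new tensor, so the in-place ReLU6 does not modify x
        out = x * self.relu6(x + 3) / 6
        return out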

class hsigmoid(nn.Module):
    """Hard sigmoid: ReLU6(x + 3) / 6."""

    def __init__(self):
        super(hsigmoid, self).__init__()
        self.relu6 = nn.ReLU6(inplace=True)

    def forward(self, x):
        out = self.relu6(x + 3) / 6
        return out

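class SE(nn.Module):
    """Squeeze-and-excitation (SE) channel attention."""

    def __init__(self, in_channels, reduce=4):
        super(SE, self).__init__()
        self.se = nn.Sequential(
            # Squeeze: global average pooling down to 1x1 per channel
            nn.AdaptiveAvgPool2d(1),
            # Excitation: reduce the channels, then restore them
            nn.Conv2d(in_channels, in_channels // reduce, 1, bias=False),
            nn.BatchNorm2d(in_channels // reduce),
            nn.ReLU6(inplace=True),
            nn.Conv2d(in_channels // reduce, in_channels, 1, bias=False),
            nn.BatchNorm2d(in_channels),
            hsigmoid()
        )

    def forward(self, x):
        # Per-channel weights of shape (N, C, 1, 1), broadcast over H and W
        out = self.se(x)
        out = x * out
        return out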

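class Block(nn.Module):
    """Inverted residual block: expand -> depthwise -> (SE) -> project."""

    def __init__(self, kernel_size, in_channels, expand_size, out_channels, stride, se=False, nolinear='RE'):
        super(Block, self).__init__()
        # An empty Sequential acts as an identity when SE is disabled
        self.se = nn.Sequential()
        if se:
            self.se = SE(expand_size)
        if nolinear == 'RE':
            self.nolinear = nn.ReLU6(inplace=True)
        elif nolinear == 'HS':
            self.nolinear = hswish()
        else:
            raise ValueError("nolinear must be 'RE' or 'HS'")
        self.block = nn.Sequential(
            # 1x1 pointwise convolution: expand the channels
            nn.Conv2d(in_channels, expand_size, 1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(expand_size),
            self.nolinear,
            # Depthwise convolution: groups == channels, one filter per channel
            nn.Conv2d(expand_size, expand_size, kernel_size, stride=stride, padding=kernel_size // 2, groups=expand_size, bias=False),
            nn.BatchNorm2d(expand_size),
            self.se,
            self.nolinear,
            # 1x1 pointwise convolution: project the channels down, no activation (linear)
            nn.Conv2d(expand_size, out_channels, 1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(out_channels)
        )
        # Shortcut for stride 1: identity if the channels match, otherwise a 1x1 projection
        self.shortcut = nn.Sequential()
        if stride == 1 and in_channels != out_channels:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, 1, bias=False),
                nn.BatchNorm2d(out_channels)
            )
        self.stride = stride

    def forward(self, x):
        out = self.block(x)
        # The residual connection is used only when the block does not downsample
        if self.stride == 1:
            out += self.shortcut(x)
        return out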

class MobileNetV3(nn.Module):
    """MobileNetV3 classifier built from the Block defined above."""

    def __init__(self, class_num=10):
        super().__init__()
        # Stem: 3x3 convolution with stride 2
        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 16, 3, stride=2, padding=1, bias=False),
            nn.BatchNorm2d(16),
            hswish()
        )
        # Block(kernel_size, in_channels, expand_size, out_channels, stride, ...)
        self.neck = nn.Sequential(
            Block(3, 16, 16, 16, 2, se=True),
            Block(3, 16, 72, 24, 2),
            Block(3, 24, 88, 24, 1),
            Block(5, 24, 96, 40, 2, se=True, nolinear='HS'),
            Block(5, 40, 240, 40, 1, se=True, nolinear='HS'),
            Block(5, 40, 240, 40, 1, se=True, nolinear='HS'),
            Block(5, 40, 120, 48, 1, se=True, nolinear='HS'),
            Block(5, 48, 144, 48, 1, se=True, nolinear='HS'),
            Block(5, 48, 288, 96, 2, se=True, nolinear='HS'),
            Block(5, 96, 576, 96, 1, se=True, nolinear='HS'),
            Block(5, 96, 576, 96, 1, se=True, nolinear='HS'),
        )
        self.conv2 = nn.Sequential(
            nn.Conv2d(96, 576, 1, bias=False),
            nn.BatchNorm2d(576),
            hswish()
        )
        self.avgpool = nn.AdaptiveAvgPool2d(1)
        self.conv3 = nn.Sequential(
            nn.Conv2d(576, 1280, 1, bias=False),
            nn.BatchNorm2d(1280),
            hswish()
        )
        # 1x1 convolution used as the final classifier layer
        self.conv4 = nn.Conv2d(1280, class_num, 1, bias=False)

    def forward(self, x):
        x = self.conv1(x)
        x = self.neck(x)
        x = self.conv2(x)
        # Global average pooling: (N, 576, H, W) -> (N, 576, 1, 1)
        x = self.avgpool(x)
        x = self.conv3(x)
        x = self.conv4(x)
        # (N, class_num, 1, 1) -> (N, class_num)
        x = x.flatten(1)
        return x

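if __name__ == '__main__':
    model = MobileNetV3(10)
    # batch_size = 1 raises an error in training mode: the BatchNorm layers that
    # follow global average pooling would see only one value per channel
    inputs = torch.randn(2, 3, 516, 516)
    out = model(inputs)
    print(out.shape)

4 MobileNetV3 in CenterNet object detection: practical results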

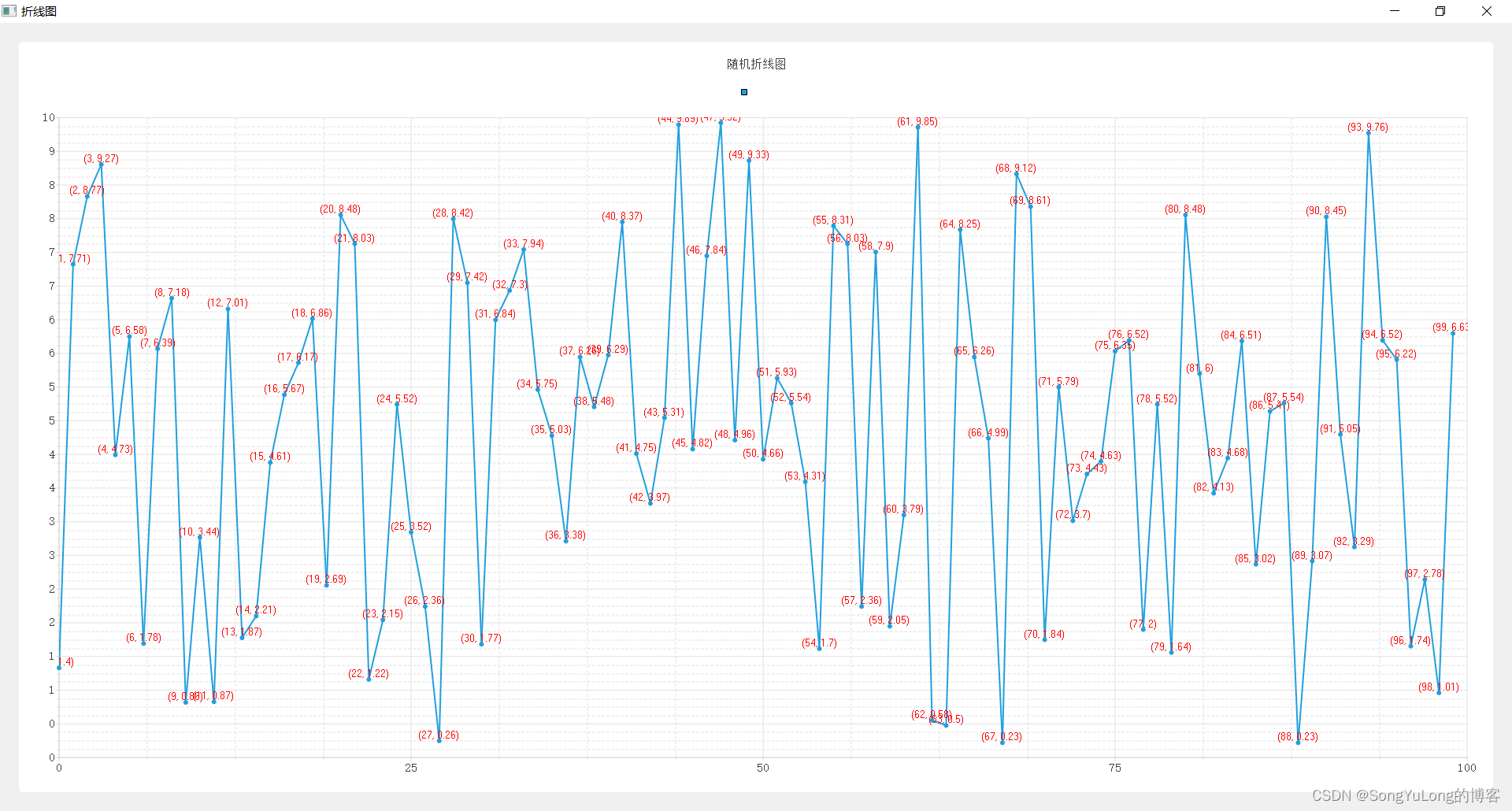

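(1) Training

Training loss: with MobileNetV1 and batch_size=16, the lowest loss reached was about 4.0;

with MobileNetV2 and batch_size=16, the lowest loss reached was below 0.5;

with MobileNetV3 and batch_size=16, the lowest loss reached was about 0.25.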

(2) Object detection results

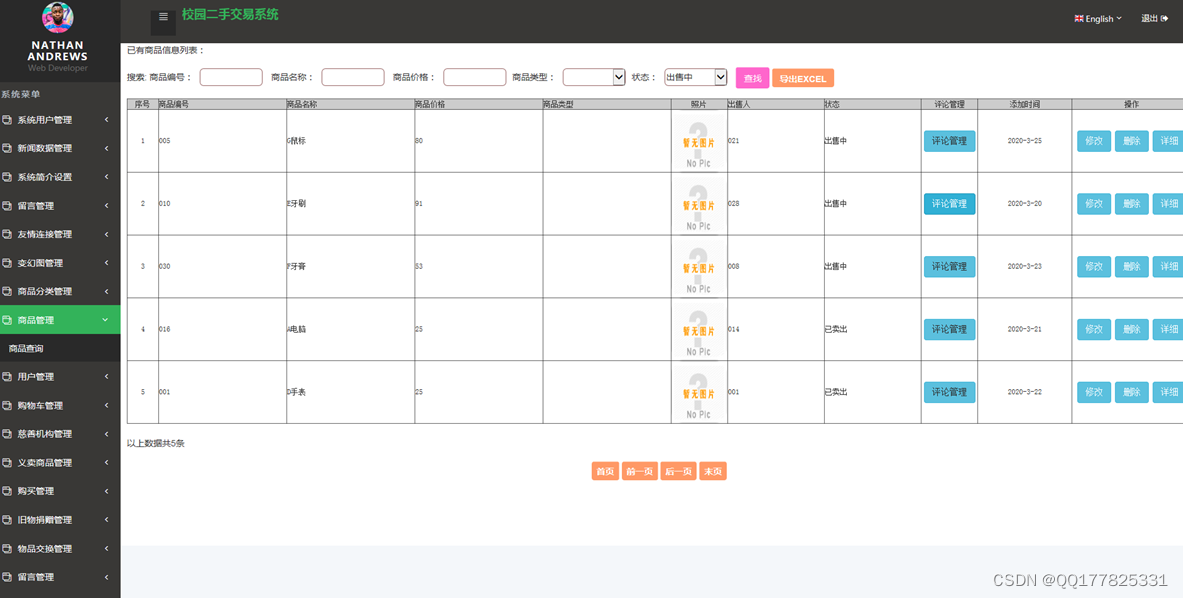

(3) Number of model parameters:

DLASeg: about 20 million

MobileNetV1: about 3.2 million

MobileNetV2: about 4.3 million, total model size 17 MB

MobileNetV3: about 1.66 million, total model size 7 MB
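As a rough sanity check, the reported model sizes match what the parameter counts predict if each parameter is stored as a 32-bit float. That storage format is an assumption, as is reading the sizes as megabytes; the sketch below only does the arithmetic on the counts listed above.

```python
BYTES_PER_PARAM = 4  # assumption: weights stored as 32-bit floats


def model_size_mb(num_params, bytes_per_param=BYTES_PER_PARAM):
    """Approximate weight storage in megabytes (1 MB = 10**6 bytes)."""
    return num_params * bytes_per_param / 1e6


# Approximate parameter counts listed above
param_counts = {
    "DLASeg": 20_000_000,
    "MobileNetV1": 3_200_000,
    "MobileNetV2": 4_300_000,
    "MobileNetV3": 1_660_000,
}
for name, n in param_counts.items():
    print(f"{name}: {model_size_mb(n):.1f} MB")
```

Under this assumption MobileNetV2 comes to about 17.2 MB and MobileNetV3 to about 6.6 MB, consistent with the reported 17 and 7.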

(4) CPU running time

DLASeg: 1.2 s

MobileNetV1: 250 ms

MobileNetV2: 600 ms

MobileNetV3: 120 ms