Prerequisites

Install the following packages: git, kubectl, helm, and helm-docs (see this tutorial).
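Before continuing, it can help to confirm which of these tools are already on the PATH. The following is a minimal sketch; the function name `missing_tools` is made up for this example and is not part of any of the tools.

```shell
# Print each required tool that is not found on PATH (hypothetical helper).
missing_tools() {
  local tool
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      printf '%s\n' "$tool"
    fi
  done
}

# Check the four packages listed in the prerequisites.
missing="$(missing_tools git kubectl helm helm-docs)"
if [ -n "$missing" ]; then
  printf 'Missing tools:\n%s\n' "$missing" >&2
else
  echo "All prerequisites found."
fi
```

If helm is reported as missing, the snap-based steps in the next section install it.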

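Enable snap on CentOS and install helm

Enable snapd

- Add the EPEL repository to your system with the following command:

sudo yum install epel-release

- Install snapd as follows:

sudo yum install snapd

- After installation, enable the systemd unit that manages the main snap communication socket:

sudo systemctl enable --now snapd.socket

- Create the /snap symbolic link, which enables classic snap support (helm is installed with --classic):

sudo ln -s /var/lib/snapd/snap /snap

- Log out and back in, or restart the system, so that snap's paths are updated correctly.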
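The result of the steps above can be checked before installing anything through snap. This is a rough sketch: `link_points_to` is a hypothetical helper, and the `systemctl` call is skipped on systems where systemd is not available.

```shell
# Succeed only if LINK is a symlink whose target is exactly TARGET (hypothetical helper).
link_points_to() {
  local link="$1" target="$2"
  [ -L "$link" ] && [ "$(readlink "$link")" = "$target" ]
}

if link_points_to /snap /var/lib/snapd/snap; then
  echo "/snap -> /var/lib/snapd/snap is in place"
else
  echo "/snap is missing or points elsewhere" >&2
fi

# Report the state of the socket unit where systemd is available.
if command -v systemctl >/dev/null 2>&1; then
  systemctl is-active snapd.socket || true
fi
```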

Install helm

Install helm with the following command:

sudo snap install helm --classic

Install victoria-metrics-alert

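- Add the chart's helm repository with the following commands:

helm repo add vm https://victoriametrics.github.io/helm-charts/

helm repo update

- List the versions of the vm/victoria-metrics-alert chart that are available to install:

helm search repo vm/victoria-metrics-alert -l

- Export the default values of the victoria-metrics-alert chart to the file values.yaml:

helm show values vm/victoria-metrics-alert > values.yaml

Change the values in values.yaml as your environment requires.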
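One way to keep track of what was changed is to keep an untouched copy of the exported defaults and diff against it after editing. The sketch below assumes the copy is taken right after the export, before any edits; the file name `values.default.yaml` and the function `show_value_changes` are made up for this example.

```shell
# Show what changed between the exported defaults and the edited file.
# diff exits with 1 when the files differ, so that status is not treated as an error.
show_value_changes() {
  local defaults="$1" edited="$2"
  diff -u "$defaults" "$edited" || [ $? -eq 1 ]
}

# Keep a pristine copy the first time this runs (assumes values.yaml is still unedited).
if [ -f values.yaml ] && [ ! -f values.default.yaml ]; then
  cp values.yaml values.default.yaml
fi

# On later runs, print the edits made so far.
if [ -f values.default.yaml ] && [ -f values.yaml ]; then
  show_value_changes values.default.yaml values.yaml
fi
```

The first run prints nothing, since the two files are still identical; after editing values.yaml, running it again prints a unified diff of the edits.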

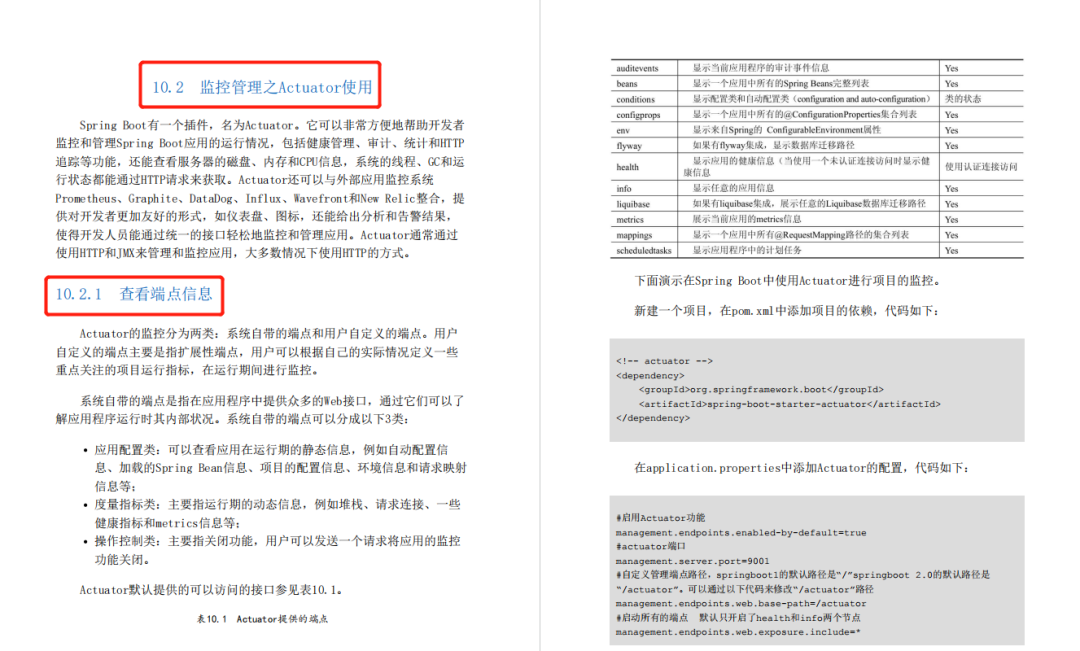

[root@bastion ~]# cat values.yaml

# Default values for victoria-metrics-alert.
# This is a YAML-formatted file.
# Declare variables to be passed into your templates.

serviceAccount:
  # Specifies whether a service account should be created
  create: true
  # Annotations to add to the service account
  annotations: {}
  # The name of the service account to use.
  # If not set and create is true, a name is generated using the fullname template
  name:
  # mount API token to pod directly
  automountToken: true

imagePullSecrets: []

rbac:
  create: true
  pspEnabled: true
  namespaced: false
  extraLabels: {}
  annotations: {}

server:
  name: server
  enabled: true
  image:
    repository: victoriametrics/vmalert
    tag: "" # rewrites Chart.AppVersion
    pullPolicy: IfNotPresent
  nameOverride: ""
  fullnameOverride: ""

  ## See `kubectl explain poddisruptionbudget.spec` for more
  ## ref: https://kubernetes.io/docs/tasks/run-application/configure-pdb/
  podDisruptionBudget:
    enabled: false
    # minAvailable: 1
    # maxUnavailable: 1
    labels: {}

  # -- Additional environment variables (ex.: secret tokens, flags) https://github.com/VictoriaMetrics/VictoriaMetrics#environment-variables
  env:
    []
    # - name: VM_remoteWrite_basicAuth_password
    #   valueFrom:
    #     secretKeyRef:
    #       name: auth_secret
    #       key: password

  replicaCount: 1

  # deployment strategy, set to standard k8s default
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%

  # specifies the minimum number of seconds for which a newly created Pod should be ready without any of its containers crashing/terminating
  # 0 is the standard k8s default
  minReadySeconds: 0

  # vmalert reads metrics from source, next section represents its configuration. It can be any service which supports
  # MetricsQL or PromQL.
  datasource:
    url: "http://192.168.47.9:8481/select/0/prometheus/"
    basicAuth:
      username: ""
      password: ""

  remote:
    write:
      url: ""
    read:
      url: ""

  notifier:
    alertmanager:
      url: "http://192.168.112.68:9093"

  extraArgs:
    envflag.enable: "true"
    envflag.prefix: VM_
    loggerFormat: json

  # Additional hostPath mounts
  extraHostPathMounts:
    []
    # - name: certs-dir
    #   mountPath: /etc/kubernetes/certs
    #   subPath: ""
    #   hostPath: /etc/kubernetes/certs
    #   readOnly: true

  # Extra Volumes for the pod
  extraVolumes:
    []
    # - name: example
    #   configMap:
    #     name: example

  # Extra Volume Mounts for the container
  extraVolumeMounts:
    []
    # - name: example
    #   mountPath: /example

  extraContainers:
    []
    # - name: config-reloader
    #   image: reloader-image

  service:
    annotations: {}
    labels: {}
    clusterIP: ""
    ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
    ##
    externalIPs: []
    loadBalancerIP: ""
    loadBalancerSourceRanges: []
    servicePort: 8880
    type: ClusterIP
    # Ref: https://kubernetes.io/docs/tasks/access-application-cluster/create-external-load-balancer/#preserving-the-client-source-ip
    # externalTrafficPolicy: "local"
    # healthCheckNodePort: 0

  ingress:
    enabled: false
    annotations: {}
    #   kubernetes.io/ingress.class: nginx
    #   kubernetes.io/tls-acme: 'true'
    extraLabels: {}
    hosts: []
    #   - name: vmselect.local
    #     path: /select
    #     port: http
    tls: []
    #   - secretName: vmselect-ingress-tls
    #     hosts:
    #       - vmselect.local
    # For Kubernetes >= 1.18 you should specify the ingress-controller via the field ingressClassName
    # See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#specifying-the-class-of-an-ingress
    # ingressClassName: nginx
    # -- pathType is only for k8s >= 1.18
    pathType: Prefix

  podSecurityContext: {}
  # fsGroup: 2000

  securityContext:
    {}
    # capabilities:
    #   drop:
    #     - ALL
    # readOnlyRootFilesystem: true
    # runAsNonRoot: true
    # runAsUser: 1000

  resources:
    {}
    # We usually recommend not to specify default resources and to leave this as a conscious
    # choice for the user. This also increases chances charts run on environments with little
    # resources, such as Minikube. If you do want to specify resources, uncomment the following
    # lines, adjust them as necessary, and remove the curly braces after 'resources:'.
    # limits:
    #   cpu: 100m
    #   memory: 128Mi
    # requests:
    #   cpu: 100m
    #   memory: 128Mi

  # Annotations to be added to the deployment
  annotations: {}

  # labels to be added to the deployment
  labels: {}

  # Annotations to be added to pod
  podAnnotations: {}

  podLabels: {}

  nodeSelector: {}

  priorityClassName: ""

  tolerations: []

  affinity: {}

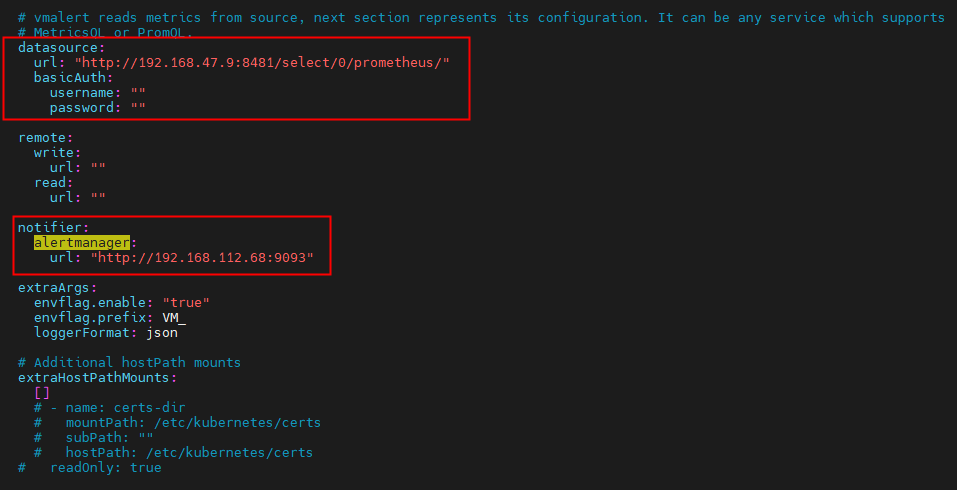

  # vmalert alert rules configuration:
  # use existing configmap if specified
  # otherwise .config values will be used
  configMap: ""
  config:
    alerts:
      groups:
        - name: Host status
          rules:
            - alert: Host status
              expr: up == 0
              for: 1m
              labels:
                status: warning
              annotations:
                summary: "{{$labels.instance}}: server is down"
                description: "{{$labels.instance}}: server is down"

serviceMonitor:
  enabled: false
  extraLabels: {}
  annotations: {}
  # interval: 15s
  # scrapeTimeout: 5s
  # -- Commented. HTTP scheme to use for scraping.
  # scheme: https
  # -- Commented. TLS configuration to use when scraping the endpoint
  # tlsConfig:
  #   insecureSkipVerify: true

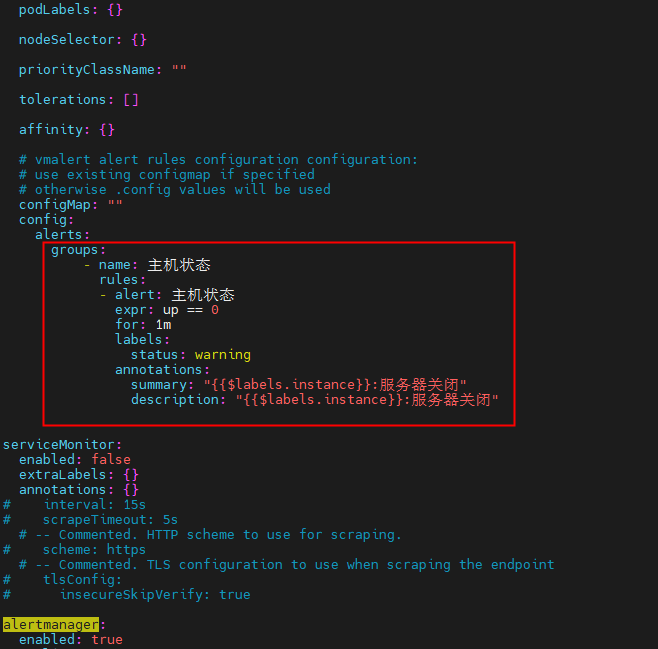

alertmanager:
  enabled: true
  replicaCount: 1
  podMetadata:
    labels: {}
    annotations: {}
  image: prom/alertmanager
  tag: v0.20.0
  retention: 120h
  nodeSelector: {}
  priorityClassName: ""
  resources: {}
  tolerations: []
  imagePullSecrets: []
  podSecurityContext: {}
  extraArgs: {}
  # key: value

  # external URL, that alertmanager will expose to receivers
  baseURL: ""
  # use existing configmap if specified
  # otherwise .config values will be used
  configMap: ""
  config:
    global:
      resolve_timeout: 5m
    route:
      # default receiver
      receiver: ops_notify
      # tag to group by
      group_by: ["alertname"]
      # How long to initially wait to send a notification for a group of alerts
      group_wait: 30s
      # How long to wait before sending a notification about new alerts that are added to a group
      group_interval: 10s
      # How long to wait before sending a notification again if it has already been sent successfully for an alert
      repeat_interval: 1h
    receivers:
      - name: ops_notify
        webhook_configs:
          - url: http://localhost:8060/dingtalk/webhook1/send
            send_resolved: true
  templates: {}
  # alertmanager.tmpl: |-

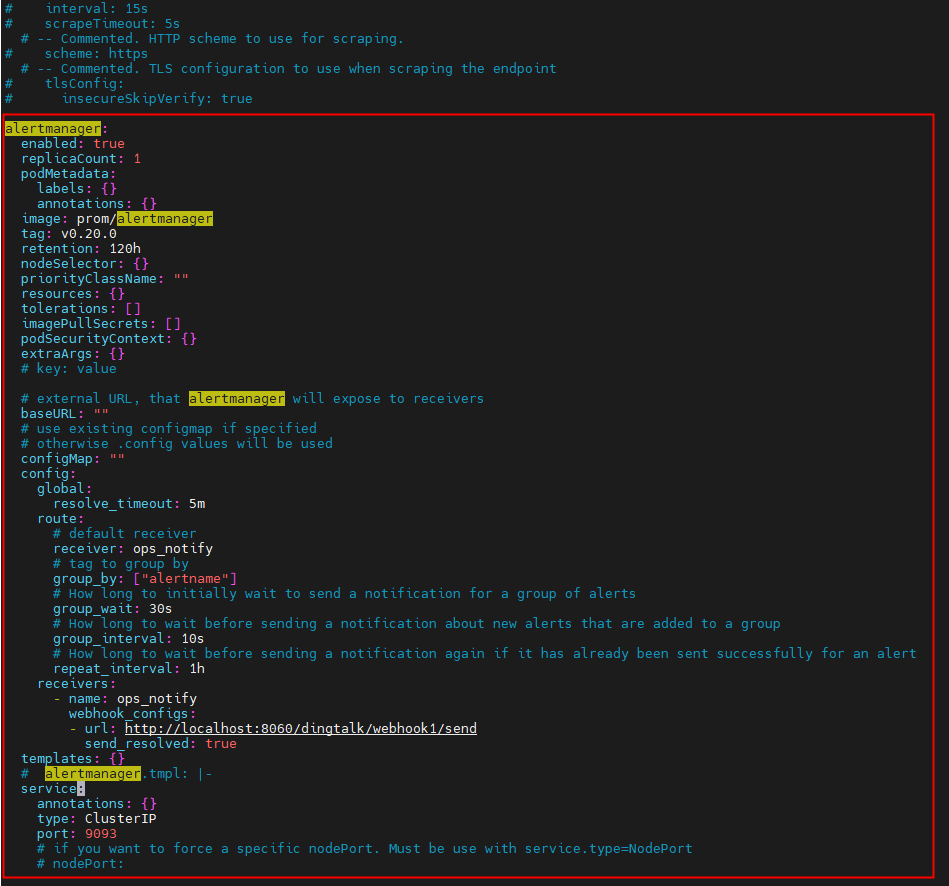

  service:
    annotations: {}
    type: ClusterIP
    port: 9093
    # if you want to force a specific nodePort. Must be used with service.type=NodePort
    # nodePort:

  ingress:
    enabled: false
    annotations: {}
    #   kubernetes.io/ingress.class: nginx
    #   kubernetes.io/tls-acme: 'true'
    extraLabels: {}
    hosts: []
    #   - name: alertmanager.local
    #     path: /
    #     port: web
    tls: []
    #   - secretName: alertmanager-ingress-tls
    #     hosts:
    #       - alertmanager.local
    # For Kubernetes >= 1.18 you should specify the ingress-controller via the field ingressClassName
    # See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#specifying-the-class-of-an-ingress
    # ingressClassName: nginx
    # -- pathType is only for k8s >= 1.18
    pathType: Prefix

  persistentVolume:
    # -- Create/use Persistent Volume Claim for alertmanager component. Empty dir if false
    enabled: false
    # -- Array of access modes. Must match those of existing PV or dynamic provisioner. Ref: http://kubernetes.io/docs/user-guide/persistent-volumes/
    accessModes:
      - ReadWriteOnce
    # -- Persistent volume annotations
    annotations: {}
    # -- StorageClass to use for persistent volume. Requires alertmanager.persistentVolume.enabled: true. If defined, PVC created automatically
    storageClass: ""
    # -- Existing Claim name. If defined, PVC must be created manually before volume will be bound
    existingClaim: ""
    # -- Mount path. Alertmanager data Persistent Volume mount root path.
    mountPath: /data
    # -- Mount subpath
    subPath: ""
    # -- Size of the volume. Better to set the same as resource limit memory property.
    size: 50Mi
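The file above sets several addresses by hand: the datasource URL, the Alertmanager notifier URL, and the webhook receiver URL. A quick way to review them in one place before installing is to list every `url:` setting with its line number. This is a simple text match rather than a YAML parser, and `list_urls` is a hypothetical helper name.

```shell
# Print every "url:" setting in a values file with its line number (hypothetical helper).
# Matches both plain keys ("url: ...") and list items ("- url: ...").
list_urls() {
  grep -nE '^[[:space:]]*(-[[:space:]]+)?url:' "$1"
}

if [ -f values.yaml ]; then
  list_urls values.yaml || echo "no url settings found"
fi
```

Empty values such as the remote read and write URLs show up as `url: ""`, which makes it easy to tell settings left at their defaults from the ones that were edited.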

- Test the installation with the following command:

helm install vmalert vm/victoria-metrics-alert -f values.yaml -n NAMESPACE --debug --dry-run

- Install with the following command:

helm install vmalert vm/victoria-metrics-alert -f values.yaml -n NAMESPACE

- Get the list of pods by running the following command:

kubectl get pods -A | grep 'alert'

- Get the release by running the following command:

helm list -f vmalert -n NAMESPACE

- View the release history of vmalert with the following command:

helm history vmalert -n NAMESPACE

Reference: https://github.com/VictoriaMetrics/helm-charts/tree/master/charts/victoria-metrics-alert

Reference: https://snapcraft.io/install/helm/centos#install
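The install and verification commands from this tutorial can also be collected into a small script that prints them first and only runs them on request. The following is a sketch under assumptions: the namespace `monitoring`, the variable names, and the `run` and `deploy` helpers are all made up for this example, and the pod listing is scoped to the namespace instead of being filtered with grep.

```shell
# Hypothetical defaults; override them in the environment.
NAMESPACE="${NAMESPACE:-monitoring}"
RELEASE="${RELEASE:-vmalert}"
# DRY_RUN=1 (the default here) only prints the commands; DRY_RUN=0 executes them.
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

# Test the install, install for real, then list the pods and the release history.
# Each step runs only if the previous one succeeded.
deploy() {
  run helm install "$RELEASE" vm/victoria-metrics-alert -f values.yaml -n "$NAMESPACE" --dry-run &&
  run helm install "$RELEASE" vm/victoria-metrics-alert -f values.yaml -n "$NAMESPACE" &&
  run kubectl get pods -n "$NAMESPACE" &&
  run helm history "$RELEASE" -n "$NAMESPACE"
}

deploy
```

With the defaults this only prints the four commands. Setting `DRY_RUN=0` and `NAMESPACE` to the target namespace runs them against the cluster.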