WebRTC Audio/Video Calls: Implementing GPUImage Beauty Filter Effects for Video

The screenshot below shows the GPUImage beauty filter running in a WebRTC audio/video call.

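For the earlier article on setting up an ossrs server, see: https://blog.csdn.net/gloryFlow/article/details/132257196

For the earlier article on audio/video calls from iOS through ossrs, see: https://blog.csdn.net/gloryFlow/article/details/132262724

For the earlier article on WebRTC calls showing no picture at high resolutions, see: https://blog.csdn.net/gloryFlow/article/details/132262724

For modifying the bitrate in the SDP, see: https://blog.csdn.net/gloryFlow/article/details/132263021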

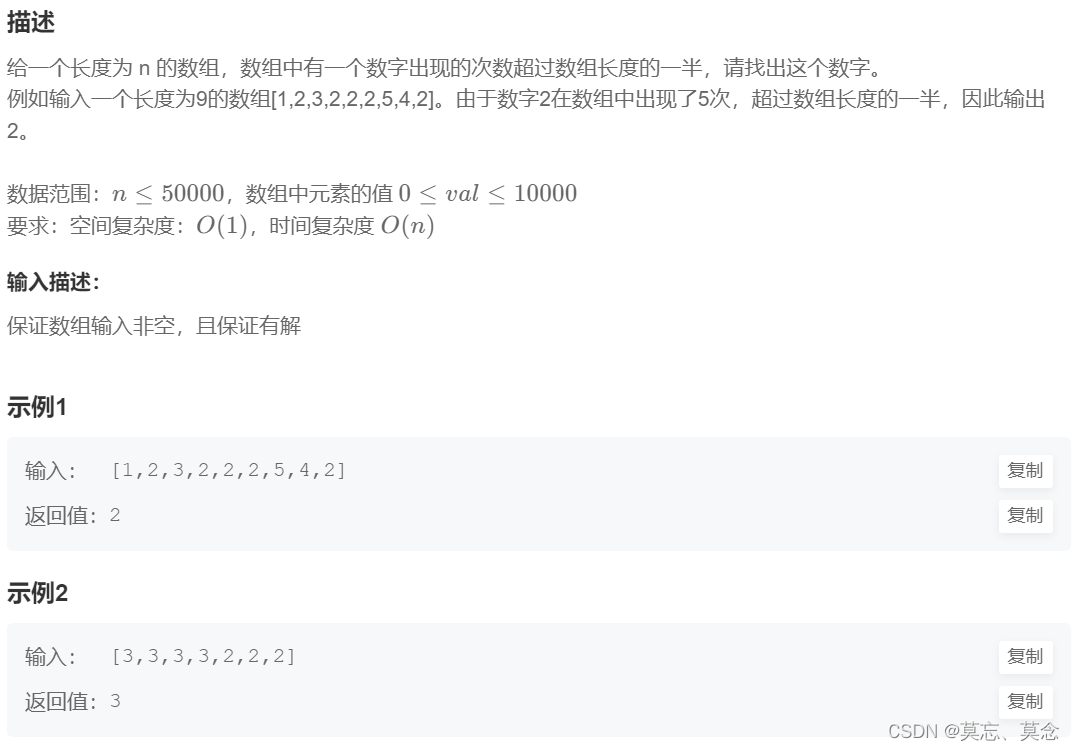

I. What Is GPUImage?

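GPUImage is an open-source image-processing framework for iOS built on OpenGL. It ships with a large number of built-in filters and has a flexible architecture, so all kinds of image-processing features can be built on top of it with little effort.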

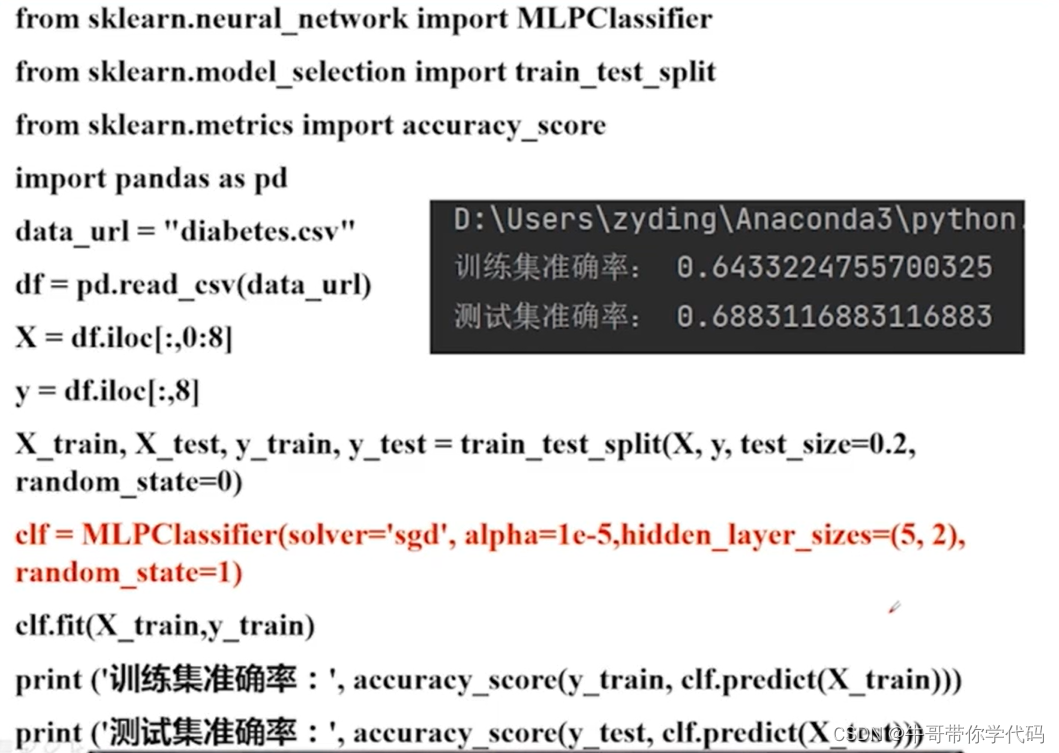

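GPUImage contains many filters. I only use a few pieces of it here, mainly GPUImageLookupFilter and GPUImagePicture.

GPUImageLookupFilter is a filter class dedicated to lookup tables. With it you can apply a color filter to an image directly. The code is as follows: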

    /**
     Applies a lookup-table filter to an image using GPUImageLookupFilter.

     @param image       the original image to filter
     @param lookUpImage the lookup-table image
     @return the processed image, or the original image if lookUpImage is nil
     */
    + (UIImage *)applyLookupFilter:(UIImage *)image lookUpImage:(UIImage *)lookUpImage {
        if (lookUpImage == nil) {
            return image;
        }

        UIImage *inputImage = image;
        UIImage *outputImage = nil;
        GPUImagePicture *stillImageSource = [[GPUImagePicture alloc] initWithImage:inputImage];

        // Create the lookup filter.
        GPUImageLookupFilter *lookUpFilter = [[GPUImageLookupFilter alloc] init];

        // Load the lookup table image (for example the previously saved NewLookupTable.png).
        GPUImagePicture *lookupImg = [[GPUImagePicture alloc] initWithImage:lookUpImage];

        // Texture 0 is the source image, texture 1 is the lookup table.
        [lookupImg addTarget:lookUpFilter atTextureLocation:1];
        [stillImageSource addTarget:lookUpFilter atTextureLocation:0];

        // Request capture before processing so the output framebuffer is kept.
        [lookUpFilter useNextFrameForImageCapture];
        if ([lookupImg processImageWithCompletionHandler:nil] && [stillImageSource processImageWithCompletionHandler:nil]) {
            outputImage = [lookUpFilter imageFromCurrentFramebuffer];
        }
        return outputImage;
    }

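applyLookupFilter needs a lookup image (lookUpImage). The available lookup images are listed below.

I have not put the demo into a git repository yet.

Here I try the effect again using applyLomofiFilter.

Several methods from SDApplyFilter.m: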

    + (UIImage *)applyBeautyFilter:(UIImage *)image {
        GPUImageBeautifyFilter *filter = [[GPUImageBeautifyFilter alloc] init];
        [filter forceProcessingAtSize:image.size];
        GPUImagePicture *pic = [[GPUImagePicture alloc] initWithImage:image];
        [pic addTarget:filter];
        // Request capture before processing so the output framebuffer is kept.
        [filter useNextFrameForImageCapture];
        [pic processImage];
        return [filter imageFromCurrentFramebuffer];
    }

    /**
     Amatorka filter (a Rise-style filter); adjusts portrait skin tones nicely.

     @param image the original image
     @return the processed image
     */
    + (UIImage *)applyAmatorkaFilter:(UIImage *)image
    {
        GPUImageAmatorkaFilter *filter = [[GPUImageAmatorkaFilter alloc] init];
        [filter forceProcessingAtSize:image.size];
        GPUImagePicture *pic = [[GPUImagePicture alloc] initWithImage:image];
        [pic addTarget:filter];
        // Request capture before processing so the output framebuffer is kept.
        [filter useNextFrameForImageCapture];
        [pic processImage];
        return [filter imageFromCurrentFramebuffer];
    }

    /**
     Vintage-style filter; the result feels like old Shanghai.

     @param image the original image
     @return the processed image
     */
    + (UIImage *)applySoftEleganceFilter:(UIImage *)image
    {
        GPUImageSoftEleganceFilter *filter = [[GPUImageSoftEleganceFilter alloc] init];
        [filter forceProcessingAtSize:image.size];
        GPUImagePicture *pic = [[GPUImagePicture alloc] initWithImage:image];
        [pic addTarget:filter];
        // Request capture before processing so the output framebuffer is kept.
        [filter useNextFrameForImageCapture];
        [pic processImage];
        return [filter imageFromCurrentFramebuffer];
    }

    /**
     Turns the image black and white, with heavy noise.

     @param image the original image
     @return the processed image
     */
    + (UIImage *)applyLocalBinaryPatternFilter:(UIImage *)image
    {
        GPUImageLocalBinaryPatternFilter *filter = [[GPUImageLocalBinaryPatternFilter alloc] init];
        [filter forceProcessingAtSize:image.size];
        GPUImagePicture *pic = [[GPUImagePicture alloc] initWithImage:image];
        [pic addTarget:filter];
        // Request capture before processing so the output framebuffer is kept.
        [filter useNextFrameForImageCapture];
        [pic processImage];
        return [filter imageFromCurrentFramebuffer];
    }

    /**
     Monochrome filter.

     @param image the original image
     @return the processed image
     */
    + (UIImage *)applyMonochromeFilter:(UIImage *)image
    {
        GPUImageMonochromeFilter *filter = [[GPUImageMonochromeFilter alloc] init];
        [filter forceProcessingAtSize:image.size];
        GPUImagePicture *pic = [[GPUImagePicture alloc] initWithImage:image];
        [pic addTarget:filter];
        // Request capture before processing so the output framebuffer is kept.
        [filter useNextFrameForImageCapture];
        [pic processImage];
        return [filter imageFromCurrentFramebuffer];
    }
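As a rough illustration of what a monochrome-style filter computes for each pixel, the sketch below reduces a color to a single brightness value in plain C. The weights are the ones I believe GPUImage's grayscale shaders use, so treat them as an assumption. The function name `luminance` is hypothetical. The real GPUImageMonochromeFilter additionally tints the result with a filter color, which this sketch leaves out.

```c
#include <assert.h>

/* Luminance weights, assumed to match GPUImage's grayscale shaders. */
static const float kLumaR = 0.2125f;
static const float kLumaG = 0.7154f;
static const float kLumaB = 0.0721f;

/* Weighted brightness of one pixel; each channel is expected in 0.0-1.0. */
static float luminance(float r, float g, float b) {
    assert(r >= 0.0f && r <= 1.0f);
    assert(g >= 0.0f && g <= 1.0f);
    assert(b >= 0.0f && b <= 1.0f);
    return kLumaR * r + kLumaG * g + kLumaB * b;
}
```

Green carries the largest weight because the eye is most sensitive to it, so a pure green pixel comes out brighter than a pure red or pure blue one.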

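The result with GPUImageSoftEleganceFilter (the vintage filter that feels like old Shanghai) looks like this:

The result with GPUImageLocalBinaryPatternFilter (black and white) looks like this:

The result with GPUImageMonochromeFilter looks like this: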

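II. Applying Video Filters in a WebRTC Audio/Video Call

For the earlier article on audio/video calls from iOS through ossrs, see: https://blog.csdn.net/gloryFlow/article/details/132262724

That article already contains the complete code, so here I only make a few adjustments.

Set the delegate of RTCCameraVideoCapturer to self, so that captured frames pass through our own delegate method first: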

    - (RTCVideoTrack *)createVideoTrack {
        RTCVideoSource *videoSource = [self.factory videoSource];
        self.localVideoSource = videoSource;

        // On the simulator, capture from a file instead of the camera.
        if (TARGET_IPHONE_SIMULATOR) {
            if (@available(iOS 10, *)) {
                self.videoCapturer = [[RTCFileVideoCapturer alloc] initWithDelegate:self];
            } else {
                // Fallback on earlier versions
            }
        } else {
            self.videoCapturer = [[RTCCameraVideoCapturer alloc] initWithDelegate:self];
        }

        RTCVideoTrack *videoTrack = [self.factory videoTrackWithSource:videoSource trackId:@"video0"];
        return videoTrack;
    }

Implement the RTCVideoCapturerDelegate method capturer:didCaptureVideoFrame:

    #pragma mark - RTCVideoCapturerDelegate

    - (void)capturer:(RTCVideoCapturer *)capturer didCaptureVideoFrame:(RTCVideoFrame *)frame {
        // DebugLog(@"capturer:%@ didCaptureVideoFrame:%@", capturer, frame);

        // Run the frame through the SDWebRTCBufferFliter filter.
        RTCVideoFrame *aFilterVideoFrame;
        if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didCaptureVideoFrame:)]) {
            aFilterVideoFrame = [self.delegate webRTCClient:self didCaptureVideoFrame:frame];
        }

        // Core Foundation (C) objects must be released manually, otherwise memory balloons:
        // CVPixelBufferRelease(_buffer)
        // Getting the pixel buffer:
        // ((RTCCVPixelBuffer*)frame.buffer).pixelBuffer

        // Fall back to the original frame if no filtered frame was produced.
        if (!aFilterVideoFrame) {
            aFilterVideoFrame = frame;
        }

        // Forward the filtered frame (not the original) to the video source.
        [self.localVideoSource capturer:capturer didCaptureVideoFrame:aFilterVideoFrame];
    }

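After that, SDWebRTCBufferFliter is called to apply the filter effect.

It renders into ((RTCCVPixelBuffer *)frame.buffer).pixelBuffer, using EAGLContext and CIContext.

EAGLContext is the OpenGL drawing handle, or context. Before drawing a view, you have to specify the context that the drawing will use.

CIContext is used to render a CIImage: it applies the filter chain attached to the CIImage to the original image data. Here I need to convert the UIImage into a CIImage.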
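The key point is that the filtered result is written back into the same pixel buffer the frame already owns. In the app this is done by Core Image, but the idea can be sketched on the CPU in plain C. The sketch assumes a 32-bit BGRA buffer (camera frames are often in a YUV format instead), and the function name `grayscale_bgra_in_place` is hypothetical. With a real CVPixelBuffer, the base address and row stride would come from CVPixelBufferGetBaseAddress and CVPixelBufferGetBytesPerRow after locking the buffer.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Convert a 32-bit BGRA image to grayscale in place.
   bytesPerRow may be larger than width * 4 because rows can be padded. */
static void grayscale_bgra_in_place(uint8_t *base, size_t width, size_t height,
                                    size_t bytesPerRow) {
    assert(base != NULL);
    assert(bytesPerRow >= width * 4);

    for (size_t y = 0; y < height; y++) {
        uint8_t *row = base + y * bytesPerRow;  /* start of row y, padding skipped */
        for (size_t x = 0; x < width; x++) {
            uint8_t *px = row + x * 4;          /* px[0]=B, px[1]=G, px[2]=R, px[3]=A */
            /* Integer luminance approximation; the weights sum to 256. */
            uint8_t gray = (uint8_t)((54 * px[2] + 183 * px[1] + 19 * px[0]) >> 8);
            px[0] = gray;
            px[1] = gray;
            px[2] = gray;                       /* alpha (px[3]) is left untouched */
        }
    }
}
```

Walking the buffer row by row with bytesPerRow, rather than assuming rows are tightly packed, matters because padded rows are common in pixel buffers. Because the pixels are modified in place, no second buffer has to be allocated per frame.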

The actual implementation is as follows:

SDWebRTCBufferFliter.h

    #import <Foundation/Foundation.h>
    #import "WebRTCClient.h"

    @interface SDWebRTCBufferFliter : NSObject

    - (RTCVideoFrame *)webRTCClient:(WebRTCClient *)client didCaptureVideoFrame:(RTCVideoFrame *)frame;

    @end

SDWebRTCBufferFliter.m

    #import "SDWebRTCBufferFliter.h"
    #import <UIKit/UIKit.h>
    #import <CoreImage/CoreImage.h>
    #import <OpenGLES/EAGL.h>
    #import <VideoToolbox/VideoToolbox.h>
    #import "SDApplyFilter.h"

    @interface SDWebRTCBufferFliter ()

    // Rendering contexts and the lookup image used by the filters.
    @property (nonatomic, strong) EAGLContext *eaglContext;
    @property (nonatomic, strong) CIContext *coreImageContext;
    @property (nonatomic, strong) UIImage *lookUpImage;

    @end

    @implementation SDWebRTCBufferFliter

    - (instancetype)init
    {
        self = [super init];
        if (self) {
            // Create the contexts once and reuse them for every frame.
            self.eaglContext = [[EAGLContext alloc] initWithAPI:kEAGLRenderingAPIOpenGLES3];
            self.coreImageContext = [CIContext contextWithEAGLContext:self.eaglContext options:nil];
            self.lookUpImage = [UIImage imageNamed:@"lookup_jiari"];
        }
        return self;
    }

    - (RTCVideoFrame *)webRTCClient:(WebRTCClient *)client didCaptureVideoFrame:(RTCVideoFrame *)frame {
        // Only CVPixelBuffer-backed frames can be filtered; pass anything else through.
        if (![frame.buffer isKindOfClass:[RTCCVPixelBuffer class]]) {
            return frame;
        }
        CVPixelBufferRef pixelBufferRef = ((RTCCVPixelBuffer *)frame.buffer).pixelBuffer;
        if (!pixelBufferRef) {
            return frame;
        }

        // CVPixelBuffer -> CIImage -> CGImage -> UIImage, the input the filters expect.
        CIImage *inputImage = [CIImage imageWithCVPixelBuffer:pixelBufferRef];
        CGImageRef imgRef = [_coreImageContext createCGImage:inputImage fromRect:[inputImage extent]];
        UIImage *fromImage = [UIImage imageWithCGImage:imgRef];

        UIImage *toImage = [SDApplyFilter applyMonochromeFilter:fromImage];
        // Alternatively, use the lookup-table filter:
        // toImage = [SDApplyFilter applyLookupFilter:fromImage lookUpImage:self.lookUpImage];
        if (toImage == nil) {
            // Fall back to the unfiltered image if the filter produced nothing.
            toImage = fromImage;
        }

        // Render the filtered image back into the frame's own pixel buffer.
        CIImage *ciimage = [CIImage imageWithCGImage:toImage.CGImage];
        [_coreImageContext render:ciimage toCVPixelBuffer:pixelBufferRef];

        // createCGImage: returns an owned reference, so it must be released.
        CGImageRelease(imgRef);

        // Wrap the buffer in a new frame, keeping the original rotation and timestamp.
        RTCCVPixelBuffer *rtcPixelBuffer = [[RTCCVPixelBuffer alloc] initWithPixelBuffer:pixelBufferRef];
        RTCVideoFrame *filteredFrame = [[RTCVideoFrame alloc] initWithBuffer:rtcPixelBuffer
                                                                     rotation:frame.rotation
                                                                  timeStampNs:frame.timeStampNs];
        return filteredFrame;
    }

    @end

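At this point you can see the GPUImage beauty filter effect in a WebRTC audio/video call.

III. Summary

This article implemented GPUImage beauty filter effects for video in WebRTC audio/video calls. The main idea is to process the CVPixelBufferRef of each video frame with GPUImage, wrap the processed CVPixelBufferRef in an RTCVideoFrame, and pass it on by calling the didCaptureVideoFrame method implemented by localVideoSource. There is a lot of material here and some descriptions may be inaccurate; please bear with me.

Article URL: https://blog.csdn.net/gloryFlow/article/details/132265842

Study notes; keep improving every day.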