1. What is an RNN

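A recurrent neural network (RNN) is a deep learning model that takes sequence data as input, and it is one of the most commonly used models in NLP. Its structure can be described with the following symbols:

x is the input, h is the hidden unit, o is the output, L is the loss function, and y is the label from the training set.

The superscript t on each of these symbols denotes the state at time step t. Note that the hidden unit h at time t is determined not only by the input at that moment but also by the time steps before t. V, W, and U are weights, and connections of the same type share the same weights at every time step.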

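With that in mind, the forward propagation algorithm is quite simple. At time step t, the hidden state is:

$$h^{(t)} = \phi\left(U x^{(t)} + W h^{(t-1)} + b\right)$$

where $\phi$ is the activation function, usually tanh, and b is the bias. U is the input-to-hidden weight and W is the hidden-to-hidden weight.

The output at time step t is even simpler:

$$o^{(t)} = V h^{(t)} + c$$

where V is the hidden-to-output weight and c is the output bias.

The final prediction of the model is:

$$\hat{y}^{(t)} = \sigma\left(o^{(t)}\right)$$

where $\sigma$ is the activation function. RNNs are usually used for classification, so softmax is the typical choice here.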
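The forward pass described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: it takes the usual formulation h = tanh(U x + W h_prev + b), o = V h + c, y_hat = softmax(o), starts from a zero hidden state, and uses random weights. The function names `softmax` and `rnn_forward`, the output bias `c`, and the layer sizes are all chosen here for illustration and do not come from the original text.

```python
import numpy as np


def softmax(z):
    """Numerically stable softmax over a 1-D vector."""
    e = np.exp(z - np.max(z))
    return e / e.sum()


def rnn_forward(xs, U, W, V, b, c):
    """Run a vanilla RNN over a sequence xs of shape (timesteps, n_in)."""
    h = np.zeros(W.shape[0])  # assumed initial hidden state h^(0) = 0
    hidden_states, predictions = [], []
    for x in xs:
        # The same U, W, V, b, c are reused at every time step (weight sharing)
        h = np.tanh(U @ x + W @ h + b)
        o = V @ h + c
        hidden_states.append(h)
        predictions.append(softmax(o))
    return np.array(hidden_states), np.array(predictions)


# Illustrative sizes: 2 input features, 3 hidden units, 4 output classes
rng = np.random.default_rng(0)
n_in, n_hidden, n_out = 2, 3, 4
U = rng.normal(size=(n_hidden, n_in))
W = rng.normal(size=(n_hidden, n_hidden))
V = rng.normal(size=(n_out, n_hidden))
b = np.zeros(n_hidden)
c = np.zeros(n_out)

xs = rng.normal(size=(5, n_in))  # one sequence of 5 time steps
hs, ys = rnn_forward(xs, U, W, V, b, c)
print(hs.shape, ys.shape)
```

Each row of `ys` is a probability distribution over the classes, and each row of `hs` stays within [-1, 1] because of tanh. Note that this sketch multiplies weights on the left (U @ x), whereas Keras stores its kernels so that the input multiplies on the left (x @ kernel); the two conventions differ only by a transpose.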

2. Experiment code

2.1. Building a model with just one RNN layer and one Dense layer

```python
from keras.models import Sequential
from keras.layers import SimpleRNN, Dense


def simple_rnn_layer():
    # Build a model with one SimpleRNN layer followed by one Dense layer
    model = Sequential()
    # 3 units in the RNN layer, input_shape=(timesteps, features)
    model.add(SimpleRNN(units=3, input_shape=(3, 2)))
    model.add(Dense(1))  # Output layer with one neuron
    # Print the model summary (summary() prints directly and returns None)
    model.summary()


if __name__ == '__main__':
    simple_rnn_layer()
```

Output:

```text
Model: "sequential"
_________________________________________________________________
 Layer (type)                Output Shape              Param #
=================================================================
 simple_rnn (SimpleRNN)      (None, 3)                 18
 dense (Dense)               (None, 1)                 4
=================================================================
Total params: 22
Trainable params: 22
Non-trainable params: 0
_________________________________________________________________
```

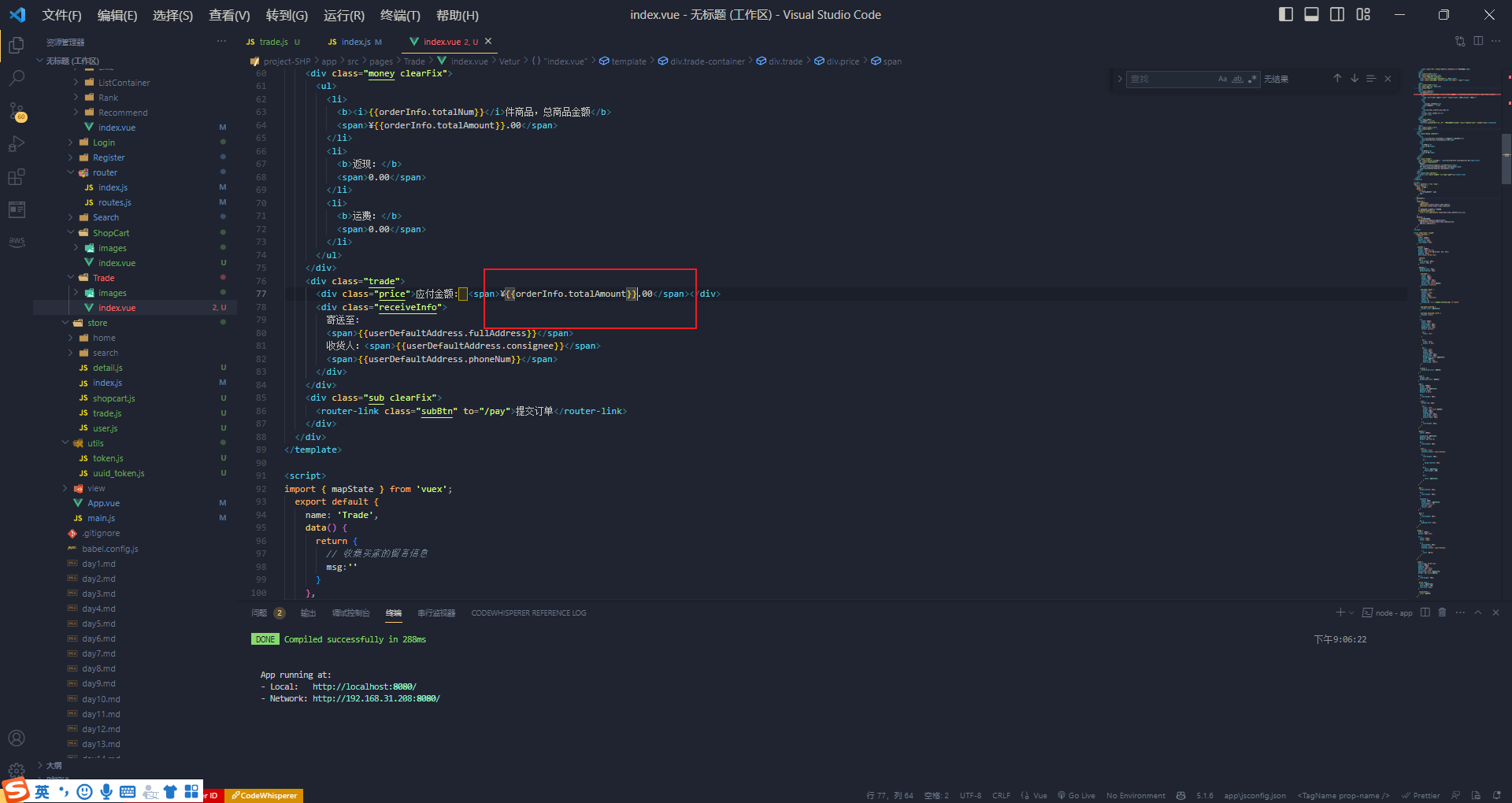
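The parameter counts in the summary can be checked by hand. A SimpleRNN layer holds an input kernel of shape (features, units), a recurrent kernel of shape (units, units), and a bias of shape (units,); a Dense layer holds a kernel of shape (inputs, outputs) plus a bias of shape (outputs,). The helper names `simple_rnn_params` and `dense_params` below are hypothetical, written only for this check.

```python
def simple_rnn_params(units, features):
    """Input kernel + recurrent kernel + bias of a SimpleRNN layer."""
    return features * units + units * units + units


def dense_params(inputs, outputs):
    """Kernel + bias of a Dense layer."""
    return inputs * outputs + outputs


rnn = simple_rnn_params(units=3, features=2)  # 2*3 + 3*3 + 3
dense = dense_params(inputs=3, outputs=1)     # 3*1 + 1
print(rnn, dense, rnn + dense)  # prints: 18 4 22
```

These values agree with the 18, 4, and 22 reported in the summary. The number of time steps does not appear in the formula, because the same weights are reused at every step.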

2.2. Verifying the RNN logic

Next, write code to verify this computation and check whether the result is the same.

```python
import numpy as np
from keras.layers import SimpleRNN


def change_weight():
    # Create a SimpleRNN layer with no activation, so the arithmetic is easy to check by hand
    rnn_layer = SimpleRNN(units=3, input_shape=(3, 2), activation=None, return_sequences=True)

    # Simulated input data: a batch of 3 sequences, each with 3 timesteps and 2 features
    input_data = np.array([
        [[1.0, 2], [2, 3], [3, 4]],
        [[5, 6], [6, 7], [7, 8]],
        [[9, 10], [10, 11], [11, 12]]
    ])

    # Pass the input data through the layer to initialize the weights and biases
    _ = rnn_layer(input_data)

    # Access the weights and biases of the RNN layer
    kernel, recurrent_kernel, biases = rnn_layer.get_weights()

    # Print the initial weights and biases
    print("recurrent_kernel:", recurrent_kernel)  # shape (3, 3)
    print('kernel:', kernel)  # shape (2, 3)
    print('bias: ', biases)  # shape (3,)

    # Overwrite the weights with hand-picked values
    kernel = np.array([[1, 0, 2], [2, 1, 3]])
    recurrent_kernel = np.array([[1, 2, 1.0], [1, 0, 1], [0, 1, 0]])
    biases = np.array([0, 0, 1.0])
    rnn_layer.set_weights([kernel, recurrent_kernel, biases])
    print(rnn_layer.get_weights())

    # Run a single test sequence through the layer
    test_data = np.array([
        [[1.0, 3], [1, 1], [2, 3]]
    ])
    output = rnn_layer(test_data)
    print(output)


if __name__ == '__main__':
    change_weight()
```

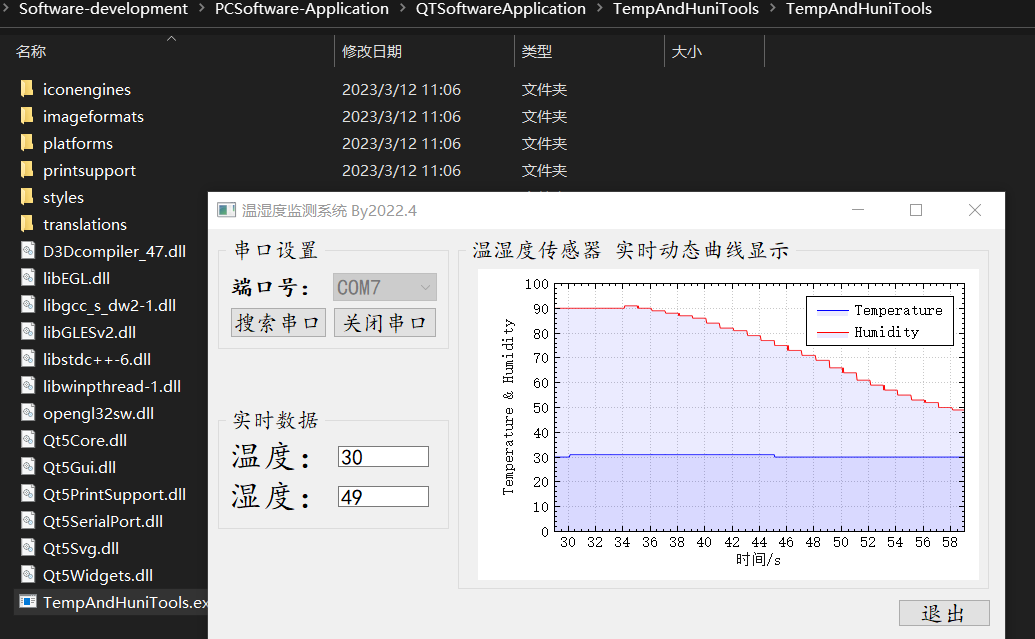
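The same recurrence can also be replayed without Keras. With `activation=None`, each step reduces to h_t = x_t · kernel + h_{t-1} · recurrent_kernel + bias, starting from a zero state. The sketch below assumes this is how SimpleRNN combines its three weight arrays, and it reuses the hand-picked weights and the test sequence from the code above; the names `sequence` and `states` are illustrative.

```python
import numpy as np

# Hand-picked weights, identical to those passed to set_weights()
kernel = np.array([[1.0, 0, 2], [2, 1, 3]])                        # (features, units)
recurrent_kernel = np.array([[1.0, 2, 1], [1, 0, 1], [0, 1, 0]])   # (units, units)
bias = np.array([0.0, 0, 1])                                       # (units,)

# The single test sequence: 3 timesteps, 2 features
sequence = np.array([[1.0, 3], [1, 1], [2, 3]])

h = np.zeros(3)  # initial hidden state
states = []
for x_t in sequence:
    # No activation: the new state is a plain affine combination
    h = x_t @ kernel + h @ recurrent_kernel + bias
    states.append(h)

states = np.array(states)
print(states)
```

Step by step, the hidden states are [7, 3, 12], then [13, 27, 16], then [48, 45, 54].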

The output of the Keras code is shown below. As you can see, the result agrees with my hand calculation.

```text
recurrent_kernel: [[ 0.06973135  0.40464386  0.9118119 ]
 [ 0.6186313  -0.7345941   0.27868783]
 [ 0.7825809   0.5446422  -0.3015495 ]]
kernel: [[-0.48868906  0.52718353 -0.08321357]
 [-1.0569452  -0.9872779   0.72809434]]
bias:  [0. 0. 0.]
[array([[1., 0., 2.],
       [2., 1., 3.]], dtype=float32), array([[1., 2., 1.],
       [1., 0., 1.],
       [0., 1., 0.]], dtype=float32), array([0., 0., 1.], dtype=float32)]
tf.Tensor(
[[[ 7.  3. 12.]
  [13. 27. 16.]
  [48. 45. 54.]]], shape=(1, 3, 3), dtype=float32)
```

2.3. Implementing a simple example in code

```python
import keras
import numpy as np
from keras.models import Sequential
from keras.layers import SimpleRNN, Dense

# Sample sequential data:
# each sequence has three timesteps, and each timestep has two features
data = np.array([
    [[1, 2], [2, 3], [3, 4]],
    [[5, 6], [6, 7], [7, 8]],
    [[9, 10], [10, 11], [11, 12]]
])
print('data.shape= ', data.shape)

# Define the RNN model
model = Sequential()
# 4 units in the RNN layer, input_shape=(timesteps, features)
model.add(SimpleRNN(units=4, input_shape=(3, 2), name="simpleRNN"))
model.add(Dense(1, name="output"))  # Output layer with one neuron

# Compile the model
model.compile(loss='mse', optimizer=keras.optimizers.Adam(learning_rate=0.01))

# Print the model summary
model.summary()

# Record the RNN weights before training
before_RNN_weight = model.get_layer("simpleRNN").get_weights()
print('before train ', before_RNN_weight)

# Train the model
model.fit(data, np.array([[10], [20], [30]]), epochs=2000, verbose=1)

# Inspect the RNN weights after training: kernel, recurrent kernel, bias
RNN_weight = model.get_layer("simpleRNN").get_weights()
print('after train ', len(RNN_weight))
for weight in RNN_weight:
    print('====', weight.shape, weight)

# Make predictions
predictions = model.predict(data)
print("Predictions:", predictions.flatten())
```

Code output:

```text
data.shape= (3, 3, 2)
Model: "sequential"
_________________________________________________________________
 Layer (type)                Output Shape              Param #
=================================================================
 simpleRNN (SimpleRNN)       (None, 4)                 28
 output (Dense)              (None, 1)                 5
=================================================================
Total params: 33
Trainable params: 33
Non-trainable params: 0
_________________________________________________________________
before train [array([[-0.00466371,  0.53100157,  0.5298798 ,  0.05514288],
       [-0.08896947,  0.43185067,  0.7861788 , -0.80616236]],
      dtype=float32), array([[-0.10712242, -0.03620092, -0.02182053, -0.9933471 ],
       [-0.6549012 , -0.02620655,  0.7532524 ,  0.05503315],
       [-0.01986913,  0.9989996 ,  0.02001702, -0.03470401],
       [-0.74781984,  0.00159313, -0.657065  ,  0.09502006]],
      dtype=float32), array([0., 0., 0., 0.], dtype=float32)]
2023-08-05 16:02:44.111298: W tensorflow/tsl/platform/profile_utils/cpu_utils.cc:128] Failed to get CPU frequency: 0 Hz
Epoch 1/2000
....
Epoch 1999/2000
1/1 [==============================] - 0s 11ms/step - loss: 0.0071
Epoch 2000/2000
1/1 [==============================] - 0s 13ms/step - loss: 0.0070
after train 3
==== (2, 4) [[ 0.27645147  0.6025058   1.6083356  -0.38382724]
 [ 0.11586202  0.32901326  1.4760928  -1.2268958 ]]
==== (4, 4) [[-0.99628973 -2.444563    1.7412992  -1.5265529 ]
 [ 0.80340594  0.9488743   2.44552    -0.7439341 ]
 [-0.1827681  -1.3091801   1.547736   -0.6644555 ]
 [-0.5724374   2.3090494  -2.1779017   0.35992467]]
==== (4,) [-0.40184066 -1.2391611   0.33460653 -0.29144585]
1/1 [==============================] - 0s 78ms/step
Predictions: [10.000422 19.999924 29.85534 ]
```
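As a quick sanity check, the mean squared error can be recomputed from the printed predictions and the training targets [10, 20, 30]. This is a small sketch in plain Python; the variable names are illustrative. The values are copied from the output above, so the result is only expected to match the logged loss approximately, since the logged loss for the last epoch is measured before that epoch's final weight update.

```python
# Predictions copied from the printed output, and the targets passed to model.fit()
predictions = [10.000422, 19.999924, 29.85534]
targets = [10.0, 20.0, 30.0]

# Mean squared error: average of the squared differences
squared_errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
mse = sum(squared_errors) / len(squared_errors)
print(f"MSE = {mse:.4f}")  # prints: MSE = 0.0070
```

Almost all of the remaining error comes from the third sample (29.85534 against a target of 30), and the recomputed value is in line with the loss of 0.0070 logged at epoch 2000.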