概述

《Linux内存回收入口_nginux的博客-CSDN博客》前文我们概略的描述了几种内存回收入口,我们知道几种回收入口最终都会调用进入shrink_node函数,本文将以Linux 5.9源码来描述shrink_node函数的源码实现。

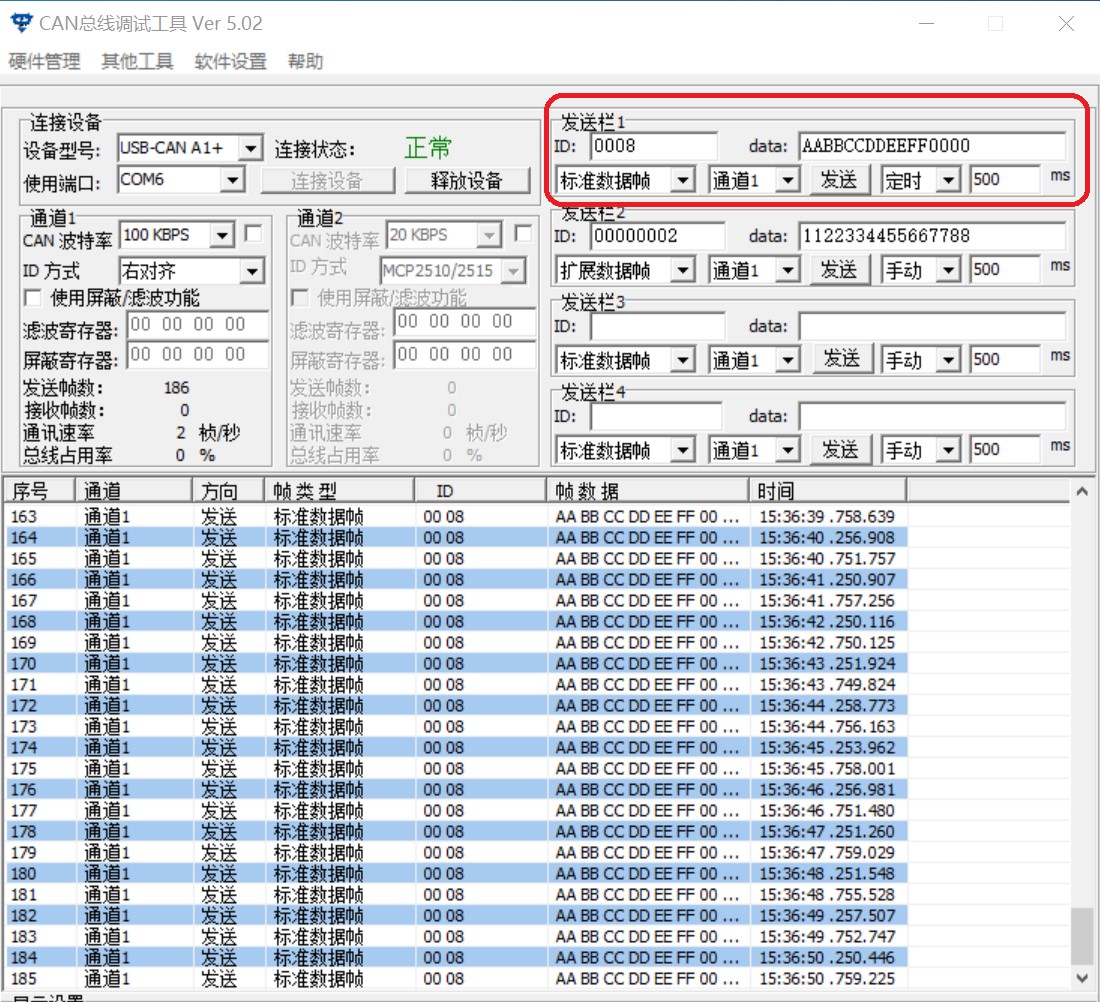

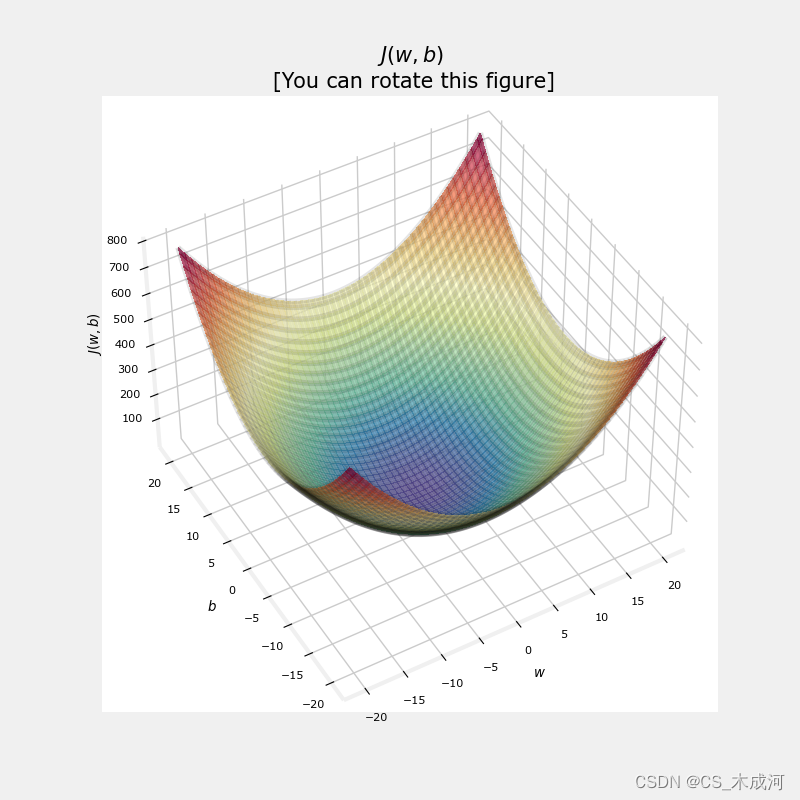

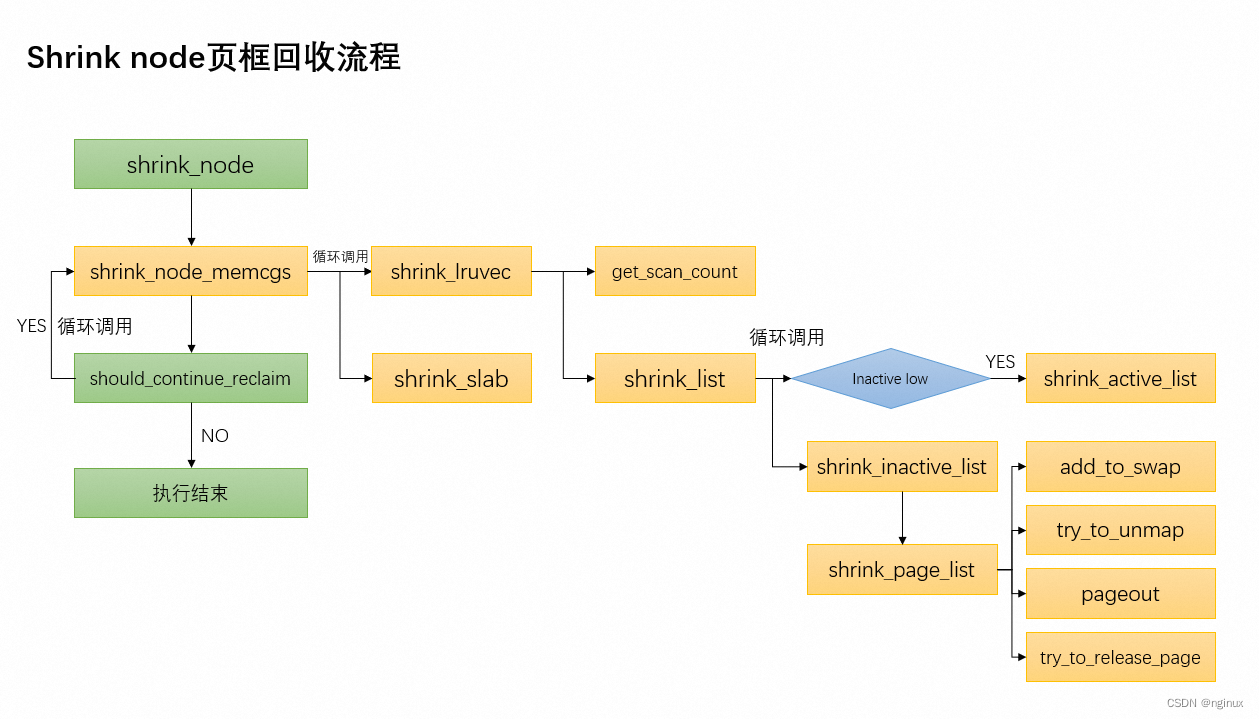

函数调用流程图

scan_control数据结构

struct scan_control {

/* How many pages shrink_list() should reclaim */

unsigned long nr_to_reclaim;

/*

* Nodemask of nodes allowed by the caller. If NULL, all nodes

* are scanned.

*/

nodemask_t *nodemask;

/*

* The memory cgroup that hit its limit and as a result is the

* primary target of this reclaim invocation.

*/

struct mem_cgroup *target_mem_cgroup;

/*

* Scan pressure balancing between anon and file LRUs

*/

unsigned long anon_cost;

unsigned long file_cost;

/* Can active pages be deactivated as part of reclaim? */

//是否能从active lru列表进行deactivate的reclaim

#define DEACTIVATE_ANON 1

#define DEACTIVATE_FILE 2

unsigned int may_deactivate:2;

//如果是1:代表强制进行deactivate,即同时deactivate file和anon

//如果是0,按需进行deactivate file或者anon,具体条件见下面shrink_node源码分析

unsigned int force_deactivate:1;

unsigned int skipped_deactivate:1;

/* Writepage batching in laptop mode; RECLAIM_WRITE */

unsigned int may_writepage:1;

/* Can mapped pages be reclaimed? */

unsigned int may_unmap:1;

/* Can pages be swapped as part of reclaim? */

unsigned int may_swap:1;

/*

* Cgroups are not reclaimed below their configured memory.low,

* unless we threaten to OOM. If any cgroups are skipped due to

* memory.low and nothing was reclaimed, go back for memory.low.

*/

unsigned int memcg_low_reclaim:1;

unsigned int memcg_low_skipped:1;

unsigned int hibernation_mode:1;

/* One of the zones is ready for compaction */

unsigned int compaction_ready:1;

/* There is easily reclaimable cold cache in the current node */

//设置为1代表只回收file page cache,不回收aone page

unsigned int cache_trim_mode:1;

/* The file pages on the current node are dangerously low */

//设置1代表只回收aone page,不回收file page

unsigned int file_is_tiny:1;

/* Allocation order */

s8 order;

/* Scan (total_size >> priority) pages at once */

s8 priority;

/* The highest zone to isolate pages for reclaim from */

s8 reclaim_idx;

/* This context's GFP mask */

gfp_t gfp_mask;

/* Incremented by the number of inactive pages that were scanned */

unsigned long nr_scanned;

/* Number of pages freed so far during a call to shrink_zones() */

unsigned long nr_reclaimed;

struct {

unsigned int dirty;

unsigned int unqueued_dirty;

unsigned int congested;

unsigned int writeback;

unsigned int immediate;

unsigned int file_taken;

unsigned int taken;

} nr;

/* for recording the reclaimed slab by now */

struct reclaim_state reclaim_state;

};shrink_node函数

static void shrink_node(pg_data_t *pgdat, struct scan_control *sc)

{

struct reclaim_state *reclaim_state = current->reclaim_state;

unsigned long nr_reclaimed, nr_scanned;

struct lruvec *target_lruvec;

bool reclaimable = false;

unsigned long file;

target_lruvec = mem_cgroup_lruvec(sc->target_mem_cgroup, pgdat);

again:

memset(&sc->nr, 0, sizeof(sc->nr));

nr_reclaimed = sc->nr_reclaimed;

nr_scanned = sc->nr_scanned;

/*

* Determine the scan balance between anon and file LRUs.

*/

spin_lock_irq(&pgdat->lru_lock);

sc->anon_cost = target_lruvec->anon_cost;

sc->file_cost = target_lruvec->file_cost;

spin_unlock_irq(&pgdat->lru_lock);

/*

* Target desirable inactive:active list ratios for the anon

* and file LRU lists.

*/

if (!sc->force_deactivate) {

unsigned long refaults;

refaults = lruvec_page_state(target_lruvec,

WORKINGSET_ACTIVATE_ANON);

//anon的refaults值比上次回收发生了变化,或者inactive anon很少,设置

//DEACTIVATE_ANON表示需要deactivate anon

if (refaults != target_lruvec->refaults[0] ||

inactive_is_low(target_lruvec, LRU_INACTIVE_ANON))

sc->may_deactivate |= DEACTIVATE_ANON;

else

sc->may_deactivate &= ~DEACTIVATE_ANON;

/*

* When refaults are being observed, it means a new

* workingset is being established. Deactivate to get

* rid of any stale active pages quickly.

*/

refaults = lruvec_page_state(target_lruvec,

WORKINGSET_ACTIVATE_FILE);

if (refaults != target_lruvec->refaults[1] ||

inactive_is_low(target_lruvec, LRU_INACTIVE_FILE))

sc->may_deactivate |= DEACTIVATE_FILE;

else

sc->may_deactivate &= ~DEACTIVATE_FILE;

} else

sc->may_deactivate = DEACTIVATE_ANON | DEACTIVATE_FILE;

/*

* If we have plenty of inactive file pages that aren't

* thrashing, try to reclaim those first before touching

* anonymous pages.

*/

//file是inactive file的数量

file = lruvec_page_state(target_lruvec, NR_INACTIVE_FILE);

if (file >> sc->priority && !(sc->may_deactivate & DEACTIVATE_FILE))

//只回收file page,影响get_scan_count

sc->cache_trim_mode = 1;

else

sc->cache_trim_mode = 0;

/*

* Prevent the reclaimer from falling into the cache trap: as

* cache pages start out inactive, every cache fault will tip

* the scan balance towards the file LRU. And as the file LRU

* shrinks, so does the window for rotation from references.

* This means we have a runaway feedback loop where a tiny

* thrashing file LRU becomes infinitely more attractive than

* anon pages. Try to detect this based on file LRU size.

*/

if (!cgroup_reclaim(sc)) {

unsigned long total_high_wmark = 0;

unsigned long free, anon;

int z;

free = sum_zone_node_page_state(pgdat->node_id, NR_FREE_PAGES);

file = node_page_state(pgdat, NR_ACTIVE_FILE) +

node_page_state(pgdat, NR_INACTIVE_FILE);

for (z = 0; z < MAX_NR_ZONES; z++) {

struct zone *zone = &pgdat->node_zones[z];

if (!managed_zone(zone))

continue;

total_high_wmark += high_wmark_pages(zone);

}

/*

* Consider anon: if that's low too, this isn't a

* runaway file reclaim problem, but rather just

* extreme pressure. Reclaim as per usual then.

*/

anon = node_page_state(pgdat, NR_INACTIVE_ANON);

//设置1代表只回收aone page,不回收file page

sc->file_is_tiny =

file + free <= total_high_wmark &&

!(sc->may_deactivate & DEACTIVATE_ANON) &&

anon >> sc->priority;

}

//回收的核心函数,后面文章专门分析

shrink_node_memcgs(pgdat, sc);

if (reclaim_state) {

sc->nr_reclaimed += reclaim_state->reclaimed_slab;

reclaim_state->reclaimed_slab = 0;

}

/* Record the subtree's reclaim efficiency */

vmpressure(sc->gfp_mask, sc->target_mem_cgroup, true,

sc->nr_scanned - nr_scanned,

sc->nr_reclaimed - nr_reclaimed);

//这一轮回收到了页面

if (sc->nr_reclaimed - nr_reclaimed)

reclaimable = true;

//只允许kswapd线程设置这些flag,因为只有kswapd能clear这些flag,避免混乱

//比如memcg reclaim也能设置,没法保证kswapd肯定会被wakeup去clear这些标志

if (current_is_kswapd()) {

/*

* If reclaim is isolating dirty pages under writeback,

* it implies that the long-lived page allocation rate

* is exceeding the page laundering rate. Either the

* global limits are not being effective at throttling

* processes due to the page distribution throughout

* zones or there is heavy usage of a slow backing

* device. The only option is to throttle from reclaim

* context which is not ideal as there is no guarantee

* the dirtying process is throttled in the same way

* balance_dirty_pages() manages.

*

* Once a node is flagged PGDAT_WRITEBACK, kswapd will

* count the number of pages under pages flagged for

* immediate reclaim and stall if any are encountered

* in the nr_immediate check below.

*/

//设置PGDAT_DIRTY代表reclaim发现很多页面正在回写

if (sc->nr.writeback && sc->nr.writeback == sc->nr.taken)

set_bit(PGDAT_WRITEBACK, &pgdat->flags);

/* Allow kswapd to start writing pages during reclaim.*/

设置PGDAT_DIRTY代表reclaim发现很多脏页

if (sc->nr.unqueued_dirty == sc->nr.file_taken)

set_bit(PGDAT_DIRTY, &pgdat->flags);

/*

* If kswapd scans pages marked for immediate

* reclaim and under writeback (nr_immediate), it

* implies that pages are cycling through the LRU

* faster than they are written so also forcibly stall.

*/

if (sc->nr.immediate)

congestion_wait(BLK_RW_ASYNC, HZ/10);

}

/*

* Tag a node/memcg as congested if all the dirty pages

* scanned were backed by a congested BDI and

* wait_iff_congested will stall.

*

* Legacy memcg will stall in page writeback so avoid forcibly

* stalling in wait_iff_congested().

*/

//只允许kswapd线程设置LRUVEC_CONGESTED,因为只有kswapd能clear LRUVEC_CONGESTED,

//比如memcg reclaim也能设置,没法保证kswap能唤醒去clear LRUVEC_CONGESTED,导致

//direct reclaim阻塞在wait_iff_congested

if ((current_is_kswapd() ||

(cgroup_reclaim(sc) && writeback_throttling_sane(sc))) &&

sc->nr.dirty && sc->nr.dirty == sc->nr.congested)

set_bit(LRUVEC_CONGESTED, &target_lruvec->flags);

/*

* Stall direct reclaim for IO completions if underlying BDIs

* and node is congested. Allow kswapd to continue until it

* starts encountering unqueued dirty pages or cycling through

* the LRU too quickly.

*/

//如果是非kswapd线程,且判定当前回收设置过拥塞flag,就要等待,所以direct reclaim

//会被阻塞

if (!current_is_kswapd() && current_may_throttle() &&

!sc->hibernation_mode &&

test_bit(LRUVEC_CONGESTED, &target_lruvec->flags))

wait_iff_congested(BLK_RW_ASYNC, HZ/10);

//如果需要继续回收,就goto again继续

if (should_continue_reclaim(pgdat, sc->nr_reclaimed - nr_reclaimed,

sc))

goto again;

/*

* Kswapd gives up on balancing particular nodes after too

* many failures to reclaim anything from them and goes to

* sleep. On reclaim progress, reset the failure counter. A

* successful direct reclaim run will revive a dormant kswapd.

*/

if (reclaimable)

pgdat->kswapd_failures = 0;

}should_continue_reclaim

/*

* Reclaim/compaction is used for high-order allocation requests. It reclaims

* order-0 pages before compacting the zone. should_continue_reclaim() returns

* true if more pages should be reclaimed such that when the page allocator

* calls try_to_compact_pages() that it will have enough free pages to succeed.

* It will give up earlier than that if there is difficulty reclaiming pages.

*/

static inline bool should_continue_reclaim(struct pglist_data *pgdat,

unsigned long nr_reclaimed,

struct scan_control *sc)

{

unsigned long pages_for_compaction;

unsigned long inactive_lru_pages;

int z;

/* If not in reclaim/compaction mode, stop */

if (!in_reclaim_compaction(sc))

return false;

/*

* Stop if we failed to reclaim any pages from the last SWAP_CLUSTER_MAX

* number of pages that were scanned. This will return to the caller

* with the risk reclaim/compaction and the resulting allocation attempt

* fails. In the past we have tried harder for __GFP_RETRY_MAYFAIL

* allocations through requiring that the full LRU list has been scanned

* first, by assuming that zero delta of sc->nr_scanned means full LRU

* scan, but that approximation was wrong, and there were corner cases

* where always a non-zero amount of pages were scanned.

*/

if (!nr_reclaimed)

return false;

//compaction_suitable会检查水位是否已满足条件(要根据orderPAGE_ALLOC_COSTLY_ORDER

//使用不同的watermark,如果不满足就不会返回success/continue

/* If compaction would go ahead or the allocation would succeed, stop */

for (z = 0; z <= sc->reclaim_idx; z++) {

struct zone *zone = &pgdat->node_zones[z];

if (!managed_zone(zone))

continue;

//满足了水位return false,代表不要继续shrink_node了

switch (compaction_suitable(zone, sc->order, 0, sc->reclaim_idx)) {

case COMPACT_SUCCESS:

case COMPACT_CONTINUE:

return false;

default:

/* check next zone */

;

}

}

/*

* If we have not reclaimed enough pages for compaction and the

* inactive lists are large enough, continue reclaiming

*/

//上面水位检查不通过,且也没有reclaim足够的page来做compaction,那就继续reclaim吧

pages_for_compaction = compact_gap(sc->order);

inactive_lru_pages = node_page_state(pgdat, NR_INACTIVE_FILE);

if (get_nr_swap_pages() > 0)

inactive_lru_pages += node_page_state(pgdat, NR_INACTIVE_ANON);

return inactive_lru_pages > pages_for_compaction;

}参考文章:

[PATCH v2 4/4] mm/vmscan: Don't mess with pgdat->flags in memcg reclaim. - Andrey Ryabinin

Linux 内存管理_workingset内存_jianchwa的博客-CSDN博客