Deep Learning - Week J2: Implementing the ResNet50V2 Algorithm

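Contents

- Deep Learning - Week J2: Implementing the ResNet50V2 Algorithm
- Preface
- 1. ResNetV2 vs. ResNet: Structural Comparison
- 2. Model Reproduction
- 2.1 Residual Block
- 2.2 Stacked Residual Blocks
- 2.3 ResNet50V2
- 2.4 Viewing the Model Structure
- 2.5 Complete TensorFlow Code
- 3. PyTorch Reproduction
- 3.1 Code Implementation
- 3.2 Printing the Structure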

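Preface

- 🍨 This post is a study-log entry from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)
- **Reference article: Pytorch实战 | 第P5周:运动鞋识别 (PyTorch in Practice | Week P5: Sneaker Recognition)**
- 🍖 Original author: K同学啊 | tutoring and custom projects available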

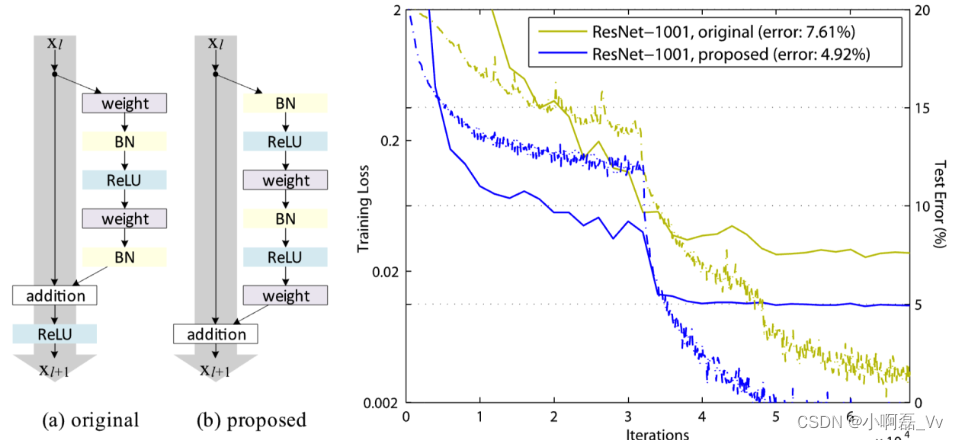

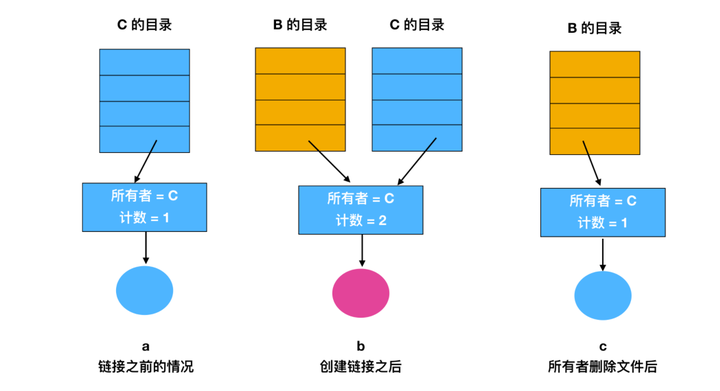

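1. ResNetV2 vs. ResNet: Structural Comparison

- According to the original paper, the main difference lies in the residual structure. The new structure applies BN and the activation function first and the convolution afterwards, and it moves the ReLU that used to follow the addition into the residual branch.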
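To make the reordering concrete, here is a minimal scalar sketch of the two orderings. It is an illustration under strong simplifying assumptions, not the real layers: BN is omitted, the whole residual branch is a plain Python function, and the names `v1_unit`, `v2_unit`, and `zero_branch` are hypothetical. It shows that when the ReLU sits after the addition (V1), a negative input is clipped even if the residual branch contributes nothing, while in the pre-activation form (V2) the identity path passes the input through unchanged.

```python
def relu(v):
    return max(0.0, v)


def v1_unit(x, residual_fn):
    """Original ordering: residual branch, addition, then ReLU on the sum."""
    return relu(x + residual_fn(x))


def v2_unit(x, residual_fn):
    """Pre-activation ordering: ReLU feeds the residual branch only;
    nothing is applied after the addition."""
    return x + residual_fn(relu(x))


def zero_branch(v):
    """Hypothetical residual branch that contributes nothing."""
    return 0.0


print(v1_unit(-1.5, zero_branch))  # 0.0  (identity signal is clipped)
print(v2_unit(-1.5, zero_branch))  # -1.5 (identity signal is preserved)
```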

2. Model Reproduction

2.1 Residual Block

def block2(x, filters, kernel_size=3, stride=1, conv_shortcut=False, name=None):
    # Pre-activation: BN and ReLU come before the convolutions
    preact = layers.BatchNormalization(name=name + '_preact_bn_')(x)
    preact = layers.Activation('relu', name=name + '_preact_relu')(preact)

    # Shortcut branch: 1x1 convolution, strided 1x1 max pooling, or identity
    if conv_shortcut:
        shortcut = layers.Conv2D(4*filters, 1, strides=stride, name=name + '_0_conv')(preact)
    else:
        shortcut = layers.MaxPooling2D(1, strides=stride)(x) if stride > 1 else x

    # Main branch: 1x1 conv -> 3x3 conv (carries the stride) -> 1x1 conv
    x = layers.Conv2D(filters, 1, strides=1, use_bias=False, name=name + '_1_conv')(preact)
    x = layers.BatchNormalization(name=name + '_1_bn')(x)
    x = layers.Activation('relu', name=name + '_1_relu')(x)

    x = layers.ZeroPadding2D(padding=((1, 1), (1, 1)), name=name + '_2_pad')(x)
    x = layers.Conv2D(filters, kernel_size,
                      strides=stride, use_bias=False, name=name + '_2_conv')(x)
    x = layers.BatchNormalization(name=name + '_2_bn')(x)
    x = layers.Activation('relu', name=name + '_2_relu')(x)

    x = layers.Conv2D(4*filters, 1, name=name + '_3_conv')(x)
    # No activation after the addition
    x = layers.Add(name=name + '_out')([shortcut, x])
    return x
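One detail in `block2` that may look odd is the shortcut `MaxPooling2D(1, strides=stride)`. With a 1x1 window each pooling window contains a single value, so the layer simply keeps every `stride`-th row and column, which brings the shortcut to the same spatial size as the strided main branch. The sketch below illustrates this on a plain nested list (one channel, no framework); the helper name `max_pool_1x1` is made up for this example.

```python
def max_pool_1x1(feature_map, stride):
    """Max pooling with a 1x1 window and the given stride.

    Each window holds exactly one value, so the result is plain
    subsampling of rows and columns.
    """
    return [row[::stride] for row in feature_map[::stride]]


# A 4x4 single-channel feature map with values 0..15
fm = [[r * 4 + c for c in range(4)] for r in range(4)]
print(max_pool_1x1(fm, 2))  # [[0, 2], [8, 10]]
```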

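2.2 Stacked Residual Blocks

def stack2(x, filters, blocks, stride1=2, name=None):
    # First block: convolutional shortcut to change the channel count
    x = block2(x, filters, conv_shortcut=True, name=name + '_block1')
    # Middle blocks: identity shortcut
    for i in range(2, blocks):
        x = block2(x, filters, name=name + '_block' + str(i))
    # Last block: carries the stride, so downsampling happens at the end of the stack
    x = block2(x, filters, stride=stride1, name=name + '_block' + str(blocks))
    return x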
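The layout that `stack2` produces can be written out as a small table. The following sketch mirrors the loop above in plain Python and lists, for each block, whether it uses a convolutional shortcut and which stride it gets. `stack_plan` is a hypothetical helper written only for illustration; it builds no layers.

```python
def stack_plan(blocks, stride1=2):
    """Return (block_name, conv_shortcut, stride) for each block of a stack."""
    plan = [("block1", True, 1)]              # first block: conv shortcut
    for i in range(2, blocks):
        plan.append((f"block{i}", False, 1))  # middle blocks: identity shortcut
    plan.append((f"block{blocks}", False, stride1))  # last block: strided
    return plan


for entry in stack_plan(3):
    print(entry)
# ('block1', True, 1)
# ('block2', False, 1)
# ('block3', False, 2)
```

Note that the stride sits in the last block of each stack, not the first.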

2.3 ResNet50V2

def ResNet50V2(include_top=True,
               preact=True,
               use_bias=True,
               weights='imagenet',      # kept in the signature, not used below
               input_tensor=None,       # kept in the signature, not used below
               input_shape=None,
               pooling=None,
               classes=1000,
               classifier_activation='softmax'):
    img_input = layers.Input(shape=input_shape)

    # Stem: 7x7 convolution followed by 3x3 max pooling
    x = layers.ZeroPadding2D(padding=((3, 3), (3, 3)), name='conv1_pad')(img_input)
    x = layers.Conv2D(64, 7, strides=2, use_bias=use_bias, name='conv1_conv')(x)

    if not preact:
        x = layers.BatchNormalization(name='conv1_bn')(x)
        x = layers.Activation('relu', name='conv1_relu')(x)

    x = layers.ZeroPadding2D(padding=((1, 1), (1, 1)), name='pool1_pad')(x)
    x = layers.MaxPooling2D(3, strides=2, name='pool1_pool')(x)

    # Four stacks with 3, 4, 6 and 3 residual blocks
    x = stack2(x, 64, 3, name='conv2')
    x = stack2(x, 128, 4, name='conv3')
    x = stack2(x, 256, 6, name='conv4')
    x = stack2(x, 512, 3, stride1=1, name='conv5')

    # With pre-activation, the final BN + ReLU come after the last stack
    if preact:
        x = layers.BatchNormalization(name='post_bn')(x)
        x = layers.Activation('relu', name='post_relu')(x)

    if include_top:
        x = layers.GlobalAveragePooling2D(name='avg_pool')(x)
        x = layers.Dense(classes, activation=classifier_activation, name='predictions')(x)
    else:
        if pooling == 'avg':
            x = layers.GlobalAveragePooling2D(name='avg_pool')(x)
        elif pooling == 'max':
            x = layers.GlobalMaxPooling2D(name='max_pool')(x)

    model = Model(img_input, x)
    return model

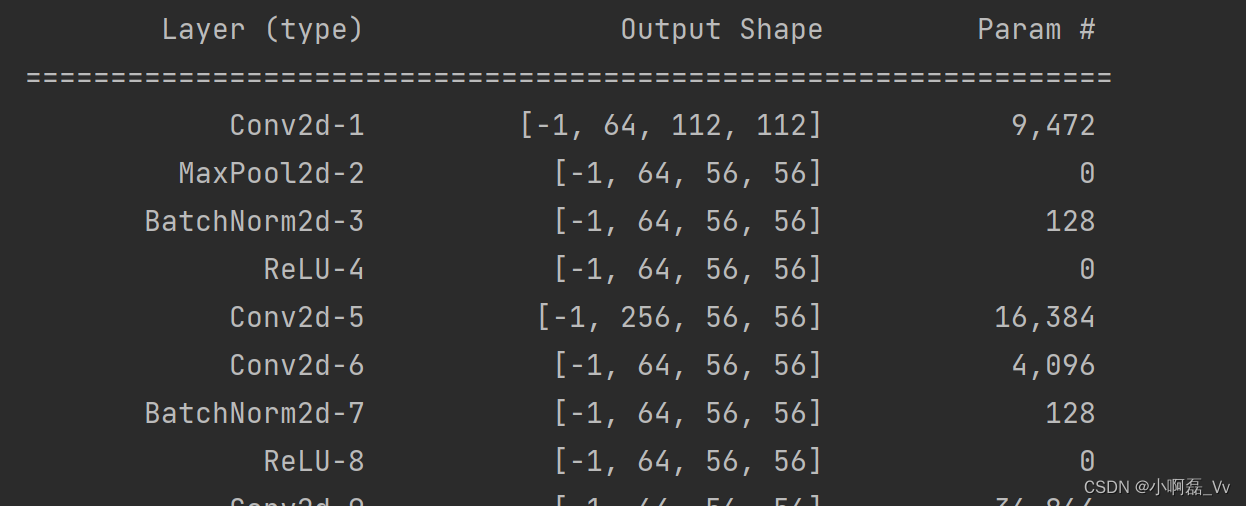

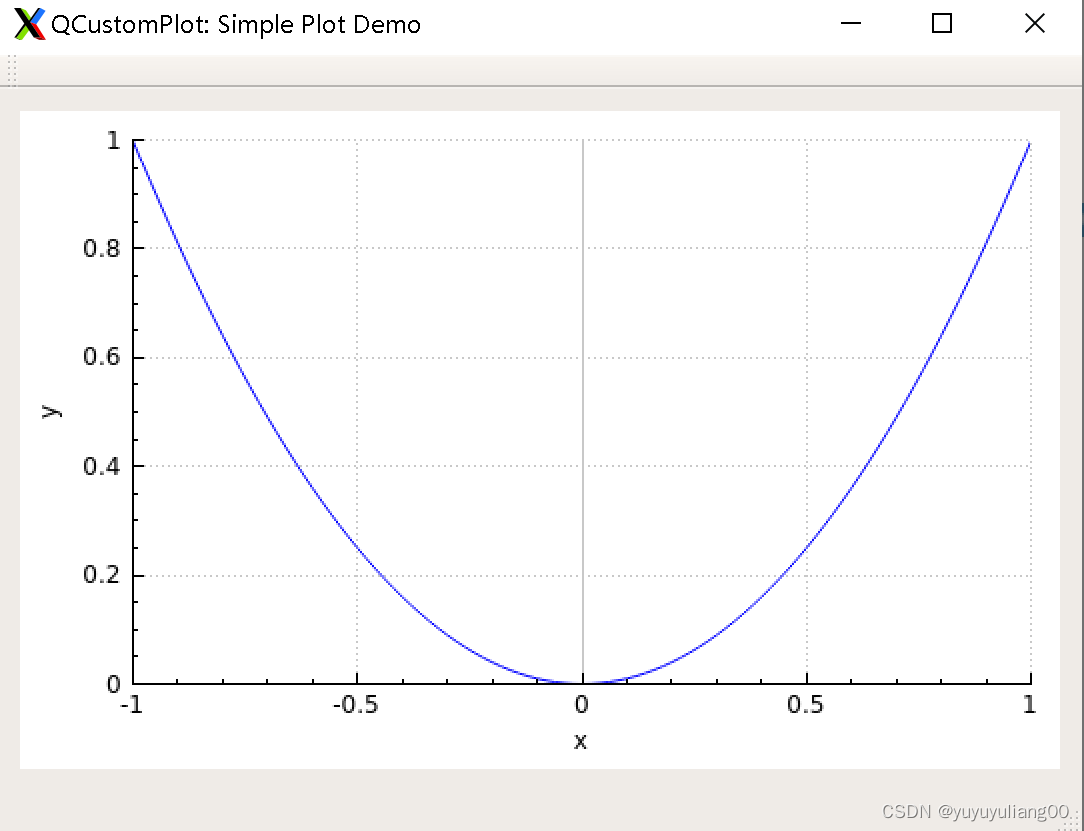

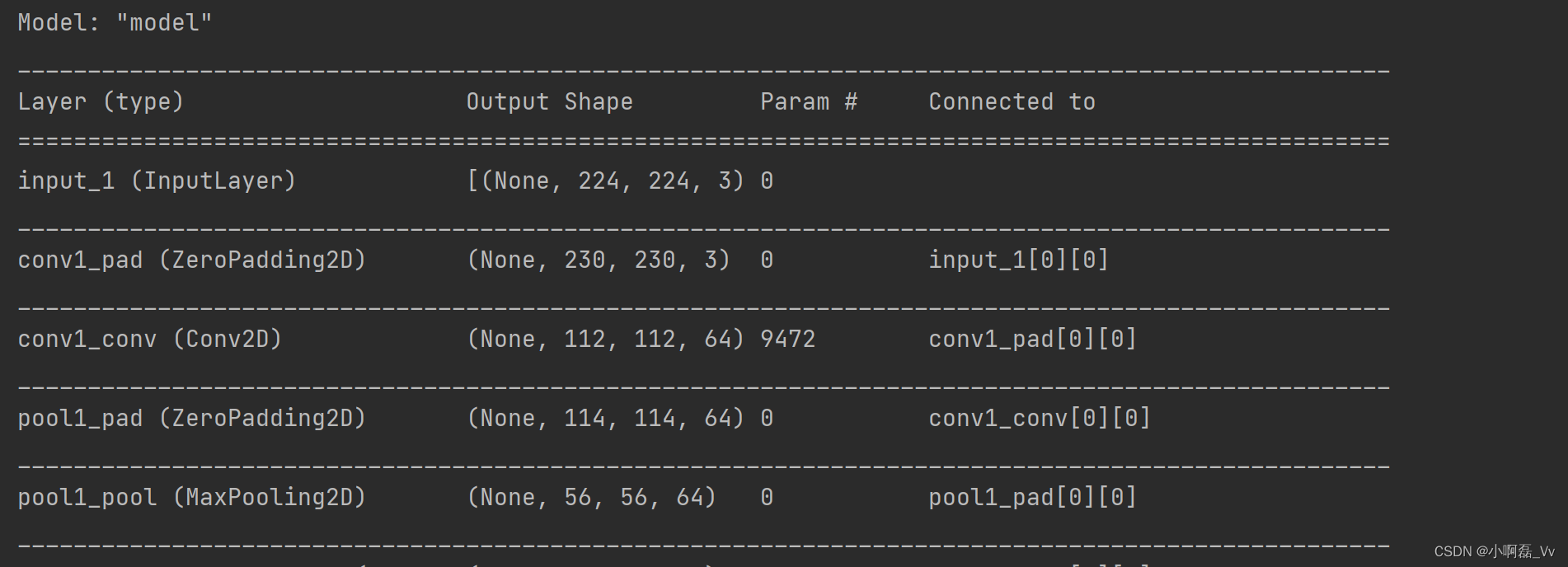
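As a quick sanity check on the architecture, the spatial size and channel count after each stage can be traced by hand. The sketch below does this arithmetic in plain Python for the padding, kernel, and stride values used in the code above, assuming a 224x224 input. The helper names `conv_out` and `trace_resnet50v2` are made up for this example, and the trace relies on the fact that only the last block of each stack applies the stride.

```python
def conv_out(size, kernel, stride, pad):
    """Output size of a convolution or pooling layer along one dimension."""
    return (size + 2 * pad - kernel) // stride + 1


def trace_resnet50v2(size=224):
    """Return (stage, spatial_size, channels) after the stem and each stack."""
    shapes = []
    size = conv_out(size, kernel=7, stride=2, pad=3)   # conv1_pad + conv1_conv
    shapes.append(("conv1", size, 64))
    size = conv_out(size, kernel=3, stride=2, pad=1)   # pool1_pad + pool1_pool
    shapes.append(("pool1", size, 64))
    stacks = [("conv2", 64, 2), ("conv3", 128, 2), ("conv4", 256, 2), ("conv5", 512, 1)]
    for name, filters, stride1 in stacks:
        # Only the 3x3 conv of the last block in the stack is strided
        size = conv_out(size, kernel=3, stride=stride1, pad=1)
        shapes.append((name, size, 4 * filters))
    return shapes


for stage in trace_resnet50v2():
    print(stage)
# ('conv1', 112, 64)
# ('pool1', 56, 64)
# ('conv2', 28, 256)
# ('conv3', 14, 512)
# ('conv4', 7, 1024)
# ('conv5', 7, 2048)
```

These values can be compared against the output shapes reported by `model.summary()`.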

2.4 Viewing the Model Structure

if __name__ == '__main__':
    model = ResNet50V2(input_shape=(224, 224, 3))
    model.summary()

Part of the structure is shown below:

2.5 Complete TensorFlow Code

import tensorflow as tf
import tensorflow.keras.layers as layers
from tensorflow.keras.models import Model


# Residual Block
def block2(x, filters, kernel_size=3, stride=1, conv_shortcut=False, name=None):
    # Pre-activation: BN and ReLU come before the convolutions
    preact = layers.BatchNormalization(name=name + '_preact_bn_')(x)
    preact = layers.Activation('relu', name=name + '_preact_relu')(preact)

    # Shortcut branch: 1x1 convolution, strided 1x1 max pooling, or identity
    if conv_shortcut:
        shortcut = layers.Conv2D(4*filters, 1, strides=stride, name=name + '_0_conv')(preact)
    else:
        shortcut = layers.MaxPooling2D(1, strides=stride)(x) if stride > 1 else x

    # Main branch: 1x1 conv -> 3x3 conv (carries the stride) -> 1x1 conv
    x = layers.Conv2D(filters, 1, strides=1, use_bias=False, name=name + '_1_conv')(preact)
    x = layers.BatchNormalization(name=name + '_1_bn')(x)
    x = layers.Activation('relu', name=name + '_1_relu')(x)

    x = layers.ZeroPadding2D(padding=((1, 1), (1, 1)), name=name + '_2_pad')(x)
    x = layers.Conv2D(filters, kernel_size,
                      strides=stride, use_bias=False, name=name + '_2_conv')(x)
    x = layers.BatchNormalization(name=name + '_2_bn')(x)
    x = layers.Activation('relu', name=name + '_2_relu')(x)

    x = layers.Conv2D(4*filters, 1, name=name + '_3_conv')(x)
    # No activation after the addition
    x = layers.Add(name=name + '_out')([shortcut, x])
    return x


# Stacked Residual Blocks
def stack2(x, filters, blocks, stride1=2, name=None):
    # First block: convolutional shortcut to change the channel count
    x = block2(x, filters, conv_shortcut=True, name=name + '_block1')
    # Middle blocks: identity shortcut
    for i in range(2, blocks):
        x = block2(x, filters, name=name + '_block' + str(i))
    # Last block: carries the stride, so downsampling happens at the end of the stack
    x = block2(x, filters, stride=stride1, name=name + '_block' + str(blocks))
    return x


# ResNet50V2
def ResNet50V2(include_top=True,
               preact=True,
               use_bias=True,
               weights='imagenet',      # kept in the signature, not used below
               input_tensor=None,       # kept in the signature, not used below
               input_shape=None,
               pooling=None,
               classes=1000,
               classifier_activation='softmax'):
    img_input = layers.Input(shape=input_shape)

    # Stem: 7x7 convolution followed by 3x3 max pooling
    x = layers.ZeroPadding2D(padding=((3, 3), (3, 3)), name='conv1_pad')(img_input)
    x = layers.Conv2D(64, 7, strides=2, use_bias=use_bias, name='conv1_conv')(x)

    if not preact:
        x = layers.BatchNormalization(name='conv1_bn')(x)
        x = layers.Activation('relu', name='conv1_relu')(x)

    x = layers.ZeroPadding2D(padding=((1, 1), (1, 1)), name='pool1_pad')(x)
    x = layers.MaxPooling2D(3, strides=2, name='pool1_pool')(x)

    # Four stacks with 3, 4, 6 and 3 residual blocks
    x = stack2(x, 64, 3, name='conv2')
    x = stack2(x, 128, 4, name='conv3')
    x = stack2(x, 256, 6, name='conv4')
    x = stack2(x, 512, 3, stride1=1, name='conv5')

    # With pre-activation, the final BN + ReLU come after the last stack
    if preact:
        x = layers.BatchNormalization(name='post_bn')(x)
        x = layers.Activation('relu', name='post_relu')(x)

    if include_top:
        x = layers.GlobalAveragePooling2D(name='avg_pool')(x)
        x = layers.Dense(classes, activation=classifier_activation, name='predictions')(x)
    else:
        if pooling == 'avg':
            x = layers.GlobalAveragePooling2D(name='avg_pool')(x)
        elif pooling == 'max':
            x = layers.GlobalMaxPooling2D(name='max_pool')(x)

    model = Model(img_input, x)
    return model


if __name__ == '__main__':
    model = ResNet50V2(input_shape=(224, 224, 3))
    model.summary()

3. PyTorch Reproduction

3.1 Code Implementation

import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets
import os, PIL, pathlib, warnings
from torchsummary import summary

# Ignore warnings
warnings.filterwarnings("ignore")

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")


# Residual Block
class Block2(nn.Module):
    def __init__(self, in_channel, filters, kernel_size=3, stride=1, conv_shortcut=False):
        super(Block2, self).__init__()
        # Pre-activation: BN and ReLU come before the convolutions
        self.preact = nn.Sequential(
            nn.BatchNorm2d(in_channel),
            nn.ReLU(True)
        )

        # Shortcut branch: 1x1 convolution, strided 1x1 max pooling, or identity
        self.shortcut = conv_shortcut
        if self.shortcut:
            self.short = nn.Conv2d(in_channel, 4*filters, 1, stride=stride, padding=0, bias=False)
        elif stride>1:
            self.short = nn.MaxPool2d(kernel_size=1, stride=stride, padding=0)
        else:
            self.short = nn.Identity()

        # Main branch: 1x1 conv -> 3x3 conv (carries the stride) -> 1x1 conv
        self.conv1 = nn.Sequential(
            nn.Conv2d(in_channel, filters, 1, stride=1, bias=False),
            nn.BatchNorm2d(filters),
            nn.ReLU(True)
        )
        self.conv2 = nn.Sequential(
            nn.Conv2d(filters, filters, kernel_size, stride=stride, padding=1, bias=False),
            nn.BatchNorm2d(filters),
            nn.ReLU(True)
        )
        self.conv3 = nn.Conv2d(filters, 4*filters, 1, stride=1, bias=False)

    def forward(self, x):
        x1 = self.preact(x)
        # The conv shortcut takes the pre-activated tensor; the others take the raw input
        if self.shortcut:
            x2 = self.short(x1)
        else:
            x2 = self.short(x)
        x1 = self.conv1(x1)
        x1 = self.conv2(x1)
        x1 = self.conv3(x1)
        # No activation after the addition
        x = x1 + x2
        return x

class Stack2(nn.Module):
    def __init__(self, in_channel, filters, blocks, stride=2):
        super(Stack2, self).__init__()
        self.conv = nn.Sequential()
        # First block: convolutional shortcut to change the channel count
        self.conv.add_module(str(0), Block2(in_channel, filters, conv_shortcut=True))
        # Middle blocks: identity shortcut
        for i in range(1, blocks-1):
            self.conv.add_module(str(i), Block2(4*filters, filters))
        # Last block: carries the stride
        self.conv.add_module(str(blocks-1), Block2(4*filters, filters, stride=stride))

    def forward(self, x):
        x = self.conv(x)
        return x


# Build ResNet50V2
class ResNet50V2(nn.Module):
    def __init__(self,
                 include_top=True,  # whether to include the fully connected layer at the top of the network
                 preact=True,  # whether to use pre-activation
                 use_bias=True,  # whether the stem convolution uses a bias
                 input_shape=(224, 224, 3),  # not used below
                 classes=1000,  # number of classes for image classification
                 pooling=None):  # optional pooling mode ('avg' or 'max') when include_top is False
        super(ResNet50V2, self).__init__()

        # Stem: 7x7 convolution followed by 3x3 max pooling
        self.conv1 = nn.Sequential()
        self.conv1.add_module('conv', nn.Conv2d(3, 64, 7, stride=2, padding=3, bias=use_bias, padding_mode='zeros'))
        if not preact:
            self.conv1.add_module('bn', nn.BatchNorm2d(64))
            self.conv1.add_module('relu', nn.ReLU())
        self.conv1.add_module('max_pool', nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

        # Four stacks with 3, 4, 6 and 3 residual blocks
        self.conv2 = Stack2(64, 64, 3)
        self.conv3 = Stack2(256, 128, 4)
        self.conv4 = Stack2(512, 256, 6)
        self.conv5 = Stack2(1024, 512, 3, stride=1)

        # With pre-activation, the final BN + ReLU come after the last stack
        self.post = nn.Sequential()
        if preact:
            self.post.add_module('bn', nn.BatchNorm2d(2048))
            self.post.add_module('relu', nn.ReLU())
        if include_top:
            self.post.add_module('avg_pool', nn.AdaptiveAvgPool2d((1, 1)))
            self.post.add_module('flatten', nn.Flatten())
            self.post.add_module('fc', nn.Linear(2048, classes))
        else:
            if pooling=='avg':
                self.post.add_module('avg_pool', nn.AdaptiveAvgPool2d((1, 1)))
            elif pooling=='max':
                self.post.add_module('max_pool', nn.AdaptiveMaxPool2d((1, 1)))

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.conv4(x)
        x = self.conv5(x)
        x = self.post(x)
        return x


model = ResNet50V2().to(device)
summary(model, (3, 224, 224))
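Unlike the Keras version, the PyTorch modules need the input channel count spelled out, which is where the `in_channel` values 64, 256, 512 and 1024 passed to `Stack2` come from: every stack outputs `4*filters` channels, and that becomes the input of the next one. The sketch below reproduces this bookkeeping in plain Python. `stack_channels` is a hypothetical helper used only for illustration and does not depend on torch.

```python
def stack_channels(stem_out=64, filters=(64, 128, 256, 512)):
    """Return the (in_channel, filters) pair for each stack and the final channel count."""
    pairs = []
    in_ch = stem_out
    for f in filters:
        pairs.append((in_ch, f))
        in_ch = 4 * f  # each bottleneck block expands to 4 * filters channels
    return pairs, in_ch


pairs, final_channels = stack_channels()
print(pairs)           # [(64, 64), (256, 128), (512, 256), (1024, 512)]
print(final_channels)  # 2048
```

The final value, 2048, matches the `nn.BatchNorm2d(2048)` and `nn.Linear(2048, classes)` layers in the `post` module.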

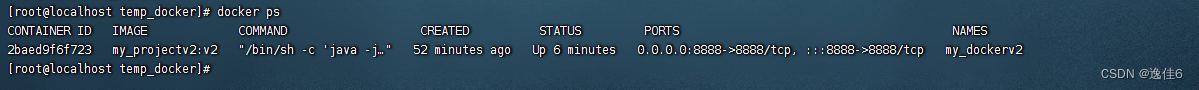

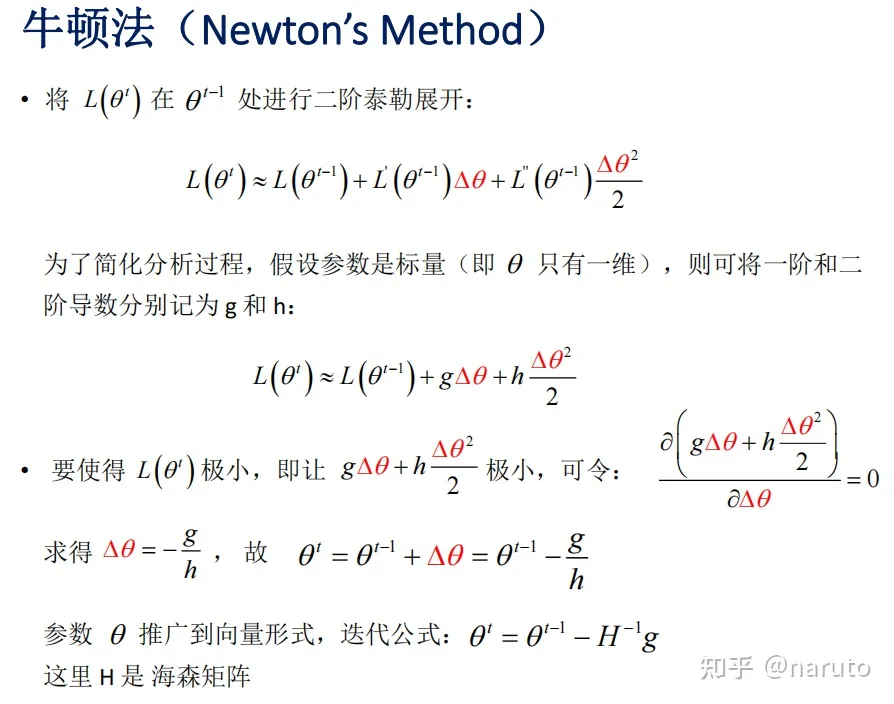

3.2 Printing the Structure

Part of the network structure is shown below.