🏡 My environment:

- Language: Python 3.10.11

- Editor: Jupyter Notebook

- Deep learning framework: TensorFlow 2.4.1

- GPU: NVIDIA GeForce RTX 4070

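🥂 Related tutorials:

- Editor tutorial: [Deep Learning for Beginners | 1-2: The Jupyter Notebook Editor]

- Environment setup tutorial: [Deep Learning for Beginners | 1-1: Setting Up a Deep Learning Environment]

- Everything a deep learning beginner needs, collected in one place: [Deep Learning for Beginners | Contents]

Before working through this article, consider reading the introductory article below, which will make it easier to follow: 🍨 Deep Learning for Beginners | 2-1: An Example Image-Data Modeling Workflow

I strongly recommend opening the source code in Jupyter Notebook; it makes the steps that follow much more convenient.

- If you are new to deep learning, consider working through 📖 "Deep Learning for Beginners" before reading this article.

- If you have some background but lack hands-on experience, 📖 "100 Deep Learning Examples" can fill that gap.

- We are also studying together in the 🔥 365-Day Deep Learning Training Camp 🔥, which offers a structured curriculum, professional guidance, and a supportive learning atmosphere. You are welcome to join.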

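Contents

- I. Prepare the Data

- 1. Set Up the GPU

- 2. Load the Data

- II. Build the Model

- III. Train the Model

- IV. Evaluate the Model

- 1. Accuracy and Loss Curves

- 2. Confusion Matrix

- 3. Loss/Precision/Recall/prc

- 4. ROC Curve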

I. Prepare the Data

1. Set Up the GPU

import matplotlib.pyplot as plt

import numpy as np

# Hide warnings

import warnings

warnings.filterwarnings("ignore")  # ignore warning messages

plt.rcParams['font.sans-serif'] = ['SimHei']  # font that renders Chinese labels correctly

plt.rcParams['axes.unicode_minus'] = False  # render the minus sign correctly

from tensorflow.keras import layers

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:

    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand

    tf.config.set_visible_devices([gpus[0]], "GPU")

# Print the device list to confirm whether a GPU is available

print(gpus)

[]

2. Load the Data

data_dir = "E:/Jupyter Lab/dataK/37-dataK-facial_expression/"

img_height = 224

img_width = 224

batch_size = 32

"""

For a detailed introduction to image_dataset_from_directory(), see: https://mtyjkh.blog.csdn.net/article/details/117018789

"""

train_ds = tf.keras.preprocessing.image_dataset_from_directory(

    data_dir,

    validation_split=0.3,

    subset="training",

    label_mode="categorical",

    seed=12,

    image_size=(img_height, img_width),

    batch_size=batch_size)

Found 14023 files belonging to 4 classes.

Using 9817 files for training.

val_ds = tf.keras.preprocessing.image_dataset_from_directory(

    data_dir,

    validation_split=0.3,

    # Use "validation" to get the held-out 30% of the images. The recorded run

    # below passed "training" here, so its log reports the same 9817 training files.

    subset="validation",

    label_mode="categorical",

    seed=12,

    image_size=(img_height, img_width),

    batch_size=batch_size)

Found 14023 files belonging to 4 classes.

Using 9817 files for training.

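The original dataset does not include a test set, so one has to be created. Use tf.data.experimental.cardinality to determine how many batches the validation set contains, then move 20% of them to a test set.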

val_batches = tf.data.experimental.cardinality(val_ds)

test_ds = val_ds.take(val_batches // 5)

val_ds = val_ds.skip(val_batches // 5)

print('Number of validation batches: %d' % tf.data.experimental.cardinality(val_ds))

print('Number of test batches: %d' % tf.data.experimental.cardinality(test_ds))

Number of validation batches: 246

Number of test batches: 61
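
As a rough check on the batch counts printed above, the arithmetic can be reproduced in plain Python. This is only a sketch: `split_batches` is a hypothetical helper, not part of TensorFlow, and it assumes the final partial batch still counts as one batch. The 9817 files reported in the log reproduce the printed 246 and 61; a held-out split of the remaining 14023 - 9817 = 4206 files would give smaller counts.

```python
import math


def split_batches(num_files, batch_size, divisor=5):
    """Return (validation_batches, test_batches) after moving 1/divisor of the batches to the test set."""
    total = math.ceil(num_files / batch_size)  # a final partial batch still counts as a batch
    test = total // divisor                    # mirrors val_batches // 5
    return total - test, test


# 9817 files at batch size 32, as reported in the log above
print(split_batches(9817, 32))  # (246, 61)

# The remaining 14023 - 9817 = 4206 files, i.e. a true 30% hold-out
print(split_batches(4206, 32))  # (106, 26)
```

Because `//` rounds down, the test set receives slightly less than 20% of the batches whenever the batch count is not a multiple of five.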

class_names = train_ds.class_names

print(class_names)

['Angry', 'Disgust', 'Fear', 'Happy']

AUTOTUNE = tf.data.AUTOTUNE

def preprocess_image(image, label):

    # Scale pixel values from [0, 255] to [0, 1]

    return (image / 255.0, label)

# Normalize the images

train_ds = train_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

val_ds = val_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

test_ds = test_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

train_ds = train_ds.cache().prefetch(buffer_size=AUTOTUNE)

val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

plt.figure(figsize=(15, 10))  # figure width 15, height 10

plt.suptitle('Follow the WeChat official account (K同学啊) for the source code')

for images, labels in train_ds.take(1):

    for i in range(32):

        ax = plt.subplot(5, 8, i + 1)

        plt.imshow(images[i])

        plt.title(class_names[np.argmax(labels[i])])

        plt.axis("off")

II. Build the Model

model = tf.keras.Sequential([

    layers.Conv2D(16, 3, padding='same', activation='relu'),

    layers.MaxPooling2D(),

    layers.Conv2D(32, 3, padding='same', activation='relu'),

    layers.MaxPooling2D(),

    layers.Conv2D(64, 3, padding='same', activation='relu'),

    layers.MaxPooling2D(),

    layers.Conv2D(64, 3, padding='same', activation='relu'),

    layers.MaxPooling2D(),

    layers.Conv2D(64, 3, padding='same', activation='relu'),

    layers.MaxPooling2D(),

    layers.Flatten(),

    layers.Dense(128, activation='relu'),

    layers.Dense(len(class_names), activation='softmax')

])

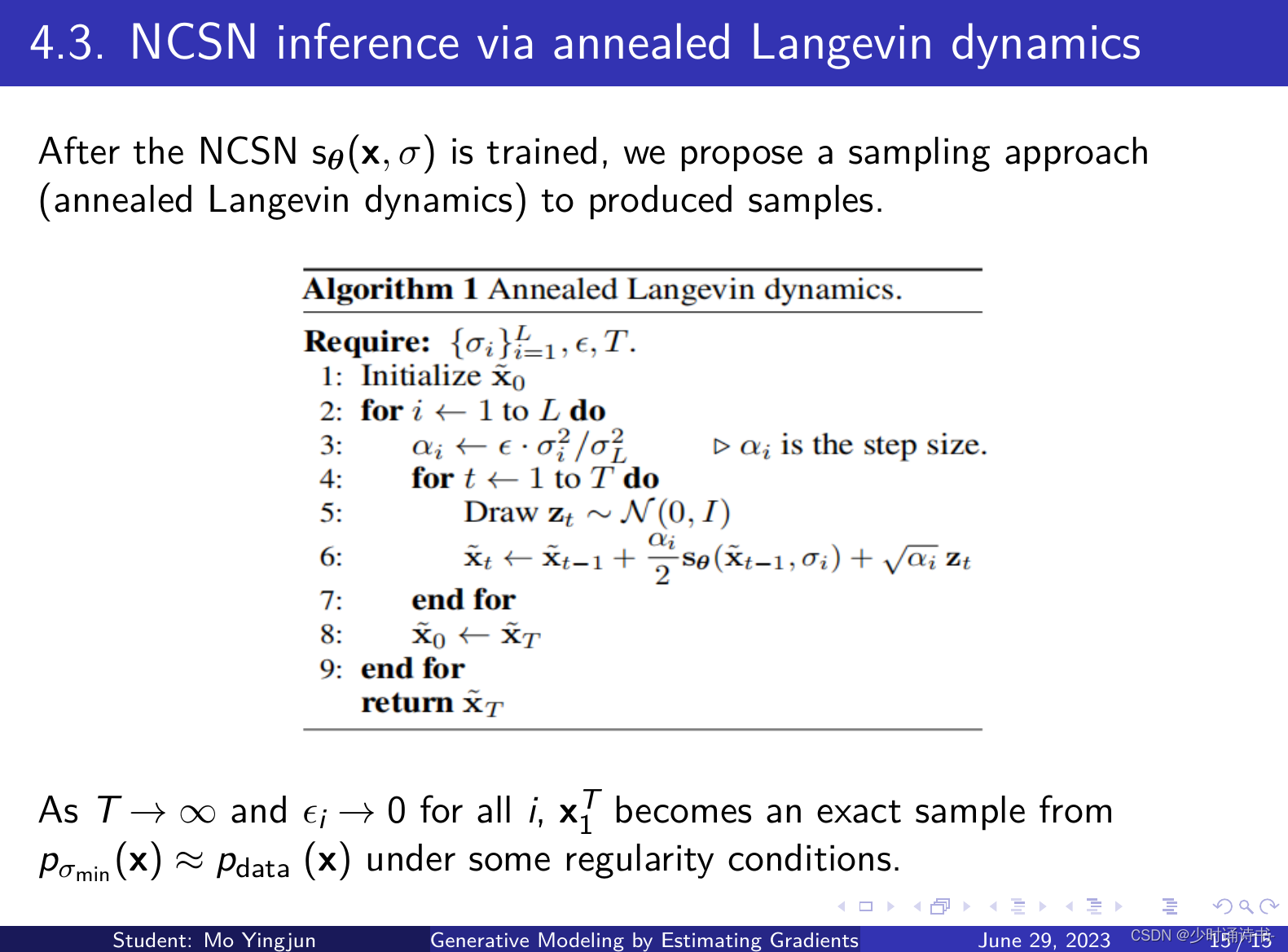
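
To see what the layer stack above does to the tensor shape, the sizes can be tallied by hand. The sketch below assumes 224x224 RGB input (three channels, the default colour mode of `image_dataset_from_directory`), the default 2x2 pooling of `MaxPooling2D()`, and the four classes listed earlier. `count_parameters` is a hypothetical helper written for this illustration, not a Keras function.

```python
def count_parameters(image_size=224, in_channels=3, conv_filters=(16, 32, 64, 64, 64),
                     dense_units=128, num_classes=4, kernel=3):
    """Return (final_feature_map_size, flattened_length, total_trainable_parameters)."""
    size, channels, total = image_size, in_channels, 0
    for filters in conv_filters:
        # Conv2D with padding='same' keeps the spatial size; weights plus one bias per filter
        total += kernel * kernel * channels * filters + filters
        channels = filters
        # MaxPooling2D() with its default 2x2 window halves the height and width
        size //= 2
    flat = size * size * channels
    total += flat * dense_units + dense_units          # Dense(128)
    total += dense_units * num_classes + num_classes   # Dense(len(class_names))
    return size, flat, total


print(count_parameters())  # (7, 3136, 499492)
```

Under these assumptions most of the parameters sit in the first dense layer (3136 x 128 weights), which is typical when a `Flatten` layer feeds a fully connected layer.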

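Before the model is ready for training, it needs a few more settings. These are added during the model's compile step:

- Loss function (loss): measures how far the model's predictions are from the true labels during training; training tries to minimize this value.

- Optimizer (optimizer): determines how the model is updated based on the data it sees and its loss function.

- Metrics (metrics): used to monitor the training and testing steps. The example below tracks accuracy, the fraction of images that are classified correctly, alongside precision, recall, and AUC.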
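
To make the role of the loss concrete, here is a minimal pure-Python sketch of categorical cross-entropy for a single sample. It is an illustration rather than the Keras implementation: the function name and the `eps` clipping value are assumptions, and the probability vectors are made up.

```python
import math


def categorical_crossentropy(one_hot, probs, eps=1e-7):
    """Cross-entropy between a one-hot label and predicted class probabilities."""
    # Clip probabilities away from zero so that log() stays finite (eps is an assumed value)
    return -sum(t * math.log(max(p, eps)) for t, p in zip(one_hot, probs))


label = [0, 0, 0, 1]  # one-hot label for the fourth class ('Happy')

print(categorical_crossentropy(label, [0.05, 0.05, 0.10, 0.80]))  # confident and correct: small loss
print(categorical_crossentropy(label, [0.70, 0.10, 0.10, 0.10]))  # confident and wrong: large loss
print(categorical_crossentropy(label, [0.25, 0.25, 0.25, 0.25]))  # uniform guess: ln(4), about 1.386
```

A uniform guess over four classes costs ln(4), roughly 1.386, which is a handy baseline when reading the first-epoch loss of a four-class model.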

"""

For more on evaluation metrics, see: https://mtyjkh.blog.csdn.net/article/details/123786871

"""

METRICS = [

    tf.keras.metrics.TruePositives(name='tp'),

    tf.keras.metrics.FalsePositives(name='fp'),

    tf.keras.metrics.TrueNegatives(name='tn'),

    tf.keras.metrics.FalseNegatives(name='fn'),

    tf.keras.metrics.CategoricalAccuracy(name='accuracy'),

    tf.keras.metrics.Precision(name='precision'),

    tf.keras.metrics.Recall(name='recall'),

    tf.keras.metrics.AUC(name='auc'),

    tf.keras.metrics.AUC(name='prc', curve='PR'),  # precision-recall curve

]

model.compile(optimizer="adam",

              loss='categorical_crossentropy',

              metrics=METRICS)

III. Train the Model

epochs = 10

history = model.fit(

    train_ds,

    validation_data=val_ds,

    epochs=epochs

)

Epoch 1/10

307/307 [==============================] - 250s 810ms/step - loss: 1.1943 - tp: 233.0000 - fp: 250.0000 - tn: 29201.0000 - fn: 9584.0000 - accuracy: 0.3956 - precision: 0.4824 - recall: 0.0237 - auc: 0.6951 - prc: 0.3833 - val_loss: 1.1629 - val_tp: 220.0000 - val_fp: 164.0000 - val_tn: 23431.0000 - val_fn: 7645.0000 - val_accuracy: 0.4356 - val_precision: 0.5729 - val_recall: 0.0280 - val_auc: 0.7283 - val_prc: 0.4336

Epoch 2/10

307/307 [==============================] - 250s 814ms/step - loss: 1.0757 - tp: 2495.0000 - fp: 1399.0000 - tn: 28052.0000 - fn: 7322.0000 - accuracy: 0.5060 - precision: 0.6407 - recall: 0.2542 - auc: 0.7840 - prc: 0.5401 - val_loss: 0.9535 - val_tp: 3113.0000 - val_fp: 1328.0000 - val_tn: 22267.0000 - val_fn: 4752.0000 - val_accuracy: 0.5880 - val_precision: 0.7010 - val_recall: 0.3958 - val_auc: 0.8378 - val_prc: 0.6482

Epoch 3/10

307/307 [==============================] - 244s 795ms/step - loss: 0.9184 - tp: 4262.0000 - fp: 1802.0000 - tn: 27649.0000 - fn: 5555.0000 - accuracy: 0.5994 - precision: 0.7028 - recall: 0.4341 - auc: 0.8488 - prc: 0.6715 - val_loss: 0.8377 - val_tp: 4064.0000 - val_fp: 1476.0000 - val_tn: 22119.0000 - val_fn: 3801.0000 - val_accuracy: 0.6437 - val_precision: 0.7336 - val_recall: 0.5167 - val_auc: 0.8774 - val_prc: 0.7299

Epoch 4/10

307/307 [==============================] - 234s 763ms/step - loss: 0.8155 - tp: 5139.0000 - fp: 1865.0000 - tn: 27586.0000 - fn: 4678.0000 - accuracy: 0.6469 - precision: 0.7337 - recall: 0.5235 - auc: 0.8822 - prc: 0.7363 - val_loss: 0.7145 - val_tp: 4791.0000 - val_fp: 1486.0000 - val_tn: 22109.0000 - val_fn: 3074.0000 - val_accuracy: 0.6941 - val_precision: 0.7633 - val_recall: 0.6092 - val_auc: 0.9105 - val_prc: 0.7961

Epoch 5/10

307/307 [==============================] - 237s 773ms/step - loss: 0.7253 - tp: 5798.0000 - fp: 1812.0000 - tn: 27639.0000 - fn: 4019.0000 - accuracy: 0.6902 - precision: 0.7619 - recall: 0.5906 - auc: 0.9074 - prc: 0.7875 - val_loss: 0.6365 - val_tp: 5219.0000 - val_fp: 1400.0000 - val_tn: 22195.0000 - val_fn: 2646.0000 - val_accuracy: 0.7362 - val_precision: 0.7885 - val_recall: 0.6636 - val_auc: 0.9292 - val_prc: 0.8344

Epoch 6/10

307/307 [==============================] - 242s 789ms/step - loss: 0.6383 - tp: 6470.0000 - fp: 1721.0000 - tn: 27730.0000 - fn: 3347.0000 - accuracy: 0.7332 - precision: 0.7899 - recall: 0.6591 - auc: 0.9286 - prc: 0.8322 - val_loss: 0.6005 - val_tp: 5485.0000 - val_fp: 1401.0000 - val_tn: 22194.0000 - val_fn: 2380.0000 - val_accuracy: 0.7533 - val_precision: 0.7965 - val_recall: 0.6974 - val_auc: 0.9371 - val_prc: 0.8506

Epoch 7/10

307/307 [==============================] - 242s 788ms/step - loss: 0.5609 - tp: 7001.0000 - fp: 1562.0000 - tn: 27889.0000 - fn: 2816.0000 - accuracy: 0.7712 - precision: 0.8176 - recall: 0.7132 - auc: 0.9449 - prc: 0.8669 - val_loss: 0.5107 - val_tp: 5954.0000 - val_fp: 1278.0000 - val_tn: 22317.0000 - val_fn: 1911.0000 - val_accuracy: 0.7898 - val_precision: 0.8233 - val_recall: 0.7570 - val_auc: 0.9541 - val_prc: 0.8882

Epoch 8/10

307/307 [==============================] - 245s 797ms/step - loss: 0.4748 - tp: 7506.0000 - fp: 1395.0000 - tn: 28056.0000 - fn: 2311.0000 - accuracy: 0.8082 - precision: 0.8433 - recall: 0.7646 - auc: 0.9605 - prc: 0.9027 - val_loss: 0.4831 - val_tp: 6182.0000 - val_fp: 1248.0000 - val_tn: 22347.0000 - val_fn: 1683.0000 - val_accuracy: 0.8095 - val_precision: 0.8320 - val_recall: 0.7860 - val_auc: 0.9598 - val_prc: 0.9022

Epoch 9/10

307/307 [==============================] - 240s 782ms/step - loss: 0.3949 - tp: 8014.0000 - fp: 1199.0000 - tn: 28252.0000 - fn: 1803.0000 - accuracy: 0.8442 - precision: 0.8699 - recall: 0.8163 - auc: 0.9722 - prc: 0.9307 - val_loss: 0.4707 - val_tp: 6357.0000 - val_fp: 1213.0000 - val_tn: 22382.0000 - val_fn: 1508.0000 - val_accuracy: 0.8226 - val_precision: 0.8398 - val_recall: 0.8083 - val_auc: 0.9632 - val_prc: 0.9109

Epoch 10/10

307/307 [==============================] - 237s 771ms/step - loss: 0.3336 - tp: 8348.0000 - fp: 1051.0000 - tn: 28400.0000 - fn: 1469.0000 - accuracy: 0.8707 - precision: 0.8882 - recall: 0.8504 - auc: 0.9796 - prc: 0.9484 - val_loss: 0.5240 - val_tp: 6415.0000 - val_fp: 1277.0000 - val_tn: 22318.0000 - val_fn: 1450.0000 - val_accuracy: 0.8234 - val_precision: 0.8340 - val_recall: 0.8156 - val_auc: 0.9597 - val_prc: 0.9036

eva = model.evaluate(test_ds)

print("\nModel accuracy:", eva[5])

61/61 [==============================] - 12s 197ms/step - loss: 0.5483 - tp: 1551.0000 - fp: 357.0000 - tn: 5499.0000 - fn: 401.0000 - accuracy: 0.8038 - precision: 0.8129 - recall: 0.7946 - auc: 0.9557 - prc: 0.8932

Model accuracy: 0.8037909865379333

IV. Evaluate the Model

eva = model.evaluate(test_ds)

print("\nModel accuracy:", eva[5])

61/61 [==============================] - 12s 196ms/step - loss: 0.5606 - tp: 1541.0000 - fp: 365.0000 - tn: 5491.0000 - fn: 411.0000 - accuracy: 0.7992 - precision: 0.8085 - recall: 0.7894 - auc: 0.9541 - prc: 0.8905

Model accuracy: 0.7991803288459778
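
As far as the numbers show, the `tp`, `fp`, `tn`, and `fn` values in the log are counted over every class output of every sample, with each softmax output compared against its one-hot label at the default 0.5 threshold. The four counts therefore sum to four times the number of test images. The sketch below recomputes precision and recall from the evaluation log directly above; `summarize_counts` is a hypothetical helper, not a Keras function.

```python
def summarize_counts(tp, fp, tn, fn, num_classes=4):
    """Return (precision, recall, number_of_samples) from pooled per-class counts."""
    precision = tp / (tp + fp)  # share of predicted positives that are correct
    recall = tp / (tp + fn)     # share of actual positives that were found
    samples = (tp + fp + tn + fn) // num_classes  # one entry per class per sample
    return precision, recall, samples


# Counts copied from the evaluation log above
precision, recall, samples = summarize_counts(tp=1541, fp=365, tn=5491, fn=411)
print(f"precision={precision:.4f} recall={recall:.4f} samples={samples}")
# precision=0.8085 recall=0.7894 samples=1952
```

The 1952 samples match 61 test batches of 32 images, and `tp + fn` equals the sample count because each image has exactly one positive label.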

1. Accuracy and Loss Curves

"""

For more on plotting with Matplotlib, see my column "Matplotlib实例教程" (Matplotlib Tutorial by Example)

Column URL: https://blog.csdn.net/qq_38251616/category_11351625.html

"""

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

[Figure: training and validation accuracy (left) and loss (right) curves, output_22_0.png]

2. Confusion Matrix

from sklearn.metrics import confusion_matrix

import seaborn as sns

import pandas as pd

# Define a function that plots the confusion matrix

def plot_cm(labels, predictions):

    # Build the confusion matrix; listing every class index keeps it square

    # even when a class is missing from the sampled batch

    conf_numpy = confusion_matrix(labels, predictions, labels=list(range(len(class_names))))

    # Convert the matrix to a DataFrame

    conf_df = pd.DataFrame(conf_numpy, index=class_names, columns=class_names)

    plt.figure(figsize=(8, 7))

    plt.suptitle('Follow the WeChat official account (K同学啊) for the source code')

    sns.heatmap(conf_df, annot=True, fmt="d", cmap="icefire_r")

    plt.title('Confusion Matrix', fontsize=15)

    plt.ylabel('True label', fontsize=14)

    plt.xlabel('Predicted label', fontsize=14)

val_pre = []

val_label = []

for images, labels in val_ds.take(1):  # a subset of the validation data (.take(1)) is enough here

    for image, label in zip(images, labels):

        # Add a batch dimension to the image

        img_array = tf.expand_dims(image, 0)

        # Use the model to predict the facial expression in the image

        prediction = model.predict(img_array)

        val_pre.append(np.argmax(prediction))

        val_label.append(np.argmax(label))

plot_cm(val_label, val_pre)
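
For readers who want to see what the confusion matrix actually counts, here is a small pure-Python sketch. The labels and predictions are made up for illustration, and `confusion_matrix_counts` is a hypothetical stand-in for the scikit-learn function used above.

```python
def confusion_matrix_counts(true_labels, predicted_labels, num_classes):
    """Rows are true classes, columns are predicted classes."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for true_index, predicted_index in zip(true_labels, predicted_labels):
        matrix[true_index][predicted_index] += 1
    return matrix


class_names = ['Angry', 'Disgust', 'Fear', 'Happy']
y_true = [0, 0, 2, 3, 3, 3]  # made-up class indices
y_pred = [0, 2, 2, 3, 3, 0]  # made-up predictions

for name, row in zip(class_names, confusion_matrix_counts(y_true, y_pred, len(class_names))):
    print(f"{name:8s}", row)
```

Fixing the matrix size to the number of classes keeps a row and column for 'Disgust' even though no sample of that class appears in this small batch, so the result always lines up with `class_names`.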

3. Loss/Precision/Recall/prc

colors = plt.rcParams['axes.prop_cycle'].by_key()['color']

plt.figure(figsize=(12,8))

def plot_metrics(history):

    metrics = ['loss', 'prc', 'precision', 'recall']

    for n, metric in enumerate(metrics):

        name = metric.replace("_", " ").capitalize()

        plt.subplot(2, 2, n + 1)

        plt.plot(history.epoch, history.history[metric], color=colors[1], label='Train')

        plt.plot(history.epoch, history.history['val_' + metric], color=colors[2],

                 linestyle="--", label='Val')

        plt.xlabel('Epoch', fontsize=12)

        plt.ylabel(name, fontsize=12)

        plt.legend()

plot_metrics(history)

4. ROC Curve

For more on ROC curves, see my article: https://blog.csdn.net/qq_38251616/article/details/122321938

Other examples that apply ROC curves:

- 100 Deep Learning Examples | Day 35: Brain Tumor Recognition

from sklearn.metrics import roc_curve, auc

from tensorflow.keras.utils import to_categorical

def plot_roc(labels, predictions):

    # labels and predictions are arrays of shape (n_samples, n_classes)

    fpr = dict()

    tpr = dict()

    roc_auc = dict()

    for i, item in enumerate(class_names):

        fpr[i], tpr[i], _ = roc_curve(labels[:, i], predictions[:, i])

        roc_auc[i] = auc(fpr[i], tpr[i])

    plt.subplots(figsize=(7, 7))

    for i, item in enumerate(class_names):

        plt.plot(100*fpr[i], 100*tpr[i], label=f'ROC curve {item} (area = {roc_auc[i]:.2f})', linewidth=2, linestyle="--")

    plt.xlabel('False positives [%]', fontsize=12)

    plt.ylabel('True positives [%]', fontsize=12)

    plt.legend(loc="lower right")

    plt.grid(True)

# Call the function. num_classes keeps both arrays at one column per class.

# val_pre holds hard class predictions, so each curve has a single operating point;

# pass the model's softmax probabilities instead for a full curve.

num_classes = len(class_names)

plot_roc(to_categorical(val_label, num_classes), to_categorical(val_pre, num_classes))
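
The call above passes `to_categorical(val_pre)`, that is, hard class predictions rather than probabilities. The pure-Python sketch below, with made-up labels and scores for a single class, shows why that matters: sweeping a threshold over probabilities traces several ROC points, while 0/1 predictions leave only one point between the corners. `roc_points` and `trapezoid_auc` are hypothetical helpers written for this illustration, not scikit-learn functions.

```python
def roc_points(y_true, scores):
    """Return (fpr, tpr) lists obtained by sweeping a threshold from high to low."""
    positives = sum(y_true)
    negatives = len(y_true) - positives
    pairs = sorted(zip(scores, y_true), reverse=True)
    fpr, tpr = [0.0], [0.0]
    tp = fp = 0
    for index, (score, label) in enumerate(pairs):
        tp += label
        fp += 1 - label
        # Emit a point only after the last sample sharing this score (handles ties)
        if index + 1 == len(pairs) or pairs[index + 1][0] != score:
            fpr.append(fp / negatives)
            tpr.append(tp / positives)
    return fpr, tpr


def trapezoid_auc(fpr, tpr):
    """Area under the piecewise-linear curve through the ROC points."""
    return sum((fpr[i] - fpr[i - 1]) * (tpr[i] + tpr[i - 1]) / 2 for i in range(1, len(fpr)))


y_true = [1, 1, 0, 1, 0, 0]              # made-up binary labels for one class
probs = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]   # made-up predicted probabilities
hard = [1 if p >= 0.5 else 0 for p in probs]

fpr_p, tpr_p = roc_points(y_true, probs)
fpr_h, tpr_h = roc_points(y_true, hard)
print(len(fpr_p), round(trapezoid_auc(fpr_p, tpr_p), 4))  # 7 0.8889
print(len(fpr_h), round(trapezoid_auc(fpr_h, tpr_h), 4))  # 3 0.8333
```

With probabilities the curve has seven points; with hard predictions it collapses to the two corners plus a single operating point, and the reported area changes accordingly.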