YOLOv8 OpenVINO quantization and deployment

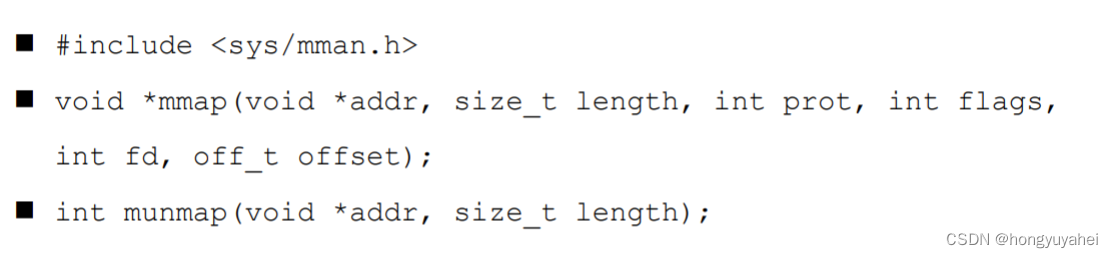

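Environment setup (the quantization script below also needs `nncf`):

```bash
pip install ultralytics && pip install openvino-dev
pip install nncf
```

The code in this post uses the `ultralytics.yolo.*` module paths of ultralytics 8.0.x. Later releases moved these modules (for example to `ultralytics.utils`), so either install an 8.0.x release or adjust the imports.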

Convert the PyTorch model to an OpenVINO model:

```python
from ultralytics import YOLO

# Load a model
model = YOLO("./yolov8n.pt")  # load an official model

# Export the model (writes the OpenVINO IR into yolov8n_openvino_model/)
model.export(format="openvino")
```

Python quantization script (adapted from https://github.com/openvinotoolkit/openvino_notebooks/blob/main/notebooks/230-yolov8-optimization/230-yolov8-optimization.ipynb):

```python
from pathlib import Path
import random

from PIL import Image
from ultralytics import YOLO

DET_MODEL_NAME = "yolov8n"

det_model = YOLO(f"{DET_MODEL_NAME}.pt")
label_map = det_model.model.names

# Object detection model: export to OpenVINO IR with FP16 weights
det_model_path = Path(f"{DET_MODEL_NAME}_openvino_model/{DET_MODEL_NAME}.xml")
det_model.export(format="openvino", half=True)
```

```python
from typing import Tuple

import cv2
import numpy as np
import torch
from ultralytics.yolo.utils import ops  # ultralytics 8.0.x path; newer releases: from ultralytics.utils import ops


def letterbox(img: np.ndarray, new_shape: Tuple[int, int] = (640, 640), color: Tuple[int, int, int] = (114, 114, 114),
              auto: bool = False, scale_fill: bool = False, scaleup: bool = False, stride: int = 32):
    """
    Resize and pad an image for detection. Resizes the image to fit into new_shape while keeping
    the original aspect ratio, then pads it to meet stride-multiple constraints.

    Parameters:
        img (np.ndarray): image for preprocessing
        new_shape (Tuple(int, int)): image size after preprocessing in format [height, width]
        color (Tuple(int, int, int)): color for filling the padded area
        auto (bool): use dynamic input size; only padding for the stride constraint is applied
        scale_fill (bool): stretch the image to fill new_shape
        scaleup (bool): allow upscaling if the image is smaller than the desired input size; can affect model accuracy
        stride (int): input padding stride
    Returns:
        img (np.ndarray): image after preprocessing
        ratio (Tuple(float, float)): width and height scaling ratio
        padding_size (Tuple(float, float)): width and height padding size (per side)
    """
    # Resize and pad image while meeting stride-multiple constraints
    shape = img.shape[:2]  # current shape [height, width]
    if isinstance(new_shape, int):
        new_shape = (new_shape, new_shape)

    # Scale ratio (new / old)
    r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])
    if not scaleup:  # only scale down, do not scale up (for better test mAP)
        r = min(r, 1.0)

    # Compute padding
    ratio = r, r  # width, height ratios
    new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))
    dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]  # wh padding
    if auto:  # minimum rectangle
        dw, dh = np.mod(dw, stride), np.mod(dh, stride)  # wh padding
    elif scale_fill:  # stretch
        dw, dh = 0.0, 0.0
        new_unpad = (new_shape[1], new_shape[0])
        ratio = new_shape[1] / shape[1], new_shape[0] / shape[0]  # width, height ratios

    dw /= 2  # divide padding into 2 sides
    dh /= 2

    if shape[::-1] != new_unpad:  # resize
        img = cv2.resize(img, new_unpad, interpolation=cv2.INTER_LINEAR)
    top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
    left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
    img = cv2.copyMakeBorder(img, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color)  # add border
    return img, ratio, (dw, dh)
```
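A quick way to sanity-check the letterbox arithmetic is to run only its geometry, without OpenCV. `letterbox_geometry` below is a hypothetical standalone helper written for illustration (it is not part of ultralytics); it mirrors `letterbox()` with its default arguments (`auto=False`, `scale_fill=False`, `scaleup=False`).

```python
def letterbox_geometry(h, w, new_h=640, new_w=640, scaleup=False):
    """Hypothetical helper: reproduces only the size/padding arithmetic of letterbox().

    Returns the scale ratio, the resized size as (width, height) and the
    (top, bottom, left, right) border widths that letterbox() passes to cv2.copyMakeBorder.
    """
    r = min(new_h / h, new_w / w)
    if not scaleup:  # only scale down, as with letterbox(scaleup=False)
        r = min(r, 1.0)
    new_unpad = (int(round(w * r)), int(round(h * r)))  # (width, height) after resize
    dw = (new_w - new_unpad[0]) / 2  # padding per side, horizontal
    dh = (new_h - new_unpad[1]) / 2  # padding per side, vertical
    top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
    left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
    return r, new_unpad, (top, bottom, left, right)


r, size, pad = letterbox_geometry(1080, 810)
print(round(r, 4), size, pad)  # 0.5926 (480, 640) (0, 0, 80, 80)
```

For a 1080x810 (height x width) image the scale is 640/1080, the image is resized to 480x640 (width x height) and 80 pixels are added on the left and on the right. When the total padding is odd, the ±0.1 before rounding puts the extra pixel on the bottom or right side.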

```python
def preprocess_image(img0: np.ndarray):
    """
    Preprocess image according to YOLOv8 input requirements.
    Takes an image in np.array format, resizes it to the model input size with letterbox resize
    and changes the data layout from HWC to CHW.

    Parameters:
        img0 (np.ndarray): image for preprocessing
    Returns:
        img (np.ndarray): image after preprocessing
    """
    # resize
    img = letterbox(img0)[0]

    # Convert HWC to CHW
    img = img.transpose(2, 0, 1)
    img = np.ascontiguousarray(img)
    return img


def image_to_tensor(image: np.ndarray):
    """
    Convert a preprocessed CHW uint8 image into the model input tensor:
    casts to float32, scales values to [0, 1] and adds a batch dimension.

    Parameters:
        image (np.ndarray): preprocessed image in CHW layout
    Returns:
        input_tensor (np.ndarray): input tensor in NCHW format with float32 values in [0, 1] range
    """
    input_tensor = image.astype(np.float32)  # uint8 to fp32
    input_tensor /= 255.0  # 0 - 255 to 0.0 - 1.0

    # add batch dimension
    if input_tensor.ndim == 3:
        input_tensor = np.expand_dims(input_tensor, 0)
    return input_tensor
```
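The layout change done by `preprocess_image()` and `image_to_tensor()` (and, in C++, by a hand-written HWC to CHW copy) can be checked in isolation with NumPy. This sketch skips the letterbox step and uses a random image; `to_nchw_tensor` is a hypothetical name. An image read with PIL, as in this script, is already RGB, whereas OpenCV frames are BGR and would need a channel swap first.

```python
import numpy as np


def to_nchw_tensor(img_hwc: np.ndarray) -> np.ndarray:
    """Hypothetical helper: HWC uint8 image -> 1xCxHxW float32 tensor with values in [0, 1]."""
    chw = np.ascontiguousarray(img_hwc.transpose(2, 0, 1))  # HWC -> CHW
    tensor = chw.astype(np.float32) / 255.0                  # uint8 -> fp32, 0-255 -> 0.0-1.0
    return np.expand_dims(tensor, 0)                         # add batch dimension


rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(640, 640, 3), dtype=np.uint8)
t = to_nchw_tensor(img)
print(t.shape, t.dtype)  # (1, 3, 640, 640) float32

# Pixel (row 5, col 7), channel 2 ends up at t[0, 2, 5, 7]
assert np.isclose(t[0, 2, 5, 7], img[5, 7, 2] / 255.0)
```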

```python
def postprocess(
    pred_boxes: np.ndarray,
    input_hw: Tuple[int, int],
    orig_img: np.ndarray,
    min_conf_threshold: float = 0.25,
    nms_iou_threshold: float = 0.7,
    agnostic_nms: bool = False,
    max_detections: int = 300,
    pred_masks: np.ndarray = None,
    retina_mask: bool = False
):
    """
    YOLOv8 model postprocessing function. Applies non-maximum suppression to the detections
    and rescales boxes to the original image size.

    Parameters:
        pred_boxes (np.ndarray): model output prediction boxes
        input_hw (Tuple[int, int]): preprocessed (model input) image size as (height, width)
        orig_img (np.ndarray): image before preprocessing
        min_conf_threshold (float, *optional*, 0.25): minimal accepted confidence for object filtering
        nms_iou_threshold (float, *optional*, 0.7): IoU above which overlapping boxes are removed as duplicates in NMS
        agnostic_nms (bool, *optional*, False): apply class-agnostic NMS or not
        max_detections (int, *optional*, 300): maximum detections after NMS
        pred_masks (np.ndarray, *optional*, None): model output prediction masks; if not provided, only boxes are postprocessed
        retina_mask (bool, *optional*, False): retina mask postprocessing instead of native decoding
    Returns:
        pred (List[Dict[str, np.ndarray]]): one dictionary per batch element, with det - detected boxes
            in format [x1, y1, x2, y2, score, label] and segment - segmentation polygons
    """
    nms_kwargs = {"agnostic": agnostic_nms, "max_det": max_detections}
    # if pred_masks is not None:
    #     nms_kwargs["nm"] = 32
    preds = ops.non_max_suppression(
        torch.from_numpy(pred_boxes),
        min_conf_threshold,
        nms_iou_threshold,
        nc=80,
        **nms_kwargs
    )
    results = []
    proto = torch.from_numpy(pred_masks) if pred_masks is not None else None

    for i, pred in enumerate(preds):
        shape = orig_img[i].shape if isinstance(orig_img, list) else orig_img.shape
        if not len(pred):
            results.append({"det": [], "segment": []})
            continue
        if proto is None:
            # Detection-only model: rescale boxes from the letterboxed input to the original image
            pred[:, :4] = ops.scale_boxes(input_hw, pred[:, :4], shape).round()
            results.append({"det": pred})
            continue
        if retina_mask:
            pred[:, :4] = ops.scale_boxes(input_hw, pred[:, :4], shape).round()
            masks = ops.process_mask_native(proto[i], pred[:, 6:], pred[:, :4], shape[:2])  # HWC
            segments = [ops.scale_segments(input_hw, x, shape, normalize=False) for x in ops.masks2segments(masks)]
        else:
            masks = ops.process_mask(proto[i], pred[:, 6:], pred[:, :4], input_hw, upsample=True)
            pred[:, :4] = ops.scale_boxes(input_hw, pred[:, :4], shape).round()
            segments = [ops.scale_segments(input_hw, x, shape, normalize=False) for x in ops.masks2segments(masks)]
        results.append({"det": pred[:, :6].numpy(), "segment": segments})
    return results
```
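`ops.scale_boxes` maps boxes from the 640x640 letterboxed input back to the original image by undoing the padding and then the resize. The sketch below shows the same idea in plain NumPy for xyxy boxes; `unletterbox_boxes` is a hypothetical helper, and ultralytics' own implementation may differ in details such as rounding of the padding. As far as I can tell, `ops.scale_boxes` also recomputes the gain as min(640/h, 640/w); that matches `letterbox()` (called here with `scaleup=False`) only when the longer side of the image is at least 640 px.

```python
import numpy as np


def unletterbox_boxes(boxes, input_hw, orig_hw):
    """Hypothetical helper: map xyxy boxes from the letterboxed model input back to the original image.

    input_hw / orig_hw are (height, width). Assumes the centered padding produced by letterbox().
    """
    boxes = np.array(boxes, dtype=np.float64)  # copy, so the caller's data is untouched
    gain = min(input_hw[0] / orig_hw[0], input_hw[1] / orig_hw[1])  # resize ratio used by letterbox
    pad_x = (input_hw[1] - orig_hw[1] * gain) / 2  # left padding
    pad_y = (input_hw[0] - orig_hw[0] * gain) / 2  # top padding
    boxes[:, [0, 2]] -= pad_x
    boxes[:, [1, 3]] -= pad_y
    boxes[:, :4] /= gain
    boxes[:, [0, 2]] = boxes[:, [0, 2]].clip(0, orig_hw[1])  # clip to image width
    boxes[:, [1, 3]] = boxes[:, [1, 3]].clip(0, orig_hw[0])  # clip to image height
    return boxes


# 1080x810 (h x w) image letterboxed to 640x640: gain = 640/1080, 80 px padding left and right
print(unletterbox_boxes([[80, 0, 560, 640], [180, 100, 280, 200]], (640, 640), (1080, 810)))
```

The first box covers the whole non-padded area and maps back to the full 810x1080 image; the second maps to roughly (168.75, 168.75, 337.5, 337.5).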

```python
from typing import Tuple, Dict

import cv2
import numpy as np
from PIL import Image
from ultralytics.yolo.utils.plotting import colors


def plot_one_box(box: np.ndarray, img: np.ndarray, color: Tuple[int, int, int] = None, mask: np.ndarray = None,
                 label: str = None, line_thickness: int = 5):
    """
    Helper function for drawing a single bounding box on an image

    Parameters:
        box (np.ndarray): bounding box coordinates in format [x1, y1, x2, y2]
        img (np.ndarray): input image
        color (Tuple[int, int, int], *optional*, None): color for drawing the box; if not specified, it is selected randomly
        mask (np.ndarray, *optional*, None): instance segmentation mask polygon in format [N, 2], where N is the number
            of points in the contour; if not provided, only the box is drawn
        label (str, *optional*, None): box label string; if not provided, no label is drawn
        line_thickness (int, *optional*, 5): thickness for box drawing lines
    """
    # Plots one bounding box on image img
    tl = line_thickness or round(0.002 * (img.shape[0] + img.shape[1]) / 2) + 1  # line/font thickness
    color = color or [random.randint(0, 255) for _ in range(3)]
    c1, c2 = (int(box[0]), int(box[1])), (int(box[2]), int(box[3]))
    cv2.rectangle(img, c1, c2, color, thickness=tl, lineType=cv2.LINE_AA)
    if label:
        tf = max(tl - 1, 1)  # font thickness
        t_size = cv2.getTextSize(label, 0, fontScale=tl / 3, thickness=tf)[0]
        c2 = c1[0] + t_size[0], c1[1] - t_size[1] - 3
        cv2.rectangle(img, c1, c2, color, -1, cv2.LINE_AA)  # filled
        cv2.putText(img, label, (c1[0], c1[1] - 2), 0, tl / 3, [225, 255, 255], thickness=tf, lineType=cv2.LINE_AA)
    if mask is not None:
        image_with_mask = img.copy()
        cv2.fillPoly(image_with_mask, pts=[mask.astype(int)], color=color)
        img = cv2.addWeighted(img, 0.5, image_with_mask, 0.5, 1)
    return img


def draw_results(results: Dict, source_image: np.ndarray, label_map: Dict):
    """
    Helper function for drawing bounding boxes on an image

    Parameters:
        results (Dict): detection results; "det" holds predictions in format [x1, y1, x2, y2, score, label_id],
            the optional "segment" holds mask polygons
        source_image (np.ndarray): input image for drawing
        label_map (Dict[int, str]): label_id to class name mapping
    Returns:
        source_image (np.ndarray): image with the detections drawn on it
    """
    boxes = results["det"]
    masks = results.get("segment")
    h, w = source_image.shape[:2]
    for idx, (*xyxy, conf, lbl) in enumerate(boxes):
        label = f"{label_map[int(lbl)]} {conf:.2f}"
        mask = masks[idx] if masks is not None else None
        source_image = plot_one_box(xyxy, source_image, mask=mask, label=label, color=colors(int(lbl)), line_thickness=1)
    return source_image
```

```python
from openvino.runtime import Core, Model

core = Core()
det_ov_model = core.read_model(det_model_path)
device = "CPU"  # "GPU"
if device != "CPU":
    det_ov_model.reshape({0: [1, 3, 640, 640]})
det_compiled_model = core.compile_model(det_ov_model, device)


def detect(image: np.ndarray, model: Model):
    """
    OpenVINO YOLOv8 model inference function. Preprocesses the image, runs model inference
    and postprocesses the results using NMS.

    Parameters:
        image (np.ndarray): input image.
        model (Model): OpenVINO compiled model.
    Returns:
        detections (List[Dict]): one dictionary per image; "det" holds detected boxes in format
            [x1, y1, x2, y2, score, label]
    """
    num_outputs = len(model.outputs)
    preprocessed_image = preprocess_image(image)
    input_tensor = image_to_tensor(preprocessed_image)
    result = model(input_tensor)
    boxes = result[model.output(0)]
    masks = None
    if num_outputs > 1:  # segmentation models have a second (mask prototype) output
        masks = result[model.output(1)]
    input_hw = input_tensor.shape[2:]
    detections = postprocess(pred_boxes=boxes, input_hw=input_hw, orig_img=image, pred_masks=masks)
    return detections


IMAGE_PATH = "bus.jpg"
input_image = np.array(Image.open(IMAGE_PATH))  # RGB, HWC, uint8
detections = detect(input_image, det_compiled_model)[0]
image_with_boxes = draw_results(detections, input_image, label_map)
res = Image.fromarray(image_with_boxes)
res.save("res.jpg")
```

```python
from ultralytics.yolo.utils import DEFAULT_CFG
from ultralytics.yolo.cfg import get_cfg
from ultralytics.yolo.data.utils import check_det_dataset

OUT_DIR = Path("./datasets")

# Build a YOLOv8 validator on coco128 (./datasets/coco128.yaml and the coco128 images must already exist);
# its dataloader supplies the calibration images for quantization
args = get_cfg(cfg=DEFAULT_CFG)
args.data = str(OUT_DIR / "coco128.yaml")
det_validator = det_model.ValidatorClass(args=args)
det_validator.data = check_det_dataset(args.data)
det_validator.is_coco = True
det_validator.class_map = ops.coco80_to_coco91_class()
det_validator.names = det_model.model.names
det_validator.metrics.names = det_validator.names
det_validator.nc = det_model.model.model[-1].nc

det_data_loader = det_validator.get_dataloader("datasets/coco128", 1)
```

```python
import nncf  # noqa: F811
from typing import Dict


def transform_fn(data_item: Dict):
    """
    Quantization transform function. Extracts and preprocesses input data from a dataloader item for quantization.

    Parameters:
        data_item: Dict with data item produced by DataLoader during iteration
    Returns:
        input_tensor: Input data for quantization
    """
    input_tensor = det_validator.preprocess(data_item)["img"].numpy()
    return input_tensor


quantization_dataset = nncf.Dataset(det_data_loader, transform_fn)

# Detection model
quantized_det_model = nncf.quantize(
    det_ov_model,
    quantization_dataset,
    preset=nncf.QuantizationPreset.MIXED,
)
```
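`nncf.quantize` runs the calibration images through the model to collect value ranges and then inserts quantize/dequantize operations so that weights and activations are represented as 8-bit integers. With the `MIXED` preset, weights are quantized symmetrically and activations asymmetrically. The NumPy sketch below illustrates those two schemes on one tensor each. It is a simplified per-tensor illustration, not NNCF's implementation (NNCF typically uses per-channel scales for weights and its own range estimators), and the helper names are hypothetical.

```python
import numpy as np


def quantize_symmetric(x: np.ndarray, num_bits: int = 8):
    """Symmetric signed quantization (hypothetical helper): 0.0 maps to 0, scale taken from max |x|."""
    qmax = 2 ** (num_bits - 1) - 1                      # 127 for int8
    scale = max(float(np.abs(x).max()), 1e-12) / qmax
    q = np.clip(np.round(x / scale), -qmax - 1, qmax).astype(np.int8)
    return q, scale


def quantize_asymmetric(x: np.ndarray, num_bits: int = 8):
    """Asymmetric unsigned quantization (hypothetical helper): [min, max] mapped onto [0, 255] via a zero point."""
    qmax = 2 ** num_bits - 1                            # 255 for uint8
    lo, hi = min(float(x.min()), 0.0), max(float(x.max()), 0.0)  # the range must contain 0
    scale = max(hi - lo, 1e-12) / qmax
    zero_point = int(round(-lo / scale))
    q = np.clip(np.round(x / scale) + zero_point, 0, qmax).astype(np.uint8)
    return q, scale, zero_point


rng = np.random.default_rng(0)
weights = rng.normal(0.0, 0.1, size=1000).astype(np.float32)
activations = rng.uniform(-1.0, 6.0, size=1000).astype(np.float32)

qw, sw = quantize_symmetric(weights)
qa, sa, za = quantize_asymmetric(activations)

# Dequantize and compare: the rounding error stays within about half a quantization step
w_hat = qw.astype(np.float32) * sw
a_hat = (qa.astype(np.float32) - za) * sa
print(np.abs(weights - w_hat).max(), sw / 2)
print(np.abs(activations - a_hat).max(), sa / 2)
```

The asymmetric scheme spends all 256 levels on the actual [min, max] range, which suits activations that are mostly positive; the symmetric scheme keeps zero exactly at 0, which is cheaper for weight arithmetic.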

```python
from openvino.runtime import serialize

int8_model_det_path = Path(f"{DET_MODEL_NAME}_openvino_int8_model/{DET_MODEL_NAME}.xml")
print(f"Quantized detection model will be saved to {int8_model_det_path}")
serialize(quantized_det_model, str(int8_model_det_path))

if device != "CPU":
    quantized_det_model.reshape({0: [1, 3, 640, 640]})  # dict {input index: shape}, as for the FP16 model
quantized_det_compiled_model = core.compile_model(quantized_det_model, device)

# Run the INT8 model on the same image for comparison
input_image = np.array(Image.open(IMAGE_PATH))
detections = detect(input_image, quantized_det_compiled_model)[0]
image_with_boxes = draw_results(detections, input_image, label_map)
res = Image.fromarray(image_with_boxes)
res.save("res_int8.jpg")
```

Python inference:

```python
import time

import cv2
import numpy as np
from openvino.runtime import Core
from ultralytics.yolo.utils import ROOT, yaml_load
from ultralytics.yolo.utils.checks import check_yaml

MODEL_NAME = "yolov8n-int8"  # expects the quantized IR as yolov8n-int8.xml/.bin in the working directory

CLASSES = yaml_load(check_yaml("coco128.yaml"))["names"]
colors = np.random.uniform(0, 255, size=(len(CLASSES), 3))


def draw_bounding_box(img, class_id, confidence, x, y, x_plus_w, y_plus_h):
    label = f"{CLASSES[class_id]} ({confidence:.2f})"
    color = colors[class_id]
    cv2.rectangle(img, (x, y), (x_plus_w, y_plus_h), color, 2)
    cv2.putText(img, label, (x - 10, y - 10), cv2.FONT_HERSHEY_SIMPLEX, 0.5, color, 2)


# Create the Core object
core = Core()
# Load and compile the model
net = core.compile_model(f"{MODEL_NAME}.xml", device_name="CPU")
# Get the model output node
output_node = net.outputs[0]  # yolov8n has a single output node
ir = net.create_infer_request()

cap = cv2.VideoCapture("test.mp4")
while True:
    start = time.time()
    ret, frame = cap.read()
    if not ret:
        break

    # Pad the frame to a square (top-left aligned) so a single scale factor maps 640x640 back to the frame
    [height, width, _] = frame.shape
    length = max((height, width))
    image = np.zeros((length, length, 3), np.uint8)
    image[0:height, 0:width] = frame
    scale = length / 640

    blob = cv2.dnn.blobFromImage(image, scalefactor=1 / 255, size=(640, 640), swapRB=True)
    outputs = ir.infer(blob)[output_node]            # (1, 84, 8400)
    outputs = np.array([cv2.transpose(outputs[0])])  # (1, 8400, 84)
    rows = outputs.shape[1]

    boxes = []
    scores = []
    class_ids = []
    for i in range(rows):
        classes_scores = outputs[0][i][4:]
        (minScore, maxScore, minClassLoc, (x, maxClassIndex)) = cv2.minMaxLoc(classes_scores)
        if maxScore >= 0.25:
            # (cx, cy, w, h) -> (x, y, w, h) with (x, y) the top-left corner
            box = [outputs[0][i][0] - (0.5 * outputs[0][i][2]), outputs[0][i][1] - (0.5 * outputs[0][i][3]),
                   outputs[0][i][2], outputs[0][i][3]]
            boxes.append(box)
            scores.append(maxScore)
            class_ids.append(maxClassIndex)

    result_boxes = cv2.dnn.NMSBoxes(boxes, scores, 0.25, 0.45, 0.5)
    for i in range(len(result_boxes)):
        index = result_boxes[i]
        box = boxes[index]
        draw_bounding_box(frame, class_ids[index], scores[index], round(box[0] * scale), round(box[1] * scale),
                          round((box[0] + box[2]) * scale), round((box[1] + box[3]) * scale))
    end = time.time()

    # show FPS
    fps = (1 / (end - start))
    fps_label = "Throughput: %.2f FPS" % fps
    cv2.putText(frame, fps_label, (10, 25), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 0, 255), 2)
    cv2.imshow("YOLOv8 OpenVINO Infer Demo on AIxBoard", frame)

    # press any key to stop
    if cv2.waitKey(1) > -1:
        break

# Release resources both when the video ends and when a key is pressed
cap.release()
cv2.destroyAllWindows()
```
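The per-row Python loop above is easy to follow but slow for 8400 candidates. The same decoding can be vectorized with NumPy. The sketch below, with the hypothetical name `decode_yolov8`, takes the raw (1, 84, 8400) output and returns [x, y, w, h] boxes (top-left corner, the format `cv2.dnn.NMSBoxes` expects) already multiplied by `scale`. Because the frame is padded to a square at the top-left, multiplying by `scale = length / 640` is enough to map back to frame coordinates; there is no padding offset to subtract.

```python
import numpy as np


def decode_yolov8(output: np.ndarray, conf_threshold: float = 0.25, scale: float = 1.0):
    """Hypothetical vectorized decoder for a raw YOLOv8 detection output of shape (1, 4 + num_classes, num_anchors).

    Rows 0-3 hold cx, cy, w, h in network-input pixels and the remaining rows hold per-class scores
    (YOLOv8 has no separate objectness score). Returns [x, y, w, h] boxes (top-left corner) multiplied
    by `scale`, plus the best score and class id of every candidate whose best score >= conf_threshold.
    """
    preds = output[0].T                              # (num_anchors, 4 + num_classes)
    class_scores = preds[:, 4:]
    class_ids = class_scores.argmax(axis=1)
    scores = class_scores.max(axis=1)
    keep = scores >= conf_threshold
    cx, cy, w, h = preds[keep, :4].T
    boxes = np.stack([cx - w / 2, cy - h / 2, w, h], axis=1) * scale
    return boxes, scores[keep], class_ids[keep]


# Synthetic output with a single confident candidate (index 5, class 2)
out = np.zeros((1, 84, 8400), dtype=np.float32)
out[0, :4, 5] = [320, 320, 100, 50]
out[0, 4 + 2, 5] = 0.9
boxes, scores, ids = decode_yolov8(out, conf_threshold=0.25, scale=2.0)
print(boxes, ids)  # [[540. 590. 200. 100.]] [2]
```

`boxes.tolist()` and `scores.tolist()` can then be passed to `cv2.dnn.NMSBoxes` as in the loop. Scaling before NMS does not change which boxes survive, because IoU is invariant to a uniform scale.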

C++ inference (compile_model failed when OpenVINO read the .xml file, for reasons not yet determined, so the ONNX model is used for inference instead):

```cpp
#include <algorithm>
#include <iostream>
#include <string>
#include <vector>

#include <opencv2/opencv.hpp>
#include <openvino/openvino.hpp>

// Dataset labels: the official yolov8n.onnx model is trained on the 80 COCO classes
std::vector<std::string> class_names = { "person", "bicycle", "car", "motorbike", "aeroplane", "bus", "train", "truck", "boat", "traffic light","fire hydrant",
    "stop sign", "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow", "elephant", "bear", "zebra", "giraffe","backpack", "umbrella",
    "handbag", "tie", "suitcase", "frisbee", "skis", "snowboard", "sports ball", "kite", "baseball bat", "baseball glove","skateboard", "surfboard",
    "tennis racket", "bottle", "wine glass", "cup", "fork", "knife", "spoon", "bowl", "banana", "apple", "sandwich", "orange","broccoli", "carrot",
    "hot dog", "pizza", "donut", "cake", "chair", "sofa", "pottedplant", "bed", "diningtable", "toilet", "tvmonitor", "laptop", "mouse","remote",
    "keyboard", "cell phone", "microwave", "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors", "teddy bear", "hair drier", "toothbrush" };

/* @brief Fill the image input node of the network, i.e. feed the image data into the network
   @param input_tensor tensor of the input node
   @param input_image  input image data (float32, 3 channels, already resized to the input size)
*/
void fill_tensor_data_image(ov::Tensor& input_tensor, const cv::Mat& input_image)
{
    // Get the image size required by the input node
    ov::Shape tensor_shape = input_tensor.get_shape();
    const size_t width = tensor_shape[3];     // required input width
    const size_t height = tensor_shape[2];    // required input height
    const size_t channels = tensor_shape[1];  // required number of channels
    // Get the pointer to the node's data memory
    float* input_tensor_data = input_tensor.data<float>();
    // Copy the image data into the network input
    // The image data is laid out as H, W, C; the input requires C, H, W
    for (size_t c = 0; c < channels; c++)
    {
        for (size_t h = 0; h < height; h++)
        {
            for (size_t w = 0; w < width; w++)
            {
                input_tensor_data[c * width * height + h * width + w] = input_image.at<cv::Vec<float, 3>>(h, w)[c];
            }
        }
    }
}

int main(int argc, char* argv[])
{
    ov::Core ie;
    ov::CompiledModel compiled_model = ie.compile_model("yolov8n.onnx", "CPU");  // AUTO GPU CPU
    ov::InferRequest infer_request = compiled_model.create_infer_request();
    ov::Tensor input_image_tensor = infer_request.get_tensor("images");  // input node tensor
    int input_h = input_image_tensor.get_shape()[2];  // height of the "images" node
    int input_w = input_image_tensor.get_shape()[3];  // width of the "images" node
    //int input_c = input_image_tensor.get_shape()[1];  // channels of the "images" node
    ov::Tensor output_tensor = infer_request.get_tensor("output0");  // output node tensor
    int out_rows = output_tensor.get_shape()[1];  // rows of "output0": 84 (4 box values + 80 class scores)
    int out_cols = output_tensor.get_shape()[2];  // columns of "output0": 8400 candidates

    cv::VideoCapture cap("test.mp4");
    if (!cap.isOpened())
    {
        std::cerr << "Error opening video file" << std::endl;
        return -1;
    }

    cv::Mat frame;
    while (true)
    {
        cap.read(frame);
        if (frame.empty())
            break;
        int64 start = cv::getTickCount();

        // Pad the frame to a square (top-left aligned), as in the Python version
        int w = frame.cols;
        int h = frame.rows;
        int _max = std::max(h, w);
        cv::Mat image = cv::Mat::zeros(cv::Size(_max, _max), CV_8UC3);
        frame.copyTo(image(cv::Rect(0, 0, w, h)));
        cvtColor(image, image, cv::COLOR_BGR2RGB);
        // Floating-point division: int / int would truncate the scale factors
        float x_factor = static_cast<float>(image.cols) / input_w;
        float y_factor = static_cast<float>(image.rows) / input_h;

        cv::Mat blob_image;
        cv::resize(image, blob_image, cv::Size(input_w, input_h));
        blob_image.convertTo(blob_image, CV_32F);
        blob_image = blob_image / 255.0;
        fill_tensor_data_image(input_image_tensor, blob_image);  // copy the image data into the tensor memory
        infer_request.infer();  // run inference
        const ov::Tensor& output_tensor = infer_request.get_tensor("output0");  // get the inference result
        cv::Mat det_output(out_rows, out_cols, CV_32F, (float*)output_tensor.data());  // view the result as an 84 x 8400 matrix

        std::vector<cv::Rect> boxes;
        std::vector<int> classIds;
        std::vector<float> confidences;
        // Each column is one candidate: rows 0-3 are cx, cy, w, h; rows 4-83 are the 80 class scores
        for (int i = 0; i < det_output.cols; i++)
        {
            cv::Mat classes_scores = det_output.col(i).rowRange(4, 84);
            cv::Point classIdPoint;
            double score;
            cv::minMaxLoc(classes_scores, 0, &score, 0, &classIdPoint);
            if (score > 0.8)  // confidence threshold
            {
                float cx = det_output.at<float>(0, i);
                float cy = det_output.at<float>(1, i);
                float ow = det_output.at<float>(2, i);
                float oh = det_output.at<float>(3, i);
                int x = static_cast<int>((cx - 0.5 * ow) * x_factor);
                int y = static_cast<int>((cy - 0.5 * oh) * y_factor);
                int width = static_cast<int>(ow * x_factor);
                int height = static_cast<int>(oh * y_factor);
                cv::Rect box;
                box.x = x;
                box.y = y;
                box.width = width;
                box.height = height;
                boxes.push_back(box);
                // classes_scores is an 80x1 column, so the class index is the row (y) of the maximum
                classIds.push_back(classIdPoint.y);
                confidences.push_back(score);
            }
        }

        std::vector<int> indexes;
        cv::dnn::NMSBoxes(boxes, confidences, 0.25, 0.45, indexes);
        for (size_t i = 0; i < indexes.size(); i++)
        {
            int index = indexes[i];
            int idx = classIds[index];
            float iscore = confidences[index];
            cv::rectangle(frame, boxes[index], cv::Scalar(0, 0, 255), 2, 8);
            cv::rectangle(frame, cv::Point(boxes[index].tl().x, boxes[index].tl().y - 20), cv::Point(boxes[index].br().x, boxes[index].tl().y), cv::Scalar(0, 255, 255), -1);
            std::string nameText = class_names[idx];
            nameText.append(" " + std::to_string(iscore));
            cv::putText(frame, nameText, cv::Point(boxes[index].tl().x, boxes[index].tl().y - 10), cv::FONT_HERSHEY_SIMPLEX, .5, cv::Scalar(0, 0, 0));
        }

        float t = (cv::getTickCount() - start) / static_cast<float>(cv::getTickFrequency());
        cv::putText(frame, cv::format("FPS: %.2f", 1.0 / t), cv::Point(20, 40), cv::FONT_HERSHEY_PLAIN, 2.0, cv::Scalar(255, 0, 0), 2, 8);
        cv::imshow("YOLOv8 OpenVINO", frame);
        cv::waitKey(1);
    }
    return 0;
}
```
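Both the Python and the C++ versions delegate duplicate removal to OpenCV's `NMSBoxes`. To see what that step does, here is a plain greedy NMS in NumPy over [x, y, w, h] boxes. It is a sketch in the spirit of `NMSBoxes`, not a line-by-line port (OpenCV's boundary conventions, such as `>` versus `>=` on the score threshold, may differ), and `nms_xywh` is a hypothetical name. Note that the C++ loop above already discards candidates scoring 0.8 or below, so the 0.25 score threshold passed to `NMSBoxes` has no further effect there.

```python
import numpy as np


def nms_xywh(boxes, scores, score_threshold=0.25, iou_threshold=0.45):
    """Hypothetical greedy NMS on [x, y, w, h] boxes; returns kept indices in descending score order."""
    boxes = np.asarray(boxes, dtype=np.float64)
    scores = np.asarray(scores, dtype=np.float64)
    candidates = np.where(scores >= score_threshold)[0]              # drop low-confidence boxes first
    order = candidates[np.argsort(-scores[candidates], kind="stable")]  # highest score first
    x1, y1 = boxes[:, 0], boxes[:, 1]
    x2, y2 = x1 + boxes[:, 2], y1 + boxes[:, 3]
    areas = boxes[:, 2] * boxes[:, 3]
    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(int(i))
        rest = order[1:]
        # Intersection of box i with every remaining box
        iw = np.clip(np.minimum(x2[i], x2[rest]) - np.maximum(x1[i], x1[rest]), 0, None)
        ih = np.clip(np.minimum(y2[i], y2[rest]) - np.maximum(y1[i], y1[rest]), 0, None)
        inter = iw * ih
        iou = inter / (areas[i] + areas[rest] - inter + 1e-12)
        order = rest[iou <= iou_threshold]                           # suppress strong overlaps
    return keep


boxes = [[0, 0, 10, 10], [1, 1, 10, 10], [50, 50, 10, 10]]
print(nms_xywh(boxes, [0.9, 0.8, 0.7]))  # [0, 2]
```

The first two boxes overlap with IoU 81/119 ≈ 0.68, so the lower-scoring one is suppressed at an IoU threshold of 0.45, while the distant third box survives.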

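YOLOv8 TensorRT quantization and deployment

Reference: https://github.com/Monday-Leo/YOLOv8_Tensorrt

Export the ONNX model with the official command:

```bash
yolo mode=export model=yolov8n.pt format=onnx dynamic=False
```

Then convert the official ONNX model with the script v8_transform.py, which appends a Transpose node so that the output changes from [1, 84, 8400] to [1, 8400, 84], and writes yolov8n.transd.onnx: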

```python
import os
import sys

import onnx
import onnx.helper as helper


def main():
    if len(sys.argv) < 2:
        print("Usage:\n python v8_transform.py yolov8n.onnx")
        return 1

    file = sys.argv[1]
    if not os.path.exists(file):
        print(f"Not exist path: {file}")
        return 1

    prefix, suffix = os.path.splitext(file)
    dst = prefix + ".transd" + suffix

    model = onnx.load(file)
    # Rename the output of the last node so that a Transpose can be appended after it
    node = model.graph.node[-1]
    old_output = node.output[0]
    node.output[0] = "pre_transpose"

    # Swap dims 1 and 2 of the declared graph output: [1, 84, 8400] -> [1, 8400, 84]
    for specout in model.graph.output:
        if specout.name == old_output:
            shape0 = specout.type.tensor_type.shape.dim[0]
            shape1 = specout.type.tensor_type.shape.dim[1]
            shape2 = specout.type.tensor_type.shape.dim[2]
            new_out = helper.make_tensor_value_info(
                specout.name,
                specout.type.tensor_type.elem_type,
                [0, 0, 0]
            )
            new_out.type.tensor_type.shape.dim[0].CopyFrom(shape0)
            new_out.type.tensor_type.shape.dim[2].CopyFrom(shape1)
            new_out.type.tensor_type.shape.dim[1].CopyFrom(shape2)
            specout.CopyFrom(new_out)

    # Append the Transpose node that produces the original output name
    model.graph.node.append(
        helper.make_node("Transpose", ["pre_transpose"], [old_output], perm=[0, 2, 1])
    )

    print(f"Model saved to {dst}")
    onnx.save(model, dst)
    return 0


if __name__ == "__main__":
    sys.exit(main())
```
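The only change v8_transform.py makes is this trailing Transpose with `perm=[0, 2, 1]`. Presumably the point is that the decoding code in the referenced TensorRT project can then read each of the 8400 candidates as one contiguous row of 84 floats. The NumPy sketch below uses synthetic data, not the real model output, to show the memory layout before and after the transpose.

```python
import numpy as np

num_values, num_anchors = 84, 8400  # 4 box values + 80 class scores; 80*80 + 40*40 + 20*20 candidates at 640x640
raw = np.arange(num_values * num_anchors, dtype=np.float32).reshape(1, num_values, num_anchors)

# Original layout: consecutive values of one candidate are 8400 elements apart in memory
print(raw.strides[1] // raw.itemsize)  # 8400

# Effect of the appended Transpose(perm=[0, 2, 1]) node, materialized as a contiguous array
transd = np.ascontiguousarray(raw.transpose(0, 2, 1))
print(transd.shape)  # (1, 8400, 84)

# Candidate i now occupies one contiguous run of 84 floats starting at offset i * 84
flat = transd.ravel()
i = 123
assert np.array_equal(flat[i * num_values:(i + 1) * num_values], raw[0, :, i])
```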

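Convert the ONNX model with the official trtexec tool; for FP32 inference, simply omit the --fp16 flag:

```bash
trtexec --onnx=yolov8n.transd.onnx --saveEngine=yolov8n_fp16.trt --fp16
```

C++ inference (the project has many files, so only the main function is shown here):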

```cpp
#include "infer.hpp"
#include "yolo.hpp"

#include <chrono>
#include <iostream>
#include <string>
#include <opencv2/opencv.hpp>

enum yolo::Type type = yolo::Type::yolov8;
const float confidence_threshold = 0.25f;
const float nms_threshold = 0.5f;
const char* labels[] = { "person", "bicycle", "car", "motorcycle", "airplane", "bus", "train", "truck", "boat", "traffic light",
    "fire hydrant", "stop sign", "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow",
    "elephant", "bear", "zebra", "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase", "frisbee",
    "skis", "snowboard", "sports ball", "kite", "baseball bat", "baseball glove", "skateboard", "surfboard",
    "tennis racket", "bottle", "wine glass", "cup", "fork", "knife", "spoon", "bowl", "banana", "apple",
    "sandwich", "orange", "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair", "couch",
    "potted plant", "bed", "dining table", "toilet", "tv", "laptop", "mouse", "remote", "keyboard", "cell phone",
    "microwave", "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors", "teddy bear",
    "hair drier", "toothbrush" };

int main(int argc, char** argv)
{
    std::string model_path = "yolov8n_fp16.trt";
    cv::Mat image;

    auto yolo = yolo::load(model_path, type, confidence_threshold, nms_threshold);
    if (yolo == nullptr)
        return -1;

    cv::VideoCapture cap("test.mp4");
    while (true)
    {
        cap.read(image);
        if (image.empty())
            break;

        yolo::BoxArray objs;
        // Wall-clock timing: clock() counts processor time in CLOCKS_PER_SEC ticks, which are not milliseconds on every platform
        auto start = std::chrono::steady_clock::now();
        //for (size_t i = 0; i < 1000; i++)  // uncomment to time 1000 forward passes
        objs = yolo->forward(yolo::Image(image.data, image.cols, image.rows));
        auto stop = std::chrono::steady_clock::now();
        std::cout << std::chrono::duration<double, std::milli>(stop - start).count() << "ms" << std::endl;

        for (size_t i = 0; i < objs.size(); ++i)
        {
            auto name = labels[objs[i].class_label];
            auto caption = cv::format("%s %.2f", name, objs[i].confidence);
            int width = cv::getTextSize(caption, 0, 1, 2, nullptr).width + 10;
            cv::rectangle(image, cv::Point(objs[i].left, objs[i].top), cv::Point(objs[i].right, objs[i].bottom), cv::Scalar(255, 0, 0), 2);
            cv::rectangle(image, cv::Point(objs[i].left - 3, objs[i].top - 33), cv::Point(objs[i].left + width, objs[i].top), cv::Scalar(0, 0, 255), -1);
            cv::putText(image, caption, cv::Point(objs[i].left, objs[i].top - 5), 0, 1, cv::Scalar::all(0), 2, 16);
        }
        cv::imshow("result.jpg", image);
        cv::waitKey(1);
    }
    return 0;
}
```

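Python inference (not tried by the author):

In the C++ project above, right-click the yolov8 project, open Properties, and change its configuration type to Dynamic Library (.dll). Copy python_trt.py from https://github.com/Monday-Leo/YOLOv8_Tensorrt into the folder containing the DLL. Then set the model path, the DLL path and the path of the image to predict; note in particular that the model path must be passed as a bytes literal (b'...').

```python
# Inside python_trt.py, which provides the Detector class used here
det = Detector(model_path=b"./yolov8n_fp16.trt", dll_path="./yolov8.dll")  # b'' is needed
img = cv2.imread("./zidane.jpg")
```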

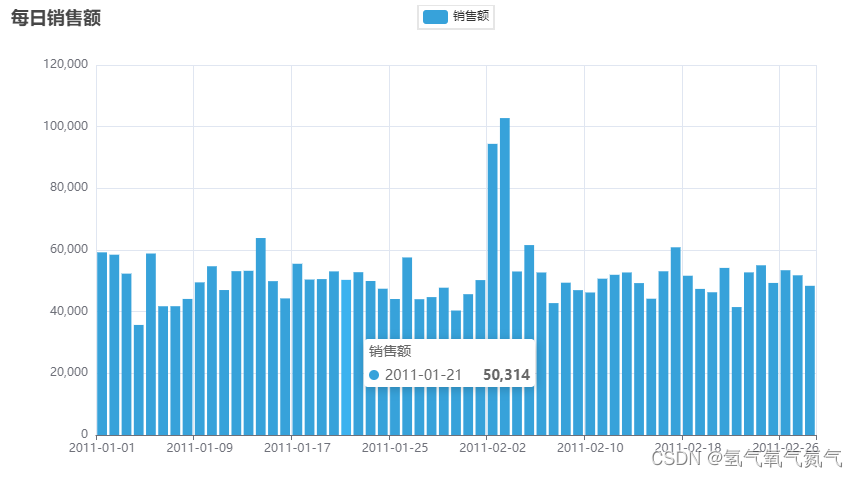

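Video detection result (played at original speed):

All C++ and Python source code, model files, and the images and videos used for inference are available at: https://download.csdn.net/download/taifyang/87895869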
