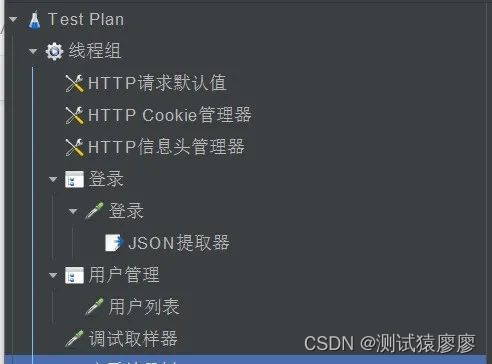

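Contents

- RepVGG Re-parameterization

- Preface

- 1. RepVGG

- 2. Building the RepVGG Network

- 2.1 conv_bn

- 2.2 RepVGG Block Initialization

- 2.3 forward

- 2.4 Merging the Branches

- 2.5 Implementing the Re-parameterization

- 2.6 Building the Overall Network

- 2.7 Exporting the Model

- 3. Complete Example Code

- Summary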

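RepVGG Re-parameterization

Preface

These are my personal study notes from the new model pruning and re-parameterization course released by 手写AI. They are for my own reference only.

This session covers re-parameterization in RepVGG.

The course outline is shown in the mind map below.

1. RepVGG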

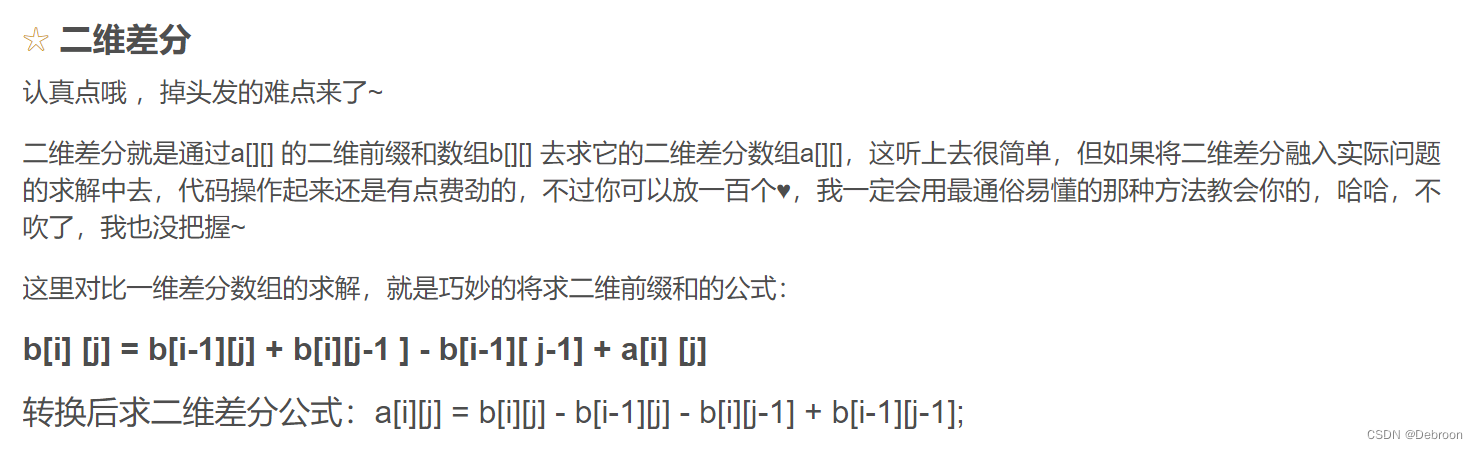

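RepVGG applies structural re-parameterization to a plain VGG-style network. During training, each block uses a multi-branch structure, which brings accuracy close to that of other SOTA networks such as RegNet and EfficientNet. For inference, the multi-branch structure is converted into a single-path structure, which speeds up inference.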
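In symbols, the conversion can be summarized as follows. The notation ($W_3$, $W_1$, $\mathrm{BN}_0$, $W'$, $b'$) is introduced here for illustration and is not taken from the course material. The identity branch $\mathrm{BN}_0(x)$ only exists when the input and output channels match and the stride is 1.

$$
\text{training:}\quad y = \mathrm{ReLU}\big(\mathrm{BN}_3(W_3 * x) + \mathrm{BN}_1(W_1 * x) + \mathrm{BN}_0(x)\big)
$$

$$
\text{inference:}\quad y = \mathrm{ReLU}\big(W' * x + b'\big)
$$

Because convolution and inference-mode BN are both affine in $x$, the three branches can be folded into a single 3x3 kernel $W'$ and bias $b'$ without changing the output.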

2. Building the RepVGG Network

2.1 conv_bn

First, write a helper function that builds a conv + BN pair.

    import torch.nn as nn


    def conv_bn(in_channels, out_channels, kernel_size, stride, padding, groups=1):
        # Conv2d without bias (the following BN supplies the shift), then BatchNorm2d
        result = nn.Sequential()
        result.add_module('conv', nn.Conv2d(in_channels=in_channels, out_channels=out_channels,
                                            kernel_size=kernel_size, stride=stride,
                                            padding=padding, groups=groups, bias=False))
        result.add_module('bn', nn.BatchNorm2d(num_features=out_channels))
        return result

2.2 RepVGG Block Initialization

Initialization of the RepVGG block:

    class RepVGGBlock(nn.Module):
        def __init__(self, in_channels, out_channels, kernel_size,
                     stride=1, padding=0, dilation=1, padding_mode='zeros',
                     groups=1, deploy=False):
            super().__init__()
            self.deploy = deploy
            self.groups = groups
            self.in_channels = in_channels

            # Only the 3x3 kernel with padding=1 is supported
            assert kernel_size == 3
            assert padding == 1

            # Padding of the 1x1 branch so that its output aligns with the 3x3 branch (1 - 3 // 2 = 0)
            padding_11 = padding - kernel_size // 2

            self.nonlinear = nn.ReLU()
            self.se = nn.Identity()

            if deploy:
                # Inference: a single 3x3 conv with bias
                self.rbr_reparam = nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size,
                                             stride=stride, padding=padding, dilation=dilation,
                                             groups=groups, bias=True, padding_mode=padding_mode)
            else:
                # Training: identity (BN only) branch, 3x3 conv+BN branch and 1x1 conv+BN branch.
                # The identity branch exists only when the input and output shapes match.
                self.rbr_identity = nn.BatchNorm2d(num_features=in_channels) if out_channels == in_channels and stride == 1 else None
                self.rbr_dense = conv_bn(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size,
                                         stride=stride, padding=padding, groups=groups)
                self.rbr_1x1 = conv_bn(in_channels=in_channels, out_channels=out_channels, kernel_size=1,
                                       stride=stride, padding=padding_11, groups=groups)
                print('RepVGG Block, identity = ', self.rbr_identity)

2.3 forward

Implementation of the forward pass:

    class RepVGGBlock(nn.Module):
        def __init__(self, in_channels, out_channels, kernel_size,
                     stride=1, padding=0, dilation=1, padding_mode='zeros',
                     groups=1, deploy=False):
            super().__init__()
            ...

        def forward(self, inputs):
            # Deploy mode: only the fused 3x3 conv exists
            if hasattr(self, 'rbr_reparam'):
                return self.nonlinear(self.se(self.rbr_reparam(inputs)))

            # Training mode: sum the outputs of the three branches
            if self.rbr_identity is None:
                id_out = 0
            else:
                id_out = self.rbr_identity(inputs)

            return self.nonlinear(self.se(self.rbr_dense(inputs) + self.rbr_1x1(inputs) + id_out))

2.4 Merging the Branches

Merging the multi-branch structure into a single branch:

    class RepVGGBlock(nn.Module):
        def __init__(self, in_channels, out_channels, kernel_size,
                     stride=1, padding=0, dilation=1, padding_mode='zeros',
                     groups=1, deploy=False):
            super().__init__()
            ...

        def forward(self, inputs):
            ...

        def switch_to_deploy(self):
            self.deploy = True
            if hasattr(self, 'rbr_reparam'):
                return  # already converted

            # Fuse the three branches into one kernel and one bias
            kernel, bias = self.get_equivalent_kernel_bias()
            self.rbr_reparam = nn.Conv2d(in_channels=self.rbr_dense.conv.in_channels, out_channels=self.rbr_dense.conv.out_channels,
                                         kernel_size=self.rbr_dense.conv.kernel_size, stride=self.rbr_dense.conv.stride,
                                         padding=self.rbr_dense.conv.padding, dilation=self.rbr_dense.conv.dilation,
                                         groups=self.rbr_dense.conv.groups, bias=True)
            self.rbr_reparam.weight.data = kernel
            self.rbr_reparam.bias.data = bias

            # Remove the training-time branches
            self.__delattr__('rbr_dense')
            self.__delattr__('rbr_1x1')
            if hasattr(self, 'rbr_identity'):
                self.__delattr__('rbr_identity')
            if hasattr(self, 'id_tensor'):
                self.__delattr__('id_tensor')

        def get_equivalent_kernel_bias(self):
            kernel3x3, bias3x3 = self._fuse_bn_tensor(self.rbr_dense)
            kernel1x1, bias1x1 = self._fuse_bn_tensor(self.rbr_1x1)
            kernelid, biasid = self._fuse_bn_tensor(self.rbr_identity)
            return kernel3x3 + self._pad_1x1_to_3x3_tensor(kernel1x1) + kernelid, bias3x3 + bias1x1 + biasid

        def _fuse_bn_tensor(self, branch):
            pass  # implemented in section 2.5

        def _pad_1x1_to_3x3_tensor(self, kernel1x1):
            pass  # implemented in section 2.5

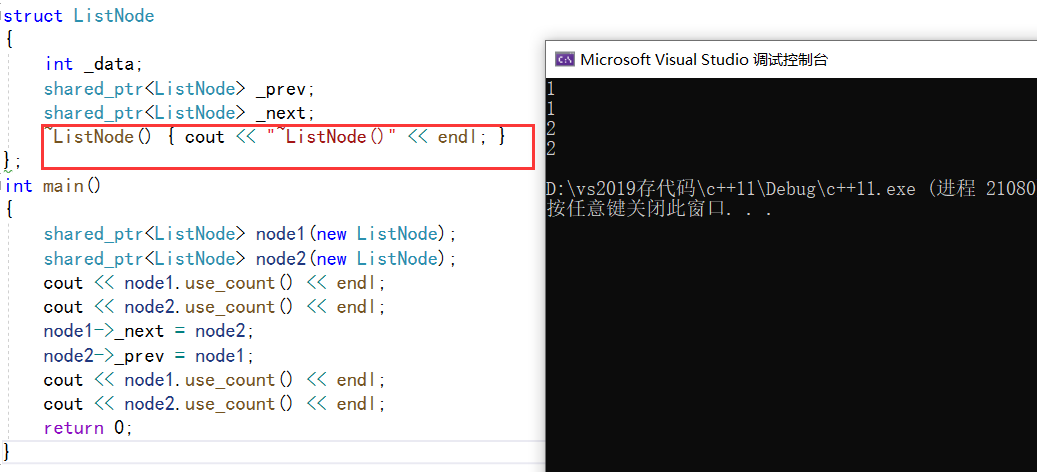
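To see why `get_equivalent_kernel_bias` can simply add the kernels, here is a minimal single-channel sketch in plain Python (no PyTorch). It assumes BN has already been folded into each branch and ignores biases; the helper names `pad_1x1_to_3x3`, `add_kernels` and `conv_at` are hypothetical and only mirror the idea behind `_pad_1x1_to_3x3_tensor`.

```python
def pad_1x1_to_3x3(w):
    """Place a 1x1 kernel value at the centre of an otherwise zero 3x3 kernel."""
    return [[0.0, 0.0, 0.0],
            [0.0, w, 0.0],
            [0.0, 0.0, 0.0]]


def add_kernels(*kernels):
    """Element-wise sum of several 3x3 kernels."""
    return [[sum(k[i][j] for k in kernels) for j in range(3)] for i in range(3)]


def conv_at(patch, kernel):
    """One output value of a 3x3 convolution: patch and kernel multiplied element-wise and summed."""
    return sum(patch[i][j] * kernel[i][j] for i in range(3) for j in range(3))


# A 3x3 input neighbourhood and the (already BN-folded) branch weights
patch = [[0.5, -1.0, 2.0],
         [1.5, 0.25, -0.5],
         [3.0, 1.0, -2.0]]
kernel3x3 = [[0.1, 0.2, -0.3],
             [0.0, 0.4, 0.5],
             [-0.2, 0.3, 0.1]]
w1x1 = 0.7
identity = pad_1x1_to_3x3(1.0)  # the identity branch is a 3x3 kernel with 1 at the centre

# Three-branch output: 3x3 conv + 1x1 conv (sees only the centre pixel) + identity
centre = patch[1][1]
three_branch = conv_at(patch, kernel3x3) + w1x1 * centre + centre

# Single-branch output: one 3x3 conv with the summed kernel
merged = add_kernels(kernel3x3, pad_1x1_to_3x3(w1x1), identity)
single_branch = conv_at(patch, merged)

print(abs(three_branch - single_branch) < 1e-12)
```

The 1x1 branch uses padding 0 while the 3x3 branch uses padding 1, so both see the same centre pixel at every output position. That is why zero-padding the 1x1 kernel to 3x3 leaves its result unchanged.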

2.5 Implementing the Re-parameterization

Re-parameterization of the 3x3 convolution, the 1x1 convolution and the BN layer:

    class RepVGGBlock(nn.Module):
        def __init__(self, in_channels, out_channels, kernel_size,
                     stride=1, padding=0, dilation=1, padding_mode='zeros',
                     groups=1, deploy=False):
            super().__init__()
            ...

        def forward(self, inputs):
            ...

        def switch_to_deploy(self):
            ...

        def get_equivalent_kernel_bias(self):
            ...

        def _fuse_bn_tensor(self, branch):
            # Missing branch (no identity): contributes nothing
            if branch is None:
                return 0, 0

            if isinstance(branch, nn.Sequential):
                # conv + BN branch
                kernel = branch.conv.weight
                running_mean = branch.bn.running_mean
                running_var = branch.bn.running_var
                gamma = branch.bn.weight
                beta = branch.bn.bias
                eps = branch.bn.eps
            else:
                # Identity branch: a lone BN, expressed as a 3x3 conv whose kernel is the identity
                assert isinstance(branch, nn.BatchNorm2d)
                if not hasattr(self, 'id_tensor'):
                    input_dim = self.in_channels // self.groups
                    kernel_value = np.zeros((self.in_channels, input_dim, 3, 3), dtype=np.float32)
                    for i in range(self.in_channels):
                        # 1 at the kernel centre, on the input channel that matches output channel i
                        kernel_value[i, i % input_dim, 1, 1] = 1
                    self.id_tensor = torch.from_numpy(kernel_value).to(branch.weight.device)
                kernel = self.id_tensor
                running_mean = branch.running_mean
                running_var = branch.running_var
                gamma = branch.weight
                beta = branch.bias
                eps = branch.eps

            # Fold BN into the conv: W' = W * gamma / std, b' = beta - mean * gamma / std
            std = (running_var + eps).sqrt()
            t = (gamma / std).reshape(-1, 1, 1, 1)
            return kernel * t, beta - running_mean * gamma / std

        def _pad_1x1_to_3x3_tensor(self, kernel1x1):
            if kernel1x1 is None:
                return 0
            else:
                # Zero-pad the 1x1 kernel by one pixel on each side to get a 3x3 kernel
                return torch.nn.functional.pad(kernel1x1, [1, 1, 1, 1])
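The folding formula at the end of `_fuse_bn_tensor` can be checked by hand on one output value. The following is a minimal single-channel sketch in plain Python, not the course code; the function names `conv_at`, `batch_norm` and `fuse_conv_bn` and all numeric values are made up for illustration.

```python
import math


def conv_at(patch, kernel):
    """One output value of a bias-free 3x3 convolution."""
    return sum(patch[i][j] * kernel[i][j] for i in range(3) for j in range(3))


def batch_norm(y, gamma, beta, running_mean, running_var, eps=1e-5):
    """Inference-mode BN for a single channel."""
    return gamma * (y - running_mean) / math.sqrt(running_var + eps) + beta


def fuse_conv_bn(kernel, gamma, beta, running_mean, running_var, eps=1e-5):
    """Fold BN into the conv: scaled kernel plus a bias, as in _fuse_bn_tensor."""
    std = math.sqrt(running_var + eps)
    t = gamma / std
    fused_kernel = [[w * t for w in row] for row in kernel]
    fused_bias = beta - running_mean * gamma / std
    return fused_kernel, fused_bias


patch = [[0.5, -1.0, 2.0],
         [1.5, 0.0, -0.5],
         [3.0, 1.0, -2.0]]
kernel = [[0.1, 0.2, -0.3],
          [0.0, 0.4, 0.5],
          [-0.2, 0.3, 0.1]]
gamma, beta, running_mean, running_var = 0.8, 0.1, 0.05, 0.09

# Reference: conv followed by BN
reference = batch_norm(conv_at(patch, kernel), gamma, beta, running_mean, running_var)

# Fused: a single conv with bias
fused_kernel, fused_bias = fuse_conv_bn(kernel, gamma, beta, running_mean, running_var)
fused = conv_at(patch, fused_kernel) + fused_bias

print(math.isclose(reference, fused, rel_tol=1e-9, abs_tol=1e-12))
```

The equivalence only holds for inference-mode BN, which uses the fixed running statistics. In training mode BN normalizes with per-batch statistics, so the branches cannot be folded until training is finished.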

2.6 Building the Overall Network

The overall RepVGG network structure (RepVGG-B0):

    # RepVGG-B0: blocks per stage (stage0 to stage4) are [1, 4, 6, 16, 1],
    # i.e. num_blocks=[4, 6, 16, 1] for stage1 to stage4, with width_multiplier=[1, 1, 1, 2.5]
    class RepVGG(nn.Module):
        def __init__(self, num_blocks, num_classes=1000, width_multiplier=None, deploy=False):
            super(RepVGG, self).__init__()
            assert len(width_multiplier) == 4
            self.deploy = deploy
            self.in_planes = min(64, int(64 * width_multiplier[0]))

            self.stage0 = RepVGGBlock(in_channels=3, out_channels=self.in_planes, kernel_size=3, stride=2, padding=1, deploy=self.deploy)
            self.stage1 = self._make_stage(int(64 * width_multiplier[0]), num_blocks[0], stride=2)
            self.stage2 = self._make_stage(int(128 * width_multiplier[1]), num_blocks[1], stride=2)
            self.stage3 = self._make_stage(int(256 * width_multiplier[2]), num_blocks[2], stride=2)
            self.stage4 = self._make_stage(int(512 * width_multiplier[3]), num_blocks[3], stride=2)
            self.gap = nn.AdaptiveAvgPool2d(output_size=1)
            self.linear = nn.Linear(int(512 * width_multiplier[3]), num_classes)

        def _make_stage(self, planes, num_blocks, stride):
            # Only the first block of a stage downsamples; the rest use stride 1
            strides = [stride] + [1]*(num_blocks-1)
            blocks = []
            for stride in strides:
                blocks.append(RepVGGBlock(in_channels=self.in_planes, out_channels=planes, kernel_size=3,
                                          stride=stride, padding=1, deploy=self.deploy))
                self.in_planes = planes
            return nn.ModuleList(blocks)

        def forward(self, x):
            out = self.stage0(x)
            for stage in (self.stage1, self.stage2, self.stage3, self.stage4):
                for block in stage:
                    out = block(out)
            out = self.gap(out)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out
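The channel widths, per-block strides and feature-map sizes that this constructor produces can be worked out with a few lines of plain Python. This is only an arithmetic sketch of the logic in `__init__` and `_make_stage`; the helper names `stage_widths`, `stage_strides` and `output_sizes` are hypothetical.

```python
def stage_widths(width_multiplier):
    """Output channels of stage0 to stage4, following RepVGG.__init__."""
    base = [64, 128, 256, 512]
    stage0 = min(64, int(64 * width_multiplier[0]))
    return [stage0] + [int(b * m) for b, m in zip(base, width_multiplier)]


def stage_strides(num_blocks):
    """Per-block strides of stage1 to stage4: only the first block downsamples."""
    return [[2] + [1] * (n - 1) for n in num_blocks]


def output_sizes(input_size, num_stages=5):
    """Spatial size after each stage for a 3x3 conv with padding 1 and stride 2."""
    sizes = []
    size = input_size
    for _ in range(num_stages):
        size = (size + 2 * 1 - 3) // 2 + 1
        sizes.append(size)
    return sizes


print(stage_widths([1, 1, 1, 2.5]))    # [64, 64, 128, 256, 1280]
print(stage_widths([2, 2, 2, 4]))      # [64, 128, 256, 512, 2048]
print(stage_strides([4, 6, 16, 1])[0]) # [2, 1, 1, 1]
print(output_sizes(224))               # [112, 56, 28, 14, 7]
```

Note that `stage0` is capped at 64 channels regardless of the multiplier, and that the identity branch can only appear in the stride-1 blocks of a stage, since the first block changes both resolution and (usually) channel count.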

2.7 Exporting the Model

Exporting the RepVGG model before and after re-parameterization and comparing the outputs:

    if __name__ == '__main__':
        model = RepVGG(num_blocks=[4, 6, 16, 1], num_classes=1000, width_multiplier=[2, 2, 2, 2.5], deploy=False)
        x = torch.randn(1, 3, 224, 224)
        model.eval()

        # Randomize the BN statistics and affine parameters so the comparison is not trivial
        # (the defaults are mean 0, var 1, weight 1, bias 0)
        for module in model.modules():
            if isinstance(module, torch.nn.BatchNorm2d):
                nn.init.uniform_(module.running_mean, 0, 0.1)
                nn.init.uniform_(module.running_var, 0, 0.1)
                nn.init.uniform_(module.weight, 0, 0.1)
                nn.init.uniform_(module.bias, 0, 0.1)

        # Multi-branch (training-time) model
        out = model(x)
        torch.onnx.export(model=model, args=x, f="./RepVGG.onnx", verbose=False)

        # Convert every block to its single-branch (deploy) form
        for module in model.modules():
            if hasattr(module, 'switch_to_deploy'):
                module.switch_to_deploy()

        deployout = model(x)
        torch.onnx.export(model=model, args=x, f='./RepVGG-deploy.onnx', verbose=False)

        # The sum of squared differences should be close to zero
        print("Diff between the outputs of the origin-RepVGG and rep-RepVGG: {}\n".format(
            ((deployout - out)**2).sum()
        ))

3. Complete Example Code

The complete example code for the RepVGG network is as follows:

    import torch
    import copy
    import numpy as np
    import torch.nn as nn
    import torch.nn.functional as F


    def conv_bn(in_channels, out_channels, kernel_size, stride, padding, groups=1):
        result = nn.Sequential()
        result.add_module('conv', nn.Conv2d(in_channels=in_channels,
                                            out_channels=out_channels,
                                            kernel_size=kernel_size,
                                            stride=stride,
                                            padding=padding,
                                            groups=groups,
                                            bias=False))
        result.add_module('bn', nn.BatchNorm2d(num_features=out_channels))
        return result


    class RepVGGBlock(nn.Module):
        def __init__(self, in_channels, out_channels, kernel_size,
                     stride=1, padding=0, dilation=1, groups=1, padding_mode='zeros', deploy=False):
            super().__init__()
            self.deploy = deploy
            self.groups = groups
            self.in_channels = in_channels

            assert kernel_size == 3
            assert padding == 1

            padding_11 = padding - kernel_size // 2

            self.nonlinearity = nn.ReLU()
            self.se = nn.Identity()

            if deploy:
                self.rbr_reparam = nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size,
                                             stride=stride, padding=padding, dilation=dilation,
                                             groups=groups, bias=True, padding_mode=padding_mode)
            else:
                self.rbr_identity = nn.BatchNorm2d(num_features=in_channels) if out_channels == in_channels and stride == 1 else None
                self.rbr_dense = conv_bn(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size,
                                         stride=stride, padding=padding, groups=groups)
                self.rbr_1x1 = conv_bn(in_channels=in_channels, out_channels=out_channels, kernel_size=1,
                                       stride=stride, padding=padding_11, groups=groups)
                print('RepVGG Block, identity = ', self.rbr_identity)

        def forward(self, inputs):
            if hasattr(self, 'rbr_reparam'):
                return self.nonlinearity(self.se(self.rbr_reparam(inputs)))

            if self.rbr_identity is None:
                id_out = 0
            else:
                id_out = self.rbr_identity(inputs)

            return self.nonlinearity(self.se(self.rbr_dense(inputs) + self.rbr_1x1(inputs) + id_out))

        def switch_to_deploy(self):
            self.deploy = True
            if hasattr(self, 'rbr_reparam'):
                return
            kernel, bias = self.get_equivalent_kernel_bias()
            self.rbr_reparam = nn.Conv2d(in_channels=self.rbr_dense.conv.in_channels, out_channels=self.rbr_dense.conv.out_channels,
                                         kernel_size=self.rbr_dense.conv.kernel_size, stride=self.rbr_dense.conv.stride,
                                         padding=self.rbr_dense.conv.padding, dilation=self.rbr_dense.conv.dilation,
                                         groups=self.rbr_dense.conv.groups, bias=True)
            self.rbr_reparam.weight.data = kernel
            self.rbr_reparam.bias.data = bias
            self.__delattr__('rbr_dense')
            self.__delattr__('rbr_1x1')
            if hasattr(self, 'rbr_identity'):
                self.__delattr__('rbr_identity')
            if hasattr(self, 'id_tensor'):
                self.__delattr__('id_tensor')

        # This func derives the equivalent kernel and bias in a DIFFERENTIABLE way.
        # You can get the equivalent kernel and bias at any time and do whatever you want,
        # for example, apply some penalties or constraints during training, just like you do to the other models.
        # May be useful for quantization or pruning.
        def get_equivalent_kernel_bias(self):
            kernel3x3, bias3x3 = self._fuse_bn_tensor(self.rbr_dense)
            kernel1x1, bias1x1 = self._fuse_bn_tensor(self.rbr_1x1)
            kernelid, biasid = self._fuse_bn_tensor(self.rbr_identity)
            return kernel3x3 + self._pad_1x1_to_3x3_tensor(kernel1x1) + kernelid, bias3x3 + bias1x1 + biasid

        def _fuse_bn_tensor(self, branch):
            if branch is None:
                return 0, 0
            if isinstance(branch, nn.Sequential):
                kernel = branch.conv.weight
                running_mean = branch.bn.running_mean
                running_var = branch.bn.running_var
                gamma = branch.bn.weight
                beta = branch.bn.bias
                eps = branch.bn.eps
            else:
                assert isinstance(branch, nn.BatchNorm2d)
                if not hasattr(self, 'id_tensor'):
                    input_dim = self.in_channels // self.groups
                    kernel_value = np.zeros((self.in_channels, input_dim, 3, 3), dtype=np.float32)
                    for i in range(self.in_channels):
                        kernel_value[i, i % input_dim, 1, 1] = 1
                    self.id_tensor = torch.from_numpy(kernel_value).to(branch.weight.device)
                kernel = self.id_tensor
                running_mean = branch.running_mean
                running_var = branch.running_var
                gamma = branch.weight
                beta = branch.bias
                eps = branch.eps
            std = (running_var + eps).sqrt()
            t = (gamma / std).reshape(-1, 1, 1, 1)
            return kernel * t, beta - running_mean * gamma / std

        def _pad_1x1_to_3x3_tensor(self, kernel1x1):
            if kernel1x1 is None:
                return 0
            else:
                return torch.nn.functional.pad(kernel1x1, [1, 1, 1, 1])


    # RepVGG(num_blocks=[4, 6, 16, 1], num_classes=1000, width_multiplier=[2, 2, 2, 4], deploy=deploy)
    class RepVGG(nn.Module):
        def __init__(self, num_blocks, num_classes=1000, width_multiplier=None, deploy=False):
            super(RepVGG, self).__init__()
            assert len(width_multiplier) == 4
            self.deploy = deploy
            self.in_planes = min(64, int(64 * width_multiplier[0]))

            self.stage0 = RepVGGBlock(in_channels=3, out_channels=self.in_planes, kernel_size=3, stride=2, padding=1, deploy=self.deploy)
            self.stage1 = self._make_stage(int(64 * width_multiplier[0]), num_blocks[0], stride=2)
            self.stage2 = self._make_stage(int(128 * width_multiplier[1]), num_blocks[1], stride=2)
            self.stage3 = self._make_stage(int(256 * width_multiplier[2]), num_blocks[2], stride=2)
            self.stage4 = self._make_stage(int(512 * width_multiplier[3]), num_blocks[3], stride=2)
            self.gap = nn.AdaptiveAvgPool2d(output_size=1)
            self.linear = nn.Linear(int(512 * width_multiplier[3]), num_classes)

        def _make_stage(self, planes, num_blocks, stride):
            '''
            planes: out channels of each stage
            num_blocks: Layers of each stage
            '''
            strides = [stride] + [1]*(num_blocks-1)
            blocks = []
            for stride in strides:
                blocks.append(RepVGGBlock(in_channels=self.in_planes, out_channels=planes, kernel_size=3,
                                          stride=stride, padding=1, deploy=self.deploy))
                self.in_planes = planes
            return nn.ModuleList(blocks)

        def forward(self, x):
            out = self.stage0(x)
            for stage in (self.stage1, self.stage2, self.stage3, self.stage4):
                for block in stage:
                    out = block(out)
            out = self.gap(out)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out


    def create_RepVGG_B1(deploy=False):
        '''
        num_blocks: Layers of each stage
        width_multiplier: a & b applied to stages
        '''
        return RepVGG(num_blocks=[4, 6, 16, 1], num_classes=1000,
                      width_multiplier=[2, 2, 2, 4],
                      deploy=deploy)


    if __name__ == '__main__':
        x = torch.randn(1, 3, 224, 224)
        model = create_RepVGG_B1(deploy=False)
        model.eval()

        for module in model.modules():
            if isinstance(module, torch.nn.BatchNorm2d):
                nn.init.uniform_(module.running_mean, 0, 0.1)
                nn.init.uniform_(module.running_var, 0, 0.1)
                nn.init.uniform_(module.weight, 0, 0.1)
                nn.init.uniform_(module.bias, 0, 0.1)

        out = model(x)
        # print(model)
        torch.onnx.export(model=model, args=x, f='../RepVGG.onnx', verbose=False)

        for module in model.modules():
            if hasattr(module, 'switch_to_deploy'):
                module.switch_to_deploy()

        deployout = model(x)
        # print(model)
        torch.onnx.export(model=model, args=x, f='../RepVGG-deploy.onnx', verbose=False)

        print("Diff between the outputs of the origin-RepVGG and rep-RepVGG: {}\n".format(
            ((deployout - out)**2).sum()
        ))

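Summary

This session covered re-parameterization in RepVGG. Compared with re-parameterizing the multi-branch structure of DBB networks, RepVGG's re-parameterization is relatively simple: it mainly consists of fusing conv + BN pairs.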