YOLOv5 + Monocular Tracking (Python)

- 1. Object tracking

- 2. Ranging module

- 2.1 Setting up the ranging module

- 2.2 Adding ranging

- 3. Main code

- 4. Results

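Related links

1. YOLOv5 + monocular ranging (Python)

2. YOLOv7 + monocular ranging (Python)

3. YOLOv7 + monocular tracking (Python)

4. A demo of the results has been posted on Bilibili

The project code is linked at the end of the article.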

1. Object tracking

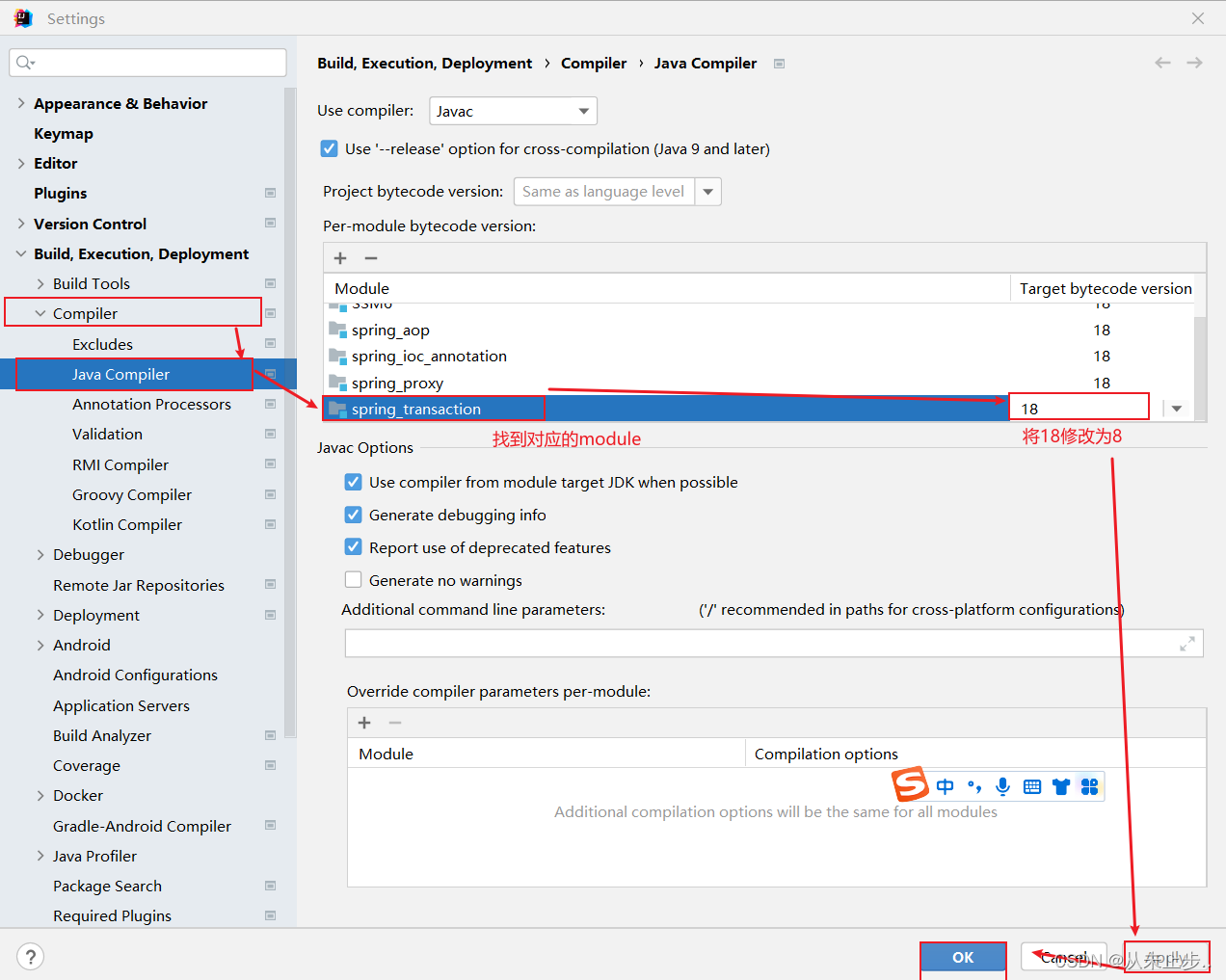

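Tracking with YOLOv5 takes only a few steps. First download the DeepSort source code from its official repository. Note that the DeepSort and YOLOv5 versions have to match:

DeepSort v3.0 ~ YOLOv5 v5.0

DeepSort v4.0 ~ YOLOv5 v6.1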

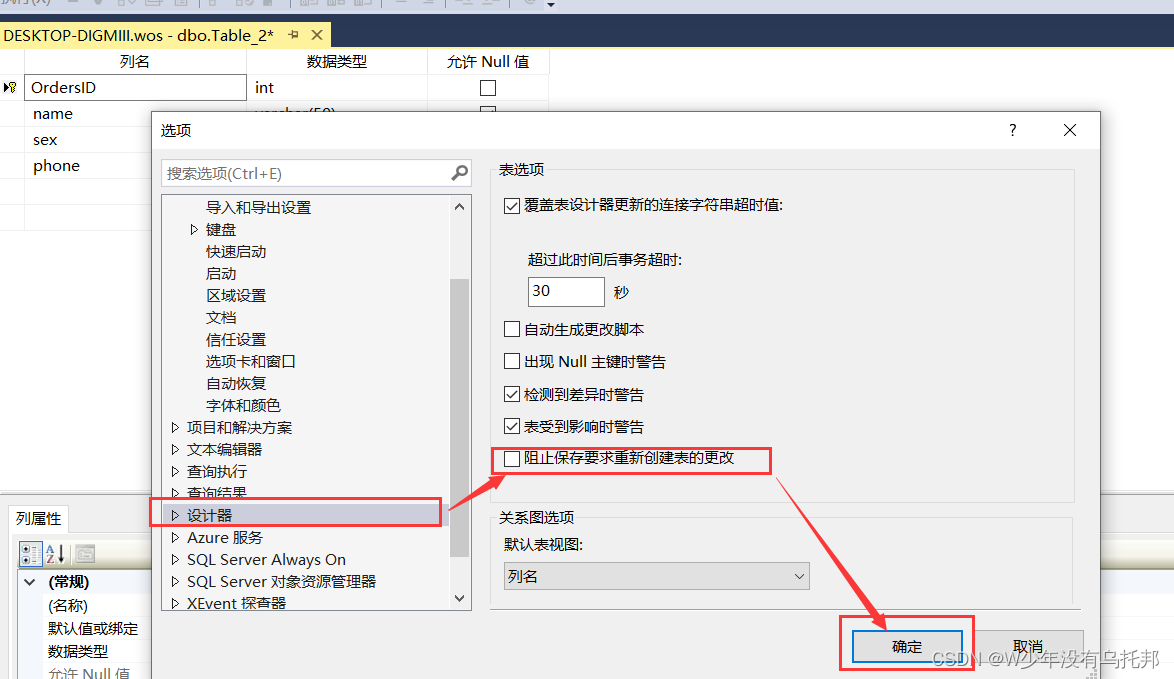

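After downloading DeepSort, download the matching YOLOv5 version from the official YOLO repository, move the YOLO folder into the DeepSort folder, and rename it to yolov5. Then set up the environment and run track.py. If nothing goes wrong, basic detection and tracking now works.

You can also run it from a terminal: python track.py --source 1.mp4 --show-vid --save-vid --yolo_weights yolov5/weights/yolov5s.pt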
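Before the first run, it can help to confirm that the folder layout described above is in place. The following is a minimal sketch, assuming the paths used by the command line and default arguments in this article; the helper name missing_paths is hypothetical, and the list should be adjusted if your DeepSort version arranges its files differently.

```python
from pathlib import Path

# Paths taken from the command line and default arguments used in this article.
EXPECTED_PATHS = [
    "track.py",
    "yolov5",
    "yolov5/weights/yolov5s.pt",
    "deep_sort_pytorch/configs/deep_sort.yaml",
]


def missing_paths(root="."):
    """Return the expected paths that do not exist under root (hypothetical helper)."""
    root = Path(root)
    return [p for p in EXPECTED_PATHS if not (root / p).exists()]


if __name__ == "__main__":
    missing = missing_paths(".")
    if missing:
        print("Missing:", ", ".join(missing))
    else:
        print("Folder layout looks complete.")
```

Run it from inside the DeepSort folder; an empty result only means the files exist, not that the versions match.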

2. Ranging module

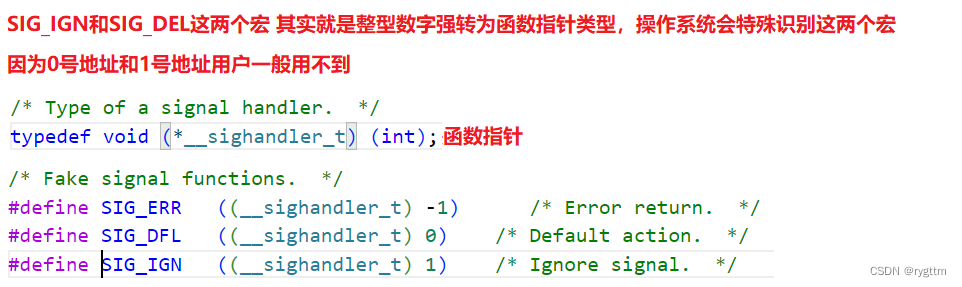

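2.1 Setting up the ranging module

The ranging part was covered in an earlier article; see that article for details. In the DeepSort folder, create a file named distance.py, or simply copy over the distance.py file from the ranging article.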

distance.py

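foc = 1990.0  # lens focal length, in pixels
real_hight_person = 66.9  # pedestrian height, in inches
real_hight_car = 57.08  # car height, in inches


# Custom functions for monocular ranging
def person_distance(h):
    # h is the bounding-box height in pixels
    dis_inch = (real_hight_person * foc) / (h - 2)
    dis_cm = dis_inch * 2.54  # inches to centimetres
    dis_cm = int(dis_cm)
    dis_m = dis_cm / 100  # centimetres to metres
    return dis_m


def car_distance(h):
    # h is the bounding-box height in pixels
    dis_inch = (real_hight_car * foc) / (h - 2)
    dis_cm = dis_inch * 2.54  # inches to centimetres
    dis_cm = int(dis_cm)
    dis_m = dis_cm / 100  # centimetres to metres
    return dis_m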
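The two functions above apply the similar-triangles relation distance = real height × focal length / pixel height, with heights in inches and the focal length in pixels. The sketch below shows how a focal length could be calibrated from one reference photo taken at a known distance, and how a single generic function could guard against very small boxes. The names calibrate_focal and estimate_distance_m are hypothetical, the 2-pixel offset is copied from distance.py, and the reference numbers are invented for illustration.

```python
INCH_TO_CM = 2.54
PIXEL_OFFSET = 2  # same empirical offset that distance.py subtracts from the box height


def calibrate_focal(known_distance_inch, real_height_inch, pixel_height):
    """Estimate the focal length in pixels from one reference measurement."""
    return (pixel_height - PIXEL_OFFSET) * known_distance_inch / real_height_inch


def estimate_distance_m(real_height_inch, foc, pixel_height):
    """Distance in metres, truncated to whole centimetres as in distance.py."""
    usable = pixel_height - PIXEL_OFFSET
    if usable <= 0:
        return None  # box too small to give a meaningful estimate
    dis_cm = int(real_height_inch * foc / usable * INCH_TO_CM)
    return dis_cm / 100


if __name__ == "__main__":
    # Illustrative numbers only: a 66.9 in person, 200 in away, spanning 668 px.
    foc = calibrate_focal(200.0, 66.9, 668)
    print(round(foc, 1))
    print(estimate_distance_m(66.9, 1990.0, 202))
```

Returning None for tiny boxes avoids the division by zero that the original functions would hit when h equals 2.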

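2.2 Adding ranging

Next, call the ranging code from the main file, track.py. First import it at the top of the file by adding:

from distance import person_distance, car_distance

Unlike in the ranging article, the tracking code already draws its own boxes, so the ranging call only has to be added to the box-drawing function, as follows (the commented lines are the ones I added or changed):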

def draw_boxes(img, bbox, identities=None, offset=(0, 0)):
    for i, box in enumerate(bbox):
        x1, y1, x2, y2 = [int(i) for i in box]
        h = y2 - y1  # height of the box in pixels
        dis_m = person_distance(h)  # call the function to get the pedestrian's distance
        x1 += offset[0]
        x2 += offset[0]
        y1 += offset[1]
        y2 += offset[1]
        # box text and bar
        id = int(identities[i]) if identities is not None else 0
        color = compute_color_for_labels(id)
        label = '{}{:d}'.format("", id)
        label = label + " " + "dis:" + str(dis_m) + "m"  # append the distance to the label
        t_size = cv2.getTextSize(label, cv2.FONT_HERSHEY_PLAIN, 0, 1)[0]
        cv2.rectangle(img, (x1, y1), (x2, y2), color, 1)
        cv2.rectangle(img, (x1, y1), (x1 + t_size[0] + 3, y1 + t_size[1] + 4), color, -1)
        cv2.putText(img, label, (x1, y1 + t_size[1] + 1),
                    cv2.FONT_HERSHEY_PLAIN, 1, [255, 255, 255], 2)
    return img
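Note that car_distance is imported but draw_boxes always calls person_distance, which matches the default of tracking only class 0 (person). If cars were tracked as well, the distance function would need to depend on the class. The sketch below is one possible way to do that, assuming the class ID of each box is available; draw_boxes as shown receives only boxes and track IDs, so the class would have to be passed in separately. The helper names distance_for_class and make_label are hypothetical.

```python
# Real-world heights in inches and focal length in pixels, as defined in distance.py.
REAL_HEIGHT_INCH = {0: 66.9, 2: 57.08}  # COCO class 0 = person, 2 = car
FOC = 1990.0


def distance_for_class(cls_id, h):
    """Return the distance in metres for a box of pixel height h, or None."""
    real_height = REAL_HEIGHT_INCH.get(cls_id)
    if real_height is None or h <= 2:
        return None  # unknown class or box too small
    dis_cm = int(real_height * FOC / (h - 2) * 2.54)
    return dis_cm / 100


def make_label(track_id, cls_id, h):
    """Build the text drawn next to a box from the track ID and distance."""
    dis_m = distance_for_class(cls_id, h)
    suffix = "dis:n/a" if dis_m is None else "dis:" + str(dis_m) + "m"
    return "{:d} {}".format(track_id, suffix)


if __name__ == "__main__":
    print(make_label(3, 0, 202))   # a person box 202 px tall
    print(make_label(4, 2, 102))   # a car box 102 px tall
    print(make_label(5, 7, 150))   # a class with no known height
```

Keeping the heights in a dictionary means another class can be supported by adding one entry rather than another function.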

3. Main code

import sys

# Make the yolov5 folder importable before any yolov5 module is loaded
sys.path.insert(0, './yolov5')

import argparse
import os
import platform
import random
import shutil
import threading
import time
from pathlib import Path

import cv2
import torch
import torch.backends.cudnn as cudnn

from yolov5.utils.google_utils import attempt_download
from yolov5.models.experimental import attempt_load
from yolov5.utils.datasets import LoadImages, LoadStreams
from yolov5.utils.general import check_img_size, non_max_suppression, scale_coords, \
    check_imshow, xyxy2xywh
from yolov5.utils.torch_utils import select_device, time_synchronized
from yolov5.utils.plots import plot_one_box
from deep_sort_pytorch.utils.parser import get_config
from deep_sort_pytorch.deep_sort import DeepSort
from distance import person_distance, car_distance

palette = (2 ** 11 - 1, 2 ** 15 - 1, 2 ** 20 - 1)

def xyxy_to_xywh(*xyxy):
    """Convert absolute corner coordinates to center x, center y, width, height."""
    bbox_left = min([xyxy[0].item(), xyxy[2].item()])
    bbox_top = min([xyxy[1].item(), xyxy[3].item()])
    bbox_w = abs(xyxy[0].item() - xyxy[2].item())
    bbox_h = abs(xyxy[1].item() - xyxy[3].item())
    x_c = (bbox_left + bbox_w / 2)
    y_c = (bbox_top + bbox_h / 2)
    w = bbox_w
    h = bbox_h
    return x_c, y_c, w, h


def xyxy_to_tlwh(bbox_xyxy):
    tlwh_bboxs = []
    for i, box in enumerate(bbox_xyxy):
        x1, y1, x2, y2 = [int(i) for i in box]
        top = x1  # despite the name, this holds the left x coordinate
        left = y1  # despite the name, this holds the top y coordinate
        w = int(x2 - x1)
        h = int(y2 - y1)
        tlwh_obj = [top, left, w, h]
        tlwh_bboxs.append(tlwh_obj)
    return tlwh_bboxs


def compute_color_for_labels(label):
    """
    Simple function that adds fixed color depending on the class
    """
    color = [int((p * (label ** 2 - label + 1)) % 255) for p in palette]
    return tuple(color)

def draw_boxes(img, bbox, identities=None, offset=(0, 0)):
    for i, box in enumerate(bbox):
        x1, y1, x2, y2 = [int(i) for i in box]
        h = y2 - y1  # height of the box in pixels
        dis_m = person_distance(h)  # call the function to get the pedestrian's distance
        x1 += offset[0]
        x2 += offset[0]
        y1 += offset[1]
        y2 += offset[1]
        # box text and bar
        id = int(identities[i]) if identities is not None else 0
        color = compute_color_for_labels(id)
        label = '{}{:d}'.format("", id)
        label = label + " " + "dis:" + str(dis_m) + "m"  # append the distance to the label
        t_size = cv2.getTextSize(label, cv2.FONT_HERSHEY_PLAIN, 0, 1)[0]  # text size changed; originally 2, 2
        cv2.rectangle(img, (x1, y1), (x2, y2), color, 1)  # box line width set to 1; originally 3
        cv2.rectangle(img, (x1, y1), (x1 + t_size[0] + 3, y1 + t_size[1] + 4), color, -1)
        cv2.putText(img, label, (x1, y1 + t_size[1] + 1),
                    cv2.FONT_HERSHEY_PLAIN, 1, [255, 255, 255], 2)  # text settings changed; originally 2, ..., 2
    return img

def detect(opt):
    out, source, yolo_weights, deep_sort_weights, show_vid, save_vid, save_txt, imgsz, evaluate = \
        opt.output, opt.source, opt.yolo_weights, opt.deep_sort_weights, opt.show_vid, opt.save_vid, \
        opt.save_txt, opt.img_size, opt.evaluate
    webcam = source == '0' or source.startswith(
        'rtsp') or source.startswith('http') or source.endswith('.txt')

    # initialize deepsort
    cfg = get_config()
    cfg.merge_from_file(opt.config_deepsort)
    attempt_download(deep_sort_weights, repo='mikel-brostrom/Yolov5_DeepSort_Pytorch')
    deepsort = DeepSort(cfg.DEEPSORT.REID_CKPT,
                        max_dist=cfg.DEEPSORT.MAX_DIST, min_confidence=cfg.DEEPSORT.MIN_CONFIDENCE,
                        nms_max_overlap=cfg.DEEPSORT.NMS_MAX_OVERLAP, max_iou_distance=cfg.DEEPSORT.MAX_IOU_DISTANCE,
                        max_age=cfg.DEEPSORT.MAX_AGE, n_init=cfg.DEEPSORT.N_INIT, nn_budget=cfg.DEEPSORT.NN_BUDGET,
                        use_cuda=True)

    # Initialize
    device = select_device(opt.device)

    # The MOT16 evaluation runs multiple inference streams in parallel, each one writing to
    # its own .txt file. Hence, in that case, the output folder is not restored
    if not evaluate:
        if os.path.exists(out):
            shutil.rmtree(out)  # delete output folder
        os.makedirs(out)  # make new output folder

    half = device.type != 'cpu'  # half precision only supported on CUDA

    # Load model
    model = attempt_load(yolo_weights, map_location=device)  # load FP32 model
    stride = int(model.stride.max())  # model stride
    imgsz = check_img_size(imgsz, s=stride)  # check img_size
    names = model.module.names if hasattr(model, 'module') else model.names  # get class names
    if half:
        model.half()  # to FP16

    # Set Dataloader
    vid_path, vid_writer = None, None
    # Check if environment supports image displays
    if show_vid:
        show_vid = check_imshow()

    if webcam:
        cudnn.benchmark = True  # set True to speed up constant image size inference
        dataset = LoadStreams(source, img_size=imgsz, stride=stride)
    else:
        dataset = LoadImages(source, img_size=imgsz)

    # Get names and colors
    names = model.module.names if hasattr(model, 'module') else model.names
    colors = [[random.randint(0, 255) for _ in range(3)] for _ in names]

    # Run inference
    if device.type != 'cpu':
        model(torch.zeros(1, 3, imgsz, imgsz).to(device).type_as(next(model.parameters())))  # run once
    t0 = time.time()

    save_path = str(Path(out))
    # extract what is in between the last '/' and last '.'
    txt_file_name = source.split('/')[-1].split('.')[0]
    txt_path = str(Path(out)) + '/' + txt_file_name + '.txt'

    for frame_idx, (path, img, im0s, vid_cap) in enumerate(dataset):
        img = torch.from_numpy(img).to(device)
        img = img.half() if half else img.float()  # uint8 to fp16/32
        img /= 255.0  # 0 - 255 to 0.0 - 1.0
        if img.ndimension() == 3:
            img = img.unsqueeze(0)

        # Inference
        t1 = time_synchronized()
        pred = model(img, augment=opt.augment)[0]

        # Apply NMS
        pred = non_max_suppression(
            pred, opt.conf_thres, opt.iou_thres, classes=opt.classes, agnostic=opt.agnostic_nms)
        t2 = time_synchronized()

        # Process detections
        for i, det in enumerate(pred):  # detections per image
            if webcam:  # batch_size >= 1
                p, s, im0 = path[i], '%g: ' % i, im0s[i].copy()
            else:
                p, s, im0 = path, '', im0s

            s += '%gx%g ' % img.shape[2:]  # print string
            save_path = str(Path(out) / Path(p).name)

            if det is not None and len(det):
                # Rescale boxes from img_size to im0 size
                det[:, :4] = scale_coords(
                    img.shape[2:], det[:, :4], im0.shape).round()

                # Print results
                for c in det[:, -1].unique():
                    n = (det[:, -1] == c).sum()  # detections per class
                    s += '%g %ss, ' % (n, names[int(c)])  # add to string

                xywh_bboxs = []
                confs = []

                # Adapt detections to deep sort input format
                for *xyxy, conf, cls in det:
                    # to deep sort format
                    x_c, y_c, bbox_w, bbox_h = xyxy_to_xywh(*xyxy)
                    xywh_obj = [x_c, y_c, bbox_w, bbox_h]
                    xywh_bboxs.append(xywh_obj)
                    confs.append([conf.item()])

                xywhs = torch.Tensor(xywh_bboxs)
                confss = torch.Tensor(confs)

                # pass detections to deepsort
                outputs = deepsort.update(xywhs, confss, im0)

                # draw boxes for visualization
                if len(outputs) > 0:
                    bbox_xyxy = outputs[:, :4]
                    identities = outputs[:, -1]
                    draw_boxes(im0, bbox_xyxy, identities)
                    # to MOT format
                    tlwh_bboxs = xyxy_to_tlwh(bbox_xyxy)

                    # Write MOT compliant results to file
                    if save_txt:
                        for j, (tlwh_bbox, output) in enumerate(zip(tlwh_bboxs, outputs)):
                            bbox_top = tlwh_bbox[0]
                            bbox_left = tlwh_bbox[1]
                            bbox_w = tlwh_bbox[2]
                            bbox_h = tlwh_bbox[3]
                            identity = output[-1]
                            with open(txt_path, 'a') as f:
                                f.write(('%g ' * 10 + '\n') % (frame_idx, identity, bbox_top,
                                                               bbox_left, bbox_w, bbox_h, -1, -1, -1, -1))  # label format
            else:
                deepsort.increment_ages()

            # Print time (inference + NMS)
            print('%sDone. (%.3fs)' % (s, t2 - t1))

            # Stream results
            if show_vid:
                cv2.namedWindow("Webcam", cv2.WINDOW_NORMAL)
                cv2.resizeWindow("Webcam", 1280, 720)
                cv2.moveWindow("Webcam", 0, 100)
                cv2.imshow("Webcam", im0)
                # cv2.imshow(p, im0)
                if cv2.waitKey(1) == ord('q'):  # q to quit
                    raise StopIteration

            # Save results (image with detections)
            if save_vid:
                if vid_path != save_path:  # new video
                    vid_path = save_path
                    if isinstance(vid_writer, cv2.VideoWriter):
                        vid_writer.release()  # release previous video writer
                    if vid_cap:  # video
                        fps = vid_cap.get(cv2.CAP_PROP_FPS)
                        w = int(vid_cap.get(cv2.CAP_PROP_FRAME_WIDTH))
                        h = int(vid_cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
                    else:  # stream
                        fps, w, h = 30, im0.shape[1], im0.shape[0]
                        save_path += '.mp4'
                    vid_writer = cv2.VideoWriter(save_path, cv2.VideoWriter_fourcc(*'mp4v'), fps, (w, h))
                vid_writer.write(im0)

    if save_txt or save_vid:
        print('Results saved to %s' % os.getcwd() + os.sep + out)
        if platform.system() == 'Darwin':  # macOS
            os.system('open ' + save_path)

    print('Done. (%.3fs)' % (time.time() - t0))

if __name__ == '__main__':
    parser = argparse.ArgumentParser()
    parser.add_argument('--yolo_weights', type=str, default='yolov5/weights/yolov5s.pt', help='model.pt path')
    parser.add_argument('--deep_sort_weights', type=str, default='deep_sort_pytorch/deep_sort/deep/checkpoint/ckpt.t7', help='ckpt.t7 path')
    # file/folder, 0 for webcam
    # parser.add_argument('--source', type=str, default='0', help='source')
    parser.add_argument('--source', type=str, default='1.mp4', help='source')
    parser.add_argument('--output', type=str, default='output', help='output folder')  # output folder
    parser.add_argument('--img-size', type=int, default=640, help='inference size (pixels)')
    parser.add_argument('--conf-thres', type=float, default=0.4, help='object confidence threshold')
    parser.add_argument('--iou-thres', type=float, default=0.5, help='IOU threshold for NMS')
    parser.add_argument('--fourcc', type=str, default='mp4v', help='output video codec (verify ffmpeg support)')
    parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    parser.add_argument('--show-vid', action='store_true', help='display tracking video results')
    parser.add_argument('--save-vid', action='store_true', help='save video tracking results')
    parser.add_argument('--save-txt', action='store_true', help='save MOT compliant results to *.txt')
    # class 0 is person, 1 is bicycle, 2 is car... 79 is toothbrush
    # parser.add_argument('--classes', nargs='+', type=int, help='filter by class')
    parser.add_argument('--classes', nargs='+', default=[0], type=int, help='filter by class')
    parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS')
    parser.add_argument('--augment', action='store_true', help='augmented inference')
    parser.add_argument('--evaluate', action='store_true', help='evaluation mode (keep the existing output folder)')
    parser.add_argument("--config_deepsort", type=str, default="deep_sort_pytorch/configs/deep_sort.yaml")
    args = parser.parse_args()
    args.img_size = check_img_size(args.img_size)

    with torch.no_grad():
        detect(args)
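When --save-txt is passed, the f.write call in detect appends one row per tracked box with ten space-separated values: frame index, track ID, x, y, width, height, and four -1 placeholders. The following is a minimal sketch of reading such rows back and grouping them by track ID. The helper name parse_mot_lines is hypothetical and the sample rows are invented for illustration.

```python
from collections import defaultdict


def parse_mot_lines(lines):
    """Group rows written by --save-txt by track ID."""
    tracks = defaultdict(list)
    for line in lines:
        fields = line.split()
        if len(fields) < 6:
            continue  # skip blank or malformed rows
        # '%g' may print large integers in exponent form, so go through float first
        frame, track_id = int(float(fields[0])), int(float(fields[1]))
        x, y, w, h = (float(v) for v in fields[2:6])
        tracks[track_id].append((frame, x, y, w, h))
    return dict(tracks)


if __name__ == "__main__":
    # Invented sample rows in the same layout as the output file.
    sample = [
        "0 1 100 50 40 202 -1 -1 -1 -1 ",
        "1 1 102 50 40 200 -1 -1 -1 -1 ",
    ]
    for track_id, rows in parse_mot_lines(sample).items():
        print(track_id, len(rows), "frames")
```

The stored box height (the sixth value) is the same h that draw_boxes passes to person_distance, so distances can be recomputed offline from this file.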

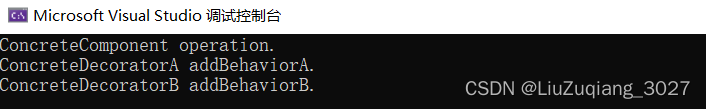

4. Results

As before, run track.py, or run this command in a terminal: python track.py --source 1.mp4 --show-vid --save-vid --yolo_weights yolov5/weights/yolov5s.pt

Download the packaged code

Link 1: https://download.csdn.net/download/qq_45077760/87715776

Link 2: https://github.com/up-up-up-up/yolov5_Monocular_ranging

The blog homepage has more content on ranging.