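Table of Contents

- Setting Up the Hadoop Runtime Environment (Development Focus)

- I. Installing the JDK

- II. Installing and Configuring Hadoop

- 1. Installing Hadoop

- 2. Hadoop Directory Structure

- 3. Setting Up Passwordless Login

- 4. Fully Distributed Mode (Development Focus)

- 1) Distributing the JDK

- 2) Cluster Configuration

- (1) Cluster Deployment Plan

- (2) Configuration Files Overview

- (3) Configuring the Cluster

- 4) Basic Cluster Testing

- (1) Uploading Files to the Cluster

- 5) Configuring the History Server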

Setting Up the Hadoop Runtime Environment (Development Focus)

I. Installing the JDK

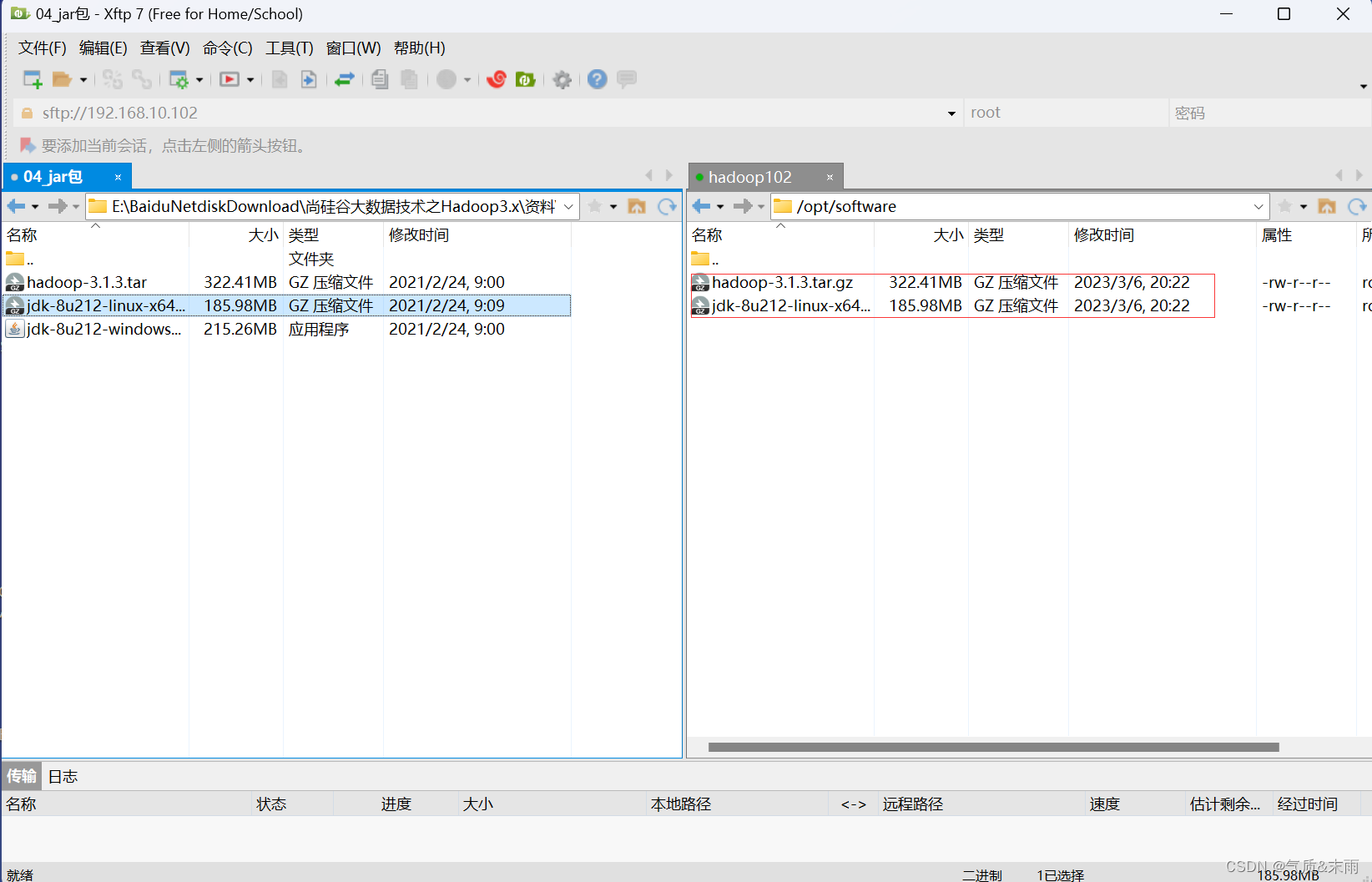

Upload the Hadoop and JDK installation packages to the /opt/software directory on hadoop102.

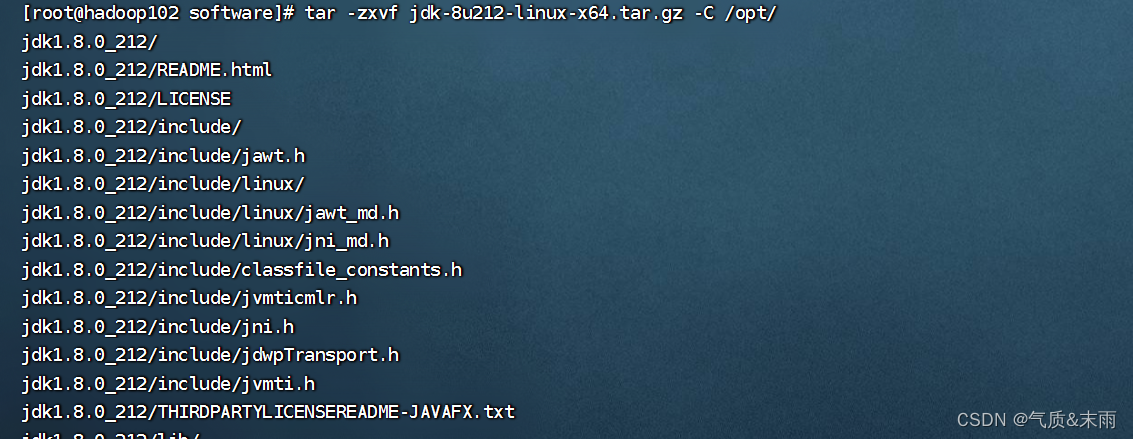

To extract the JDK archive into /opt, run: tar -zxvf jdk-8u212-linux-x64.tar.gz -C /opt/

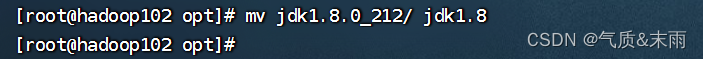

The extracted directory name is long, so rename it to jdk1.8 (run this from /opt): mv jdk1.8.0_212/ jdk1.8

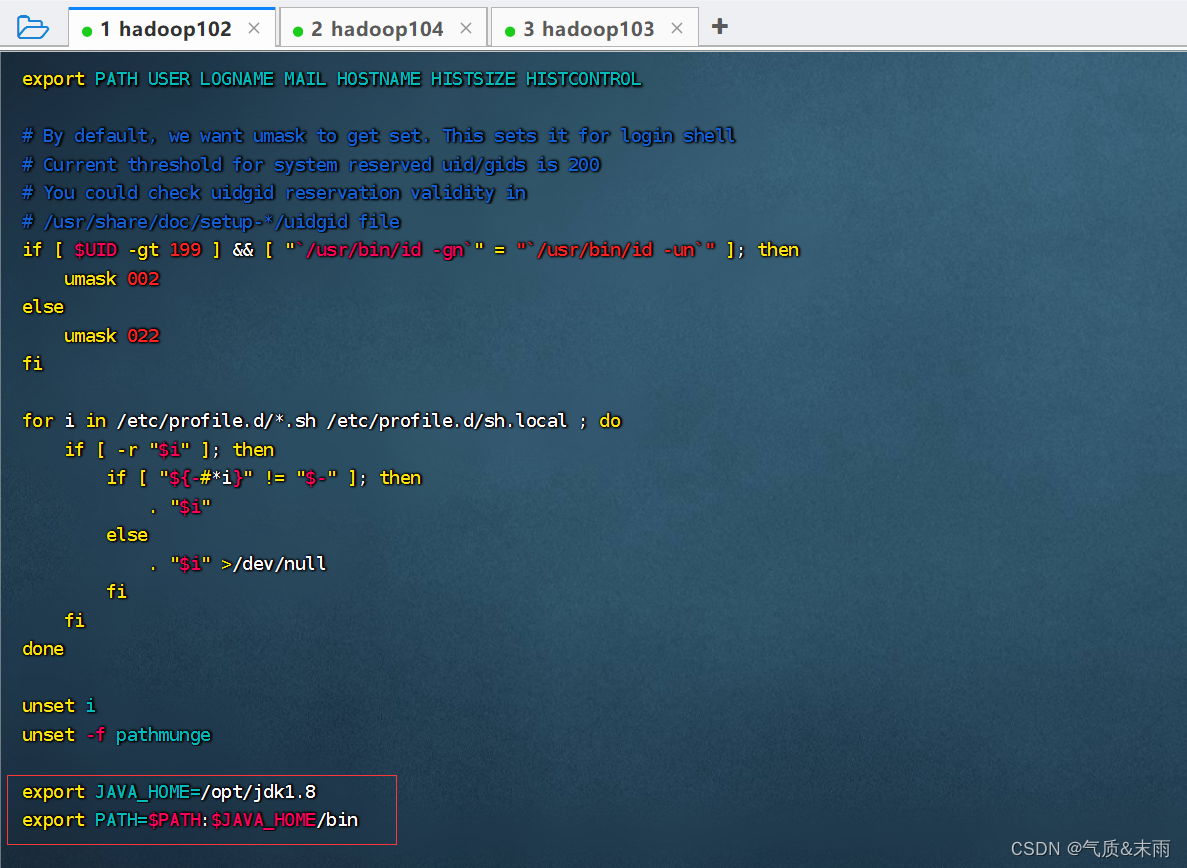

Next, configure the JDK environment variables.

Open the profile with vim /etc/profile and add the following variables:

export JAVA_HOME=/opt/jdk1.8

export PATH=$PATH:$JAVA_HOME/bin

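Reload the profile so the changes take effect: source /etc/profile

Verify the installation: java -version should print the JDK version.

II. Installing and Configuring Hadoop

1. Installing Hadoop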

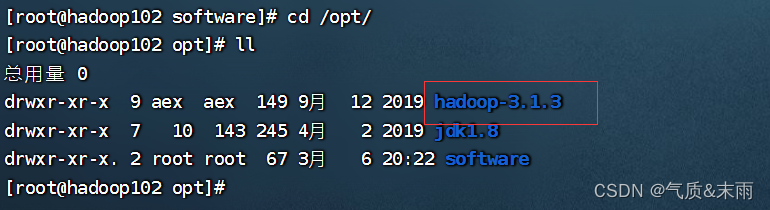

Extract the Hadoop archive from /opt/software into /opt.

Run: tar -zxvf hadoop-3.1.3.tar.gz -C /opt and the hadoop-3.1.3 directory appears under /opt.

Open the profile again with vim /etc/profile and add the Hadoop environment variables:

export HADOOP_HOME=/opt/hadoop-3.1.3

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

Reload the profile so the changes take effect: source /etc/profile

Verify the installation: hadoop version should print the Hadoop version.
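As an alternative to editing /etc/profile by hand, the variables from the JDK and Hadoop steps can be kept together in one dedicated file. The following is a minimal sketch under stated assumptions: the function name write_hadoop_env and the default path /etc/profile.d/hadoop_env.sh are invented for illustration and are not part of the original setup. On many distributions /etc/profile sources the *.sh files under /etc/profile.d, but check that this holds on your system.

```shell
#!/usr/bin/env bash
# Sketch: write the article's environment variables into a single file.
# The default target path is an assumption; pass another path as $1.
write_hadoop_env() {
  local target="${1:-/etc/profile.d/hadoop_env.sh}"
  cat > "$target" <<'EOF'
# JDK
export JAVA_HOME=/opt/jdk1.8
export PATH=$PATH:$JAVA_HOME/bin
# Hadoop
export HADOOP_HOME=/opt/hadoop-3.1.3
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
# Users that run the HDFS daemons (root, as in this article)
export HDFS_NAMENODE_USER=root
export HDFS_DATANODE_USER=root
export HDFS_SECONDARYNAMENODE_USER=root
EOF
}

# Example (as root): write_hadoop_env && source /etc/profile
```

Keeping the variables in a separate file makes it easy to copy the same settings to the other nodes later.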

2、hadoop 目录结构

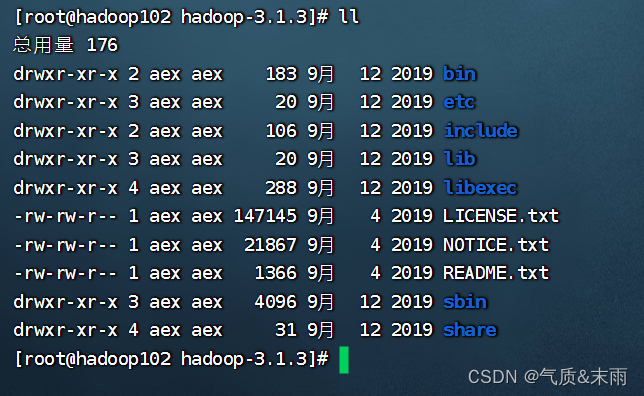

查看 hadoop 的目录结构

hdfs 是存储的,mapred 是计算的,yarn是资源调度的

hadoop 重要目录:

(1)bin 目录:存放对 Hadoop 相关服务(hdfs,yarn,mapred)进行操作的脚本

(2)etc 目录:Hadoop 的配置文件目录,存放 Hadoop 的配置文件

(3)lib 目录:存放 Hadoop 的本地库(对数据进行压缩解压缩功能)

(4)sbin 目录:存放启动或停止 Hadoop 相关服务的脚本

(5)share 目录:存放 Hadoop 的依赖 jar 包、文档、和官方案例

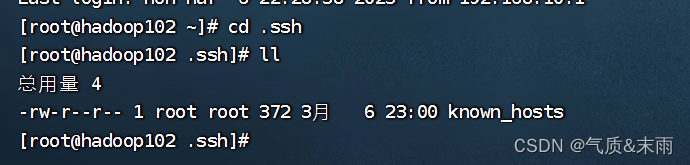

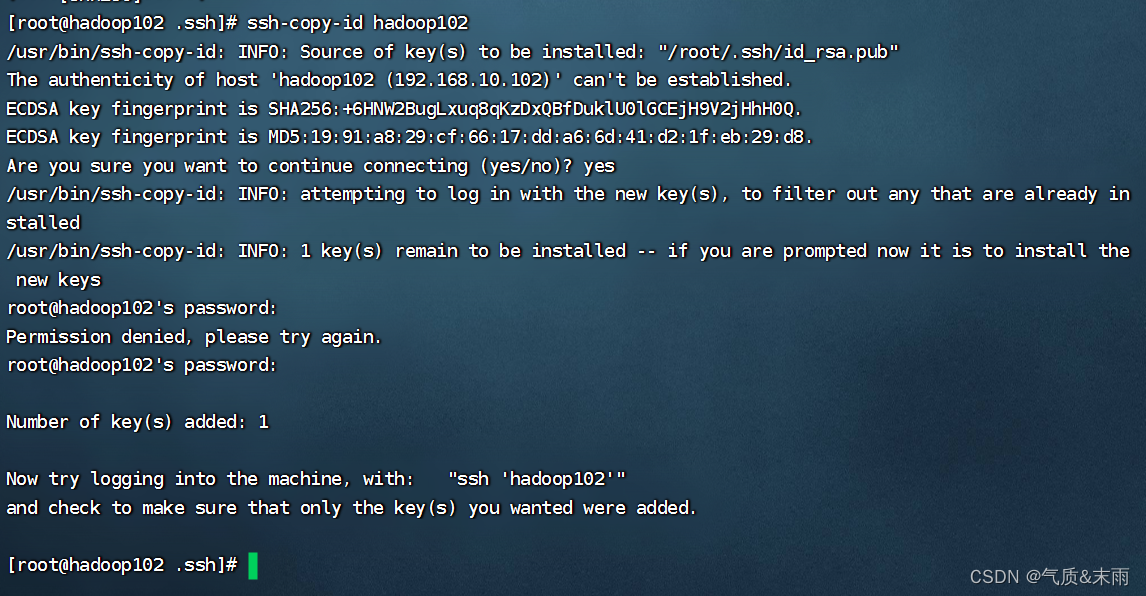

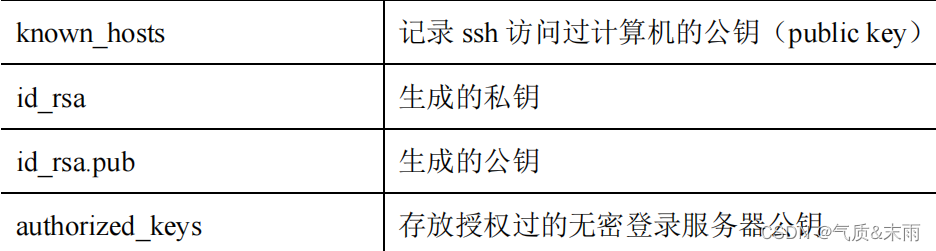

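3. Setting Up Passwordless Login

Go to the SSH directory: cd ~/.ssh

Generate a key pair: ssh-keygen -t rsa, then press Enter three times to accept the defaults.

This creates two files: id_rsa (the private key) and id_rsa.pub (the public key).

Copy the public key to every machine you want to log in to without a password: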

ssh-copy-id hadoop102

ssh-copy-id hadoop103

ssh-copy-id hadoop104

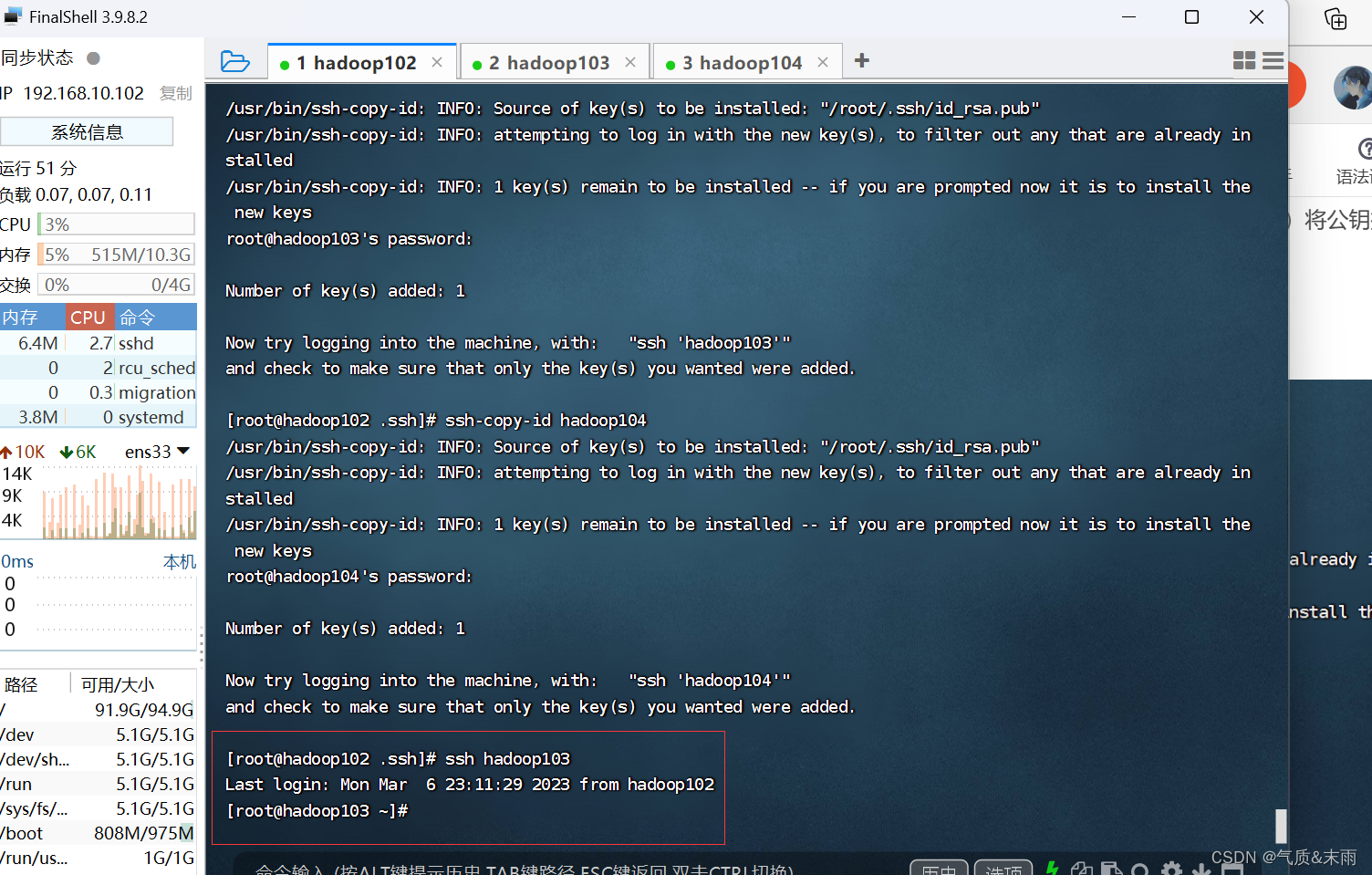

You can now log in to the other virtual machines without entering a password.

Note:

Repeat the same key setup as root on hadoop103 and on hadoop104, so that every node, hadoop102 included, can log in to hadoop102, hadoop103, and hadoop104 without a password.
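Because the same three ssh-copy-id commands have to be repeated on every node, a small loop can save typing. This is a sketch, not the article's original procedure: the function name copy_key_to_all and the DRY_RUN switch are hypothetical helpers, and the host list simply mirrors the hostnames used in this article.

```shell
#!/usr/bin/env bash
# Hosts from this article's cluster; adjust to your own hostnames.
HOSTS="hadoop102 hadoop103 hadoop104"

# Copy the current user's public key to every host.
# With DRY_RUN=1 the commands are only printed, not executed
# (DRY_RUN is a helper variable invented for this sketch).
copy_key_to_all() {
  local host
  for host in $HOSTS; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "ssh-copy-id $host"
    else
      ssh-copy-id "$host"
    fi
  done
}

# Example: preview first, then run for real on each node.
# DRY_RUN=1 copy_key_to_all
# copy_key_to_all
```

Each real ssh-copy-id call still prompts once for the target host's password; after that, logins from this node no longer need one.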

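4. Fully Distributed Mode (Development Focus)

1) Prepare three client machines (firewall disabled, static IP, hostname set)

2) Install the JDK

3) Configure the environment variables

4) Install Hadoop

5) Configure the environment variables

6) Configure the cluster

7) Start the daemons one node at a time

8) Configure SSH

9) Start the whole cluster and test it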

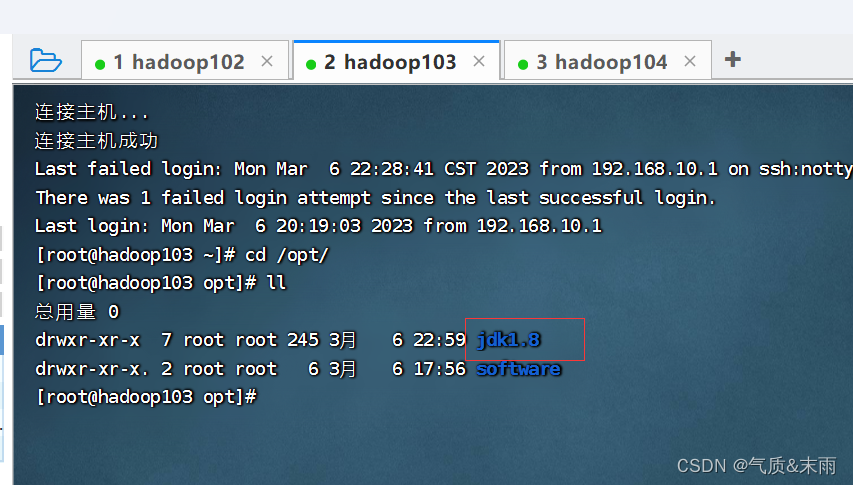

1) Distributing the JDK

On hadoop102, copy the /opt/jdk1.8 directory to hadoop103 and hadoop104.

Run: scp -r $JAVA_HOME root@hadoop103:/opt

Run: scp -r $JAVA_HOME root@hadoop104:/opt

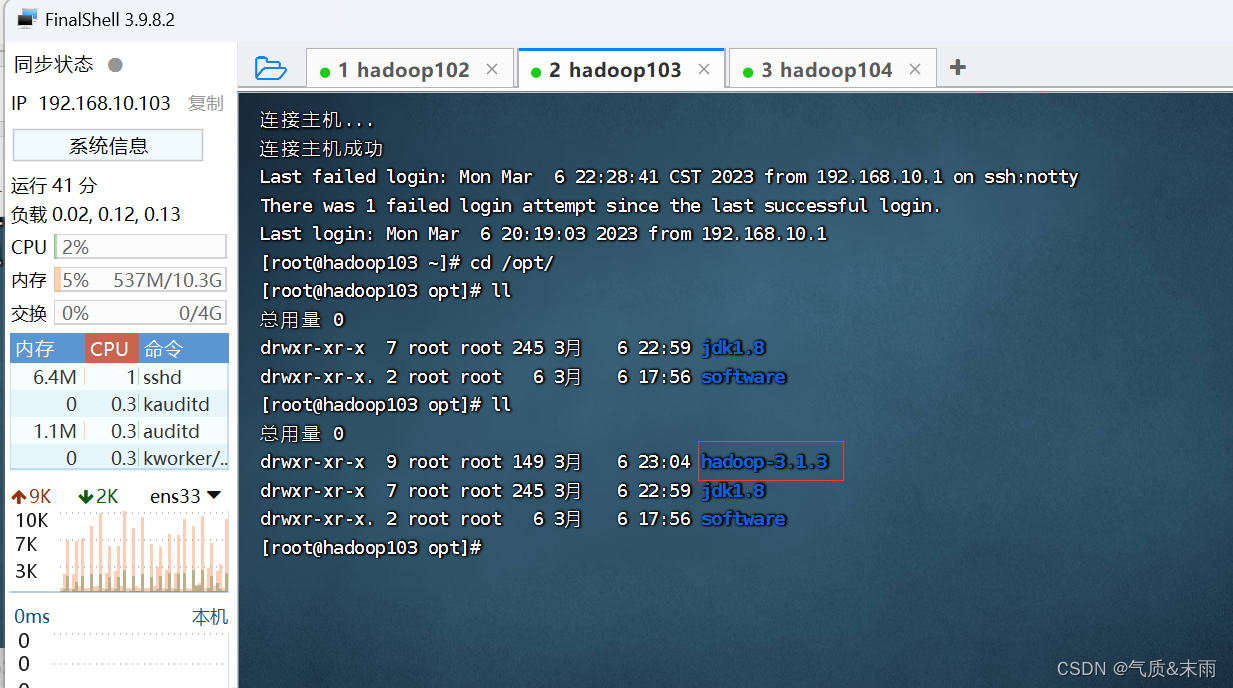

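hadoop103 and hadoop104 now have the JDK directory as well.

Copy Hadoop to hadoop103 and hadoop104 in the same way.

Run: scp -r $HADOOP_HOME root@hadoop103:/opt (and the same command with root@hadoop104)

hadoop103 and hadoop104 now have the Hadoop directory as well.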
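The copy commands above follow one pattern, so they can be wrapped in a loop. The sketch below is one possible way to do that; the function name distribute, the TARGETS list, and the DRY_RUN switch are assumptions made for illustration. Note that copying into the parent directory (/opt) is what creates /opt/jdk1.8 or /opt/hadoop-3.1.3 on the target.

```shell
#!/usr/bin/env bash
# Nodes that receive copies from hadoop102 (hostnames from this article).
TARGETS="hadoop103 hadoop104"

# distribute SRC DEST_PARENT
# Recursively copy SRC into DEST_PARENT on every target host as root.
# With DRY_RUN=1 the scp commands are only printed (hypothetical helper).
distribute() {
  local src="$1" dest_parent="$2" host
  for host in $TARGETS; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp -r $src root@$host:$dest_parent"
    else
      scp -r "$src" "root@$host:$dest_parent"
    fi
  done
}

# Examples:
# distribute "$JAVA_HOME" /opt
# distribute "$HADOOP_HOME" /opt
```

Remember that the environment variables in /etc/profile are not copied by this step and still need to be set on each node.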

2)集群配置

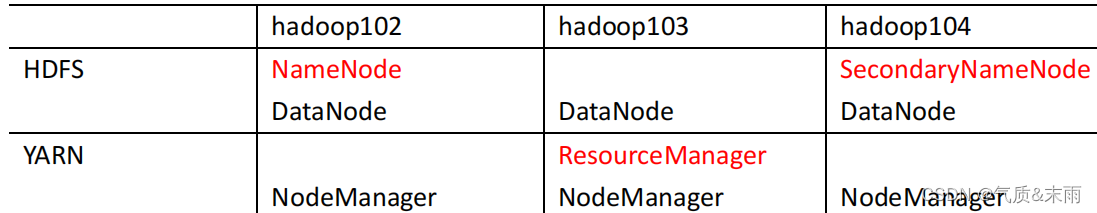

(1) 集群部署规划

注意:

NameNode 和 SecondaryNameNode 不要安装在同一台服务器

ResourceManager 也很消耗内存,不要和 NameNode、SecondaryNameNode 配置在

同一台机器上

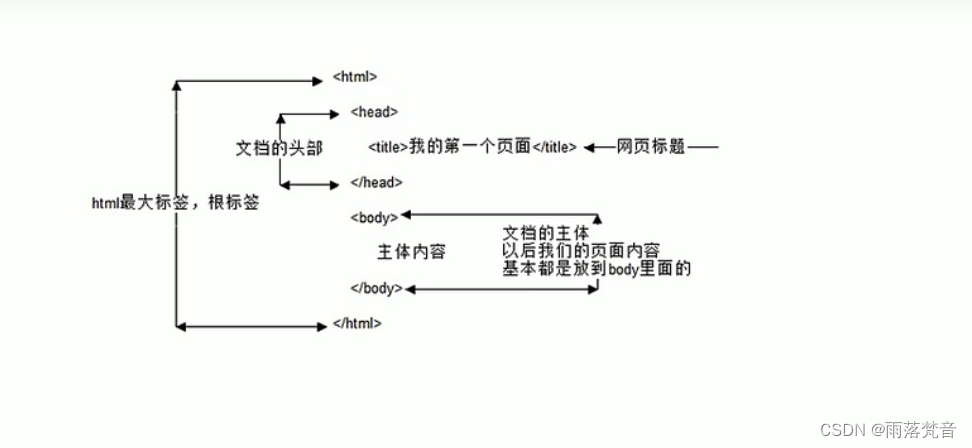

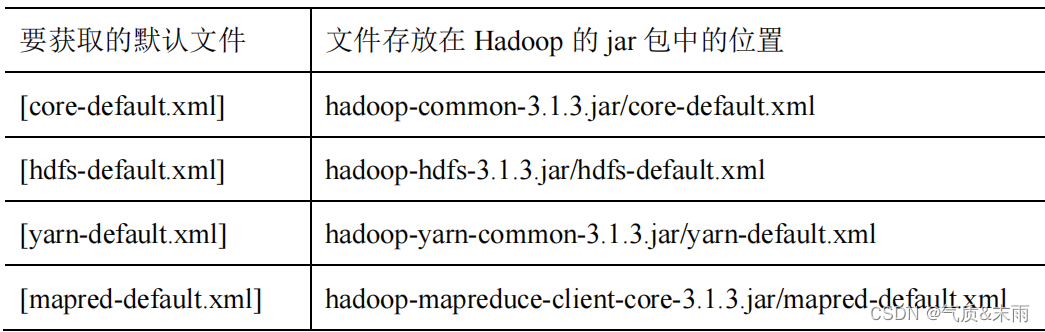

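(2) Configuration Files Overview

Hadoop has two kinds of configuration files: default configuration files and custom (site-specific) configuration files. You only need to edit a custom configuration file when you want to override a default value, and you change just the corresponding property.

1. Default configuration files:

2. Custom configuration files:

The four files core-site.xml, hdfs-site.xml, yarn-site.xml, and mapred-site.xml are stored under $HADOOP_HOME/etc/hadoop, and you can modify them to suit your project's needs.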

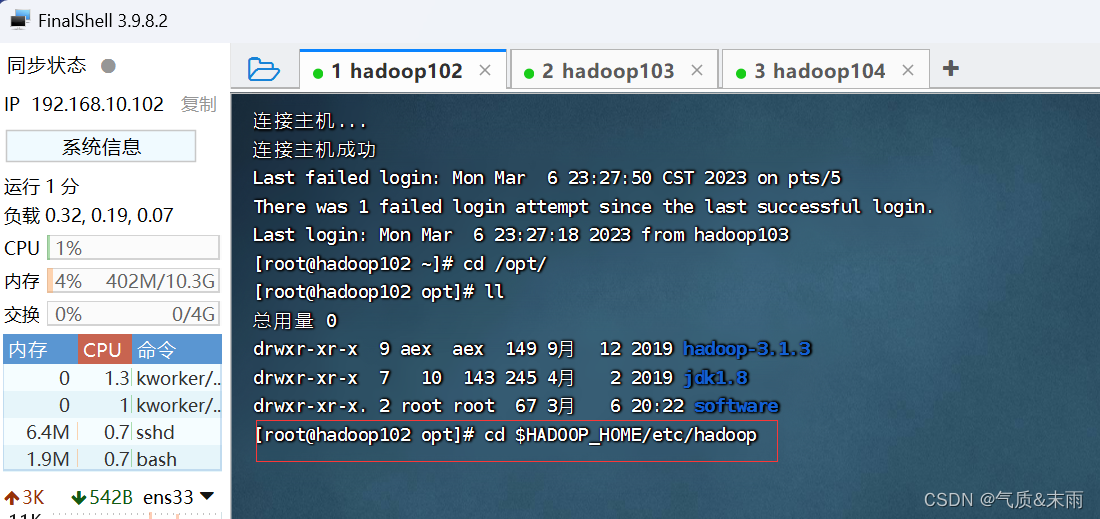

(3) 配置集群

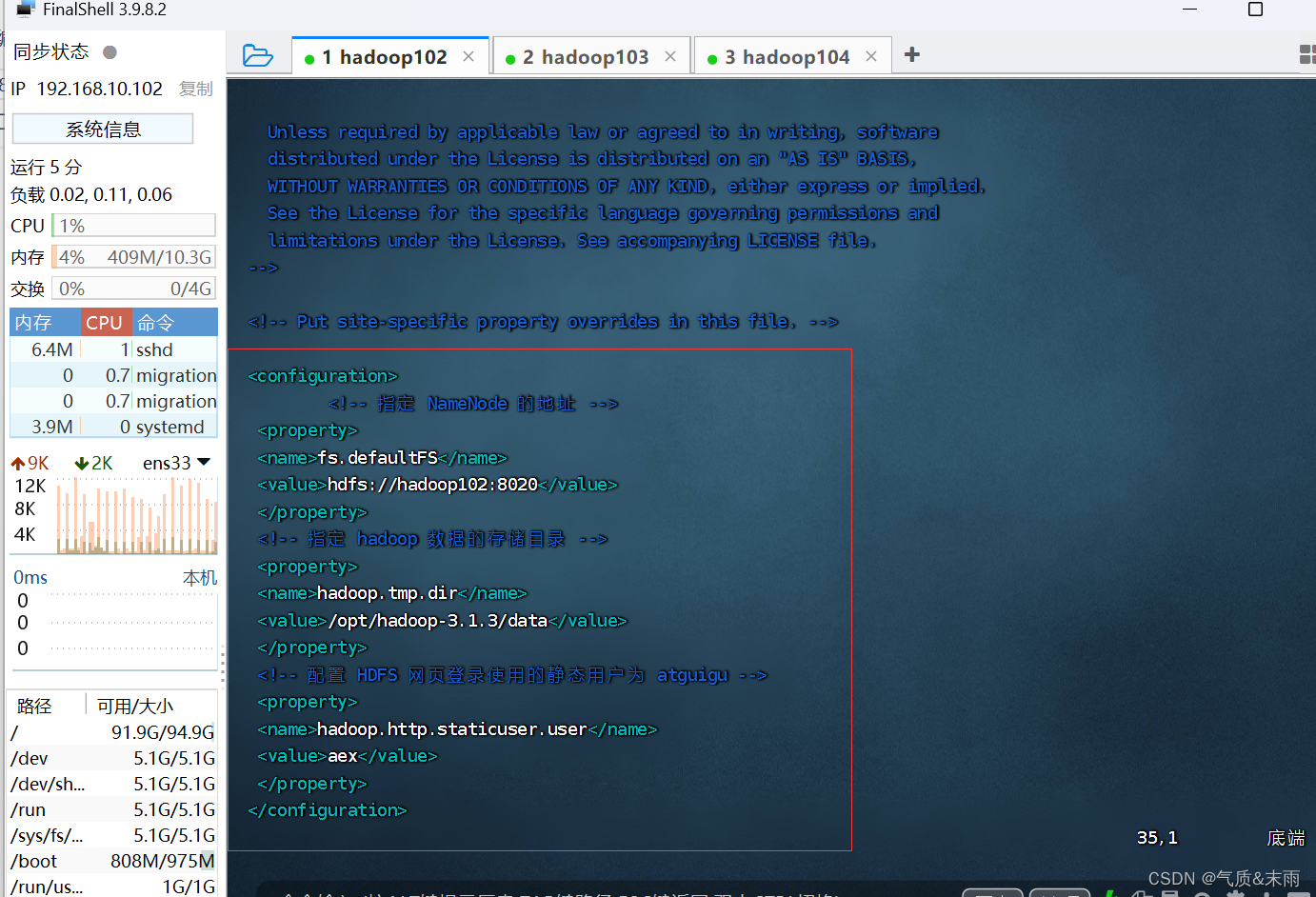

1、核心配置文件

配置 core-site.xml

输入命令:cd $HADOOP_HOME/etc/hadoop 进入hadoop的配置的目录

输入命令:vim core-site.xml 配置core-site.xml 文件

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <!-- Address of the NameNode -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://hadoop102:8020</value>
  </property>
  <!-- Directory where Hadoop stores its data -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/hadoop-3.1.3/data</value>
  </property>
  <!-- Static user for the HDFS web UI (aex in this setup) -->
  <property>
    <name>hadoop.http.staticuser.user</name>
    <value>aex</value>
  </property>
</configuration>

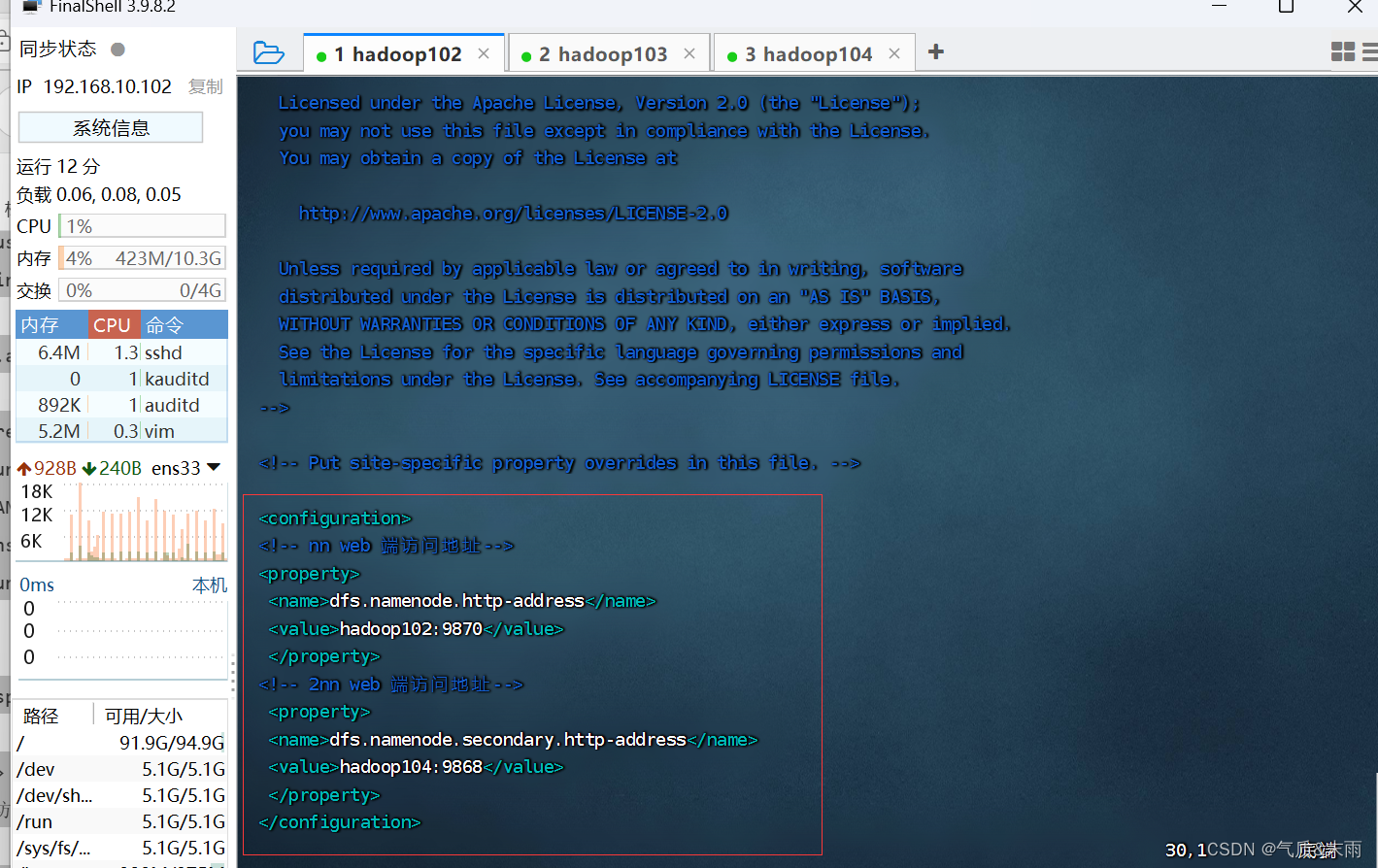

2. HDFS configuration file

Configure hdfs-site.xml.

Edit the file: vim hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <!-- NameNode web UI address -->
  <property>
    <name>dfs.namenode.http-address</name>
    <value>hadoop102:9870</value>
  </property>
  <!-- SecondaryNameNode web UI address -->
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>hadoop104:9868</value>
  </property>
</configuration>

3. YARN configuration file

Configure yarn-site.xml.

Edit the file: vim yarn-site.xml

<?xml version="1.0"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<configuration>
  <!-- Use the MapReduce shuffle auxiliary service -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <!-- Host of the ResourceManager -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>hadoop103</value>
  </property>
  <!-- Environment variables that containers inherit -->
  <property>
    <name>yarn.nodemanager.env-whitelist</name>
    <value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>
  </property>
</configuration>

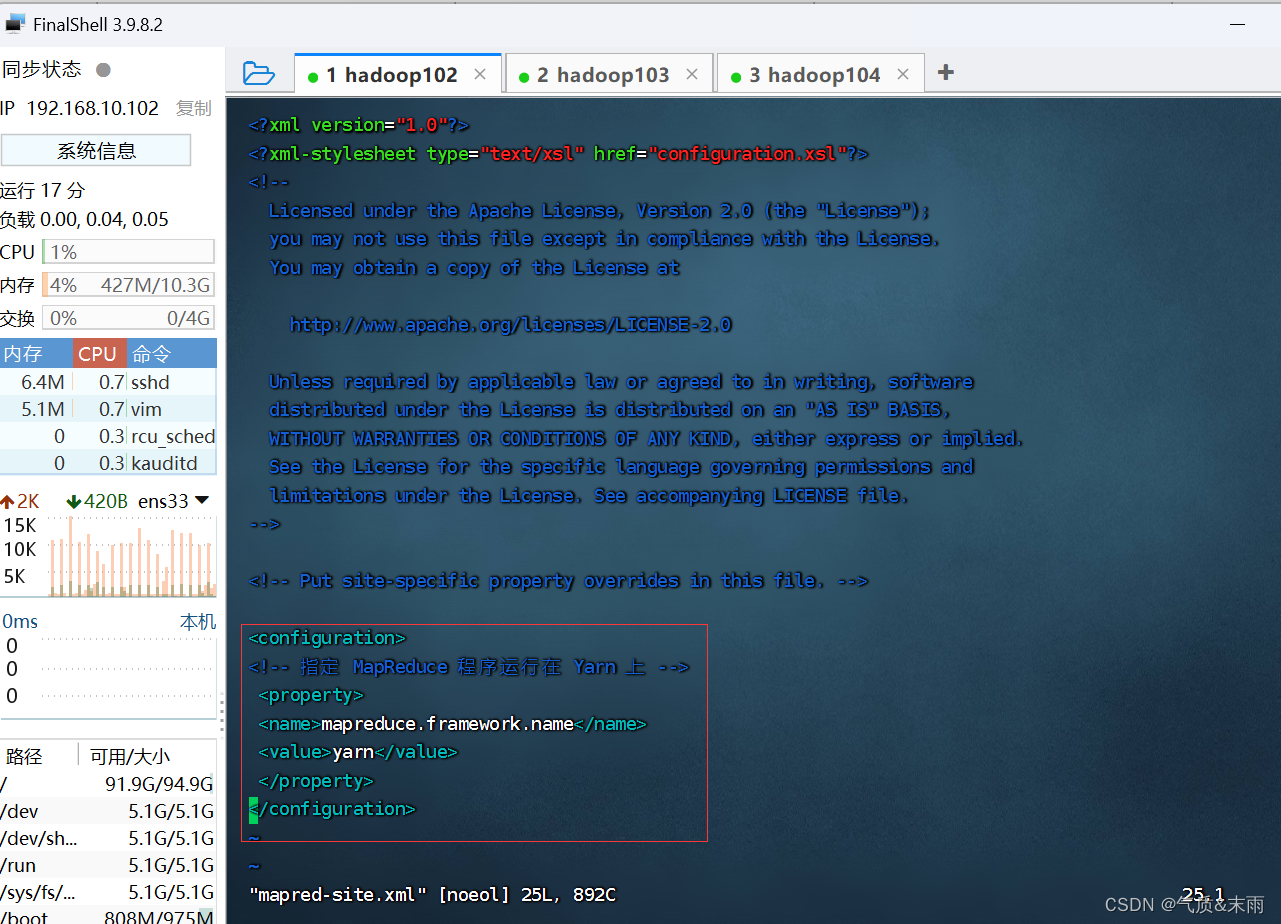

4. MapReduce configuration file

Edit the file: vim mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <!-- Run MapReduce jobs on YARN -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
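Before distributing the files, it can help to read a few values back and confirm that they are what you intended. The sketch below is a deliberately simple text scan, not a real XML parser: it assumes each <name> and <value> element sits on its own line, as in the files shown in this article, and the function name get_property is hypothetical. On a node where Hadoop is installed, hdfs getconf -confKey fs.defaultFS reports the value Hadoop actually resolves and is the more reliable check.

```shell
#!/usr/bin/env bash
# get_property FILE NAME
# Print the <value> that follows <name>NAME</name> in a Hadoop site file.
# Assumes <name> and <value> are on separate lines (layout used in this article).
get_property() {
  local file="$1" name="$2"
  awk -v n="$name" '
    # Remember that the wanted <name> line has been seen.
    index($0, "<name>" n "</name>") { found = 1; next }
    # The first <value> line after it holds the setting.
    found && match($0, /<value>[^<]*<\/value>/) {
      # Strip the 7-character opening tag and 8-character closing tag.
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$file"
}

# Example:
# get_property "$HADOOP_HOME/etc/hadoop/core-site.xml" fs.defaultFS
```

If the property is absent, the function prints nothing, which makes a missing setting easy to spot.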

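5. Distribute the configured Hadoop configuration files across the cluster

Hadoop already exists on the other nodes, so copy only the configuration directory. Copying all of $HADOOP_HOME onto an existing $HADOOP_HOME would nest a second hadoop-3.1.3 directory inside it.

Send the configuration to hadoop103: scp -r $HADOOP_HOME/etc/hadoop root@hadoop103:$HADOOP_HOME/etc/

Send the configuration to hadoop104: scp -r $HADOOP_HOME/etc/hadoop root@hadoop104:$HADOOP_HOME/etc/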

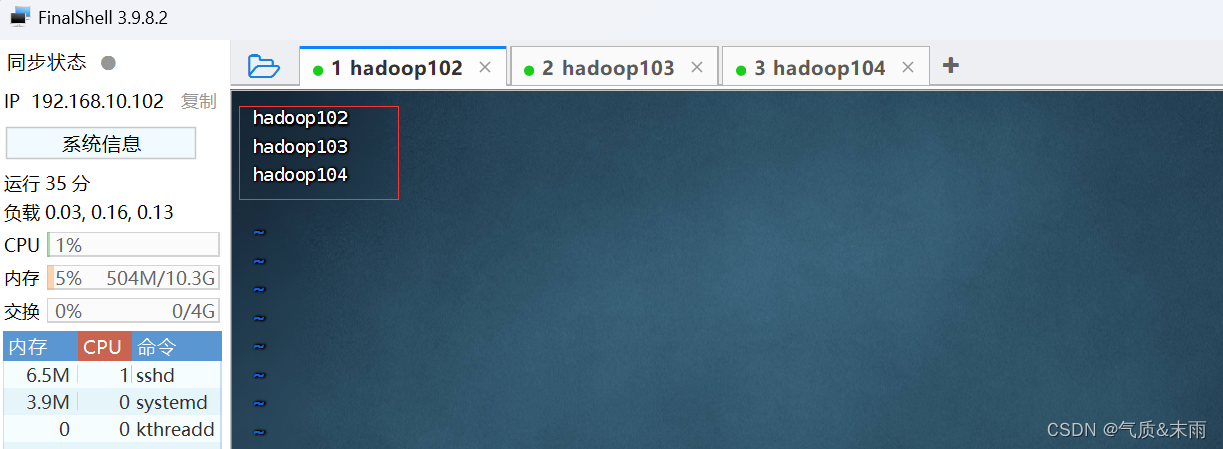

6. Configure workers

Edit the file: vim workers

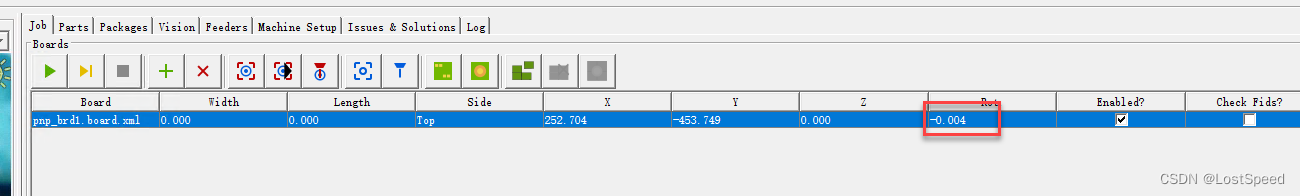

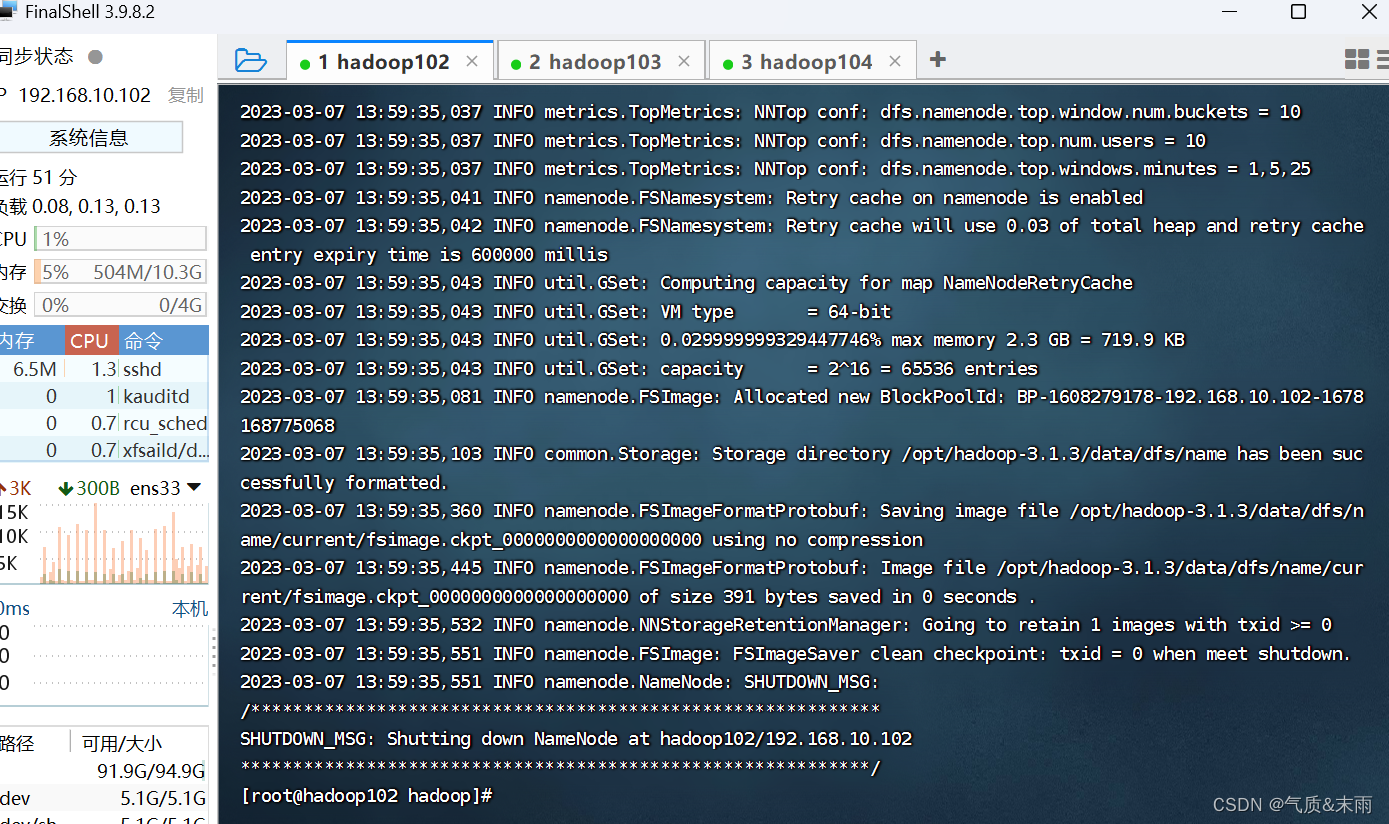
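The workers file lists, one hostname per line, the nodes on which the start scripts launch worker daemons. Its exact contents appear only in the screenshots above, so the sketch below assumes all three hosts of this article act as workers; the function name write_workers is hypothetical. Writing the file with printf avoids stray trailing spaces and blank lines, which are best kept out of this file.

```shell
#!/usr/bin/env bash
# write_workers FILE HOST...
# Write one hostname per line, with no trailing spaces or blank lines.
write_workers() {
  local file="$1"
  shift
  printf '%s\n' "$@" > "$file"
}

# Example (host list is an assumption based on this article's cluster):
# write_workers "$HADOOP_HOME/etc/hadoop/workers" hadoop102 hadoop103 hadoop104
```

If you start the cluster from more than one node, copy the workers file to those nodes as well.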

5、进行初始化

输入命令:hdfs namenode -format 下面会出现很长一串

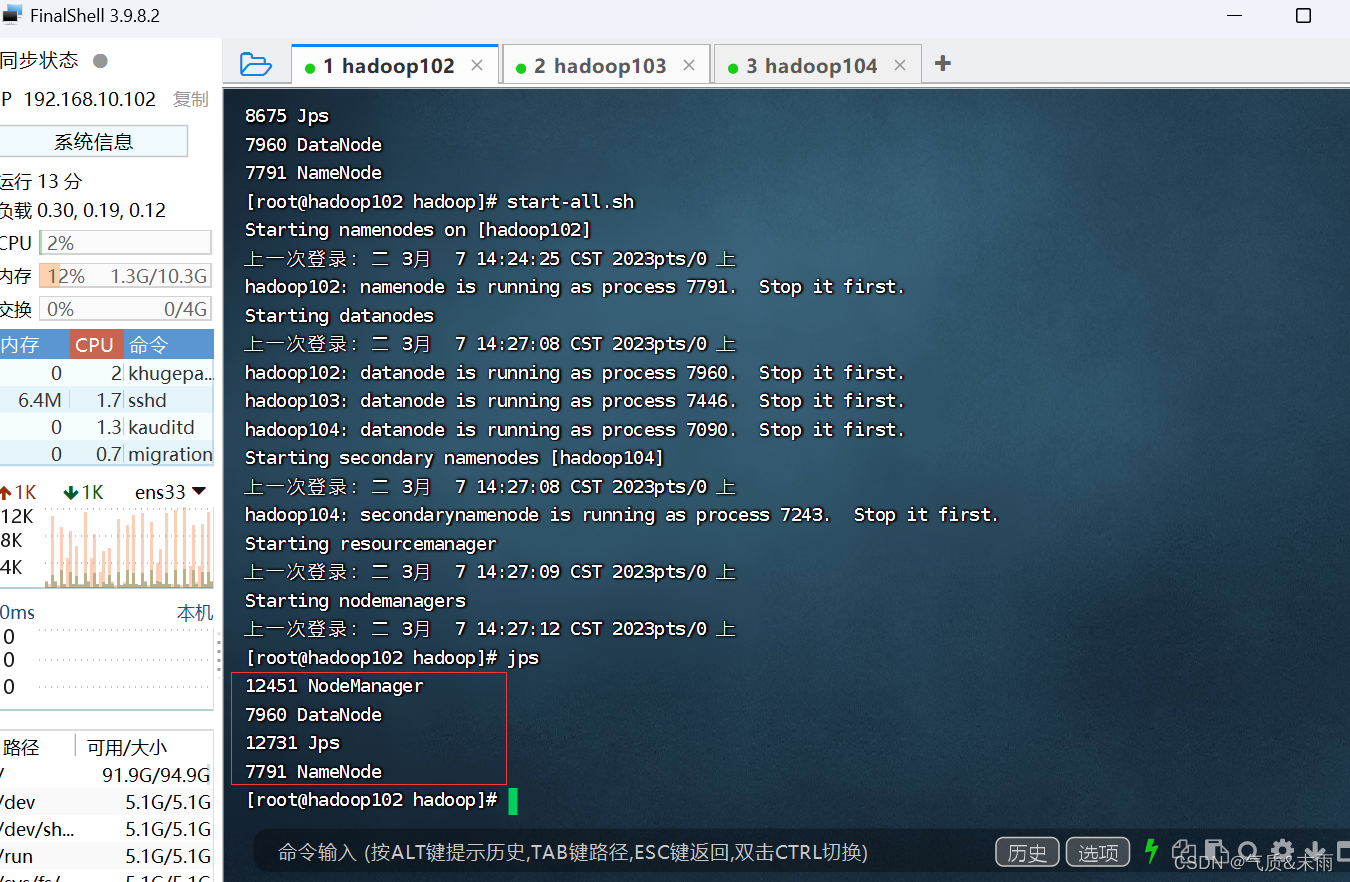

启动节点

输入命令:start-all.sh

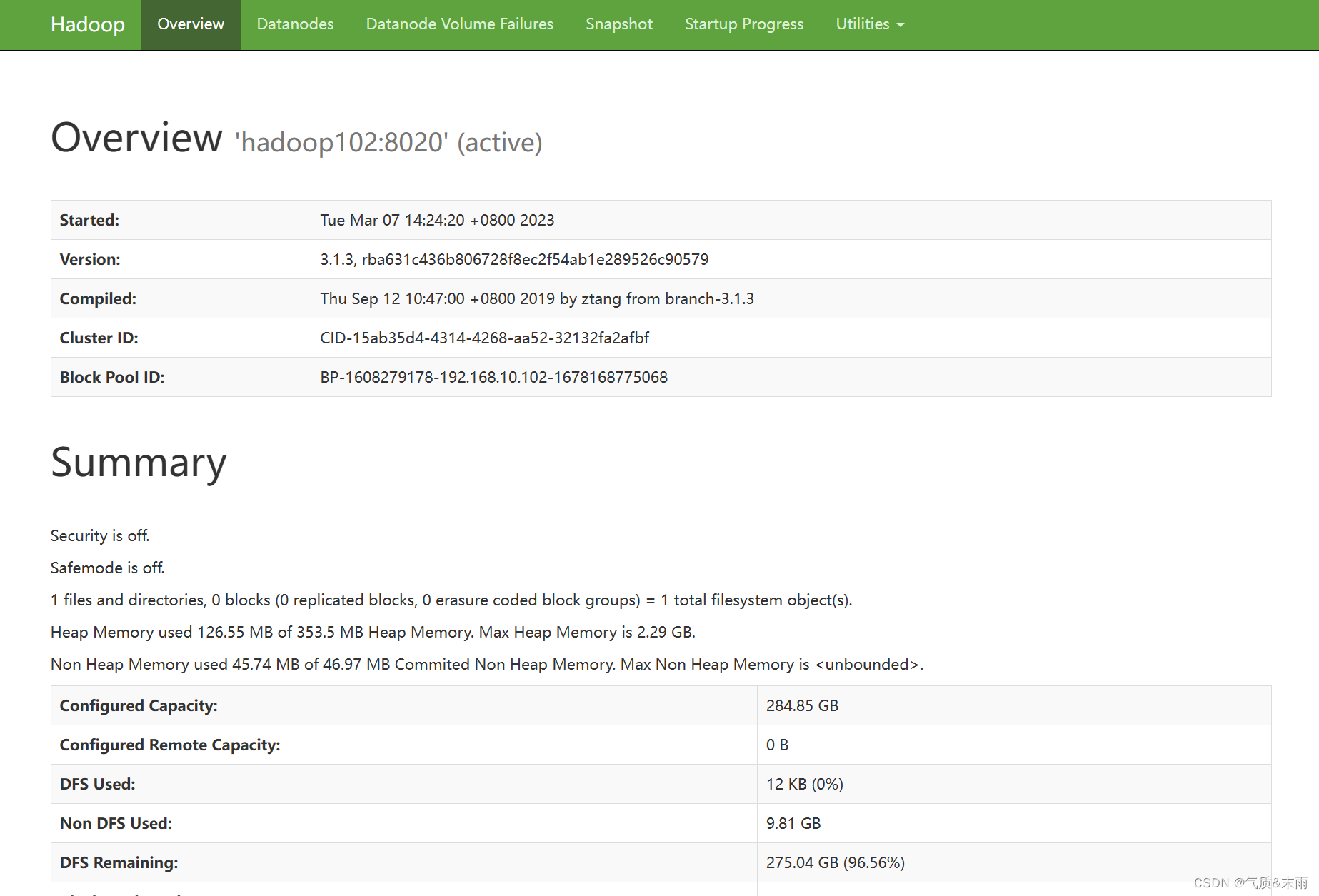

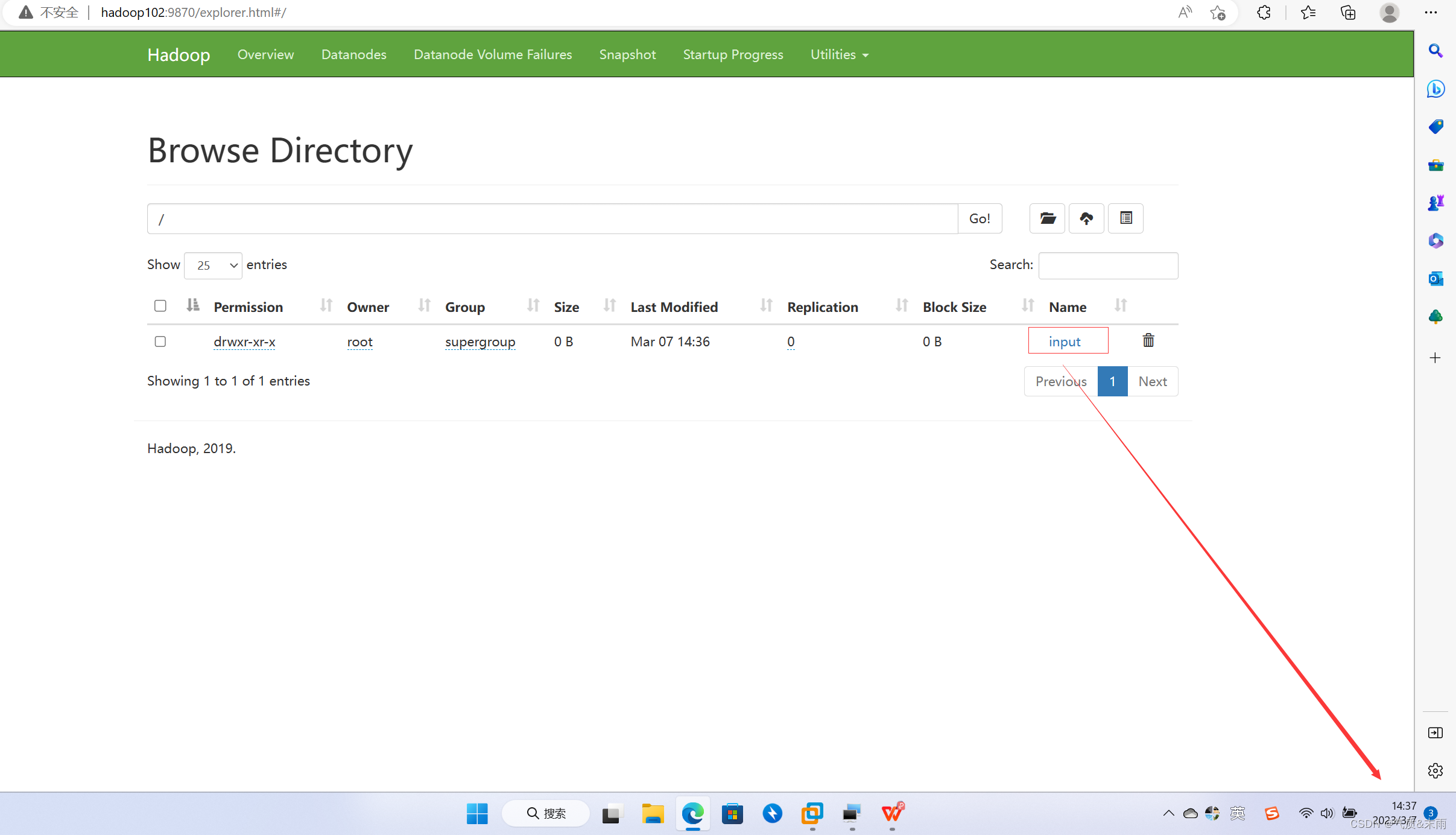

To view HDFS in the web UI, enter hadoop102:9870 in a browser.
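Besides the web UI, the jps tool that ships with the JDK lists the Java processes running on a node, one "PID Name" pair per line. The sketch below filters that output for daemons that should be present; the function name missing_daemons is hypothetical, and which daemons to expect on each host depends on your own deployment plan.

```shell
#!/usr/bin/env bash
# missing_daemons NAME...
# Read `jps` output on stdin and print every expected daemon NAME
# that does not appear in it. Prints nothing when all are running.
missing_daemons() {
  local running expected
  # The second column of jps output is the process (main class) name.
  running=$(awk '{print $2}')
  for expected in "$@"; do
    echo "$running" | grep -qx "$expected" || echo "$expected"
  done
}

# Example on hadoop102 (expected names are an assumption for this cluster):
# jps | missing_daemons NameNode DataNode
# Example for a remote node:
# ssh hadoop104 jps | missing_daemons SecondaryNameNode DataNode
```

An empty result means every daemon you named was found on that node.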

4) 集群基本测试

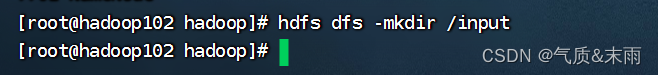

(1) 上传文件到集群

输入命令:hdfs dfs -mkdrir /input 先在hdfs上创建一个 input目录

可以看到 hdfs 上已经有这个文件了

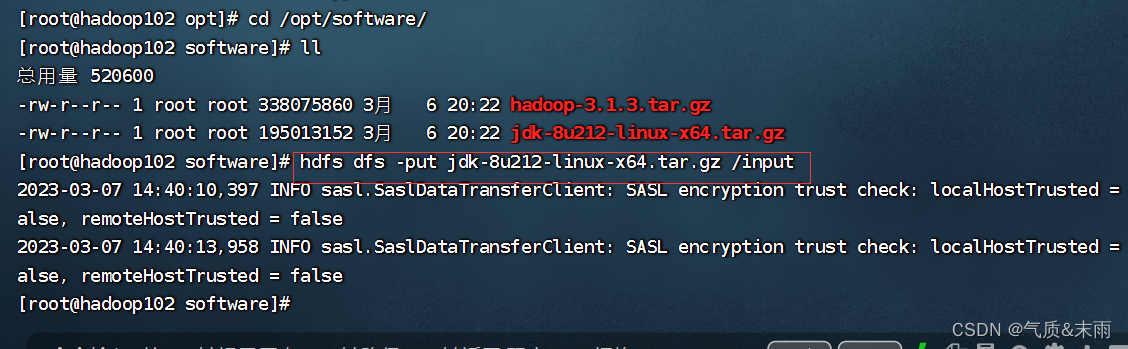

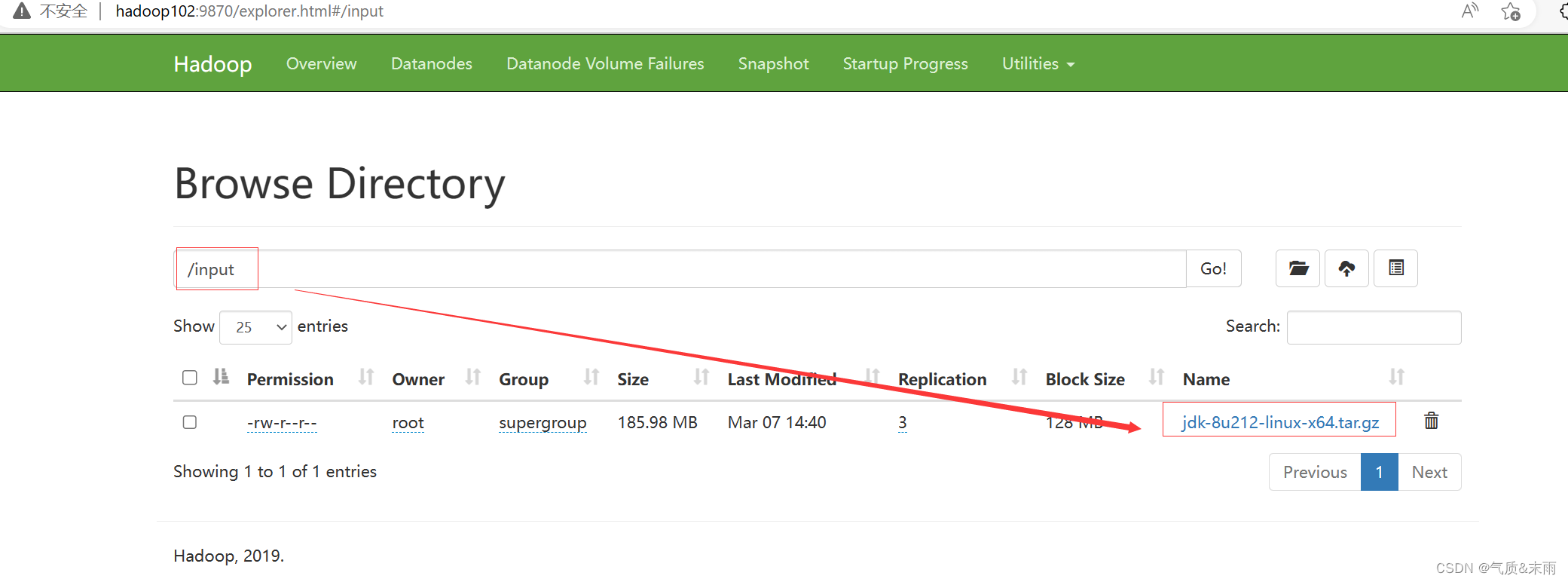

Now upload a file.

Run: hdfs dfs -put jdk-8u212-linux-x64.tar.gz /input

You can see that the file has been uploaded.
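The create, upload, and verify steps can also be combined and checked from the command line instead of the web UI. This is a sketch under stated assumptions: the helper names run and upload_to_hdfs and the DRY_RUN switch are invented for illustration, while -mkdir -p, -put, and -ls are standard hdfs dfs subcommands.

```shell
#!/usr/bin/env bash
# Run a command, or only print it when DRY_RUN=1 (hypothetical helper).
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# upload_to_hdfs LOCAL_FILE HDFS_DIR
# Create the directory if needed, upload the file, then list the directory.
upload_to_hdfs() {
  local file="$1" dir="$2"
  run hdfs dfs -mkdir -p "$dir" &&   # -p: no error if the directory exists
    run hdfs dfs -put "$file" "$dir" &&
    run hdfs dfs -ls "$dir"
}

# Example:
# upload_to_hdfs jdk-8u212-linux-x64.tar.gz /input
```

The final listing should show the uploaded file together with its size and replication factor.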

5) Configuring the History Server

To review how past jobs ran, configure the history server as follows:

1. Configure mapred-site.xml

Edit the file: vim mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <!-- Run MapReduce jobs on YARN -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <!-- History server address -->
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>hadoop102:10020</value>
  </property>
  <!-- History server web UI address -->
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>hadoop102:19888</value>
  </property>
</configuration>

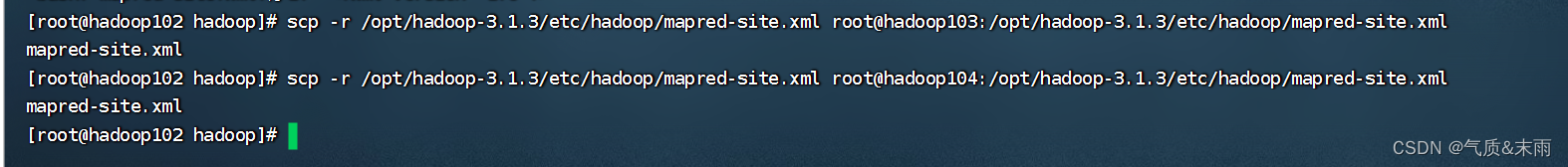

2. Distribute the configuration

Copy the file to hadoop103: scp /opt/hadoop-3.1.3/etc/hadoop/mapred-site.xml root@hadoop103:/opt/hadoop-3.1.3/etc/hadoop/mapred-site.xml

Copy the file to hadoop104: scp /opt/hadoop-3.1.3/etc/hadoop/mapred-site.xml root@hadoop104:/opt/hadoop-3.1.3/etc/hadoop/mapred-site.xml

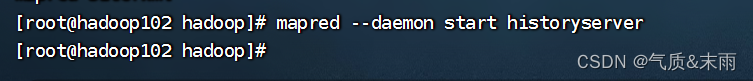

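3. Start the history server on hadoop102

Run: mapred --daemon start historyserver

4. Check whether the history server has started

You can see that it is running; its web UI is served at hadoop102:19888, as configured in mapred-site.xml.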
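One quick way to confirm that a daemon is listening is to probe its port. The sketch below assumes bash (for its /dev/tcp redirection) and the coreutils timeout command are available; the function name port_open is hypothetical, and the ports in the examples are the ones configured in this article.

```shell
#!/usr/bin/env bash
# port_open HOST PORT
# Return 0 if a TCP connection to HOST:PORT succeeds within 3 seconds.
# Relies on bash's /dev/tcp redirection and the coreutils `timeout` command.
port_open() {
  local host="$1" port="$2"
  timeout 3 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null
}

# Examples:
# if port_open hadoop102 19888; then echo "history server web UI is up"; fi
# if port_open hadoop102 9870; then echo "NameNode web UI is up"; fi
```

A successful probe only shows that something accepts connections on that port, so opening the page in a browser remains the final check.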