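Author: Yang Wen (杨文)

DBA responsible for requirements and maintenance on customer projects, with working knowledge of a range of databases including, but not limited to, MySQL, Redis, Cassandra, GreenPlum, ClickHouse, Elastic, and TDSQL.

Source: original contribution

*Produced by the ActionSky open source community (爱可生开源社区). Original content may not be used without authorization; to reprint it, contact the editor and credit the source.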

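1. Environment:

A cluster can be scaled out in two ways: by adding replicas or by adding resources.

Original cluster deployment mode: 1-1-1. In this notation each number is the count of OBServer nodes in one zone, so 1-1-1 means three zones with one node each.

Both approaches are covered below:

- Adding replicas: the mode after scale-out is 1-1-1-1-1;

- Adding resources: the mode after scale-out is 2-2-2.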
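The mode notation can be read mechanically. The following is a minimal sketch, not part of any OceanBase tooling; the helper names `parse_mode` and `describe_scale_out` are made up for illustration. It classifies a change of mode as a replica scale-out (more zones), a resource scale-out (more nodes per zone), or both.

```python
def parse_mode(mode):
    """'2-2-2' -> [2, 2, 2]: one entry per zone, value = OBServer count in that zone."""
    return [int(n) for n in mode.split("-")]


def describe_scale_out(old, new):
    """Return which kinds of scale-out turn mode `old` into mode `new`."""
    old_zones, new_zones = parse_mode(old), parse_mode(new)
    kinds = []
    if len(new_zones) > len(old_zones):
        kinds.append("replicas")   # more zones, i.e. more replicas
    if any(n > o for o, n in zip(old_zones, new_zones)):
        kinds.append("resources")  # more OBServer nodes inside an existing zone
    return kinds


print(describe_scale_out("1-1-1", "1-1-1-1-1"))  # ['replicas']
print(describe_scale_out("1-1-1", "2-2-2"))      # ['resources']
```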

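Note: the scale-out can be carried out in two ways: in "white-screen" mode (through the OCP graphical interface) or in "black-screen" mode (from the command line).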

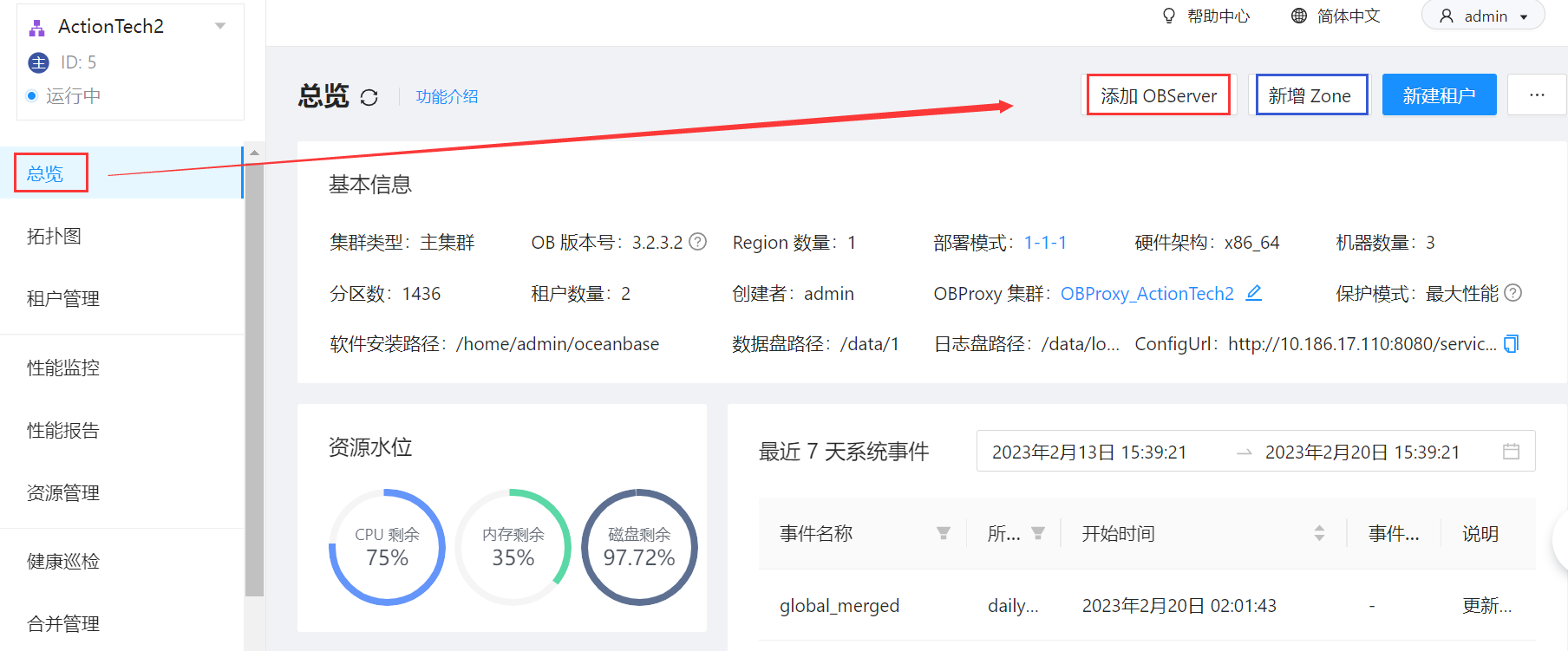

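2. Scaling out in white-screen mode (OCP GUI):

Adding replicas: open OCP -> find the cluster to scale out -> Overview -> Add Zone;

Adding resources: open OCP -> find the cluster to scale out -> Overview -> Add OBServer;

As shown in the figure: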

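3. Scaling out in black-screen mode (command line):

Note: to avoid repetition, the two cases are demonstrated with different methods: replicas are added with the automated method, and resources are added manually.

3.1 Adding replicas (Zone):

Automated scale-out with OBD:

1) Prepare the configuration file: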

vi /data/5zones.yaml

# Only need to configure when remote login is required
user:
  username: admin
  # password: admin
  key_file: /home/admin/.ssh/id_rsa  # path to the SSH private key
  # port: your ssh port, default 22
  # timeout: ssh connection timeout (second), default 30
oceanbase-ce:
  servers:
    # Please don't use hostname, only IP can be supported
    - name: observer4
      ip: 10.186.60.175
    - name: observer5
      ip: 10.186.60.176
  global:
    ......
    appname: ywob2
    ......
  observer4:
    mysql_port: 2881 # External port for OceanBase Database. The default value is 2881.
    rpc_port: 2882 # Internal port for OceanBase Database. The default value is 2882.
    # The working directory for OceanBase Database. OceanBase Database is started under this directory. This is a required field.
    home_path: /home/admin/oceanbase-ce
    # The directory for data storage. The default value is $home_path/store.
    data_dir: /data
    # The directory for clog, ilog, and slog. The default value is the same as the data_dir value.
    redo_dir: /redo
    zone: zone4
  observer5:
    mysql_port: 2881 # External port for OceanBase Database. The default value is 2881.
    rpc_port: 2882 # Internal port for OceanBase Database. The default value is 2882.
    # The working directory for OceanBase Database. OceanBase Database is started under this directory. This is a required field.
    home_path: /home/admin/oceanbase-ce
    # The directory for data storage. The default value is $home_path/store.
    data_dir: /data
    # The directory for clog, ilog, and slog. The default value is the same as the data_dir value.
    redo_dir: /redo
    zone: zone5
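The per-server sections differ only in the server name and the zone, so they can be generated rather than copied by hand. The sketch below is only an illustration: `SERVER_TEMPLATE` and `render_server_sections` are hypothetical names, the values are the ones used in this article, and the output is plain text meant to be pasted under `oceanbase-ce:`.

```python
# Template for one per-server section, indented to sit under `oceanbase-ce:`.
SERVER_TEMPLATE = """\
  {name}:
    mysql_port: 2881
    rpc_port: 2882
    home_path: /home/admin/oceanbase-ce
    data_dir: /data
    redo_dir: /redo
    zone: {zone}
"""


def render_server_sections(servers):
    """servers: list of (name, zone) tuples; returns the concatenated sections."""
    return "".join(SERVER_TEMPLATE.format(name=name, zone=zone) for name, zone in servers)


print(render_server_sections([("observer4", "zone4"), ("observer5", "zone5")]))
```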

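2) Deploy the new nodes:

obd cluster deploy ywob2 -c /data/5zones.yaml

3) Merge the configuration: copy the contents of the new configuration file into the original cluster's configuration file (the text is omitted here because most of it is repetitive).

4) Start the cluster under the original cluster name:

obd cluster start ywob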

+-------------------------------------------------+
|                    observer                     |
+---------------+---------+------+-------+--------+
| ip            | version | port | zone  | status |
+---------------+---------+------+-------+--------+
| 10.186.60.85  | 3.2.2   | 2881 | zone1 | active |
| 10.186.60.173 | 3.2.2   | 2881 | zone2 | active |
| 10.186.60.174 | 3.2.2   | 2881 | zone3 | active |
+---------------+---------+------+-------+--------+

Note: at this point the observer list still shows only the original three IPs.

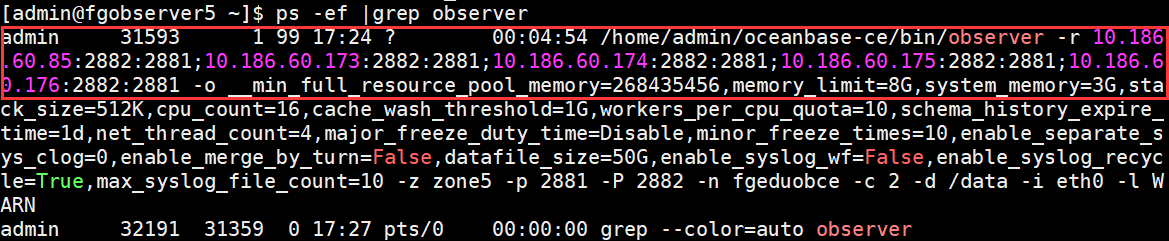

5) Check the processes: although the new observers do not appear in the list, their processes are in fact already running.

6) Add the new nodes to the cluster:

mysql> alter system add zone 'zone4' region 'sys_region';

mysql> alter system add zone 'zone5' region 'sys_region';

mysql> alter system start zone 'zone4';

mysql> alter system start zone 'zone5';

mysql> alter system add server '10.186.60.175:2882' zone 'zone4';

mysql> alter system add server '10.186.60.176:2882' zone 'zone5';

mysql> alter system start server '10.186.60.175:2882' zone 'zone4';

mysql> alter system start server '10.186.60.176:2882' zone 'zone5';
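The eight statements above follow a fixed order: add the zones, start the zones, add the servers, start the servers. As a minimal sketch (the helper `scale_out_statements` is hypothetical, and it only builds strings; it does not talk to the database), they can be generated from a list of (IP, zone) pairs:

```python
def scale_out_statements(new_servers, region="sys_region", rpc_port=2882):
    """new_servers: list of (ip, zone) pairs for nodes that live in brand-new zones."""
    # Unique zones, in first-seen order.
    zones = list(dict.fromkeys(zone for _, zone in new_servers))
    stmts = [f"alter system add zone '{z}' region '{region}';" for z in zones]
    stmts += [f"alter system start zone '{z}';" for z in zones]
    stmts += [f"alter system add server '{ip}:{rpc_port}' zone '{z}';" for ip, z in new_servers]
    stmts += [f"alter system start server '{ip}:{rpc_port}' zone '{z}';" for ip, z in new_servers]
    return stmts


for stmt in scale_out_statements([("10.186.60.175", "zone4"), ("10.186.60.176", "zone5")]):
    print(stmt)
```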

7) Check again:

obd cluster display ywob

+-------------------------------------------------+
|                    observer                     |
+---------------+---------+------+-------+--------+
| ip            | version | port | zone  | status |
+---------------+---------+------+-------+--------+
| 10.186.60.85  | 3.2.2   | 2881 | zone1 | active |
| 10.186.60.173 | 3.2.2   | 2881 | zone2 | active |
| 10.186.60.174 | 3.2.2   | 2881 | zone3 | active |
| 10.186.60.175 | 3.2.2   | 2881 | zone4 | active |
| 10.186.60.176 | 3.2.2   | 2881 | zone5 | active |
+---------------+---------+------+-------+--------+

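8) Extend the business tenant's data replicas to the new zones.

Note: to scale in instead, the steps are: remove the nodes -> trigger a merge -> modify the locality -> shrink the resource pool -> take the zone offline.

3.2 Adding resources (OBServer):

1) Before scaling out, a resource check is recommended; it is omitted here.

2) Create the directories as in the existing environment, then install and start the observer process on each new node: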

10.186.60.175:

su - admin
sudo rpm -ivh /tmp/oceanbase-ce-*.rpm
cd ~/oceanbase && bin/observer -i eth0 -p 2881 -P 2882 -z zone1 \
  -d ~/oceanbase/store/ywob \
  -r '10.186.60.85:2882:2881;10.186.60.173:2882:2881;10.186.60.174:2882:2881;10.186.60.175:2882:2881;10.186.60.176:2882:2881;10.186.60.177:2882:2881' \
  -c 20230131 -n ywob \
  -o "memory_limit=8G,cache_wash_threshold=1G,__min_full_resource_pool_memory=268435456,\
system_memory=3G,memory_chunk_cache_size=128M,cpu_count=12,net_thread_count=4,\
datafile_size=50G,stack_size=1536K,\
config_additional_dir=/data/ywob/etc3;/redo/ywob/etc2"

10.186.60.176:

su - admin
sudo rpm -ivh /tmp/oceanbase-ce-*.rpm
cd ~/oceanbase && bin/observer -i eth0 -p 2881 -P 2882 -z zone2 \
  -d ~/oceanbase/store/ywob \
  -r '10.186.60.85:2882:2881;10.186.60.173:2882:2881;10.186.60.174:2882:2881;10.186.60.175:2882:2881;10.186.60.176:2882:2881;10.186.60.177:2882:2881' \
  -c 20230131 -n ywob \
  -o "memory_limit=8G,cache_wash_threshold=1G,__min_full_resource_pool_memory=268435456,\
system_memory=3G,memory_chunk_cache_size=128M,cpu_count=12,net_thread_count=4,\
datafile_size=50G,stack_size=1536K,\
config_additional_dir=/data/ywob/etc3;/redo/ywob/etc2"

10.186.60.177:

su - admin
sudo rpm -ivh /tmp/oceanbase-ce-*.rpm
cd ~/oceanbase && bin/observer -i eth0 -p 2881 -P 2882 -z zone3 \
  -d ~/oceanbase/store/ywob \
  -r '10.186.60.85:2882:2881;10.186.60.173:2882:2881;10.186.60.174:2882:2881;10.186.60.175:2882:2881;10.186.60.176:2882:2881;10.186.60.177:2882:2881' \
  -c 20230131 -n ywob \
  -o "memory_limit=8G,cache_wash_threshold=1G,__min_full_resource_pool_memory=268435456,\
system_memory=3G,memory_chunk_cache_size=128M,cpu_count=12,net_thread_count=4,\
datafile_size=50G,stack_size=1536K,\
config_additional_dir=/data/ywob/etc3;/redo/ywob/etc2"
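The three start commands differ only in the `-z` zone, and the `-r` list is easy to mistype by hand. The sketch below assembles the argument list from parts. The helper names `build_rs_list` and `observer_argv` are made up for illustration, and the absolute data directory assumes that admin's home is /home/admin; the flags themselves are the ones used in the commands above.

```python
import shlex


def build_rs_list(ips, rpc_port=2882, mysql_port=2881):
    """Join nodes into the 'ip:rpc_port:mysql_port;...' form passed to -r."""
    return ";".join(f"{ip}:{rpc_port}:{mysql_port}" for ip in ips)


def observer_argv(zone, rs_ips, options, cluster_id=20230131, cluster_name="ywob",
                  nic="eth0", data_dir="/home/admin/oceanbase/store/ywob"):
    """Build the observer argument list; data_dir default is an assumed expansion of ~."""
    opts = ",".join(f"{key}={value}" for key, value in options.items())
    return ["bin/observer", "-i", nic, "-p", "2881", "-P", "2882", "-z", zone,
            "-d", data_dir, "-r", build_rs_list(rs_ips),
            "-c", str(cluster_id), "-n", cluster_name, "-o", opts]


rs_ips = ["10.186.60.85", "10.186.60.173", "10.186.60.174",
          "10.186.60.175", "10.186.60.176", "10.186.60.177"]
options = {"memory_limit": "8G", "system_memory": "3G", "datafile_size": "50G"}
# shlex.join quotes arguments that contain ';' so the line is safe to paste into a shell.
print(shlex.join(observer_argv("zone1", rs_ips, options)))
```

Only a subset of the `-o` options is shown; the remaining ones from the commands above would be added to the `options` dictionary in the same way.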

Check the processes and ports:

ps -ef |grep observer

netstat -ntlp

The observer process should now be visible, listening on ports 2881 and 2882.
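The same port check can be run remotely for all new nodes at once. This is a minimal sketch using only the Python standard library; `port_is_listening` is a hypothetical helper name, and a successful TCP connect only shows that something is listening on the port, not that it is an observer.

```python
import socket


def port_is_listening(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

For example, looping over `("10.186.60.175", "10.186.60.176", "10.186.60.177")` and ports `(2881, 2882)` and printing `port_is_listening(host, port)` gives a quick overview before the nodes are added to the cluster.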

3) Add the new nodes to their zones:

mysql> alter system add server '10.186.60.175:2882' zone 'zone1';

mysql> alter system add server '10.186.60.176:2882' zone 'zone2';

mysql> alter system add server '10.186.60.177:2882' zone 'zone3';

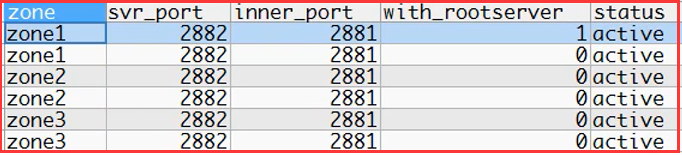

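4) After the nodes have been added, review the cluster state:

List all nodes in the cluster:

select zone,svr_ip,svr_port,inner_port,with_rootserver,status from __all_server order by zone, svr_ip;

The output shows the new servers listed under their zones.

The migration of partition replicas and the re-election of leaders can be followed through the cluster events in the __all_rootservice_event_history view.

Once resource balancing and partition replica migration have finished, check resource usage: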

select a.zone, concat(a.svr_ip,':',a.svr_port) observer, cpu_total, (cpu_total-cpu_assigned) cpu_free,
  round(mem_total/1024/1024/1024) mem_total_gb,
  round((mem_total-mem_assigned)/1024/1024/1024) mem_free_gb,
  round(disk_total/1024/1024/1024) disk_total_gb, unit_num,
  substr(a.build_version,1,6) version, usec_to_time(b.start_service_time) start_service_time, b.status
from __all_virtual_server_stat a join __all_server b on (a.svr_ip=b.svr_ip and a.svr_port=b.svr_port)
order by a.zone, a.svr_ip;

At this point the resources of the new nodes are not yet in use;
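The free-resource columns in this query are simple differences between the total and assigned values, with memory converted from bytes to GB. A small worked example, using made-up numbers rather than output from the cluster in this article (`free_resources` is a hypothetical helper):

```python
GIB = 1024 ** 3  # bytes per GB, matching the /1024/1024/1024 in the query


def free_resources(row):
    """Mirror the cpu_free and mem_free_gb expressions of the query for one row."""
    return {
        "cpu_free": row["cpu_total"] - row["cpu_assigned"],
        "mem_free_gb": round((row["mem_total"] - row["mem_assigned"]) / GIB),
    }


# Hypothetical sample row: 12 CPUs with 2.5 assigned, 5 GB memory with 1 GB assigned.
sample = {"cpu_total": 12, "cpu_assigned": 2.5, "mem_total": 5 * GIB, "mem_assigned": 1 * GIB}
print(free_resources(sample))  # {'cpu_free': 9.5, 'mem_free_gb': 4}
```

On a freshly added node the assigned values would be expected to be zero, so the free values equal the totals.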

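5) Scale out the tenant:

Increase the number of units from 1 to 2:

alter resource pool pool_yw1 unit_num = 2;

unit_num = 2 means that each zone holds two units of this resource pool;

Because the cluster now has a 2-2-2 architecture with two observers per zone, each zone hosts two of the tenant's units. The cluster's load-balancing policy then triggers replica migration and leader re-election;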
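The relationship between unit_num and the number of observers per zone can be sketched as follows. This is a simplified model, not the actual placement algorithm: it assumes that the units of one resource pool within a zone must sit on distinct observers, so unit_num cannot exceed the number of observers in any zone, and it simply picks the first servers in the list. `place_units` is a hypothetical helper.

```python
def place_units(zone_servers, unit_num):
    """zone_servers: {zone: [server_ip, ...]}; returns {zone: servers that host a unit}."""
    placement = {}
    for zone, servers in zone_servers.items():
        if unit_num > len(servers):
            raise ValueError(f"{zone}: unit_num={unit_num} exceeds {len(servers)} server(s)")
        # Simplification: the real balancer chooses the servers itself.
        placement[zone] = servers[:unit_num]
    return placement


cluster = {
    "zone1": ["10.186.60.85", "10.186.60.175"],
    "zone2": ["10.186.60.173", "10.186.60.176"],
    "zone3": ["10.186.60.174", "10.186.60.177"],
}
for zone, servers in place_units(cluster, unit_num=2).items():
    print(zone, servers)
```

Under this model, unit_num = 2 uses both observers of every zone, which is why data has to move onto the new nodes.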

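The replica migration and leader re-election can be followed in the __all_rootservice_event_history view, which records the execution of the cluster's load-balancing tasks, while the __all_virtual_partition_migration_status view shows the migrations currently in progress;

In the end, the data replicas have moved and the data has been rebalanced.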

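3.3 Scaling in resources (OBServer):

Cluster mode: reduced from 2-2-2 to 1-1-1.

1) Pre-checks: resource check and unit check (omitted here).

2) Adjust the resources:

The number of replicas is unchanged at three; only the per-zone resources and the unit count were increased earlier, so the replicas themselves need no adjustment;

Reduce the number of units:

alter resource pool pool_yw1 unit_num = 1;

3) Check: the partition replica migration and leader re-election can be followed through the cluster events in the __all_rootservice_event_history view.

SELECT * FROM __all_rootservice_event_history WHERE 1 = 1 ORDER BY gmt_create DESC LIMIT 50;

The output shows the replica migration and leader re-election events;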

4) Delete the observers:

alter system delete server '10.186.60.175:2882' zone 'zone1';

alter system delete server '10.186.60.176:2882' zone 'zone2';

alter system delete server '10.186.60.177:2882' zone 'zone3';
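Each delete statement has to name the zone the server actually belongs to. One way to avoid pairing a server with the wrong zone is to derive the zone from a membership map, for example one built from the __all_server output. A minimal sketch follows; `delete_server_statements` is a hypothetical helper that only builds strings.

```python
def delete_server_statements(membership, to_remove, rpc_port=2882):
    """membership: {ip: zone} for the whole cluster; to_remove: IPs to take out."""
    stmts = []
    for ip in to_remove:
        zone = membership[ip]  # KeyError here means the IP is not part of the cluster
        stmts.append(f"alter system delete server '{ip}:{rpc_port}' zone '{zone}';")
    return stmts


membership = {
    "10.186.60.85": "zone1", "10.186.60.175": "zone1",
    "10.186.60.173": "zone2", "10.186.60.176": "zone2",
    "10.186.60.174": "zone3", "10.186.60.177": "zone3",
}
for stmt in delete_server_statements(membership, ["10.186.60.175", "10.186.60.176", "10.186.60.177"]):
    print(stmt)
```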

5) Check:

List all nodes in the OB cluster:

select zone,svr_ip,svr_port,inner_port,with_rootserver,status from __all_server order by zone;

Check how the business tenants' partition replicas are distributed across the nodes:

SELECT t1.tenant_id, t1.tenant_name, t2.database_name,
  t3.table_id, t3.table_name, t3.tablegroup_id, t3.part_num,
  t4.partition_id, t4.zone, t4.svr_ip, t4.role,
  round(t4.data_size / 1024 / 1024) data_size_mb
FROM gv$tenant t1
  JOIN gv$database t2 ON (t1.tenant_id = t2.tenant_id)
  JOIN gv$table t3 ON (t2.tenant_id = t3.tenant_id AND t2.database_id = t3.database_id AND t3.index_type = 0)
  LEFT JOIN gv$partition t4 ON (t2.tenant_id = t4.tenant_id AND (t3.table_id = t4.table_id OR t3.tablegroup_id = t4.table_id) AND t4.role IN (1, 2))
WHERE t1.tenant_id > 1000
ORDER BY t1.tenant_id, t3.tablegroup_id, t3.table_name, t4.partition_id, t4.role;

The output shows that rebalancing has finished: all data now resides only on hosts 10.186.60.85, 10.186.60.173, and 10.186.60.174.
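With many partitions it is easier to check this condition programmatically than by eye. A minimal sketch, assuming the zone and svr_ip columns of the query result have been fetched into (zone, svr_ip) tuples; `unexpected_hosts` is a hypothetical helper and the sample rows are made up:

```python
def unexpected_hosts(replica_rows, allowed_ips):
    """replica_rows: iterable of (zone, svr_ip); returns the IPs outside allowed_ips, sorted."""
    return sorted({ip for _, ip in replica_rows if ip not in allowed_ips})


allowed = {"10.186.60.85", "10.186.60.173", "10.186.60.174"}
rows = [("zone1", "10.186.60.85"), ("zone2", "10.186.60.173"), ("zone3", "10.186.60.174")]
print(unexpected_hosts(rows, allowed))  # [] means no replica is left on a removed node
```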

Finally, connect to the database both directly and through OBProxy to verify the data.