Chapter 6. Convolutional Neural Networks (CNN)

6.2 Implementing a CNN (building a CNN for handwritten digit recognition)

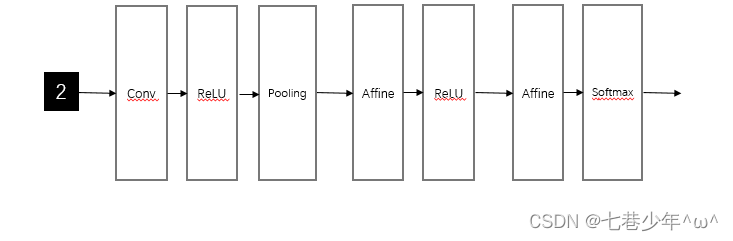

1. Network structure

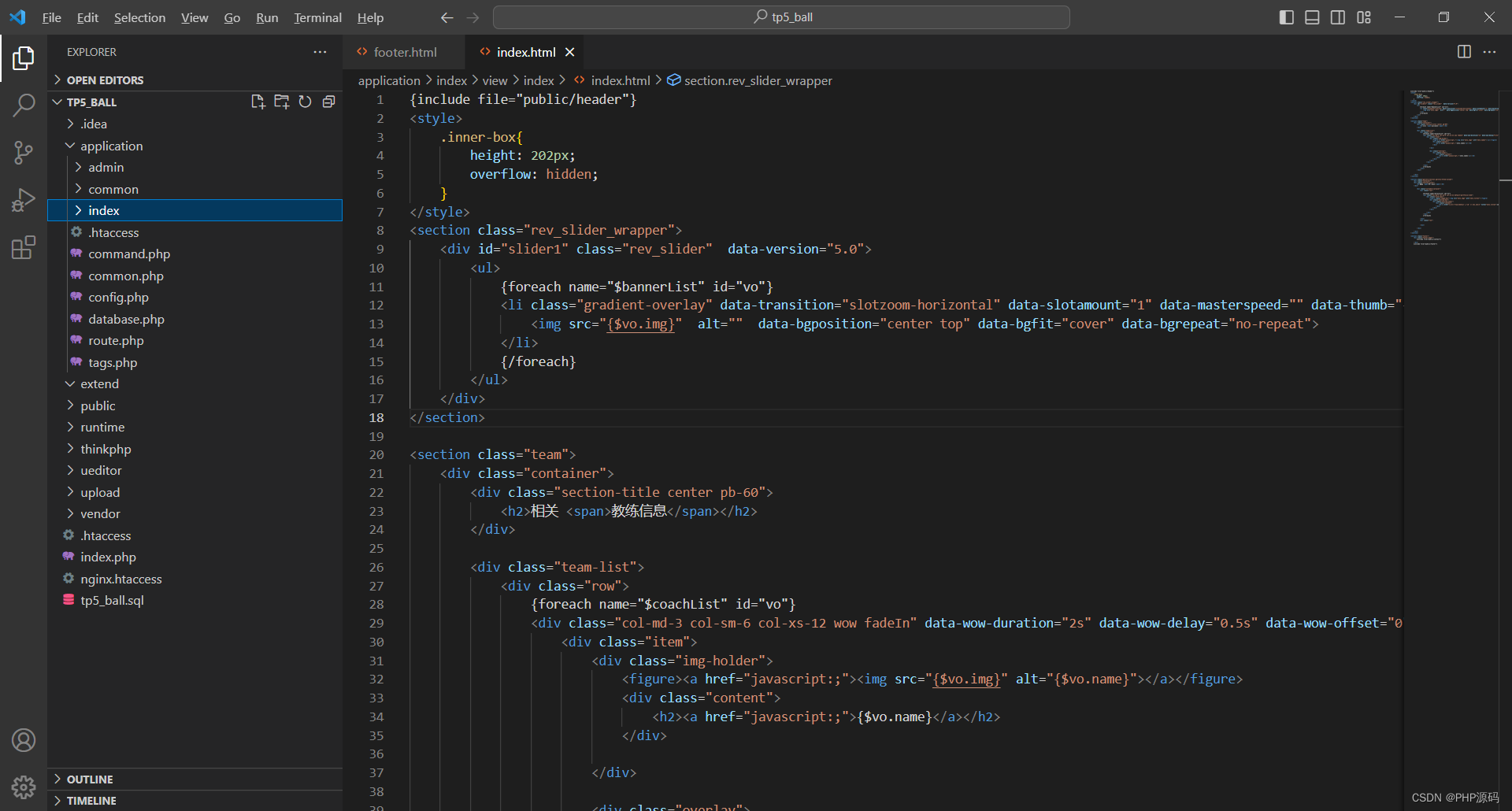
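The network implemented in this section has the layer sequence conv → ReLU → pool → affine → ReLU → affine → softmax. The sketch below traces the tensor shape after each stage with the usual output-size formula `(size + 2*pad - filter) // stride + 1`. It assumes the default hyperparameters used later in `SimpleConvNet`: 30 filters of 5×5 with pad 0 and stride 1, then 2×2 max pooling with stride 2. The helper names `conv_output_size` and `trace_shapes` are illustrative only.

```python
def conv_output_size(input_size, filter_size, pad=0, stride=1):
    """Spatial output size of a convolution or pooling window."""
    return (input_size + 2 * pad - filter_size) // stride + 1


def trace_shapes(batch=1, channels=1, size=28, filter_num=30, filter_size=5,
                 hidden_size=100, output_size=10):
    """Return (stage, shape) pairs for conv-relu-pool-affine-relu-affine-softmax."""
    shapes = [("input", (batch, channels, size, size))]
    conv = conv_output_size(size, filter_size)           # 28 -> 24
    shapes.append(("conv/relu", (batch, filter_num, conv, conv)))
    pool = conv_output_size(conv, 2, stride=2)           # 24 -> 12
    shapes.append(("pool", (batch, filter_num, pool, pool)))
    shapes.append(("flatten", (batch, filter_num * pool * pool)))
    shapes.append(("affine/relu", (batch, hidden_size)))
    shapes.append(("affine/softmax", (batch, output_size)))
    return shapes


for name, shape in trace_shapes():
    print(f"{name:15s} {shape}")
```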

2. Code implementation

```python
import pickle
import sys, os
from collections import OrderedDict

import matplotlib.pyplot as plt
import numpy as np

sys.path.append(os.pardir)  # make the parent directory (which contains dataset/) importable
from dataset.mnist import load_mnist
```

```python
# From image to matrix: unfold every filter-sized window into one row
def im2col(input_data, filter_h, filter_w, stride=1, pad=0):
    """(N, C, H, W) -> 2D array of shape (N*out_h*out_w, C*filter_h*filter_w)"""
    N, C, H, W = input_data.shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1

    img = np.pad(input_data, [(0, 0), (0, 0), (pad, pad), (pad, pad)], 'constant')
    col = np.zeros((N, C, filter_h, filter_w, out_h, out_w))

    for y in range(filter_h):
        y_max = y + stride * out_h
        for x in range(filter_w):
            x_max = x + stride * out_w
            col[:, :, y, x, :, :] = img[:, :, y:y_max:stride, x:x_max:stride]

    col = col.transpose(0, 4, 5, 1, 2, 3).reshape(N * out_h * out_w, -1)
    return col
```
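To see what `im2col` produces, here is a deliberately slow loop version. The name `im2col_naive` is hypothetical and only for illustration. Each row holds one receptive field, flattened in (channel, row, column) order, and rows are ordered by (image, output row, output column). That is the same layout the vectorized version produces, which is what turns convolution into a single matrix product.

```python
import numpy as np


def im2col_naive(x, filter_h, filter_w, stride=1, pad=0):
    """Loop-based im2col: one row per output position, ordered (n, i, j)."""
    N, C, H, W = x.shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1
    img = np.pad(x, [(0, 0), (0, 0), (pad, pad), (pad, pad)], "constant")
    rows = []
    for n in range(N):
        for i in range(out_h):
            for j in range(out_w):
                top, left = i * stride, j * stride
                patch = img[n, :, top:top + filter_h, left:left + filter_w]
                rows.append(patch.reshape(-1))  # flattened in (C, filter_h, filter_w) order
    return np.array(rows)


x = np.random.rand(1, 3, 7, 7)
col = im2col_naive(x, 5, 5)
print(col.shape)  # (9, 75): 3x3 output positions, 3*5*5 values each
```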

```python
# From matrix back to image: reverses the im2col layout (overlapping windows are summed)
def col2im(col, input_shape, filter_h, filter_w, stride=1, pad=0):
    N, C, H, W = input_shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1
    col = col.reshape(N, out_h, out_w, C, filter_h, filter_w).transpose(0, 3, 4, 5, 1, 2)

    img = np.zeros((N, C, H + 2 * pad + stride - 1, W + 2 * pad + stride - 1))
    for y in range(filter_h):
        y_max = y + stride * out_h
        for x in range(filter_w):
            x_max = x + stride * out_w
            img[:, :, y:y_max:stride, x:x_max:stride] += col[:, :, y, x, :, :]

    # strip the padding
    return img[:, :, pad:H + pad, pad:W + pad]
```
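`col2im` is not an exact inverse of `im2col`. Where windows overlap, the values written back are added together, and this is what the backward pass needs: each input pixel receives the sum of the gradients from every window it took part in. The loop sketch below makes the summation visible. The name `col2im_naive` is hypothetical. Feeding in a matrix of ones returns, for each pixel, the number of windows that cover it.

```python
import numpy as np


def col2im_naive(col, input_shape, filter_h, filter_w, stride=1, pad=0):
    """Loop-based col2im: scatter each row back into its window, summing overlaps."""
    N, C, H, W = input_shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1
    img = np.zeros((N, C, H + 2 * pad, W + 2 * pad))
    row = 0
    for n in range(N):
        for i in range(out_h):
            for j in range(out_w):
                top, left = i * stride, j * stride
                patch = col[row].reshape(C, filter_h, filter_w)
                img[n, :, top:top + filter_h, left:left + filter_w] += patch
                row += 1
    return img[:, :, pad:pad + H, pad:pad + W]


# 3x3 single-channel image, 2x2 windows, stride 1 -> 4 windows of 4 values each
counts = col2im_naive(np.ones((4, 4)), (1, 1, 3, 3), 2, 2)
print(counts[0, 0])
# [[1. 2. 1.]
#  [2. 4. 2.]
#  [1. 2. 1.]]
```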

```python
class SGD:
    def __init__(self, lr=0.01):
        self.lr = lr

    def update(self, params, grads):
        for key in params.keys():
            params[key] -= self.lr * grads[key]


class Momentum:
    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = lr
        self.momentum = momentum
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            self.v = {}
            for key, val in params.items():
                self.v[key] = np.zeros_like(val)

        for key in params.keys():
            self.v[key] = self.momentum * self.v[key] - self.lr * grads[key]
            params[key] += self.v[key]


class Nesterov:
    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = lr
        self.momentum = momentum
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            self.v = {}
            for key, val in params.items():
                self.v[key] = np.zeros_like(val)

        for key in params.keys():
            self.v[key] *= self.momentum
            self.v[key] -= self.lr * grads[key]
            params[key] += self.momentum * self.momentum * self.v[key]
            params[key] -= (1 + self.momentum) * self.lr * grads[key]


class AdaGrad:
    def __init__(self, lr=0.01):
        self.lr = lr
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = np.zeros_like(val)

        for key in params.keys():
            self.h[key] += grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)


class RMSprop:
    def __init__(self, lr=0.01, decay_rate=0.99):
        self.lr = lr
        self.decay_rate = decay_rate
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = np.zeros_like(val)

        for key in params.keys():
            self.h[key] *= self.decay_rate
            self.h[key] += (1 - self.decay_rate) * grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)


class Adam:
    def __init__(self, lr=0.001, beta1=0.9, beta2=0.999):
        self.lr = lr
        self.beta1 = beta1
        self.beta2 = beta2
        self.iter = 0
        self.m = None
        self.v = None

    def update(self, params, grads):
        if self.m is None:
            self.m, self.v = {}, {}
            for key, val in params.items():
                self.m[key] = np.zeros_like(val)
                self.v[key] = np.zeros_like(val)

        self.iter += 1
        lr_t = self.lr * np.sqrt(1.0 - self.beta2 ** self.iter) / (1.0 - self.beta1 ** self.iter)

        for key in params.keys():
            # self.m[key] = self.beta1*self.m[key] + (1-self.beta1)*grads[key]
            # self.v[key] = self.beta2*self.v[key] + (1-self.beta2)*(grads[key]**2)
            self.m[key] += (1 - self.beta1) * (grads[key] - self.m[key])
            self.v[key] += (1 - self.beta2) * (grads[key] ** 2 - self.v[key])

            params[key] -= lr_t * self.m[key] / (np.sqrt(self.v[key]) + 1e-7)

            # unbias_m += (1 - self.beta1) * (grads[key] - self.m[key]) # correct bias
            # unbisa_b += (1 - self.beta2) * (grads[key]*grads[key] - self.v[key]) # correct bias
            # params[key] += self.lr * unbias_m / (np.sqrt(unbisa_b) + 1e-7)
```
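In `Adam.update`, the two commented-out moving-average lines and the two active lines compute the same thing: `m + (1 - beta1) * (g - m)` expands to `beta1 * m + (1 - beta1) * g`. Likewise, scaling the learning rate by `sqrt(1 - beta2**t) / (1 - beta1**t)` (the `lr_t` line) applies Adam's bias correction to both moments at once. Apart from where the small `1e-7` term enters, it gives the same step as dividing `m` and `v` by their correction factors first. The quick numerical check below uses arbitrary random values as stand-ins for the moments and the gradient.

```python
import numpy as np

rng = np.random.default_rng(0)
beta1, beta2, lr, t = 0.9, 0.999, 0.001, 3
m, v, g = rng.random(5), rng.random(5), rng.standard_normal(5)

# 1) incremental form used in the code vs. the textbook form in the comments
m_incr = m + (1 - beta1) * (g - m)
m_text = beta1 * m + (1 - beta1) * g
v_incr = v + (1 - beta2) * (g ** 2 - v)
v_text = beta2 * v + (1 - beta2) * g ** 2

# 2) folded bias correction (lr_t) vs. explicitly bias-corrected moments (epsilon omitted)
lr_t = lr * np.sqrt(1.0 - beta2 ** t) / (1.0 - beta1 ** t)
step_folded = lr_t * m_text / np.sqrt(v_text)
m_hat = m_text / (1.0 - beta1 ** t)
v_hat = v_text / (1.0 - beta2 ** t)
step_explicit = lr * m_hat / np.sqrt(v_hat)

print(np.allclose(m_incr, m_text), np.allclose(v_incr, v_text),
      np.allclose(step_folded, step_explicit))  # True True True
```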

```python
# Activation function: ReLU
class Relu:
    def __init__(self):
        self.mask = None

    def forward(self, x):
        self.mask = (x <= 0)
        out = x.copy()
        out[self.mask] = 0
        return out

    def backward(self, dout):
        dout[self.mask] = 0  # note: modifies dout in place
        dx = dout
        return dx
```
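`Relu.backward` reuses the mask saved in `forward`. Wherever the input was less than or equal to zero, the gradient is blocked; elsewhere it passes through unchanged. The standalone check below uses `relu_forward` and `relu_backward`, which are hypothetical stand-ins for the class methods. This sketch copies `dout` rather than writing into it in place.

```python
import numpy as np


def relu_forward(x):
    mask = x <= 0          # positions where the output is clamped to zero
    out = x.copy()
    out[mask] = 0
    return out, mask


def relu_backward(dout, mask):
    dx = dout.copy()       # copy instead of modifying dout in place
    dx[mask] = 0           # no gradient flows through clamped positions
    return dx


x = np.array([[1.0, -0.5], [0.0, 3.0]])
out, mask = relu_forward(x)
dx = relu_backward(np.ones_like(x), mask)
print(out.tolist())  # [[1.0, 0.0], [0.0, 3.0]]
print(dx.tolist())   # [[1.0, 0.0], [0.0, 1.0]]
```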

```python
# Convolution layer
class Convolution:
    def __init__(self, W, b, stride=1, pad=0):
        self.W = W
        self.b = b
        self.stride = stride
        self.pad = pad

        # intermediate data (used in backward)
        self.x = None
        self.col = None
        self.col_W = None

        # gradients of the weight and bias parameters
        self.dW = None
        self.db = None

    # forward pass
    def forward(self, x):
        FN, C, FH, FW = self.W.shape
        N, C, H, W = x.shape
        out_h = int((H + 2 * self.pad - FH) / self.stride) + 1
        out_w = int((W + 2 * self.pad - FW) / self.stride) + 1

        col = im2col(x, FH, FW, self.stride, self.pad)  # (N*out_h*out_w, C*FH*FW)
        col_W = self.W.reshape(FN, -1).T                # (C*FH*FW, FN)
        out = np.dot(col, col_W) + self.b
        out = out.reshape(N, out_h, out_w, -1).transpose(0, 3, 1, 2)  # -> (N, FN, out_h, out_w)

        self.x = x
        self.col = col
        self.col_W = col_W

        return out

    # backward pass
    def backward(self, dout):
        FN, C, FH, FW = self.W.shape
        dout = dout.transpose(0, 2, 3, 1).reshape(-1, FN)

        self.db = np.sum(dout, axis=0)
        self.dW = np.dot(self.col.T, dout)
        self.dW = self.dW.transpose(1, 0).reshape(FN, C, FH, FW)

        dcol = np.dot(dout, self.col_W.T)
        dx = col2im(dcol, self.x.shape, FH, FW, self.stride, self.pad)

        return dx
```
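`Convolution.forward` turns the convolution into one matrix product. `im2col` lays out every receptive field as a row, the filters are reshaped into columns (`self.W.reshape(FN, -1).T`), and the result is reshaped back to (N, FN, out_h, out_w). The sketch below checks this idea against a plain nested-loop convolution. It assumes stride 1 and no padding. To stay self-contained, it builds the column matrix with NumPy's `sliding_window_view` (NumPy 1.20 or later) instead of the `im2col` function defined in this section.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv_direct(x, W, b):
    """Reference convolution (cross-correlation) with explicit loops; stride 1, no padding."""
    N, C, H, Wd = x.shape
    FN, _, FH, FW = W.shape
    out_h, out_w = H - FH + 1, Wd - FW + 1
    out = np.zeros((N, FN, out_h, out_w))
    for n in range(N):
        for f in range(FN):
            for i in range(out_h):
                for j in range(out_w):
                    out[n, f, i, j] = np.sum(x[n, :, i:i + FH, j:j + FW] * W[f]) + b[f]
    return out


def conv_matmul(x, W, b):
    """Same computation as Convolution.forward: unfold windows, then one matrix product."""
    N, C, H, Wd = x.shape
    FN, _, FH, FW = W.shape
    out_h, out_w = H - FH + 1, Wd - FW + 1
    windows = sliding_window_view(x, (FH, FW), axis=(2, 3))  # (N, C, out_h, out_w, FH, FW)
    col = windows.transpose(0, 2, 3, 1, 4, 5).reshape(N * out_h * out_w, -1)
    col_W = W.reshape(FN, -1).T                               # (C*FH*FW, FN)
    out = col @ col_W + b                                     # (N*out_h*out_w, FN)
    return out.reshape(N, out_h, out_w, FN).transpose(0, 3, 1, 2)


rng = np.random.default_rng(0)
x = rng.standard_normal((2, 3, 7, 7))
W = rng.standard_normal((4, 3, 3, 3))
b = rng.standard_normal(4)
print(np.allclose(conv_direct(x, W, b), conv_matmul(x, W, b)))  # True
```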

```python
# Pooling layer (max pooling)
class Pooling:
    def __init__(self, pool_h, pool_w, stride=1, pad=0):
        self.pool_h = pool_h
        self.pool_w = pool_w
        self.stride = stride
        self.pad = pad

        self.x = None
        self.arg_max = None

    # forward pass
    def forward(self, x):
        N, C, H, W = x.shape
        out_h = int(1 + (H - self.pool_h) / self.stride)
        out_w = int(1 + (W - self.pool_w) / self.stride)

        # one row per (output position, channel), one column per element of the window
        col = im2col(x, self.pool_h, self.pool_w, self.stride, self.pad)
        col = col.reshape(-1, self.pool_h * self.pool_w)

        arg_max = np.argmax(col, axis=1)  # remember where each maximum came from
        out = np.max(col, axis=1)
        out = out.reshape(N, out_h, out_w, C).transpose(0, 3, 1, 2)

        self.x = x
        self.arg_max = arg_max

        return out

    # backward pass: route each gradient to the position of the maximum
    def backward(self, dout):
        dout = dout.transpose(0, 2, 3, 1)

        pool_size = self.pool_h * self.pool_w
        dmax = np.zeros((dout.size, pool_size))
        dmax[np.arange(self.arg_max.size), self.arg_max.flatten()] = dout.flatten()
        dmax = dmax.reshape(dout.shape + (pool_size,))

        dcol = dmax.reshape(dmax.shape[0] * dmax.shape[1] * dmax.shape[2], -1)
        dx = col2im(dcol, self.x.shape, self.pool_h, self.pool_w, self.stride, self.pad)

        return dx
```
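In `Pooling.backward`, `arg_max` records which element of each window was the maximum. The incoming gradient is written only to that position, and all other elements of the window get zero. The loop sketch below applies the same rule to a 4×4 input with 2×2 windows and stride 2. The function names are hypothetical.

```python
import numpy as np


def max_pool_forward(x, pool=2, stride=2):
    N, C, H, W = x.shape
    out_h = (H - pool) // stride + 1
    out_w = (W - pool) // stride + 1
    out = np.zeros((N, C, out_h, out_w))
    for n in range(N):
        for c in range(C):
            for i in range(out_h):
                for j in range(out_w):
                    win = x[n, c, i * stride:i * stride + pool, j * stride:j * stride + pool]
                    out[n, c, i, j] = win.max()
    return out


def max_pool_backward(x, dout, pool=2, stride=2):
    dx = np.zeros(x.shape)
    N, C, out_h, out_w = dout.shape
    for n in range(N):
        for c in range(C):
            for i in range(out_h):
                for j in range(out_w):
                    win = x[n, c, i * stride:i * stride + pool, j * stride:j * stride + pool]
                    r, s = np.unravel_index(np.argmax(win), win.shape)  # position of the max
                    dx[n, c, i * stride + r, j * stride + s] += dout[n, c, i, j]
    return dx


x = np.arange(16, dtype=float).reshape(1, 1, 4, 4)
out = max_pool_forward(x)
dx = max_pool_backward(x, np.ones_like(out))
print(out[0, 0].tolist())  # [[5.0, 7.0], [13.0, 15.0]]
print(dx[0, 0])            # ones only where 5, 7, 13 and 15 were
```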

```python
# Affine (fully connected) layer
class Affine:
    def __init__(self, W, b):
        self.W = W
        self.b = b

        self.x = None
        self.original_x_shape = None
        # derivatives of the weight and bias parameters
        self.dW = None
        self.db = None

    def forward(self, x):
        # support tensor input: flatten everything except the batch axis
        self.original_x_shape = x.shape  # e.g. x.shape = (209, 64, 64, 3)
        x = x.reshape(x.shape[0], -1)    # x.shape = (209, 64*64*3)
        self.x = x

        out = np.dot(self.x, self.W) + self.b

        return out

    def backward(self, dout):
        dx = np.dot(dout, self.W.T)
        self.dW = np.dot(self.x.T, dout)
        self.db = np.sum(dout, axis=0)

        dx = dx.reshape(*self.original_x_shape)  # restore the input's original shape (tensor input)
        return dx
```
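`Affine` flattens any input with more than two dimensions to (batch, features) before the matrix product and reshapes `dx` back afterwards. This lets it sit directly after the pooling layer. The backward formulas `dx = dout·Wᵀ`, `dW = xᵀ·dout` and `db = Σ dout` can be checked against central differences. The sketch below does that on a small random 4D input; all names and sizes are illustrative.

```python
import numpy as np

rng = np.random.default_rng(1)
x4d = rng.standard_normal((2, 3, 2, 2))  # e.g. output of a pooling layer
W = rng.standard_normal((12, 5))         # 3*2*2 = 12 input features
b = rng.standard_normal(5)
dout = rng.standard_normal((2, 5))       # upstream gradient


def forward(x4d, W, b):
    x = x4d.reshape(x4d.shape[0], -1)    # flatten to (batch, features)
    return x @ W + b


def loss(x4d, W, b):
    # scalar whose gradient with respect to the layer output is exactly dout
    return np.sum(forward(x4d, W, b) * dout)


# analytic gradients, as computed in Affine.backward
x2d = x4d.reshape(2, -1)
dx = (dout @ W.T).reshape(x4d.shape)
dW = x2d.T @ dout
db = dout.sum(axis=0)


def numeric_grad(f, a, h=1e-4):
    """Central differences of the zero-argument function f with respect to array a (in place)."""
    grad = np.zeros_like(a)
    for idx in np.ndindex(a.shape):
        old = a[idx]
        a[idx] = old + h
        fp = f()
        a[idx] = old - h
        fm = f()
        a[idx] = old
        grad[idx] = (fp - fm) / (2 * h)
    return grad


f = lambda: loss(x4d, W, b)
print(np.allclose(dx, numeric_grad(f, x4d)),
      np.allclose(dW, numeric_grad(f, W)),
      np.allclose(db, numeric_grad(f, b)))  # True True True
```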

```python
# Output layer: softmax followed by cross-entropy loss
class SoftmaxWithLoss:
    def __init__(self):
        self.loss = None  # loss
        self.y = None     # output of softmax
        self.t = None     # labels (one-hot vectors or class indices)

    # output function: softmax
    def softmax(self, x):
        if x.ndim == 2:
            x = x.T
            x = x - np.max(x, axis=0)
            y = np.exp(x) / np.sum(np.exp(x), axis=0)
            return y.T

        x = x - np.max(x)  # guard against overflow
        return np.exp(x) / np.sum(np.exp(x))

    # cross-entropy error
    def cross_entropy_error(self, y, t):
        if y.ndim == 1:
            t = t.reshape(1, t.size)
            y = y.reshape(1, y.size)

        # if the labels are one-hot vectors, convert them to the indices of the correct classes
        if t.size == y.size:
            t = t.argmax(axis=1)

        batch_size = y.shape[0]
        return -np.sum(np.log(y[np.arange(batch_size), t] + 1e-7)) / batch_size

    # forward pass
    def forward(self, x, t):
        self.t = t
        self.y = self.softmax(x)
        self.loss = self.cross_entropy_error(self.y, self.t)

        return self.loss

    # backward pass
    def backward(self, dout=1):
        batch_size = self.t.shape[0]
        if self.t.size == self.y.size:  # labels are one-hot vectors
            dx = (self.y - self.t) / batch_size
        else:
            dx = self.y.copy()
            dx[np.arange(batch_size), self.t] -= 1
            dx = dx / batch_size

        return dx
```
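Two details of `SoftmaxWithLoss` can be checked numerically. First, subtracting the maximum before `np.exp` leaves the softmax unchanged, because the common factor cancels, but it prevents overflow for large scores. Second, the backward pass of softmax followed by cross-entropy simplifies to `(y - t) / batch_size`. The standalone sketch below verifies both; its helper names are hypothetical. It drops the tiny `1e-7` term inside the log so that the comparison differs only by finite-difference error.

```python
import numpy as np


def softmax(x):
    x = x - x.max(axis=1, keepdims=True)  # overflow guard: shift each row by its max
    e = np.exp(x)
    return e / e.sum(axis=1, keepdims=True)


def cross_entropy(y, t_idx):
    n = y.shape[0]
    return -np.sum(np.log(y[np.arange(n), t_idx])) / n


# 1) the shift does not change the result, and large scores do not overflow
big = np.array([[1000.0, 1001.0, 1002.0]])
small = np.array([[0.0, 1.0, 2.0]])
print(np.allclose(softmax(big), softmax(small)))  # True

# 2) gradient of the loss with respect to the scores is (y - t) / batch_size
rng = np.random.default_rng(2)
scores = rng.standard_normal((4, 3))
labels = np.array([0, 2, 1, 2])
t_onehot = np.eye(3)[labels]
analytic = (softmax(scores) - t_onehot) / scores.shape[0]

h = 1e-5
numeric = np.zeros_like(scores)
for idx in np.ndindex(scores.shape):
    old = scores[idx]
    scores[idx] = old + h
    fp = cross_entropy(softmax(scores), labels)
    scores[idx] = old - h
    fm = cross_entropy(softmax(scores), labels)
    scores[idx] = old
    numeric[idx] = (fp - fm) / (2 * h)
print(np.allclose(analytic, numeric, atol=1e-6))  # True
```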

```python
class Trainer:
    """Class that trains a neural network."""

    def __init__(self, network, x_train, t_train, x_test, t_test,
                 epochs=20, mini_batch_size=100,
                 optimizer='SGD', optimizer_param={'lr': 0.01},
                 evaluate_sample_num_per_epoch=None, verbose=True):
        self.network = network
        self.verbose = verbose
        self.x_train = x_train
        self.t_train = t_train
        self.x_test = x_test
        self.t_test = t_test
        self.epochs = epochs
        self.batch_size = mini_batch_size
        self.evaluate_sample_num_per_epoch = evaluate_sample_num_per_epoch

        # optimizer, looked up by its lower-case name ('rmsprop' key typo fixed)
        optimizer_class_dict = {'sgd': SGD, 'momentum': Momentum, 'nesterov': Nesterov,
                                'adagrad': AdaGrad, 'rmsprop': RMSprop, 'adam': Adam}
        self.optimizer = optimizer_class_dict[optimizer.lower()](**optimizer_param)

        self.train_size = x_train.shape[0]
        self.iter_per_epoch = max(self.train_size / mini_batch_size, 1)
        self.max_iter = int(epochs * self.iter_per_epoch)
        self.current_iter = 0
        self.current_epoch = 0

        self.train_loss_list = []
        self.train_acc_list = []
        self.test_acc_list = []

    def train_step(self):
        batch_mask = np.random.choice(self.train_size, self.batch_size)
        x_batch = self.x_train[batch_mask]
        t_batch = self.t_train[batch_mask]

        grads = self.network.gradient(x_batch, t_batch)
        self.optimizer.update(self.network.params, grads)

        loss = self.network.loss(x_batch, t_batch)
        self.train_loss_list.append(loss)
        if self.verbose:
            print("train loss:" + str(loss))

        # evaluate accuracy once per epoch
        if self.current_iter % self.iter_per_epoch == 0:
            self.current_epoch += 1

            x_train_sample, t_train_sample = self.x_train, self.t_train
            x_test_sample, t_test_sample = self.x_test, self.t_test
            if self.evaluate_sample_num_per_epoch is not None:
                t = self.evaluate_sample_num_per_epoch
                x_train_sample, t_train_sample = self.x_train[:t], self.t_train[:t]
                x_test_sample, t_test_sample = self.x_test[:t], self.t_test[:t]

            train_acc = self.network.accuracy(x_train_sample, t_train_sample)
            test_acc = self.network.accuracy(x_test_sample, t_test_sample)
            self.train_acc_list.append(train_acc)
            self.test_acc_list.append(test_acc)

            if self.verbose:
                print("=== epoch:" + str(self.current_epoch) + ", train acc:" + str(train_acc)
                      + ", test acc:" + str(test_acc) + " ===")
        self.current_iter += 1

    def train(self):
        for i in range(self.max_iter):
            self.train_step()

        test_acc = self.network.accuracy(self.x_test, self.t_test)

        if self.verbose:
            print("=============== Final Test Accuracy ===============")
            print("test acc:" + str(test_acc))
```
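`Trainer` draws each mini-batch with `np.random.choice(self.train_size, self.batch_size)`, which samples indices with replacement. It counts every `train_size / mini_batch_size` iterations as one epoch and evaluates accuracy whenever `current_iter % iter_per_epoch == 0`. With the settings used for MNIST in this section (5000 training images, batch size 100, 20 epochs), that gives 50 iterations per epoch and 1000 iterations in total. Accuracy is evaluated 20 times, once per epoch, starting at iteration 0, so `train_acc_list` ends up with one entry per epoch. The arithmetic is reproduced below as a sketch.

```python
train_size, mini_batch_size, epochs = 5000, 100, 20

iter_per_epoch = max(train_size / mini_batch_size, 1)  # 50.0, as in Trainer.__init__
max_iter = int(epochs * iter_per_epoch)                # 1000

# iterations at which Trainer.train_step evaluates train/test accuracy
eval_iters = [i for i in range(max_iter) if i % iter_per_epoch == 0]
print(iter_per_epoch, max_iter, len(eval_iters))       # 50.0 1000 20
print(eval_iters[:3], eval_iters[-1])                  # [0, 50, 100] 950
```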

```python
# Numerical gradient of f at x by central differences (used to check backprop).
# Defined at module level so SimpleConvNet.numerical_gradient can call it without recursing.
def numerical_gradient(f, x):
    h = 1e-4  # 0.0001
    grad = np.zeros_like(x)

    it = np.nditer(x, flags=['multi_index'], op_flags=['readwrite'])
    while not it.finished:
        idx = it.multi_index
        tmp_val = x[idx]
        x[idx] = float(tmp_val) + h
        fxh1 = f(x)  # f(x+h)

        x[idx] = tmp_val - h
        fxh2 = f(x)  # f(x-h)
        grad[idx] = (fxh1 - fxh2) / (2 * h)

        x[idx] = tmp_val  # restore the original value
        it.iternext()

    return grad


# CNN for handwritten digit recognition: conv - relu - pool - affine - relu - affine - softmax
class SimpleConvNet:
    def __init__(self, input_dim=(1, 28, 28),
                 conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                 hidden_size=100, output_size=10, weight_int_std=0.01):
        filter_num = conv_param['filter_num']
        filter_size = conv_param['filter_size']
        filter_pad = conv_param['pad']
        filter_stride = conv_param['stride']
        input_size = input_dim[1]
        conv_output_size = (input_size + 2 * filter_pad - filter_size) / filter_stride + 1
        # flattened size after 2x2, stride-2 pooling
        pool_output_size = int(filter_num * (conv_output_size / 2) * (conv_output_size / 2))

        # initialize weights
        self.params = {}
        self.params['W1'] = weight_int_std * np.random.randn(filter_num, input_dim[0], filter_size, filter_size)
        self.params['b1'] = np.zeros(filter_num)
        self.params['W2'] = weight_int_std * np.random.randn(pool_output_size, hidden_size)
        self.params['b2'] = np.zeros(hidden_size)
        self.params['W3'] = weight_int_std * np.random.randn(hidden_size, output_size)
        self.params['b3'] = np.zeros(output_size)

        # build the layers
        self.layers = OrderedDict()
        self.layers['Conv1'] = Convolution(self.params['W1'], self.params['b1'], filter_stride, filter_pad)
        self.layers['Relu1'] = Relu()
        self.layers['pool1'] = Pooling(pool_h=2, pool_w=2, stride=2)
        self.layers['Affine1'] = Affine(self.params['W2'], self.params['b2'])
        self.layers['Relu2'] = Relu()
        self.layers['Affine2'] = Affine(self.params['W3'], self.params['b3'])
        self.last_layer = SoftmaxWithLoss()

    # inference
    def predict(self, x):
        for layer in self.layers.values():
            x = layer.forward(x)
        return x

    # loss
    def loss(self, x, t):
        y = self.predict(x)
        return self.last_layer.forward(y, t)

    # recognition accuracy, evaluated in batches
    def accuracy(self, x, t, batch_size=100):
        if t.ndim != 1:
            t = np.argmax(t, axis=1)

        acc = 0.0
        for i in range(int(x.shape[0] / batch_size)):
            tx = x[i * batch_size:(i + 1) * batch_size]
            tt = t[i * batch_size:(i + 1) * batch_size]
            y = self.predict(tx)
            y = np.argmax(y, axis=1)
            acc += np.sum(y == tt)

        return acc / x.shape[0]

    # gradients by numerical differentiation (slow; for checking backprop)
    def numerical_gradient(self, x, t):
        loss_w = lambda w: self.loss(x, t)

        grads = {}
        for idx in (1, 2, 3):
            # calls the module-level numerical_gradient(f, x) defined above
            grads['W' + str(idx)] = numerical_gradient(loss_w, self.params['W' + str(idx)])
            grads['b' + str(idx)] = numerical_gradient(loss_w, self.params['b' + str(idx)])

        return grads

    # gradients by backpropagation
    def gradient(self, x, t):
        # forward
        self.loss(x, t)

        # backward
        dout = 1
        dout = self.last_layer.backward(dout)

        layers = list(self.layers.values())
        layers.reverse()
        for layer in layers:
            dout = layer.backward(dout)

        # collect the gradients
        grads = {}
        grads['W1'], grads['b1'] = self.layers['Conv1'].dW, self.layers['Conv1'].db
        grads['W2'], grads['b2'] = self.layers['Affine1'].dW, self.layers['Affine1'].db
        grads['W3'], grads['b3'] = self.layers['Affine2'].dW, self.layers['Affine2'].db

        return grads

    # save parameters
    def save_param(self, file_name='params.pkl'):
        params = {}
        for key, val in self.params.items():
            params[key] = val
        with open(file_name, 'wb') as f:
            pickle.dump(params, f)

    # load parameters
    def load_param(self, file_name='params.pkl'):
        with open(file_name, 'rb') as f:
            params = pickle.load(f)
        for key, val in params.items():
            self.params[key] = val

        for i, key in enumerate(['Conv1', 'Affine1', 'Affine2']):
            self.layers[key].W = self.params['W' + str(i + 1)]
            self.layers[key].b = self.params['b' + str(i + 1)]
```
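With the default settings, almost all of `SimpleConvNet`'s parameters sit in the first fully connected layer (`W2`). This is because each of the 30×12×12 = 4320 pooled features is connected to all 100 hidden units. The sketch below counts the entries of each array in `self.params`, using the same shapes as `__init__` with the default arguments.

```python
import math

filter_num, channels, filter_size = 30, 1, 5
pool_output_size = filter_num * 12 * 12  # 30 * 12 * 12 = 4320
hidden_size, output_size = 100, 10

param_shapes = {
    "W1": (filter_num, channels, filter_size, filter_size),
    "b1": (filter_num,),
    "W2": (pool_output_size, hidden_size),
    "b2": (hidden_size,),
    "W3": (hidden_size, output_size),
    "b3": (output_size,),
}

counts = {name: math.prod(shape) for name, shape in param_shapes.items()}
total = sum(counts.values())
print(counts)
print(total, f"W2 share: {counts['W2'] / total:.1%}")
```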

```python
# load the data, keeping the (1, 28, 28) image shape for the CNN
(x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)

# use a subset of the data to shorten training time
x_train, t_train = x_train[:5000], t_train[:5000]
x_test, t_test = x_test[:1000], t_test[:1000]

max_epoch = 20

network = SimpleConvNet(input_dim=(1, 28, 28),
                        conv_param={'filter_num': 30, 'filter_size': 5, 'pad': 0, 'stride': 1},
                        hidden_size=100, output_size=10, weight_int_std=0.01)

trainer = Trainer(network, x_train, t_train, x_test, t_test,
                  epochs=max_epoch, mini_batch_size=100,
                  optimizer='Adam', optimizer_param={'lr': 0.001},
                  evaluate_sample_num_per_epoch=1000)
trainer.train()

# save the parameters
network.save_param("params.pkl")
print("Save Network Parameters!")
```

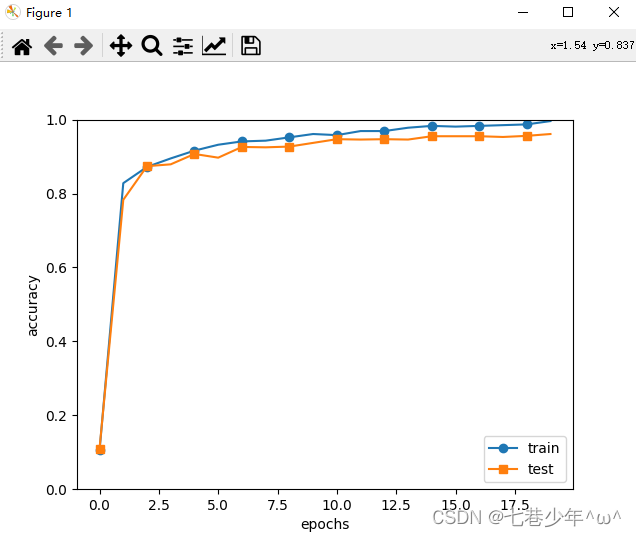

```python
# plot train/test accuracy per epoch
x = np.arange(max_epoch)
plt.plot(x, trainer.train_acc_list, marker='o', label='train', markevery=2)
plt.plot(x, trainer.test_acc_list, marker='s', label='test', markevery=2)
plt.xlabel("epochs")
plt.ylabel("accuracy")
plt.ylim(0, 1.0)
plt.legend(loc='lower right')
plt.show()
```

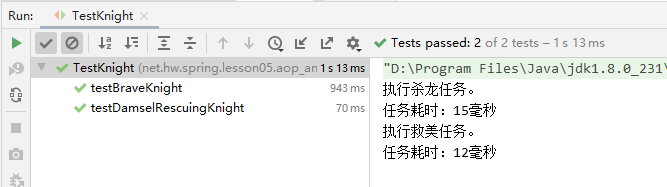

3. Results

4. Representative CNN architectures

1).LeNet


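Differences between LeNet and modern CNNs:

①. Activation function: LeNet uses the sigmoid function, while modern CNNs mainly use ReLU.

②. The original LeNet shrinks intermediate data with subsampling, while modern CNNs mainly use max pooling.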
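Both differences can be illustrated on a toy 4×4 feature map. As a simplification, LeNet's subsampling is treated here as plain 2×2 averaging; the original layer also multiplies by a trainable coefficient and adds a trainable bias. It is compared with the 2×2 max pooling used in modern CNNs, and sigmoid and ReLU are shown side by side. The function names are illustrative.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def relu(x):
    return np.maximum(0.0, x)


def pool2x2(x, reduce):
    """Non-overlapping 2x2 pooling of a 2D array with even height and width."""
    H, W = x.shape
    blocks = x.reshape(H // 2, 2, W // 2, 2)  # (row block, row in block, col block, col in block)
    return reduce(blocks, axis=(1, 3))


fmap = np.arange(16, dtype=float).reshape(4, 4)
print(pool2x2(fmap, np.mean).tolist())  # [[2.5, 4.5], [10.5, 12.5]]  (averaging subsampling)
print(pool2x2(fmap, np.max).tolist())   # [[5.0, 7.0], [13.0, 15.0]]  (max pooling)

z = np.array([-2.0, 0.0, 2.0])
print(sigmoid(z))  # values squashed into (0, 1)
print(relu(z))     # [0. 0. 2.]
```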

2).AlexNet


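Differences between LeNet and AlexNet:

①. Activation function: LeNet uses the sigmoid function, AlexNet uses ReLU.

②. AlexNet uses an LRN (Local Response Normalization) layer, which performs local normalization.

③. AlexNet uses Dropout.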
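Below is a rough sketch of the two AlexNet additions. The `lrn` function follows the cross-channel form described in the AlexNet paper, b_i = a_i / (k + alpha·Σ a_j²)^beta, where the sum runs over n neighbouring channels. It uses the paper's values k=2, n=5, alpha=1e-4 and beta=0.75. The `dropout` function uses the non-inverted convention: a random mask is applied during training, and outputs are scaled by (1 - ratio) at test time. Many frameworks instead use "inverted" dropout, which rescales during training. Both functions are illustrations, not reference implementations.

```python
import numpy as np


def lrn(a, k=2.0, n=5, alpha=1e-4, beta=0.75):
    """Cross-channel local response normalization for a (N, C, H, W) array."""
    C = a.shape[1]
    sq = a ** 2
    out = np.empty_like(a)
    for i in range(C):
        lo, hi = max(0, i - n // 2), min(C - 1, i + n // 2)
        denom = (k + alpha * sq[:, lo:hi + 1].sum(axis=1)) ** beta
        out[:, i] = a[:, i] / denom
    return out


def dropout(x, ratio=0.5, train=True, rng=None):
    """Non-inverted dropout: drop units in training, scale by (1 - ratio) at test time."""
    if train:
        if rng is None:
            rng = np.random.default_rng()
        mask = rng.random(x.shape) > ratio  # keep each unit with probability 1 - ratio
        return x * mask
    return x * (1.0 - ratio)


a = np.random.default_rng(3).standard_normal((1, 8, 4, 4))
print(lrn(a).shape)                                      # (1, 8, 4, 4)
print(np.allclose(lrn(a, alpha=0.0), a / 2.0 ** 0.75))   # True: with alpha=0 the denominator is k**beta

x = np.ones((2, 5))
print(dropout(x, train=True, rng=np.random.default_rng(0)))  # entries are 0 or 1
print(dropout(x, train=False))                               # every entry 0.5
```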
