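Related links

(1) 2023 MCM Problem C (Wordle prediction): modeling and detailed Python code walkthrough for Question 1

(2) 2023 MCM Problem C (Wordle prediction): modeling and detailed Python code walkthrough for Question 2

(3) 2023 MCM Problem C (Wordle prediction): modeling and detailed Python code walkthrough for Questions 3 and 4

(4) 2023 MCM Problem C (Wordle prediction): 25-page paper

Problem C: Wordle Prediction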

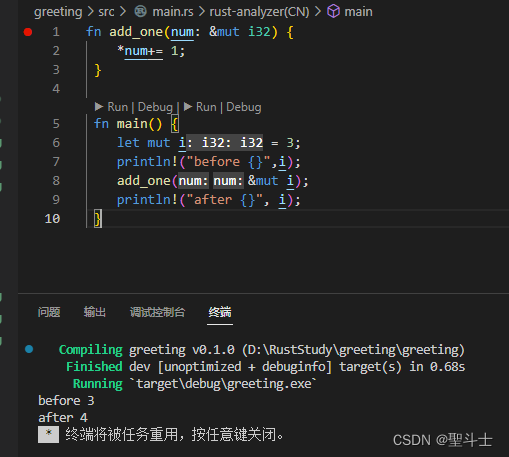

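Runtime environment

Editor: VS Code

Programming language: Python

If the code throws an error when you run it, don't worry: search for the error message online. In most cases a package is missing, and it can be installed with either conda or pip.

1. Question 1

1.1 Part 1

Part 1 asks for a time-series forecasting model. First sort the data in chronological order and look at its distribution.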

import pandas as pd

import datetime as dt

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

from scipy.stats import skew,kurtosis

pd.options.display.notebook_repr_html=False # render DataFrames as plain text instead of HTML tables

plt.rcParams['figure.dpi'] = 75 # figure resolution

sns.set_theme(style='darkgrid') # plot theme

df = pd.read_excel('data/Problem_C_Data_Wordle.xlsx',header=1)

data = df.drop(columns='Unnamed: 0')

data['Date'] = pd.to_datetime(data['Date'])

data.set_index("Date", inplace=True)

data.sort_index(ascending=True,inplace=True)

data

(1) Inspect the data distribution

sns.lineplot(x="Date", y="Number of reported results",data=data)

plt.savefig('img/1.png',dpi=300)

plt.show()

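(2) Use a box plot to check for outliers. Values above 300,000 are outliers (drawn as black points) and need to be handled. This code uses forward filling: each outlier is replaced with the previous day's value.

sns.boxplot(x=data['Number of reported results'],color='red')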

plt.savefig('img/2.png',dpi=300)
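The forward-filling step described above is not shown in the post. A minimal sketch of how it might look, assuming the 300,000 threshold from the box plot; the helper name `forward_fill_outliers` and the toy numbers are hypothetical:

```python
import pandas as pd


def forward_fill_outliers(series: pd.Series, upper: float) -> pd.Series:
    """Replace values above `upper` with the most recent non-outlier value."""
    # where() keeps values that satisfy the condition and turns the rest into NaN
    cleaned = series.astype(float).where(series <= upper)
    # ffill() fills each NaN with the last valid value before it
    return cleaned.ffill()


# Toy daily counts (hypothetical numbers, not taken from the contest data)
toy = pd.Series(
    [250000, 280000, 350000, 270000],
    index=pd.date_range("2022-02-01", periods=4, freq="D"),
)
filled = forward_fill_outliers(toy, upper=300000)
print(filled.tolist())
```

If the very first value is an outlier it has no predecessor and stays NaN, so it would need separate handling.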

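(3) Number of reported results is a numeric variable. For a linear regression model to work well, check the distribution of the sample before building the model. A normally distributed training set is ideal; otherwise the training set should be supplemented or transformed. The fitted-distribution plot and the Q-Q plot show that Number of reported results is right-skewed and does not follow a normal distribution, so the data needs a further transformation before regression.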

import scipy.stats as st

plt.figure(figsize=(20, 6))

y = data['Number of reported results']

plt.subplot(121)

plt.title('johnsonsu Distribution fitting',fontsize=20)

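# Note: sns.distplot is deprecated in recent seaborn releases; it is kept here because it accepts fit=

sns.distplot(y, kde=False, fit=st.johnsonsu, color='Red')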

y2 = data['Number of reported results']

plt.subplot(122)

st.probplot(y2, dist="norm", plot=plt)

plt.title('Q-Q Figure',fontsize=20)

plt.xlabel('X quantile',fontsize=15)

plt.ylabel('Y quantile',fontsize=15)

plt.savefig('img/5.png',dpi=300)

plt.show()

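Before the transformation

After the transformation. Note that predictions made on the log scale must be converted back with the exponential function: if log(x) = y, then x = e^y.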

import scipy.stats as st

plt.figure(figsize=(20, 6))

y = np.log(data['Number of reported results'])

plt.subplot(121)

plt.title('johnsonsu Distribution fitting',fontsize=20)

sns.distplot(y, kde=False, fit=st.johnsonsu, color='Red')

y2 = np.log(data['Number of reported results'])

plt.subplot(122)

st.probplot(y2, dist="norm", plot=plt)

plt.title('Q-Q Figure',fontsize=20)

plt.xlabel('X quantile',fontsize=15)

plt.ylabel('Y quantile',fontsize=15)

plt.savefig('img/6.png',dpi=300)

plt.show()
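To make the effect of the log transformation concrete, the sketch below compares skewness before and after taking logarithms and checks that the exponential recovers the original values. The sample is synthetic (a hypothetical log-normal draw), not the contest data:

```python
import numpy as np
from scipy.stats import skew

# Hypothetical right-skewed sample standing in for the daily report counts
rng = np.random.default_rng(0)
counts = rng.lognormal(mean=11.0, sigma=0.8, size=1000)

log_counts = np.log(counts)    # forward transformation: y = log(x)
restored = np.exp(log_counts)  # inverse transformation: x = e^y

print("skewness before:", skew(counts))
print("skewness after: ", skew(log_counts))
```

A skewness much closer to zero after the transformation is what the second Q-Q plot is meant to show visually.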

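(4) Visualize the correlation between every feature and the label using the Pearson correlation, and keep the highly correlated ones as the dataset's features. This yields 41 features.

# Visualize the k features most correlated with the label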

df =data.copy()

corr = df[["target_t1"]+features].corr().abs()

k = 15

col = corr.nlargest(k,'target_t1')['target_t1'].index

plt.subplots(figsize = (10,10))

plt.title("Pearson correlation with label")

sns.heatmap(df[col].corr(),annot=True,square=True,annot_kws={"size":14},cmap="YlGnBu")

plt.savefig('img/10.png',dpi=300)

plt.show()
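The correlation code above uses `features` and `target_t1`, whose construction is not shown in this post. The following is only a sketch of one common way to build such columns for a time series, assuming lagged values and rolling means as features and the next day's value as the target. The helper `make_lag_features` and the column names `lag_*` and `roll_mean_*` are hypothetical, and the post's actual 41 features may differ:

```python
import numpy as np
import pandas as pd


def make_lag_features(series: pd.Series, lags=(1, 2, 3), windows=(3, 7)):
    """Build lagged and rolling-mean features plus a next-day target."""
    out = pd.DataFrame(index=series.index)
    for lag in lags:
        out[f"lag_{lag}"] = series.shift(lag)  # value `lag` days earlier
    for w in windows:
        # mean of the previous w days; shift(1) excludes the current day
        out[f"roll_mean_{w}"] = series.shift(1).rolling(w).mean()
    out["target_t1"] = series.shift(-1)  # next day's value is the label
    feature_names = [c for c in out.columns if c != "target_t1"]
    return out, feature_names


# Toy log-scale series (hypothetical values)
toy = pd.Series(
    np.log(np.linspace(300000, 20000, 30)),
    index=pd.date_range("2022-01-07", periods=30, freq="D"),
)
frame, features = make_lag_features(toy)
print(frame.dropna().head())
```

Rows at the start and end contain NaN because the lags, rolling windows, and next-day target are undefined there, which is why a `dropna()` is applied before modeling.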

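(5) Before splitting the dataset, standardize the feature data. After standardization, use the data from January to November as the training set and the December data as the test set. The plot shows that a simple linear regression should be able to fit the curve.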

data_feateng = df[features + targets].dropna()

nobs= len(data_feateng)

print("Number of samples: ", nobs)

X_train = data_feateng.loc["2022-1":"2022-11"][features]

y_train = data_feateng.loc["2022-1":"2022-11"][targets]

X_test = data_feateng.loc["2022-12"][features]

y_test = data_feateng.loc["2022-12"][targets]

n, k = X_train.shape

print("Train: {}{}, \nTest: {}{}".format(X_train.shape, y_train.shape,

X_test.shape, y_test.shape))

plt.plot(y_train.index, y_train.target_t1.values, label="train")

plt.plot(y_test.index, y_test.target_t1.values, label="test")

plt.title("Train/Test split")

plt.legend()

plt.xticks(rotation=45)

plt.savefig('img/11.png',dpi=300)

plt.show()
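The standardization step is described but not shown, and the regression code in this section later uses `mean` and `std` to undo it. A minimal sketch of what that step might look like for a log-scale target, using synthetic values; fitting the statistics on the training months only is an assumption made here to avoid leaking test data, not something the post states:

```python
import numpy as np
import pandas as pd

# Hypothetical log-scale target indexed by date, standing in for target_t1
idx = pd.date_range("2022-01-07", "2022-12-31", freq="D")
log_target = pd.Series(np.linspace(12.5, 10.0, len(idx)), index=idx)

train = log_target.loc["2022-01":"2022-11"]
test = log_target.loc["2022-12"]

# Scaling statistics computed from the training months only (assumption)
mean, std = train.mean(), train.std()
train_z = (train - mean) / std
test_z = (test - mean) / std

# Undo both steps in reverse order: first the z-score, then the logarithm
restored = np.exp(test_z * std + mean)
print(restored.head())
```

The last line mirrors the `np.exp(p_test*std+mean)` expression used after prediction: the z-score is reversed first, then the logarithm.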

(6) Apply linear regression

from sklearn.linear_model import LinearRegression

from sklearn.metrics import mean_squared_error

X_train = data_feateng.loc["2022-1":"2022-11"][features]

y_train = data_feateng.loc["2022-1":"2022-11"][targets]

X_test = data_feateng.loc["2022-12"][features]

y_test = data_feateng.loc["2022-12"][targets]

reg = LinearRegression().fit(X_train, y_train["target_t1"])

p_train = reg.predict(X_train)

p_test = reg.predict(X_test)

y_pred = np.exp(p_test*std+mean)

y_true = np.exp(y_test["target_t1"]*std+mean)

RMSE_test = np.sqrt(mean_squared_error(y_true,y_pred))

print("Test RMSE: {}".format(RMSE_test))

The model error is RMSE: 1992.293296317915

Model training and prediction

from sklearn.linear_model import LinearRegression

reg = LinearRegression().fit(X_train, y_train["target_t1"])

p_train = reg.predict(X_train)

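# Assumes X_test holds a single feature row; reshape it to the (1, n_features) input that predict expects

arr = np.array(X_test).reshape((1,-1))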

p_test = reg.predict(arr)

y_pred = np.exp(p_test*std+mean)

print(f"Prediction interval: [{int(y_pred[0]-RMSE_test)}, {int(y_pred[0]+RMSE_test)}]")

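Subtracting the error from the predicted value gives the lower bound of the prediction interval, and adding the error gives the upper bound. The resulting prediction interval is [18578, 22562].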
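The interval rule can be written as a small helper. This is a sketch with hypothetical numbers (the function name `rmse_interval` is illustrative). Note that a band of plus or minus one RMSE is a heuristic and does not carry a stated confidence level:

```python
def rmse_interval(point_prediction: float, rmse: float) -> tuple[int, int]:
    """Return (lower, upper) as the point prediction minus/plus one RMSE."""
    lower = int(point_prediction - rmse)
    upper = int(point_prediction + rmse)
    return lower, upper


# Hypothetical numbers for illustration only
low, high = rmse_interval(point_prediction=20000.0, rmse=1500.0)
print(f"Prediction interval: [{low}, {high}]")
```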

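1.2 Part 2

I extracted the following features from each word: the letter at each position (a is encoded as 1, b as 2, c as 3, and so on up to z as 26, and each of the word's five positions is filled with the corresponding value; this resembles one-hot encoding in purpose, although strictly it is an ordinal encoding), the vowel frequency (how many of the five letters are vowels), and the consonant frequency (how many of the five letters are consonants). Part of speech (adjective, adverb, noun, and so on) is another candidate feature, but it is not implemented here.

In the code, the features are 'w1', 'w2', 'w3', 'w4', 'w5', 'Vowel_fre', and 'Consonant_fre'.

Next, compute the Pearson correlation between each of these features and each of the seven try-count percentages (1 try through 7 or more tries). Readers should go on to interpret the values in the resulting figures and use them in the paper; a table is a good way to present them.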

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

df = pd.read_excel('data/Problem_C_Data_Wordle.xlsx',header=1)

data = df.drop(columns='Unnamed: 0')

data['Date'] = pd.to_datetime(data['Date'])

data.set_index('Date',inplace=True)

data.sort_index(ascending=True,inplace=True)

df =data.copy()

df['Words'] = df['Word'].apply(lambda x:str(list(x))[1:-1].replace("'","").replace(" ",""))

# expand=True returns one column per letter; unpacking the .str accessor no longer works in pandas 2.x

df[['w1','w2','w3','w4','w5']] = df['Words'].str.split(',',n=4,expand=True)

df

small = [str(chr(i)) for i in range(ord('a'),ord('z')+1)]

letter_map = dict(zip(small,range(1,27)))

letter_map

{'a': 1, 'b': 2, 'c': 3, 'd': 4, 'e': 5, 'f': 6, 'g': 7, 'h': 8, 'i': 9, 'j': 10, 'k': 11, 'l': 12, 'm': 13, 'n': 14, 'o': 15, 'p': 16, 'q': 17, 'r': 18, 's': 19, 't': 20, 'u': 21, 'v': 22, 'w': 23, 'x': 24, 'y': 25, 'z': 26}

df['w1'] = df['w1'].map(letter_map)

df['w2'] = df['w2'].map(letter_map)

df['w3'] = df['w3'].map(letter_map)

df['w4'] = df['w4'].map(letter_map)

df['w5'] = df['w5'].map(letter_map)

df

(1) Count vowel and consonant frequencies

Vowel = ['a','e','i','o','u']

Consonant = list(set(small).difference(set(Vowel)))

# Count how many letters of s are vowels

def count_Vowel(s):

    c = 0

    for i in range(len(s)):

        if s[i] in Vowel:

            c+=1

    return c

# Count how many letters of s are consonants

def count_Consonant(s):

    c = 0

    for i in range(len(s)):

        if s[i] in Consonant:

            c+=1

    return c

df['Vowel_fre'] = df['Word'].apply(lambda x:count_Vowel(x))

df['Consonant_fre'] = df['Word'].apply(lambda x:count_Consonant(x))

df

(2) Correlation analysis

# Plot the correlation between the features and each try-count label

features = ['w1','w2','w3','w4','w5','Vowel_fre','Consonant_fre']

label = ['1 try','2 tries','3 tries','4 tries','5 tries','6 tries','7 or more tries (X)']

n = 11

for i in label:

    corr = df[[i]+features].corr().abs()

    k = len(features)

    col = corr.nlargest(k,i)[i].index

    plt.subplots(figsize = (10,10))

    plt.title(f"Pearson correlation with {i}")

    sns.heatmap(df[col].corr(),annot=True,square=True,annot_kws={"size":14},cmap="YlGnBu")

    plt.savefig(f'img/1/{n}.png',dpi=300)

    n+=1

    plt.show()
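Since the post suggests presenting the correlations as a table in the paper, here is a sketch of how the heatmap values could be collected into a single features-by-labels table. The helper `correlation_table` and the toy data are hypothetical; on the real data the same call would take the `features` and `label` lists used above:

```python
import numpy as np
import pandas as pd


def correlation_table(frame: pd.DataFrame, features, labels) -> pd.DataFrame:
    """Pearson correlation of each feature (rows) with each label (columns)."""
    corr = frame[list(features) + list(labels)].corr(method="pearson")
    return corr.loc[list(features), list(labels)].round(3)


# Hypothetical toy data, not the contest data
rng = np.random.default_rng(0)
toy = pd.DataFrame({
    "Vowel_fre": rng.integers(0, 4, size=50),
    "w1": rng.integers(1, 27, size=50),
})
toy["Consonant_fre"] = 5 - toy["Vowel_fre"]  # five-letter words: counts sum to 5
toy["1 try"] = rng.integers(0, 3, size=50)
toy["2 tries"] = rng.integers(0, 15, size=50)

table = correlation_table(
    toy, ["w1", "Vowel_fre", "Consonant_fre"], ["1 try", "2 tries"]
)
print(table)
```

One point worth noting when interpreting the results: if every word has exactly five letters, Vowel_fre and Consonant_fre always sum to 5, so their correlations with any label are equal in magnitude and opposite in sign, and the two features carry the same information.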

3 Code

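To get the code, enter betterbench.top/#/40/detail in your browser, or send me a private message.

The code for Questions 2, 3, and 4 will be published one after another; see my profile page.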