文章目录

- 1、初始化集群

- 1.1 集群机器配置

- 1.2 配置主机名

- 1.3 配置hosts文件

- 1.4、配置互信

- 1.5、关闭防火墙

- 1.6、关闭selinux

- 1.7、配置Ceph安装源

- 1.8、配置时间同步

- 1.9、安装基础软件包

- 2、安装ceph集群

- 2.1 安装ceph-deploy

- 2.2 创建monitor节点

- 2.3 安装ceph-monitor

- 2.4 部署osd服务

- 2.5 创建ceph文件系统

- 2.6 测试k8s挂载ceph rbd

- 2.7 基于ceph rbd生成pv

- 2.8 基于storageclass动态生成pv

- 2.9 k8s挂载cephfs

1、初始化集群

1.1 集群机器配置

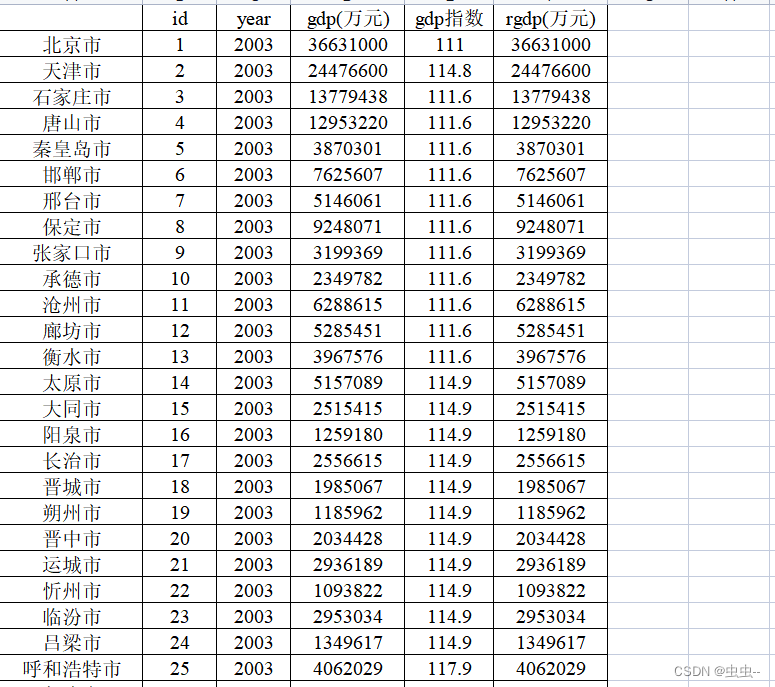

| 主机名 | ip | 硬盘大小 |

|---|---|---|

| master1-admin | 192.168.75.160 | 100G60G60G |

| node1-monitor | 192.168.75.161 | 100G60G60G |

| node2-osd | 192.168.75.162 | 100G60G60G |

1.2 配置主机名

[root@localhost ~]# hostnamectl set-hostname master1-admin && bash

[root@localhost ~]# hostnamectl set-hostname node1-monitor && bash

[root@localhost ~]# hostnamectl set-hostname node2-osd && bash

1.3 配置hosts文件

修改master1-admin、node1-monitor、node2-osd机器的/etc/hosts文件,增加如下三行:

192.168.75.160 master1-admin

192.168.75.161 node1-monitor

192.168.75.162 node2-osd

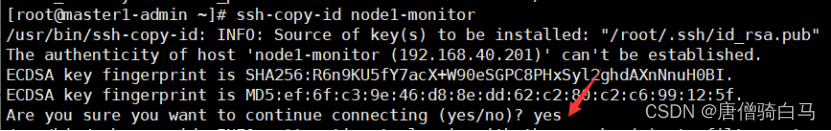

1.4、配置互信

生成ssh 密钥对

[root@master1-admin ~]# ssh-keygen -t rsa #一路回车,不输入密码

把本地的ssh公钥文件安装到远程主机对应的账户

[root@master1-admin ~]# ssh-copy-id node1-monitor

[root@master1-admin ~]# ssh-copy-id node2-osd

[root@master1-admin ~]# ssh-copy-id master1-admin

[root@node1-monitor ~]# ssh-keygen #一路回车,不输入密码

把本地的ssh公钥文件安装到远程主机对应的账户

[root@node1-monitor ~]# ssh-copy-id master1-admin

[root@node1-monitor ~]# ssh-copy-id node1-monitor

[root@node1-monitor ~]# ssh-copy-id node2-osd

[root@node2-osd ~]# ssh-keygen #一路回车,不输入密码

把本地的ssh公钥文件安装到远程主机对应的账户

[root@node2-osd ~]# ssh-copy-id master1-admin

[root@node2-osd ~]# ssh-copy-id node1-monitor

[root@node2-osd ~]# ssh-copy-id node2-osd

1.5、关闭防火墙

[root@master1-admin ~]# systemctl stop firewalld ; systemctl disable firewalld

[root@node1-monitor ~]# systemctl stop firewalld ; systemctl disable firewalld

[root@node2-osd ~]# systemctl stop firewalld ; systemctl disable firewalld

1.6、关闭selinux

所有ceph节点的selinux都关闭

#临时关闭

setenforce 0

#永久关闭

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

#注意:修改selinux配置文件之后,重启机器,selinux才能永久生效

1.7、配置Ceph安装源

配置阿里云的repo源,master1-admin、node1-monitor、node2-osd上操作:

yum install -y yum-utils && sudo yum-config-manager --add-repo https://dl.fedoraproject.org/pub/epel/7/x86_64/ && sudo yum install --nogpgcheck -y epel-release && sudo rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-EPEL-7 && sudo rm /etc/yum.repos.d/dl.fedoraproject.org*

在 /etc/yum.repos.d/ceph.repo新建repo源

cat /etc/yum.repos.d/ceph.repo

[Ceph]

name=Ceph packages for $basearch

baseurl=http://mirrors.aliyun.com/ceph/rpm-jewel/el7/x86_64/

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

[Ceph-noarch]

name=Ceph noarch packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-jewel/el7/noarch/

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

[ceph-source]

name=Ceph source packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-jewel/el7/SRPMS/

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

1.8、配置时间同步

配置机器时间跟网络时间同步,在ceph的每台机器上操作

service ntpd stop

ntpdate cn.pool.ntp.org

crontab -e

* */1 * * * /usr/sbin/ntpdate cn.pool.ntp.org

service crond restart

1.9、安装基础软件包

安装基础软件包,在ceph的每台机器上操作

yum install -y yum-utils device-mapper-persistent-data lvm2 wget net-tools nfs-utils lrzsz gcc gcc-c++ make cmake libxml2-devel openssl-devel curl curl-devel unzip sudo ntp libaio-devel wget vim ncurses-devel autoconf automake zlib-devel python-devel epel-release openssh-server socat ipvsadm conntrack ntpdate telnet deltarpm

2、安装ceph集群

2.1 安装ceph-deploy

在master1-admin节点安装ceph-deploy

[root@master1-admin ~]# yum install python-setuptools ceph-deploy -y

在master1-admin、node1-monitor和node2-osd节点安装ceph

[root@master1-admin]# yum install ceph ceph-radosgw -y

[root@node1-monitor ~]# yum install ceph ceph-radosgw -y

[root@node2-osd ~]# yum install ceph ceph-radosgw -y

root@master1-admin ~]# ceph --version

ceph version 10.2.11 (e4b061b47f07f583c92a050d9e84b1813a35671e)

2.2 创建monitor节点

创建一个目录,用于保存 ceph-deploy 生成的配置文件信息的

[root@master1-admin ceph ~]# cd /etc/ceph

[root@master1-admin ceph]# ceph-deploy new master1-admin node1-monitor node2-osd

[root@master1-admin ceph]# ls

#生成了如下配置文件

ceph.conf ceph-deploy-ceph.log ceph.mon.keyring

Ceph配置文件、一个monitor密钥环和一个日志文件

2.3 安装ceph-monitor

1、修改ceph配置文件

#把ceph.conf配置文件里的默认副本数从3改成1 。把osd_pool_default_size = 2

加入[global]段,这样只有2个osd也能达到active+clean状态:

[root@master1-admin ceph]# cat ceph.conf

[global]

fsid = 6300ffea-4e1d-4f19-ad27-935894f2d6ee

mon_initial_members = node1-monitor

mon_host = 192.168.75.161

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

filestore_xattr_use_omap = true

osd_pool_default_size = 2

mon clock drift allowed = 0.500

mon clock drift warn backoff = 10

mon clock drift allowed #监视器间允许的时钟漂移量默认值0.05

mon clock drift warn backoff #时钟偏移警告的退避指数。默认值5

ceph对每个mon之间的时间同步延时默认要求在0.05s之间,这个时间有的时候太短了。所以如果ceph集群如果出现clock问题就检查ntp时间同步或者适当放宽这个误差时间。

cephx是认证机制是整个 Ceph 系统的用户名/密码

2、配置初始monitor、收集所有的密钥

[root@master1-admin]# cd /etc/ceph

[root@master1-admin]# ceph-deploy mon create-initial

[root@master1-admin]# ls *.keyring

ceph.bootstrap-mds.keyring ceph.bootstrap-mgr.keyring ceph.bootstrap-osd.keyring ceph.bootstrap-rgw.keyring ceph.client.admin.keyring ceph.mon.keyring

2.4 部署osd服务

准备osd

[root@ master1-admin ceph]# cd /etc/ceph/

[root@master1-admin ceph]# ceph-deploy osd prepare master1-admin:/dev/sdb [root@master1-admin ceph]# ceph-deploy osd prepare node1-monitor:/dev/sdb

[root@master1-admin ceph]# ceph-deploy osd prepare node2-osd:/dev/sdb

激活osd

[root@master1-admin ceph]# ceph-deploy osd activate master1-admin:/dev/sdb1

[root@master1-admin ceph]# ceph-deploy osd activate node1-monitor:/dev/sdb1

[root@master1-admin ceph]# ceph-deploy osd activate node2-osd:/dev/sdb1

查看状态

[root@ master1-admin ceph]# ceph-deploy osd list master1-admin node1-monitor node2-osd

要使用Ceph文件系统,你的Ceph的存储集群里至少需要存在一个Ceph的元数据服务器(mds)。

2.5 创建ceph文件系统

创建mds

[root@ master1-admin ceph]# ceph-deploy mds create master1-admin node1-monitor node2-osd

查看ceph当前文件系统

[root@ master1-admin ceph]# ceph fs ls

No filesystems enabled

一个cephfs至少要求两个librados存储池,一个为data,一个为metadata。当配置这两个存储池时,注意:

- 为metadata pool设置较高级别的副本级别,因为metadata的损坏可能导致整个文件系统不用

- 建议,metadata pool使用低延时存储,比如SSD,因为metadata会直接影响客户端的响应速度。

创建存储池

[root@ master1-admin ceph]# ceph osd pool create cephfs_data 128

pool 'cephfs_data' created

[root@ master1-admin ceph]# ceph osd pool create cephfs_metadata 128

pool 'cephfs_metadata' created

关于创建存储池

确定 pg_num 取值是强制性的,因为不能自动计算。下面是几个常用的值:

*少于 5 个 OSD 时可把 pg_num 设置为 128

*OSD 数量在 5 到 10 个时,可把 pg_num 设置为 512

*OSD 数量在 10 到 50 个时,可把 pg_num 设置为 4096

*OSD 数量大于 50 时,你得理解权衡方法、以及如何自己计算 pg_num 取值

*自己计算 pg_num 取值时可借助 pgcalc 工具

随着 OSD 数量的增加,正确的 pg_num 取值变得更加重要,因为它显著地影响着集群的行为、以及出错时的数据持久性(即灾难性事件导致数据丢失的概率)。

创建文件系统

创建好存储池后,你就可以用 fs new 命令创建文件系统了

[root@master1-admin ceph]# ceph fs new xianchao cephfs_metadata cephfs_data

new fs with metadata pool 2 and data pool 1

其中:new后的fsname 可自定义

[root@ master1-admin ceph]# ceph fs ls #查看创建后的cephfs

[root@ master1-admin ceph]# ceph mds stat #查看mds节点状态

xianchao:1 {0=master1-admin=up:active} 2 up:standby

active是活跃的,另1个是处于热备份的状态

[root@master1-admin ceph]# ceph -s

cluster 6300ffea-4e1d-4f19-ad27-935894f2d6ee

health HEALTH_OK

monmap e1: 1 mons at {node1-monitor=192.168.75.161:6789/0}

election epoch 3, quorum 0 node1-monitor

fsmap e6: 1/1/1 up {0=node2-osd=up:active}, 2 up:standby

osdmap e20: 3 osds: 3 up, 3 in

flags sortbitwise,require_jewel_osds

pgmap v46: 320 pgs, 3 pools, 2068 bytes data, 20 objects

326 MB used, 15000 MB / 15326 MB avail

320 active+clean

**HEALTH_OK表示ceph**集群正常

2.6 测试k8s挂载ceph rbd

需要有一套k8s环境

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane,master 117m v1.23.1

node1 Ready <none> 116m v1.23.1

master节点ip:192.168.75.140

node1节点ip:192.168.75.141

kubernetes要想使用ceph,需要在k8s的每个node节点安装ceph-common,把ceph节点上的ceph.repo文件拷贝到k8s各个节点/etc/yum.repos.d/目录下,然后在k8s的各个节点yum install ceph-common -y

[root@master1-admin ceph]# scp /etc/yum.repos.d/ceph.repo 192.168.75.140:/etc/yum.repos.d/

[root@master1-admin ceph]# scp /etc/yum.repos.d/ceph.repo 192.168.75.141:/etc/yum.repos.d/

[root@node1 ~]# yum install ceph-common -y

[root@master ~]# yum install ceph-common -y

将ceph配置文件拷贝到k8s的控制节点和工作节点

[root@master1-admin ceph]# scp /etc/ceph/* 192.168.75.140:/etc/ceph/

[root@master1-admin ceph]# scp /etc/ceph/* 192.168.75.141:/etc/ceph/

创建ceph rbd

[root@master1-admin ~]# ceph osd pool create k8srbd1 6

pool 'k8srbd1' created

[root@master1-admin ~]# rbd create rbda -s 1024 -p k8srbd1

[root@master1-admin ~]# rbd feature disable k8srbd1/rbda object-map fast-diff deep-flatten

创建pod,挂载ceph rbd

[root@master ceph]# cat pod.yaml

apiVersion: v1

kind: Pod

metadata:

name: testrbd

spec:

containers:

- image: nginx

name: nginx

imagePullPolicy: IfNotPresent

volumeMounts:

- name: testrbd

mountPath: /mnt

volumes:

- name: testrbd

rbd:

monitors:

- '192.168.75.160:6789'

- '192.168.75.161:6789'

- '192.168.75.162:6789'

pool: k8srbd1

image: rbda

fsType: xfs

readOnly: false

user: admin

keyring: /etc/ceph/ceph.client.admin.keyring

[root@master ceph]# kubectl apply -f pod.yaml

查看pod是否创建成功

[root@master ceph]# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

testrbd 1/1 Running 0 43s 10.244.166.130 node1 <none> <none>

注意: k8srbd1下的rbda被pod挂载了,那其他pod就不能占用这个k8srbd1下的rbda了

例:创建一个pod-1.yaml

[root@master ceph]# cat pod-1.yaml

apiVersion: v1

kind: Pod

metadata:

name: testrbd1

spec:

containers:

- image: nginx

name: nginx

imagePullPolicy: IfNotPresent

volumeMounts:

- name: testrbd

mountPath: /mnt

volumes:

- name: testrbd

rbd:

monitors:

- '192.168.75.160:6789'

- '192.168.75.161:6789'

- '192.168.75.162:6789'

pool: k8srbd1

image: rbda

fsType: xfs

readOnly: false

user: admin

keyring: /etc/ceph/ceph.client.admin.keyring

[root@master ceph]# kubectl apply -f pod-1.yaml

[root@master ceph]# kubectl get pods

NAME READY STATUS RESTARTS AGE

testrbd 1/1 Running 0 4m41s

testrbd1 0/1 Pending 0 5s

查看testrbd1详细信息

[root@master ceph]# kubectl describe pods testrbd1

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning FailedScheduling 52s default-scheduler 0/2 nodes are available: 1 node(s) had no available disk, 1 node(s) had taint {node-role.kubernetes.io/master: }, that the pod didn't tolerate.

上面一直pending状态,通过warnning可以发现是因为我的pool: k8srbd1 image: rbda,已经被其他pod占用了

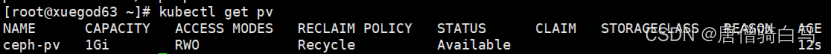

2.7 基于ceph rbd生成pv

1、创建ceph-secret这个k8s secret对象,这个secret对象用于k8s volume插件访问ceph集群,获取client.admin的keyring值,并用base64编码,在master1-admin(ceph管理节点)操作

[root@master1-admin ~]# ceph auth get-key client.admin | base64

QVFBWk0zeGdZdDlhQXhBQVZsS0poYzlQUlBianBGSWJVbDNBenc9PQ==

2.创建ceph的secret,在k8s的控制节点操作

[root@master~]# cat ceph-secret.yaml

apiVersion: v1

kind: Secret

metadata:

name: ceph-secret

data:

key: QVFBWk0zeGdZdDlhQXhBQVZsS0poYzlQUlBianBGSWJVbDNBenc9PQ==

[root@master~]# kubectl apply -f ceph-secret.yaml

3.回到ceph 管理节点创建pool池

[root@master1-admin ~]# ceph osd pool create k8stest 6

pool 'k8stest' created

[root@master1-admin ~]# rbd create rbda -s 1024 -p k8stest

[root@master1-admin ~]# rbd feature disable k8stest/rbda object-map fast-diff deep-flatten

4、创建pv

[root@master~]# cat pv.yaml

apiVersion: v1

kind: PersistentVolume

metadata:

name: ceph-pv

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

rbd:

monitors:

- '192.168.75.160:6789'

- '192.168.75.161:6789'

- '192.168.75.162:6789'

pool: k8stest

image: rbda

user: admin

secretRef:

name: ceph-secret

fsType: xfs

readOnly: false

persistentVolumeReclaimPolicy: Recycle

[root@master~]# kubectl apply -f pv.yaml

persistentvolume/ceph-pv created

[root@master~]# kubectl get pv

5、创建pvc

[root@master~]# cat pvc.yaml

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: ceph-pvc

spec:

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 1Gi

[root@master~]# kubectl apply -f pvc.yaml

[root@masterceph]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

ceph-pvc Bound ceph-pv 1Gi RWO

6、测试挂载pvc

[root@master~]# cat pod-2.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

spec:

selector:

matchLabels:

app: nginx

replicas: 2 # tells deployment to run 2 pods matching the template

template: # create pods using pod definition in this template

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx

imagePullPolicy: IfNotPresent

ports:

- containerPort: 80

volumeMounts:

- mountPath: "/ceph-data"

name: ceph-data

volumes:

- name: ceph-data

persistentVolumeClaim:

claimName: ceph-pvc

[root@master~]# kubectl apply -f pod-2.yaml

[root@master~]# kubectl get pods -l app=nginx

NAME READY STATUS RESTARTS AGE

nginx-deployment-fc894c564-8tfhn 1/1 Running 0 78s

nginx-deployment-fc894c564-l74km 1/1 Running 0 78s

[root@master~]# cat pod-3.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-1-deployment

spec:

selector:

matchLabels:

appv1: nginxv1

replicas: 2 # tells deployment to run 2 pods matching the template

template: # create pods using pod definition in this template

metadata:

labels:

appv1: nginxv1

spec:

containers:

- name: nginx

image: nginx

imagePullPolicy: IfNotPresent

ports:

- containerPort: 80

volumeMounts:

- mountPath: "/ceph-data"

name: ceph-data

volumes:

- name: ceph-data

persistentVolumeClaim:

claimName: ceph-pvc

[root@master~]# kubectl apply -f pod-3.yaml

[root@master~]# kubectl get pods -l appv1=nginxv1

NAME READY STATUS RESTARTS AGE

nginx-1-deployment-cd74b5dd4-jqvxj 1/1 Running 0 56s

nginx-1-deployment-cd74b5dd4-lrddc 1/1 Running 0 56s

通过上面实验可以发现pod是可以以ReadWriteOnce共享挂载相同的pvc的

注意:ceph rbd块存储的特点

ceph rbd块存储能在同一个node上跨pod以ReadWriteOnce共享挂载

ceph rbd块存储能在同一个node上同一个pod多个容器中以ReadWriteOnce共享挂载

ceph rbd块存储不能跨node以ReadWriteOnce共享挂载

如果一个使用ceph rdb的pod所在的node挂掉,这个pod虽然会被调度到其它node,但是由于rbd不能跨node多次挂载和挂掉的pod不能自动解绑pv的问题,这个新pod不会正常运行

Deployment更新特性:

deployment触发更新的时候,它确保至少所需 Pods 75% 处于运行状态(最大不可用比例为 25%)。故像一个pod的情况,肯定是新创建一个新的pod,新pod运行正常之后,再关闭老的pod。

默认情况下,它可确保启动的 Pod 个数比期望个数最多多出 25%

问题:

结合ceph rbd共享挂载的特性和deployment更新的特性,我们发现原因如下:

由于deployment触发更新,为了保证服务的可用性,deployment要先创建一个pod并运行正常之后,再去删除老pod。而如果新创建的pod和老pod不在一个node,就会导致此故障。

解决办法:

1,使用能支持跨node和pod之间挂载的共享存储,例如cephfs,GlusterFS等

2,给node添加label,只允许deployment所管理的pod调度到一个固定的node上。(不建议,这个node挂掉的话,服务就故障了)

2.8 基于storageclass动态生成pv

[root@master1-admin]# chmod 777 -R /etc/ceph/*

[root@node1-monitor ~]# chmod 777 -R /etc/ceph/*

[root@node2-osd]# chmod 777 -R /etc/ceph/*

[root@master~]# chmod 777 -R /etc/ceph/*

[root@master]# chmod 777 -R /etc/ceph/*

[root@master1-admin]# mkdir /root/.ceph/

[root@master1-admin]# cp -ar /etc/ceph/ /root/.ceph/

[root@node1-monitor ~]#mkdir /root/.ceph/

[root@node1-monitor ~]#cp -ar /etc/ceph/ /root/.ceph/

[root@node2-osd]# mkdir /root/.ceph/

[root@node2-osd]# cp -ar /etc/ceph/ /root/.ceph/

[root@master~]#mkdir /root/.ceph/

[root@master~]#cp -ar /etc/ceph/ /root/.ceph/

[root@node1]# mkdir /root/.ceph/

[root@node1~]#cp -ar /etc/ceph/ /root/.ceph/

1、创建rbd的供应商provisioner

[root@master~]# cat rbd-provisioner.yaml

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: rbd-provisioner

rules:

- apiGroups: [""]

resources: ["persistentvolumes"]

verbs: ["get", "list", "watch", "create", "delete"]

- apiGroups: [""]

resources: ["persistentvolumeclaims"]

verbs: ["get", "list", "watch", "update"]

- apiGroups: ["storage.k8s.io"]

resources: ["storageclasses"]

verbs: ["get", "list", "watch"]

- apiGroups: [""]

resources: ["events"]

verbs: ["create", "update", "patch"]

- apiGroups: [""]

resources: ["services"]

resourceNames: ["kube-dns","coredns"]

verbs: ["list", "get"]

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: rbd-provisioner

subjects:

- kind: ServiceAccount

name: rbd-provisioner

namespace: default

roleRef:

kind: ClusterRole

name: rbd-provisioner

apiGroup: rbac.authorization.k8s.io

---

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

name: rbd-provisioner

rules:

- apiGroups: [""]

resources: ["secrets"]

verbs: ["get"]

- apiGroups: [""]

resources: ["endpoints"]

verbs: ["get", "list", "watch", "create", "update", "patch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

name: rbd-provisioner

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: rbd-provisioner

subjects:

- kind: ServiceAccount

name: rbd-provisioner

namespace: default

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: rbd-provisioner

spec:

selector:

matchLabels:

app: rbd-provisioner

replicas: 1

strategy:

type: Recreate

template:

metadata:

labels:

app: rbd-provisioner

spec:

containers:

- name: rbd-provisioner

image: quay.io/xianchao/external_storage/rbd-provisioner:v1

imagePullPolicy: IfNotPresent

env:

- name: PROVISIONER_NAME

value: ceph.com/rbd

serviceAccount: rbd-provisioner

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: rbd-provisioner

[root@master~]# kubectl apply -f rbd-provisioner.yaml

[root@master~]# kubectl get pods -l app=rbd-provisioner

NAME READY STATUS RESTARTS AGE

rbd-provisioner-685746688f-8mbz5 1/1 Running 0 111s

2、创建ceph-secret

创建pool池

[root@master1-admin ~]# ceph osd pool create k8stest1 6

[root@master~]# cat ceph-secret-1.yaml

apiVersion: v1

kind: Secret

metadata:

name: ceph-secret-1

type: "ceph.com/rbd"

data:

key: QVFBWk0zeGdZdDlhQXhBQVZsS0poYzlQUlBianBGSWJVbDNBenc9PQ==

[root@master~]# kubectl apply -f ceph-secret-1.yaml

3、创建storageclass

[root@master~]# cat storageclass.yaml

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: k8s-rbd

provisioner: ceph.com/rbd

parameters:

monitors: 192.168.75.161:6789

adminId: admin

adminSecretName: ceph-secret-1

pool: k8stest1

userId: admin

userSecretName: ceph-secret-1

fsType: xfs

imageFormat: "2"

imageFeatures: "layering"

[root@master~]# kubectl apply -f storageclass.yaml

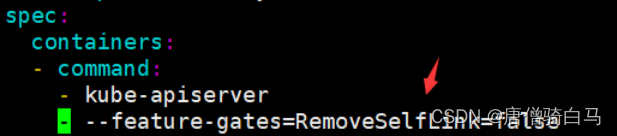

注意:

k8s1.20版本通过rbd provisioner动态生成pv会报错:

[root@xianchaomaster1 ~]# kubectl logs rbd-provisioner-685746688f-8mbz

E0418 15:50:09.610071 1 controller.go:1004] provision “default/rbd-pvc” class “k8s-rbd”: unexpected error getting claim reference: selfLink was empty, can’t make reference,报错原因是1.20版本仅用了selfLink,解决方法如下;

编辑/etc/kubernetes/manifests/kube-apiserver.yaml

在这里:

spec:

containers:

- command:

- kube-apiserver

添加这一行:

—feature-gates=RemoveSelfLink=false

[root@master~]# systemctl restart kubelet

[root@master~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

kube-apiserver-master 1/1 Running

4、创建pvc

[root@master~]# cat rbd-pvc.yaml

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: rbd-pvc

spec:

accessModes:

- ReadWriteOnce

volumeMode: Filesystem

resources:

requests:

storage: 1Gi

storageClassName: k8s-rbd

[root@xianchaomaster1 ~]# kubectl apply -f rbd-pvc.yaml

[root@xianchaomaster1 ~]# kubectl get pvc

创建pod,挂载pvc

[root@master~]# cat pod-sto.yaml

apiVersion: v1

kind: Pod

metadata:

labels:

test: rbd-pod

name: ceph-rbd-pod

spec:

containers:

- name: ceph-rbd-nginx

image: nginx

imagePullPolicy: IfNotPresent

volumeMounts:

- name: ceph-rbd

mountPath: /mnt

readOnly: false

volumes:

- name: ceph-rbd

persistentVolumeClaim:

claimName: rbd-pvc

[root@xianchaomaster1 ~]# kubectl apply -f pod-sto.yaml

[root@xianchaomaster1 ~]# kubectl get pods -l test=rbd-pod

NAME READY STATUS RESTARTS AGE

ceph-rbd-pod 1/1 Running 0 50s

2.9 k8s挂载cephfs

[root@master1-admin ~]# ceph fs ls

name: xianchao, metadata pool: cephfs_metadata, data pools: [cephfs_data ]

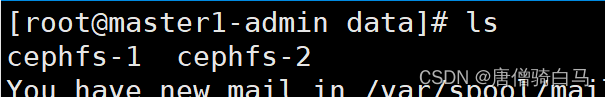

1、创建ceph子目录

为了别的地方能挂载cephfs,先创建一个secretfile

[root@master1-admin ~]# cat /etc/ceph/ceph.client.admin.keyring |grep key|awk -F" " '{print $3}' > /etc/ceph/admin.secret

挂载cephfs的根目录到集群的mon节点下的一个目录,比如xianchao_data,因为挂载后,我们就可以直接在xianchao_data下面用Linux命令创建子目录了。

[root@master1-admin ~]# mkdir ceph_data

[root@master1-admin ~]# mount -t ceph 192.168.75.161:6789:/ /root/ceph_data-o name=admin,secretfile=/etc/ceph/admin.secret

[root@master1-admin ~]# df -h

192.168.40.201:6789:/ 165G 106M 165G 1% /root/xianchao_data

在cephfs的根目录里面创建了一个子目录lucky,k8s以后就可以挂载这个目录

[root@master1-admin ~]# cd /root/ceph_data/

[root@master1-admin ceph_data]# mkdir data

[root@master1-admin ceph_data]# chmod 0777 data/

2、测试k8s的pod挂载cephfs

1)创建k8s连接ceph使用的secret

将/etc/ceph/ceph.client.admin.keyring里面的key的值转换为base64,否则会有问题

[root@master1-admin ceph_data]# echo "AQB/XudjVvj9BBAASX0b1EBKRrXc7tAOYAi0qQ==" | base64

QVFCL1h1ZGpWdmo5QkJBQVNYMGIxRUJLUnJYYzd0QU9ZQWkwcVE9PQo=

[root@master ceph]# cat cephfs-secret.yaml

apiVersion: v1

kind: Secret

metadata:

name: cephfs-secret

data:

key: QVFCL1h1ZGpWdmo5QkJBQVNYMGIxRUJLUnJYYzd0QU9ZQWkwcVE9PQo=

[root@master ceph]# kubectl apply -f cephfs-secret.yaml

[root@master ceph]# cat cephfs-pv.yaml

apiVersion: v1

kind: PersistentVolume

metadata:

name: cephfs-pv

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteMany

cephfs:

monitors:

- 192.168.75.161:6789

path: /data

user: admin

readOnly: false

secretRef:

name: cephfs-secret

persistentVolumeReclaimPolicy: Recycle

[root@master ceph]# kubectl apply -f cephfs-pv.yaml

[root@master ceph]# cat cephfs-pvc.yaml

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: cephfs-pvc

spec:

accessModes:

- ReadWriteMany

volumeName: cephfs-pv

resources:

requests:

storage: 1Gi

[root@master ceph]# kubectl apply -f cephfs-pvc.yaml

[root@master ceph]# kubectl get pvc

NAME STATUS VOLUME CAPACITY

cephfs-pvc Bound cephfs-pv 1Gi RWX

创建第一个pod,挂载cephfs-pvc

[root@master ceph]# cat cephfs-pod-1.yaml

apiVersion: v1

kind: Pod

metadata:

name: cephfs-pod-1

spec:

containers:

- image: nginx

name: nginx

imagePullPolicy: IfNotPresent

volumeMounts:

- name: test-v1

mountPath: /mnt

volumes:

- name: test-v1

persistentVolumeClaim:

claimName: cephfs-pvc

创建第二个pod,挂载cephfs-pvc

[root@master ceph]# cat cephfs-pod-2.yaml

apiVersion: v1

kind: Pod

metadata:

name: cephfs-pod-2

spec:

containers:

- image: nginx

name: nginx

imagePullPolicy: IfNotPresent

volumeMounts:

- name: test-v1

mountPath: /mnt

volumes:

- name: test-v1

persistentVolumeClaim:

claimName: cephfs-pvc

[root@master ceph]# kubectl exec -it cephfs-pod-1 -- /bin/bash

root@cephfs-pod-1:/mnt# touch cephfs-1

[root@master ceph]# kubectl exec -it cephfs-pod-2 -- /bin/bash

root@cephfs-pod-1:/mnt# touch cephfs-2

回到master1-admin上,可以看到在cephfs文件目录下已经存在内容了