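Introduction to Tongyi Qianwen

Tongyi Qianwen (Qwen) is a very large-scale language model released by Alibaba Cloud. Its main strengths and weaknesses are summarized below.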

Advantages

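- Strong foundational capabilities: it covers semantic understanding and extraction, casual chat, multi-turn contextual dialogue, generation and creative writing, knowledge and encyclopedic Q&A, code, logic and reasoning, calculation, and role play. It can continue a novel, draft emails, answer study questions, and generate creative copy. It can also help programmers write, read, debug, and optimize code, with support for more than 200 programming languages.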

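- Multimodal understanding: Tongyi Qianwen 2.0 supports three modes: text answers, image understanding, and document parsing. Users can upload images and documents and ask questions about them. For example, Tongyi Zhiwen reads web pages, papers, books, and documents and produces summaries and overviews, while Tongyi Tingwu processes audio with transcription, translation, and speaker separation.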

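- Model optimization and innovation: the model is built on the Transformer architecture with several modifications: untied input and output embeddings, RoPE positional encoding with the inverse-frequency matrix kept in FP32 precision, biases removed from most layers but added to the QKV layer of attention, and the SwiGLU activation function. These changes improve performance and accuracy. It also extends the context length with simple training-free techniques, including NTK-aware interpolation, dynamic NTK-aware interpolation, LogN-Scaling, and window attention, which lengthen the usable context of the Transformer without compromising computational efficiency or accuracy.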

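- Open source and ecosystem: Alibaba Cloud keeps open-sourcing its models, including language models at several parameter scales (such as Qwen-7B, Qwen-14B, and Qwen-72B) as well as multimodal models. Cumulative downloads exceed 1.5 million, and more than 150 new models and applications have been built on them, which promotes technical exchange and progress in the AI field.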

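- Cost efficiency: the reasoning model QwQ-32B has 32 billion parameters and performs on par with DeepSeek-R1, which activates 37 billion parameters. The smaller parameter count lowers deployment cost and gives the industry a more cost-effective option. It suits applications with strict latency requirements and can significantly reduce compute cost while maintaining output quality.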

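- Security and compliance: Alibaba Cloud provides Tongyi Qianwen with secure, isolated, dedicated data storage and uses server-side encryption to deliver highly secure and compliant data protection, safeguarding user privacy.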
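The training-free context-extension ideas named in the list above (NTK-aware interpolation, its dynamic variant, and LogN-Scaling) can be illustrated with a few lines of plain Python. This is a minimal sketch under stated assumptions, not Qwen's actual implementation: the function names, the default base of 10000, the head dimension of 128, and the simple `seq_len / trained_len` rule for the dynamic scale factor are all chosen here for illustration.

```python
import math


def rope_inv_freq(dim, base=10000.0):
    """Standard RoPE inverse frequencies: base^(-2i/dim) for i in [0, dim/2)."""
    return [base ** (-(2 * i) / dim) for i in range(dim // 2)]


def ntk_scaled_base(dim, alpha, base=10000.0):
    """NTK-aware interpolation: enlarge the RoPE base instead of squeezing positions.

    With this exponent the lowest frequency is divided by alpha while the
    highest frequency stays unchanged.
    """
    return base * alpha ** (dim / (dim - 2))


def dynamic_alpha(seq_len, trained_len):
    """Dynamic variant (assumed rule): scale only when the input exceeds the trained length."""
    return max(1.0, seq_len / trained_len)


def logn_scale(position, trained_len):
    """LogN-Scaling factor for a query at `position` (1.0 inside the trained length)."""
    return math.log(position, trained_len) if position > trained_len else 1.0


if __name__ == "__main__":
    dim, trained_len, seq_len = 128, 2048, 8192  # hypothetical sizes
    alpha = dynamic_alpha(seq_len, trained_len)
    plain = rope_inv_freq(dim)
    scaled = rope_inv_freq(dim, base=ntk_scaled_base(dim, alpha))
    print("alpha:", alpha)
    print("lowest frequency before/after:", plain[-1], scaled[-1])
    print("LogN factor at the last position:", logn_scale(seq_len, trained_len))
```

The design point is that only the base changes, so short-range (high-frequency) components keep their resolution while long-range components are stretched to cover the longer input.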

Disadvantages

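- Room for improvement on specialized tasks: highly specialized, fine-grained tasks may need further optimization and training before results are satisfactory. In complex scientific computing or in-depth analysis in certain professional fields, for example, it may fall short of models built specifically for those domains.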

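- Errors and inaccuracies: despite its strong knowledge understanding and generation abilities, Tongyi Qianwen can, like other large language models, produce wrong or inaccurate information, especially on complex, ambiguous, or rare questions.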

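- Limited multilingual coverage: although the pretraining data spans multiple languages, it is dominated by Chinese and English, so on tasks in low-resource languages the model may lag behind dedicated multilingual models.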

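- Commercialization challenges: putting the open-source models into production means coping with fast technical iteration, strong competition, and unproven fit for specific industries. Fields such as healthcare and finance place very high demands on accuracy and compliance, and how to meet their practical needs still requires further exploration.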

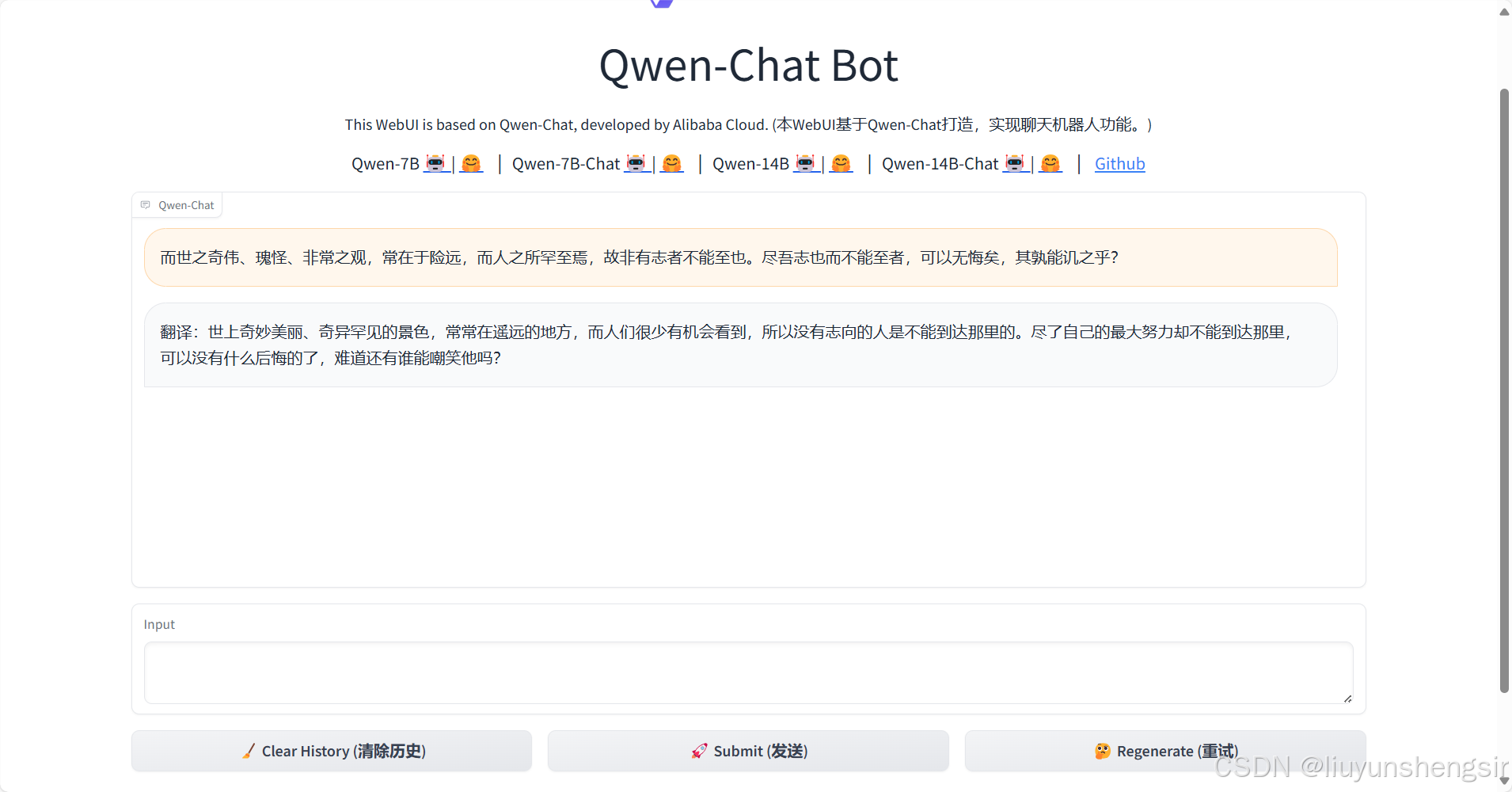

Download the models

git clone https://www.modelscope.cn/models/qwen/Qwen-7B-Chat.git

git clone https://modelscope.cn/models/qwen/Qwen-1_8B-Chat.git

Download the Qwen code

git clone https://github.com/QwenLM/Qwen.git
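As an alternative to `git clone` for the model weights, the ModelScope Python SDK provides `snapshot_download`. The wrapper below is a minimal sketch: the helper name `download_model` and its `cache_dir` default are assumptions made for this example, and it presumes the `modelscope` package is installed and the machine has network access.

```python
def download_model(model_id, cache_dir=None):
    """Download a model snapshot with the ModelScope SDK and return its local directory.

    `download_model` is a hypothetical helper; only `snapshot_download`
    comes from the modelscope package.
    """
    # Imported lazily so this file can be loaded even when modelscope is not installed.
    from modelscope import snapshot_download

    return snapshot_download(model_id, cache_dir=cache_dir)
```

A call such as `download_model("qwen/Qwen-1_8B-Chat", cache_dir="/mnt/workspace/Qwen-7B-Chat")` returns the directory that holds the downloaded files, which can then be used as the checkpoint path for the demo. The exact sub-directory layout under `cache_dir` is decided by the SDK, so use the returned path rather than assuming one.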

Install dependencies and launch

cd /mnt/workspace/Qwen-7B-Chat/Qwen

pip install -r requirements.txt

pip install -r requirements_web_demo.txt

python web_demo.py
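`web_demo.py` also accepts command-line flags (`--checkpoint-path`, `--cpu-only`, `--server-port`, `--server-name`, `--share`, `--inbrowser`), so the checkpoint and port can be changed without editing the file. The snippet below is a small sketch that assembles such a command line; the helper name `build_demo_command` is made up for this example, and the checkpoint path is the one used in this article.

```python
import shlex
import sys


def build_demo_command(checkpoint_path, cpu_only=False, port=8000, host="0.0.0.0"):
    """Return the argv list that launches web_demo.py with the given options."""
    cmd = [
        sys.executable, "web_demo.py",
        "--checkpoint-path", checkpoint_path,
        "--server-port", str(port),
        "--server-name", host,
    ]
    if cpu_only:
        # Forces device_map="cpu" inside the demo.
        cmd.append("--cpu-only")
    return cmd


cmd = build_demo_command("/mnt/workspace/Qwen-7B-Chat/Qwen-1_8B-Chat", cpu_only=True)
print(shlex.join(cmd))
# To actually start the demo from the Qwen directory:
# import subprocess; subprocess.run(cmd, check=True)
```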

web_demo.py code

# Copyright (c) Alibaba Cloud.
#
# This source code is licensed under the license found in the
# LICENSE file in the root directory of this source tree.

"""A simple web interactive chat demo based on gradio."""

import os
from argparse import ArgumentParser

import gradio as gr
import mdtex2html

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer
from transformers.generation import GenerationConfig

# Even 32 GB of RAM was not enough to run the 7B model;
# use a smaller model, or quantize the model before running it.
#DEFAULT_CKPT_PATH = 'Qwen/Qwen-7B-Chat'
DEFAULT_CKPT_PATH = '/mnt/workspace/Qwen-7B-Chat/Qwen-1_8B-Chat'

def _get_args():
    parser = ArgumentParser()
    parser.add_argument("-c", "--checkpoint-path", type=str, default=DEFAULT_CKPT_PATH,
                        help="Checkpoint name or path, default to %(default)r")
    parser.add_argument("--cpu-only", action="store_true", help="Run demo with CPU only")

    parser.add_argument("--share", action="store_true", default=False,
                        help="Create a publicly shareable link for the interface.")
    parser.add_argument("--inbrowser", action="store_true", default=False,
                        help="Automatically launch the interface in a new tab on the default browser.")
    parser.add_argument("--server-port", type=int, default=8000,
                        help="Demo server port.")
    parser.add_argument("--server-name", type=str, default="0.0.0.0",
                        help="Demo server name.")

    args = parser.parse_args()
    return args

def _load_model_tokenizer(args):
    tokenizer = AutoTokenizer.from_pretrained(
        args.checkpoint_path, trust_remote_code=True, resume_download=True,
    )

    # Keep everything on the CPU when requested; otherwise let the library place the weights.
    if args.cpu_only:
        device_map = "cpu"
    else:
        device_map = "auto"

    model = AutoModelForCausalLM.from_pretrained(
        args.checkpoint_path,
        device_map=device_map,
        trust_remote_code=True,
        resume_download=True,
    ).eval()

    config = GenerationConfig.from_pretrained(
        args.checkpoint_path, trust_remote_code=True, resume_download=True,
    )

    return model, tokenizer, config

def postprocess(self, y):
    if y is None:
        return []
    # Render each (message, response) pair from Markdown/TeX to HTML.
    for i, (message, response) in enumerate(y):
        y[i] = (
            None if message is None else mdtex2html.convert(message),
            None if response is None else mdtex2html.convert(response),
        )
    return y


gr.Chatbot.postprocess = postprocess

def _parse_text(text):
    lines = text.split("\n")
    lines = [line for line in lines if line != ""]
    count = 0  # number of ``` fence lines seen so far; odd means "inside a code block"
    for i, line in enumerate(lines):
        if "```" in line:
            count += 1
            items = line.split("`")
            if count % 2 == 1:
                lines[i] = f'<pre><code class="language-{items[-1]}">'
            else:
                lines[i] = f"<br></code></pre>"
        else:
            if i > 0:
                if count % 2 == 1:
                    # Inside a code block: escape characters that HTML or Markdown would interpret.
                    line = line.replace("`", r"\`")
                    line = line.replace("<", "&lt;")
                    line = line.replace(">", "&gt;")
                    line = line.replace(" ", "&nbsp;")
                    line = line.replace("*", "&ast;")
                    line = line.replace("_", "&lowbar;")
                    line = line.replace("-", "&#45;")
                    line = line.replace(".", "&#46;")
                    line = line.replace("!", "&#33;")
                    line = line.replace("(", "&#40;")
                    line = line.replace(")", "&#41;")
                    line = line.replace("$", "&#36;")
                lines[i] = "<br>" + line
    text = "".join(lines)
    return text

def _gc():
    import gc
    gc.collect()
    if torch.cuda.is_available():
        torch.cuda.empty_cache()

def _launch_demo(args, model, tokenizer, config):

    def predict(_query, _chatbot, _task_history):
        print(f"User: {_parse_text(_query)}")
        _chatbot.append((_parse_text(_query), ""))
        full_response = ""

        # Stream partial responses into the last chat entry as they arrive.
        for response in model.chat_stream(tokenizer, _query, history=_task_history, generation_config=config):
            _chatbot[-1] = (_parse_text(_query), _parse_text(response))

            yield _chatbot
            full_response = _parse_text(response)

        print(f"History: {_task_history}")
        _task_history.append((_query, full_response))
        print(f"Qwen-Chat: {_parse_text(full_response)}")

    def regenerate(_chatbot, _task_history):
        if not _task_history:
            yield _chatbot
            return
        # Drop the last turn and ask the same question again.
        item = _task_history.pop(-1)
        _chatbot.pop(-1)
        yield from predict(item[0], _chatbot, _task_history)

    def reset_user_input():
        return gr.update(value="")

    def reset_state(_chatbot, _task_history):
        _task_history.clear()
        _chatbot.clear()
        _gc()
        return _chatbot

    with gr.Blocks() as demo:
        gr.Markdown("""\
<p align="center"><img src="https://qianwen-res.oss-cn-beijing.aliyuncs.com/logo_qwen.jpg" style="height: 80px"/><p>""")
        gr.Markdown("""<center><font size=8>Qwen-Chat Bot</center>""")
        gr.Markdown(
            """\
<center><font size=3>This WebUI is based on Qwen-Chat, developed by Alibaba Cloud. \
(本WebUI基于Qwen-Chat打造,实现聊天机器人功能。)</center>""")
        gr.Markdown("""\
<center><font size=4>
Qwen-7B <a href="https://modelscope.cn/models/qwen/Qwen-7B/summary">🤖 </a> |
<a href="https://huggingface.co/Qwen/Qwen-7B">🤗</a>  |
Qwen-7B-Chat <a href="https://modelscope.cn/models/qwen/Qwen-7B-Chat/summary">🤖 </a> |
<a href="https://huggingface.co/Qwen/Qwen-7B-Chat">🤗</a>  |
Qwen-14B <a href="https://modelscope.cn/models/qwen/Qwen-14B/summary">🤖 </a> |
<a href="https://huggingface.co/Qwen/Qwen-14B">🤗</a>  |
Qwen-14B-Chat <a href="https://modelscope.cn/models/qwen/Qwen-14B-Chat/summary">🤖 </a> |
<a href="https://huggingface.co/Qwen/Qwen-14B-Chat">🤗</a>  |
<a href="https://github.com/QwenLM/Qwen">Github</a></center>""")

        chatbot = gr.Chatbot(label='Qwen-Chat', elem_classes="control-height")
        query = gr.Textbox(lines=2, label='Input')
        task_history = gr.State([])

        with gr.Row():
            empty_btn = gr.Button("🧹 Clear History (清除历史)")
            submit_btn = gr.Button("🚀 Submit (发送)")
            regen_btn = gr.Button("🤔️ Regenerate (重试)")

        submit_btn.click(predict, [query, chatbot, task_history], [chatbot], show_progress=True)
        submit_btn.click(reset_user_input, [], [query])
        empty_btn.click(reset_state, [chatbot, task_history], outputs=[chatbot], show_progress=True)
        regen_btn.click(regenerate, [chatbot, task_history], [chatbot], show_progress=True)

        gr.Markdown("""\
<font size=2>Note: This demo is governed by the original license of Qwen. \
We strongly advise users not to knowingly generate or allow others to knowingly generate harmful content, \
including hate speech, violence, pornography, deception, etc. \
(注:本演示受Qwen的许可协议限制。我们强烈建议,用户不应传播及不应允许他人传播以下内容,\
包括但不限于仇恨言论、暴力、色情、欺诈相关的有害信息。)""")

    demo.queue().launch(
        share=args.share,
        inbrowser=args.inbrowser,
        server_port=args.server_port,
        server_name=args.server_name,
    )

def main():
    args = _get_args()

    model, tokenizer, config = _load_model_tokenizer(args)

    _launch_demo(args, model, tokenizer, config)


if __name__ == '__main__':
    main()
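
The comment next to `DEFAULT_CKPT_PATH` says the 7B model would not run in 32 GB of memory. A rough estimate of the memory taken by the weights alone makes this plausible. The sketch below is back-of-the-envelope arithmetic under assumptions: nominal parameter counts of 7 billion and 1.8 billion, weights held entirely in one data type, and no allowance for activations, the KV cache, or temporary copies made during loading. The helper name and the byte sizes per type are illustrative, not measured values.

```python
# Approximate bytes needed to store one parameter in each format (assumed values).
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params, dtype):
    """Memory for the weights only, in GiB, ignoring activations and load-time overhead."""
    return num_params * BYTES_PER_PARAM[dtype] / 2 ** 30


# Nominal parameter counts; the real checkpoints differ slightly.
models = {"Qwen-7B-Chat": 7e9, "Qwen-1_8B-Chat": 1.8e9}

for name, params in models.items():
    for dtype in ("fp32", "bf16", "int4"):
        print(f"{name:>15} {dtype:>5}: {weight_memory_gib(params, dtype):6.2f} GiB")
```

If the 7B weights end up in fp32, they already need roughly 26 GiB before anything else is allocated, which leaves little headroom on a 32 GB machine. The 1.8B model, or a quantized 7B model, fits comfortably.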
