arXiv-2019

https://github.com/dmlc/gluon-cv

Table of Contents

- 1 Background and Motivation

- 2 Related Work

- 3 Advantages / Contributions

- 4 Method

- 4.1 Visually Coherent Image Mixup for Object Detection

- 4.2 Classification Head Label Smoothing

- 4.3 Data Preprocessing

- 4.4 Training Schedule Revamping

- 4.5 Synchronized Batch Normalization

- 4.6 Random shapes training for single-stage object detection networks

- 5 Experiments

- 5.1 Datasets and Metrics

- 5.2 Incremental trick evaluation on Pascal VOC

- 5.3 Bag of Freebies on MS COCO

- 5.4 Impact of mixup on different phases of training detection network

- 6 Conclusion(own) / Future work

1 Background and Motivation

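Image classification already has [BoT] "Bag of Tricks for Image Classification with Convolutional Neural Networks" (CVPR-2019). Object detection is more complex than image classification, so the authors borrow and consolidate a bag of freebies for detection: training-time changes that add no inference cost and bring a clear accuracy gain.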

2 Related Work

- Scattering tricks from Image Classification

  - Learning rate warmup

  - Label smoothing

  - mixup

  - Cosine annealing strategy

- Deep Object Detection Pipelines

  - one stage

  - two stage

3 Advantages / Contributions

The paper collects a bag of freebies for object detection (and proposes a visually coherent image mixup method), improving YOLOv3 by 5 points on the COCO dataset.

4 Method

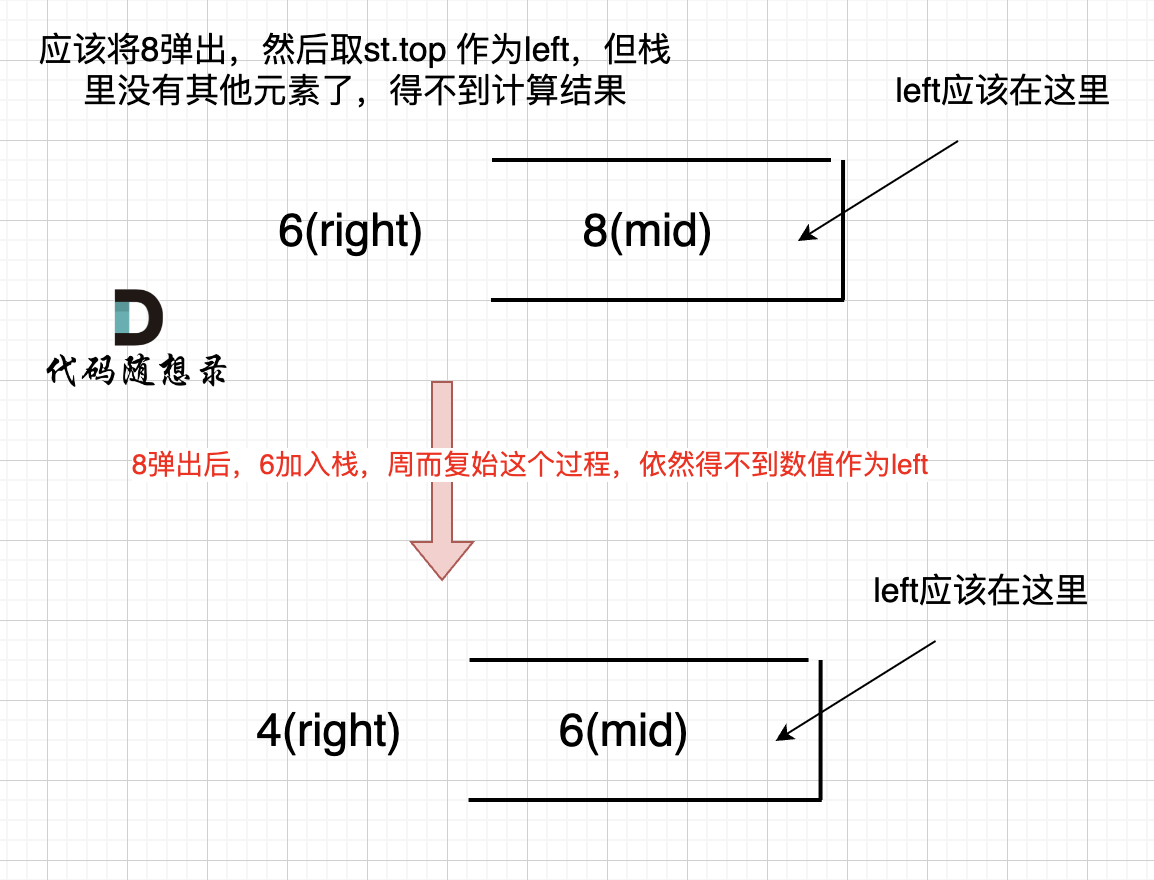

4.1 Visually Coherent Image Mixup for Object Detection

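The original [Mixup] "mixup: Beyond Empirical Risk Minimization" (ICLR-2018) was applied to classification.

There the Beta distribution uses $\alpha=\beta=0.5$, which gives fairly extreme mixing ratios: the blend is essentially either A or B.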

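Applying this kind of Beta distribution to object detection gives the result below

The elephant pasted into the scene is easily missed by the detector

When applying mixup to object detection, the authors change the Beta parameters to $\alpha=\beta=1.5$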
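To make the difference between the two Beta settings concrete, here is a minimal sketch that estimates how often the sampled mixing ratio lands near 0 or 1. The helper name `extreme_fraction`, the 0.1 margin, and the sample count are illustrative assumptions, not something taken from the paper.

```python
import random


def extreme_fraction(alpha, beta, n=20000, margin=0.1, seed=0):
    """Estimate P(lam < margin or lam > 1 - margin) for lam ~ Beta(alpha, beta)."""
    rng = random.Random(seed)  # fixed seed so the estimate is repeatable
    hits = 0
    for _ in range(n):
        lam = rng.betavariate(alpha, beta)
        if lam < margin or lam > 1.0 - margin:
            hits += 1
    return hits / n


if __name__ == "__main__":
    # U-shaped Beta(0.5, 0.5): most of the mass sits near 0 and 1.
    print("Beta(0.5, 0.5):", extreme_fraction(0.5, 0.5))
    # Bell-shaped Beta(1.5, 1.5): ratios concentrate around 0.5.
    print("Beta(1.5, 1.5):", extreme_fraction(1.5, 1.5))
```

Analytically, roughly 41% of Beta(0.5, 0.5) samples fall within 0.1 of either end, against roughly 10% for Beta(1.5, 1.5), which is why the first setting behaves as "either A or B".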

The two images are blended more thoroughly; the authors describe this kind of blend as follows

similar to the transition frames commonly observed when we are watching low FPS movies or surveillance videos.

The blended result looks like this

networks are encouraged to observe unusual crowded patches
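The mixup step for detection can be sketched roughly as below. This is a sketch under stated assumptions, not the authors' exact implementation: the function name `mixup_detection`, the top-left-aligned zero padding to the larger canvas (so neither image is distorted), and the extra per-box weight column are all illustrative choices.

```python
import numpy as np


def mixup_detection(img_a, boxes_a, img_b, boxes_b, alpha=1.5, beta=1.5, rng=None):
    """Blend two HxWxC images and merge their box lists.

    boxes_* are float arrays of shape (N, 5): xmin, ymin, xmax, ymax, class_id.
    Returns the mixed image, an (Na + Nb, 6) label array whose last column is
    a per-box weight (assumption: usable to weight the loss), and the ratio.
    """
    rng = np.random.default_rng() if rng is None else rng
    lam = float(rng.beta(alpha, beta))

    # Canvas large enough for both images; no resizing, so geometry is preserved.
    height = max(img_a.shape[0], img_b.shape[0])
    width = max(img_a.shape[1], img_b.shape[1])
    mixed = np.zeros((height, width, img_a.shape[2]), dtype=np.float32)
    mixed[:img_a.shape[0], :img_a.shape[1]] += lam * img_a
    mixed[:img_b.shape[0], :img_b.shape[1]] += (1.0 - lam) * img_b

    # Box coordinates stay valid because both images are anchored at the top-left.
    weights_a = np.full((len(boxes_a), 1), lam, dtype=np.float32)
    weights_b = np.full((len(boxes_b), 1), 1.0 - lam, dtype=np.float32)
    labels = np.vstack([np.hstack([boxes_a, weights_a]),
                        np.hstack([boxes_b, weights_b])])
    return mixed, labels, lam
```

Because every box from both images is kept, the mixed sample naturally contains the crowded, overlapping objects that the quote above refers to.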

4.2 Classification Head Label Smoothing

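Standard label smoothing, applied to the classification branch, from [Inception-v3] "Rethinking the Inception Architecture for Computer Vision" (CVPR-2016)

The one-hot label distribution (drawback: "This encourages the model to be too confident") is replaced by the smoothed distribution from that paper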
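The usual smoothing rule with a uniform prior is $q'_i = (1-\varepsilon)\,q_i + \varepsilon / K$ for $K$ classes. A minimal sketch follows; the function name `smooth_labels` and the default $\varepsilon = 0.1$ are assumptions chosen for illustration.

```python
def smooth_labels(one_hot, epsilon=0.1):
    """Apply q'_i = (1 - epsilon) * q_i + epsilon / K to a one-hot target."""
    num_classes = len(one_hot)
    return [(1.0 - epsilon) * q + epsilon / num_classes for q in one_hot]


if __name__ == "__main__":
    # The true class keeps most of the mass; the rest is spread uniformly.
    print(smooth_labels([0.0, 1.0, 0.0, 0.0]))
```

The smoothed target still sums to 1, but the true class no longer demands a probability of exactly 1, which is what reduces over-confidence.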

4.3 Data Preprocessing

(1) Random geometry transformation

- random cropping (with constraints)

- random expansion

- random horizontal flip

- random resize (with random interpolation)

Compared with one-stage detectors, two-stage detectors add RoI pooling and the stages after it, so for two-stage training the authors "do not use random cropping techniques during data augmentation."
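What separates detection from classification augmentation is that every geometric transform must also be applied to the boxes. As one small instance, here is a sketch of a random horizontal flip on box coordinates; the function name, the `(xmin, ymin, xmax, ymax)` layout, and the default probability are assumptions for illustration.

```python
import random


def random_horizontal_flip(image_width, boxes, p=0.5, rng=random):
    """Flip boxes horizontally with probability p.

    boxes: list of (xmin, ymin, xmax, ymax) tuples in pixel coordinates.
    Returns (was_flipped, new_boxes); the caller mirrors the image if flipped.
    """
    if rng.random() >= p:
        return False, list(boxes)
    # Mirroring swaps the roles of xmin and xmax around the image width.
    flipped = [(image_width - xmax, ymin, image_width - xmin, ymax)
               for (xmin, ymin, xmax, ymax) in boxes]
    return True, flipped
```

Cropping and expansion follow the same pattern, with the extra step of discarding or clipping boxes that fall outside the new canvas.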

(2) Random color jittering

- brightness

- hue

- saturation

- contrast

4.4 Training Schedule Revamping

Drawback of the traditional step learning rate schedule:

Step schedule has sharp learning rate transition which may cause the optimizer to re-stabilize the learning momentum in the next few iterations.

The authors instead use a cosine learning rate schedule (the higher frequency of learning rate adjustment) plus warmup (avoid gradient explosion during the initial training iterations.)
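A cosine schedule with warmup can be sketched as follows. The linear shape of the warmup, the function name `learning_rate`, and the example numbers are assumptions; the paper's exact hyperparameters are not reproduced here.

```python
import math


def learning_rate(step, total_steps, base_lr, warmup_steps):
    """Linear warmup to base_lr, then cosine decay to zero."""
    if step < warmup_steps:
        # Ramp up gradually to avoid unstable gradients at the start.
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    for step in (0, 9, 10, 55, 100):
        print(step, learning_rate(step, total_steps=100, base_lr=0.1, warmup_steps=10))
```

Unlike a step schedule, the rate changes a little at every iteration, so there is no abrupt drop for the optimizer to recover from.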

4.5 Synchronized Batch Normalization

Batch normalization statistics are synchronized across machines (synchronized batch normalization) when training object detectors
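The idea behind synchronization can be illustrated without any framework: each device contributes its count, sum, and sum of squares, and the mean and variance are computed from the combined totals rather than per device. The function below is a toy sketch with a hypothetical name, working on plain lists for a single channel.

```python
def synchronized_batch_stats(per_device_values):
    """Compute one mean and variance over values held by several devices.

    per_device_values: list of lists, one inner list per device.
    In a real system only the three partial sums would be communicated.
    """
    count = sum(len(values) for values in per_device_values)
    total = sum(sum(values) for values in per_device_values)
    total_sq = sum(sum(x * x for x in values) for values in per_device_values)
    mean = total / count
    variance = total_sq / count - mean * mean  # E[x^2] - (E[x])^2
    return mean, variance


if __name__ == "__main__":
    # Two devices with tiny per-device batches, as is common in detection.
    print(synchronized_batch_stats([[1.0, 2.0], [3.0, 4.0]]))
```

With small per-device batches, the per-device means here (1.5 and 3.5) differ noticeably from the global mean, which is the situation synchronization is meant to address.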

4.6 Random shapes training for single-stage object detection networks

$H = W \in \{320, 352, 384, 416, 448, 480, 512, 544, 576, 608\}$
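A minimal sketch of picking a training resolution from this set is shown below. The names `TRAINING_SHAPES` and `pick_training_shape` are hypothetical, and choosing one shape per mini-batch is an assumption about how the sampling is scheduled.

```python
import random

# Square input sizes from 320 to 608 in steps of 32.
TRAINING_SHAPES = list(range(320, 609, 32))


def pick_training_shape(rng=random):
    """Return (height, width) for the next mini-batch."""
    side = rng.choice(TRAINING_SHAPES)
    return side, side


if __name__ == "__main__":
    rng = random.Random(0)
    for batch_index in range(3):
        # Every image in the batch would be resized to this shape.
        print(batch_index, pick_training_shape(rng))
```

All sizes are multiples of 32, so the feature maps of a network with a total stride of 32 stay integer-sized at every resolution.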

5 Experiments

- YOLOv3

- Faster R-CNN

5.1 Datasets and Metrics

- PASCAL VOC

  Pascal VOC 2007 trainval and 2012 trainval for training and 2007 test set for validation.

- COCO

5.2 Incremental trick evaluation on Pascal VOC

Gains from the improved mixup

Gains from the other bag of freebies

One-stage detectors clearly depend more on data augmentation

In two-stage detectors, "sampling based proposals can effectively replace random cropping", so they depend less on data augmentation

5.3 Bag of Freebies on MS COCO

The gain for YOLOv3 is substantial

Across all categories, nearly every entry is red, i.e. improved

5.4 Impact of mixup on different phases of training detection network

mixup is involved in two places

- pre-training classification network backbone with traditional mixup

- training detection networks using proposed visually coherent image mixup for object detection

Using mixup in both pre-training and detection training gives the largest improvement

The authors' explanation

We expect by applying mixup in both training phases, shallow layers of networks are receiving statistically similar inputs, resulting in less perturbations for low level filters.

6 Conclusion(own) / Future work

- Rosenfeld A, Zemel R, Tsotsos J K. The elephant in the room[J]. arXiv preprint arXiv:1808.03305, 2018.

- a large amount of anchor size(up to 30k) is effectively contributing to batch size implicitly
