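Contents

Defining and Implementing a Grouped Convolution Class in MindSpore

Defining the ShuffleV1Block Class and Its Data Processing in MindSpore

Defining and Building the ShuffleNetV1 Network in MindSpore

Downloading, Preprocessing, and Batching the Cifar-10 Dataset

Configuring and Running ShuffleNetV1 Training on CPU

Testing and Evaluating the ShuffleNetV1 Model on CPU

Visualizing ShuffleNetV1 Inference Results on the Cifar10 Dataset

Defining and Implementing a Grouped Convolution Class in MindSpore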

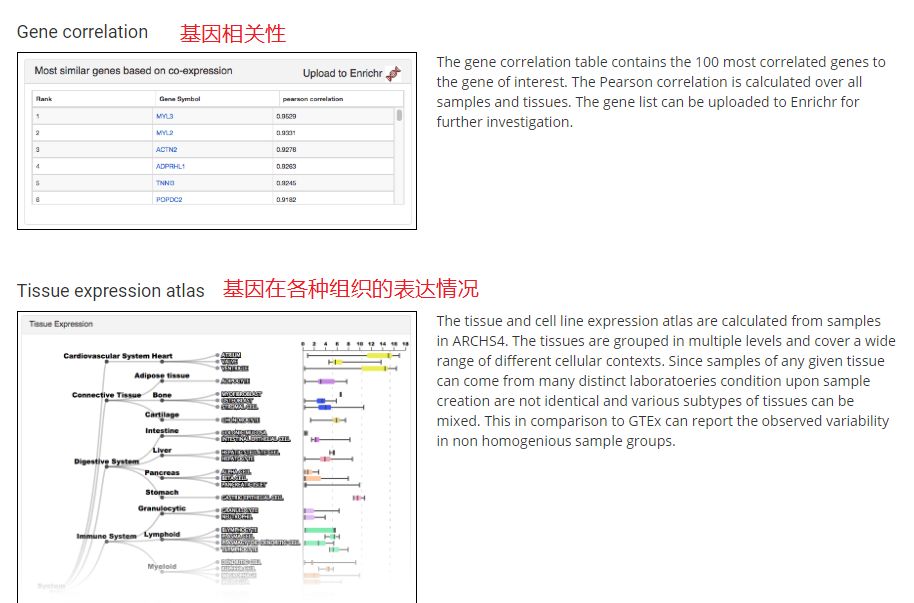

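This section defines a class named GroupConv that implements grouped convolution. First, pip commands make sure that a specific version of the mindspore library is installed. The __init__ method of GroupConv takes the number of input channels, the number of output channels, the kernel size, the stride, the padding mode, the padding value, the number of groups, and a flag for whether to use a bias. It creates an nn.CellList that holds one convolution layer per group. The construct method splits the input features along the channel axis into as many chunks as there are groups, passes each chunk through its group's convolution layer, and concatenates the results before returning them.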
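As a rough illustration of the split described above, the following standalone pure-Python sketch (it does not use MindSpore) shows which channel range each group would handle and how grouping changes the number of convolution weights. The helper names `split_channels` and `grouped_conv_weight_count` are hypothetical and exist only for this example.

```python
def split_channels(num_channels, groups):
    """Return the (start, stop) channel range handled by each group."""
    if num_channels % groups != 0:
        raise ValueError("num_channels must be divisible by groups")
    size = num_channels // groups
    return [(g * size, (g + 1) * size) for g in range(groups)]


def grouped_conv_weight_count(in_channels, out_channels, kernel_size, groups):
    """Count weights (no bias) when each group has its own small convolution."""
    per_group = (in_channels // groups) * (out_channels // groups) * kernel_size ** 2
    return groups * per_group


print(split_channels(12, 3))                      # [(0, 4), (4, 8), (8, 12)]
print(grouped_conv_weight_count(240, 240, 1, 1))  # 57600
print(grouped_conv_weight_count(240, 240, 1, 3))  # 19200
```

With three groups, each 1x1 convolution only sees a third of the input channels and produces a third of the output channels, so the weight count drops by a factor of three.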

The code is as follows:

%%capture captured_output
# The lab environment ships with mindspore==2.3.0rc1; to use a different version, change the version number below
!pip uninstall mindspore -y
!pip install -i https://pypi.mirrors.ustc.edu.cn/simple mindspore==2.3.0rc1
# Show the installed mindspore version
!pip show mindspore

from mindspore import nn
import mindspore.ops as ops
from mindspore import Tensor

class GroupConv(nn.Cell):
    def __init__(self, in_channels, out_channels, kernel_size,
                 stride, pad_mode="pad", pad=0, groups=1, has_bias=False):
        super(GroupConv, self).__init__()
        self.groups = groups
        # One independent convolution per group
        self.convs = nn.CellList()
        for _ in range(groups):
            self.convs.append(nn.Conv2d(in_channels // groups, out_channels // groups,
                                        kernel_size=kernel_size, stride=stride, has_bias=has_bias,
                                        padding=pad, pad_mode=pad_mode, group=1, weight_init='xavier_uniform'))

    def construct(self, x):
        # Split the input along the channel axis into `groups` equal chunks
        features = ops.split(x, split_size_or_sections=int(len(x[0]) // self.groups), axis=1)
        outputs = ()
        for i in range(self.groups):
            outputs = outputs + (self.convs[i](features[i].astype("float32")),)
        # Concatenate the per-group results back along the channel axis
        out = ops.cat(outputs, axis=1)
        return out

Defining the ShuffleV1Block Class and Its Data Processing in MindSpore

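This section defines a class named ShuffleV1Block, which inherits from nn.Cell. Its __init__ method takes several parameters, including the number of input channels inp, the number of output channels oup, and the number of groups group, and computes the number of channels the main branch must output depending on the stride. It also sets up the activation function and the convolution and batch normalization layers. Two sequential structures, branch_main_1 and branch_main_2, define the main branch. The construct method handles the input according to the stride: it runs the right branch through a series of operations, applies channel shuffle when group is greater than 1, then either adds the two branches element-wise (stride 1) or concatenates them (stride 2), and finally applies the activation function. The channel_shuffle method implements the channel shuffle.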
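To make the channel shuffle and the stride-dependent channel count easier to follow, here is a small pure-Python sketch that works on channel indices only. It mirrors the reshape to (group_channels, group), transpose, and flatten sequence used by channel_shuffle in this article; the helper names `channel_shuffle_order` and `main_branch_channels` are hypothetical and exist only for illustration.

```python
def channel_shuffle_order(num_channels, group):
    """For each output position, return the index of the input channel placed there."""
    if num_channels % group != 0:
        raise ValueError("num_channels must be divisible by group")
    group_channels = num_channels // group
    # View the channel indices as a (group_channels, group) grid, filled row by row.
    grid = [[row * group + col for col in range(group)]
            for row in range(group_channels)]
    # Transpose the grid and read it back row by row.
    return [grid[row][col] for col in range(group) for row in range(group_channels)]


def main_branch_channels(inp, oup, stride):
    """Channels the main branch must emit so that the block outputs `oup` channels."""
    # With stride 2 the pooled shortcut (inp channels) is concatenated to the main branch.
    return oup - inp if stride == 2 else oup


print(channel_shuffle_order(6, 3))        # [0, 3, 1, 4, 2, 5]
print(main_branch_channels(48, 480, 2))   # 432
print(main_branch_channels(480, 480, 1))  # 480
```

After the shuffle, neighbouring output channels come from different positions of the input, so the next grouped convolution mixes information that earlier groups kept separate.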

The code is as follows:

class ShuffleV1Block(nn.Cell):
    def __init__(self, inp, oup, group, first_group, mid_channels, ksize, stride):
        super(ShuffleV1Block, self).__init__()
        self.stride = stride
        pad = ksize // 2
        self.group = group
        # With stride 2 the shortcut is concatenated, so the main branch only adds oup - inp channels
        if stride == 2:
            outputs = oup - inp
        else:
            outputs = oup
        self.relu = nn.ReLU()
        # 1x1 grouped convolution that reduces the channel count
        branch_main_1 = [
            GroupConv(in_channels=inp, out_channels=mid_channels,
                      kernel_size=1, stride=1, pad_mode="pad", pad=0,
                      groups=1 if first_group else group),
            nn.BatchNorm2d(mid_channels),
            nn.ReLU(),
        ]
        # Depthwise convolution followed by a 1x1 grouped convolution
        branch_main_2 = [
            nn.Conv2d(mid_channels, mid_channels, kernel_size=ksize, stride=stride,
                      pad_mode='pad', padding=pad, group=mid_channels,
                      weight_init='xavier_uniform', has_bias=False),
            nn.BatchNorm2d(mid_channels),
            GroupConv(in_channels=mid_channels, out_channels=outputs,
                      kernel_size=1, stride=1, pad_mode="pad", pad=0,
                      groups=group),
            nn.BatchNorm2d(outputs),
        ]
        self.branch_main_1 = nn.SequentialCell(branch_main_1)
        self.branch_main_2 = nn.SequentialCell(branch_main_2)
        if stride == 2:
            self.branch_proj = nn.AvgPool2d(kernel_size=3, stride=2, pad_mode='same')

    def construct(self, old_x):
        left = old_x
        right = old_x
        out = old_x
        right = self.branch_main_1(right)
        if self.group > 1:
            right = self.channel_shuffle(right)
        right = self.branch_main_2(right)
        if self.stride == 1:
            # Residual connection: element-wise addition
            out = self.relu(left + right)
        elif self.stride == 2:
            # Downsampling block: pool the shortcut and concatenate along channels
            left = self.branch_proj(left)
            out = ops.cat((left, right), 1)
            out = self.relu(out)
        return out

    def channel_shuffle(self, x):
        batchsize, num_channels, height, width = ops.shape(x)
        group_channels = num_channels // self.group
        x = ops.reshape(x, (batchsize, group_channels, self.group, height, width))
        x = ops.transpose(x, (0, 2, 1, 3, 4))
        x = ops.reshape(x, (batchsize, num_channels, height, width))
        return x

Defining and Building the ShuffleNetV1 Network in MindSpore

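This section defines a class named ShuffleNetV1, which inherits from nn.Cell. The __init__ method sets the output channel counts of each stage according to the given model size model_size and number of groups group. It then defines the initial convolution and pooling operations and the feature extraction module. A loop builds the ShuffleV1Block instances that make up the feature extractor, setting each block's parameters according to its stage and its position within the stage. Finally, it defines global average pooling and a fully connected classifier. The construct method applies, in order, the initial convolution, max pooling, feature extraction, global pooling, reshaping, and classification, and returns the classification result.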
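The block-building loop can be previewed without MindSpore. The sketch below reproduces only the bookkeeping (channel counts, stride, and the first_group flag) for the `2.0x`, `group=3` configuration used later in this article; `block_plan` is a hypothetical helper written for this example, not part of the network code.

```python
def block_plan(stage_out_channels, stage_repeats=(4, 8, 4)):
    """List the arguments each ShuffleV1Block would receive, in order."""
    plan = []
    input_channel = stage_out_channels[1]
    for idxstage, numrepeat in enumerate(stage_repeats):
        output_channel = stage_out_channels[idxstage + 2]
        for i in range(numrepeat):
            plan.append({
                "inp": input_channel,
                "oup": output_channel,
                "mid_channels": output_channel // 4,
                "stride": 2 if i == 0 else 1,           # first block of a stage downsamples
                "first_group": idxstage == 0 and i == 0,
            })
            input_channel = output_channel
    return plan


plan = block_plan([-1, 48, 480, 960, 1920])  # 2.0x model, group=3
print(len(plan))  # 16
print(plan[0])
print(plan[4])
```

Only the very first block has first_group set, and each stage opens with a single stride-2 block followed by stride-1 blocks whose input and output channel counts match.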

The code is as follows:

class ShuffleNetV1(nn.Cell):
    def __init__(self, n_class=1000, model_size='2.0x', group=3):
        super(ShuffleNetV1, self).__init__()
        print('model size is ', model_size)
        self.stage_repeats = [4, 8, 4]
        self.model_size = model_size
        # Output channels per stage; index 0 is an unused placeholder
        if group == 3:
            if model_size == '0.5x':
                self.stage_out_channels = [-1, 12, 120, 240, 480]
            elif model_size == '1.0x':
                self.stage_out_channels = [-1, 24, 240, 480, 960]
            elif model_size == '1.5x':
                self.stage_out_channels = [-1, 24, 360, 720, 1440]
            elif model_size == '2.0x':
                self.stage_out_channels = [-1, 48, 480, 960, 1920]
            else:
                raise NotImplementedError
        elif group == 8:
            if model_size == '0.5x':
                self.stage_out_channels = [-1, 16, 192, 384, 768]
            elif model_size == '1.0x':
                self.stage_out_channels = [-1, 24, 384, 768, 1536]
            elif model_size == '1.5x':
                self.stage_out_channels = [-1, 24, 576, 1152, 2304]
            elif model_size == '2.0x':
                self.stage_out_channels = [-1, 48, 768, 1536, 3072]
            else:
                raise NotImplementedError
        else:
            # Only group=3 and group=8 have channel configurations defined above
            raise NotImplementedError
        input_channel = self.stage_out_channels[1]
        self.first_conv = nn.SequentialCell(
            nn.Conv2d(3, input_channel, 3, 2, 'pad', 1, weight_init='xavier_uniform', has_bias=False),
            nn.BatchNorm2d(input_channel),
            nn.ReLU(),
        )
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, pad_mode='same')
        features = []
        for idxstage in range(len(self.stage_repeats)):
            numrepeat = self.stage_repeats[idxstage]
            output_channel = self.stage_out_channels[idxstage + 2]
            for i in range(numrepeat):
                # The first block of each stage downsamples
                stride = 2 if i == 0 else 1
                first_group = idxstage == 0 and i == 0
                features.append(ShuffleV1Block(input_channel, output_channel,
                                               group=group, first_group=first_group,
                                               mid_channels=output_channel // 4, ksize=3, stride=stride))
                input_channel = output_channel
        self.features = nn.SequentialCell(features)
        self.globalpool = nn.AvgPool2d(7)
        self.classifier = nn.Dense(self.stage_out_channels[-1], n_class)

    def construct(self, x):
        x = self.first_conv(x)
        x = self.maxpool(x)
        x = self.features(x)
        x = self.globalpool(x)
        x = ops.reshape(x, (-1, self.stage_out_channels[-1]))
        x = self.classifier(x)
        return x
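The network above ends with a 7x7 average pooling layer, which constrains the input resolution. The following pure-Python sketch traces the feature-map height (or width) through the layers defined above, assuming the usual output-size formulas for 'pad' convolutions and 'same' pooling; `trace_spatial_sizes` and its helpers are hypothetical names used only here.

```python
import math


def conv_out(size, kernel, stride, pad):
    """Output size of a convolution with explicit symmetric padding."""
    return (size + 2 * pad - kernel) // stride + 1


def same_pool_out(size, stride):
    """Output size of a pooling layer in 'same' padding mode."""
    return math.ceil(size / stride)


def trace_spatial_sizes(input_size, num_stages=3):
    """Sizes after: input, first_conv, maxpool, and each stage's stride-2 block."""
    sizes = [input_size]
    sizes.append(conv_out(sizes[-1], kernel=3, stride=2, pad=1))  # first_conv
    sizes.append(same_pool_out(sizes[-1], stride=2))              # maxpool
    for _ in range(num_stages):
        # Only the first block of a stage changes the resolution (3x3 depthwise, stride 2).
        sizes.append(conv_out(sizes[-1], kernel=3, stride=2, pad=1))
    return sizes


print(trace_spatial_sizes(224))  # [224, 112, 56, 28, 14, 7]
print(trace_spatial_sizes(32))   # [32, 16, 8, 4, 2, 1]
```

A 224x224 input arrives at the global pooling layer as a 7x7 map, which matches nn.AvgPool2d(7). A raw 32x32 Cifar-10 image would shrink to 1x1, smaller than the pooling window, which is one reason to resize the images to 224x224 during preprocessing.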

Downloading, Preprocessing, and Batching the Cifar-10 Dataset

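The code first downloads a compressed dataset archive from the given URL. It then defines a function get_dataset that loads the Cifar10 dataset. Depending on the usage (training or testing), it applies different preprocessing to the images, including random cropping, horizontal flipping, resizing, rescaling, normalization, and layout conversion, and casts the labels to a different type. It loads the data with the Cifar10Dataset class, maps the image and label transforms onto the corresponding columns, and batches the dataset with the given batch size. Finally, it calls get_dataset to obtain the training dataset and computes the number of batches per epoch.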
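Two pieces of arithmetic in this pipeline are easy to check by hand. The pure-Python sketch below applies the rescale and normalize steps to a single RGB pixel, using the mean and standard deviation values from this article, and computes the number of batches for a given sample count. `rescale_and_normalize` and `count_batches` are hypothetical helpers, not MindSpore APIs.

```python
MEAN = (0.4914, 0.4822, 0.4465)
STD = (0.2023, 0.1994, 0.2010)


def rescale_and_normalize(pixel):
    """Map one 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return tuple((value / 255.0 - mean) / std
                 for value, mean, std in zip(pixel, MEAN, STD))


def count_batches(num_samples, batch_size, drop_remainder=True):
    """Number of batches per epoch, with or without the last partial batch."""
    if drop_remainder:
        return num_samples // batch_size
    return -(-num_samples // batch_size)  # ceiling division


print(rescale_and_normalize((0, 0, 0)))
print(rescale_and_normalize((255, 255, 255)))
print(count_batches(2000, 4))  # 500
```

With 2000 samples and a batch size of 4, one epoch has 500 batches, which is consistent with the checkpoint file name shufflenetv1-1_500.ckpt used later in this article.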

The code is as follows:

from download import download

url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/cifar-10-binary.tar.gz"
download(url, "./dataset", kind="tar.gz", replace=True)

import mindspore as ms
from mindspore.dataset import Cifar10Dataset
from mindspore.dataset import vision, transforms

def get_dataset(train_dataset_path, batch_size, usage):
    image_trans = []
    if usage == "train":
        # Training: augment, then resize, rescale, normalize, and convert to CHW
        image_trans = [
            vision.RandomCrop((32, 32), (4, 4, 4, 4)),
            vision.RandomHorizontalFlip(prob=0.5),
            vision.Resize((224, 224)),
            vision.Rescale(1.0 / 255.0, 0.0),
            vision.Normalize([0.4914, 0.4822, 0.4465], [0.2023, 0.1994, 0.2010]),
            vision.HWC2CHW()
        ]
    elif usage == "test":
        # Testing: no augmentation
        image_trans = [
            vision.Resize((224, 224)),
            vision.Rescale(1.0 / 255.0, 0.0),
            vision.Normalize([0.4914, 0.4822, 0.4465], [0.2023, 0.1994, 0.2010]),
            vision.HWC2CHW()
        ]
    label_trans = transforms.TypeCast(ms.int32)
    # Only 2000 samples are loaded to keep the CPU run short
    dataset = Cifar10Dataset(train_dataset_path, usage=usage, shuffle=True, num_samples=2000)
    dataset = dataset.map(image_trans, 'image')
    dataset = dataset.map(label_trans, 'label')
    dataset = dataset.batch(batch_size, drop_remainder=True)
    return dataset

dataset = get_dataset("./dataset/cifar-10-batches-bin", 4, "train")
batches_per_epoch = dataset.get_dataset_size()

Output:

Configuring and Running ShuffleNetV1 Training on CPU

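This section defines a function named train that trains a ShuffleNetV1 model. It first sets the execution mode to PYNATIVE_MODE and selects the CPU as the device. It then defines the cross-entropy loss function, the learning rate schedule, the optimizer, and the loss scale manager. It builds a Model object and configures several callbacks: time monitoring, loss monitoring, and checkpoint saving. Finally, it prints a message that training is starting, trains the model, and computes and prints the total training time.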
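The learning rate schedule can be inspected without running MindSpore. The sketch below is a pure-Python reimplementation that assumes the per-epoch cosine formula given in the MindSpore documentation for cosine_decay_lr; `cosine_decay_schedule` is a hypothetical stand-in, and the value 500 for the batches per epoch assumes 2000 samples with a batch size of 4, as in this article.

```python
import math


def cosine_decay_schedule(min_lr, max_lr, total_step, step_per_epoch, decay_epoch):
    """Per-step learning rates; the value changes once per epoch."""
    schedule = []
    for step in range(total_step):
        current_epoch = min(step // step_per_epoch, decay_epoch)
        cosine = 1 + math.cos(math.pi * current_epoch / decay_epoch)
        schedule.append(min_lr + 0.5 * (max_lr - min_lr) * cosine)
    return schedule


batches_per_epoch = 2000 // 4  # assumption: 2000 samples, batch size 4
schedule = cosine_decay_schedule(0.0005, 0.05, batches_per_epoch * 2,
                                 batches_per_epoch, decay_epoch=2)
print(len(schedule))            # 1000
print(round(schedule[0], 5))    # 0.05
print(round(schedule[-1], 5))   # 0.02525
```

Under these assumptions, taking only the last element of the schedule gives a single fixed learning rate of about 0.02525 rather than a decaying one. Passing the whole list to the optimizer would be one way to get a per-step schedule, if that is what is intended.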

The code is as follows:

import time
import mindspore
import numpy as np
from mindspore import Tensor, nn
from mindspore.train import ModelCheckpoint, CheckpointConfig, TimeMonitor, LossMonitor, Model, Top1CategoricalAccuracy, Top5CategoricalAccuracy

def train():
    mindspore.set_context(mode=mindspore.PYNATIVE_MODE, device_target="CPU")
    net = ShuffleNetV1(model_size="2.0x", n_class=10)
    loss = nn.CrossEntropyLoss(weight=None, reduction='mean', label_smoothing=0.1)
    min_lr = 0.0005
    base_lr = 0.05
    lr_scheduler = mindspore.nn.cosine_decay_lr(min_lr,
                                                base_lr,
                                                batches_per_epoch*2,
                                                batches_per_epoch,
                                                decay_epoch=2)
    # Note: only the last value of the schedule is used, so the learning rate stays fixed during training
    lr = Tensor(lr_scheduler[-1])
    optimizer = nn.Momentum(params=net.trainable_params(), learning_rate=lr, momentum=0.9, weight_decay=0.00004, loss_scale=1024)
    loss_scale_manager = ms.amp.FixedLossScaleManager(1024, drop_overflow_update=False)
    model = Model(net, loss_fn=loss, optimizer=optimizer, amp_level="O3", loss_scale_manager=loss_scale_manager)
    callback = [TimeMonitor(), LossMonitor()]
    save_ckpt_path = "./"
    # Save one checkpoint per epoch and keep at most five
    config_ckpt = CheckpointConfig(save_checkpoint_steps=batches_per_epoch, keep_checkpoint_max=5)
    ckpt_callback = ModelCheckpoint("shufflenetv1", directory=save_ckpt_path, config=config_ckpt)
    callback += [ckpt_callback]

    print("============== Starting Training ==============")
    start_time = time.time()
    model.train(1, dataset, callbacks=callback)
    use_time = time.time() - start_time
    hour = str(int(use_time // 60 // 60))
    minute = str(int(use_time // 60 % 60))
    second = str(int(use_time % 60))
    print("total time:" + hour + "h " + minute + "m " + second + "s")
    print("============== Train Success ==============")

if __name__ == '__main__':
    train()

Output:

Testing and Evaluating the ShuffleNetV1 Model on CPU

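This section defines a function named test. It first sets the execution mode to PYNATIVE_MODE, selects the CPU as the device, and loads the test dataset. It then loads the parameters saved during training into a ShuffleNetV1 model and puts the model in evaluation mode. It defines the cross-entropy loss function and the evaluation metrics, and builds a Model object for evaluation. It measures how long the evaluation on the test dataset takes, assembles the evaluation result, the checkpoint path, and the elapsed time into a log string, prints it, and appends it to the specified file.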
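The loss used here has label smoothing enabled. As a rough guide to what that computes for a single sample, the pure-Python sketch below assumes the common formulation in which the target puts 1 - smoothing on the true class and spreads smoothing evenly over all classes; `smoothed_cross_entropy` is a hypothetical helper, and the library's exact behaviour should be checked against its documentation.

```python
import math


def smoothed_cross_entropy(logits, label, smoothing=0.1):
    """Cross-entropy of one sample against a label-smoothed target distribution."""
    num_classes = len(logits)
    # Numerically stable log-softmax.
    peak = max(logits)
    log_norm = peak + math.log(sum(math.exp(v - peak) for v in logits))
    log_probs = [v - log_norm for v in logits]
    # Smoothed target: smoothing / K everywhere, plus (1 - smoothing) on the true class.
    targets = [smoothing / num_classes + (1.0 - smoothing) * (i == label)
               for i in range(num_classes)]
    return -sum(t * lp for t, lp in zip(targets, log_probs))


uniform_logits = [0.0] * 10
print(smoothed_cross_entropy(uniform_logits, label=3))

confident_logits = [0.0] * 10
confident_logits[3] = 5.0
print(smoothed_cross_entropy(confident_logits, label=3))
```

For ten classes, a uniform prediction yields ln(10), about 2.303, whatever the true label is, which makes a convenient baseline when reading the evaluation loss.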

The code is as follows:

from mindspore import load_checkpoint, load_param_into_net

def test():
    mindspore.set_context(mode=mindspore.PYNATIVE_MODE, device_target="CPU")
    dataset = get_dataset("./dataset/cifar-10-batches-bin", 2, "test")
    net = ShuffleNetV1(model_size="2.0x", n_class=10)
    # Load the checkpoint saved at the end of training
    param_dict = load_checkpoint("shufflenetv1-1_500.ckpt")
    load_param_into_net(net, param_dict)
    net.set_train(False)
    loss = nn.CrossEntropyLoss(weight=None, reduction='mean', label_smoothing=0.1)
    eval_metrics = {'Loss': nn.Loss(), 'Top_1_Acc': Top1CategoricalAccuracy(),
                    'Top_5_Acc': Top5CategoricalAccuracy()}
    model = Model(net, loss_fn=loss, metrics=eval_metrics)
    start_time = time.time()
    res = model.eval(dataset, dataset_sink_mode=False)
    use_time = time.time() - start_time
    hour = str(int(use_time // 60 // 60))
    minute = str(int(use_time // 60 % 60))
    second = str(int(use_time % 60))
    log = "result:" + str(res) + ", ckpt:'" + "./shufflenetv1-1_500.ckpt" \
        + "', time: " + hour + "h " + minute + "m " + second + "s"
    print(log)
    # Append the result to the evaluation log file
    filename = './eval_log.txt'
    with open(filename, 'a') as file_object:
        file_object.write(log + '\n')

if __name__ == '__main__':
    test()

Output:

model size is 2.0x

result:{'Loss': 2.2262978378534317, 'Top_1_Acc': 0.211, 'Top_5_Acc': 0.758}, ckpt:'./shufflenetv1-1_500.ckpt', time: 0h 3m 17s
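For readers unfamiliar with the two accuracy metrics in this result, the following pure-Python sketch shows the usual definition of top-k accuracy on a tiny hand-made example. `top_k_accuracy` is a hypothetical helper, not the MindSpore metric class, and the scores are invented for illustration.

```python
def top_k_accuracy(scores, labels, k):
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    hits = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda i: row[i], reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / len(labels)


# Three samples, four classes (made-up scores).
scores = [
    [0.1, 0.6, 0.2, 0.1],  # true label 1: top-1 hit
    [0.4, 0.3, 0.2, 0.1],  # true label 1: top-2 hit only
    [0.1, 0.2, 0.3, 0.4],  # true label 0: miss for k <= 3
]
labels = [1, 1, 0]

print(top_k_accuracy(scores, labels, k=1))  # 0.3333333333333333
print(top_k_accuracy(scores, labels, k=2))  # 0.6666666666666666
```

With ten classes, random guessing would score roughly 0.1 on top-1 and 0.5 on top-5, so the figures reported above indicate a model that has learned something after one epoch on 2000 samples but is still far from converged.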

Visualizing ShuffleNetV1 Inference Results on the Cifar10 Dataset

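The code first loads the trained ShuffleNetV1 parameters. It then reads the training split of the Cifar10 dataset from the given path twice: one copy is preprocessed and batched for prediction, and the other is batched without preprocessing for display. A class dictionary maps predicted class indices to class names. The model runs inference on the prediction batch, and each predicted class name is shown as the title above the corresponding original image in the figure.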
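The step from raw model outputs to class names is a plain argmax followed by a dictionary lookup. Here is a minimal pure-Python sketch of that step; `predict_class_names` is a hypothetical helper and the scores are invented for illustration.

```python
CLASS_NAMES = {0: "airplane", 1: "automobile", 2: "bird", 3: "cat", 4: "deer",
               5: "dog", 6: "frog", 7: "horse", 8: "ship", 9: "truck"}


def predict_class_names(scores, class_names=CLASS_NAMES):
    """Pick the highest-scoring class index per row and look up its name."""
    names = []
    for row in scores:
        best_index = max(range(len(row)), key=lambda i: row[i])
        names.append(class_names[best_index])
    return names


# Two made-up score rows over the ten Cifar-10 classes.
scores = [
    [0.1, 0.0, 0.2, 2.5, 0.3, 1.1, 0.0, 0.2, 0.1, 0.0],
    [0.0, 0.3, 0.1, 0.2, 0.1, 0.0, 0.4, 0.2, 3.0, 0.9],
]
print(predict_class_names(scores))  # ['cat', 'ship']
```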

The code is as follows:

import mindspore
import matplotlib.pyplot as plt
import mindspore.dataset as ds

net = ShuffleNetV1(model_size="2.0x", n_class=10)
show_lst = []
param_dict = load_checkpoint("shufflenetv1-1_500.ckpt")
load_param_into_net(net, param_dict)
model = Model(net)

# Two copies of the same data: one preprocessed for prediction, one left raw for display
dataset_predict = ds.Cifar10Dataset(dataset_dir="./dataset/cifar-10-batches-bin", shuffle=False, usage="train")
dataset_show = ds.Cifar10Dataset(dataset_dir="./dataset/cifar-10-batches-bin", shuffle=False, usage="train")
dataset_show = dataset_show.batch(16)
show_images_lst = next(dataset_show.create_dict_iterator())["image"].asnumpy()

# Note: the random crop and flip mean the model sees an augmented version of each displayed image
image_trans = [
    vision.RandomCrop((32, 32), (4, 4, 4, 4)),
    vision.RandomHorizontalFlip(prob=0.5),
    vision.Resize((224, 224)),
    vision.Rescale(1.0 / 255.0, 0.0),
    vision.Normalize([0.4914, 0.4822, 0.4465], [0.2023, 0.1994, 0.2010]),
    vision.HWC2CHW()
]
dataset_predict = dataset_predict.map(image_trans, 'image')
dataset_predict = dataset_predict.batch(16)

class_dict = {0:"airplane", 1:"automobile", 2:"bird", 3:"cat", 4:"deer", 5:"dog", 6:"frog", 7:"horse", 8:"ship", 9:"truck"}

# Show the inference results (predicted label above, image below)
plt.figure(figsize=(16, 5))
predict_data = next(dataset_predict.create_dict_iterator())
output = model.predict(ms.Tensor(predict_data['image']))
pred = np.argmax(output.asnumpy(), axis=1)

index = 0
for image in show_images_lst:
    plt.subplot(2, 8, index+1)
    plt.title('{}'.format(class_dict[pred[index]]))
    index += 1
    plt.imshow(image)
    plt.axis("off")
plt.show()

Output:
