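Learning goal: become proficient with MindSpore

Study notes and reflections, with a record of the time spent

- Understand the overall architecture of MindSpore

- Learn how to use MindSpore

- A simple hands-on application: handwritten digit recognition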

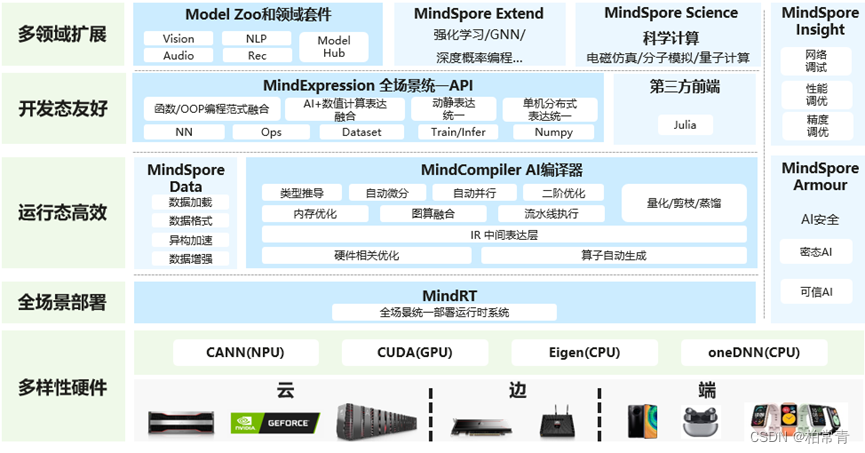

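I. MindSpore Overall Architecture

Huawei's MindSpore is an all-scenario deep learning framework. Its main features are efficient development and unified deployment across all scenarios.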

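II. Learning to Use MindSpore

2.1 Cloud development setup with Jupyter

Method 1: The MindSpore large-model platform (昇思大模型平台) already provides the required environment, and you can also apply for compute there. On your own computer, by contrast, you have to download and install MindSpore yourself and then install dependency libraries such as download.

- Open the official website.

- Register an account.

- Enter the AI Lab.

- Apply for compute. Once compute is enabled, open the Jupyter cloud development environment and start designing your algorithm.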

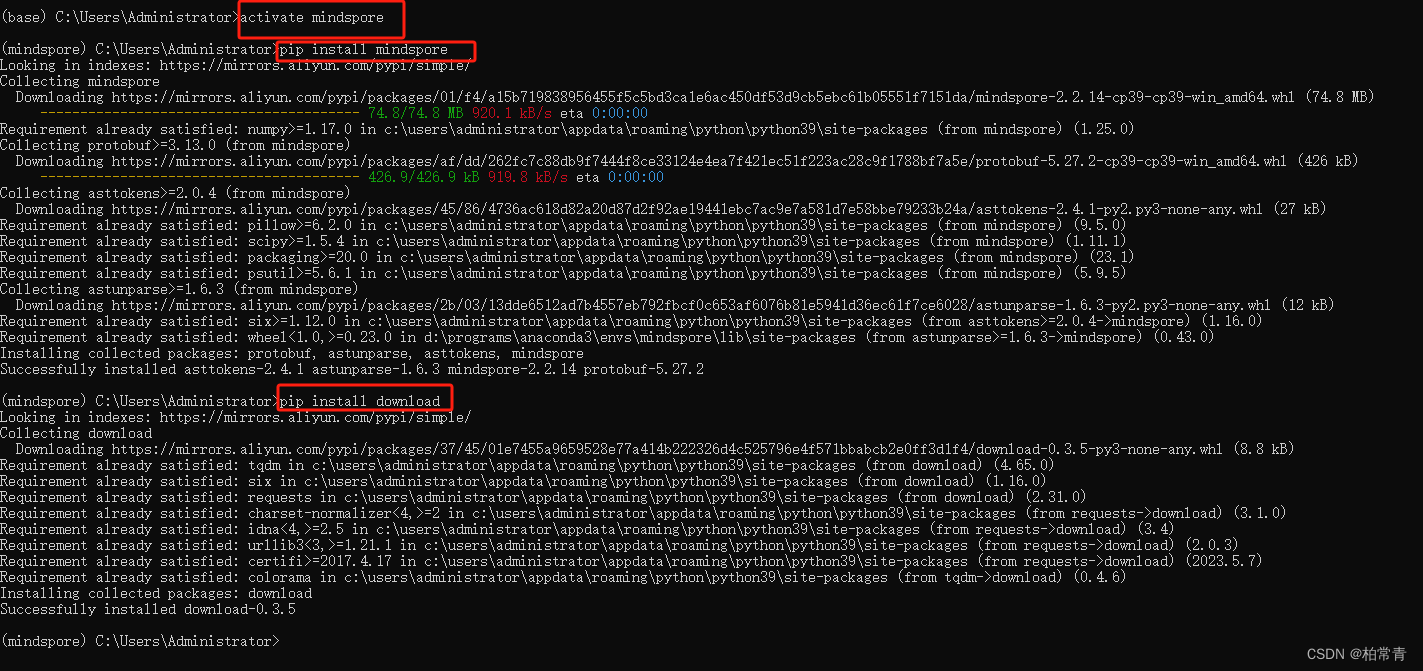

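2.2 Local development setup for MindSpore

Method 2: Set up a MindSpore environment locally and install the required dependency libraries. After that you can start designing your algorithm.

For example, configure the MindSpore framework environment under Anaconda3 on a local computer.

- Create the environment in the Anaconda Prompt command window

```shell
conda create -n mindspore python=3.9.19
```

- Switch to that environment

```shell
conda activate mindspore
```

- Install mindspore

```shell
pip install mindspore
```

- Install the dependency library download, which is used to fetch common datasets

```shell
pip install download
```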

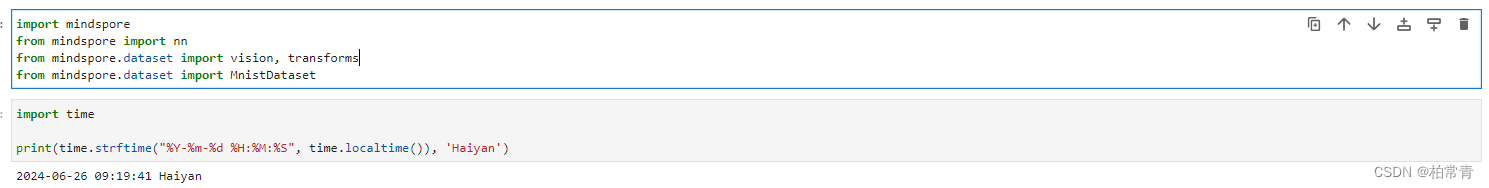
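After the installation steps above, it can be useful to confirm that both packages are actually visible to the active environment. The following is a minimal sketch using only the Python standard library; the helper name `installed_version` is made up for this illustration and is not part of MindSpore.

```python
from importlib import metadata


def installed_version(package_name):
    """Return the installed version string of a package, or None if it is missing."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None


# The two packages installed with pip in the steps above.
for name in ("mindspore", "download"):
    version = installed_version(name)
    status = version if version is not None else "not installed"
    print(f"{name}: {status}")
```

If mindspore shows up as installed, the MindSpore installation guide additionally suggests running `python -c "import mindspore; mindspore.run_check()"` to verify that the framework itself works.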

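2.3 Building a dataset

1. Importing MnistDataset directly

Like other mature frameworks such as torch, MindSpore ships modules for deep learning and data processing, such as nn, transforms, and vision, together with commonly used dataset APIs. mindspore.dataset can load datasets such as MNIST, CIFAR-10, CIFAR-100, VOC, COCO, ImageNet, CelebA, and CLUE, and it also supports industry-standard dataset formats, including MindRecord, TFRecord, and Manifest. In addition, users can use this module to define and load their own datasets.

```python
import mindspore.dataset as ds
import mindspore.dataset.transforms as transforms
import mindspore.dataset.vision as vision
```

Common dataset terms:

Dataset: the base class of all datasets. It provides data processing methods that help preprocess data.

SourceDataset: an abstract class that represents the source of a dataset pipeline. It produces data from sources such as files and databases.

MappableDataset: an abstract class that represents a source dataset supporting random access.

Iterator: the base class of dataset iterators, used to enumerate elements.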
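To make "random access" concrete: a source supports it when any sample can be fetched by index and the total length is known. The sketch below shows such a source in plain Python; the class `SquaresSource` and its contents are hypothetical and only illustrate the idea.

```python
class SquaresSource:
    """Hypothetical random-access source: sample i is the pair (i, i * i)."""

    def __init__(self, size):
        self._size = size

    def __getitem__(self, index):
        # Random access: any valid index can be requested directly.
        if not 0 <= index < self._size:
            raise IndexError(index)
        return index, index * index

    def __len__(self):
        return self._size


source = SquaresSource(5)
print(len(source), source[3])

# Sequential iteration also works, because Python falls back to
# __getitem__ with indices 0, 1, 2, ... until IndexError is raised.
for sample in source:
    print(sample)

# With MindSpore installed, an object like this could be wrapped as a
# dataset (not executed here):
#   import mindspore.dataset as ds
#   dataset = ds.GeneratorDataset(source, column_names=["x", "y"])
```

An object that only implements `__iter__` can still be read sequentially, but it cannot be shuffled or sampled by index in the same way.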

- An example of generating a custom dataset:

```python
import numpy as np
import mindspore as ms
import mindspore.dataset as ds
import mindspore.dataset.vision as vision
import mindspore.dataset.transforms as transforms

# Build four random 300x300 RGB images and their labels
data1 = np.array(np.random.sample(size=(300, 300, 3)) * 255, dtype=np.uint8)
data2 = np.array(np.random.sample(size=(300, 300, 3)) * 255, dtype=np.uint8)
data3 = np.array(np.random.sample(size=(300, 300, 3)) * 255, dtype=np.uint8)
data4 = np.array(np.random.sample(size=(300, 300, 3)) * 255, dtype=np.uint8)
label = [1, 2, 3, 4]

# Load the dataset with two columns, "data" and "label"
dataset = ds.NumpySlicesDataset(([data1, data2, data3, data4], label), ["data", "label"])

# Augment the "data" column
dataset = dataset.map(operations=vision.RandomCrop(size=(250, 250)), input_columns="data")
dataset = dataset.map(operations=vision.Resize(size=(224, 224)), input_columns="data")
dataset = dataset.map(operations=vision.Normalize(mean=[0.485 * 255, 0.456 * 255, 0.406 * 255],
                                                  std=[0.229 * 255, 0.224 * 255, 0.225 * 255]),
                      input_columns="data")
dataset = dataset.map(operations=vision.HWC2CHW(), input_columns="data")

# Cast the "label" column to int32
dataset = dataset.map(operations=transforms.TypeCast(ms.int32), input_columns="label")

# Group the samples into batches
dataset = dataset.batch(batch_size=2)

# Create the iterator and read the data for two epochs
epochs = 2
ds_iter = dataset.create_dict_iterator(output_numpy=True, num_epochs=epochs)
for _ in range(epochs):
    for item in ds_iter:
        print("item: {}".format(item), flush=True)
```

Output of the experiment:

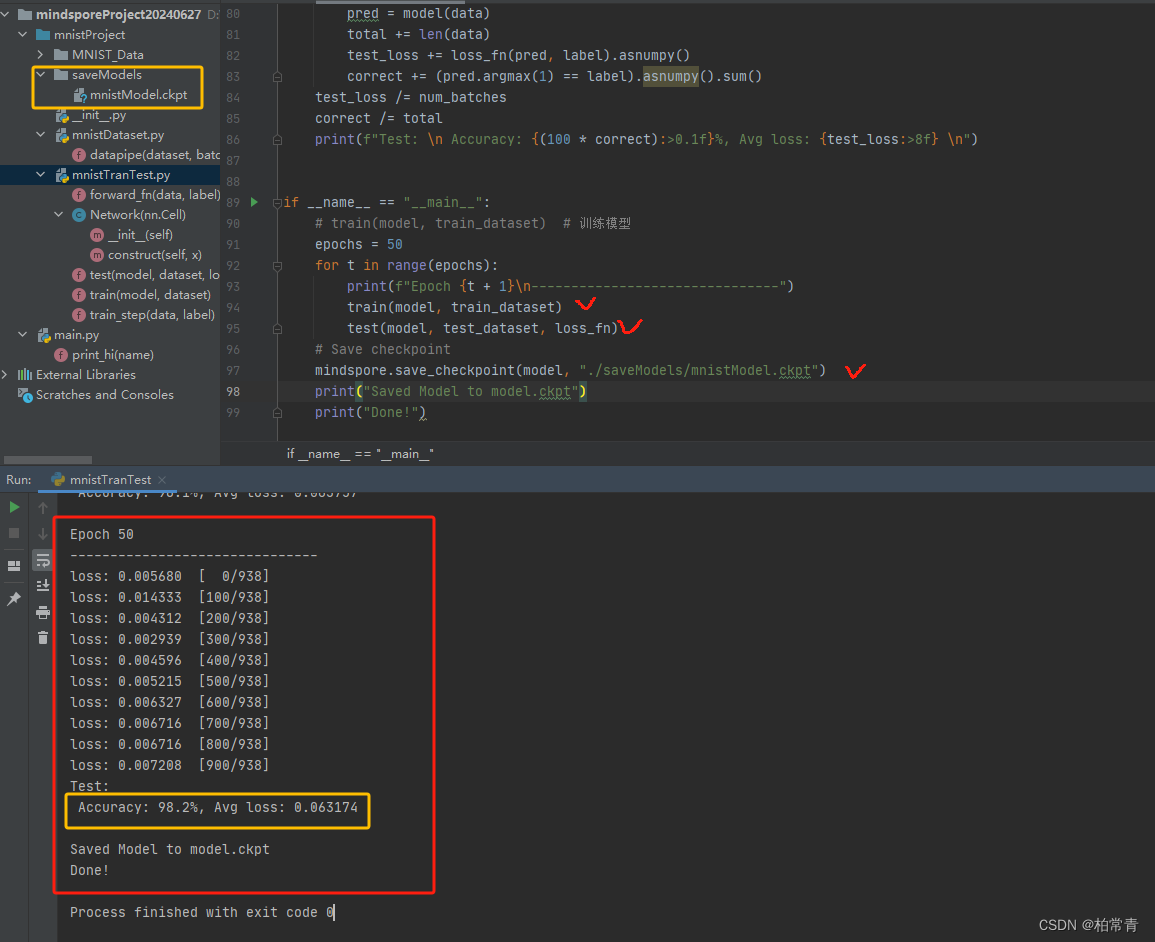
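To read the output of the custom dataset example, it helps to follow how the shape of one image changes through the pipeline. The following is a rough sketch in plain Python that only tracks shapes and does no image processing; the function name `trace_pipeline` is made up for this illustration, and the batch count assumes the default behaviour of `batch`, which keeps a smaller final batch.

```python
import math


def trace_pipeline(num_samples, batch_size):
    """Follow the shape of one sample through the custom dataset pipeline."""
    shape = (300, 300, 3)                      # raw image, HWC layout
    shape = (250, 250, shape[2])               # RandomCrop(size=(250, 250))
    shape = (224, 224, shape[2])               # Resize(size=(224, 224))
    shape = (shape[2], shape[0], shape[1])     # HWC2CHW: channels first
    # Number of batches per epoch; the last one may be smaller than batch_size.
    num_batches = math.ceil(num_samples / batch_size)
    return (batch_size,) + shape, num_batches


batch_shape, num_batches = trace_pipeline(num_samples=4, batch_size=2)
print(batch_shape, num_batches)
```

With four samples and a batch size of 2, each epoch therefore yields two items whose "data" entry has shape (2, 3, 224, 224).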

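III. Handwritten Digit Recognition

Create a project in the PyCharm IDE, select the mindspore environment configured earlier as its interpreter, and write the .py files: one for data processing and one for model training and testing.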

1. Dataset

```python
import mindspore
from mindspore import nn
from mindspore.dataset import vision, transforms
from mindspore.dataset import MnistDataset
from download import download

url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/" \
      "notebook/datasets/MNIST_Data.zip"

# Run the download once; afterwards the data is on disk and this line can stay commented out
# path = download(url, "./", kind="zip", replace=True)

train_dataset = MnistDataset('MNIST_Data/train')
test_dataset = MnistDataset('MNIST_Data/test')
# print(train_dataset.get_col_names())


# MindSpore datasets use a data processing pipeline
def datapipe(dataset, batch_size):
    image_transforms = [
        vision.Rescale(1.0 / 255.0, 0),                    # scale pixels to [0, 1]
        vision.Normalize(mean=(0.1307,), std=(0.3081,)),   # standardize
        vision.HWC2CHW()                                   # channels first
    ]
    label_transform = transforms.TypeCast(mindspore.int32)

    dataset = dataset.map(image_transforms, 'image')
    dataset = dataset.map(label_transform, 'label')
    dataset = dataset.batch(batch_size)
    return dataset


# Map vision transforms and batch dataset
train_dataset = datapipe(train_dataset, 64)
test_dataset = datapipe(test_dataset, 64)

if __name__ == "__main__":
    # Inspect the shape and dtype of the first batch only
    for image, label in test_dataset.create_tuple_iterator():
        print(f"Shape of image [N, C, H, W]: {image.shape} {image.dtype}")
        print(f"Shape of label: {label.shape} {label.dtype}")
        break
```
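The MNIST pipeline first rescales pixels by 1/255 and then normalizes with mean 0.1307 and standard deviation 0.3081, whereas the custom dataset example in 2.3 normalizes raw 0 to 255 values with mean and std multiplied by 255. The arithmetic sketch below, in plain Python on single pixel values rather than MindSpore tensors, suggests that the two forms give the same result; the function names are made up for this illustration.

```python
import math

MEAN, STD = 0.1307, 0.3081  # the values passed to vision.Normalize in datapipe


def rescale_then_normalize(pixel):
    """Two-step form: Rescale(1.0 / 255.0, 0) followed by Normalize(MEAN, STD)."""
    scaled = pixel * (1.0 / 255.0) + 0
    return (scaled - MEAN) / STD


def normalize_raw(pixel):
    """One-step form on raw 0..255 values, with mean and std multiplied by 255."""
    return (pixel - MEAN * 255) / (STD * 255)


for pixel in (0, 128, 255):
    two_step = rescale_then_normalize(pixel)
    one_step = normalize_raw(pixel)
    print(f"pixel {pixel:3d} -> {two_step:.4f} (two-step), {one_step:.4f} (one-step)")
```

Either way, a black pixel maps to a negative value and a white pixel to a positive one, so the inputs to the network are roughly centred around zero.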

2. Model

```python
# Define model
class Network(nn.Cell):
    def __init__(self):
        super().__init__()
        self.flatten = nn.Flatten()
        # Three fully connected layers: 784 -> 512 -> 512 -> 10
        self.dense_relu_sequential = nn.SequentialCell(
            nn.Dense(28 * 28, 512),
            nn.ReLU(),
            nn.Dense(512, 512),
            nn.ReLU(),
            nn.Dense(512, 10)
        )

    def construct(self, x):
        x = self.flatten(x)
        logits = self.dense_relu_sequential(x)
        return logits


model = Network()
# print(model)
```
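As a quick sanity check on the size of this network, the number of trainable parameters can be worked out by hand. The sketch below assumes that every `nn.Dense` layer has a bias vector, which is its default; the helper `dense_param_count` is made up for this illustration.

```python
def dense_param_count(in_features, out_features):
    """Weights (in_features * out_features) plus one bias per output unit."""
    return in_features * out_features + out_features


# (input size, output size) of the three Dense layers in Network
layer_sizes = [(28 * 28, 512), (512, 512), (512, 10)]

per_layer = [dense_param_count(i, o) for i, o in layer_sizes]
total = sum(per_layer)
print(per_layer, total)
```

The first layer dominates the total because it connects all 784 input pixels to 512 hidden units.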

3. Loss function, optimizer, and learning rate

```python
# Instantiate loss function and optimizer
loss_fn = nn.CrossEntropyLoss()                      # loss function
optimizer = nn.SGD(model.trainable_params(), 1e-2)   # optimizer, learning rate 0.01
```
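To show what these two objects compute, here is a simplified sketch in plain Python: softmax cross-entropy for a single sample and a plain gradient descent update. It assumes no momentum or weight decay and is not MindSpore's implementation; the function names are made up for this illustration.

```python
import math


def cross_entropy(logits, label):
    """Softmax cross-entropy of one sample, given raw logits and an integer label."""
    # Subtract the maximum before exponentiating for numerical stability.
    max_logit = max(logits)
    log_sum_exp = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum_exp - logits[label]


def sgd_step(params, grads, learning_rate=1e-2):
    """Plain SGD update: each parameter moves against its gradient."""
    return [p - learning_rate * g for p, g in zip(params, grads)]


# Ten equal logits mean the model is undecided among the ten digits.
uniform_loss = cross_entropy([0.0] * 10, label=3)
print(uniform_loss)

updated = sgd_step([1.0, -2.0], [0.5, -1.0])
print(updated)
```

An untrained classifier that spreads its probability evenly over ten classes has a loss of ln(10), about 2.30, which gives a useful reference point when reading the first loss values printed during training.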

Forward function

```python
# 1. Define forward function
def forward_fn(data, label):
    logits = model(data)
    loss = loss_fn(logits, label)
    return loss, logits
```

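Gradient function

```python
# 2. Get gradient function
# has_aux=True: only the first output (loss) is differentiated; logits are passed through
grad_fn = mindspore.value_and_grad(forward_fn, None, optimizer.parameters, has_aux=True)
```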

Single-step training function (compute gradients and update the parameters)

```python
# 3. Define function of one-step training
def train_step(data, label):
    (loss, _), grads = grad_fn(data, label)
    optimizer(grads)
    return loss
```
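The three pieces above (forward function, gradient function, one-step training function) follow a general pattern that can be shown on a toy problem without MindSpore. The sketch below is only an analogy: a one-parameter model `pred = w * x` with a squared-error loss and a hand-written gradient, where `value_and_grad_fn` mimics the `((loss, aux), grads)` return layout used with `has_aux=True`. All names and numbers are made up for this illustration.

```python
def forward_fn(w, x, y):
    """Return (loss, prediction) for the one-parameter model pred = w * x."""
    pred = w * x
    loss = (pred - y) ** 2
    return loss, pred


def value_and_grad_fn(w, x, y):
    """Return ((loss, pred), dloss/dw); the gradient is derived by hand."""
    loss, pred = forward_fn(w, x, y)
    grad = 2.0 * (pred - y) * x
    return (loss, pred), grad


def train_step(w, x, y, learning_rate=0.1):
    """One update: compute loss and gradient, then move w against the gradient."""
    (loss, _), grad = value_and_grad_fn(w, x, y)
    return w - learning_rate * grad, loss


w = 0.0
losses = []
for _ in range(20):
    w, loss = train_step(w, x=1.0, y=3.0)
    losses.append(loss)
print(w, losses[0], losses[-1])
```

The loss shrinks at every step and `w` approaches 3.0, the value that fits the single training pair exactly. In the MindSpore version, automatic differentiation replaces the hand-written gradient and the optimizer object performs the update.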

4. Model training

```python
def train(model, dataset):
    size = dataset.get_dataset_size()   # number of batches per epoch
    model.set_train()
    for batch, (data, label) in enumerate(dataset.create_tuple_iterator()):
        loss = train_step(data, label)

        # Report progress every 100 batches
        if batch % 100 == 0:
            loss, current = loss.asnumpy(), batch
            print(f"loss: {loss:>7f} [{current:>3d}/{size:>3d}]")
```

5. Saving the model

```python
mindspore.save_checkpoint(model, "./saveModels/mnistModel.ckpt")
print("Saved Model to mnistModel.ckpt")
```
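The checkpoint above is written into a `saveModels` subdirectory. Whether `save_checkpoint` creates a missing directory may depend on the MindSpore version, so creating it explicitly beforehand is a harmless precaution. The following is a small sketch using only the standard library; the helper name `prepare_checkpoint_path` is made up for this illustration.

```python
import os
import tempfile
from pathlib import Path


def prepare_checkpoint_path(checkpoint_path):
    """Create the parent directory of a checkpoint file if needed and return the path."""
    path = Path(checkpoint_path)
    path.parent.mkdir(parents=True, exist_ok=True)
    return str(path)


# Demonstration inside a temporary directory, so nothing is left behind.
with tempfile.TemporaryDirectory() as tmp_dir:
    target = os.path.join(tmp_dir, "saveModels", "mnistModel.ckpt")
    prepared = prepare_checkpoint_path(target)
    directory_exists = os.path.isdir(os.path.dirname(prepared))
    print(prepared, directory_exists)
```

In the training script this could be used as `mindspore.save_checkpoint(model, prepare_checkpoint_path("./saveModels/mnistModel.ckpt"))`.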

6. Model testing

```python
def test(model, dataset, loss_fn):
    num_batches = dataset.get_dataset_size()
    model.set_train(False)
    total, test_loss, correct = 0, 0, 0
    for data, label in dataset.create_tuple_iterator():
        pred = model(data)
        total += len(data)                                     # samples seen so far
        test_loss += loss_fn(pred, label).asnumpy()            # sum of per-batch losses
        correct += (pred.argmax(1) == label).asnumpy().sum()   # correct predictions
    test_loss /= num_batches   # average loss per batch
    correct /= total           # accuracy over all samples
    print(f"Test: \n Accuracy: {(100 * correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")
```
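The notes define `train` and `test` but do not show how they are called. One common arrangement is to alternate one training pass and one evaluation pass per epoch. The sketch below shows that loop with stand-in callables so that it runs without MindSpore; the helper `run_epochs` and the epoch counts are assumptions made for this illustration.

```python
def run_epochs(train_one_epoch, evaluate, epochs=3):
    """Alternate one training pass and one evaluation pass for each epoch."""
    for epoch in range(epochs):
        print(f"Epoch {epoch + 1}\n-------------------------------")
        train_one_epoch()
        evaluate()
    print("Done!")


# Stand-in callables that only record the order in which they are called.
calls = []
run_epochs(lambda: calls.append("train"), lambda: calls.append("test"), epochs=2)
print(calls)
```

With the functions defined in these notes, the call would look like `run_epochs(lambda: train(model, train_dataset), lambda: test(model, test_dataset, loss_fn))`, and it would run before the checkpoint is saved.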

7. Loading the saved model

```python
# Instantiate a random initialized model
model = Network()

# Load checkpoint and load parameter to model
param_dict = mindspore.load_checkpoint("./saveModels/mnistModel.ckpt")
param_not_load, _ = mindspore.load_param_into_net(model, param_dict)
# An empty list means every network parameter was found in the checkpoint
print(param_not_load)
```

8. Testing the loaded model

```python
model.set_train(False)
for data, label in test_dataset:
    pred = model(data)
    predicted = pred.argmax(1)   # index of the largest logit is the predicted digit
    print(f'Predicted: "{predicted[:10]}", Actual: "{label[:10]}"')
    break
```
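The prediction step takes, for each sample, the index of the largest logit as the predicted class and compares it with the label. The following is a small sketch in plain Python on made-up logits, with three classes instead of ten for brevity; the helper names and numbers are hypothetical.

```python
def argmax(row):
    """Index of the largest value in a list of logits."""
    return max(range(len(row)), key=row.__getitem__)


def accuracy(logits_batch, labels):
    """Return the predicted classes and the fraction of correct predictions."""
    predicted = [argmax(row) for row in logits_batch]
    correct = sum(int(p == y) for p, y in zip(predicted, labels))
    return predicted, correct / len(labels)


# Made-up logits for three samples and three classes
logits_batch = [[0.1, 2.0, -1.0], [1.5, 0.2, 0.3], [0.0, 0.1, 0.9]]
labels = [1, 0, 1]

predicted, acc = accuracy(logits_batch, labels)
print(predicted, acc)
```

Here the first two samples are classified correctly and the third is not, so the accuracy is two thirds.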

9. Training and test results