I used RAG with LLAMA3 for AI bot. I find RAG with chromadb is much slower than call LLM itself. Following the test result, with just one simple web page about 1000 words, it takes more than 2 seconds for retrieving:

我使用RAG(可能是指某种特定的算法或模型)与LLAMA3一起构建AI机器人。我发现使用chromadb的RAG比直接调用LLM(大型语言模型)本身要慢得多。根据测试结果,仅仅为了检索一个大约包含1000个单词的简单网页,它就需要超过2秒的时间:

Time used for retrieving: 2.245511054992676

Time used for LLM: 2.1182022094726562

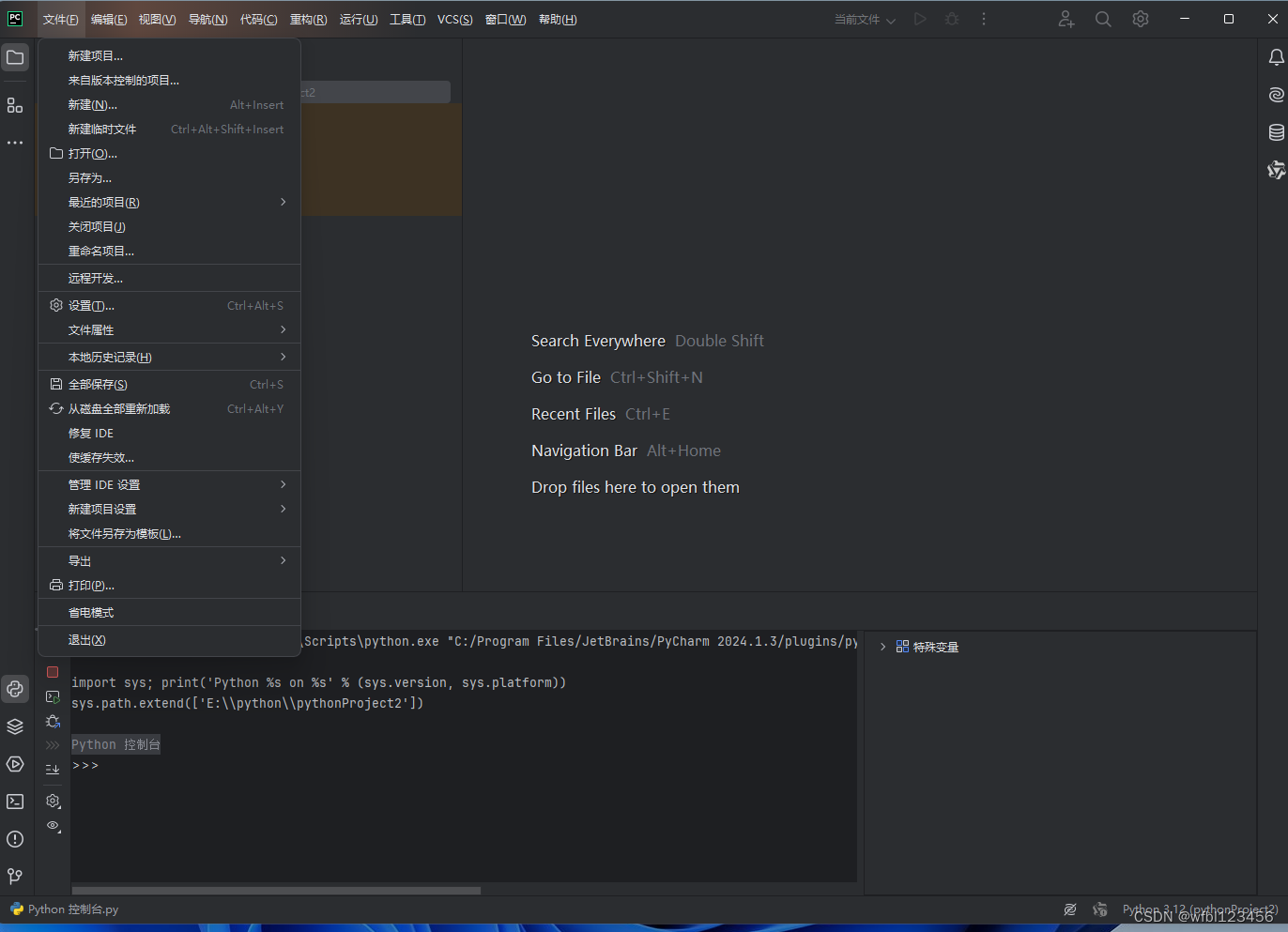

Here is my simple code: 这是我的简单代码:

embeddings = OllamaEmbeddings(model="llama3")

vectorstore = Chroma.from_documents(documents=splits, embedding=embeddings)

retriever = vectorstore.as_retriever()

question = "What is COCONut?"

start = time.time()

retrieved_docs = retriever.invoke(question)

formatted_context = combine_docs(retrieved_docs)

end = time.time()

print(f"Time used for retrieving: {end - start}")

start = time.time()

answer = ollama_llm(question, formatted_context)

end = time.time()

print(f"Time used for LLM: {end - start}")

I found when my chromaDB size just about 1.4M, it takes more than 20 seconds for retrieving and still only takes about 3 or 4 seconds for LLM. Is there anything I missing? or RAG tech itself is so slow?

我发现当我的chromaDB大小约为1.4M时,检索需要超过20秒的时间,而直接调用LLM(大型语言模型)仍然只需要大约3或4秒。是我遗漏了什么吗?还是RAG技术本身就这么慢?

参考回答:

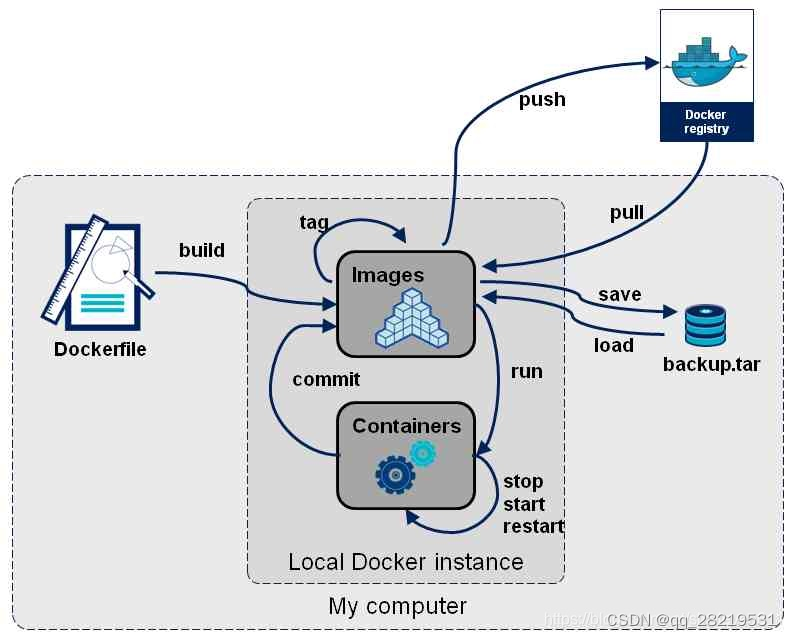

- Retrieval-Augmented Generation (RAG) models are slower as compared to Large Language Models (LLMs) due to an extra retrieval step.

与大型语言模型(LLMs)相比,检索增强生成(Retrieval-Augmented Generation,RAG)模型由于多出了一个检索步骤,因此速度更慢。

- Since RAG models search a database for relevant information, which can be time-consuming, especially with large databases, it is tend to be slower. Versus LLMs respond faster as they rely on pre-trained information and skip the said database retrieval step.

由于RAG模型需要在数据库中搜索相关信息,这可能会很耗时,尤其是当数据库很大时,因此它往往会比较慢。相比之下,LLMs(大型语言模型)响应更快,因为它们依赖于预训练的信息,并跳过了上述的数据库检索步骤。

- You must also note that LLMs may lack the most current or specific information compared to RAG models, which usually access external data sources and can provide more detailed responses using the latest information.

你还必须注意,与RAG模型相比,LLMs(大型语言模型)可能缺乏最新或特定的信息,因为RAG模型通常可以访问外部数据源,并使用最新信息提供更详细的响应。

- Thus, Despite being slower, RAG models have the advantage in response quality and relevance for complex, information-rich queries. Hope I am able to help.

因此,尽管速度较慢,但RAG模型在处理复杂且信息丰富的查询时,在响应质量和相关性方面更具优势。希望我能帮到你。

![[已解决]ImportError: DLL load failed while importing win32api: 找不到指定的程序。](https://img-blog.csdnimg.cn/direct/97654980b9a3432f9107060518ccc3fd.png)