train_data='./datasets/duuie'

output_folder='./datasets/duuie_pre'

ignore_datasets=["DUEE", "DUEE_FIN_LITE"]

schema_folder='./datasets/seen_schema'

# Preprocess the CCKS2022 competition data

import shutil

# shutil.copytree(train_data,output_folder)

import os

life_folder = os.path.join(output_folder, "DUIE_LIFE_SPO")

org_folder = os.path.join(output_folder, "DUIE_ORG_SPO")

print(life_folder,org_folder)

import json

def load_jsonlines_file(filename):
    # Read a JSON Lines file: one JSON object per line.
    with open(filename, encoding="utf8") as lines:
        return [json.loads(line) for line in lines]

life_train_instances = load_jsonlines_file(f"{life_folder}/train.json")

org_train_instances = load_jsonlines_file(f"{org_folder}/train.json")

for i in range(27695, 27698):
    print(life_train_instances[i], '|', org_train_instances[i])

class RecordSchema:
    def __init__(self, type_list, role_list, type_role_dict):
        self.type_list = type_list
        self.role_list = role_list
        self.type_role_dict = type_role_dict

    def __repr__(self) -> str:
        repr_list = [f"Type: {self.type_list}\n", f"Role: {self.role_list}\n", f"Map: {self.type_role_dict}"]
        return "\n".join(repr_list)

    @staticmethod
    def get_empty_schema():
        return RecordSchema(type_list=list(), role_list=list(), type_role_dict=dict())

    @staticmethod
    def read_from_file(filename):
        with open(filename, encoding="utf8") as schema_file:
            lines = schema_file.readlines()
        type_list = json.loads(lines[0])  # types
        role_list = json.loads(lines[1])  # roles
        type_role_dict = json.loads(lines[2])  # type-to-role mapping
        return RecordSchema(type_list, role_list, type_role_dict)

    def write_to_file(self, filename):
        with open(filename, "w", encoding="utf8") as output:
            # json.dumps serializes a Python object to a JSON string;
            # ensure_ascii=False keeps non-ASCII characters unescaped.
            output.write(json.dumps(self.type_list, ensure_ascii=False) + "\n")
            output.write(json.dumps(self.role_list, ensure_ascii=False) + "\n")
            output.write(json.dumps(self.type_role_dict, ensure_ascii=False) + "\n")
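
# Illustrative sketch (not part of the original pipeline): judging from
# read_from_file / write_to_file above, a *.schema file appears to hold three
# JSON lines: the type list, the role list, and the type-to-role mapping.
# The toy labels and the temporary path below are made up for this demo.
import json
import os
import tempfile

_toy_schema_parts = [["人物"], ["出生地"], {"人物": ["出生地"]}]
with tempfile.TemporaryDirectory() as _tmp_dir:
    _toy_path = os.path.join(_tmp_dir, "record.schema")
    # Write one JSON document per line, keeping Chinese labels unescaped.
    with open(_toy_path, "w", encoding="utf8") as _out:
        for _part in _toy_schema_parts:
            _out.write(json.dumps(_part, ensure_ascii=False) + "\n")
    # Read the three lines back in the same order.
    with open(_toy_path, encoding="utf8") as _inp:
        _toy_roundtrip = [json.loads(_line) for _line in _inp]
print(_toy_roundtrip == _toy_schema_parts)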

RecordSchema.read_from_file(f"{life_folder}/record.schema")

life_relation = RecordSchema.read_from_file(f"{life_folder}/record.schema").role_list

org_relation = RecordSchema.read_from_file(f"{org_folder}/record.schema").role_list

from collections import defaultdict

instance_dict = defaultdict(list)

for instance in life_train_instances + org_train_instances:
    instance_dict[instance["text"]] += [instance]

a=[i for i in life_train_instances for j in org_train_instances if i['text']==j['text']]

b=[i for i in org_train_instances for j in a if i['text']==j['text']]

for i in range(3):
    print(a[i]['relation'], '|', b[i]['relation'])

dict_1 = {1: 2, 3: 4}

for i in dict_1:  # iterating over a dict yields its keys, same as dict_1.keys()
    print(i)

from typing import Tuple, List, Dict

def merge_instance(instance_list):
    def all_equal(_x):  # check whether all elements are equal
        for __x in _x:
            if __x != _x[0]:
                return False
        return True

    def entity_key(_x):
        return (tuple(_x["offset"]), _x["type"])

    def relation_key(_x):
        return (
            tuple(_x["type"]),
            tuple(_x["args"][0]["offset"]),
            _x["args"][0]["type"],
            tuple(_x["args"][1]["offset"]),
            _x["args"][1]["type"],
        )

    def event_key(_x):
        return (tuple(_x["offset"]), _x["type"])

    # All instances being merged must share the same text.
    assert all_equal([x["text"] for x in instance_list])

    # Keyed dicts de-duplicate annotations that appear in several instances.
    element_dict = {
        "entity": dict(),
        "relation": dict(),
        "event": dict(),
    }
    instance_id_list = list()
    for x in instance_list:
        instance_id_list += [x["id"]]
        for entity in x.get("entity", list()):
            element_dict["entity"][entity_key(entity)] = entity
        for relation in x.get("relation", list()):
            element_dict["relation"][relation_key(relation)] = relation
        for event in x.get("event", list()):
            element_dict["event"][event_key(event)] = event

    return {
        "id": "-".join(instance_id_list),
        "text": instance_list[0]["text"],
        "tokens": instance_list[0]["tokens"],
        "entity": list(element_dict["entity"].values()),
        "relation": list(element_dict["relation"].values()),
        "event": list(element_dict["event"].values()),
    }
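
# Illustrative sketch (toy data, hypothetical helper name): merge_instance
# above de-duplicates annotations by storing them in a dict under a hashable
# key. The standalone helper below shows the same idea in isolation; it is a
# simplified stand-in, not the function the pipeline actually calls.
def _dedup_by_key(items, key):
    """Keep one item per key; the last item seen for a key wins."""
    unique = {}
    for item in items:
        unique[key(item)] = item
    return list(unique.values())


_toy_entities = [
    {"offset": [0, 1], "type": "人物", "text": "张三"},
    {"offset": [0, 1], "type": "人物", "text": "张三"},  # duplicate annotation
    {"offset": [5, 6], "type": "机构", "text": "百度"},
]
# Lists are not hashable, so the offset is converted to a tuple for the key.
_toy_unique = _dedup_by_key(_toy_entities, key=lambda e: (tuple(e["offset"]), e["type"]))
print(len(_toy_entities), len(_toy_unique))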

for text in instance_dict:
    instance_dict[text] = merge_instance(instance_dict[text])

for i in range(800, 802):
    print(list(instance_dict.values())[i]['relation'])

import copy

with open(f"{life_folder}/train.json", "w") as output:
    for instance in instance_dict.values():
        new_instance = copy.deepcopy(instance)
        new_instance["relation"] = list(filter(lambda x: x["type"] in life_relation, instance["relation"]))
        output.write(json.dumps(new_instance) + "\n")

with open(f"{org_folder}/train.json", "w") as output:
    for instance in instance_dict.values():
        new_instance = copy.deepcopy(instance)
        new_instance["relation"] = list(filter(lambda x: x["type"] in org_relation, instance["relation"]))
        output.write(json.dumps(new_instance) + "\n")

a_instances = load_jsonlines_file(f"{life_folder}/train.json")

b_instances = load_jsonlines_file(f"{org_folder}/train.json")

print(len(a_instances),len(b_instances))

import yaml

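def load_definition_schema_file(filename):
    # Load a YAML schema definition; the file is closed once parsing finishes.
    with open(filename, encoding="utf8") as schema_file:
        return yaml.load(schema_file, Loader=yaml.FullLoader)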

aa = load_definition_schema_file(os.path.join(schema_folder,'体育竞赛.yaml'))

mm=list()

for i in aa['事件'].values():
    mm += i["参数"]

mm=list(set(mm))

[x for x in aa['事件']]

aa['事件']['退役']["参数"].keys()

aaa={1:2,3:4}

for k, v in aaa.items():
    print(k, v)

def dump_schema(output_folder, schema_dict):
    if not os.path.exists(output_folder):
        os.makedirs(output_folder)
    for schema_name, schema in schema_dict.items():
        schema_file = f"{output_folder}/{schema_name}.schema"
        with open(schema_file, "w", encoding="utf8") as output:
            for element in schema:
                output.write(json.dumps(element, ensure_ascii=False) + "\n")

def dump_event_schema(event_map, output_folder):
    role_list = list()
    for roles in event_map.values():
        # Extending a list with a dict adds the dict's keys (the role names).
        role_list += roles["参数"]
    role_list = list(set(role_list))
    type_list = list(event_map.keys())
    type_role_map = {event_type: list(event_map[event_type]["参数"].keys()) for event_type in event_map}
    dump_schema(
        output_folder=output_folder,
        schema_dict={
            "entity": [[], [], {}],
            "relation": [[], [], {}],
            "event": [type_list, role_list, type_role_map],
            "record": [type_list, role_list, type_role_map],
        },
    )

def filter_event_in_instance(instances, required_event_types):
    """Filter the events in each instance, keeping only event mentions
    whose type is in `required_event_types`.
    """
    new_instances = list()
    for instance in instances:
        new_instance = copy.deepcopy(instance)
        new_instance["event"] = list(filter(lambda x: x["type"] in required_event_types, new_instance["event"]))
        new_instances += [new_instance]
    return new_instances

def dump_instances(instances, output_filename):
    with open(output_filename, "w", encoding="utf8") as output:
        for instance in instances:
            output.write(json.dumps(instance, ensure_ascii=False) + "\n")

def filter_event(data_folder, event_types, output_folder):
    """Keep only events of `event_types` from `data_folder` and save the
    result to `output_folder`.
    """
    dump_event_schema(event_types, output_folder)
    for split in ["train", "val"]:
        filename = os.path.join(data_folder, f"{split}.json")
        with open(filename, encoding="utf8") as lines:
            instances = [json.loads(line.strip()) for line in lines]
        new_instances = filter_event_in_instance(instances, required_event_types=event_types)
        dump_instances(new_instances, os.path.join(output_folder, f"{split}.json"))

# Preprocess the event data: drop every event annotation except those of
# the `灾害意外` and `体育竞赛` schemas
for schema in ["灾害意外", "体育竞赛"]:
    print(f"Building {schema} dataset ...")
    duee_folder = os.path.join(output_folder, "DUEE")
    schema_file = os.path.join(schema_folder, f"{schema}.yaml")
    output_folder2 = os.path.join(output_folder, schema)
    # Note: from here on `schema` holds the loaded definition, not the name.
    schema = load_definition_schema_file(schema_file)
    filter_event(
        duee_folder,
        schema["事件"],
        output_folder2,
    )

ty_instances = load_jsonlines_file(f"{output_folder}/体育竞赛/train.json")

zh_instances = load_jsonlines_file(f"{output_folder}/灾害意外/train.json")

print(len(ty_instances),len(zh_instances))

for i in range(11508, 11608):
    print(ty_instances[i], '|', zh_instances[i])

bb=load_definition_schema_file(os.path.join(schema_folder, "金融信息.yaml"))

for i in bb['事件'].keys():
    print(i)

mm=list()

mm+=bb['事件']['中标']["参数"]

mm=list(set(mm))

bb["事件"]['中标']["参数"].keys()

for schema in ["金融信息"]:
    print(f"Building {schema} dataset ...")
    duee_fin_folder = os.path.join(output_folder, "DUEE_FIN_LITE")
    schema_file = os.path.join(schema_folder, f"{schema}.yaml")
    output_folder2 = os.path.join(output_folder, schema)
    schema = load_definition_schema_file(schema_file)
    # Split multi-type event extraction into several single-type
    # extraction tasks, one per event type
    for event_type in schema["事件"]:
        filter_event(
            duee_fin_folder,
            {event_type: schema["事件"][event_type]},
            output_folder2 + "_" + event_type,
        )

vv=load_jsonlines_file(f"{output_folder}/DUEE_FIN_LITE/train.json")

zb_instances = load_jsonlines_file(f"{output_folder}/金融信息_中标/train.json")

zy_instances = load_jsonlines_file(f"{output_folder}/金融信息_质押/train.json")

print(len(zb_instances),len(zy_instances))

for i in range(6985, 7015):
    print(zb_instances[i], '|', zy_instances[i])

def annonote_graph(
    entities: List[Dict] = [],
    relations: List[Dict] = [],
    events: List[Dict] = [],
):
    spot_dict = dict()
    asoc_dict = defaultdict(list)

    # Convert entities, relations and events into a spot-association graph

    def add_spot(spot):
        spot_key = (tuple(spot["offset"]), spot["type"])
        spot_dict[spot_key] = spot

    def add_asoc(spot, asoc, tail):
        spot_key = (tuple(spot["offset"]), spot["type"])
        asoc_dict[spot_key] += [(tuple(tail["offset"]), tail["text"], asoc)]

    for entity in entities:
        add_spot(spot=entity)

    for relation in relations:
        # The head argument is the spot; the relation type links it to the tail.
        add_spot(spot=relation["args"][0])
        add_asoc(spot=relation["args"][0], asoc=relation["type"], tail=relation["args"][1])

    for event in events:
        # The event trigger is the spot; each argument becomes an association.
        add_spot(spot=event)
        for argument in event["args"]:
            add_asoc(spot=event, asoc=argument["type"], tail=argument)

    spot_asoc_instance = list()
    for spot_key in sorted(spot_dict.keys()):
        offset, label = spot_key

        # Skip spots without a span in the text.
        if len(spot_dict[spot_key]["offset"]) == 0:
            continue

        spot_instance = {
            "span": spot_dict[spot_key]["text"],
            "label": label,
            "asoc": list(),
        }
        for tail_offset, tail_text, asoc in sorted(asoc_dict.get(spot_key, [])):
            # Skip tails without a span in the text.
            if len(tail_offset) == 0:
                continue
            spot_instance["asoc"] += [(asoc, tail_text)]
        spot_asoc_instance += [spot_instance]

    spot_labels = set([label for _, label in spot_dict.keys()])
    asoc_labels = set()
    for _, asoc_list in asoc_dict.items():
        for _, _, asoc in asoc_list:
            asoc_labels.add(asoc)
    return spot_labels, asoc_labels, spot_asoc_instance
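
# Illustrative sketch (toy data, not taken from the dataset): for a single
# relation, annonote_graph above treats the head argument as the "spot" and
# attaches the relation type plus the tail text as an "asoc". The record below
# is built by hand to mirror that output shape; it does not call the function.
_toy_relation = {
    "type": "出生地",
    "args": [
        {"type": "人物", "offset": [0, 1], "text": "张三"},
        {"type": "地点", "offset": [5, 6], "text": "北京"},
    ],
}
_toy_head, _toy_tail = _toy_relation["args"]
_toy_spot_asoc = [
    {
        "span": _toy_head["text"],  # surface text of the head argument
        "label": _toy_head["type"],  # spot label
        "asoc": [(_toy_relation["type"], _toy_tail["text"])],  # (asoc label, tail text)
    }
]
print(_toy_spot_asoc)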

def add_spot_asoc_to_single_file(filename):
    # Read everything first: the same file is rewritten in place below.
    with open(filename, encoding="utf8") as lines:
        instances = [json.loads(line) for line in lines]
    print(f"Add spot asoc to {filename} ...")
    with open(filename, "w", encoding="utf8") as output:
        for instance in instances:
            spots, asocs, spot_asoc_instance = annonote_graph(
                entities=instance["entity"],
                relations=instance["relation"],
                events=instance["event"],
            )
            # Attach the spot-asoc structure to the instance
            instance["spot_asoc"] = spot_asoc_instance
            # Attach the spot labels to the instance
            instance["spot"] = list(spots)
            # Attach the asoc labels to the instance
            instance["asoc"] = list(asocs)
            output.write(json.dumps(instance, ensure_ascii=False) + "\n")

ff = os.path.join(output_folder,'金融信息_企业破产',"train.json")

ff_instances = [json.loads(line) for line in open(ff, encoding="utf8")]

for i in range(1046, 1050):
    print(ff_instances[i])

a, b, yyj = annonote_graph(
    entities=ff_instances[11000]["entity"],
    relations=ff_instances[11000]["relation"],
    events=ff_instances[11000]["event"],
)

data_folder=output_folder

def merge_schema(schema_list: List[RecordSchema]):
    type_set = set()
    role_set = set()
    type_role_dict = defaultdict(list)

    for schema in schema_list:
        for type_name in schema.type_list:
            type_set.add(type_name)
        for role_name in schema.role_list:
            role_set.add(role_name)
        for type_name in schema.type_role_dict:
            type_role_dict[type_name] += schema.type_role_dict[type_name]

    for type_name in type_role_dict:
        type_role_dict[type_name] = list(set(type_role_dict[type_name]))

    return RecordSchema(
        type_list=list(type_set),
        role_list=list(role_set),
        type_role_dict=type_role_dict,
    )

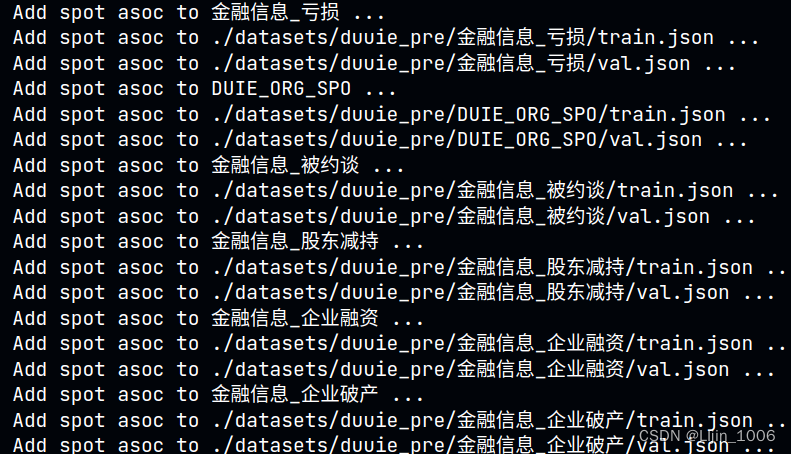

def convert_duuie_to_spotasoc(data_folder, ignore_datasets):
    schema_list = list()
    for task_folder in os.listdir(data_folder):
        # Skip datasets that were explicitly excluded
        if task_folder in ignore_datasets:
            continue
        # Skip anything that is not a directory
        if not os.path.isdir(os.path.join(data_folder, task_folder)):
            continue
        print(f"Add spot asoc to {task_folder} ...")
        # Path to the schema of this single task
        task_schema_file = os.path.join(data_folder, task_folder, "record.schema")
        # Add spot-asoc annotations to the single-task data
        add_spot_asoc_to_single_file(os.path.join(data_folder, task_folder, "train.json"))
        add_spot_asoc_to_single_file(os.path.join(data_folder, task_folder, "val.json"))
        record_schema = RecordSchema.read_from_file(task_schema_file)
        schema_list += [record_schema]
    # Merge the schemas of the different tasks
    multi_schema = merge_schema(schema_list)
    multi_schema.write_to_file(os.path.join(data_folder, "record.schema"))

convert_duuie_to_spotasoc(output_folder,ignore_datasets)