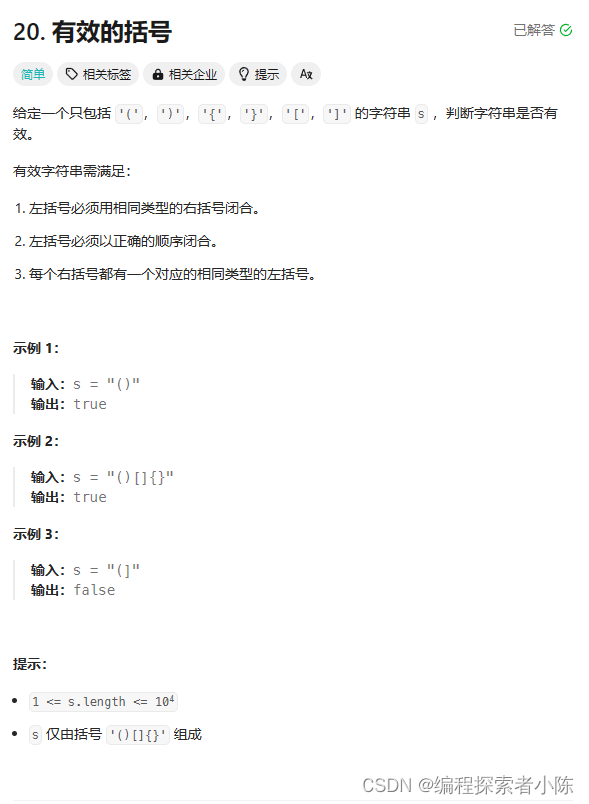

idea上的MapReduce

一般在开发中,若是等到环境搭配好了再进行测试或者统计数据,数据处理等操作,那会很耽误时间,所以一般都是2头跑,1波人去在客户机上搭建环境,1波人通过在idea上搭建虚拟hadoop环境,然后再虚拟环境下编写测试功能代码

使用Java API实现MapReduce经典案例

【案例1:数据去重】

1)配置windows下的hadoop环境变量

步骤1:将hadoop的安装包解压到指定位置(本例指定位置是:C:\Program Files)

步骤2:新建系统环境变量HADOOP_HOME

步骤3:编辑系统环境变量path

步骤4:添加windows系统的依赖文件,在hadoop安装路径下添加winutils.exe,winutils.pdb和hadoop.dll共3个文件

注意:

1)一定要重启电脑让以上配置生效(有时候不用重启也可以)

2)在命令提示符cmd中找不到hadoop的版本不影响后续编程

2)配置好Maven

步骤1:将maven相关文件夹apache-maven-3.6.0放在D盘的根目录

步骤2:使用idea新建maven项目,并做如下maven设置

3)编辑pom.xml文件,添加Maven库依赖

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

</dependency>

</dependencies>

4)Map阶段的实现:编写DedupMapper.java代码 (教材P116

package com.xyzy;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.io.Text;

import java.io.IOException;

public class DedupDriver {

public static void main (String[] args) throws IOException,

ClassNotFoundException, InterruptedException {

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

job.setJarByClass(DedupDriver.class);

job.setMapperClass(DedupMapper.class);

job.setReducerClass(DedupReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(NullWritable.class);

FileInputFormat.setInputPaths(job,new Path("D:/testdata/input"));

FileOutputFormat.setOutputPath(job, new Path("D:/testdata/output2"));

boolean res = job.waitForCompletion(true);

System.exit(res ? 0 : 1);

}

}

5)Reduce阶段的实现:编写DedupReducer.java代码(教材P117)

package com.xyzy;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class DedupMapper extends Mapper<LongWritable, Text, Text,NullWritable> {

private static Text field = new Text();

@ Override

protected void map(LongWritable key, Text value , Context context)

throws IOException, InterruptedException{

field = value;

context.write(field, NullWritable.get());

}

}

6)驱动类的实现:编写DedupDriver.java代码(教材P117)

package com.xyzy;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class DedupReducer extends Reducer<Text,NullWritable, Text,NullWritable> {

@ Override

protected void reduce(Text key, Iterable<NullWritable>value,Context context) throws

IOException, InterruptedException{

context.write(key, NullWritable.get());

}

}

7)要提前在d:/testdata/input中准备好素材(提醒一下output不是自己创建的文件夹,而是运行系统自动生成的!!!)

8)运行后的效果:

自动在d:/testdata/产生目录output,内容如下:

如果已经产生一次结果,若再想使用去重操作,则需要改写结果存储的文件夹名,例如将output改为output1即可