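Feature point matching is a basic but critical task in computer vision, widely used in image stitching, 3D reconstruction, object tracking, and related applications. Traditional methods usually match features extracted at the same scale. In real scenes, however, differences in imaging distance, resolution, and other factors can cause significant scale changes between the images to be matched, and features taken at the original scale alone rarely give satisfactory matches. An effective strategy for this problem is to build an image pyramid and to extract and match features at different levels. This post gives code that uses SuperGlue, a graph-neural-network matching algorithm, to match feature points across pyramid levels, so that information from different scales can be used to improve matching accuracy and robustness.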

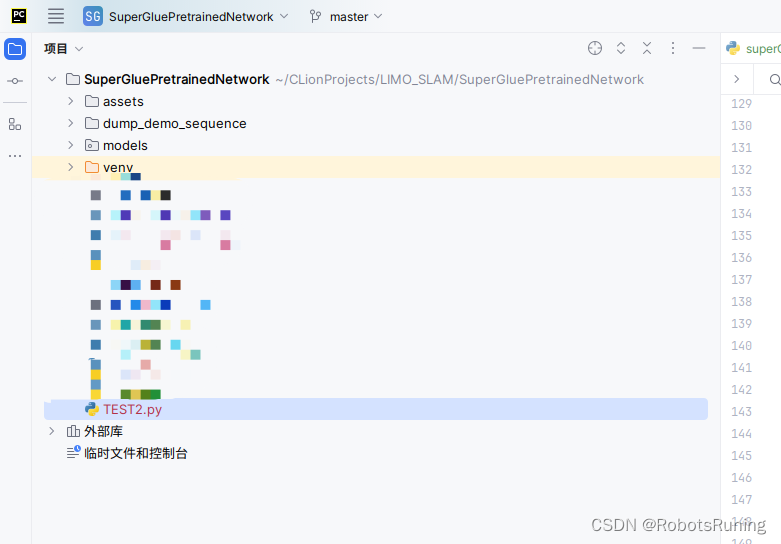

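1. File structure

The script imports `models.matching` and `models.utils`, so it needs to sit next to the `models` package of the SuperGlue code.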

2. Code

    #!/usr/bin/env python3
    import cv2
    import numpy as np
    import torch

    # Provided by the local `models` package of the SuperGlue code
    from models.matching import Matching
    from models.utils import frame2tensor

    # Inference only, so gradient tracking is not needed
    torch.set_grad_enabled(False)

    config = {
        'superpoint': {
            'nms_radius': 4,
            'keypoint_threshold': 0.005,
            'max_keypoints': -1,  # -1 keeps every detected keypoint
        },
        'superglue': {
            'weights': 'outdoor',
            'sinkhorn_iterations': 20,
            'match_threshold': 0.2,
        },
    }

    # Use the GPU when one is available, otherwise fall back to the CPU
    device = 'cuda' if torch.cuda.is_available() else 'cpu'
    matching = Matching(config).eval().to(device)

    # Keys of the SuperPoint output that are passed on to SuperGlue
    keys = ['keypoints', 'scores', 'descriptors']

    def match_frames_with_super_glue(frame0, frame1):
        """Match keypoints between two frames and visualize the result.

        Returns the matched keypoints of both frames and the match confidences.
        """
        print("Matching keypoints with SuperGlue...")

        # Convert the reference frame and the current frame to PyTorch tensors
        frame_tensor0 = frame2tensor(frame0, device)
        frame_tensor1 = frame2tensor(frame1, device)

        # Extract the keypoints of the reference frame with SuperPoint and
        # rename the keys to the '<name>0' form expected by Matching
        last_data = matching.superpoint({'image': frame_tensor0})
        last_data = {k + '0': last_data[k] for k in keys}
        last_data['image0'] = frame_tensor0
        kpts0 = last_data['keypoints0'][0].cpu().numpy()

        # Match the reference frame against the current frame with SuperGlue
        pred = matching({**last_data, 'image1': frame_tensor1})
        kpts1 = pred['keypoints1'][0].cpu().numpy()
        matches = pred['matches0'][0].cpu().numpy()
        confidence = pred['matching_scores0'][0].cpu().numpy()

        # matches[i] is the index in kpts1 matched to kpts0[i], or -1 if unmatched
        valid = matches > -1
        mkpts0 = kpts0[valid]
        mkpts1 = kpts1[matches[valid]]

        # Print the matched coordinate pairs
        for i, (kp0, kp1) in enumerate(zip(mkpts0, mkpts1)):
            print(f"Match {i}: ({kp0[0]:.2f}, {kp0[1]:.2f}) -> ({kp1[0]:.2f}, {kp1[1]:.2f})")

        # Make sure both images have three channels for drawing
        if len(frame0.shape) == 2:
            vis_frame0 = cv2.cvtColor(frame0, cv2.COLOR_GRAY2BGR)
        else:
            vis_frame0 = frame0.copy()
        if len(frame1.shape) == 2:
            vis_frame1 = cv2.cvtColor(frame1, cv2.COLOR_GRAY2BGR)
        else:
            vis_frame1 = frame1.copy()

        # Show the first input image with all of its keypoints
        vis_frame0_with_kpts = vis_frame0.copy()
        for kp in kpts0:
            cv2.circle(vis_frame0_with_kpts, (int(kp[0]), int(kp[1])), 3, (0, 255, 0), -1)
        cv2.imshow("Input Frame 0 with Keypoints", vis_frame0_with_kpts)

        # Show the second input image with all of its keypoints
        vis_frame1_with_kpts = vis_frame1.copy()
        for kp in kpts1:
            cv2.circle(vis_frame1_with_kpts, (int(kp[0]), int(kp[1])), 3, (0, 255, 0), -1)
        cv2.imshow("Input Frame 1 with Keypoints", vis_frame1_with_kpts)

        # Draw only the matched keypoints on the images used for the match view
        for kp in mkpts0:
            cv2.circle(vis_frame0, (int(kp[0]), int(kp[1])), 3, (0, 255, 0), -1)
        for kp in mkpts1:
            cv2.circle(vis_frame1, (int(kp[0]), int(kp[1])), 3, (0, 255, 0), -1)

        # Pad the shorter image with black rows at the top and bottom so that
        # both images have the same height, and remember each top offset
        max_height = max(vis_frame0.shape[0], vis_frame1.shape[0])
        left_pad_top = right_pad_top = 0
        if vis_frame0.shape[0] < max_height:
            diff = max_height - vis_frame0.shape[0]
            left_pad_top = diff // 2
            pad_top = np.zeros((left_pad_top, vis_frame0.shape[1], 3), dtype=np.uint8)
            pad_bottom = np.zeros((diff - left_pad_top, vis_frame0.shape[1], 3), dtype=np.uint8)
            vis_frame0 = np.vstack((pad_top, vis_frame0, pad_bottom))
        if vis_frame1.shape[0] < max_height:
            diff = max_height - vis_frame1.shape[0]
            right_pad_top = diff // 2
            pad_top = np.zeros((right_pad_top, vis_frame1.shape[1], 3), dtype=np.uint8)
            pad_bottom = np.zeros((diff - right_pad_top, vis_frame1.shape[1], 3), dtype=np.uint8)
            vis_frame1 = np.vstack((pad_top, vis_frame1, pad_bottom))

        # Place the images side by side and draw a line for every match,
        # shifting each endpoint by the padding added above its image
        concat_frame = np.hstack((vis_frame0, vis_frame1))
        for kp0, kp1 in zip(mkpts0, mkpts1):
            pt0 = (int(kp0[0]), int(kp0[1]) + left_pad_top)
            pt1 = (int(kp1[0]) + vis_frame0.shape[1], int(kp1[1]) + right_pad_top)
            cv2.line(concat_frame, pt0, pt1, (0, 255, 0), 1)

        # Optionally rescale the visualization (1 keeps the original size)
        scale_factor = 1
        resized_frame = cv2.resize(concat_frame, None, fx=scale_factor, fy=scale_factor)

        # Show the result and wait for a key press
        cv2.imshow("Matched Features", resized_frame)
        cv2.waitKey(0)
        cv2.destroyAllWindows()

        return mkpts0, mkpts1, confidence[valid]
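The filtering step in the function relies on the convention that `matches0[i]` holds the index of the keypoint in the second image matched to keypoint `i` of the first image, or -1 when there is no match. As an illustration only, the same pairing can be written in plain Python. The helper name `pair_matches` and the coordinates below are made up for this sketch and are not network output.

```python
def pair_matches(kpts0, kpts1, matches):
    """Return (kp0, kp1) pairs for every keypoint that has a valid match.

    matches[i] is an index into kpts1, or -1 if kpts0[i] is unmatched.
    """
    pairs = []
    for i, j in enumerate(matches):
        if j > -1:
            pairs.append((kpts0[i], kpts1[j]))
    return pairs


# Made-up example data (hypothetical, for illustration only)
kpts0 = [(10.0, 20.0), (30.0, 40.0), (50.0, 60.0)]
kpts1 = [(52.0, 61.0), (11.0, 19.0)]
matches = [1, -1, 0]

pairs = pair_matches(kpts0, kpts1, matches)
print(pairs)
# [((10.0, 20.0), (11.0, 19.0)), ((50.0, 60.0), (52.0, 61.0))]
```

The NumPy expressions `kpts0[valid]` and `kpts1[matches[valid]]` in the function produce the two sides of these pairs as separate arrays in one step.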

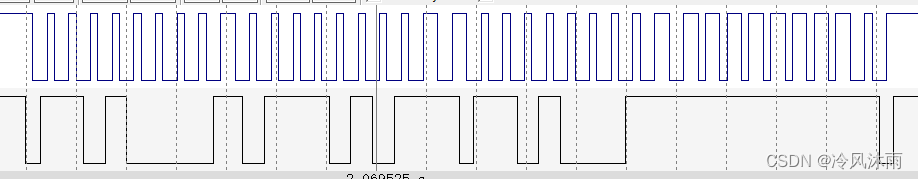

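    def build_pyramid(image, scale=1.2, min_size=(30, 30)):
        """Build an image pyramid by repeatedly shrinking the image by `scale`.

        Level 0 is the input image. Downscaling stops before a level would
        become smaller than min_size, given as (width, height).
        """
        pyramid = [image]
        while True:
            last_image = pyramid[-1]
            width = int(last_image.shape[1] / scale)
            height = int(last_image.shape[0] / scale)
            if width < min_size[0] or height < min_size[1]:
                break
            next_image = cv2.resize(last_image, (width, height))
            pyramid.append(next_image)
        return pyramid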
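To get a feeling for how quickly the levels shrink, the size computation of `build_pyramid` can be reproduced without any image data. The sketch below mirrors the same loop in plain Python; `pyramid_sizes` is a hypothetical helper and the 1241 x 376 input size is only an assumed example.

```python
def pyramid_sizes(width, height, scale=1.2, min_size=(30, 30)):
    """List the (width, height) of every level build_pyramid would produce."""
    sizes = [(width, height)]
    while True:
        w = int(sizes[-1][0] / scale)
        h = int(sizes[-1][1] / scale)
        if w < min_size[0] or h < min_size[1]:
            break
        sizes.append((w, h))
    return sizes


# Assumed input size, chosen only for illustration
sizes = pyramid_sizes(1241, 376)
for level, (w, h) in enumerate(sizes[:3]):
    print(f"level {level}: {w} x {h}")
# level 0: 1241 x 376
# level 1: 1034 x 313
# level 2: 861 x 260
```

Because each level truncates its size to an integer, the ratio between a level and the original is only approximately `scale` raised to the level index.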

    if __name__ == "__main__":
        # Read two frames as grayscale images
        frame0 = cv2.imread("/home/fairlee/786D6A341753F4B4/KITTI/sequences_kitti_00_21/01/image_0/000630.png", 0)
        frame1 = cv2.imread("/home/fairlee/786D6A341753F4B4/KITTI/sequences_kitti_00_21/01/image_0/000631.png", 0)
        if frame0 is None or frame1 is None:
            raise FileNotFoundError("Could not read one of the input images")

        # Build the pyramid of frame1
        pyramid1 = build_pyramid(frame1, scale=1.2)

        # Optionally display the pyramid levels
        # for i, layer in enumerate(pyramid1):
        #     cv2.imshow(f"Layer {i}", layer)
        #     cv2.waitKey(500)  # show each level for 500 ms
        # cv2.destroyAllWindows()

        # Use a pyramid level in place of frame1. Index 2 is the level after two
        # downscaling steps, roughly 1 / 1.2**2 = 0.69 of the original size
        frame1_substitute = pyramid1[2]

        # Match frame0 against the selected pyramid level
        mkpts0, mkpts1, confidence = match_frames_with_super_glue(frame0, frame1_substitute)

        # Print a summary of the matching result
        print(f"Matched keypoints in frame 0: {len(mkpts0)}")
        print(f"Matched keypoints in frame 1: {len(mkpts1)}")
        print(f"Number of confidence values: {len(confidence)}")
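Since `frame1_substitute` is a downscaled copy of `frame1`, the coordinates in `mkpts1` refer to the pyramid level, not to the original image. If original-resolution coordinates are needed, one possible approach is to scale them by the size ratio of the two images. This is a sketch under that assumption and not part of the original code; `to_original_coords` is a hypothetical helper, and it ignores sub-pixel effects of the resize interpolation.

```python
def to_original_coords(kpts, level_shape, original_shape):
    """Map (x, y) keypoints from a pyramid level back to the original image.

    level_shape and original_shape are (height, width), as in image.shape[:2].
    The per-axis size ratio is used instead of scale**level because
    build_pyramid truncates the size to an integer at every level.
    """
    sx = original_shape[1] / level_shape[1]
    sy = original_shape[0] / level_shape[0]
    return [(x * sx, y * sy) for x, y in kpts]


# Made-up keypoints on a 260 x 861 level of a 376 x 1241 image
restored = to_original_coords([(0.0, 0.0), (430.5, 130.0)], (260, 861), (376, 1241))
print(restored)
```

In the script this could be called as `to_original_coords(mkpts1, frame1_substitute.shape[:2], frame1.shape[:2])`, since iterating over an N x 2 array yields one (x, y) row at a time.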

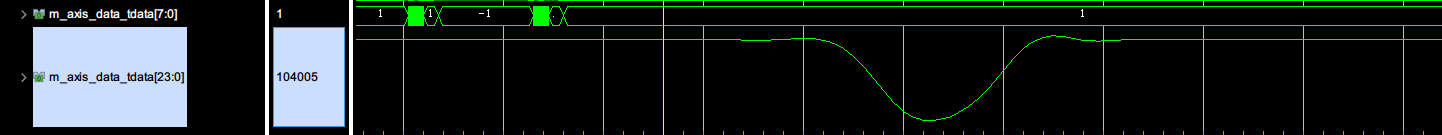

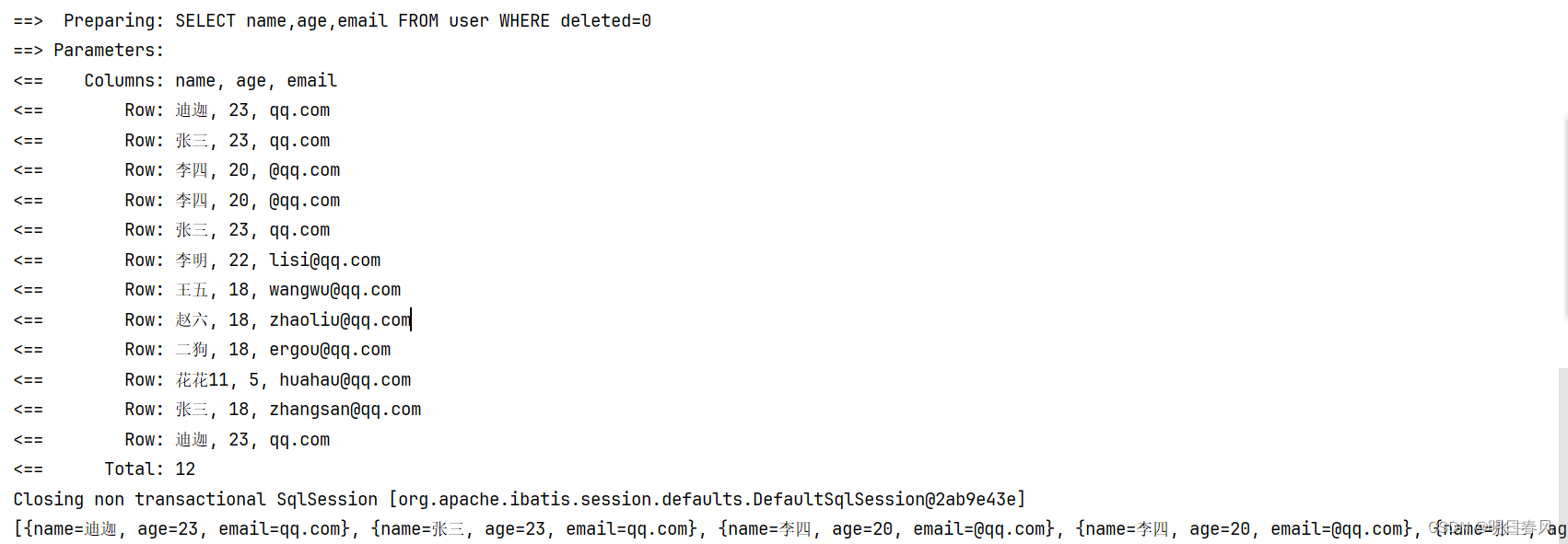

3. Results

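The code above shows the full procedure: a suitable pyramid level is chosen as a substitute for the image to be matched, which makes feature matching across scales possible. The results indicate that this approach copes well with images that differ significantly in scale and yields a considerable number of matches with high confidence, providing a solid basis for later tasks such as image stitching and 3D reconstruction. Its strength lies in combining the multi-scale representation of an image pyramid with the matching ability of SuperGlue, which offers a practical approach to feature matching in complex scenes.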
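The example picks the level `pyramid1[2]` by hand. One possible way to choose the level automatically is to match against every level and keep the one with the most matches. The sketch below assumes that the number of matches is a reasonable selection criterion, which the original post does not state. `select_best_level` and `fake_match` are hypothetical names, and the matcher is passed in as a parameter so that the example runs without the network.

```python
def select_best_level(frame0, pyramid, match_fn):
    """Return (level index, match count) of the level with the most matches.

    match_fn(frame0, layer) must return a tuple whose first element is the
    sequence of matched keypoints, as match_frames_with_super_glue does.
    Ties are resolved in favor of the earlier (larger) level.
    """
    best_level, best_count = -1, -1
    for level, layer in enumerate(pyramid):
        count = len(match_fn(frame0, layer)[0])
        if count > best_count:
            best_level, best_count = level, count
    return best_level, best_count


# Stand-in matcher for demonstration only: "frames" are plain numbers and
# the match count drops as the two numbers move apart
def fake_match(frame0, layer):
    n = max(0, 10 - abs(frame0 - layer))
    return [None] * n, [None] * n, [1.0] * n


print(select_best_level(7, [12, 10, 8, 6], fake_match))
# (2, 9)
```

With the real matcher, note that `match_frames_with_super_glue` opens windows and waits for a key press on every call, so a variant without the visualization would be more practical inside such a loop.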