Quantization, inference, and deployment of LLMs with llama.cpp

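- Format conversion, quantization, inference, and deployment of large models
- Overview
- Clone and build
- Environment setup
- Model format conversion
- GGUF format
- bin format
- Model quantization
- Model loading and inference
- Model API service
- Model API service (third-party)
- GPU inference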

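Format conversion, quantization, inference, and deployment of large models

Overview

The main goal of llama.cpp is to enable LLM inference on a wide range of hardware with minimal setup and state-of-the-art performance. It provides 1.5-bit, 2-bit, 3-bit, 4-bit, 5-bit, 6-bit, and 8-bit integer quantization to speed up inference and reduce memory use.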

GitHub:https://github.com/ggerganov/llama.cpp

Clone and build

Clone the latest llama.cpp repository:

git clone https://github.com/ggerganov/llama.cpp

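Build the llama.cpp project. The build produces a set of executables in the project directory:

main: runs inference with a model

quantize: quantizes a model

server: serves a model over an HTTP API

1. Build for CPU execution. This is the simplest setup and suits systems without a GPU.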

cd llama.cpp

make

2. Build for GPU execution. This requires the CUDA toolkit and suits systems with an NVIDIA GPU.

If nvidia-smi and nvcc --version both run without errors, CUDA is set up correctly.

make clean && make LLAMA_CUDA=1
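The CUDA check described for this step can also be scripted. The sketch below is an illustration only and not part of llama.cpp: the helper names `find_cuda_tools` and `cuda_ready` are made up here. It only reports whether `nvidia-smi` and `nvcc` are on PATH; it does not verify that the driver and toolkit versions are compatible.

```python
import shutil


def find_cuda_tools(tools=("nvidia-smi", "nvcc")):
    """Return {tool: absolute path or None} for each tool looked up on PATH."""
    return {tool: shutil.which(tool) for tool in tools}


def cuda_ready(found):
    """True only when every requested tool was found on PATH."""
    return all(path is not None for path in found.values())


# Example usage:
#   found = find_cuda_tools()
#   print(found, "ready" if cuda_ready(found) else "CUDA toolkit missing")
```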

3. If the build fails or you need to rebuild, clean the build outputs and compile again, repeating until the build succeeds.

make clean

Environment setup

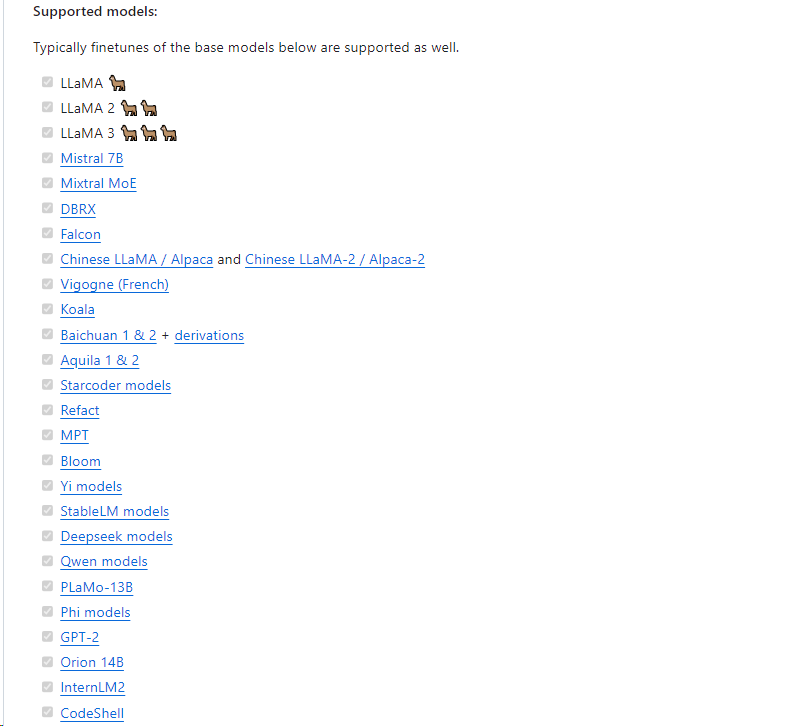

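1. Download a supported model

To use llama.cpp, first obtain a model that it supports. The official documentation lists the supported models; the screenshot above shows only part of that list.

2. Install dependencies

The llama.cpp project ships a requirements.txt file, so the dependencies can be installed directly.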

pip install -r requirements.txt

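Model format conversion

Depending on the model architecture, use either convert.py or convert-hf-to-gguf.py.

The conversion script reads the model configuration, the tokenizer, and the tensor names and data, and converts them into GGUF metadata and tensors.

GGUF format

Llama 3 expands the vocabulary substantially compared with the previous two generations, from 32K to 128K tokens, and switches to a BPE vocabulary. The --vocab-type parameter therefore has to specify the tokenization algorithm: the default is spm, so bpe must be set explicitly.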

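Note:

The official documentation says convert.py does not support LLaMA 3 and recommends convert-hf-to-gguf.py instead. However, convert-hf-to-gguf.py does not accept --vocab-type and fails with error: unrecognized arguments: --vocab-type bpe, so convert.py was used here and worked without problems.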
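Choosing between spm and bpe can be automated with a small heuristic. The sketch below is an assumption-laden illustration, not how convert.py decides: the helper `guess_vocab_type` is hypothetical, and it relies on the common Hugging Face layout where a SentencePiece model ships as `tokenizer.model` and a BPE tokenizer is described in `tokenizer.json` under `model.type`.

```python
import json
from pathlib import Path


def guess_vocab_type(model_dir):
    """Guess a --vocab-type value ("spm" or "bpe") from the files in model_dir.

    Heuristic only: returns None when neither convention is recognised.
    """
    model_dir = Path(model_dir)
    # A SentencePiece model file takes priority when present.
    if (model_dir / "tokenizer.model").exists():
        return "spm"
    tok_json = model_dir / "tokenizer.json"
    if tok_json.exists():
        with open(tok_json, encoding="utf-8") as f:
            data = json.load(f)
        if data.get("model", {}).get("type") == "BPE":
            return "bpe"
    return None
```

For the Llama 3 directory used here, the conversion log reports that the vocabulary was loaded from tokenizer.json with type 'bpe', which is the case this heuristic maps to `bpe`.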

Use the convert.py script from the llama.cpp project to convert the model to GGUF format:

root@master:~/work/llama.cpp# python3 ./convert.py /root/work/models/Llama3-Chinese-8B-Instruct/ --outtype f16 --vocab-type bpe --outfile ./models/Llama3-FP16.gguf

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00001-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00001-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00002-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00003-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00004-of-00004.safetensors

INFO:convert:model parameters count : 8030261248 (8B)

INFO:convert:params = Params(n_vocab=128256, n_embd=4096, n_layer=32, n_ctx=8192, n_ff=14336, n_head=32, n_head_kv=8, n_experts=None, n_experts_used=None, f_norm_eps=1e-05, rope_scaling_type=None, f_rope_freq_base=500000.0, f_rope_scale=None, n_orig_ctx=None, rope_finetuned=None, ftype=<GGMLFileType.MostlyF16: 1>, path_model=PosixPath('/root/work/models/Llama3-Chinese-8B-Instruct'))

INFO:convert:Loaded vocab file PosixPath('/root/work/models/Llama3-Chinese-8B-Instruct/tokenizer.json'), type 'bpe'

INFO:convert:Vocab info: <BpeVocab with 128000 base tokens and 256 added tokens>

INFO:convert:Special vocab info: <SpecialVocab with 280147 merges, special tokens {'bos': 128000, 'eos': 128001}, add special tokens unset>

INFO:convert:Writing models/Llama3-FP16.gguf, format 1

WARNING:convert:Ignoring added_tokens.json since model matches vocab size without it.

INFO:gguf.gguf_writer:gguf: This GGUF file is for Little Endian only

INFO:gguf.vocab:Adding 280147 merge(s).

INFO:gguf.vocab:Setting special token type bos to 128000

INFO:gguf.vocab:Setting special token type eos to 128001

INFO:gguf.vocab:Setting chat_template to {% set loop_messages = messages %}{% for message in loop_messages %}{% set content = '<|start_header_id|>' + message['role'] + '<|end_header_id|>

'+ message['content'] | trim + '<|eot_id|>' %}{% if loop.index0 == 0 %}{% set content = bos_token + content %}{% endif %}{{ content }}{% endfor %}{{ '<|start_header_id|>assistant<|end_header_id|>

' }}

INFO:convert:[ 1/291] Writing tensor token_embd.weight | size 128256 x 4096 | type F16 | T+ 1

INFO:convert:[ 2/291] Writing tensor blk.0.attn_norm.weight | size 4096 | type F32 | T+ 2

INFO:convert:[ 3/291] Writing tensor blk.0.ffn_down.weight | size 4096 x 14336 | type F16 | T+ 2

INFO:convert:[ 4/291] Writing tensor blk.0.ffn_gate.weight | size 14336 x 4096 | type F16 | T+ 2

INFO:convert:[ 5/291] Writing tensor blk.0.ffn_up.weight | size 14336 x 4096 | type F16 | T+ 2

INFO:convert:[ 6/291] Writing tensor blk.0.ffn_norm.weight | size 4096 | type F32 | T+ 2

INFO:convert:[ 7/291] Writing tensor blk.0.attn_k.weight | size 1024 x 4096 | type F16 | T+ 2

INFO:convert:[ 8/291] Writing tensor blk.0.attn_output.weight | size 4096 x 4096 | type F16 | T+ 2

INFO:convert:[ 9/291] Writing tensor blk.0.attn_q.weight | size 4096 x 4096 | type F16 | T+ 3

INFO:convert:[ 10/291] Writing tensor blk.0.attn_v.weight | size 1024 x 4096 | type F16 | T+ 3

INFO:convert:[ 11/291] Writing tensor blk.1.attn_norm.weight | size 4096 | type F32 | T+ 3

The model converted to FP16 GGUF format is about 15 GB.

root@master:~/work/llama.cpp# ll models -h

-rw-r--r-- 1 root root 15G May 17 07:47 Llama3-FP16.gguf
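The parameter count and tensor shapes in the conversion log can be reproduced from the Params line. The sketch below is a back-of-the-envelope check, not code from convert.py: the function name is made up, and it assumes a standard Llama layer layout with a separate (untied) output projection, which the truncated log excerpt does not show directly.

```python
def llama_param_count(n_vocab, n_embd, n_layer, n_ff, n_head, n_head_kv):
    """Count weights for a Llama-style model with grouped-query attention."""
    head_dim = n_embd // n_head          # 4096 / 32 = 128
    kv_dim = head_dim * n_head_kv        # 128 * 8 = 1024, matching attn_k / attn_v
    per_layer = (
        n_embd * n_embd                  # attn_q
        + kv_dim * n_embd                # attn_k
        + kv_dim * n_embd                # attn_v
        + n_embd * n_embd                # attn_output
        + 3 * n_ff * n_embd              # ffn_gate, ffn_up, ffn_down
        + 2 * n_embd                     # attn_norm, ffn_norm
    )
    return (
        n_vocab * n_embd                 # token_embd
        + n_layer * per_layer
        + n_embd                         # output_norm
        + n_vocab * n_embd               # output projection (assumed untied)
    )


def llama_tensor_count(n_layer):
    """Nine tensors per layer plus token_embd, output_norm, and output."""
    return 9 * n_layer + 3


total = llama_param_count(
    n_vocab=128256, n_embd=4096, n_layer=32, n_ff=14336, n_head=32, n_head_kv=8
)
print(total, llama_tensor_count(32))
print(f"FP16 size: {total * 2 / 2**30:.2f} GiB")
```

Under these assumptions the total matches the 8030261248 parameters and 291 tensors reported in the log, and two bytes per weight gives roughly the 15G file listed above.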

bin format

root@master:~/work/llama.cpp# python3 ./convert.py /root/work/models/Llama3-Chinese-8B-Instruct/ --outtype f16 --vocab-type bpe --outfile ./models/Llama3-FP16.bin

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00001-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00001-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00002-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00003-of-00004.safetensors

INFO:convert:Loading model file /root/work/models/Llama3-Chinese-8B-Instruct/model-00004-of-00004.safetensors

INFO:convert:model parameters count : 8030261248 (8B)

INFO:convert:params = Params(n_vocab=128256, n_embd=4096, n_layer=32, n_ctx=8192, n_ff=14336, n_head=32, n_head_kv=8, n_experts=None, n_experts_used=None, f_norm_eps=1e-05, rope_scaling_type=None, f_rope_freq_base=500000.0, f_rope_scale=None, n_orig_ctx=None, rope_finetuned=None, ftype=<GGMLFileType.MostlyF16: 1>, path_model=PosixPath('/root/work/models/Llama3-Chinese-8B-Instruct'))

INFO:convert:Loaded vocab file PosixPath('/root/work/models/Llama3-Chinese-8B-Instruct/tokenizer.json'), type 'bpe'

INFO:convert:Vocab info: <BpeVocab with 128000 base tokens and 256 added tokens>

INFO:convert:Special vocab info: <SpecialVocab with 280147 merges, special tokens {'bos': 128000, 'eos': 128001}, add special tokens unset>

INFO:convert:Writing models/Llama3-FP16.bin, format 1

WARNING:convert:Ignoring added_tokens.json since model matches vocab size without it.

INFO:gguf.gguf_writer:gguf: This GGUF file is for Little Endian only

INFO:gguf.vocab:Adding 280147 merge(s).

INFO:gguf.vocab:Setting special token type bos to 128000

INFO:gguf.vocab:Setting special token type eos to 128001

INFO:gguf.vocab:Setting chat_template to {% set loop_messages = messages %}{% for message in loop_messages %}{% set content = '<|start_header_id|>' + message['role'] + '<|end_header_id|>

'+ message['content'] | trim + '<|eot_id|>' %}{% if loop.index0 == 0 %}{% set content = bos_token + content %}{% endif %}{{ content }}{% endfor %}{{ '<|start_header_id|>assistant<|end_header_id|>

' }}

INFO:convert:[ 1/291] Writing tensor token_embd.weight | size 128256 x 4096 | type F16 | T+ 4

INFO:convert:[ 2/291] Writing tensor blk.0.attn_norm.weight | size 4096 | type F32 | T+ 4

INFO:convert:[ 3/291] Writing tensor blk.0.ffn_down.weight | size 4096 x 14336 | type F16 | T+ 4

INFO:convert:[ 4/291] Writing tensor blk.0.ffn_gate.weight | size 14336 x 4096 | type F16 | T+ 5

INFO:convert:[ 5/291] Writing tensor blk.0.ffn_up.weight | size 14336 x 4096 | type F16 | T+ 5

INFO:convert:[ 6/291] Writing tensor blk.0.ffn_norm.weight | size 4096 | type F32 | T+ 5

INFO:convert:[ 7/291] Writing tensor blk.0.attn_k.weight | size 1024 x 4096 | type F16 | T+ 5

INFO:convert:[ 8/291] Writing tensor blk.0.attn_output.weight | size 4096 x 4096 | type F16 | T+ 5

INFO:convert:[ 9/291] Writing tensor blk.0.attn_q.weight | size 4096 x 4096 | type F16 | T+ 5

INFO:convert:[ 10/291] Writing tensor blk.0.attn_v.weight | size 1024 x 4096 | type F16 | T+ 5

INFO:convert:[ 11/291] Writing tensor blk.1.attn_norm.weight | size 4096 | type F32 | T+ 5

INFO:convert:[ 12/291] Writing tensor blk.1.ffn_down.weight | size 4096 x 14336 | type F16 | T+ 5

INFO:convert:[ 13/291] Writing tensor blk.1.ffn_gate.weight | size 14336 x 4096 | type F16 | T+ 5

root@master:~/work/llama.cpp# ll models -h

-rw-r--r-- 1 root root 15G May 17 07:47 Llama3-FP16.gguf

-rw-r--r-- 1 root root 15G May 17 08:02 Llama3-FP16.bin
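The log for the .bin output still mentions a GGUF writer, which suggests the two files differ only in extension. One way to check is to read the fixed-size file header. The sketch below assumes the little-endian GGUF v2/v3 header layout (4-byte magic, uint32 version, uint64 tensor count, uint64 metadata key-value count); the function names are illustrative.

```python
import struct

GGUF_MAGIC = b"GGUF"
_HEADER = struct.Struct("<4sIQQ")  # magic, version, tensor_count, metadata_kv_count


def parse_gguf_header(data):
    """Parse the first 24 bytes of a GGUF file into a dict."""
    if len(data) < _HEADER.size:
        raise ValueError("not enough bytes for a GGUF header")
    magic, version, n_tensors, n_kv = _HEADER.unpack(data[: _HEADER.size])
    if magic != GGUF_MAGIC:
        raise ValueError(f"unexpected magic {magic!r}, not a GGUF file")
    return {
        "version": version,
        "tensor_count": n_tensors,
        "metadata_kv_count": n_kv,
    }


def read_gguf_header(path):
    """Read and parse the header of the file at path."""
    with open(path, "rb") as f:
        return parse_gguf_header(f.read(_HEADER.size))


# Example usage (paths from this walkthrough):
#   print(read_gguf_header("models/Llama3-FP16.gguf"))
#   print(read_gguf_header("models/Llama3-FP16.bin"))
```

If both calls succeed and report the same counts, the .bin file is a GGUF container under a different name.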

Model quantization

Model quantization uses the quantize command. Its available options and supported quantization types are listed below:

root@master:~/work/llama.cpp# ./quantize

usage: ./quantize [--help] [--allow-requantize] [--leave-output-tensor] [--pure] [--imatrix] [--include-weights] [--exclude-weights] [--output-tensor-type] [--token-embedding-type] [--override-kv] model-f32.gguf [model-quant.gguf] type [nthreads]

--allow-requantize: Allows requantizing tensors that have already been quantized. Warning: This can severely reduce quality compared to quantizing from 16bit or 32bit

--leave-output-tensor: Will leave output.weight un(re)quantized. Increases model size but may also increase quality, especially when requantizing

--pure: Disable k-quant mixtures and quantize all tensors to the same type

--imatrix file_name: use data in file_name as importance matrix for quant optimizations

--include-weights tensor_name: use importance matrix for this/these tensor(s)

--exclude-weights tensor_name: use importance matrix for this/these tensor(s)

--output-tensor-type ggml_type: use this ggml_type for the output.weight tensor

--token-embedding-type ggml_type: use this ggml_type for the token embeddings tensor

--keep-split: will generate quatized model in the same shards as input

--override-kv KEY=TYPE:VALUE

Advanced option to override model metadata by key in the quantized model. May be specified multiple times.

Note: --include-weights and --exclude-weights cannot be used together

Allowed quantization types:

2 or Q4_0 : 3.56G, +0.2166 ppl @ LLaMA-v1-7B

3 or Q4_1 : 3.90G, +0.1585 ppl @ LLaMA-v1-7B

8 or Q5_0 : 4.33G, +0.0683 ppl @ LLaMA-v1-7B

9 or Q5_1 : 4.70G, +0.0349 ppl @ LLaMA-v1-7B

19 or IQ2_XXS : 2.06 bpw quantization

20 or IQ2_XS : 2.31 bpw quantization

28 or IQ2_S : 2.5 bpw quantization

29 or IQ2_M : 2.7 bpw quantization

24 or IQ1_S : 1.56 bpw quantization

31 or IQ1_M : 1.75 bpw quantization

10 or Q2_K : 2.63G, +0.6717 ppl @ LLaMA-v1-7B

21 or Q2_K_S : 2.16G, +9.0634 ppl @ LLaMA-v1-7B

23 or IQ3_XXS : 3.06 bpw quantization

26 or IQ3_S : 3.44 bpw quantization

27 or IQ3_M : 3.66 bpw quantization mix

12 or Q3_K : alias for Q3_K_M

22 or IQ3_XS : 3.3 bpw quantization

11 or Q3_K_S : 2.75G, +0.5551 ppl @ LLaMA-v1-7B

12 or Q3_K_M : 3.07G, +0.2496 ppl @ LLaMA-v1-7B

13 or Q3_K_L : 3.35G, +0.1764 ppl @ LLaMA-v1-7B

25 or IQ4_NL : 4.50 bpw non-linear quantization

30 or IQ4_XS : 4.25 bpw non-linear quantization

15 or Q4_K : alias for Q4_K_M

14 or Q4_K_S : 3.59G, +0.0992 ppl @ LLaMA-v1-7B

15 or Q4_K_M : 3.80G, +0.0532 ppl @ LLaMA-v1-7B

17 or Q5_K : alias for Q5_K_M

16 or Q5_K_S : 4.33G, +0.0400 ppl @ LLaMA-v1-7B

17 or Q5_K_M : 4.45G, +0.0122 ppl @ LLaMA-v1-7B

18 or Q6_K : 5.15G, +0.0008 ppl @ LLaMA-v1-7B

7 or Q8_0 : 6.70G, +0.0004 ppl @ LLaMA-v1-7B

1 or F16 : 14.00G, -0.0020 ppl @ Mistral-7B

32 or BF16 : 14.00G, -0.0050 ppl @ Mistral-7B

0 or F32 : 26.00G @ 7B

COPY : only copy tensors, no quantizing

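The quantize tool produces models at various bit widths: Q2, Q3, Q4, Q5, Q6, Q8, and F16.

Quantized model names follow the pattern Q + number of bits + variant. Fewer bits mean lower hardware requirements but also lower model accuracy.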
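The naming pattern can be made concrete with a small parser. This is a sketch for illustration: the helper `parse_quant_name` and its regular expression are not part of llama.cpp, and the bit count it returns is the nominal one from the name (the listing above shows, for example, that IQ2_XXS actually uses 2.06 bits per weight).

```python
import re

# Optional "I" prefix, "Q", one digit for the nominal bit width, then the variant.
_QUANT_NAME = re.compile(r"^(I?Q)(\d)_(\w+)$")


def parse_quant_name(name):
    """Split a name such as "Q4_K_M" into family, nominal bits, and variant.

    Returns None for names that do not follow the pattern (for example "F16").
    """
    m = _QUANT_NAME.match(name.upper())
    if m is None:
        return None
    family, bits, variant = m.groups()
    return {"family": family, "bits": int(bits), "variant": variant}


for name in ("Q4_K_M", "Q8_0", "IQ2_XXS", "F16"):
    print(name, parse_quant_name(name))
```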

After quantization, the model shrinks from 15 GB to 8 GB, while the weight precision drops from 16-bit floating point to 8-bit integers.

root@master:~/work/llama.cpp# ./quantize ./models/Llama3-FP16.gguf ./models/Llama3-q8.gguf q8_0

main: build = 2908 (359cbe3f)

main: built with cc (Ubuntu 11.4.0-1ubuntu1~22.04) 11.4.0 for x86_64-linux-gnu

main: quantizing '/root/work/models/Llama3-FP16.gguf' to '/root/work/models/Llama3-q8.gguf' as Q8_0

llama_model_loader: loaded meta data with 21 key-value pairs and 291 tensors from /root/work/models/Llama3-FP16.gguf (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = llama

llama_model_loader: - kv 1: general.name str = Llama3-Chinese-8B-Instruct

llama_model_loader: - kv 2: llama.vocab_size u32 = 128256

llama_model_loader: - kv 3: llama.context_length u32 = 8192

llama_model_loader: - kv 4: llama.embedding_length u32 = 4096

llama_model_loader: - kv 5: llama.block_count u32 = 32

llama_model_loader: - kv 6: llama.feed_forward_length u32 = 14336

llama_model_loader: - kv 7: llama.rope.dimension_count u32 = 128

llama_model_loader: - kv 8: llama.attention.head_count u32 = 32

llama_model_loader: - kv 9: llama.attention.head_count_kv u32 = 8

llama_model_loader: - kv 10: llama.attention.layer_norm_rms_epsilon f32 = 0.000010

llama_model_loader: - kv 11: llama.rope.freq_base f32 = 500000.000000

llama_model_loader: - kv 12: general.file_type u32 = 1

llama_model_loader: - kv 13: tokenizer.ggml.model str = gpt2

llama_model_loader: - kv 14: tokenizer.ggml.tokens arr[str,128256] = ["!", "\"", "#", "$", "%", "&", "'", ...

llama_model_loader: - kv 15: tokenizer.ggml.scores arr[f32,128256] = [0.000000, 0.000000, 0.000000, 0.0000...

llama_model_loader: - kv 16: tokenizer.ggml.token_type arr[i32,128256] = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, ...

llama_model_loader: - kv 17: tokenizer.ggml.merges arr[str,280147] = ["Ġ Ġ", "Ġ ĠĠĠ", "ĠĠ ĠĠ", "...

llama_model_loader: - kv 18: tokenizer.ggml.bos_token_id u32 = 128000

llama_model_loader: - kv 19: tokenizer.ggml.eos_token_id u32 = 128001

llama_model_loader: - kv 20: tokenizer.chat_template str = {% set loop_messages = messages %}{% ...

llama_model_loader: - type f32: 65 tensors

llama_model_loader: - type f16: 226 tensors

[ 1/ 291] token_embd.weight - [ 4096, 128256, 1, 1], type = f16, converting to q8_0 .. size = 1002.00 MiB -> 532.31 MiB

[ 2/ 291] blk.0.attn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 3/ 291] blk.0.ffn_down.weight - [14336, 4096, 1, 1], type = f16, converting to q8_0 .. size = 112.00 MiB -> 59.50 MiB

[ 4/ 291] blk.0.ffn_gate.weight - [ 4096, 14336, 1, 1], type = f16, converting to q8_0 .. size = 112.00 MiB -> 59.50 MiB

[ 5/ 291] blk.0.ffn_up.weight - [ 4096, 14336, 1, 1], type = f16, converting to q8_0 .. size = 112.00 MiB -> 59.50 MiB

[ 6/ 291] blk.0.ffn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 7/ 291] blk.0.attn_k.weight - [ 4096, 1024, 1, 1], type = f16, converting to q8_0 .. size = 8.00 MiB -> 4.25 MiB

[ 8/ 291] blk.0.attn_output.weight - [ 4096, 4096, 1, 1], type = f16, converting to q8_0 .. size = 32.00 MiB -> 17.00 MiB

[ 9/ 291] blk.0.attn_q.weight - [ 4096, 4096, 1, 1], type = f16, converting to q8_0 .. size = 32.00 MiB -> 17.00 MiB

[ 10/ 291] blk.0.attn_v.weight - [ 4096, 1024, 1, 1], type = f16, converting to q8_0 .. size = 8.00 MiB -> 4.25 MiB

[ 11/ 291] blk.1.attn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 12/ 291] blk.1.ffn_down.weight - [14336, 4096, 1, 1], type = f16, converting to q8_0 .. size = 112.00 MiB -> 59.50 MiB

[ 13/ 291] blk.1.ffn_gate.weight - [ 4096, 14336, 1, 1], type = f16, converting to q8_0 .. size = 112.00 MiB -> 59.50 MiB

[ 14/ 291] blk.1.ffn_up.weight - [ 4096, 14336, 1, 1], type = f16, converting to q8_0 .. size = 112.00 MiB -> 59.50 MiB

[ 15/ 291] blk.1.ffn_norm.weight - [ 4096, 1, 1, 1], type = f32, size = 0.016 MB

[ 16/ 291] blk.1.attn_k.weight - [ 4096, 1024, 1, 1], type = f16, converting to q8_0 .. size = 8.00 MiB -> 4.25 MiB

[ 17/ 291] blk.1.attn_output.weight - [ 4096, 4096, 1, 1], type = f16, converting to q8_0 .. size = 32.00 MiB -> 17.00 MiB

[ 18/ 291] blk.1.attn_q.weight - [ 4096, 4096, 1, 1], type = f16, converting to q8_0 .. size = 32.00 MiB -> 17.00 MiB

[ 19/ 291] blk.1.attn_v.weight - [ 4096, 1024, 1, 1], type = f16, converting to q8_0 .. size = 8.00 MiB -> 4.25 MiB
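The size ratios in this log follow from how Q8_0 stores weights: in blocks of 32 values, each block holding one 16-bit scale and 32 signed 8-bit integers. The pure-Python sketch below illustrates that scheme under those assumptions; it is a simplified model, not the ggml implementation (rounding of exact ties may differ, for instance).

```python
import struct

QK8_0 = 32                      # weights per block
Q8_0_BLOCK_BYTES = 2 + QK8_0    # one fp16 scale + 32 int8 values


def quantize_block_q8_0(values):
    """Quantize 32 floats to (scale, list of ints in [-127, 127])."""
    if len(values) != QK8_0:
        raise ValueError(f"expected {QK8_0} values")
    amax = max(abs(v) for v in values)
    d = amax / 127.0
    inv = 1.0 / d if d else 0.0
    qs = [max(-127, min(127, round(v * inv))) for v in values]
    # The stored scale is rounded to half precision.
    d16 = struct.unpack("<e", struct.pack("<e", d))[0]
    return d16, qs


def dequantize_block_q8_0(d, qs):
    """Reconstruct approximate floats from a quantized block."""
    return [d * q for q in qs]


def q8_0_size_bytes(n_elements):
    """Storage for a tensor whose element count is a multiple of 32."""
    return n_elements // QK8_0 * Q8_0_BLOCK_BYTES


block = [(i - 16) / 10 for i in range(QK8_0)]
d, qs = quantize_block_q8_0(block)
restored = dequantize_block_q8_0(d, qs)
max_err = max(abs(a - b) for a, b in zip(block, restored))
print(f"scale={d:.6f} max_err={max_err:.6f}")
print(f"token_embd: {q8_0_size_bytes(128256 * 4096) / 2**20:.2f} MiB")
```

With 34 bytes per 32 weights (8.5 bits per weight), the 128256 x 4096 embedding tensor comes to 532.31 MiB, the figure shown in the first log line.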

root@master:~/work/llama.cpp# ll -h models/

-rw-r--r-- 1 root root 8.0G May 17 07:54 Llama3-q8.gguf
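The file sizes before and after quantization can be estimated from the parameter count and the bits per weight of each type. The sketch below is a rough estimate with assumed per-type values derived from the block layouts (Q8_0: 34 bytes per 32 weights; Q4_0: 18 bytes per 32 weights); it treats every tensor as quantized and ignores metadata, so real files differ slightly.

```python
# Approximate bits per weight; values for Q8_0 and Q4_0 include the per-block scale.
BITS_PER_WEIGHT = {"F32": 32.0, "F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def estimate_size_gib(n_params, quant_type):
    """Estimate model file size in GiB for a given quantization type."""
    bpw = BITS_PER_WEIGHT[quant_type]
    return n_params * bpw / 8 / 2**30


N_PARAMS = 8030261248  # from the conversion log
for qtype in ("F16", "Q8_0", "Q4_0"):
    print(f"{qtype}: {estimate_size_gib(N_PARAMS, qtype):.2f} GiB")
```

For this model the estimate gives just under 15 GiB for F16 and just under 8 GiB for Q8_0, in line with the directory listings.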

Model loading and inference

Model loading and inference use the main command, which supports the following options:

root@master:~/work/llama.cpp# ./main -h

usage: ./main [options]

options:

-h, --help show this help message and exit

--version show version and build info

-i, --interactive run in interactive mode

--interactive-specials allow special tokens in user text, in interactive mode

--interactive-first run in interactive mode and wait for input right away

-cnv, --conversation run in conversation mode (does not print special tokens and suffix/prefix)

-ins, --instruct run in instruction mode (use with Alpaca models)

-cml, --chatml run in chatml mode (use with ChatML-compatible models)

--multiline-input allows you to write or paste multiple lines without ending each in '\'

-r PROMPT, --reverse-prompt PROMPT

halt generation at PROMPT, return control in interactive mode

(can be specified more than once for multiple prompts).

--color colorise output to distinguish prompt and user input from generations

-s SEED, --seed SEED RNG seed (default: -1, use random seed for < 0)

-t N, --threads N number of threads to use during generation (default: 30)

-tb N, --threads-batch N

number of threads to use during batch and prompt processing (default: same as --threads)

-td N, --threads-draft N number of threads to use during generation (default: same as --threads)

-tbd N, --threads-batch-draft N

number of threads to use during batch and prompt processing (default: same as --threads-draft)

-p PROMPT, --prompt PROMPT

prompt to start generation with (default: empty)

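Either the unquantized pre-trained model or a quantized model can be loaded; the quantized model is used here.

From the root of the llama.cpp project, run the following command to load the model and start inference.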

root@master:~/work/llama.cpp# ./main -m models/Llama3-q8.gguf --color -f prompts/alpaca.txt -ins -c 2048 --temp 0.2 -n 256 --repeat_penalty 1.1

Log start

main: build = 2908 (359cbe3f)

main: built with cc (Ubuntu 11.4.0-1ubuntu1~22.04) 11.4.0 for x86_64-linux-gnu

main: seed = 1715935175

llama_model_loader: loaded meta data with 22 key-value pairs and 291 tensors from models/Llama3-q8.gguf (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = llama

llama_model_loader: - kv 1: general.name str = Llama3-Chinese-8B-Instruct

llama_model_loader: - kv 2: llama.vocab_size u32 = 128256

llama_model_loader: - kv 3: llama.context_length u32 = 8192

llama_model_loader: - kv 4: llama.embedding_length u32 = 4096

llama_model_loader: - kv 5: llama.block_count u32 = 32

llama_model_loader: - kv 6: llama.feed_forward_length u32 = 14336

llama_model_loader: - kv 7: llama.rope.dimension_count u32 = 128

== Running in interactive mode. ==

- Press Ctrl+C to interject at any time.

- Press Return to return control to LLaMa.

- To return control without starting a new line, end your input with '/'.

- If you want to submit another line, end your input with '\'.

<|begin_of_text|>Below is an instruction that describes a task. Write a response that appropriately completes the request.

> hi

Hello! How can I help you today?<|eot_id|>

>

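Enter a prompt after the > prompt, press Ctrl+C to interrupt output, and end a line with \ to continue the input on the next line. Run ./main -h to see the help and the full parameter list. Some commonly used parameters are listed below; the defaults shown may differ between llama.cpp versions.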

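| Option | Description |
|---|---|
| -m | Path to the LLaMA model file |
| -mu | Remote HTTP URL to download the model file from |
| -i | Run in interactive mode, which lets you type input directly and receive responses in real time |
| -ins | Run in instruction mode, which is particularly useful with Alpaca models |
| -f | Prompt template file; for Alpaca models, load prompts/alpaca.txt |
| -n | Maximum length of the generated reply (default: 128) |
| -c | Size of the prompt context; larger values let the model refer to a longer conversation history (default: 512) |
| -b | Batch size (default: 8); can be increased as appropriate |
| -t | Number of threads; the default depends on the machine (30 in the help output above) |
| --repeat_penalty | Strength of the penalty applied to repeated text in the reply |
| --temp | Temperature; lower values make the reply less random, higher values more random |
| --top_p, --top_k | Parameters that control sampling during decoding |
| --color | Distinguish user input from generated text |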
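When the command is launched from a script, the options above can be assembled programmatically. The sketch below is only an illustration: the helper `build_main_command` is hypothetical, and the flags and default values are the ones used in the example command earlier in this section.

```python
import shlex


def build_main_command(
    model,
    prompt_file=None,
    ctx_size=2048,
    temp=0.2,
    n_predict=256,
    repeat_penalty=1.1,
    instruct=True,
    color=True,
    binary="./main",
):
    """Return the argument list for a main invocation, ready for subprocess.run."""
    args = [binary, "-m", model]
    if color:
        args.append("--color")
    if prompt_file is not None:
        args += ["-f", prompt_file]
    if instruct:
        args.append("-ins")
    args += ["-c", str(ctx_size)]
    args += ["--temp", str(temp)]
    args += ["-n", str(n_predict)]
    args += ["--repeat_penalty", str(repeat_penalty)]
    return args


cmd = build_main_command("models/Llama3-q8.gguf", prompt_file="prompts/alpaca.txt")
print(shlex.join(cmd))
```

Passing the list to `subprocess.run(cmd)` from the llama.cpp root directory would start the same interactive session as the command shown earlier.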

Model API service

llama.cpp provides an HTTP API that is compatible with the OpenAI API. Start the service with the server executable produced by the build.

root@master:~/work/llama.cpp# ./server -m models/Llama3-q8.gguf --host 0.0.0.0 --port 8000

{"tid":"140018656950080","timestamp":1715936504,"level":"INFO","function":"main","line":2942,"msg":"build info","build":2908,"commit":"359cbe3f"}

{"tid":"140018656950080","timestamp":1715936504,"level":"INFO","function":"main","line":2947,"msg":"system info","n_threads":30,"n_threads_batch":-1,"total_threads":30,"system_info":"AVX = 1 | AVX_VNNI = 0 | AVX2 = 1 | AVX512 = 1 | AVX512_VBMI = 0 | AVX512_VNNI = 0 | FMA = 1 | NEON = 0 | ARM_FMA = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 0 | SSE3 = 1 | SSSE3 = 1 | VSX = 0 | MATMUL_INT8 = 0 | LLAMAFILE = 1 | "}

llama_model_loader: loaded meta data with 22 key-value pairs and 291 tensors from models/Llama3-q8.gguf (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = llama

llama_model_loader: - kv 1: general.name str = Llama3-Chinese-8B-Instruct

llama_model_loader: - kv 2: llama.vocab_size u32 = 128256

llama_model_loader: - kv 3: llama.context_length u32 = 8192

llama_model_loader: - kv 4: llama.embedding_length u32 = 4096

llama_model_loader: - kv 5: llama.block_count u32 = 32

llama_model_loader: - kv 6: llama.feed_forward_length u32 = 14336

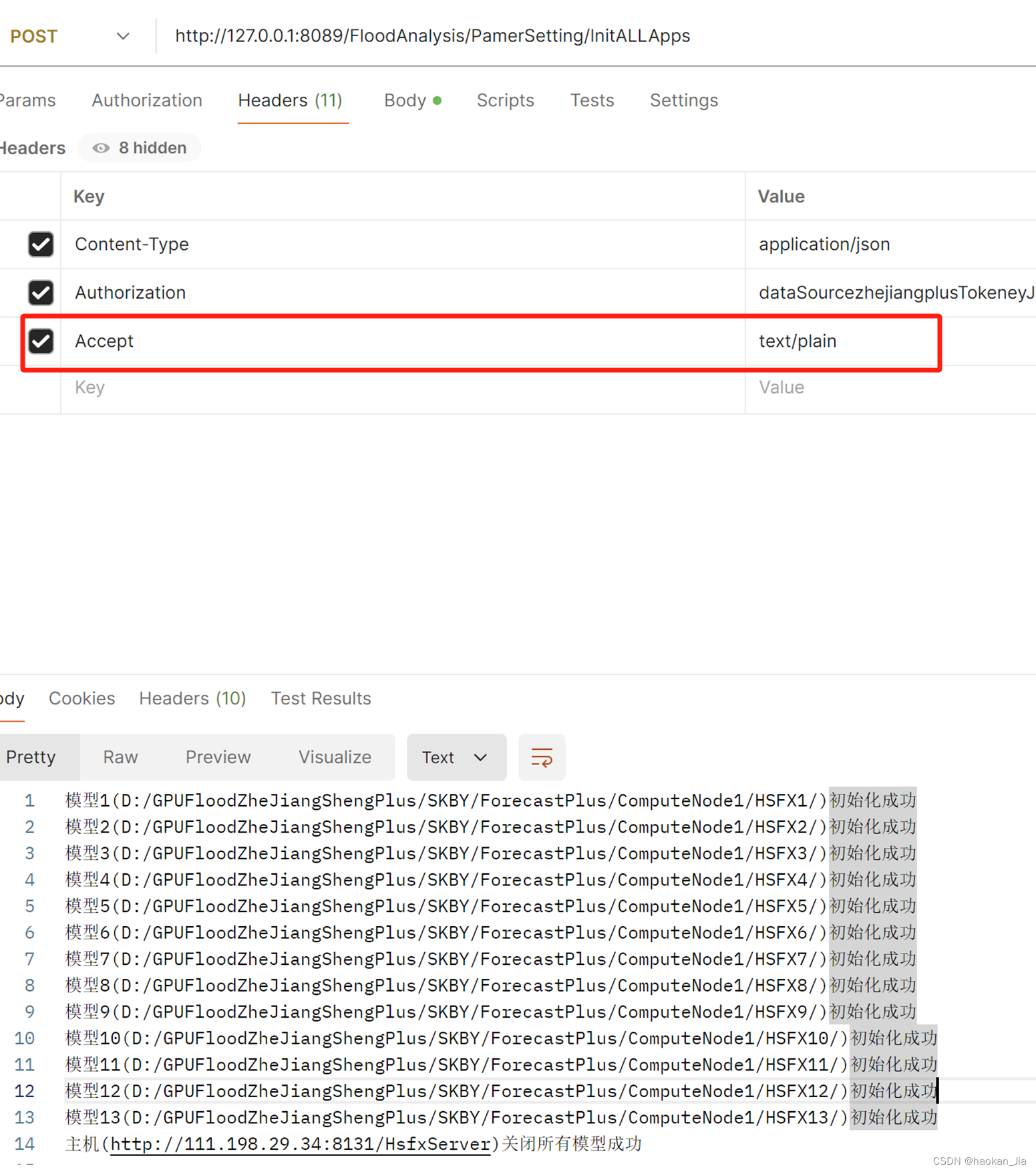

Once the API service is running, test it with curl:

curl --request POST \

--url http://localhost:8000/completion \

--header "Content-Type: application/json" \

--data '{"prompt": "Hi"}'
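The same request can be sent from Python with only the standard library. This is a minimal sketch under a few assumptions: the server is reachable at the host and port used above, the `n_predict` field is accepted by the /completion endpoint to cap the reply length, and the generated text is returned in a `content` field. The function names are illustrative.

```python
import json
import urllib.request


def build_completion_request(prompt, base_url="http://localhost:8000", n_predict=64):
    """Build (but do not send) a POST request for the /completion endpoint."""
    body = json.dumps({"prompt": prompt, "n_predict": n_predict}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/completion",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt, **kwargs):
    """Send the request and return the decoded JSON response."""
    req = build_completion_request(prompt, **kwargs)
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example usage, with the server from this section running:
#   result = complete("Hi")
#   print(result.get("content"))
```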

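Model API service (third-party)

The llama.cpp project lists third-party bindings written in various languages, and these can also be used to serve the API. This example uses Python, with llama-cpp-python providing the API service.

Install the dependency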

pip install llama-cpp-python

pip install llama-cpp-python -i https://mirrors.aliyun.com/pypi/simple/

Note: some dependencies may be missing. Install them as needed based on the errors reported at startup.

pip install sse_starlette starlette_context pydantic_settings

Start the API service. By default it runs at http://localhost:8000

python -m llama_cpp.server --model models/Llama3-q8.gguf

Install the openai package

pip install openai

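Call the API service with the openai client

import os
from openai import OpenAI  # import the OpenAI client library

# Point the client at the local server
os.environ["OPENAI_BASE_URL"] = "http://localhost:8000/v1"
# The client refuses to start without an API key, so set a placeholder
# (assumes the local server was started without key checking)
os.environ["OPENAI_API_KEY"] = "sk-no-key-required"

client = OpenAI()  # create the OpenAI client object

# Call the model
completion = client.chat.completions.create(
    model="llama3",  # any value
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Hello!"}
    ]
)

# Print the model's reply
print(completion.choices[0].message)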

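GPU inference

If llama.cpp was built with GPU support, the -ngl N or --n-gpu-layers N parameter sets how many layers to offload, so that inference runs on the GPU.

For example, -ngl 40 offloads 40 layers of model parameters to the GPU.

Without -ngl N or --n-gpu-layers N, the program runs on the CPU by default.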

root@master:~/work/llama.cpp# ./server -m models/Llama3-FP16.gguf --host 0.0.0.0 --port 8000

The following key startup log lines show that the model is not running on the GPU:

ggml_cuda_init: GGML_CUDA_FORCE_MMQ: no

ggml_cuda_init: CUDA_USE_TENSOR_CORES: yes

ggml_cuda_init: found 1 CUDA devices:

Device 0: Tesla V100S-PCIE-32GB, compute capability 7.0, VMM: yes

llm_load_tensors: ggml ctx size = 0.15 MiB

llm_load_tensors: offloading 0 repeating layers to GPU

llm_load_tensors: offloaded 0/33 layers to GPU

llm_load_tensors: CPU buffer size = 8137.64 MiB

.........................................................................................

llama_new_context_with_model: n_ctx = 2048

llama_new_context_with_model: n_batch = 2048

llama_new_context_with_model: n_ubatch = 512

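With -ngl N or --n-gpu-layers N, the program runs on the GPU. A value larger than the model's layer count, such as 1000 below, offloads every layer.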

root@master:~/work/llama.cpp# ./server -m models/Llama3-FP16.gguf --host 0.0.0.0 --port 8000 --n-gpu-layers 1000

The following key startup log lines show that the model is running on the GPU:

ggml_cuda_init: GGML_CUDA_FORCE_MMQ: no

ggml_cuda_init: CUDA_USE_TENSOR_CORES: yes

ggml_cuda_init: found 1 CUDA devices:

Device 0: Tesla V100S-PCIE-32GB, compute capability 7.0, VMM: yes

llm_load_tensors: ggml ctx size = 0.30 MiB

llm_load_tensors: offloading 32 repeating layers to GPU

llm_load_tensors: offloading non-repeating layers to GPU

llm_load_tensors: offloaded 33/33 layers to GPU

llm_load_tensors: CPU buffer size = 1002.00 MiB

llm_load_tensors: CUDA0 buffer size = 14315.02 MiB

.........................................................................................

llama_new_context_with_model: n_ctx = 512

llama_new_context_with_model: n_batch = 512

llama_new_context_with_model: n_ubatch = 512

llama_new_context_with_model: flash_attn = 0

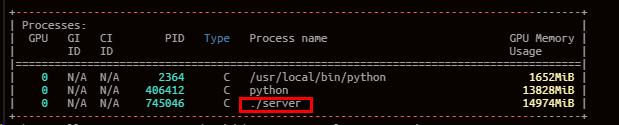

Running nvidia-smi provides further confirmation that the model is running on the GPU.
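The offload status can also be checked automatically by scanning the startup log. The sketch below is an illustration with made-up helper names; it assumes the log contains a line of the form "offloaded X/Y layers to GPU", as in the two excerpts above.

```python
import re

_OFFLOAD = re.compile(r"offloaded (\d+)/(\d+) layers to GPU")


def parse_offload(log_text):
    """Return (offloaded, total) layer counts, or None if no such line exists."""
    m = _OFFLOAD.search(log_text)
    if m is None:
        return None
    return int(m.group(1)), int(m.group(2))


def fully_offloaded(log_text):
    """True when every layer reported in the log was offloaded to the GPU."""
    parsed = parse_offload(log_text)
    return parsed is not None and parsed[0] == parsed[1]


cpu_log = "llm_load_tensors: offloaded 0/33 layers to GPU"
gpu_log = "llm_load_tensors: offloaded 33/33 layers to GPU"
print(parse_offload(cpu_log), fully_offloaded(cpu_log))
print(parse_offload(gpu_log), fully_offloaded(gpu_log))
```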