Contents

1. The Random Forest Algorithm

1.1 Overview

1.2 Random Forest in OpenCV

2. Using cv::ml::RTrees

2.1 Preparing the dataset

2.2 Using RTrees

2.3 Building the program

2.4 Full main.cpp listing

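1. The Random Forest Algorithm

1.1 Overview

Random forest is an ensemble learning method built from many decision trees. It combines the efficiency of decision trees with the accuracy of ensembling, and it generalizes well.

The algorithm rests on two ideas: randomness and ensembling.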

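- Randomness: both the samples and the features are randomized. Each decision tree is built from a random sample of the training data and a random selection of features. This randomness reduces the risk of overfitting and improves generalization.

- Ensembling: the predictions of the individual trees are combined to produce the final classification or regression result. The usual rule is voting, that is, the majority wins. Each tree is built independently of the others, on its own training sample and feature subset, which lowers the variance of the model and makes it more stable.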
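The two ideas can be sketched in plain C++ without OpenCV. The helper names bootstrapIndices and majorityVote are hypothetical and exist only for illustration; this is not how cv::ml::RTrees is implemented internally. The sketch assumes integer class labels and resolves ties in favor of the smallest label.

```cpp
#include <cstddef>
#include <map>
#include <random>
#include <vector>

// Draw n sample indices with replacement (a bootstrap sample).
// Hypothetical helper for illustration; not an OpenCV function.
std::vector<std::size_t> bootstrapIndices(std::size_t n, std::mt19937& rng) {
    std::vector<std::size_t> idx(n);
    if (n == 0) return idx;
    std::uniform_int_distribution<std::size_t> pick(0, n - 1);
    for (std::size_t i = 0; i < n; ++i) idx[i] = pick(rng);
    return idx;
}

// Majority vote over the class labels predicted by the individual trees.
// Ties go to the smallest label because std::map iterates in key order.
// Returns -1 when there are no votes (an assumption made for this sketch).
int majorityVote(const std::vector<int>& votes) {
    if (votes.empty()) return -1;
    std::map<int, int> counts;
    for (int v : votes) ++counts[v];
    int best = -1;
    int bestCount = 0;
    for (const auto& kv : counts) {
        if (kv.second > bestCount) {
            best = kv.first;
            bestCount = kv.second;
        }
    }
    return best;
}
```

For example, majorityVote({3, 7, 3, 1, 3}) returns 3, because three of the five trees voted for class 3.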

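1.2 Random Forest in OpenCV

In OpenCV, random forest is an ensemble learning method that builds and combines multiple decision trees to make predictions. It is implemented by the cv::ml::RTrees class. A plain decision tree model builds only one tree, which overfits easily. Building many trees instead reduces overfitting, and the result is a random forest: the final answer is decided by a vote among the trees.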

// Create an RTrees object

cv::Ptr<cv::ml::RTrees> rf = cv::ml::RTrees::create();

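Commonly used RTrees setters:

setMaxDepth(): maximum depth of each decision tree

setMinSampleCount(): minimum number of samples a node must contain before it can be split further

setRegressionAccuracy() (optional): termination threshold for regression trees

setPriors() (optional): a priori class probabilities (class weights)

setCalculateVarImportance() (optional): whether to compute variable importance

setActiveVarCount() (optional): number of randomly selected features considered at each node; 0 means the square root of the total feature count

setTermCriteria(): termination criteria, that is, the maximum number of trees and the target out-of-bag (OOB) error

......

A random forest builds each decision tree from a random subset of features and samples, then combines the predictions of all trees. This usually lowers the risk of overfitting and improves predictive performance.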
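As a rough illustration of per-node feature sampling, the sketch below applies the rule documented for setActiveVarCount(): a value of 0 means the square root of the total feature count. Both helper names are hypothetical, and the rounding and clamping details are assumptions rather than OpenCV's actual code.

```cpp
#include <algorithm>
#include <cmath>
#include <numeric>
#include <random>
#include <vector>

// Number of features examined at each split.
// requested == 0 follows the documented default: sqrt(total feature count).
// Rounding and clamping are assumptions made for this sketch.
int effectiveActiveVarCount(int requested, int totalFeatures) {
    int m = requested > 0
                ? requested
                : static_cast<int>(std::lround(std::sqrt(static_cast<double>(totalFeatures))));
    return std::min(std::max(m, 1), totalFeatures);
}

// Pick m distinct feature indices uniformly at random from [0, totalFeatures).
std::vector<int> randomFeatureSubset(int totalFeatures, int m, std::mt19937& rng) {
    std::vector<int> all(totalFeatures);
    std::iota(all.begin(), all.end(), 0);       // 0, 1, ..., totalFeatures - 1
    std::shuffle(all.begin(), all.end(), rng);  // random permutation
    all.resize(std::min(std::max(m, 0), totalFeatures));
    return all;
}
```

For the 784 pixel features of a 28 x 28 MNIST image, effectiveActiveVarCount(0, 784) gives 28, so under this rule each split would look at 28 randomly chosen pixels.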

2. Using cv::ml::RTrees

2.1 Preparing the dataset

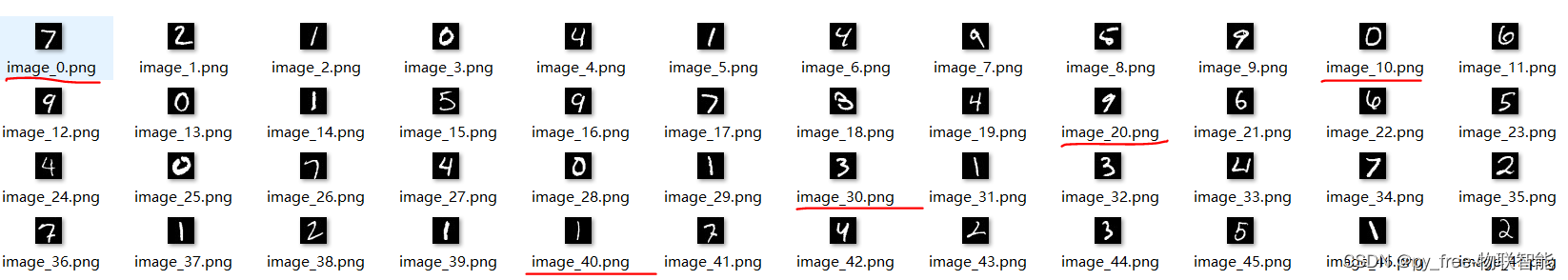

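For a quick test, this article uses the MNIST dataset (handwritten digit recognition). Download and unpack it as described in an earlier post in this series, 《C/C++开发,opencv-ml库学习,支持向量机(SVM)应用》.

Following the section "2.4 SVM(支持向量机)实时识别应用" of that post, use its Python code to extract the images in t10k-images.idx3-ubyte into individual image files.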

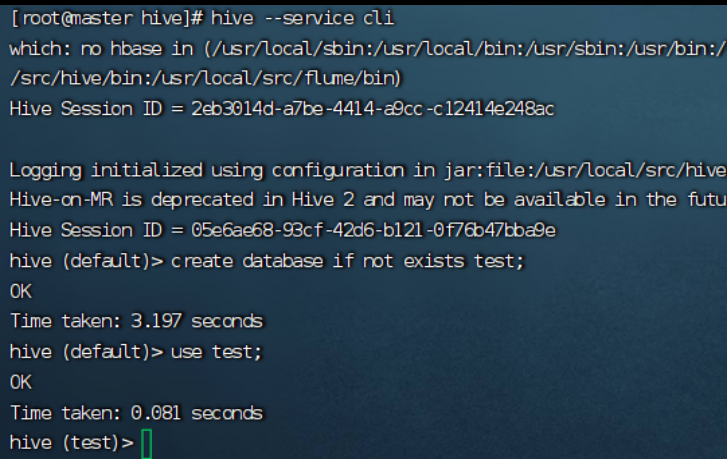
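MNIST IDX files store their header fields as big-endian 32-bit integers, which is why main.cpp swaps the bytes after reading them on a little-endian machine. A portable alternative is to assemble each value from its individual bytes, as sketched below. The names readBigEndian32, IdxImageHeader, and parseIdxImageHeader are hypothetical and not part of the original program.

```cpp
#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <vector>

// Read one big-endian 32-bit unsigned integer starting at bytes[offset].
// Works the same on little-endian and big-endian hosts.
std::uint32_t readBigEndian32(const std::vector<unsigned char>& bytes, std::size_t offset) {
    if (offset + 4 > bytes.size()) throw std::out_of_range("truncated IDX header");
    return (static_cast<std::uint32_t>(bytes[offset]) << 24) |
           (static_cast<std::uint32_t>(bytes[offset + 1]) << 16) |
           (static_cast<std::uint32_t>(bytes[offset + 2]) << 8) |
           static_cast<std::uint32_t>(bytes[offset + 3]);
}

// The four header fields of an IDX3 image file, in file order.
struct IdxImageHeader {
    std::uint32_t magic;
    std::uint32_t count;
    std::uint32_t rows;
    std::uint32_t cols;
};

// Decode the 16-byte header at the start of an IDX3 image file.
IdxImageHeader parseIdxImageHeader(const std::vector<unsigned char>& bytes) {
    return {readBigEndian32(bytes, 0), readBigEndian32(bytes, 4),
            readBigEndian32(bytes, 8), readBigEndian32(bytes, 12)};
}
```

For t10k-images.idx3-ubyte the header should decode to magic number 2051, 10000 images, and 28 x 28 pixels per image.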

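2.2 Using RTrees

The code creates a cv::ml::RTrees object, sets its parameters and termination criteria, and calls train() to fit the random forest model. The trained model is then used to predict the class of new samples.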

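// 3. Configure and train the random forest model

cv::Ptr<cv::ml::RTrees> rf = cv::ml::RTrees::create();

rf->setMaxDepth(30); // maximum depth of each decision tree

rf->setMinSampleCount(2); // minimum number of samples a node needs before it can be split

rf->setTermCriteria(cv::TermCriteria(cv::TermCriteria::EPS + cv::TermCriteria::COUNT, 10, 0.1)); // stop at 10 trees or when the OOB error falls below 0.1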

rf->train(trainingData, cv::ml::ROW_SAMPLE, labelsMat);

......

cv::Mat testResp;

float response = rf->predict(testData,testResp);

......

rf->save("mnist_svm.xml");

After training and testing, the model is saved to a file. It can then be loaded and used for prediction:

cv::Ptr<cv::ml::RTrees> rf = cv::ml::StatModel::load<cv::ml::RTrees>("mnist_svm.xml");

// predict one image

float ret = rf->predict(image);

std::cout << "predict val = "<< ret << std::endl;

2.3 Building the program

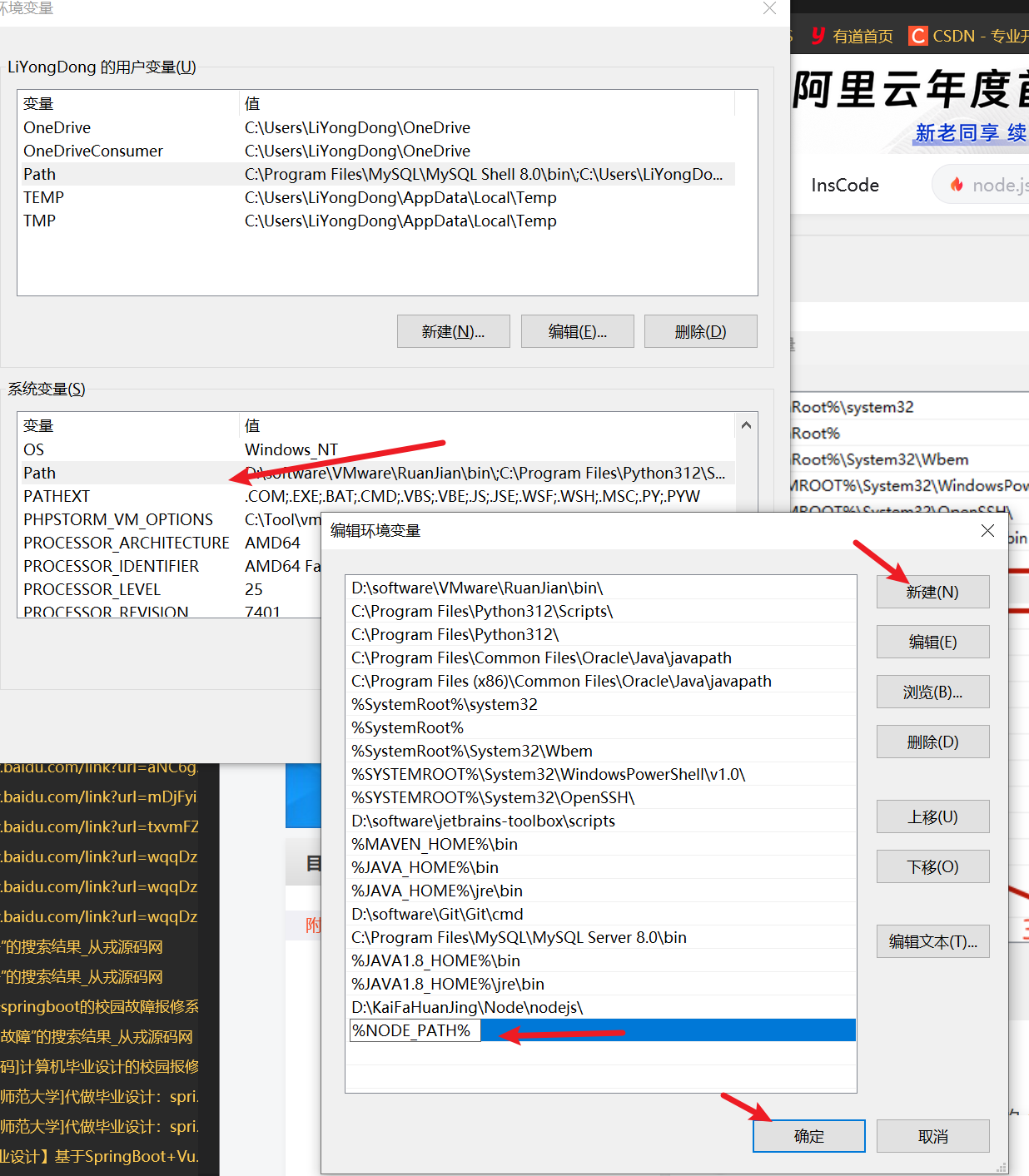

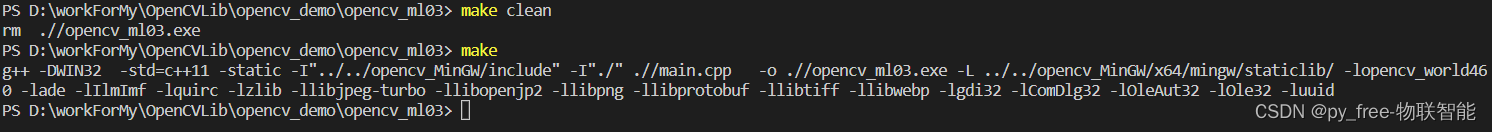

As in the SVM post, the program is built with OpenCV + MinGW + a makefile:

#/bin/sh
#win32
CX= g++ -DWIN32
#linux
#CX= g++ -Dlinux

BIN := ./
TARGET := opencv_ml03.exe
FLAGS := -std=c++11 -static
SRCDIR := ./

#INCLUDES
INCLUDEDIR := -I"../../opencv_MinGW/include" -I"./"
#-I"$(SRCDIR)"
staticDir := ../../opencv_MinGW/x64/mingw/staticlib/
#LIBDIR := $(staticDir)/libopencv_world460.a\
# $(staticDir)/libade.a \
# $(staticDir)/libIlmImf.a \
# $(staticDir)/libquirc.a \
# $(staticDir)/libzlib.a \
# $(wildcard $(staticDir)/liblib*.a) \
# -lgdi32 -lComDlg32 -lOleAut32 -lOle32 -luuid

# opencv_world goes first, then the third-party libraries OpenCV depends on, then the MinGW system libraries
LIBDIR := -L $(staticDir) -lopencv_world460 -lade -lIlmImf -lquirc -lzlib \
	-llibjpeg-turbo -llibopenjp2 -llibpng -llibprotobuf -llibtiff -llibwebp \
	-lgdi32 -lComDlg32 -lOleAut32 -lOle32 -luuid

source := $(wildcard $(SRCDIR)/*.cpp)

$(TARGET) :
	$(CX) $(FLAGS) $(INCLUDEDIR) $(source) -o $(BIN)/$(TARGET) $(LIBDIR)

clean:
	rm $(BIN)/$(TARGET)

Run make to build. Run make clean to remove the executable so that it can be rebuilt.

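Result: on the same data samples, the accuracy is noticeably better than what the decision tree algorithm achieved. Try adjusting the parameters to see how they affect it.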
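The accuracy printed by the program is the share of test samples whose predicted label equals the true label. A minimal standalone sketch of that calculation follows. The function name accuracy is hypothetical, and returning 0 for empty or mismatched inputs is an assumption made here to avoid dividing by zero.

```cpp
#include <cstddef>
#include <vector>

// Fraction of positions where predicted[i] == expected[i].
// Returns 0.0 when the inputs are empty or differ in length
// (an assumption of this sketch, chosen to avoid division by zero).
double accuracy(const std::vector<int>& predicted, const std::vector<int>& expected) {
    if (predicted.empty() || predicted.size() != expected.size()) return 0.0;
    std::size_t hits = 0;
    for (std::size_t i = 0; i < predicted.size(); ++i) {
        if (predicted[i] == expected[i]) ++hits;
    }
    return static_cast<double>(hits) / static_cast<double>(predicted.size());
}
```

For example, accuracy({1, 2, 3, 4}, {1, 2, 0, 4}) returns 0.75, since three of the four predictions match.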

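2.4 Full main.cpp listing

The source of main.cpp follows. It was adapted quickly from the two earlier posts on support vector machines (SVM) and decision trees (DTrees), so it still carries many traces of both, and the dataset is not especially well suited to the method. Its only purpose is to show how to use random forests (RTrees) with C++ and OpenCV.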

#include <opencv2/opencv.hpp>

#include <opencv2/ml.hpp>

#include <opencv2/imgcodecs.hpp>

#include <iostream>

#include <vector>

#include <string>

#include <fstream>

int intReverse(int num)

{

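// swap the byte order; work on an unsigned copy so negative values cannot sign-extend

unsigned int u = static_cast<unsigned int>(num);

return static_cast<int>((u>>24)|((u&0xFF0000)>>8)|((u&0xFF00)<<8)|((u&0xFF)<<24));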

}

std::string intToString(int num)

{

// std::to_string replaces the non-standard itoa()

return std::to_string(num);

}

cv::Mat read_mnist_image(const std::string fileName) {

int magic_number = 0;

int number_of_images = 0;

int img_rows = 0;

int img_cols = 0;

cv::Mat DataMat;

std::ifstream file(fileName, std::ios::binary);

if (file.is_open())

{

std::cout << "open images file: "<< fileName << std::endl;

file.read((char*)&magic_number, sizeof(magic_number));//format

file.read((char*)&number_of_images, sizeof(number_of_images));//images number

file.read((char*)&img_rows, sizeof(img_rows));//img rows

file.read((char*)&img_cols, sizeof(img_cols));//img cols

magic_number = intReverse(magic_number);

number_of_images = intReverse(number_of_images);

img_rows = intReverse(img_rows);

img_cols = intReverse(img_cols);

std::cout << "format:" << magic_number

<< " img num:" << number_of_images

<< " img row:" << img_rows

<< " img col:" << img_cols << std::endl;

std::cout << "read img data" << std::endl;

DataMat = cv::Mat::zeros(number_of_images, img_rows * img_cols, CV_32FC1);

unsigned char temp = 0;

for (int i = 0; i < number_of_images; i++) {

for (int j = 0; j < img_rows * img_cols; j++) {

file.read((char*)&temp, sizeof(temp));

//svm data is CV_32FC1

float pixel_value = float(temp);

DataMat.at<float>(i, j) = pixel_value;

}

}

std::cout << "read img data finish!" << std::endl;

}

file.close();

return DataMat;

}

cv::Mat read_mnist_label(const std::string fileName) {

int magic_number;

int number_of_items;

cv::Mat LabelMat;

std::ifstream file(fileName, std::ios::binary);

if (file.is_open())

{

std::cout << "open label file: "<< fileName << std::endl;

file.read((char*)&magic_number, sizeof(magic_number));

file.read((char*)&number_of_items, sizeof(number_of_items));

magic_number = intReverse(magic_number);

number_of_items = intReverse(number_of_items);

std::cout << "format:" << magic_number << " ;label_num:" << number_of_items << std::endl;

std::cout << "read Label data" << std::endl;

//data type:CV_32SC1,channel:1

LabelMat = cv::Mat::zeros(number_of_items, 1, CV_32SC1);

for (int i = 0; i < number_of_items; i++) {

unsigned char temp = 0;

file.read((char*)&temp, sizeof(temp));

LabelMat.at<int>(i, 0) = (int)temp; // CV_32SC1 elements are signed 32-bit ints

}

std::cout << "read label data finish!" << std::endl;

}

file.close();

return LabelMat;

}

//change path for real paths

std::string trainImgFile = "D:\\workForMy\\OpenCVLib\\opencv_demo\\opencv_ml01\\train-images.idx3-ubyte";

std::string trainLabeFile = "D:\\workForMy\\OpenCVLib\\opencv_demo\\opencv_ml01\\train-labels.idx1-ubyte";

std::string testImgFile = "D:\\workForMy\\OpenCVLib\\opencv_demo\\opencv_ml01\\t10k-images.idx3-ubyte";

std::string testLabeFile = "D:\\workForMy\\OpenCVLib\\opencv_demo\\opencv_ml01\\t10k-labels.idx1-ubyte";

void train_SVM()

{

//read train images, data type CV_32FC1

cv::Mat trainingData = read_mnist_image(trainImgFile);

//images data normalization

trainingData = trainingData/255.0;

std::cout << "trainingData.size() = " << trainingData.size() << std::endl;

std::cout << "trainingData.type() = " << trainingData.type() << std::endl;

std::cout << "trainingData.rows = " << trainingData.rows << std::endl;

std::cout << "trainingData.cols = " << trainingData.cols << std::endl;

//read train label, data type CV_32SC1

cv::Mat labelsMat = read_mnist_label(trainLabeFile);

std::cout << "labelsMat.size() = " << labelsMat.size() << std::endl;

std::cout << "labelsMat.type() = " << labelsMat.type() << std::endl;

std::cout << "labelsMat.rows = " << labelsMat.rows << std::endl;

std::cout << "labelsMat.cols = " << labelsMat.cols << std::endl;

std::cout << "trainingData & labelsMat finish!" << std::endl;

// //create SVM model

// cv::Ptr<cv::ml::SVM> svm = cv::ml::SVM::create();

// //set svm args,type and KernelTypes

// svm->setType(cv::ml::SVM::C_SVC);

// svm->setKernel(cv::ml::SVM::POLY);

// //KernelTypes POLY is need set gamma and degree

// svm->setGamma(3.0);

// svm->setDegree(2.0);

// //Set iteration termination conditions, maxCount is importance

// svm->setTermCriteria(cv::TermCriteria(cv::TermCriteria::EPS | cv::TermCriteria::COUNT, 1000, 1e-8));

// std::cout << "create SVM object finish!" << std::endl;

// std::cout << "trainingData.rows = " << trainingData.rows << std::endl;

// std::cout << "trainingData.cols = " << trainingData.cols << std::endl;

// std::cout << "trainingData.type() = " << trainingData.type() << std::endl;

// // svm model train

// svm->train(trainingData, cv::ml::ROW_SAMPLE, labelsMat);

// std::cout << "SVM training finish!" << std::endl;

// // create the decision tree object

// cv::Ptr<cv::ml::DTrees> dtree = cv::ml::DTrees::create();

// dtree->setMaxDepth(30); // maximum depth of the tree

// dtree->setCVFolds(0);

// dtree->setMinSampleCount(1); // minimum number of samples needed to split an internal node

// std::cout << "create dtree object finish!" << std::endl;

// // train the decision tree: trainingData holds the samples, labelsMat the labels

// cv::Ptr<cv::ml::TrainData> td = cv::ml::TrainData::create(trainingData, cv::ml::ROW_SAMPLE, labelsMat);

// std::cout << "create TrainData object finish!" << std::endl;

// if(dtree->train(td))

// {

// std::cout << "dtree training finish!" << std::endl;

// }else{

// std::cout << "dtree training fail!" << std::endl;

// }

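// 3. Configure and train the random forest model

cv::Ptr<cv::ml::RTrees> rf = cv::ml::RTrees::create();

rf->setMaxDepth(30); // maximum depth of each decision tree

rf->setMinSampleCount(2); // minimum number of samples a node needs before it can be split

rf->setTermCriteria(cv::TermCriteria(cv::TermCriteria::EPS + cv::TermCriteria::COUNT, 10, 0.1)); // stop at 10 trees or when the OOB error falls below 0.1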

rf->train(trainingData, cv::ml::ROW_SAMPLE, labelsMat);

// test the trained model

cv::Mat testData = read_mnist_image(testImgFile);

//images data normalization

testData = testData/255.0;

std::cout << "testData.rows = " << testData.rows << std::endl;

std::cout << "testData.cols = " << testData.cols << std::endl;

std::cout << "testData.type() = " << testData.type() << std::endl;

//read test label, data type CV_32SC1

cv::Mat testlabel = read_mnist_label(testLabeFile);

cv::Mat testResp;

// float response = svm->predict(testData,testResp);

// float response = dtree->predict(testData,testResp);

float response = rf->predict(testData,testResp);

// std::cout << "response = " << response << std::endl;

testResp.convertTo(testResp,CV_32SC1);

int map_num = 0;

for (int i = 0; i <testResp.rows&&testResp.rows==testlabel.rows; i++)

{

if (testResp.at<int>(i, 0) == testlabel.at<int>(i, 0))

{

map_num++;

}

// else{

// std::cout << "testResp.at<int>(i, 0) " << testResp.at<int>(i, 0) << std::endl;

// std::cout << "testlabel.at<int>(i, 0) " << testlabel.at<int>(i, 0) << std::endl;

// }

}

float proportion = float(map_num) / float(testResp.rows);

std::cout << "map rate: " << proportion * 100 << "%" << std::endl;

std::cout << "RTrees testing finish!" << std::endl;

//save the trained model (the file name is carried over from the SVM example)

// svm->save("mnist_svm.xml");

// dtree->save("mnist_svm.xml");

rf->save("mnist_svm.xml");

}

// RTrees derives from DTrees, so a cv::Ptr<cv::ml::RTrees> can be passed as dtree

void prediction(const std::string fileName,cv::Ptr<cv::ml::DTrees> dtree)

// void prediction(const std::string fileName,cv::Ptr<cv::ml::SVM> svm)

{

//read img 28*28 size

cv::Mat image = cv::imread(fileName, cv::IMREAD_GRAYSCALE);

//uchar->float32

image.convertTo(image, CV_32F);

//image data normalization

image = image / 255.0;

//28*28 -> 1*784

image = image.reshape(1, 1);

//predict one image

float ret = dtree->predict(image);

std::cout << "predict val = "<< ret << std::endl;

}

std::string imgDir = "D:\\workForMy\\OpenCVLib\\opencv_demo\\opencv_ml01\\t10k-images\\";

std::string ImgFiles[5] = {"image_0.png","image_10.png","image_20.png","image_30.png","image_40.png",};

void predictimgs()

{

//load svm model

// cv::Ptr<cv::ml::SVM> svm = cv::ml::StatModel::load<cv::ml::SVM>("mnist_svm.xml");

//load DTrees model

// cv::Ptr<cv::ml::DTrees> dtree = cv::ml::StatModel::load<cv::ml::DTrees>("mnist_svm.xml");

cv::Ptr<cv::ml::RTrees> rf = cv::ml::StatModel::load<cv::ml::RTrees>("mnist_svm.xml");

for (size_t i = 0; i < 5; i++)

{

prediction(imgDir+ImgFiles[i],rf);

}

}

int main()

{

train_SVM();

predictimgs();

return 0;

}