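Data augmentation

Data augmentation expands an existing dataset so that it covers more variation. For example:

1. Adding different kinds of background noise to speech recordings

2. Changing the color and shape of images

Augmented samples are usually generated online during training, and the transformations are applied at random.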
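To make "online" and "random" concrete, here is a minimal sketch using NumPy arrays as stand-in images. The helper names `random_hflip` and `online_batches` are hypothetical and only illustrate the idea: the stored dataset never changes, and each epoch draws a fresh random variant of every image.

```python
import numpy as np

def random_hflip(img, rng, p=0.5):
    """Flip an H x W x C image left-right with probability p."""
    return img[:, ::-1] if rng.random() < p else img

def online_batches(images, num_epochs, rng):
    """Yield (epoch, augmented images); no augmented copy is ever stored."""
    for epoch in range(num_epochs):
        yield epoch, [random_hflip(img, rng) for img in images]

rng = np.random.default_rng(0)
# Four tiny stand-in "images" (2 x 3 pixels, 1 channel) instead of real data.
images = [np.arange(6).reshape(2, 3, 1) + 10 * i for i in range(4)]
for epoch, batch in online_batches(images, num_epochs=3, rng=rng):
    flipped = sum(not np.array_equal(a, b) for a, b in zip(batch, images))
    print(f"epoch {epoch}: {flipped} of {len(batch)} images flipped")
```

Because the flips are drawn again in every epoch, the model rarely sees exactly the same set of inputs twice, even though the dataset on disk is unchanged.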

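Common data augmentations

1. Left-right flip

2. Top-bottom flip (not always appropriate)

3. Cropping: cut a region out of the image and resize it to a fixed shape (random aspect ratio in [3/4, 4/3], random size in [8%, 100%] of the original area, random position)

4. Color: change the hue, saturation, and brightness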

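Summary

1. Data augmentation transforms the data to obtain more diversity, which helps the model generalize better

2. Common operations: flipping, cropping, and color changes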

Additional note: a problem reading images in Colab

img = d2l.Image.open('…\img\cat1.jpg') can fail because the image cannot be found; see https://blog.csdn.net/weixin_47505105/article/details/128514242
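One way to make this failure easier to diagnose is to check a few candidate locations before opening the file. The sketch below uses only the standard library; the helper `find_image` and its default search directories are hypothetical and should be adapted to wherever the image actually lives.

```python
from pathlib import Path

def find_image(name, search_dirs=(".", "img", "../img")):
    """Return the first existing path for `name`, or raise a clear error."""
    for directory in search_dirs:
        candidate = Path(directory) / name
        if candidate.is_file():
            return candidate
    tried = ", ".join(str(Path(d) / name) for d in search_dirs)
    raise FileNotFoundError(
        f"{name!r} not found from {Path.cwd()}; tried: {tried}")

# Example use (assumes cat1.jpg exists in one of the search directories):
# img = d2l.Image.open(find_image("cat1.jpg"))
```

The error message includes the current working directory, which in Colab is often not the folder that contains the notebook.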

%matplotlib inline

import torch

import torchvision

from torch import nn

from d2l import torch as d2l

d2l.set_figsize()

img = d2l.Image.open('cat1.jpg')

d2l.plt.imshow(img);

# The image is saved in the same folder as the notebook; for details see http://t.csdnimg.cn/DP6au

# Alternatively, load the image from a URL
import io
import requests
import matplotlib.pyplot as plt
from PIL import Image

# URL of the image
image_url = 'https://raw.githubusercontent.com/d2l-ai/d2l-en/master/img/catdog.jpg'  # replace with the actual image URL

# Send a request to fetch the image content
response = requests.get(image_url)
response.raise_for_status()  # fail early if the download did not succeed
image_content = response.content

# Wrap the bytes in BytesIO and open them as an Image object
image_stream = io.BytesIO(image_content)
img = Image.open(image_stream)

# Display the image
plt.imshow(img)
plt.axis('off')  # hide the axes
plt.show()

Define a helper function apply, which runs the image augmentation method aug on the input image img several times and displays all the results.

def apply(img, aug, num_rows=2, num_cols=4, scale=1.5):
    Y = [aug(img) for _ in range(num_rows * num_cols)]
    d2l.show_images(Y, num_rows, num_cols, scale=scale)

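Flipping

Left-right: use the transforms module to create a RandomHorizontalFlip instance, which flips the image left to right with 50% probability and otherwise leaves it unchanged.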

apply(img, torchvision.transforms.RandomHorizontalFlip())

Top-bottom: a RandomVerticalFlip instance flips the image upside down with 50% probability and otherwise leaves it unchanged.

apply(img, torchvision.transforms.RandomVerticalFlip())
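At the array level, both flips simply reverse one axis. The following sketch shows this on a tiny NumPy array standing in for a single-channel image; it illustrates the operation only and is not how torchvision implements it internally.

```python
import numpy as np

# A 2 x 3 single-channel "image": rows are height, columns are width.
toy = np.array([[1, 2, 3],
                [4, 5, 6]])

left_right = toy[:, ::-1]   # reverse the width axis
top_bottom = toy[::-1, :]   # reverse the height axis

print(left_right)  # [[3 2 1]
                   #  [6 5 4]]
print(top_bottom)  # [[4 5 6]
                   #  [1 2 3]]
```

Whether a flip is appropriate depends on the data: a left-right flipped cat is still a plausible cat, while an upside-down street scene usually is not a realistic input.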

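Cropping

Randomly crop a region whose area is 10% to 100% of the original area and whose width-to-height ratio is drawn from the range 0.5 to 2. The region is then resized to 200 by 200 pixels.

shape_aug = torchvision.transforms.RandomResizedCrop(
    (200, 200), scale=(0.1, 1), ratio=(0.5, 2))
apply(img, shape_aug)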
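The sampling of the crop box can be sketched with the standard library alone. This is a simplified illustration of the idea described above, not torchvision's code: the function name `sample_crop_box`, the retry count, and the whole-image fallback are assumptions made for the example.

```python
import math
import random

def sample_crop_box(height, width, scale=(0.1, 1.0), ratio=(0.5, 2.0),
                    rng=random, max_tries=10):
    """Return (top, left, crop_h, crop_w) for a random crop inside the image."""
    area = height * width
    for _ in range(max_tries):
        target_area = area * rng.uniform(*scale)
        # Sample the aspect ratio (width / height) uniformly in log space so
        # that ratios below and above 1 are treated symmetrically.
        log_ratio = rng.uniform(math.log(ratio[0]), math.log(ratio[1]))
        aspect = math.exp(log_ratio)
        crop_w = round(math.sqrt(target_area * aspect))
        crop_h = round(math.sqrt(target_area / aspect))
        if 0 < crop_w <= width and 0 < crop_h <= height:
            top = rng.randint(0, height - crop_h)
            left = rng.randint(0, width - crop_w)
            return top, left, crop_h, crop_w
    # Assumed fallback for this sketch: use the whole image.
    return 0, 0, height, width

print(sample_crop_box(300, 400, rng=random.Random(0)))
```

After the box is chosen, the cropped region would be resized to the fixed output shape (200 by 200 in the call above), so every augmented image has the same size regardless of the sampled box.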

Changing color

Randomly change the brightness of the image to a value between 50% (1 - 0.5) and 150% (1 + 0.5) of the original.

apply(img, torchvision.transforms.ColorJitter(
    brightness=0.5, contrast=0, saturation=0, hue=0))
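The brightness change amounts to multiplying every pixel by one random factor and clipping the result to the valid range. A minimal NumPy sketch, assuming a float image with values in [0, 1]; `jitter_brightness` is a hypothetical helper written for illustration.

```python
import numpy as np

def jitter_brightness(img, brightness, rng):
    """Scale a float image in [0, 1] by a factor drawn from [1 - b, 1 + b]."""
    factor = rng.uniform(max(0.0, 1.0 - brightness), 1.0 + brightness)
    return np.clip(img * factor, 0.0, 1.0), factor

rng = np.random.default_rng(0)
gray = np.full((2, 2, 3), 0.4)            # a uniform mid-gray stand-in image
out, factor = jitter_brightness(gray, 0.5, rng)
print(f"factor {factor:.2f}, pixel value {out[0, 0, 0]:.2f}")
```

A factor below 1 darkens the image and a factor above 1 brightens it; with brightness=0.5 the factor always lies between 0.5 and 1.5.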

Randomly change the hue

apply(img, torchvision.transforms.ColorJitter(
    brightness=0, contrast=0, saturation=0, hue=0.5))
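A hue change can be pictured as rotating each pixel's color around the color wheel while keeping its saturation and value. The sketch below does this for a single RGB pixel with the standard `colorsys` module; `shift_hue` and `jitter_hue` are hypothetical helpers, and a real implementation would operate on whole images.

```python
import colorsys
import random

def shift_hue(rgb, delta):
    """Rotate the hue of one RGB pixel (floats in [0, 1]) by `delta` turns."""
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    return colorsys.hsv_to_rgb((h + delta) % 1.0, s, v)

def jitter_hue(rgb, hue, rng=random):
    """Shift the hue by a random offset drawn from [-hue, hue]."""
    return shift_hue(rgb, rng.uniform(-hue, hue))

# Rotating pure red by one third of the wheel gives (approximately) pure green.
print(shift_hue((1.0, 0.0, 0.0), 1 / 3))
print(jitter_hue((0.8, 0.2, 0.4), 0.5, rng=random.Random(0)))
```

With hue=0.5 the offset can reach half a turn in either direction, so any hue can be produced, which is why the resulting images can look strongly recolored.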

Create a ColorJitter instance that randomly changes the brightness, contrast, saturation, and hue of the image at the same time.

color_aug = torchvision.transforms.ColorJitter(
    brightness=0.5, contrast=0.5, saturation=0.5, hue=0.5)
apply(img, color_aug)

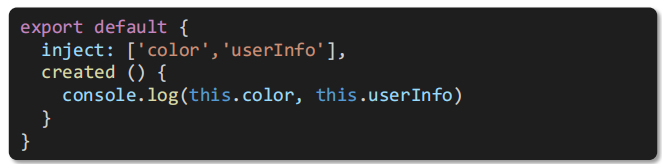

Combining multiple image augmentations

A Compose instance combines the augmentation methods defined above and applies them, in order, to each image.

augs = torchvision.transforms.Compose([
    torchvision.transforms.RandomHorizontalFlip(), color_aug, shape_aug])
apply(img, augs)
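Conceptually, composing transforms is just function chaining: the output of one transform becomes the input of the next. The pure-Python sketch below uses toy transforms on a list of pixel values; `compose` is a hypothetical stand-in written for illustration, not torchvision's Compose class.

```python
from functools import reduce

def compose(transforms):
    """Return a function that applies the transforms in list order."""
    def composed(x):
        return reduce(lambda value, t: t(value), transforms, x)
    return composed

# Toy stand-ins for image transforms, acting on a list of pixel values.
flip = lambda pixels: pixels[::-1]
brighten = lambda pixels: [min(255, p + 50) for p in pixels]
crop = lambda pixels: pixels[:2]

toy_augs = compose([flip, brighten, crop])
print(toy_augs([10, 100, 250]))  # [255, 150]
```

The order matters: cropping first and flipping afterwards would keep different pixels, which is why the list passed to Compose should be read as a pipeline.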

Training with image augmentation

We use the CIFAR-10 dataset. Its first 32 training images are shown below.

all_images = torchvision.datasets.CIFAR10(train=True, root="../data",
                                          download=True)
d2l.show_images([all_images[i][0] for i in range(32)], 4, 8, scale=0.8);

Downloading https://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz to ../data/cifar-10-python.tar.gz

100%|██████████| 170498071/170498071 [00:04<00:00, 40330181.37it/s]

Extracting ../data/cifar-10-python.tar.gz to ../data

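Here we use only the simplest augmentation, a random left-right flip, and only on the training set. A ToTensor instance then converts each image into the format the deep learning framework expects: a 32-bit floating-point tensor of shape (channels, height, width) with values between 0 and 1. The data loader later stacks these tensors into minibatches of shape (batch size, channels, height, width).

train_augs = torchvision.transforms.Compose([
    torchvision.transforms.RandomHorizontalFlip(),
    torchvision.transforms.ToTensor()])

test_augs = torchvision.transforms.Compose([
    torchvision.transforms.ToTensor()])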
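The layout and range change performed by ToTensor can be mimicked in NumPy, which may help when checking shapes. This is a sketch of the conversion only, assuming an 8-bit RGB image stored as height by width by channels; `to_chw_float` is a hypothetical helper.

```python
import numpy as np

def to_chw_float(img_hwc_uint8):
    """Convert H x W x C uint8 pixels to C x H x W float32 values in [0, 1]."""
    chw = np.transpose(img_hwc_uint8, (2, 0, 1))
    return chw.astype(np.float32) / 255.0

rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(32, 32, 3), dtype=np.uint8)  # CIFAR-10 sized
tensor_like = to_chw_float(image)
batch = np.stack([tensor_like] * 4)  # batching adds the leading dimension

print(tensor_like.shape, tensor_like.dtype, batch.shape)
# (3, 32, 32) float32 (4, 3, 32, 32)
```

The per-image result has no batch dimension; that dimension appears only when several converted images are stacked together.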

The transform argument provided by PyTorch datasets applies the image augmentation to each image as it is loaded.

def load_cifar10(is_train, augs, batch_size):
    dataset = torchvision.datasets.CIFAR10(root="../data", train=is_train,
                                           transform=augs, download=True)
    # Shuffle only the training set
    dataloader = torch.utils.data.DataLoader(dataset, batch_size=batch_size,
                    shuffle=is_train, num_workers=d2l.get_dataloader_workers())
    return dataloader

Multi-GPU training

def train_batch_ch13(net, X, y, loss, trainer, devices):
    """Train on one minibatch with multiple GPUs."""
    if isinstance(X, list):
        # Required for BERT fine-tuning
        X = [x.to(devices[0]) for x in X]
    else:
        X = X.to(devices[0])
    y = y.to(devices[0])
    net.train()
    trainer.zero_grad()
    pred = net(X)
    l = loss(pred, y)
    # The loss is per-example (reduction="none"), so sum it before backward
    l.sum().backward()
    trainer.step()
    train_loss_sum = l.sum()
    train_acc_sum = d2l.accuracy(pred, y)
    return train_loss_sum, train_acc_sum

def train_ch13(net, train_iter, test_iter, loss, trainer, num_epochs,
               devices=d2l.try_all_gpus()):
    """Train a model with multiple GPUs."""
    timer, num_batches = d2l.Timer(), len(train_iter)
    animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs], ylim=[0, 1],
                            legend=['train loss', 'train acc', 'test acc'])
    net = nn.DataParallel(net, device_ids=devices).to(devices[0])
    for epoch in range(num_epochs):
        # Four running sums: training loss, number of correct predictions,
        # number of examples, number of labels
        metric = d2l.Accumulator(4)
        for i, (features, labels) in enumerate(train_iter):
            timer.start()
            l, acc = train_batch_ch13(
                net, features, labels, loss, trainer, devices)
            metric.add(l, acc, labels.shape[0], labels.numel())
            timer.stop()
            if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:
                animator.add(epoch + (i + 1) / num_batches,
                             (metric[0] / metric[2], metric[1] / metric[3],
                              None))
        test_acc = d2l.evaluate_accuracy_gpu(net, test_iter)
        animator.add(epoch + 1, (None, None, test_acc))
    print(f'loss {metric[0] / metric[2]:.3f}, train acc '
          f'{metric[1] / metric[3]:.3f}, test acc {test_acc:.3f}')
    print(f'{metric[2] * num_epochs / timer.sum():.1f} examples/sec on '
          f'{str(devices)}')
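The reported loss and accuracy are ratios of running sums, which can be checked by hand. The sketch below uses a minimal stand-in for d2l.Accumulator and made-up per-batch numbers; both are assumptions for illustration and are not taken from an actual training run.

```python
class Accumulator:
    """Minimal stand-in for d2l.Accumulator: running sums over n slots."""
    def __init__(self, n):
        self.data = [0.0] * n

    def add(self, *args):
        self.data = [a + float(b) for a, b in zip(self.data, args)]

    def __getitem__(self, idx):
        return self.data[idx]

# Hypothetical per-batch results: (summed loss, number correct, batch size).
batches = [(30.0, 40, 64), (20.0, 50, 64), (5.0, 28, 32)]

metric = Accumulator(4)
for loss_sum, num_correct, batch_size in batches:
    # For single-label classification, labels.shape[0] equals labels.numel().
    metric.add(loss_sum, num_correct, batch_size, batch_size)

avg_loss = metric[0] / metric[2]    # total loss / total examples
train_acc = metric[1] / metric[3]   # total correct / total labels
print(f"loss {avg_loss:.3f}, train acc {train_acc:.3f}")
```

Dividing sums rather than averaging per-batch averages keeps the result correct even when the last batch is smaller than the others.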

Now define the train_with_data_aug function, which trains the model with image augmentation. It uses all available GPUs, applies the augmentations to the training set, uses Adam as the optimizer, and finally calls the train_ch13 function defined above to train and evaluate the model.

batch_size, devices, net = 256, d2l.try_all_gpus(), d2l.resnet18(10, 3)

def init_weights(m):
    if type(m) in [nn.Linear, nn.Conv2d]:
        nn.init.xavier_uniform_(m.weight)

net.apply(init_weights)

def train_with_data_aug(train_augs, test_augs, net, lr=0.001):
    train_iter = load_cifar10(True, train_augs, batch_size)
    test_iter = load_cifar10(False, test_augs, batch_size)
    loss = nn.CrossEntropyLoss(reduction="none")
    trainer = torch.optim.Adam(net.parameters(), lr=lr)
    train_ch13(net, train_iter, test_iter, loss, trainer, 10, devices)

/usr/local/lib/python3.10/dist-packages/torch/nn/modules/lazy.py:181: UserWarning: Lazy modules are a new feature under heavy development so changes to the API or functionality can happen at any moment.

warnings.warn('Lazy modules are a new feature under heavy development '
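The init_weights function above relies on Xavier uniform initialization. As a reminder of what that does, the NumPy sketch below samples weights from U(-a, a) with a = gain * sqrt(6 / (fan_in + fan_out)). The helper `xavier_uniform` is hypothetical and assumes weights are stored as (out, in, *kernel), as for linear and convolutional layers.

```python
import math
import numpy as np

def xavier_uniform(shape, rng, gain=1.0):
    """Sample weights from U(-a, a), a = gain * sqrt(6 / (fan_in + fan_out))."""
    out_ch, in_ch, *kernel = shape
    receptive = math.prod(kernel)  # 1 for a linear layer (no kernel dims)
    fan_in, fan_out = in_ch * receptive, out_ch * receptive
    bound = gain * math.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-bound, bound, size=shape), bound

rng = np.random.default_rng(0)
weights, bound = xavier_uniform((64, 3, 3, 3), rng)  # a 3x3 conv, 3 -> 64 channels
print(f"bound {bound:.4f}, max |w| {np.abs(weights).max():.4f}")
```

Layers with more inputs and outputs get a smaller bound, which keeps the scale of activations and gradients roughly comparable across layers at the start of training.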

train_with_data_aug(train_augs, test_augs, net)

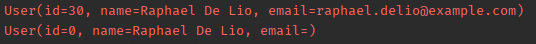

loss 0.223, train acc 0.921, test acc 0.846

1168.0 examples/sec on [device(type='cuda', index=0)]